ChatGPT stands for Chat Generative Pre-trained Transformer. The name combines the product’s conversational format with the GPT model family behind it. “Chat” points to the interface: you type or speak in a back-and-forth conversation. “Generative” means the system creates new text, code, images, or other outputs instead of only retrieving fixed answers. “Pre-trained” means the model learned broad patterns from large datasets before being adapted for assistant-style use. “Transformer” refers to the neural network architecture that helps modern language models track relationships across a prompt. The acronym is useful, but it is not the full definition of what ChatGPT can do.

Quick answer

ChatGPT stands for Chat Generative Pre-trained Transformer. OpenAI describes ChatGPT as an AI assistant for everyday tasks such as brainstorming, writing, studying, planning, math, coding, and analyzing images or files, and says it is trained to follow instructions in a conversation.[1]

The name has two layers. The first layer is the product name: ChatGPT, the assistant people use in a chat interface. The second layer is the technical acronym GPT, which points to a family of generative pre-trained transformer models. If you want the shorter acronym-only version, see our separate guide to what GPT stands for.

OpenAI introduced ChatGPT as a research release on November 30, 2022.[2] Britannica also records ChatGPT as released by OpenAI on November 30, 2022, which corroborates the date from an independent source.[8]

| Part of the name | Plain-English meaning | What it tells you |
|---|---|---|
| Chat | A conversational interface | You interact by asking questions, giving instructions, and following up. |
| Generative | Creates outputs | It can draft, summarize, explain, translate, code, and transform content. |
| Pre-trained | Trained before deployment | It learns broad language patterns first, then can be adapted for assistant behavior. |
| Transformer | A neural network architecture | It helps the model process relationships among tokens in a prompt. |

What each part of ChatGPT means

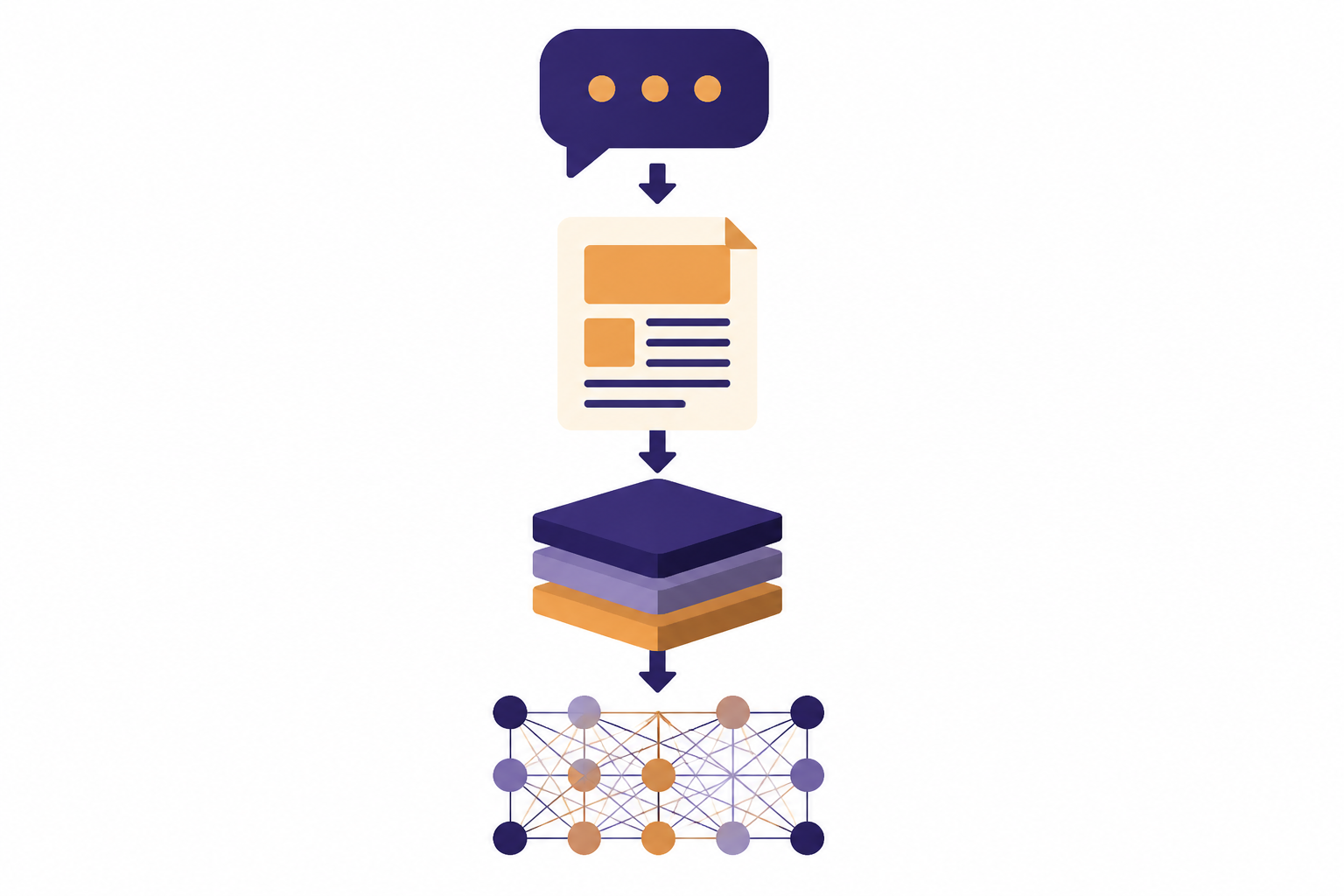

The expansion “Chat Generative Pre-trained Transformer” sounds technical because it mixes a product interface with machine-learning terminology. Each word carries a different kind of meaning.

Chat

“Chat” means the assistant is designed for a back-and-forth conversation. You can ask a question, read the answer, clarify your request, correct the model, or ask for a different format. OpenAI’s original ChatGPT announcement emphasized that the dialogue format made follow-up questions, corrections, and refusals possible in a conversational flow.[2]

This is why ChatGPT feels different from a static search box or a single-command tool. It keeps the recent conversation in view, within the limits of the model’s context window. If you are new to that idea, our primer on what a context window means explains why long conversations eventually need summaries or resets.
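The idea of keeping only the recent conversation in view can be sketched in a few lines of Python. This is a hypothetical illustration, not a description of how ChatGPT manages its context: the function names `trim_history` and `rough_token_count`, the 12-token budget, and the one-token-per-word counting rule are all assumptions made for the example.

```python
def trim_history(messages, max_tokens, count_tokens):
    """Keep the most recent messages whose combined size fits the budget."""
    kept = []
    used = 0
    # Walk backwards so the newest messages are considered first.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # Older messages no longer fit in the window.
        kept.append(message)
        used += cost
    kept.reverse()  # Restore chronological order.
    return kept


def rough_token_count(text):
    # Assumption: one token per whitespace-separated word.
    # Real tokenizers count differently.
    return len(text.split())


history = [
    "Plan a three day trip to Lisbon",
    "Here is a draft itinerary for three days",
    "Make day two cheaper",
]
visible = trim_history(history, max_tokens=12, count_tokens=rough_token_count)
print(visible)
```

With this budget, the two newest messages fit and the oldest one drops out, which is the effect a summary or reset is meant to work around.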

Generative

“Generative” means the system can produce new content. It does not only choose from a fixed menu of answers. It predicts and composes an output based on your prompt, its training, its instructions, and any tools or files available in that session.

That can include a paragraph, a spreadsheet formula, a Python function, an outline, a study plan, a rewritten email, or an explanation of a chart. This places ChatGPT inside the broader field covered in our plain-English guide to generative AI.

Pre-trained

“Pre-trained” means the base model learned broad patterns before it was used as an assistant. OpenAI’s early GPT research described the value of unsupervised pre-training for language tasks and used a Transformer architecture trained on sequences of up to 512 tokens.[3]

Pre-training is not the same thing as memorizing a database. It is a training phase where a model learns statistical relationships in language and other data. Later steps can adapt that base behavior so the system is better at following instructions, refusing unsafe requests, and giving useful answers.
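To make “learning statistical relationships” concrete, here is a deliberately tiny sketch. It counts which word tends to follow which in a three-sentence corpus, then extends a prompt with the most frequent next word. Real GPT models use neural networks trained on vastly more data, so treat this as an analogy only; the corpus and the function names `pretrain_bigrams` and `generate` are invented for the example.

```python
from collections import Counter, defaultdict


def pretrain_bigrams(corpus):
    """Count which word follows which: a tiny stand-in for pre-training."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def generate(follows, start, max_words=5):
    """Greedily extend a prompt with the most frequent next word."""
    output = [start]
    for _ in range(max_words - 1):
        candidates = follows.get(output[-1])
        if not candidates:
            break  # No learned continuation for this word.
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)


corpus = [
    "the model reads the prompt",
    "the model writes the answer",
    "the model reads the question",
]
model = pretrain_bigrams(corpus)
print(generate(model, "model", max_words=3))  # model reads the
```

The counts are learned first and reused later for any prompt, which mirrors the split between pre-training and use. Nothing in the table is a stored answer; the output is composed one word at a time.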

Transformer

“Transformer” refers to a neural network design introduced in the 2017 paper “Attention Is All You Need.” The NeurIPS abstract describes a network architecture based on attention mechanisms rather than recurrence or convolution.[4]

For a beginner, the key idea is simple: a transformer helps the model weigh relationships among pieces of input. In language, that means the model can connect a pronoun to a noun, a question to a constraint, or a later instruction to an earlier detail.
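The weighing described above can be sketched with the scaled dot-product attention formula from the 2017 paper, reduced to a single query. The two-dimensional vectors below are invented by hand so that the pronoun “she” points toward “Maria”; in a real model these vectors are learned, are much larger, and pass through many attention heads and layers.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # Subtract the max for numerical stability.
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # The output is a weighted average of the value vectors.
    mixed = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, mixed


# Hypothetical vectors for three tokens (assumed, not learned).
tokens = ["Maria", "dropped", "she"]
keys = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query_for_she = [2.0, 0.0]

weights, mixed = attention(query_for_she, keys, values)
for token, weight in zip(tokens, weights):
    print(f"{token}: {weight:.2f}")
```

In this toy setup the largest weight lands on “Maria,” so the representation of “she” is built mostly from the noun it refers to.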

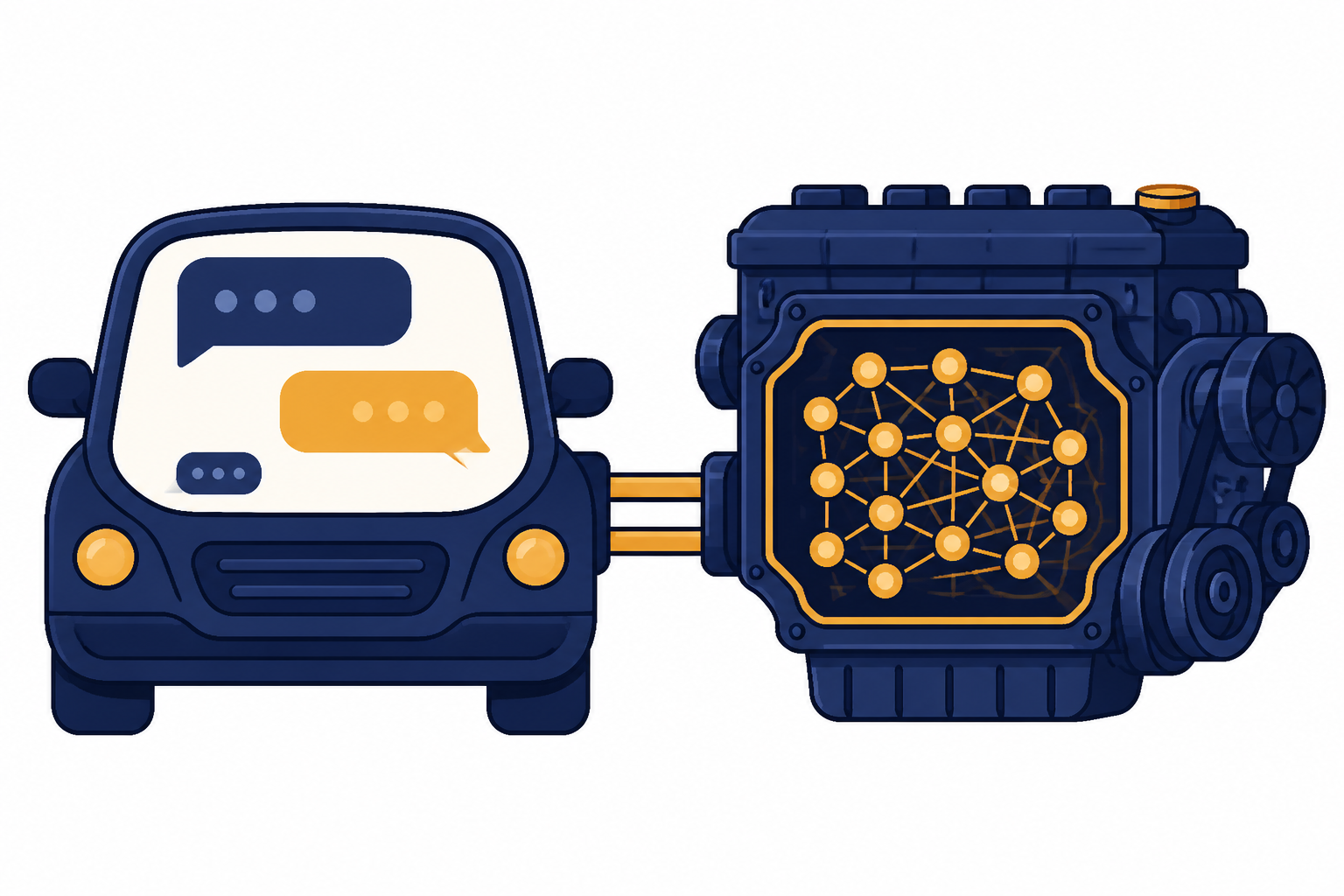

ChatGPT vs. GPT

ChatGPT and GPT are related, but they are not identical. GPT is the model-family term. ChatGPT is the assistant product built around conversational use.

Think of GPT as the engine type and ChatGPT as the vehicle you drive. The vehicle has an interface, safety rules, conversation history, file tools, voice options, and product settings. The engine supplies much of the language capability, but the product experience includes more than the model alone.

OpenAI’s first ChatGPT post called ChatGPT a model that interacts in a conversational way and described it as a sibling model to InstructGPT, which was trained to follow an instruction in a prompt and provide a detailed response.[2] For a deeper model-family explanation, read what GPT means as a model type.

| Term | Best definition | Example use | Common mistake |
|---|---|---|---|
| ChatGPT | The conversational assistant product from OpenAI | “I used ChatGPT to summarize a report.” | Treating it as only one static model forever. |
| GPT | A generative pre-trained transformer model family or model type | “That app uses a GPT model.” | Using GPT as a synonym for every AI system. |
| LLM | A large language model category | “ChatGPT is powered by large language models.” | Assuming every LLM has the same tools or safety behavior. |
| AI assistant | A product that uses models to help users complete tasks | “The assistant can help draft, analyze, and plan.” | Assuming it understands everything like a person. |

The distinction matters when you compare products. A chatbot may use a GPT-style model without being ChatGPT. ChatGPT may also include features that are not explained by the acronym alone, such as file analysis, browsing, memory settings, or voice interaction. For the broader beginner definition, see what ChatGPT is.

Why the transformer part matters

The transformer part of the name is the most technical piece, but it is also a major reason GPT-style systems became practical at large scale. Earlier language systems, such as recurrent neural networks, typically processed a sequence one step at a time, which slowed training and made long-range relationships harder to capture. Transformers use attention to relate every position in a sequence to every other position, and much of that work can run in parallel.

The original transformer paper presented attention-based models that were more parallelizable and required less training time than the recurrent or convolutional sequence models it compared against.[4] That does not mean every transformer is a chatbot. It means the architecture became a useful building block for many modern AI systems.

In ChatGPT, the transformer idea helps with tasks like keeping a writing style consistent, connecting a user’s follow-up question to the previous answer, and balancing multiple instructions in one prompt. It still has limits. A model can miss details, overemphasize the wrong part of a prompt, or produce a confident answer that needs verification.

The transformer also works on tokens, not whole human thoughts. OpenAI explains that tokens are the building blocks of text that its models process, and that tokens may be as short as one character or as long as one full word depending on language and context.[6] If you want a more practical explanation, read what a token is in ChatGPT.
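A toy example shows why token counts and word counts differ. The splitter below is not OpenAI’s tokenizer, which can also break a single word into several pieces; it is a simplified stand-in that separates words from punctuation, and the function name `toy_tokenize` is hypothetical.

```python
import re


def toy_tokenize(text):
    """Split text into words and punctuation marks (illustration only)."""
    return re.findall(r"\w+|[^\w\s]", text)


tokens = toy_tokenize("What does ChatGPT stand for?")
print(tokens)       # ['What', 'does', 'ChatGPT', 'stand', 'for', '?']
print(len(tokens))  # 6
```

The sentence has five words but six tokens here because the question mark counts separately. Real tokenizers produce different splits, so use this only to build intuition.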

How the name connects to training

The name “Chat Generative Pre-trained Transformer” tells you about the model’s origin, but not the whole training process. A useful assistant normally needs more than pre-training. It needs post-training, evaluation, safety work, and product design.

OpenAI’s technology explainer describes foundation model development as including pre-training to teach abilities such as prediction, reasoning, and problem solving, followed by post-training to align the model to human values and preferences and make it useful, effective, and safe.[7]

One important post-training method is reinforcement learning from human feedback, often shortened to RLHF. OpenAI’s InstructGPT work describes using human demonstrations and human rankings of model outputs to fine-tune GPT-3, with the goal of making models more helpful and aligned with user intent.[5] You can read our separate beginner explanation of RLHF and human feedback.
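The role of human rankings can be sketched as follows. A ranking of several outputs is turned into preferred-versus-rejected pairs, and a pairwise loss penalizes a reward model when it scores the rejected output higher. This is a simplified illustration of one common formulation, not OpenAI’s training code; the function names and the reward numbers are assumptions.

```python
import math
from itertools import combinations


def ranking_to_pairs(ranked_outputs):
    """Turn a best-to-worst ranking into (preferred, rejected) pairs."""
    return list(combinations(ranked_outputs, 2))


def pairwise_loss(reward_preferred, reward_rejected):
    """Equals -log(sigmoid(gap)): small when the preferred output scores higher."""
    gap = reward_preferred - reward_rejected
    return math.log(1.0 + math.exp(-gap))


# A labeler ranked three candidate answers from best to worst.
pairs = ranking_to_pairs(["answer A", "answer B", "answer C"])
print(pairs)

# Hypothetical reward scores for one pair.
print(pairwise_loss(2.0, 0.0))  # Reward model agrees with the labeler.
print(pairwise_loss(0.0, 2.0))  # Reward model disagrees: larger loss.
```

Training on many such pairs pushes the reward model toward human preferences, and that reward signal can then guide further fine-tuning of the language model.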

This is why the word “pre-trained” should not be read as “finished after pre-training.” Pre-training builds the broad capability. Post-training shapes how that capability appears in a product. ChatGPT’s conversational behavior depends on both.

What the name does not mean

The acronym is helpful, but it can also mislead if you treat it as a complete explanation. Here are the main misunderstandings to avoid.

- It does not mean ChatGPT is a human-like mind. The system generates responses based on learned patterns, instructions, context, and tools. It can be useful without having human understanding.

- It does not mean every answer is true. A generative system can produce fluent errors. Use citations, primary sources, and domain experts for important claims.

- It does not mean GPT is the same as all AI. GPT is one major family of model technology. AI also includes vision systems, speech systems, robotics, recommendation models, and many other approaches.

- It does not mean the model only chats. Chat is the interface, but the same assistant can help with writing, coding, analysis, planning, and multimodal tasks when those features are available.

- It does not mean pre-training is the only training step. Assistant behavior also depends on post-training, safety evaluations, and product rules.

The name also does not explain newer product capabilities by itself. When ChatGPT can work with images, audio, or files, those abilities involve multimodal systems and product features beyond the original acronym. Our guide to multimodal AI covers that shift.

Simple examples

These examples show how the words in “Chat Generative Pre-trained Transformer” map to everyday use.

Example 1: You ask for a rewrite

You paste a rough email and ask ChatGPT to make it concise. “Chat” is the back-and-forth interface. “Generative” is the new draft. “Pre-trained” is the broad language ability that helps the model recognize tone and structure. “Transformer” is part of the architecture that weighs the relationship between your instruction, your original text, and the requested style.

Example 2: You ask a follow-up question

You ask for a lesson plan, then say, “Make it suitable for fourth grade.” The assistant uses the conversation context to revise the answer. This is why ChatGPT feels conversational rather than one-shot.

Example 3: You ask for a technical explanation

You ask, “Explain APIs like I am a new product manager.” ChatGPT generates a tailored explanation. It is not looking up one fixed paragraph unless a search or retrieval tool is involved. It is composing a response from the prompt, the context, and the model’s learned patterns.

Example 4: You attach a document

You upload meeting notes and ask for action items. The “ChatGPT” product experience may include file handling, but the acronym alone does not describe every tool in the workflow. For retrieval-based workflows, see what RAG means.

Related terms worth knowing

Once you understand what ChatGPT stands for, several nearby terms become easier to separate.

| Term | Short meaning | Why it matters |
|---|---|---|
| Large language model | A model trained to process and generate language at scale | It is the broader category behind many chat assistants. |
| Prompt | The instruction or input you give the model | Better prompts usually make the task clearer. |
| Token | A piece of text processed by the model | Token limits affect long documents, cost, and context size. |
| Context window | The amount of information a model can consider at once | It explains why long chats may lose earlier detail. |
| Fine-tuning | Additional training for a narrower behavior or domain | It can adapt a model for specific tasks. |
| AI agent | A system that can plan and act across steps | It goes beyond answering a single prompt. |

For the broader category, start with what a large language model is. If you want to write better instructions, read our prompt engineering guide. If you are comparing assistant systems that can take actions, see what an AI agent is.

It also helps to separate the product from the organization. ChatGPT was developed by OpenAI, and OpenAI’s own technology explainer says the organization was created in 2015 to ensure that artificial general intelligence benefits everyone.[7] For company background, read what OpenAI is, who created ChatGPT, and when ChatGPT was released.

Frequently asked questions

What does ChatGPT stand for exactly?

ChatGPT stands for Chat Generative Pre-trained Transformer. “Chat” refers to the conversational product interface. “GPT” stands for Generative Pre-trained Transformer.

Does GPT stand for general purpose technology?

No. In this context, GPT stands for Generative Pre-trained Transformer. “General purpose technology” is a separate phrase used in economics and policy discussions, not the expansion of GPT in ChatGPT.

Is ChatGPT the same thing as GPT?

No. GPT refers to a model family or model type. ChatGPT is the assistant product that uses models, an interface, instructions, safety systems, and product features to support conversation.

Who made ChatGPT?

ChatGPT was made by OpenAI. OpenAI introduced ChatGPT publicly on November 30, 2022.[2] The product has changed substantially since the original research release.

Why is it called pre-trained?

It is called pre-trained because the model learns broad patterns before it is adapted for specific assistant behavior. OpenAI describes foundation model development as including pre-training followed by post-training for usefulness, safety, and alignment.[7]

Does the acronym explain everything ChatGPT can do?

No. The acronym explains the name and the core model lineage, but not every product feature. Tools such as file analysis, image handling, memory settings, browsing, and agent-like workflows are product capabilities layered around the model.