Multimodal AI is artificial intelligence that can work with more than one type of information at the same time. Instead of only reading text, a multimodal system may also interpret images, listen to speech, analyze video, or produce spoken and visual outputs. This matters because real work rarely arrives in one clean format. A support request may include a screenshot, a voice note, and a written question. A medical chart may include notes, scans, and lab values. Multimodal AI tries to connect those signals so the model can answer with more context than a text-only system can use.

Multimodal AI, defined simply

Multimodal AI is an AI system that can process, connect, and sometimes generate multiple kinds of data. Each kind of data is called a modality. Text is one modality. Images are another. Audio, video, code, tables, sensor readings, and user actions can also be modalities.

A text-only chatbot receives words and returns words. A multimodal chatbot might receive a typed question plus a photo, then answer in text or voice. A more advanced system might watch a short video, listen to the spoken audio, inspect the visible objects, and produce a written summary.

The key idea is not just accepting many file types. A true multimodal system should connect information across them. If a user uploads a picture of a damaged bicycle and asks, “What part do I need?”, the model has to combine the visual evidence with the written question. Research on multimodal alignment and fusion describes this as the problem of bringing different data streams into a form the model can compare, combine, and reason over.[8]

Multimodal AI overlaps with generative AI, but the terms are not identical. Generative AI describes systems that create outputs such as text, images, audio, or code. Multimodal AI describes the types of inputs and outputs the system can handle. A model can be generative, multimodal, both, or neither.

How multimodal AI works

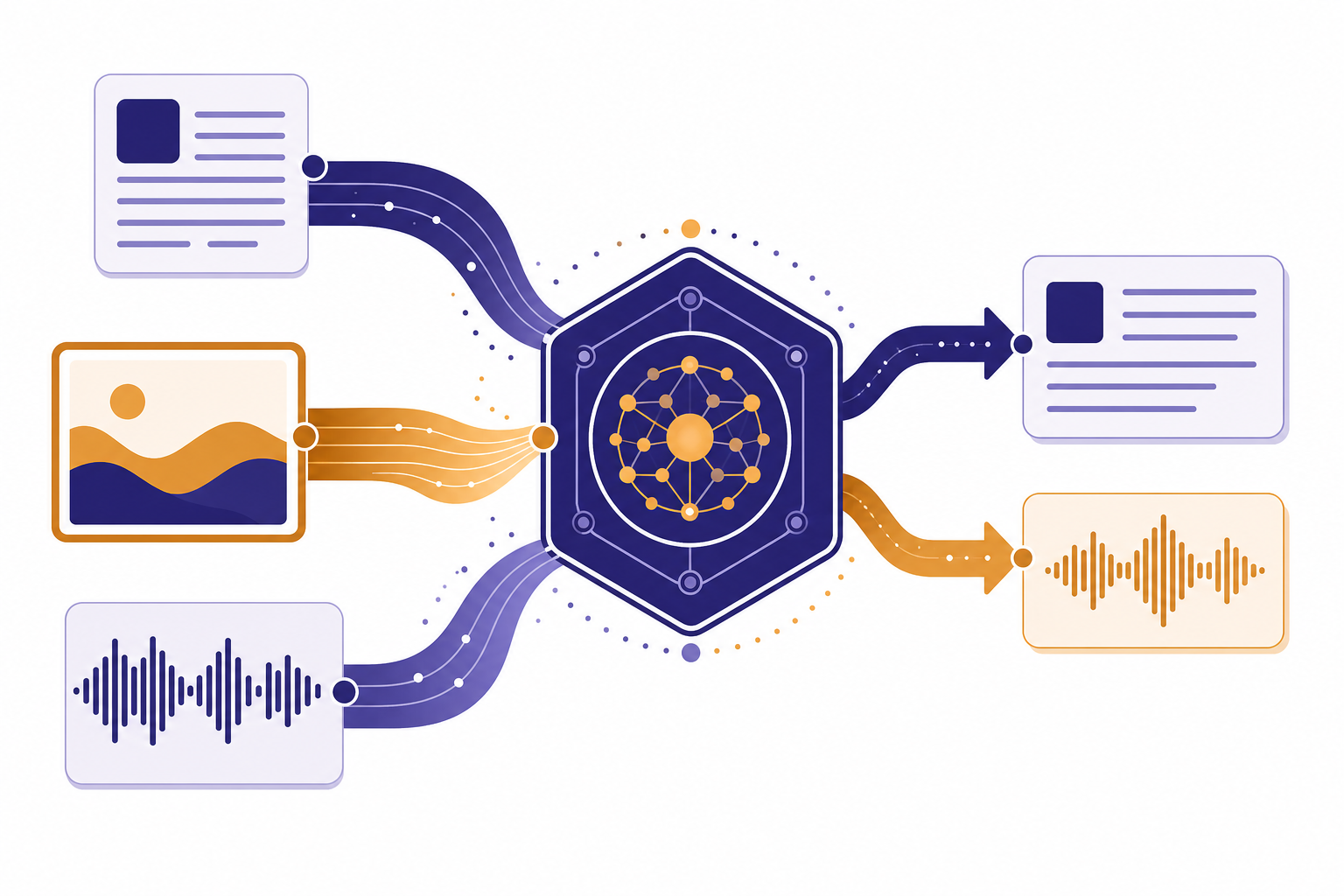

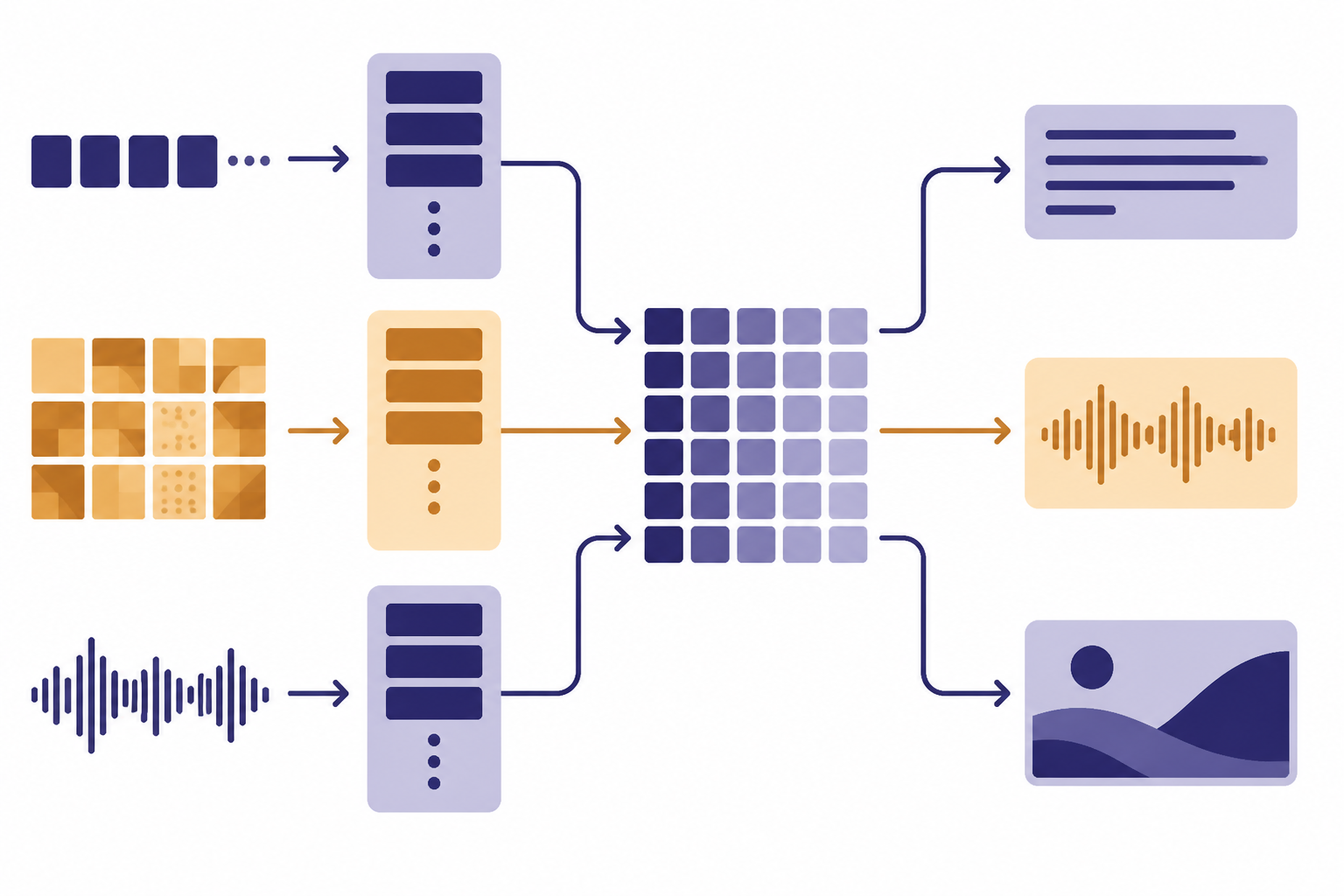

Most modern multimodal systems use specialized processing steps for each modality, then connect those signals inside a shared representation space. An image may pass through a vision encoder. Audio may pass through a speech or sound encoder. Text may be split into tokens and processed by a language model. The system then aligns the representations so the model can relate a red warning light in an image to the phrase “red warning light” in text.

One influential earlier approach was CLIP, which OpenAI introduced as a system for connecting text and images by learning visual concepts from natural language supervision.[9] CLIP did not make today’s voice-and-vision assistants by itself, but it helped popularize a practical pattern: train models so related images and descriptions sit near each other in a shared representation space.
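
The shared-space idea can be illustrated with a toy example. This is a minimal sketch, not CLIP itself: the three-number vectors below are hand-made stand-ins for what real image and text encoders would produce, and `best_caption` is a hypothetical helper name. It only shows how cosine similarity picks the caption whose vector sits closest to an image vector.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_caption(image_vec, captions):
    """Pick the caption whose embedding is most similar to the image embedding."""
    return max(captions, key=lambda text: cosine_similarity(image_vec, captions[text]))


# Assumption: made-up embeddings. A real system would get these from trained encoders.
image_embedding = [0.9, 0.1, 0.2]  # pretend this encodes a photo of a red warning light
caption_embeddings = {
    "a red warning light": [0.8, 0.2, 0.1],
    "a bowl of fruit": [0.1, 0.9, 0.3],
}

print(best_caption(image_embedding, caption_embeddings))  # prints: a red warning light
```

Real embeddings have hundreds or thousands of dimensions, but the comparison step works the same way.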

Modern multimodal AI can go beyond matching captions to pictures. Some systems are built as native multimodal models, where the model is trained from the start on multiple data types. Other systems are pipelines that connect separate models. For example, one component may transcribe speech, another may reason over the transcript, and another may synthesize spoken output.
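
A pipeline of this kind can be sketched as three stages chained together. The code below is an illustration under stated assumptions: `run_voice_pipeline` and the `fake_*` stages are hypothetical names, and the stand-in stages only shuffle bytes and strings so the example runs without any speech or language model.

```python
from typing import Callable


def run_voice_pipeline(
    audio: bytes,
    transcribe: Callable[[bytes], str],
    reason: Callable[[str], str],
    synthesize: Callable[[str], bytes],
) -> bytes:
    """Chain speech-to-text, text reasoning, and text-to-speech stages."""
    transcript = transcribe(audio)  # stage 1: audio in, text out
    answer = reason(transcript)     # stage 2: text in, text out
    return synthesize(answer)       # stage 3: text in, audio out


# Assumption: placeholder stages. A real app would call separate models here.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_reason(transcript: str) -> str:
    return f"You asked: {transcript}"


def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")


spoken_reply = run_voice_pipeline(
    b"what part do I need?", fake_transcribe, fake_reason, fake_synthesize
)
print(spoken_reply)  # prints: b'You asked: what part do I need?'
```

Because each stage only sees the previous stage's output, a transcription mistake in stage 1 carries through to the final answer. A natively multimodal model avoids that handoff.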

Researchers often describe multimodal design in terms of fusion. Early fusion combines raw or low-level signals near the beginning. Intermediate fusion combines learned features inside the model. Late fusion lets separate models make partial judgments, then combines the results near the end. Current systems often mix these patterns rather than using only one.[8]

| Architecture pattern | How it works | Plain-English example | Main tradeoff |
|---|---|---|---|
| Early fusion | Combines inputs close to the raw data stage. | A robot merges camera and depth-sensor signals before deciding what object it sees. | Can capture fine detail, but different data types can be hard to align. |
| Intermediate fusion | Combines learned features after each modality has been partly processed. | A model reads a chart image and the text around it, then reasons over both together. | Often powerful, but harder to design and evaluate. |
| Late fusion | Combines decisions from separate models. | One model scores an image, another scores a transcript, and a final step merges the scores. | Easier to build, but may miss subtle cross-modal clues. |
| Tool-chain multimodality | Uses separate tools for speech, vision, text, or image generation. | A voice app transcribes speech, sends text to a language model, then reads the answer aloud. | Flexible, but errors can compound between tools. |
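
The difference between early and late fusion is easier to see in code. This is a simplified sketch with hypothetical function names and invented numbers: early fusion is reduced to concatenating feature vectors, and late fusion to a weighted average of per-modality scores. Real systems use learned layers for both steps.

```python
def early_fusion(image_features, audio_features):
    """Join low-level features into one vector before any single model sees them."""
    return list(image_features) + list(audio_features)


def late_fusion(scores, weights):
    """Merge per-modality scores into one score with a weighted average."""
    total_weight = sum(weights[name] for name in scores)
    return sum(scores[name] * weights[name] for name in scores) / total_weight


# Assumption: invented feature values and scores, for illustration only.
fused_input = early_fusion([0.2, 0.7], [0.5])
print(fused_input)  # prints: [0.2, 0.7, 0.5]

# Each modality was scored by its own model; the final step merges the scores.
scores = {"image": 0.8, "transcript": 0.4}
weights = {"image": 1.0, "transcript": 1.0}
print(round(late_fusion(scores, weights), 2))  # prints: 0.6
```

In the late-fusion case, neither model ever sees the other modality, which is why subtle cross-modal clues can be lost.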

What vision, voice, and text each add

Text gives the model explicit instructions. It is precise, searchable, and easy to quote. Text is still the best modality for policies, contracts, code, recipes, prompts, and step-by-step instructions. If you want to understand the language side more deeply, start with what GPT means and what a token is in ChatGPT.

Vision gives the model access to visible structure. A vision-capable model can inspect screenshots, forms, product photos, diagrams, whiteboards, charts, and images embedded in documents. OpenAI’s image and vision documentation describes API paths for analyzing image inputs and generating image outputs, including multimodal applications that combine images with text or audio.[3]

Voice adds timing, tone, and hands-free interaction. Audio can be used as input, output, or both. OpenAI’s audio documentation describes applications for speech-to-speech voice agents, transcription, and text-to-speech, and notes that some models are natively multimodal across audio and other modalities.[4]

Video is often treated as a combination of visual frames, motion, timing, and audio. A video-capable system may need to understand what appears, what changes, what is said, and when events happen. That makes video especially useful, but also computationally demanding.

The most useful multimodal systems combine these strengths. A user can speak a messy question, show the model a broken part, and ask for a repair plan. The model can use the spoken intent, visible details, and general knowledge together. A text-only model would need the user to describe every relevant detail manually.

Examples of multimodal AI systems

GPT-4V was an OpenAI vision capability that allowed users to instruct GPT-4 to analyze image inputs.[2] It was an important step because it brought image understanding into a GPT-style assistant workflow. Users could ask questions about screenshots, pictures, documents, and diagrams rather than only typing descriptions.

GPT-4o moved the idea further. OpenAI announced GPT-4o on May 13, 2024, describing it as a model that can reason across audio, vision, and text in real time and accept combinations of text, audio, image, and video as inputs.[1] That announcement made “multimodal AI” a mainstream product term, not just a research phrase.

OpenAI’s API documentation also separates practical multimodal tasks into image-related and audio-related workflows. For speech-to-text, the documentation lists transcription and translation endpoints and includes models such as gpt-4o-mini-transcribe, gpt-4o-transcribe, and gpt-4o-transcribe-diarize.[5]

Google has described Gemini as built from the ground up to be multimodal, able to understand and combine text, images, audio, video, and code.[6] Anthropic’s Claude documentation describes vision capabilities that allow Claude models to understand and analyze images.[7] These examples show that multimodality is now a core direction across major AI labs, not a feature limited to one company.

Earlier systems also matter. OpenAI introduced DALL·E on January 5, 2021, as a 12-billion-parameter version of GPT-3 trained to generate images from text descriptions.[10] That is a different kind of multimodality: the input is language, but the output is an image. It helped make cross-modal generation familiar to everyday users.

Multimodal AI compared with related AI terms

Multimodal AI overlaps with several common AI terms, but each term answers a different question. The easiest way to separate them is to ask what the term is describing: the data types, the model family, the retrieval method, the training method, or the system behavior.

| Term | What it describes | Simple example | How it relates to multimodal AI |
|---|---|---|---|
| Multimodal AI | The system can work across multiple data types. | A model answers a question about a screenshot and a voice note. | This is the main concept in this article. |
| Large language model | A model centered on language prediction and generation. | A chatbot drafts an email from a typed prompt. | An LLM can be text-only or extended with multimodal abilities. See what an LLM is. |
| Generative AI | A system that creates new content. | A model writes a summary or creates an image. | Many multimodal systems are generative, but multimodality is about input and output types. |
| RAG | A method that retrieves external information before answering. | A support bot searches a help center before replying. | RAG can be text-only or multimodal. See retrieval-augmented generation. |
| AI agent | A system that can plan steps and use tools toward a goal. | An assistant reads an email, checks a calendar, and drafts a reply. | An agent can use multimodal perception as one input source. See what an AI agent is. |
| Fine-tuning | A training method for adapting a model to a task or style. | A company adapts a model to classify its internal tickets. | Fine-tuning may be applied to text or multimodal systems. See fine-tuning in AI. |

This distinction helps avoid a common mistake. “Multimodal” does not automatically mean “smarter.” It means the system has access to more kinds of signals. Whether that improves the answer depends on model quality, the task, the prompt, and the reliability of the input.

Common uses for multimodal AI

Multimodal AI is most useful when the missing context is visual, spoken, or spread across formats. It can reduce the burden on users because they no longer have to translate everything into text before asking for help.

- Screenshot troubleshooting. A user can upload an error screen and ask what to try next. The model can inspect buttons, warnings, layout, and typed instructions together.

- Document review. A model can read typed text, tables, stamps, diagrams, and handwritten notes in the same file, though accuracy still needs verification.

- Accessibility support. A vision model can describe a scene, label objects, or summarize a photo for a user who cannot easily inspect it visually.

- Learning and tutoring. A student can show a math worksheet, speak a question, and receive a guided explanation.

- Customer service. A customer can send a photo of a device, a serial label, or a damaged shipment rather than writing a long description.

- Creative work. A user can combine a text brief, reference images, and spoken direction to explore ideas faster.

Developers also use multimodal models in apps that sit outside ChatGPT. OpenAI’s vision API is one example, where image understanding becomes a product feature rather than a standalone chatbot feature. Teams that compare models for production work should also look at GPT model comparisons and context window sizes, because multimodal inputs can consume more context than plain text.

Limits, risks, and safety issues

Multimodal AI can still be wrong. It may misread small text in an image, miss an object, invent a detail that is not visible, or overstate certainty. Vision does not make a model an eyewitness. Audio does not make it a perfect listener. A blurry photo, noisy recording, cropped screenshot, or ambiguous prompt can lead to a confident but false answer.

OpenAI’s GPT-4V system card describes safety work around vision capabilities and discusses risks tied to image inputs, including cases where models may produce incorrect or sensitive inferences.[2] The same general caution applies to other multimodal systems. If a model is asked to identify a person, infer private traits, diagnose a medical issue, or judge legal evidence from an image, the stakes rise quickly.

Multimodal systems also raise privacy concerns. Images and audio can contain more personal information than a short text prompt. A screenshot may include names, addresses, account numbers, browser tabs, or workplace data. A voice recording may include background speakers or location clues. Users should crop, redact, or summarize sensitive material before uploading it when possible.

There is also a cost and latency tradeoff. Images, audio, and video often require more processing than plain text. A model that handles many modalities may be slower or more expensive than a smaller text-only model for simple tasks. For a typed question like “rewrite this sentence,” multimodal AI adds little value.
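
One way to act on this tradeoff is to route each request by what it actually contains. The sketch below is only an illustration: the `Request` class, the `choose_model` function, and the model labels are hypothetical, not names from any real product or API.

```python
from dataclasses import dataclass, field

# Assumption: attachment kinds are plain strings supplied by the calling app.
NON_TEXT_KINDS = {"image", "audio", "video"}


@dataclass
class Request:
    text: str
    attachments: list = field(default_factory=list)  # e.g. ["image", "audio"]


def choose_model(request: Request) -> str:
    """Send requests with non-text attachments to the multimodal model."""
    if any(kind in NON_TEXT_KINDS for kind in request.attachments):
        return "multimodal-model"  # hypothetical label
    return "text-only-model"       # hypothetical label


print(choose_model(Request("rewrite this sentence")))               # prints: text-only-model
print(choose_model(Request("what part do I need?", ["image"])))     # prints: multimodal-model
```

A production router would likely weigh more than attachment type, such as task difficulty or latency targets, but the principle is the same: do not pay for modalities the request does not use.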

The safest habit is to treat multimodal output as an informed draft. Verify important claims against the original image, audio, document, or a trusted source. This is especially important for medical, legal, financial, workplace, and safety-critical decisions.

How to use multimodal AI well

Good multimodal prompting is mostly good communication. Tell the model what to look at, what to ignore, and what output you need. Do not assume it knows which part of an image or recording matters.

- Point to the relevant area. Say “focus on the table in the lower half” or “ignore the browser tabs at the top.”

- State the task. Ask for a diagnosis of a spreadsheet error, a summary of a chart, a repair checklist, or a transcription review.

- Ask for uncertainty. Use wording such as “tell me what you can verify from the image and what is only a guess.”

- Provide context in text. If the image is a screenshot from a tool, name the tool and describe what you were trying to do.

- Use cleaner inputs. Crop images, improve lighting, upload higher-resolution files, and reduce background noise when possible.

- Check the answer. Compare the output with the original material before acting on it.
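
The checklist above can be turned into a small prompt template. This is a minimal sketch, assuming you assemble the text part of the prompt yourself and attach the image separately. The function name `build_image_prompt` and its parameters are made up for illustration.

```python
def build_image_prompt(task, focus=None, ignore=None, context=None, ask_uncertainty=True):
    """Assemble the text that accompanies an uploaded image."""
    parts = []
    if context:
        parts.append(f"Context: {context}")       # provide context in text
    parts.append(f"Task: {task}")                 # state the task
    if focus:
        parts.append(f"Focus on: {focus}")        # point to the relevant area
    if ignore:
        parts.append(f"Ignore: {ignore}")
    if ask_uncertainty:                           # ask for uncertainty
        parts.append(
            "Tell me what you can verify from the image and what is only a guess."
        )
    return "\n".join(parts)


prompt = build_image_prompt(
    task="Summarize the chart.",
    focus="the table in the lower half",
    ignore="the browser tabs at the top",
    context="Screenshot from a spreadsheet tool while building a budget.",
)
print(prompt)
```

The template does not replace the last step in the list. Someone still has to compare the answer with the original image before acting on it.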

For text prompts, the same basic skills still apply. Clear instructions, examples, constraints, and desired format can improve the answer. If you want a deeper beginner guide, read what prompt engineering is.

Multimodal AI is strongest when each modality contributes something real. Use text for instructions, images for visible evidence, audio for speech or sound, and documents for structure. If one modality does not help the task, leave it out.

Frequently asked questions

Is ChatGPT multimodal?

Some ChatGPT experiences are multimodal when they support images, voice, file uploads, or other non-text inputs. The exact capabilities depend on the model, product surface, account type, and date. The concept is broader than ChatGPT, though; other systems also support multimodal inputs and outputs.

Is multimodal AI the same as a large language model?

No. A large language model is centered on language, while multimodal AI is defined by the ability to work across multiple data types. Some modern LLM-based systems have multimodal features. Others remain mostly text-based.

Does multimodal AI understand images like a person does?

No. It can detect patterns and make useful inferences, but it does not see with human experience or judgment. It can miss obvious details, misread text, or infer things that are not actually present. Treat its visual answers as assistive, not authoritative.

What is the difference between vision AI and multimodal AI?

Vision AI focuses on images or video. Multimodal AI combines vision with other modalities, such as text, speech, audio, or structured data. A system that only detects objects in photos is vision AI; a system that discusses the photo in a conversation is multimodal.

Why does multimodal AI matter?

It matters because many real tasks are not text-only. People use screenshots, photos, charts, spoken questions, PDFs, and videos to explain problems. Multimodal AI can reduce manual description work and give the model more context.

Can multimodal AI generate images and audio?

Some systems can generate images, audio, or both. Others can only analyze those inputs and respond in text. Always check the specific model or product documentation, because “multimodal” does not guarantee every input and output combination.