RAG stands for retrieval-augmented generation. It is a way to make an AI system look up relevant information before it writes an answer. Instead of relying only on what a language model learned during training, a RAG system retrieves passages from documents, databases, websites, tickets, manuals, or other approved sources, then gives those passages to the model as context. The model uses that context to generate a more grounded response. RAG is common in customer support bots, internal knowledge assistants, legal research tools, product documentation search, and AI apps that need current or private information. It does not make AI perfectly accurate, but it gives the system better evidence to work from.

What RAG means

RAG is an architecture pattern for AI applications. The word retrieval means the system searches for relevant source material. Augmented means the search results are added to the model’s prompt as extra context. Generation means the model writes the final answer.

The basic idea is simple. A standalone model answers from its trained knowledge and the conversation in front of it. A RAG system gives the model a small packet of supporting material first. That packet might include a section from a policy manual, a paragraph from a product spec, a clause from a contract, or a few rows from a database.

The original RAG paper, Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks, appeared in 2020 and described RAG models as systems that combine pretrained parametric memory with non-parametric memory for language generation.[1] In plain English, the model keeps what it learned during training in its weights (parametric memory), and it can also consult an external store of information (non-parametric memory) when it needs facts.

That external store is what makes RAG useful for real organizations. A model may know general facts about software, medicine, law, or finance. It does not automatically know your refund policy, current price sheet, release notes, internal HR handbook, or latest support incidents. RAG gives the application a controlled way to retrieve that information at answer time.

If you are new to the model side of this, start with what an LLM is and what generative AI means. RAG sits on top of those concepts. It does not replace the language model. It changes what information the model sees before it responds.

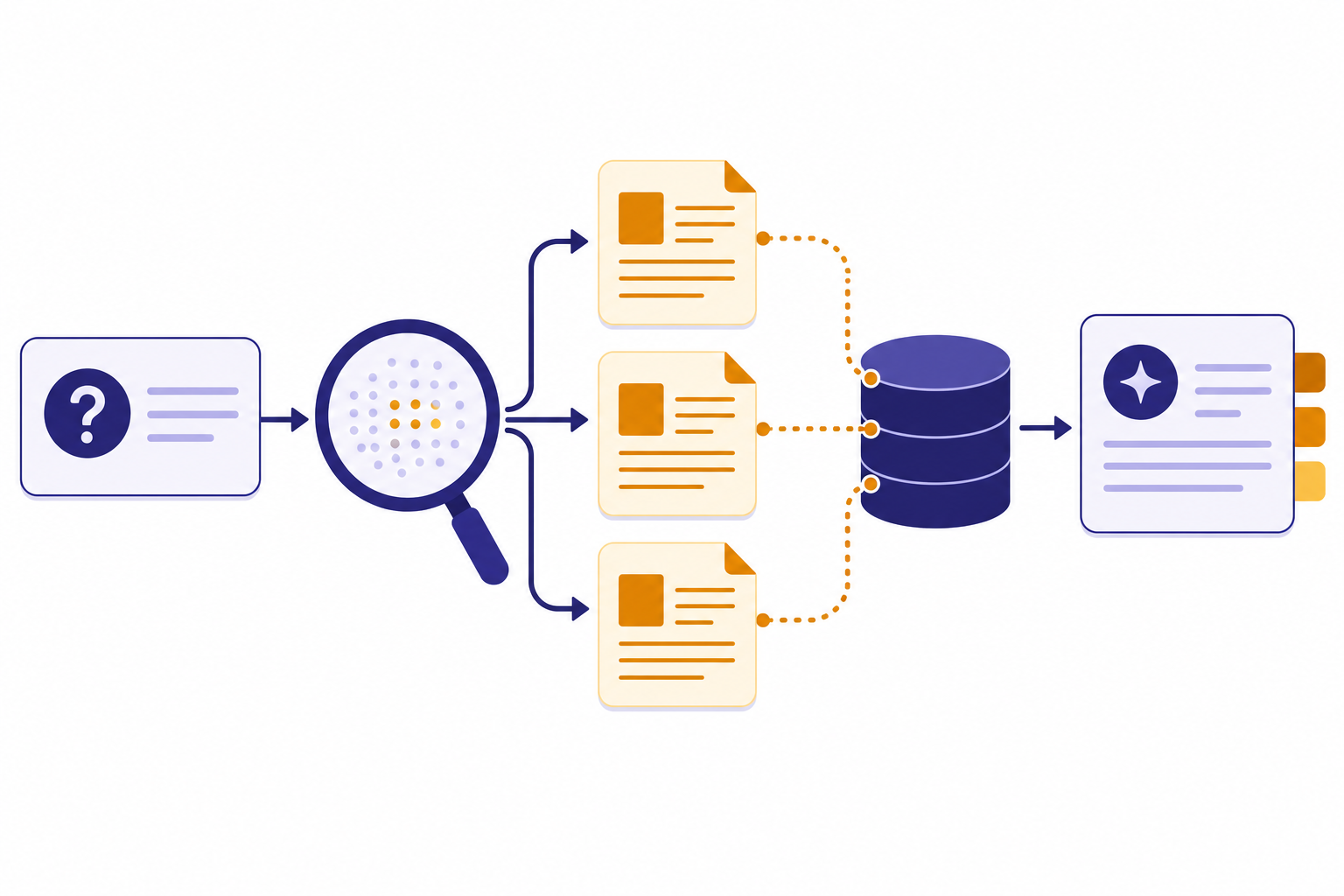

How RAG works

A typical RAG pipeline has two phases. The first phase prepares the knowledge base. The second phase answers user questions.

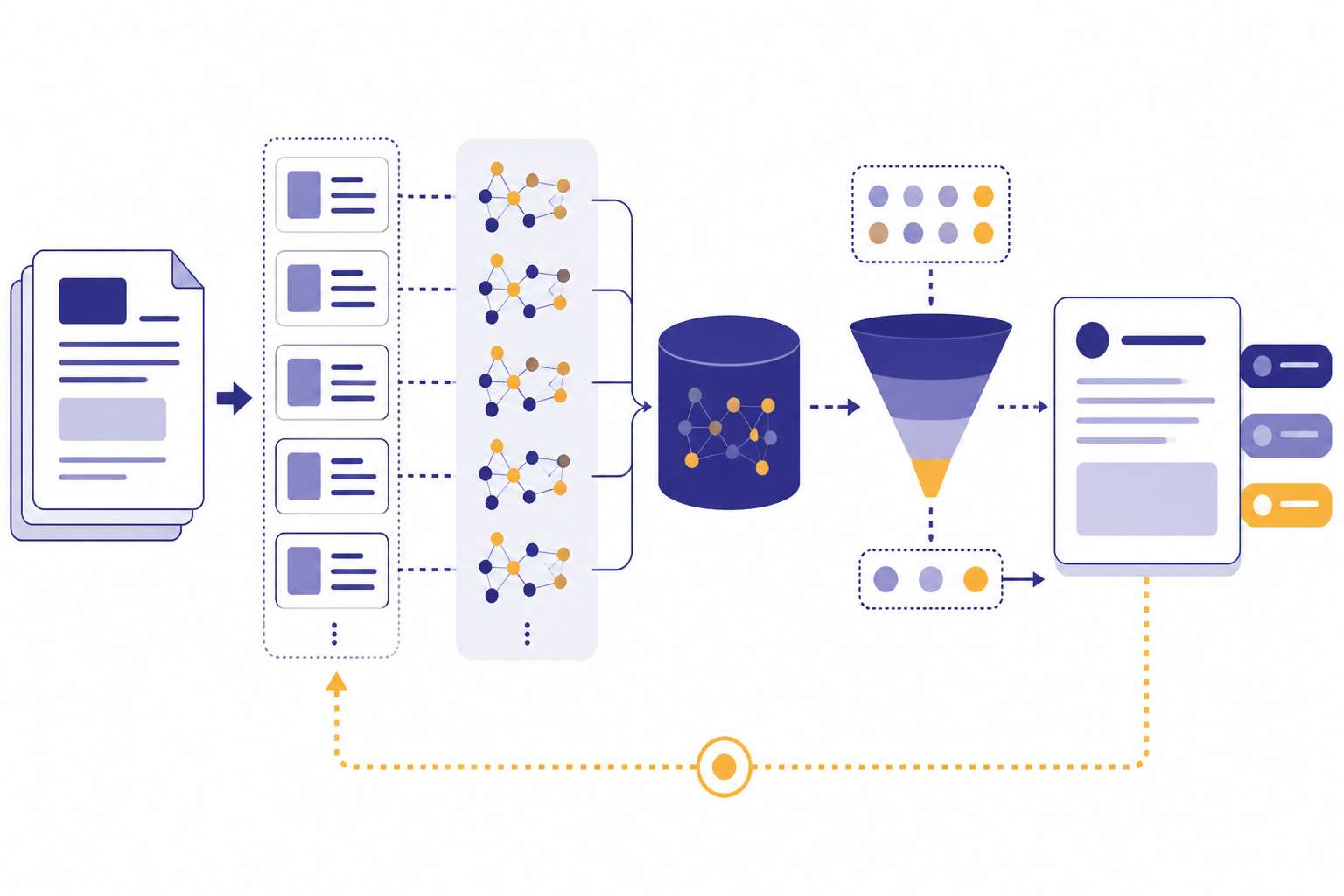

Preparation phase

- Collect the source material. The team chooses approved documents, web pages, database records, tickets, or other reference material.

- Clean and split the material. Long documents are broken into smaller chunks so the system can retrieve focused passages instead of entire files.

- Create embeddings or indexes. Many RAG systems convert chunks into embeddings, which are numerical representations used for semantic search. Some systems also keep keyword indexes.

- Store the chunks. The text, metadata, and search representations go into a vector database, search engine, or managed retrieval service.

Answer phase

- The user asks a question. The application receives the question and may rewrite it for better search.

- The retriever searches the knowledge base. It finds passages that appear relevant to the question.

- The system builds an augmented prompt. It combines the user’s question, the retrieved passages, and instructions for how to answer.

- The model generates a response. The model writes an answer using both its general language ability and the retrieved context.

- The app may show citations. A well-designed RAG app often links the answer back to the source passages.
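
The two phases above can be sketched in a few lines of Python. This is a toy illustration, not a production design: it assumes word-count vectors and cosine similarity as a stand-in for a real embedding model, an in-memory list instead of a vector database, and it stops at building the prompt rather than calling a model. All function names and the sample documents are hypothetical.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def vectorize(text):
    """Term-count vector; an assumed stand-in for a real embedding model."""
    return Counter(tokenize(text))

def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def build_index(documents):
    """Preparation phase: split documents into paragraph chunks and store vectors."""
    index = []
    for title, text in documents.items():
        for chunk in (p.strip() for p in text.split("\n\n")):
            if chunk:
                index.append({"source": title, "text": chunk, "vector": vectorize(chunk)})
    return index

def retrieve(index, question, k=2):
    """Answer phase: rank stored chunks by similarity to the question."""
    q = vectorize(question)
    ranked = sorted(index, key=lambda c: cosine(q, c["vector"]), reverse=True)
    return ranked[:k]

def build_prompt(question, chunks):
    """Combine instructions, retrieved passages, and the user's question."""
    context = "\n".join(f"[{c['source']}] {c['text']}" for c in chunks)
    return (
        "Answer using only the sources below.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )

# Hypothetical sample knowledge base.
docs = {
    "refund-policy": "Refunds are issued within 14 days of purchase.\n\n"
                     "Gift cards are not refundable.",
    "shipping-guide": "Standard shipping takes 5 business days.",
}
index = build_index(docs)
question = "When are refunds issued?"
top = retrieve(index, question, k=1)
prompt = build_prompt(question, top)
print(top[0]["source"])  # refund-policy
```

Word overlap is a crude relevance signal. A question phrased as "How long do I have to return something?" shares no words with the refund chunk and would score zero against it, which is the gap that embeddings are meant to close.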

Google Cloud describes RAG as a framework that combines traditional information retrieval, such as search and databases, with generative large language models.[5] AWS similarly defines RAG as a process that makes a large language model reference an authoritative knowledge base outside its training data before generating a response.[6] These definitions point to the same core pattern: search first, answer second.

RAG is different from pasting a giant document into a chat. A large context window lets a model read more text in one request, but RAG tries to select only the most relevant text. That matters because context is not free. More context can increase cost, slow the answer, and distract the model if the retrieved passages are noisy. If you need the basics of how context is measured, see our guide to tokens in ChatGPT.

RAG compared with other AI methods

RAG is often confused with fine-tuning, prompt engineering, and long-context prompting. They solve different problems. A production AI system may use more than one of them.

| Method | What it changes | Best for | Weak spot |
|---|---|---|---|
| RAG | The information supplied to the model at answer time | Current, private, or source-grounded answers | Fails if retrieval finds the wrong material |
| Fine-tuning | The model’s learned behavior or task pattern | Consistent style, format, classification, or domain behavior | Not ideal for frequently changing facts |
| Prompt engineering | The instructions and examples in the request | Guiding reasoning, tone, output format, and constraints | Cannot provide facts the system does not have |
| Long-context prompting | The amount of text placed directly into the prompt | One-off analysis of long material | Can be costly, slow, and prone to distraction |

Use RAG when the main challenge is knowledge access. Use fine-tuning when the main challenge is behavior. Use prompt engineering when the model already has the needed information but needs clearer instructions. Use long-context prompting when the user supplies a specific document or conversation that the model must inspect directly.

For example, a support chatbot that must answer from the latest help center articles is a RAG candidate. A classifier that must label emails in a fixed format may be a fine-tuning candidate. A writing assistant that must produce a stricter summary may only need better prompting. A research assistant that must analyze a single long filing may need a large context window rather than a full retrieval system.

The main parts of a RAG system

A RAG system is more than a model connected to a folder of documents. The quality comes from the parts around the model.

Source documents

The source set defines what the system can know. Good RAG sources are current, readable, deduplicated, and clearly scoped. Bad source material creates bad answers. If two documents conflict, the system needs metadata or rules that tell it which source wins.
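
One way to encode "which source wins" is to attach precedence metadata to every document and resolve conflicts before the text reaches the model. The sketch below assumes two hypothetical fields, an authority rank and an effective date. Real systems may use different rules, such as document type, owner, or explicit supersedes links.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class SourceDoc:
    title: str
    authority: int   # hypothetical rank: higher wins (2 = official policy, 1 = blog)
    effective: date  # hypothetical "effective from" date
    text: str

def pick_winner(conflicting):
    """Prefer the most authoritative source; break ties with the newest date."""
    return max(conflicting, key=lambda d: (d.authority, d.effective))

# Made-up documents that disagree about the return window.
candidates = [
    SourceDoc("Blog post on returns", 1, date(2024, 6, 1), "Returns accepted for 60 days."),
    SourceDoc("Refund policy v1", 2, date(2023, 1, 1), "Returns accepted for 30 days."),
    SourceDoc("Refund policy v2", 2, date(2024, 3, 1), "Returns accepted for 14 days."),
]
winner = pick_winner(candidates)
print(winner.title)  # Refund policy v2
```

Note that the blog post is the newest document but still loses, because authority is compared before the date.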

Chunks

Chunking splits documents into smaller units. A chunk should be large enough to preserve meaning and small enough to retrieve precisely. A policy paragraph, a product feature section, or a troubleshooting step often works better than a random slice of text. Tables, PDFs, and slide decks need extra care because layout can carry meaning.
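
As a rough illustration, a chunker can pack whole paragraphs together up to a size limit instead of cutting at a fixed character offset. The function name and the character-based limit are assumptions for this sketch. Many real pipelines measure size in tokens and handle tables or headings with format-specific logic.

```python
def chunk_paragraphs(text, max_chars=500):
    """Greedily pack whole paragraphs into chunks of at most max_chars characters."""
    chunks, current = [], ""
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        candidate = f"{current}\n\n{para}" if current else para
        if len(candidate) <= max_chars or not current:
            # Keep packing; an oversized single paragraph is kept whole.
            current = candidate
        else:
            chunks.append(current)
            current = para
    if current:
        chunks.append(current)
    return chunks

# Hypothetical policy text with two short sections.
policy = (
    "Refund window\n\n"
    "Customers may request a refund within 14 days.\n\n"
    "Exceptions\n\n"
    "Gift cards and custom orders are not refundable."
)
chunks = chunk_paragraphs(policy, max_chars=70)
print(len(chunks))  # 2
```

With this limit, each heading stays with the paragraph it introduces. A limit that is too large would merge both sections into one chunk, and one that is too small would separate each heading from its body, which is the trade-off the paragraph above describes.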

Embeddings and search

Embeddings help a system find semantically related text. OpenAI’s Embeddings FAQ says OpenAI released text-embedding-3-small and text-embedding-3-large on January 25, 2024, and recommends a vector database for quickly retrieving nearest embedding vectors.[4] Keyword search can still matter, especially for exact product names, legal terms, ticket IDs, error codes, or part numbers. Many strong RAG systems use hybrid search, which blends semantic and keyword retrieval.
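
Hybrid search needs some rule for merging two result lists. One widely used option is reciprocal rank fusion, which only looks at each item's position in each list, so the semantic and keyword scores never have to be put on the same scale. The sketch below assumes the two rankings already exist. The chunk IDs are made up, and k=60 is a commonly used default rather than a requirement.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of chunk IDs into one ranked list.

    Each list contributes 1 / (k + rank) per item, with rank starting at 1.
    """
    scores = {}
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] = scores.get(chunk_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda cid: scores[cid], reverse=True)

semantic_ranking = ["faq-12", "policy-3", "blog-7"]     # hypothetical embedding-search order
keyword_ranking = ["policy-3", "ticket-881", "faq-12"]  # hypothetical keyword-search order
fused = reciprocal_rank_fusion([semantic_ranking, keyword_ranking])
print(fused)  # ['policy-3', 'faq-12', 'ticket-881', 'blog-7']
```

Chunks that both retrievers agree on rise to the top, while a chunk found only by keyword search (here the ticket ID) still makes the list.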

Retriever and ranker

The retriever selects candidate chunks. A ranker may then reorder those chunks so the best evidence appears first. This step is critical. The model can only ground its answer in the evidence it receives. If the retriever misses the right passage, the model may give a vague answer, overgeneralize, or invent a bridge between unrelated facts.

Prompt and generation policy

The prompt tells the model how to use retrieved material. A good instruction might say: answer only from the provided sources, cite the source title, say when the sources do not contain the answer, and do not merge conflicting sources without explaining the conflict. This is where RAG connects to practical prompting.
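
A prompt like that can be assembled with plain string formatting. The sketch below is one possible layout, not a recommended standard: the rule wording, section labels, and function name are all assumptions, and the best phrasing depends on the model and the application.

```python
def build_grounded_prompt(question, sources):
    """Assemble a prompt that restricts the model to the supplied sources.

    `sources` is a list of (title, text) pairs returned by the retriever.
    """
    rules = [
        "Answer only from the sources below.",
        "Cite the source title in square brackets after each claim.",
        "If the sources do not contain the answer, say so.",
        "If sources conflict, explain the conflict instead of merging them.",
    ]
    rule_block = "\n".join(f"- {r}" for r in rules)
    source_block = "\n\n".join(f"[{title}]\n{text}" for title, text in sources)
    return f"Rules:\n{rule_block}\n\nSources:\n{source_block}\n\nQuestion: {question}"

# Hypothetical retrieved passage and user question.
prompt = build_grounded_prompt(
    "Can I return a gift card?",
    [("Refund policy", "Gift cards are not refundable.")],
)
print(prompt)
```

Keeping the rules in a list makes them easy to version and test separately from the retrieved content.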

Citations and audit trail

Citations help users inspect the answer. They also help teams debug failures. If the answer is wrong and the citation is wrong, retrieval likely failed. If the citation is right but the answer misstates it, generation likely failed. If the source itself is wrong, the knowledge base needs governance.
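
The debugging logic in that paragraph can be written as a small decision function for reviewers who label logged answers. The four boolean inputs are hypothetical review labels, and the ordering of the checks is one reasonable reading of the paragraph rather than a fixed procedure.

```python
def triage(answer_is_correct, cited_right_source, answer_matches_source, source_is_accurate):
    """Map review labels for one logged answer to the component that likely failed."""
    if answer_is_correct:
        return "ok"
    if not cited_right_source:
        return "retrieval"       # wrong answer and wrong citation
    if not answer_matches_source:
        return "generation"      # right citation, but the answer misstates it
    if not source_is_accurate:
        return "knowledge base"  # the model followed a source that is itself wrong
    return "unclear"

print(triage(False, False, False, True))  # retrieval
print(triage(False, True, False, True))   # generation
print(triage(False, True, True, False))   # knowledge base
```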

Where RAG helps

RAG is strongest when the answer depends on information that is outside the model’s training data, changes over time, or must come from approved sources.

- Customer support. A chatbot can retrieve current help articles, warranty policies, shipping rules, or troubleshooting steps.

- Internal knowledge search. Employees can ask natural-language questions about handbooks, onboarding material, engineering docs, or sales enablement notes.

- Compliance and legal review. A system can retrieve clauses, policy excerpts, and citations instead of giving a generic answer.

- Product documentation. A developer assistant can answer from current API docs, migration notes, known issues, and release notes.

- Research workflows. A system can search a curated paper library or case database and summarize the relevant passages.

RAG also helps with user trust. AWS notes that RAG can let an LLM present source attribution, so users can inspect the source documents behind an answer.[6] This does not prove the answer is correct, but it gives the reader a path to verify it.

RAG can also reduce the need to retrain a model every time a document changes. If a benefits policy changes, a team can update the indexed source material. That is usually simpler than training a new model. This is one reason RAG appears often in enterprise AI systems.

RAG can work with AI agents, but the two ideas are not the same. A RAG assistant retrieves knowledge. An agent may plan tasks, call tools, browse systems, write files, or take actions. A practical agent often uses RAG as one of its tools.

Where RAG can fail

RAG is not a guarantee of truth. It reduces some failure modes and introduces others.

- Bad retrieval. The system may retrieve passages that look similar but do not answer the question.

- Missing source material. The correct answer may not exist in the indexed knowledge base.

- Stale documents. The system may retrieve an old policy because the source set was not updated.

- Conflicting sources. Two documents may disagree, and the model may choose one without saying so.

- Poor chunking. A retrieved passage may omit the exception, footnote, table header, or previous paragraph needed to understand it.

- Prompt injection in retrieved content. A malicious or messy document can contain instructions that try to override the system’s normal behavior.

- Overconfident generation. The model may write a fluent answer that goes beyond the retrieved evidence.

The original RAG paper focused on knowledge-intensive tasks and noted that updating model world knowledge and providing provenance were open problems for pretrained models.[2] RAG helps address those problems by making retrieved knowledge inspectable and easier to update, but the implementation must still be tested.

One common mistake is treating RAG as a document dump. More documents do not automatically mean better answers. A small, clean, versioned knowledge base can outperform a large pile of PDFs with conflicting language, scanned images, duplicated sections, and missing metadata.

Another mistake is asking the model to answer when retrieval found weak evidence. A better system can say, “I could not find this in the approved sources,” then offer the closest source or ask a clarifying question. In many business settings, a safe refusal is better than a polished guess.
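
A simple way to implement that behavior is to gate generation on the retrieval score. The sketch below assumes the retriever returns (score, text) pairs with higher scores meaning stronger matches. The 0.35 threshold is a made-up value that would need tuning against real data, and `fake_generate` stands in for a model call.

```python
REFUSAL = "I could not find this in the approved sources."

def answer_or_refuse(scored_chunks, generate, min_score=0.35):
    """Call `generate` only when at least one chunk clears the score threshold.

    `scored_chunks` is a list of (score, text) pairs. `generate` is any callable
    that turns the supporting texts into an answer (a model call in a real app).
    """
    strong = [text for score, text in scored_chunks if score >= min_score]
    if strong:
        return {"refused": False, "answer": generate(strong)}
    # Weak evidence: refuse, but surface the closest passage for the user.
    closest = max(scored_chunks, key=lambda pair: pair[0], default=None)
    return {
        "refused": True,
        "answer": REFUSAL,
        "closest_source": closest[1] if closest else None,
    }

def fake_generate(texts):
    """Hypothetical stand-in for a model call."""
    return "Based on the sources: " + " ".join(texts)

weak = answer_or_refuse([(0.12, "Shipping takes 5 days."), (0.20, "Gift cards expire.")], fake_generate)
strong = answer_or_refuse([(0.80, "Refunds are issued within 14 days.")], fake_generate)
print(weak["refused"], strong["refused"])  # True False
```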

How to evaluate RAG

RAG evaluation should separate retrieval quality from answer quality. If you only score the final answer, you will not know whether the failure came from the search system, the prompt, the model, or the source documents.

| Evaluation question | What it tests | Example failure |
|---|---|---|
| Did retrieval find the right source? | Recall and source coverage | The refund policy exists, but the system retrieves a blog post instead. |
| Were the retrieved chunks precise? | Context precision | The answer includes three unrelated excerpts and one useful paragraph. |
| Did the model follow the source? | Faithfulness | The cited policy says “business days,” but the answer says “calendar days.” |
| Did the answer address the user’s question? | Answer relevance | The answer quotes a policy but does not answer the user’s eligibility question. |
| Did the system handle uncertainty? | Safety and refusal behavior | The system guesses when the knowledge base has no answer. |
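
The first two rows of the table can be scored with simple set-based metrics once a test set labels which chunks are relevant to each question. The sketch below uses plain recall and precision over the top k results. These are simplified illustrations with made-up chunk IDs, not the definitions used by any particular evaluation framework.

```python
def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the relevant chunks that appear in the top k results."""
    relevant = set(relevant_ids)
    if not relevant:
        return 0.0
    hits = set(retrieved_ids[:k]) & relevant
    return len(hits) / len(relevant)

def precision_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the top k results that are relevant."""
    relevant = set(relevant_ids)
    top = retrieved_ids[:k]
    if not top:
        return 0.0
    return sum(1 for cid in top if cid in relevant) / len(top)

# Hypothetical test case: two chunks are labeled relevant, one is retrieved.
retrieved = ["blog-7", "policy-3", "faq-2", "faq-9"]
relevant = ["policy-3", "policy-4"]
print(recall_at_k(retrieved, relevant, k=4))     # 0.5
print(precision_at_k(retrieved, relevant, k=4))  # 0.25
```

Low recall points to a retrieval or indexing gap, while low precision points to noisy context that the model has to wade through.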

The RAGAS paper, published in 2023, introduced a reference-free evaluation framework for RAG pipelines and described evaluation across retrieval relevance, faithful use of context, and generation quality.[7] Those categories are useful even if you do not use that specific framework. They remind teams to test the pipeline, not just the prose.

A practical test set should include normal questions, ambiguous questions, out-of-scope questions, conflicting-source questions, and questions that require exact wording. For a customer support bot, include real support tickets. For an internal policy assistant, include questions employees actually ask. For an API assistant, include errors, version changes, parameter names, and edge cases.

Human review still matters. Automated metrics can catch patterns, but people should inspect high-risk outputs, citation quality, and user experience. The best RAG systems improve through a loop: log failures, identify the failing component, adjust the source set or retrieval settings, and retest.

How RAG relates to ChatGPT and OpenAI tools

In ChatGPT, the closest user-facing idea is “knowledge” in custom GPTs. OpenAI’s help documentation says knowledge lets a GPT use information from uploaded files and works best for reference material such as documentation, guides, handbooks, or internal content.[8] That is a managed version of the same general concept: provide source material so the assistant can answer from it.

For developers, OpenAI’s File Search example describes a hosted retrieval flow in the Responses API. The cookbook says File Search can search a knowledge base, generate an answer based on retrieved content, and use a vector store where PDFs are read, split into chunks, embedded, and stored.[3] That is a practical RAG implementation path for applications that do not want to build every retrieval component from scratch.

RAG can also connect with multimodal systems. A multimodal AI application may retrieve text, tables, screenshots, transcripts, or image-derived descriptions before answering. The same principle applies: the model should see the most relevant evidence before it generates.

Cost and latency depend on the design. Retrieval adds extra steps before generation. Larger retrieved contexts can also increase model input size. If you build with an API, compare model, embedding, storage, and retrieval costs in your provider’s current pricing. For OpenAI-specific cost planning, see our OpenAI API pricing guide.

The key design question is not “Should we use RAG?” It is “What should the model be allowed to retrieve, and how will we know the retrieved evidence is right?” If the answer requires controlled, changing, or private knowledge, RAG is often the right starting pattern.

Frequently asked questions

What is RAG in simple terms?

RAG is a way for an AI system to search approved sources before answering. The system retrieves relevant text, gives it to the model, and asks the model to write an answer based on that text. It is like giving the model a short research packet before it responds.

Does RAG stop hallucinations?

No. RAG can reduce hallucinations by giving the model relevant evidence, but it does not eliminate them. The system can still retrieve the wrong passage, miss the right source, or misstate what the source says.

Is RAG the same as fine-tuning?

No. RAG changes the information supplied to the model at answer time. Fine-tuning changes model behavior through additional training. Use RAG for changing facts and private documents. Use fine-tuning when you need a model to follow a repeated style, format, or task pattern.

Do you need a vector database for RAG?

Not always. Many RAG systems use vector databases because embeddings are useful for semantic search. A system can also use keyword search, SQL queries, graph databases, file search tools, or a hybrid approach. The right retrieval method depends on the data and the questions users ask.

Can ChatGPT use RAG?

Yes, depending on the product or developer setup. Custom GPT knowledge, file search tools, and API-based retrieval systems can all support RAG-like behavior. The important part is that the model receives retrieved source material before generating the answer.

When should you not use RAG?

Do not use RAG when the problem is only tone, format, or a stable repetitive task. Prompting or fine-tuning may be simpler. RAG may also not be worth the complexity if the source set is tiny and can fit directly into the prompt.