Prompt engineering is the practice of designing, testing, and improving instructions for AI systems so they return useful results. A prompt can be a question, a task, source material, examples, formatting rules, or a mix of all of them. The job is not to find magic words. It is to explain the goal, supply the right context, define the output, and check the result. Prompt engineering matters for ChatGPT users, developers, analysts, marketers, support teams, and anyone who uses generative AI at work. As a career, it is best viewed as a practical AI skill that strengthens other roles, not a guaranteed standalone job title.

What prompt engineering means

OpenAI defines prompt engineering as designing and optimizing input prompts to guide a language model’s responses.[1] In plain English, it means giving an AI system better instructions so it can do the right work with less guessing.

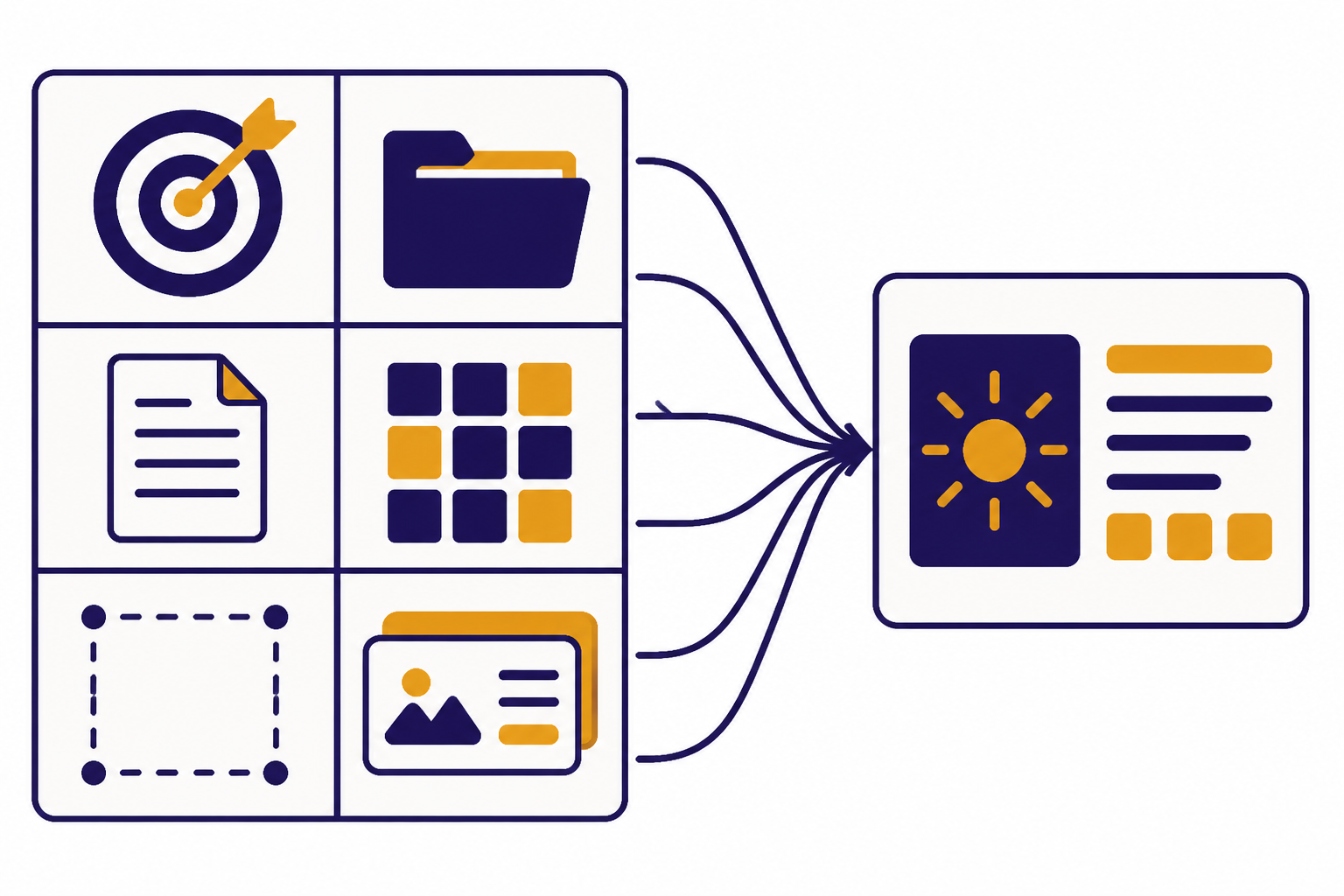

A prompt can be short. It can also be a structured work order. It may include a role, a goal, background information, examples, source text, constraints, and a desired output format. In ChatGPT, a prompt can also include non-text input such as an image or audio, depending on the product features available to the user.[1] If you are new to the tool itself, start with our plain-English guide to what ChatGPT is.

Prompt engineering sits on top of how a large language model works. The model predicts useful continuations from the input it receives. It does not read your mind. It responds to the task, context, and constraints in the conversation. That is why vague prompts usually produce vague answers.

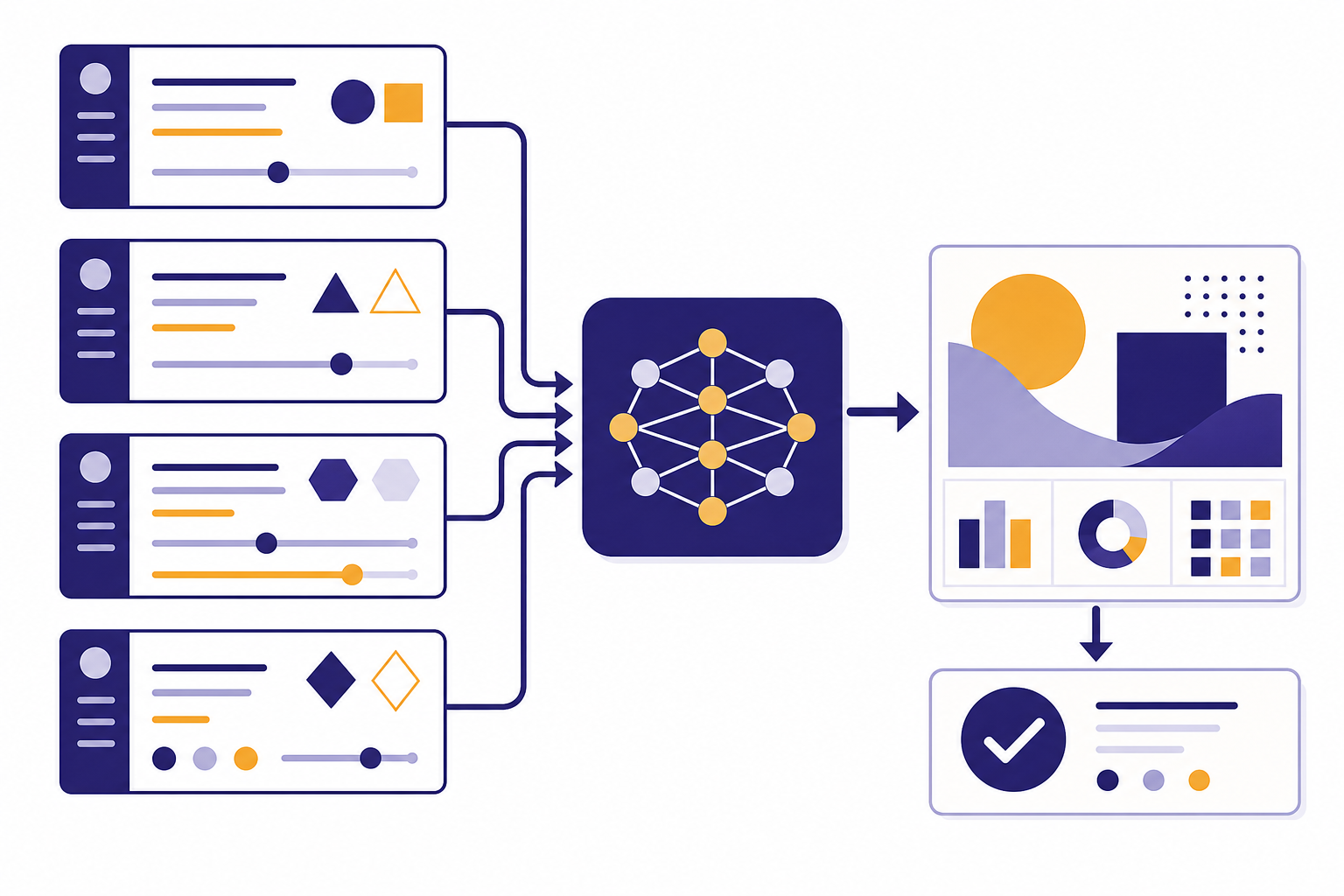

The word “engineering” can sound more formal than the work feels. For many users, prompt engineering is structured communication. For developers, product teams, and AI operations teams, it can become a repeatable process: write a prompt, test it against examples, measure failures, revise it, and deploy a better version.

What a good prompt includes

A strong prompt tells the model what to do, what information to use, what to avoid, and what the final answer should look like. OpenAI recommends being clear, specific, and descriptive about the desired context, outcome, length, format, and style.[2]

The best prompt is usually not the longest prompt. It is the prompt that removes the most uncertainty. A useful prompt gives the model enough direction without burying the task under irrelevant detail.

| Prompt part | What it does | Simple example |

|---|---|---|

| Task | States the job clearly. | Summarize the meeting notes. |

| Context | Gives background the model needs. | The audience is a finance leadership team. |

| Source material | Limits the answer to provided facts. | Use only the transcript below. |

| Output format | Defines the shape of the answer. | Return a table with risks, owners, and deadlines. |

| Constraints | Sets boundaries and quality rules. | If a deadline is missing, write “not provided.” |

| Examples | Shows the pattern to follow. | Use this sample row as the model for all rows. |

For longer tasks, separators can help. OpenAI’s API guidance recommends placing instructions at the beginning and separating instructions from context with clear delimiters such as triple quotes or section markers.[2] Microsoft’s Azure OpenAI guidance also recommends clear syntax, section headings, and separators when a prompt includes multiple sources or steps.[4]

Task: Turn the notes into an executive summary.

Audience: Operations leadership.

Rules:

- Use only the notes below.

- Call out missing facts instead of inventing them.

- Keep the tone direct and neutral.

Output format:

- Decision needed

- Key evidence

- Risks

- Next actions

Notes:

"""

Paste notes here.

"""This structure works because it separates the instruction from the material being processed. It also tells the model how to handle uncertainty. That last point matters. A prompt should not only ask for an answer. It should tell the model what to do when the answer is not in the provided material.

Prompt engineering techniques that work

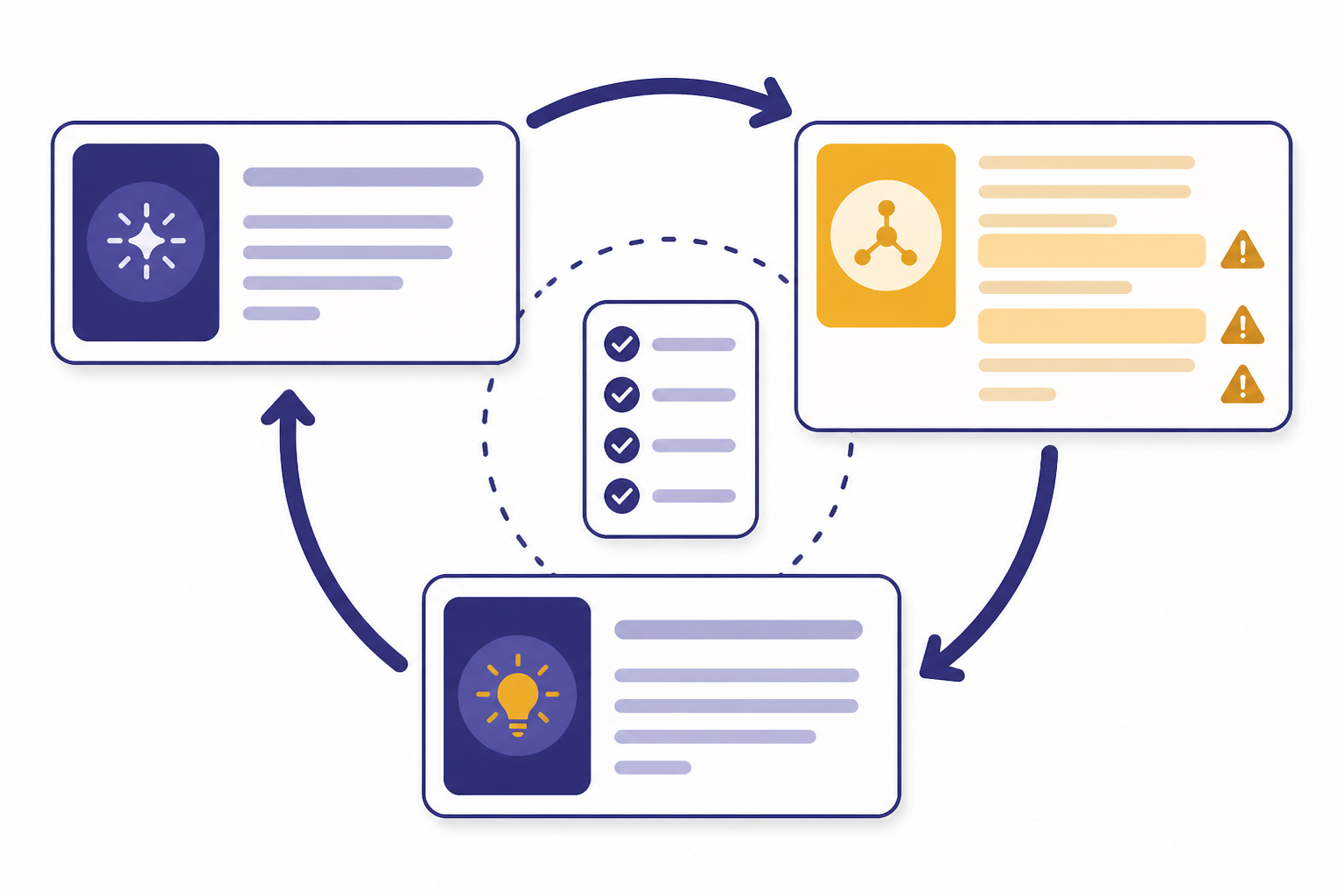

Good prompt engineering starts with clear instructions. It improves through testing. OpenAI describes prompt engineering as iterative: write an initial prompt, review the output, and refine the prompt based on what failed or worked.[1]

Use zero-shot prompting first

Zero-shot prompting means asking the model to perform the task without examples. It is the fastest way to test whether the task is already clear. OpenAI’s guidance recommends starting with zero-shot, then trying examples, and only moving to fine-tuning if prompting does not solve the problem.[2]

Add examples when format matters

Few-shot prompting means adding examples of input and output inside the prompt. Microsoft describes few-shot learning as providing a set of training examples in the prompt to give the model more context for the task.[4] This is useful when you need a consistent tone, label scheme, classification style, or data format.

Break complex work into steps

A prompt can ask for one large answer, but complex work often improves when you split it into stages. Microsoft’s guidance notes that large language models often perform better when a task is broken down into smaller steps.[4] For example, ask the model to extract claims first, then check which claims are supported by the source text, then draft the final answer.

Define the output before asking for content

If you need a JSON object, a table, a checklist, or a client-ready email, say that up front. OpenAI’s guidance says showing a specific output format can make outputs easier to parse reliably.[2] This is especially important for API workflows, spreadsheets, support macros, and repeatable business processes.

Validate the answer

Prompt engineering does not remove the need for review. Microsoft warns that even when prompt engineering works well, users still need to validate model responses because a prompt that works in one scenario may not generalize broadly.[4] For factual work, use source documents, retrieval, citations, or human review. Our guide to RAG explains how retrieval can supply outside knowledge to a model instead of relying only on the prompt.

Prompt engineering vs. related AI skills

Prompt engineering overlaps with several AI skills, but it is not the same as all of them. The difference matters if you are choosing what to learn next or writing a job description.

| Skill | Main job | When to use it | How it relates to prompting |

|---|---|---|---|

| Prompt engineering | Write and test instructions. | You need better outputs from an existing AI model. | The prompt controls the task, context, and format. |

| Context management | Choose what information enters the conversation. | The model needs files, history, or domain facts. | A prompt is only as useful as the context it receives. |

| RAG | Retrieve relevant external information. | The answer must use current or private knowledge. | Prompting tells the model how to use retrieved passages. |

| Fine-tuning | Train a model variant on examples. | Prompting alone cannot produce consistent behavior. | OpenAI recommends trying zero-shot and few-shot prompting before fine-tuning.[2] |

| Evaluation | Measure quality across test cases. | You need repeatable performance, not a lucky answer. | Evaluation shows whether prompt changes improved the system. |

| Agent design | Connect a model to tools and actions. | The AI must plan, call tools, or complete workflows. | Prompts define instructions, tool rules, and stopping conditions. |

Prompting is often the entry point. It teaches you how models respond to instructions. But durable AI work usually adds context windows, tool use, retrieval, evaluation, and product judgment. If you want the broader map, read our explanations of context windows, tokens, fine-tuning, and AI agents.

Prompt engineering also changes when the input is not only text. Multimodal systems can use images, voice, documents, and other media. The same basic discipline still applies: define the task, supply the right input, and check the output. Our guide to multimodal AI covers that shift in more detail.

Career path and job market

Prompt engineering is a real skill, but the standalone “prompt engineer” job title is narrower than the public hype suggests. A 2025 study analyzed 20,662 LinkedIn job postings and found 72 prompt engineer positions, or less than 0.5 percent of the sample.[5] The same study found that listed prompt engineer roles emphasized AI knowledge, prompt design, communication, and creative problem-solving.[5]

That means the safer career bet is not “I write prompts.” It is “I use AI systems to improve a valuable workflow.” Prompting helps product managers write better requirements, analysts summarize and transform data, lawyers review documents more carefully, developers generate and test code, support teams draft accurate responses, and marketers create structured campaign variants.

Salary data is noisy because job titles vary. Coursera’s 2026 salary guide reported a prompt engineer salary range of $62,977 to $126,000 per year based on Glassdoor and ZipRecruiter figures available to its editors.[6] Glassdoor reported an average U.S. prompt engineer salary of $127,869 per year based on 29 salary submissions as of February 2026.[7] Treat these figures as rough market signals, not guarantees.

Broad AI skills have stronger evidence of demand than the exact prompt engineer title. The World Economic Forum’s 2025 Future of Jobs Report surveyed more than 1,000 employers representing over 14 million workers across 22 industry clusters and 55 economies about workforce changes from 2025 to 2030.[8] Prompt engineering belongs inside that wider shift toward AI literacy, automation, data work, and human judgment.

If you want a career path, combine prompting with a domain. A healthcare operations analyst who can build safe summarization workflows is more credible than a generic prompt writer. A software QA specialist who can design test prompts, evaluate failures, and document model behavior is more useful than someone who only knows prompt templates. A customer support lead who can turn policies into reliable AI macros has a concrete business problem to solve.

Skills to build

The best prompt engineers combine writing, systems thinking, testing, and domain knowledge. You do not need to become a machine learning researcher to write better prompts, but you do need to understand the limits of the tool you are using.

- Clear writing. State the task, audience, constraints, and format without ambiguity.

- Task decomposition. Split a large request into steps the model can complete and verify.

- Source handling. Tell the model which material to use and what to do when facts are missing.

- Evaluation. Test prompts against realistic examples, edge cases, and failure cases.

- Domain judgment. Know what a good answer looks like in the field where the prompt will be used.

- Basic AI literacy. Understand models, tokens, context windows, hallucination risk, and retrieval.

- Workflow design. Connect prompts to business processes, tools, review steps, and handoffs.

- Ethical judgment. Protect private data, avoid unsafe instructions, and document uncertainty.

Technical skills help when prompts become part of software. You may need JSON, regular expressions, spreadsheets, Python, API basics, or evaluation scripts. You may also need to compare model behavior across products. Our GPT models comparison is useful when the question is not only “what prompt should I write,” but also “which model is appropriate for this job.”

Nontechnical users can still become strong prompt engineers. A teacher, paralegal, recruiter, financial analyst, or support manager may understand the work better than a generalist technologist. In many settings, domain expertise is what turns an average prompt into a useful one.

Portfolio examples

A prompt engineering portfolio should show before-and-after thinking. Do not only publish a library of clever prompts. Show the problem, the prompt design, the test cases, the failures, and the revision.

Strong portfolio projects include:

- Support response assistant. Turn a policy document into draft replies, with rules for missing information and escalation.

- Contract clause extractor. Extract parties, dates, obligations, renewal terms, and unknown fields from sample agreements.

- Research summarizer. Summarize a packet of source text and flag unsupported claims.

- Sales call analyzer. Convert transcripts into pain points, objections, follow-up items, and CRM-ready notes.

- Code review helper. Ask the model to identify risky changes, missing tests, and unclear assumptions.

- Multimodal inspection workflow. Use an image input and a checklist to identify visible issues, then require human review.

For each project, include a small evaluation set. Show examples where the prompt succeeds and examples where it fails. Explain what you changed. This demonstrates judgment. It also separates real skill from prompt-template collecting.

A good portfolio can be simple. One well-documented workflow is better than dozens of generic prompts. Employers and clients want to see that you can reduce risk, save time, and produce consistent outputs in a real process.

Common mistakes

The most common prompt engineering mistake is asking for a result without defining success. “Make this better” gives the model too much room to guess. “Rewrite this for a skeptical CFO, keep all numbers unchanged, and return a shorter version with a risk note” is much stronger.

Another mistake is overusing persona language. “Act as an expert” may help set tone, but it does not replace facts, examples, constraints, or review. The model needs the actual task and the material to work from.

Do not paste private or regulated information into tools unless your organization has approved the workflow. Prompt engineering includes data judgment. If the task involves customer records, legal matters, health data, financial data, credentials, or confidential source code, follow your organization’s security rules.

Do not assume one good answer means the prompt is reliable. Test variations. Try messy inputs. Try missing data. Try adversarial wording. A prompt that works only for your perfect example is not production-ready.

Finally, do not treat prompting as a substitute for learning the underlying work. AI can speed up writing, summarizing, classification, coding, and analysis. It cannot take responsibility for your judgment. The human still owns the final decision.

Frequently asked questions

Is prompt engineering hard to learn?

The basics are easy to learn because they are mostly clear writing, context, examples, and revision. The advanced version is harder because it involves testing, evaluation, domain knowledge, and workflow design. A beginner can improve quickly by rewriting vague prompts into structured prompts and comparing the results.

Do prompt engineers need to code?

Not always. Many business users can write excellent prompts without coding. Coding becomes useful when prompts are part of an app, API workflow, evaluation system, data pipeline, or automated agent.

Is prompt engineering a good career?

It can be a useful career skill, but the standalone job title is limited. The stronger path is to combine prompting with a domain such as law, customer support, product management, data analysis, software development, education, or operations. Employers usually pay for business outcomes, not for prompt wording alone.

What is the difference between prompt engineering and fine-tuning?

Prompt engineering changes the instructions and context you give a model at run time. Fine-tuning changes a model’s behavior by training it on examples. For many tasks, prompting is faster and cheaper to try first; fine-tuning is better when you need a consistent pattern that prompts cannot reliably produce.

What should I learn after prompt engineering?

Learn evaluation, retrieval, context management, and basic automation. These skills make prompts more reliable in real work. If you are technical, learn API basics and structured outputs; if you are nontechnical, build domain-specific workflows and document your testing process.

Can prompt engineering prevent hallucinations?

No. Better prompts can reduce some errors by providing source material, constraints, and instructions for uncertainty. They cannot guarantee truth. For important factual work, pair prompting with source documents, retrieval, citations, and human review.