ChatGPT stands for Chat Generative Pre-trained Transformer. The “Chat” part means you interact with it through conversation. “GPT” stands for Generative Pre-trained Transformer, the model family and design pattern behind many modern AI language systems.[1][2] In plain English, ChatGPT is a conversational AI assistant built to understand prompts, generate responses, and keep track of a back-and-forth exchange within a chat.[1] The name sounds technical because it describes both the user experience and the machine-learning method underneath it. This guide breaks down each part of the name without assuming you already know AI vocabulary.

The short answer

ChatGPT stands for Chat Generative Pre-trained Transformer. That expansion is useful, but it can also mislead beginners if each word is treated too literally. ChatGPT is not a person chatting. It is not a search engine by default. It is not a database that simply looks up an answer. It is an AI assistant that generates responses from patterns learned during training and from the context you provide in the conversation.

OpenAI describes ChatGPT as an AI assistant for tasks such as brainstorming, writing, studying, planning, math, coding, and analyzing images or files. OpenAI also says it is trained to follow instructions in a conversation.[1] That last phrase explains why the “Chat” part matters. The product is designed around a turn-by-turn exchange, not a single static command.

If you want the broader beginner explanation, start with what ChatGPT is. If you only want the acronym, remember this version: Chat is the interface, and GPT is the generative language-model technology.

GPT, word by word

The acronym GPT has three parts: generative, pre-trained, and transformer. Each word points to a different layer of how the system works. The simplest way to read it is: a GPT model is built to generate new output, after being trained ahead of time, using a transformer neural-network architecture.

| Part of the name | Plain-English meaning | Why it matters |
|---|---|---|
| Generative | It creates new text or other output rather than only classifying input. | This is why it can draft, summarize, rewrite, explain, and answer in its own wording. |
| Pre-trained | The model learns broad language patterns before it is adapted for specific uses. | This gives it general language ability before the chat-specific tuning layer. |
| Transformer | It uses a neural-network architecture based on attention mechanisms. | This helps the model weigh relationships among tokens in a prompt and generate a continuation. |

Generative

“Generative” means the model produces new output. A non-generative system might sort emails into categories or flag whether a sentence is positive or negative. A generative model writes a fresh response. That response can be useful, but it can also be wrong, because generation is not the same thing as guaranteed factual recall.

For a broader definition of this family of tools, read our plain-English guide to generative AI.

Pre-trained

“Pre-trained” means the model is trained before you use it. Early OpenAI GPT research described a process where a language model was trained on a broad corpus of unlabeled text and then adapted for tasks through fine-tuning.[6] Later OpenAI work on GPT-2 described a language model trained to predict the next word from previous words in text.[4]

This does not mean the model studies your question from scratch every time. It means the model already has learned statistical patterns. Your prompt then steers which patterns become relevant. If you want to understand the small units the model reads and writes, see what a token is in ChatGPT.

Transformer

“Transformer” refers to a neural-network architecture introduced in the 2017 paper “Attention Is All You Need.” The paper proposed a network architecture based on attention mechanisms and removed recurrence and convolutions from the main sequence-transduction design.[5] In simple terms, attention helps the model weigh which parts of the input are most relevant while producing output.
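To make the idea of "weighing" concrete, here is a minimal sketch of scaled dot-product attention for a single query, written in plain Python. The vectors are made-up toy numbers, and the function names `softmax` and `attention` are chosen for this illustration. A real transformer adds learned projections, multiple attention heads, and many stacked layers, none of which appear here.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    # Score each key by its dot product with the query, scaled by sqrt(dimension).
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # The output is the weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Toy 2-dimensional vectors for three tokens (illustrative numbers only).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [1.0, 1.0]

weights, output = attention(query, keys, values)
print(weights)  # the third token gets the largest weight
print(output)
```

The third key points in the same direction as the query, so it receives the largest weight. That is the sense in which attention lets the model focus on the most relevant parts of the input.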

Transformers are not exclusive to ChatGPT. Many AI systems use transformer-based designs. GPT is one transformer-based language-model family associated with OpenAI. For the more technical acronym-only breakdown, see what GPT stands for.

How ChatGPT uses GPT

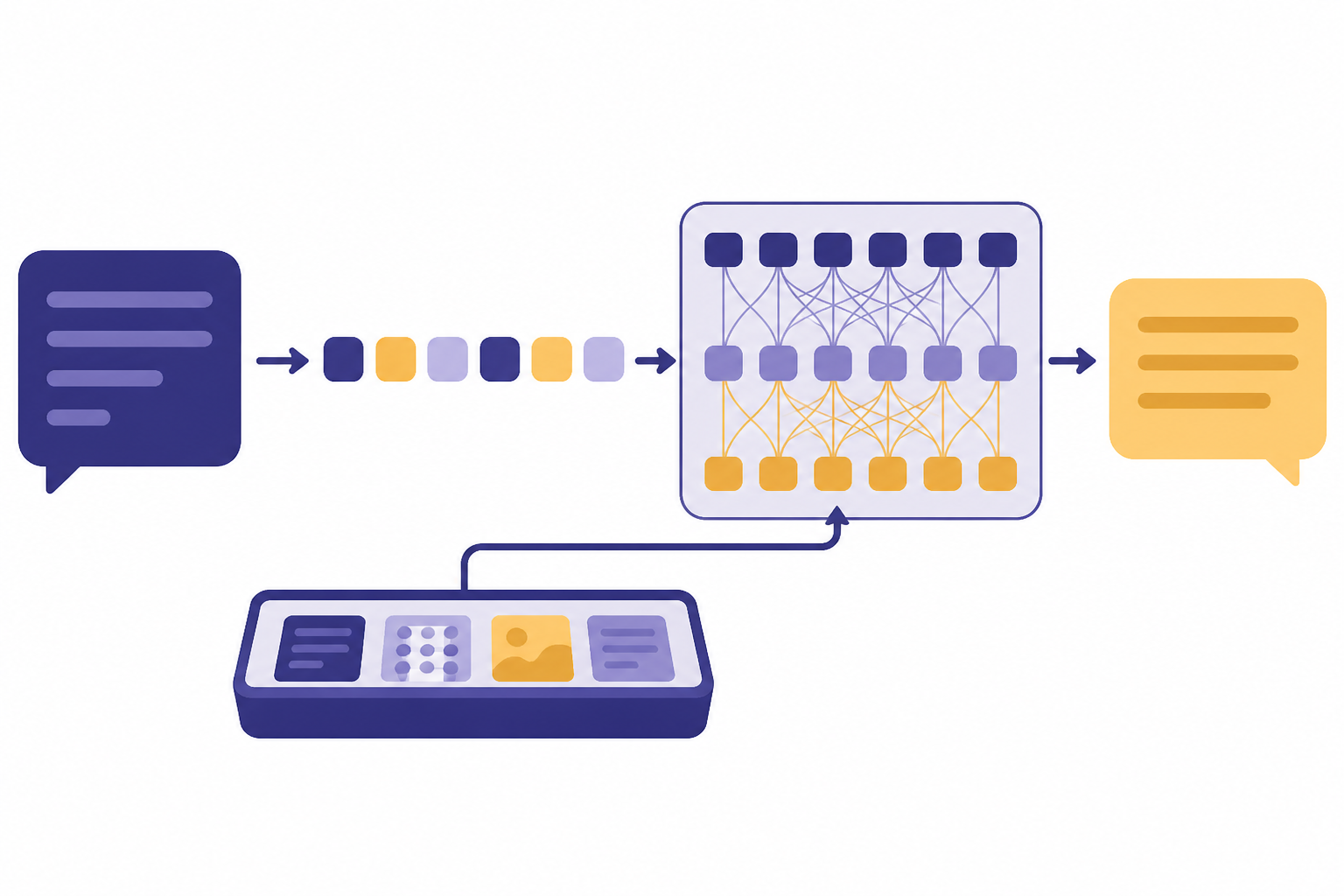

ChatGPT is the chat product. GPT is the model technology underneath it. You type a message. The system turns that message into tokens, combines them with relevant conversation context, and uses a model to generate the next response. The response appears as a natural-language answer, but under the hood it is built one token at a time.
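The loop described above can be sketched in a few lines of Python. Everything here is a stand-in: `TOY_MODEL` is a hypothetical lookup table rather than a trained network, `tokenize` splits on whitespace where real tokenizers use subword pieces, and `<end>` is an assumed stop marker. The point is only the shape of the process: tokenize, predict one token, append it to the context, repeat.

```python
# Hypothetical lookup table standing in for a trained model.
TOY_MODEL = {
    "hello": "there",
    "there": "friend",
    "friend": "<end>",
}

def tokenize(text):
    """Split text into lowercase word tokens (a simplification)."""
    return text.lower().split()

def next_token(context):
    """Pick the next token based on the last token in the context."""
    if not context:
        return "<end>"
    return TOY_MODEL.get(context[-1], "<end>")

def generate(prompt, max_new_tokens=10):
    """Append one token at a time until the stop marker or the length limit."""
    context = tokenize(prompt)
    reply = []
    for _ in range(max_new_tokens):
        token = next_token(context)
        if token == "<end>":
            break
        reply.append(token)
        context.append(token)  # the new token becomes part of the context
    return " ".join(reply)

print(generate("Hello"))  # prints "there friend"
```

A real model looks at the whole context rather than only the last token, and it chooses among many possible tokens by probability. The step-by-step structure is the same.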

OpenAI’s GPT-2 description gives a useful simplified view of the basic language-model objective: predict the next word given previous words. OpenAI said GPT-2 had 1.5 billion parameters and was trained on a dataset of 8 million web pages, about 40GB of text.[4] Those numbers are about GPT-2, not today’s ChatGPT models, but they show the basic idea behind pretraining at scale.
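A tiny word-counting model shows the next-word objective in miniature. This is not how GPT-2 was trained: GPT models use a neural network and far more data, while this sketch only counts which word follows which in a made-up corpus. The names `train_bigram` and `predict_next` are invented for this example.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count which word follows each word in the training text."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Made-up training text for illustration.
corpus = "the cat sat on the mat . the cat ate . the dog sat on the rug ."
model = train_bigram(corpus)

print(predict_next(model, "the"))  # prints "cat"
print(predict_next(model, "sat"))  # prints "on"
```

The "training" happens once, before any question is asked. Afterward, prediction only reads the stored counts, which mirrors the pre-trained idea at toy scale.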

ChatGPT adds a conversational product layer on top of language-model capability. OpenAI’s original ChatGPT announcement said ChatGPT was fine-tuned from a model in the GPT-3.5 series, which finished training in early 2022.[3] OpenAI also noted limitations, including plausible-sounding incorrect answers and sensitivity to prompt phrasing.[3] Those limitations remain important to understand even as models improve.

Instruction following is another key piece. OpenAI’s InstructGPT work used reinforcement learning from human feedback, or RLHF, to make models more helpful and aligned with user intent.[8] If you want the alignment concept in more detail, read what RLHF means.

The conversation also depends on a context window. The model does not remember every word you have ever written. It works with the active context available to it in the current interaction and any enabled product memory features. For the size-limit concept, see what a context window is.
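One simple way to picture a context window is as a token budget that only the most recent messages fit inside. The sketch below is an assumption for illustration, not a description of how ChatGPT manages context: `count_tokens` counts whitespace-separated words instead of real tokens, and `fit_to_window` drops the oldest messages first, which is only one possible strategy.

```python
def count_tokens(text):
    """Rough stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())

def fit_to_window(messages, max_tokens):
    """Keep the most recent messages whose combined size fits the budget."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order

history = [
    "My name is Sam",                 # 4 tokens
    "I like hiking and photography",  # 5 tokens
    "What camera should I buy",       # 5 tokens
]

visible = fit_to_window(history, max_tokens=10)
print(visible)  # the oldest message no longer fits
```

With a budget of 10, the first message falls outside the window, so a model working only from `visible` would have no access to the name "Sam".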

What the name does not mean

The phrase “Chat Generative Pre-trained Transformer” is a name, not a full explanation of the product. It is best treated as a compact technical label. Several common misunderstandings come from reading too much into the acronym.

- It does not mean ChatGPT is always right. A generated answer can sound confident while containing mistakes.

- It does not mean ChatGPT searches the web for every answer. ChatGPT can search the web in supported experiences, but generation and web search are different capabilities.[1]

- It does not mean the model learns permanently from each message you send. A chat can use the current conversation context, but that is different from retraining the model weights during your session.

- It does not mean every AI chatbot is ChatGPT. ChatGPT is OpenAI’s product. Other companies build different assistants using different model families.

- It does not mean GPT is the same as AGI. GPT describes a model approach. AGI is a broader and more contested idea.

A practical example helps. If you ask ChatGPT to “write a polite email asking for a deadline extension,” it generates a new email based on the instruction, style cues, and context. If you ask it to “summarize this pasted article,” it uses the article text in the prompt. If you ask it about a current event, it may need web search or another retrieval method to avoid relying only on model knowledge.

This distinction is why retrieval-augmented generation matters. RAG connects generation to external information retrieval, so the model can use supplied or retrieved source material rather than relying only on what it learned during training. For that next layer, see what RAG is.
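As a rough sketch of the retrieval step, the example below picks the document that shares the most words with a question and places it into a prompt. It is deliberately simplified: production RAG systems typically rank by embedding similarity rather than word overlap, and the documents, function names, and prompt wording here are all invented for illustration. The prompt would then be passed to a language model, which is not shown.

```python
import re

def words(text):
    """Return the set of lowercase words in the text, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = words(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & words(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, documents):
    """Place the retrieved source text in front of the question."""
    sources = retrieve(question, documents)
    context = "\n".join(f"- {doc}" for doc in sources)
    return f"Use only these sources:\n{context}\n\nQuestion: {question}"

# Made-up documents for illustration.
documents = [
    "The office is closed on public holidays.",
    "Refunds are processed within five business days.",
    "Support is available by email on weekdays.",
]

prompt = build_prompt("How long do refunds take?", documents)
print(prompt)
```

The model never needs to have seen the refund policy during training. The relevant text arrives in the prompt, and generation works from there.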

Where the name came from

The “GPT” part predates ChatGPT. OpenAI’s 2018 paper “Improving Language Understanding by Generative Pre-Training” described gains from generative pretraining followed by fine-tuning across language-understanding tasks.[6] OpenAI then described GPT-2 in 2019 as a transformer-based language model and a scale-up of GPT.[4]

GPT-3 continued the scale-up pattern. The GPT-3 paper said OpenAI trained an autoregressive language model with 175 billion parameters and tested it in few-shot settings.[7] That figure belongs to GPT-3, not to ChatGPT as a product. OpenAI has not published an official figure for the parameter count of every current ChatGPT model.

ChatGPT arrived later as a conversational interface around GPT-style model capability. OpenAI introduced ChatGPT on November 30, 2022, as a research release designed to gather feedback on strengths and weaknesses.[3] For a fuller chronology, see when ChatGPT was released and who created ChatGPT.

Related concepts to know next

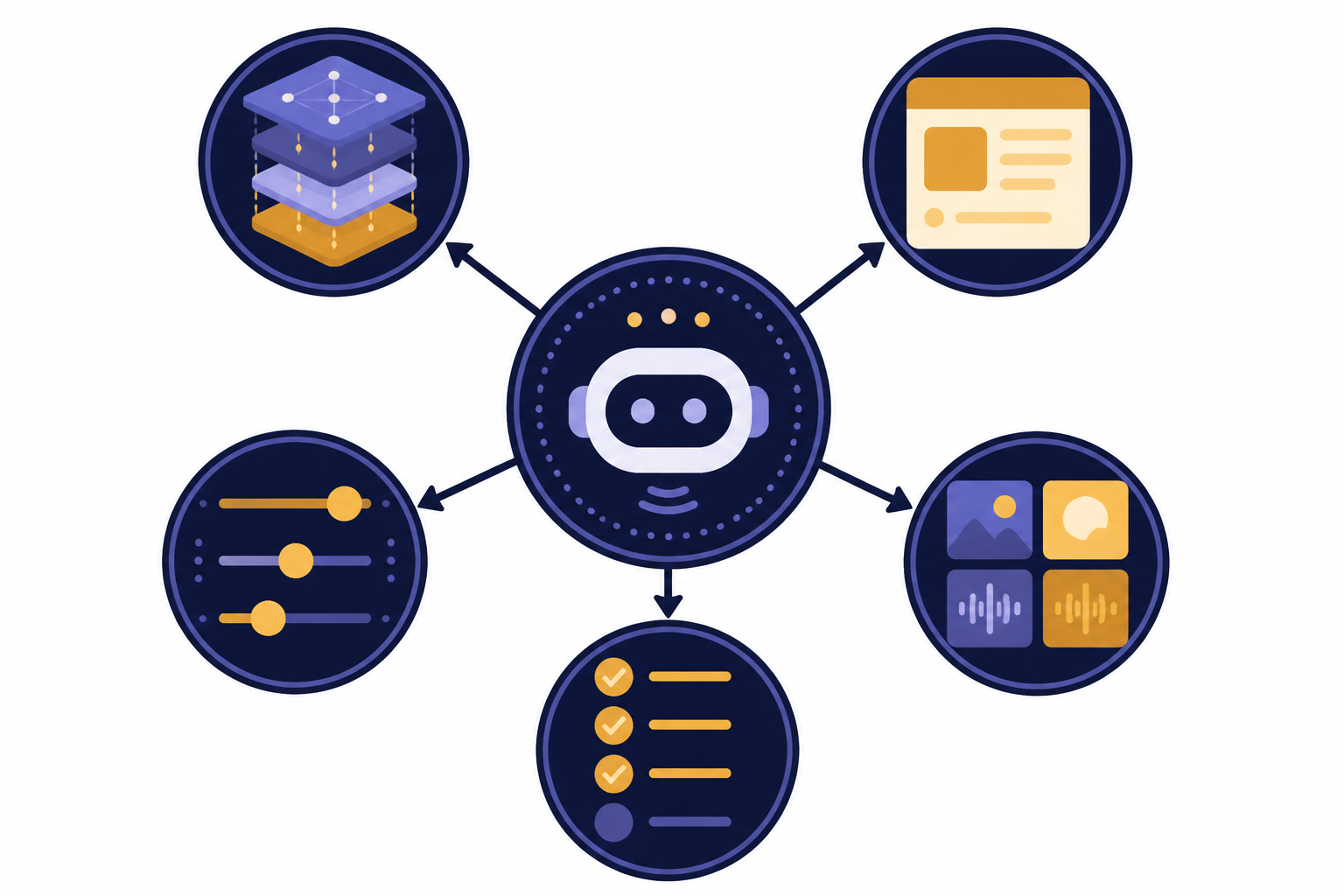

Once you understand what ChatGPT stands for, the next step is to separate the surrounding AI terms. These terms often appear together, but they do not mean the same thing.

| Concept | How it relates to ChatGPT | Beginner takeaway |
|---|---|---|
| Large language model | The broad category of models trained to process and generate language. | GPT is a type of LLM, but not every LLM is an OpenAI GPT model. |
| Prompt engineering | The practice of writing instructions that produce better responses. | Clear prompts help, but they do not remove the need to verify important answers. |
| Fine-tuning | A method for adapting a model toward a narrower task or behavior. | Fine-tuning changes model behavior more deeply than writing a better prompt. |
| Multimodal AI | AI that can work across modes such as text, images, audio, or files. | Modern assistants are no longer limited to plain text in every setting. |
| AI agent | A system that can plan and take steps toward a goal with some autonomy. | An agent is more action-oriented than a basic chatbot. |

For the broader model category, read what a large language model is. For better instructions, read what prompt engineering means. For adapting models, read what fine-tuning is. For systems that work beyond text, read what multimodal AI is. For tools that can take more steps on your behalf, read what an AI agent is.

Frequently asked questions

What does ChatGPT stand for?

ChatGPT stands for Chat Generative Pre-trained Transformer.[2] “Chat” describes the conversational interface. “GPT” describes the generative pre-trained transformer model approach behind the assistant.

What does GPT stand for in ChatGPT?

GPT stands for Generative Pre-trained Transformer. “Generative” means it can create output, “pre-trained” means it learned broad patterns before use, and “transformer” refers to the attention-based neural-network architecture introduced in 2017.[5]

Is ChatGPT the same thing as GPT?

No. ChatGPT is the user-facing AI assistant. GPT is the model family or model approach that helps power the assistant. A simple analogy is that ChatGPT is the app experience, while GPT is part of the engine.

Who made ChatGPT?

OpenAI made ChatGPT. OpenAI introduced ChatGPT on November 30, 2022, as a research release for conversational AI feedback.[3] The product has changed since launch, but OpenAI remains the organization behind ChatGPT.

Does “pre-trained” mean ChatGPT cannot use new information?

No. “Pre-trained” refers to the model’s training process before deployment. ChatGPT can also use information you provide in the conversation, files you upload in supported plans, and web search in supported experiences.[1] Those are different from retraining the base model during your chat.

Why is it called a transformer?

The name comes from the transformer architecture in machine learning. The 2017 transformer paper proposed an attention-based architecture for sequence tasks.[5] In GPT-style systems, that architecture helps the model process relationships among tokens and generate a response.