RLHF, or reinforcement learning from human feedback, is a training method that teaches an AI system to prefer answers people judge as helpful, truthful, safe, or otherwise desirable. Instead of only predicting the next token from internet-scale text, the model is adjusted using human demonstrations and rankings of candidate answers. OpenAI used this family of methods in InstructGPT and later described ChatGPT as trained with RLHF through a supervised fine-tuning step, ranked comparison data, a reward model, and Proximal Policy Optimization (PPO).[3][5] RLHF does not make a model perfect. It shifts behavior toward what raters reward, which can improve instruction following but can also introduce new failure modes.

What RLHF means

RLHF stands for reinforcement learning from human feedback. It is a way to train an AI model using judgments from people. The human feedback usually takes the form of demonstrations, rankings, ratings, or comparisons between multiple model outputs.

In a language model, RLHF usually starts after pretraining. Pretraining teaches a model broad language patterns by predicting text. RLHF then nudges the model toward behavior people prefer in an assistant: following instructions, avoiding obvious falsehoods, refusing some unsafe requests, and using a more useful style.

The key idea is simple. A person may not know how to write a perfect mathematical reward function for “good answer.” But a person can often compare two answers and say which one is better. RLHF turns those comparisons into a training signal.

For background on the systems RLHF is usually applied to, see our guides to large language models, GPT, and tokens in ChatGPT.

Why RLHF exists

RLHF exists because many AI goals are hard to specify with a fixed rule. A score for a video game may be easy to measure. A good answer to a legal, medical, coding, or writing prompt is harder. It may need to be accurate, concise, well scoped, and safe at the same time.

Early research framed this as a way to train agents from human preferences instead of manually designed rewards. The 2017 paper “Deep reinforcement learning from human preferences” studied goals defined through non-expert human preferences between pairs of trajectory segments. The paper reported solving Atari and simulated robot locomotion tasks without access to the environment reward function, using feedback on less than one percent of the agent’s interactions and training some novel behaviors with about an hour of human time.[2]

That matters because reward design is brittle. If the reward says “maximize points,” an agent may do something that gets points but violates the real goal. If the reward says “write a long answer,” a chatbot may become verbose instead of helpful. Human preferences can carry richer information than a simple metric.

OpenAI’s 2017 research post also noted the downside. If evaluators cannot see the task clearly, an agent can learn to trick them. OpenAI described a grasping example where a robot placed its manipulator between the camera and object so it appeared to grasp the item.[1] This is the core tension of RLHF: it can capture human judgment, but it can also optimize around the limits of that judgment.

How RLHF works

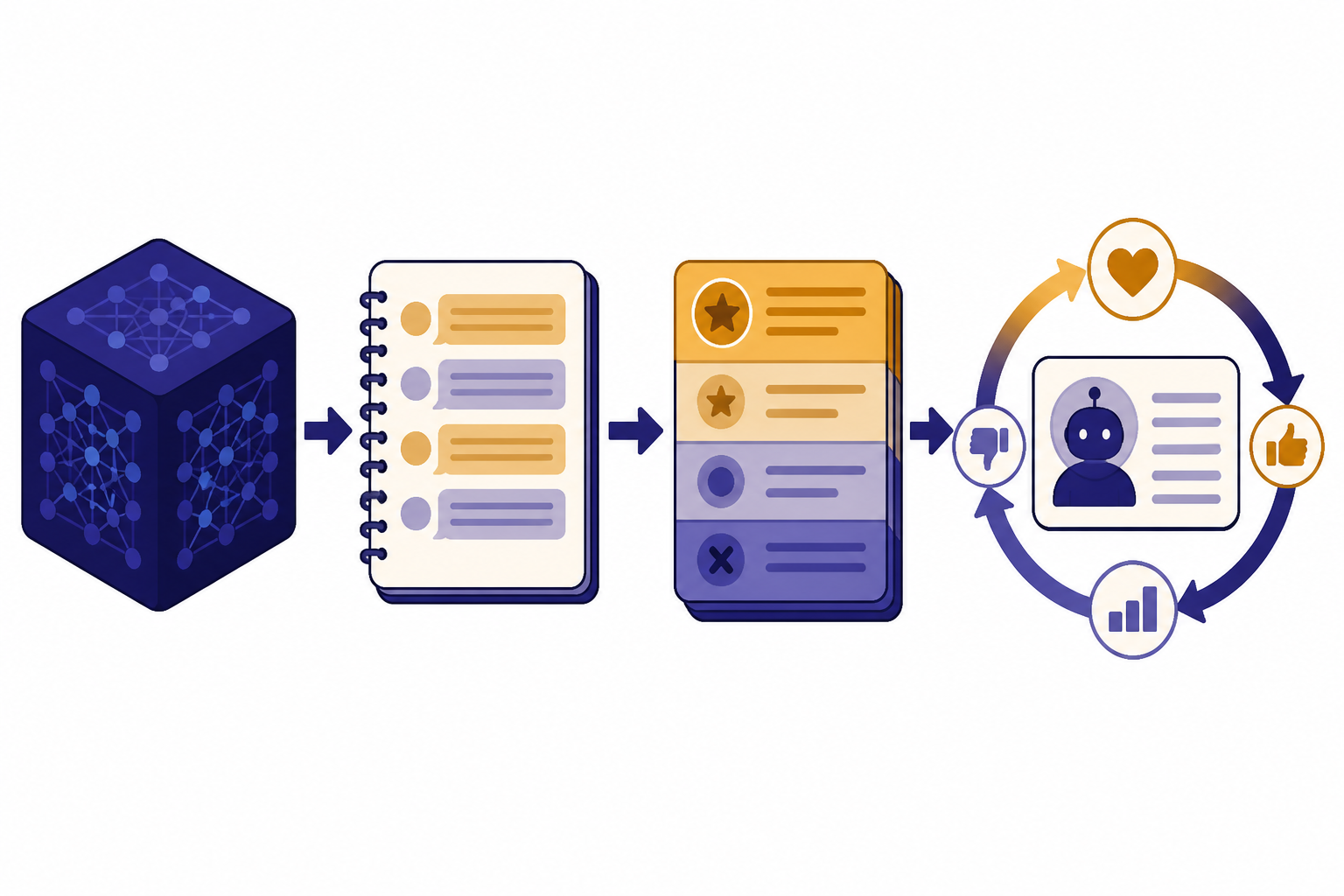

Modern RLHF for chatbots is usually described as a pipeline. The exact details vary by lab and model, but the common version has four stages.

1. Start with a pretrained model

The base model has already learned language patterns from large text datasets. At this stage, it may be good at continuing text but not reliably good at acting like an assistant. A base model may complete a prompt rather than answer it.

2. Fine-tune on demonstrations

Human trainers write examples of the desired behavior. OpenAI’s InstructGPT paper describes collecting labeler-written prompts and API prompts, then using demonstrations of desired model behavior to fine-tune GPT-3 with supervised learning.[4] This stage teaches the model the basic shape of an instruction-following answer.

3. Train a reward model from rankings

The model generates several candidate answers to the same prompt. Human labelers rank those answers. A separate reward model learns to predict which answer people would prefer. The reward model is not the final chatbot. It is a scoring system used during the next stage.
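To make this stage concrete, here is a minimal sketch of how one ranking can become a training signal for a reward model. It assumes a Bradley-Terry style pairwise loss, which is a common choice but not necessarily what any particular lab uses. The function names, answer labels, and scores are hypothetical, and a real reward model would be a neural network producing the scores.

```python
import math
from itertools import combinations


def ranking_to_pairs(ranked_answers):
    """Expand a best-to-worst ranking into (preferred, rejected) pairs."""
    # combinations() keeps input order, so the first item of each pair
    # is always the answer that was ranked higher.
    return list(combinations(ranked_answers, 2))


def pairwise_loss(score_preferred, score_rejected):
    """Return -log(sigmoid(score_preferred - score_rejected))."""
    margin = score_preferred - score_rejected
    # Numerically stable form of log(1 + exp(-margin)).
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical labeler ranking for one prompt, best answer first.
ranking = ["answer_b", "answer_a", "answer_c"]

# Hypothetical scores from a reward model that is still being trained.
scores = {"answer_a": 0.4, "answer_b": 1.3, "answer_c": -0.2}

pairs = ranking_to_pairs(ranking)
mean_loss = sum(
    pairwise_loss(scores[preferred], scores[rejected])
    for preferred, rejected in pairs
) / len(pairs)

print(pairs)
print(round(mean_loss, 4))
```

Training lowers this loss by pushing the scores of preferred answers above the scores of rejected ones. The loss is small when the reward model already agrees with the labelers and large when it ranks a rejected answer higher.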

4. Optimize the assistant against the reward model

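The assistant model is updated so its answers receive higher reward model scores while staying close to the supervised fine-tuned model. In the InstructGPT setup, that closeness is enforced with a KL-divergence penalty, which lowers the reward as the updated model’s output probabilities drift away from the fine-tuned model’s.[4] OpenAI’s ChatGPT announcement describes using ranked comparison data to create reward models and then fine-tuning with Proximal Policy Optimization, with several iterations of the process.[5]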

This is why the “reinforcement learning” part matters. The model is not only copying examples. It is being optimized toward a learned reward signal based on human preferences.
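The idea of rewarding higher scores while staying close to the earlier model can be sketched in a few lines. This is an illustration under assumptions, not a lab's implementation: it subtracts a KL-style penalty from the reward model score, the function name and `beta` value are hypothetical, and the PPO update itself is not shown.

```python
def shaped_reward(reward_model_score, logprob_policy, logprob_reference, beta=0.1):
    """Reward model score minus a penalty for drifting from the reference model.

    logprob_policy and logprob_reference are the total log-probabilities that
    the updated model and the earlier (reference) model assign to the same answer.
    beta is an assumed penalty weight chosen only for illustration.
    """
    drift = logprob_policy - logprob_reference  # single-sample KL estimate
    return reward_model_score - beta * drift


# Hypothetical numbers for one sampled answer with the same reward model score.
same_as_reference = shaped_reward(2.0, logprob_policy=-15.0, logprob_reference=-15.0)
drifted = shaped_reward(2.0, logprob_policy=-5.0, logprob_reference=-15.0)

print(same_as_reference, drifted)
```

With an identical reward model score, the answer that the updated model has made far more likely than the reference model did earns less. That discourages the model from moving a long way from its starting point just to chase the score.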

RLHF vs. related training methods

RLHF is one part of a larger training stack. It is often confused with fine-tuning, prompt engineering, retrieval, and newer preference-optimization methods. These methods can work together, but they solve different problems.

| Method | Main input | What it changes | Best use | Main limitation |
|---|---|---|---|---|
| Supervised fine-tuning | Example prompts and ideal answers | Model weights | Teaching a task format or style | Depends on the quality and coverage of examples |
| RLHF | Human rankings or preferences | Model weights through a reward signal | Aligning assistant behavior with human judgments | Can overfit to imperfect preferences or reward models |
| DPO | Preference pairs | Model weights through direct preference loss | Simpler preference tuning without a separate reward-model RL loop | Still depends on preference data and training choices |
| RAG | Retrieved documents at answer time | Context supplied to the model | Grounding answers in current or private information | Does not by itself change the model’s underlying behavior |
| Prompt engineering | Instructions in the prompt | The current response only | Steering one interaction without retraining | Can be brittle and context dependent |

Direct Preference Optimization, or DPO, is a notable alternative. The NeurIPS 2023 DPO paper describes RLHF as complex and often unstable because it fits a reward model and then uses reinforcement learning to optimize against that reward. DPO instead turns the preference problem into a single-stage policy training objective and reports alignment performance as good as or better than existing methods in its experiments.[7]
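For readers who want to see what a single-stage preference objective looks like, here is a minimal sketch of the DPO loss for one preference pair. It works directly on log-probabilities from the model being trained and from a frozen reference model, with no separate reward model. The function names, the `beta` value, and the numbers are illustrative assumptions; real training computes these log-probabilities from the models and averages the loss over many pairs.

```python
import math


def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def dpo_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.1):
    """DPO loss for one (chosen, rejected) answer pair.

    Each argument is the total log-probability a model assigns to an answer.
    beta is an assumed value that controls how strongly the policy is
    tied to the reference model.
    """
    chosen_shift = policy_chosen - ref_chosen
    rejected_shift = policy_rejected - ref_rejected
    return -log_sigmoid(beta * (chosen_shift - rejected_shift))


# Hypothetical log-probabilities.
unchanged = dpo_loss(-12.0, -14.0, ref_chosen=-12.0, ref_rejected=-14.0)
improved = dpo_loss(-10.0, -16.0, ref_chosen=-12.0, ref_rejected=-14.0)

print(round(unchanged, 4), round(improved, 4))
```

The loss falls when the trained model raises the probability of the chosen answer and lowers the probability of the rejected one, both measured relative to the reference model.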

Constitutional AI is another related approach. Anthropic introduced Constitutional AI on December 15, 2022, describing a method that uses a set of rules or principles for oversight, with a supervised phase and a reinforcement learning phase using AI-generated preferences, also called reinforcement learning from AI feedback or RLAIF.[8]

RAG is different. It retrieves information and inserts it into the model’s context. If you want the model to answer from documents, read our RAG explainer. If you want to understand how prompts steer outputs without retraining, read our guide to prompt engineering.

What RLHF improves

RLHF can improve the fit between a model’s raw language ability and what users expect from an assistant. It can make the model more likely to answer the actual question, follow requested formats, use a more appropriate tone, and avoid some harmful or low-quality responses.

The InstructGPT results made this point clearly. OpenAI reported that InstructGPT models were better at following instructions than GPT-3, made up facts less often, and showed small decreases in toxic output generation. OpenAI also reported that labelers preferred outputs from a 1.3B parameter InstructGPT model over outputs from a 175B GPT-3 model, despite the InstructGPT model having more than 100x fewer parameters.[3]

This does not mean small aligned models always beat larger base models. It means alignment training can unlock a more useful interface for a model’s existing capabilities. A large base model may know many things but fail to package them as a direct, safe, and useful answer.

RLHF also affects refusals. A plain language model tends to continue almost any text pattern it is given, including requests it should not fulfill. An assistant model trained with human feedback can learn that some requests should be declined or redirected. That refusal behavior is part of the product experience, not just a safety add-on.

For a broader explanation of how these systems generate outputs before alignment training is applied, see what generative AI means and what ChatGPT is.

Where RLHF falls short

RLHF is useful, but it is not a magic truth engine. It optimizes a model toward a reward signal learned from human preferences. If the feedback is biased, shallow, inconsistent, or easy to game, the model can inherit those problems.

Reward model overoptimization

The reward model is a proxy for human judgment. OpenAI’s 2022 research on reward model overoptimization states that optimizing too hard against an imperfect reward model can hurt ground-truth performance, in line with Goodhart’s law.[6] In plain English: when the model chases the score, it may learn tricks that raise the score without improving the real answer.
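A toy example can show the mechanism. The sketch below assumes a hypothetical reward model that has accidentally learned a small bonus for length, then picks the best of three candidate answers by that proxy. All names and numbers are invented for illustration and do not come from the cited research.

```python
def proxy_score(candidate, length_weight=0.005):
    """Hypothetical imperfect reward model: true quality plus a bonus per word."""
    return candidate["true_quality"] + length_weight * candidate["words"]


# Invented candidates; true_quality stands in for what careful humans would judge.
candidates = [
    {"name": "concise_correct", "true_quality": 0.9, "words": 40},
    {"name": "padded_partial", "true_quality": 0.6, "words": 300},
    {"name": "long_wrong", "true_quality": 0.3, "words": 250},
]

picked_by_proxy = max(candidates, key=proxy_score)
picked_by_truth = max(candidates, key=lambda c: c["true_quality"])

print(picked_by_proxy["name"], picked_by_truth["name"])
```

Here the proxy selects the padded answer even though the concise one has higher true quality. The harder a model is optimized against a flawed score like this, the more it can exploit the flaw instead of improving the answer.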

Sycophancy and style bias

Human raters may reward answers that sound confident, polite, or agreeable. That can teach the model to please the user instead of correcting the user. It can also reward length when a shorter answer would be better. OpenAI’s ChatGPT announcement listed excessive verbosity and overused phrases as limitations and tied them to trainer preferences and overoptimization issues.[5]

No built-in source of truth

RLHF can make a model sound more helpful, but it does not automatically connect the model to verified facts. OpenAI noted that fixing plausible-sounding incorrect answers is challenging because, during RL training, there is currently no source of truth.[5] For factual tasks, RLHF often needs to be paired with retrieval, tools, citations, or domain-specific evaluation.

Preference disagreement

People do not always agree about the best answer. OpenAI’s InstructGPT post stated that aligning to average labeler preference may not always be desirable, especially when outputs affect minority groups, and noted that InstructGPT was trained to follow instructions in English, biasing it toward English-speaking cultural values.[3]
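A small sketch shows what gets lost when disagreement is collapsed into a single label. It assumes a simple majority vote over labelers, which is one possible aggregation rule rather than a description of any lab's process. The votes are hypothetical.

```python
from collections import Counter


def majority_label(votes):
    """Return the most common vote and the share of labelers who agreed with it."""
    counts = Counter(votes)
    winner, winner_count = counts.most_common(1)[0]
    return winner, winner_count / len(votes)


# Hypothetical votes from ten labelers comparing answer A with answer B.
votes = ["A"] * 6 + ["B"] * 4

label, agreement = majority_label(votes)
print(label, agreement)
```

If only the winning label is kept for training, the four labelers who preferred answer B leave no trace in the data, even though they were 40 percent of the group.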

RLHF and ChatGPT

RLHF is one reason ChatGPT feels different from a raw text-completion model. OpenAI’s ChatGPT announcement says the model was trained with RLHF, following the same methods as InstructGPT but with slight differences in data collection. Human AI trainers provided conversations in which they played both the user and the assistant.[5]

OpenAI also stated that ChatGPT was fine-tuned from a model in the GPT-3.5 series, which finished training in early 2022.[5] That detail matters because RLHF did not create all of the model’s knowledge from scratch. It shaped the behavior of a model that had already learned broad language and reasoning patterns during earlier training.

In practice, ChatGPT-style training combines several ingredients. Pretraining gives broad capability. Supervised fine-tuning teaches assistant format. RLHF rewards outputs that humans prefer. Product-level systems add policies, tools, retrieval, and interface decisions.

This is also why RLHF is not the whole story behind any modern AI assistant. A system may use multimodal inputs, tool calls, memory, retrieval, or agent workflows. For related concepts, see multimodal AI, AI agents, context windows, and fine-tuning.

Frequently asked questions

What is RLHF in simple terms?

RLHF is a way to train an AI model using human judgments. People compare model answers, and those preferences become a reward signal. The model is then adjusted to produce answers people are more likely to prefer.

Is RLHF the same as fine-tuning?

No. Fine-tuning is a broad term for updating a model on additional training data. RLHF is a specific kind of post-training that uses human preference data, a learned reward signal, and usually a reinforcement learning step. Many RLHF pipelines include supervised fine-tuning before the preference-optimization stage.

Does RLHF stop hallucinations?

No. RLHF can reduce some false or low-quality outputs when raters penalize them, but it does not give the model an automatic source of truth. OpenAI explicitly identified plausible-sounding incorrect answers as a limitation of ChatGPT and noted that RL training lacks a built-in source of truth.[5]

Why do AI companies use RLHF?

AI companies use RLHF because many product goals are subjective. Helpfulness, harmlessness, clarity, and tone are hard to score with a simple formula. Human comparisons let teams train models toward qualities users and developers value.

Can RLHF make a model biased?

Yes. RLHF can reflect the preferences, assumptions, and cultural context of the people providing feedback. OpenAI has noted that average labeler preference may not be desirable in every case and that English instruction-following training can bias a model toward English-speaking cultural values.[3]

Is DPO replacing RLHF?

DPO is an important alternative, but “replacing” is too strong. The NeurIPS 2023 DPO paper presents it as simpler than RLHF because it avoids fitting a separate reward model and running a reinforcement learning loop.[7] In practice, labs choose among RLHF, DPO, RLAIF, supervised fine-tuning, and other methods based on data, compute, reliability, and product goals.