A large language model, usually shortened to LLM, is an AI model trained to process language and generate new text, code, summaries, answers, translations, and other structured outputs. Modern LLMs learn statistical patterns from large collections of data, then use those patterns to predict useful responses to prompts.[1] The easiest way to understand an LLM is this: it does not look up a sentence from memory and paste it back. It converts your prompt into tokens, estimates what should come next, and builds an answer step by step. This makes LLMs flexible, but it also means they can be confidently wrong.

What an LLM is

An LLM, or large language model, is a type of deep learning model trained to understand and generate language-like output. IBM defines LLMs as deep learning models trained on immense amounts of data that can understand and generate natural language and other content.[2] Google Cloud describes an LLM as a statistical language model trained on a massive amount of data for text generation, translation, and other natural language processing tasks.[11]

The word large refers to scale. LLMs usually have many learned internal settings, called parameters, broad training data, and enough computing behind them to model complex language patterns. Developers of many current closed models, OpenAI included, have not published official parameter counts, so those figures should not be guessed.

The word language is broader than everyday writing. It can include natural language, code, math notation, structured data, and in newer systems, tokens representing images, audio, or other media. That is why some systems that started as text models now overlap with multimodal AI.

The word model means the system has learned patterns from data. It is not a database, search engine, or human mind. It is a mathematical system that turns input into likely output. This distinction matters because it explains both the power and the weakness of LLMs.

| Term | Plain meaning | How it relates to LLMs |
|---|---|---|
| AI | Software that performs tasks associated with intelligence | LLMs are one kind of AI system. |
| Machine learning | Systems that learn patterns from data | LLMs are built with machine learning methods. |
| Deep learning | Machine learning using layered neural networks | Modern LLMs are deep learning models. |
| Generative AI | AI that creates new content | LLMs are a major form of generative AI; see our plain-English guide to generative AI. |
| LLM | A large model focused on language | LLMs power chatbots, writing tools, coding assistants, and many AI agents. |

How an LLM works

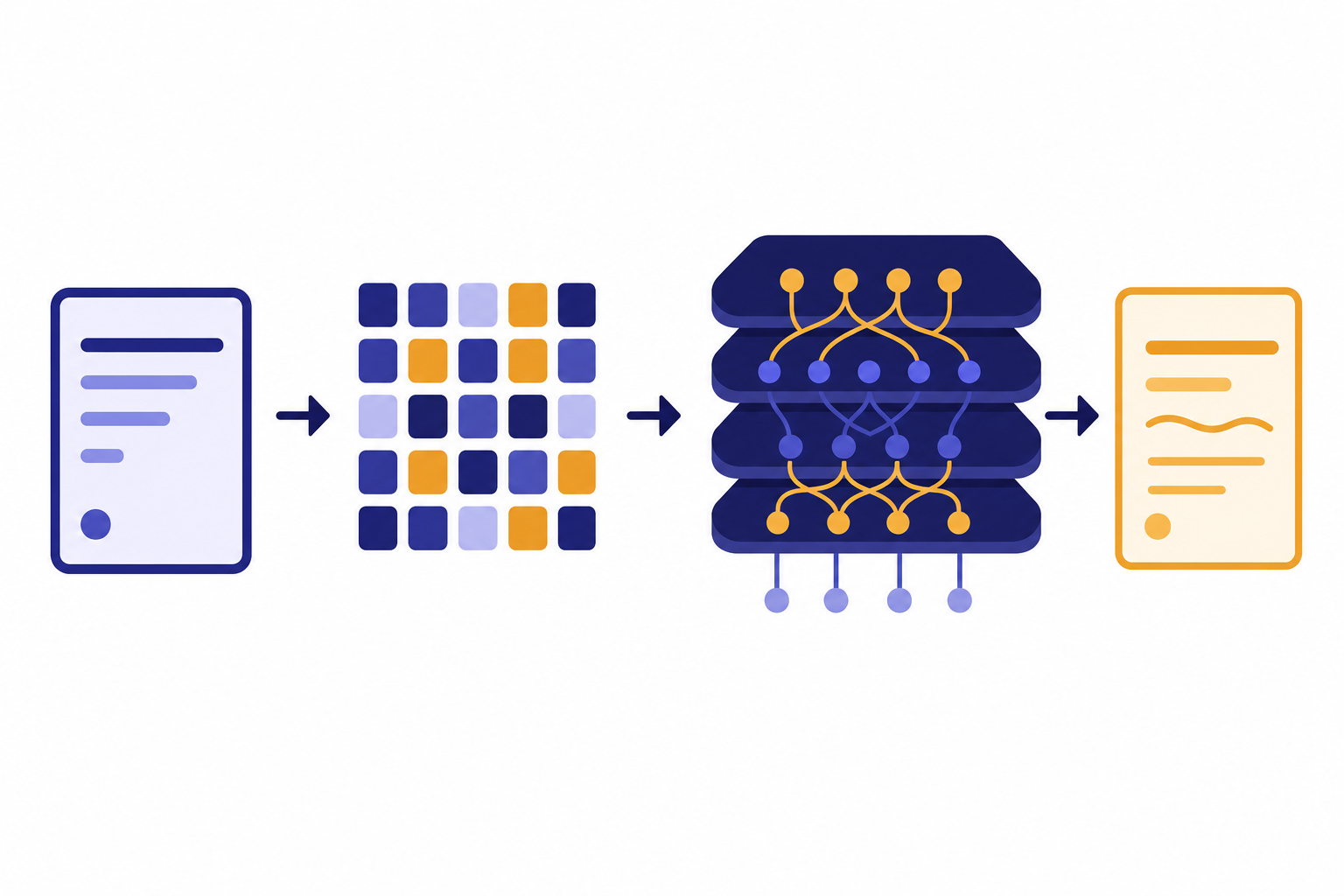

An LLM works by turning input into tokens, processing those tokens through a neural network, and generating more tokens as output. OpenAI says its models analyze relationships in training data, including how words appear together in context, and use that understanding to predict the next likely word when generating a response.[1]

Tokens are the pieces of text the model processes. A token can be a character, a whole word, punctuation, or part of a word. OpenAI’s rule of thumb for English says one token is about four characters, and 100 tokens are about 75 words.[4] For a deeper beginner explanation, read what a token is in ChatGPT.
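
The rule of thumb above can be turned into a quick estimator. This is a minimal sketch that only applies the two ratios quoted in the text (about four characters per token, and 100 tokens per 75 words). The function names are illustrative, and a real tokenizer will give different counts, especially for code or non-English text.

```python
def estimate_tokens_from_chars(text: str) -> int:
    """Rough estimate using about 4 characters per token (English rule of thumb)."""
    if not text:
        return 0
    return max(1, round(len(text) / 4))


def estimate_tokens_from_words(text: str) -> int:
    """Rough estimate using 100 tokens per 75 words."""
    word_count = len(text.split())
    return round(word_count * 100 / 75)


sample = "Large language models split text into tokens before processing it."
print("by characters:", estimate_tokens_from_chars(sample))
print("by words:", estimate_tokens_from_words(sample))
```

The two estimates will rarely agree exactly. Treat either one as a budgeting aid, not a measurement.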

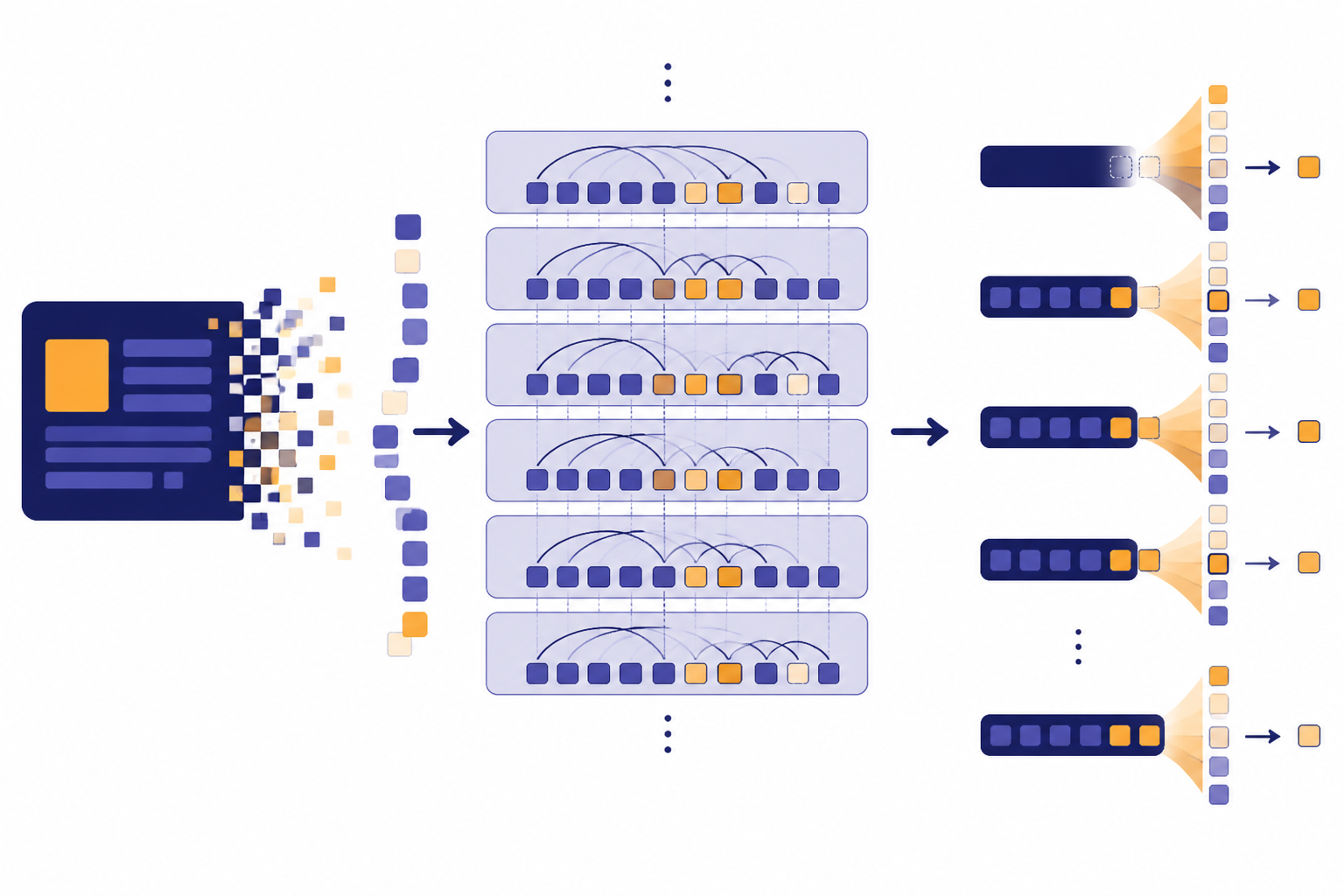

Most modern LLMs use the transformer architecture. The transformer was introduced in the paper Attention Is All You Need, first posted on June 12, 2017.[3] The important idea is attention: the model can weigh relationships among tokens instead of reading text as a simple left-to-right chain.
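
To make the idea of attention concrete, here is a small sketch of scaled dot-product attention for a single query vector, written in plain Python. It is a simplification: real transformers apply learned projections, use many attention heads, and operate on large matrices. The vectors below are made-up numbers chosen only for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]  # subtract max for stability
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for one query over a list of tokens."""
    d = len(query)
    # Score each token by how well its key matches the query.
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in keys
    ]
    weights = softmax(scores)
    # The output is a weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output


# Two tokens; the query matches the first key more closely than the second.
weights, output = attention(
    query=[2.0, 0.0],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0], [20.0]],
)
print(weights, output)
```

The token whose key best matches the query receives the largest weight, so its value contributes most to the output.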

When you ask an LLM a question, the model does not simply search the web unless the product has a browsing or retrieval tool. The base model reads the prompt and the available conversation context. Then it predicts a continuation. Each generated token changes the context for the next token. This loop continues until the answer ends or the system reaches an output limit.
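
The generation loop can be sketched with a toy model. The example below is not how a real LLM computes its predictions: it uses simple word-pair counts and looks only at the last token, whereas an LLM uses a neural network over the whole context. It does show the same loop shape: predict a token, append it to the context, and repeat until a stop condition or an output limit.

```python
from collections import Counter, defaultdict


def train_bigram(corpus_tokens):
    """Count which token follows which in a tiny corpus."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(corpus_tokens, corpus_tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, prompt_tokens, max_new_tokens=5, stop_token="."):
    """Greedy generation: always pick the most frequent next token."""
    context = list(prompt_tokens)
    for _ in range(max_new_tokens):          # output limit
        candidates = counts.get(context[-1])
        if not candidates:                   # nothing learned for this token
            break
        next_token = candidates.most_common(1)[0][0]
        context.append(next_token)           # new token changes the context
        if next_token == stop_token:         # the answer ends
            break
    return context


corpus = "the cat sat on the mat . the cat sat on the rug .".split()
model = train_bigram(corpus)
print(" ".join(generate(model, ["the"], max_new_tokens=3)))
```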

The context window is the amount of material the model can consider at once. It includes your prompt, previous messages, uploaded or retrieved text, tool results, and the model’s own answer. A larger context window lets a model consider more material, but it does not guarantee better reasoning. For more detail, see what a context window is.
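
One common consequence is that applications must decide what to drop when a conversation outgrows the window. The sketch below shows one possible policy, keeping the most recent messages that fit while reserving room for the answer. The token estimate uses the four-characters-per-token rule of thumb, and the function names and budget numbers are assumptions for illustration, not any product's actual behavior.

```python
def estimate_tokens(text):
    """Crude estimate: about 4 characters per token."""
    return max(1, len(text) // 4)


def fit_to_context(messages, max_tokens, reserve_for_answer):
    """Keep the newest messages that fit, leaving room for the model's answer."""
    budget = max_tokens - reserve_for_answer
    kept = []
    used = 0
    for message in reversed(messages):       # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > budget:
            break                            # older messages no longer fit
        kept.append(message)
        used += cost
    return list(reversed(kept))              # restore chronological order


history = ["a" * 40, "b" * 40, "c" * 40]     # about 10 tokens each
print(fit_to_context(history, max_tokens=30, reserve_for_answer=10))
```

Other policies, such as summarizing older messages instead of dropping them, are also possible.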

This process explains why wording matters. A vague prompt gives the model weak constraints. A clear prompt gives it a stronger target. Prompting does not rewrite the model, but it changes the immediate context the model uses to answer. That is the practical foundation behind prompt engineering.

Training, prompting, fine-tuning, and RAG

People often use the word “train” for any interaction with an LLM. That is usually not precise. Asking a question, writing a better prompt, uploading a file, and fine-tuning a model are different actions.

Pretraining is the large initial training process. The model learns broad language patterns from large datasets. For many closed commercial models, the full dataset, architecture, and parameter count are not fully public. OpenAI, for example, has not published these details for many of its models.

Instruction tuning and RLHF make a base model more useful in conversation. OpenAI described InstructGPT models as models trained with humans in the loop, using reinforcement learning from human feedback, or RLHF.[7] OpenAI also said ChatGPT was trained with RLHF and fine-tuned from a model in the GPT-3.5 series; ChatGPT was introduced on November 30, 2022.[5] For more background, read what RLHF means and when ChatGPT was released.

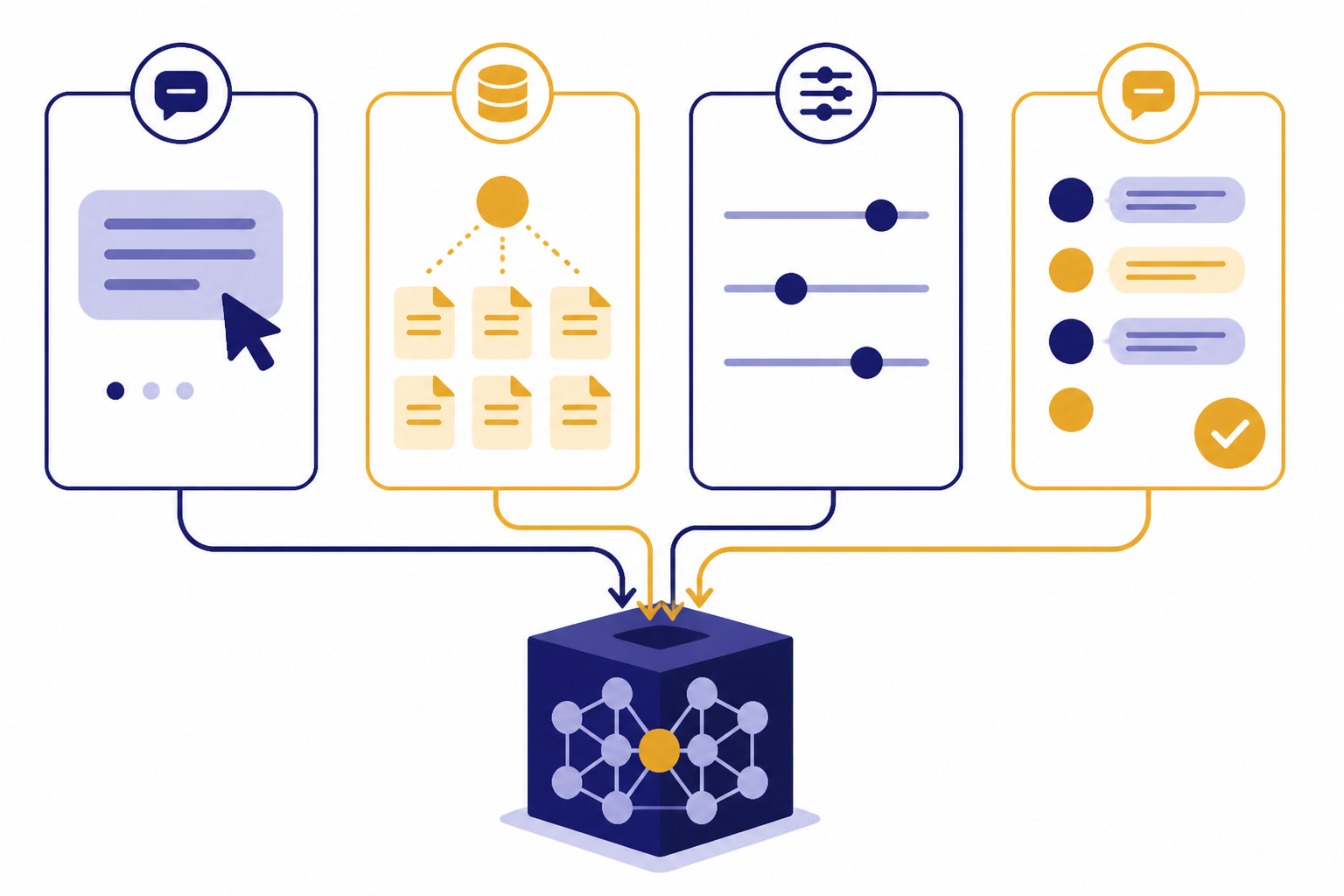

Prompting is not training. It supplies instructions and context for the current request. A prompt can tell the model what role to take, what format to use, what facts to consider, and what to avoid. The underlying model weights do not change just because you wrote a prompt.

Fine-tuning changes model behavior more directly. OpenAI describes fine-tuning as taking a base model, providing examples of the inputs and outputs expected in an application, and producing a model better suited to those tasks.[8] Fine-tuning can help with formatting, domain style, classification, and repeated task patterns. It is not the best first step for every problem; see what fine-tuning is in AI.

RAG, or retrieval-augmented generation, adds relevant outside information at request time. NVIDIA defines RAG as connecting an external data source to an LLM so it can generate domain-specific or up-to-date responses.[9] RAG is often the right choice when the model needs private documents, current policies, product manuals, or source citations. For a dedicated explainer, see what RAG is.
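
The shape of a RAG request can be sketched in a few lines. This toy version ranks documents by shared words, which is only a stand-in for the embedding-based search most real systems use, and it stops at building the prompt instead of calling a model. The documents, function names, and prompt wording are all hypothetical.

```python
def words(text):
    """Lowercase a string and split it into a set of words."""
    for mark in "?.,":
        text = text.replace(mark, " ")
    return set(text.lower().split())


def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = words(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & words(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_rag_prompt(question, documents, top_k=1):
    """Place the retrieved passages in the prompt ahead of the question."""
    passages = retrieve(question, documents, top_k)
    sources = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the sources below. "
        "If they do not answer the question, say so.\n"
        f"Sources:\n{sources}\n"
        f"Question: {question}"
    )


handbook = [
    "A refund is accepted within 30 days of purchase.",
    "Support is available on weekdays from 9 to 5.",
    "Shipping takes 3 to 5 business days.",
]
print(build_rag_prompt("When can I get a refund after a purchase?", handbook))
```

The model's weights never change here. Only the text supplied at request time does, which is why RAG suits facts that change often.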

| Method | What changes | Best use | Common mistake |
|---|---|---|---|
| Prompting | The instructions and context in one request | Improving one answer or workflow | Expecting the model to permanently learn from the prompt |
| RAG | The outside documents supplied before generation | Answering from current or private sources | Assuming retrieval makes every answer correct |
| Fine-tuning | The behavior of a customized model | Consistent style, format, or task behavior | Using it to store facts that change often |
| RLHF | The model’s preference for helpful responses | Making models follow instructions better | Thinking it guarantees truth |

What LLMs can and cannot do

LLMs are useful because language sits inside many work tasks. Emails, code, support tickets, contracts, spreadsheets, meeting notes, requirements, research notes, and documentation all contain language. A model that can transform language can help with many jobs, even if it is not a complete expert system.

An LLM can draft a first version of a document, summarize a long passage, rewrite text for a different audience, extract fields from unstructured text, translate, classify, explain code, generate code examples, brainstorm options, and turn instructions into structured output. Some models can also process images. OpenAI described GPT-4 as a large multimodal model that accepts image and text inputs and emits text outputs.[6]

An LLM cannot guarantee truth by itself. It may produce a sentence that sounds plausible but is not supported by facts. It may miss details in a long document. It may follow a misleading premise. It may cite sources incorrectly if the product has no reliable retrieval and citation layer.

A good mental model is “capable assistant, not final authority.” Use it to accelerate drafting, analysis, and exploration. Use external checks for legal, medical, financial, safety, academic, or operational decisions.

| Task | LLM fit | Human check needed |
|---|---|---|
| Summarize meeting notes | Strong | Check decisions, names, and deadlines |
| Draft a customer email | Strong | Check tone, policy, and promises |
| Explain unfamiliar code | Useful | Run tests and inspect security implications |
| Answer from a private handbook | Useful with RAG | Check retrieved source passages |
| Give medical diagnosis | Poor as a standalone authority | Use a qualified clinician |
| Calculate exact tax liability | Poor as a standalone authority | Use official rules and a tax professional |

LLM vs. GPT, ChatGPT, generative AI, and agents

LLM is the broad category. GPT is a specific model family name. ChatGPT is a product that lets people interact with models through a chat interface. Generative AI is the broader class of AI systems that create content. An AI agent is a system that can use a model, tools, memory, and planning steps to pursue a goal.

GPT stands for Generative Pre-trained Transformer. The “transformer” part points back to the architecture introduced in 2017.[3] For acronym details, read what GPT stands for or our broader GPT explainer.

ChatGPT is not the same thing as an LLM. ChatGPT is an application. It may include a chat interface, memory features, tools, file handling, image features, voice features, search, and safety systems. The LLM is the core model that generates much of the response. For beginners, start with what ChatGPT is.

Generative AI includes LLMs, image generators, video generators, audio generators, and other systems that produce new content. Many modern products combine several model types. A writing assistant may use an LLM. A design assistant may use an image model. A voice assistant may combine speech recognition, an LLM, and text-to-speech.

Agents add action. An agentic system may decide which tool to call, search a database, write code, run a workflow, or ask for clarification. The LLM often acts as the reasoning and language layer, but the agent includes more than the model. See what an AI agent is for that distinction.

| Concept | Category | Simple distinction |
|---|---|---|
| LLM | Model type | The language-processing engine |
| GPT | Model family | A transformer-based model family name |
| ChatGPT | Application | A product interface built around models |
| Generative AI | Broader technology class | AI that creates text, images, audio, code, or other content |
| AI agent | System pattern | A model plus tools, goals, and action loops |

Limits and risks to understand

The central risk of an LLM is that fluent language can hide uncertainty. OpenAI says hallucinations remain a fundamental challenge for all large language models.[10] OpenAI’s Help Center also tells users to approach ChatGPT critically and verify important information from reliable sources.[12]

Hallucination means the model produces content that appears factual but is wrong, unsupported, or fabricated. It can happen with dates, quotes, citations, statistics, laws, medical information, product specs, and names. The more specific the claim, the more important verification becomes.

LLMs can also reflect bias in data and feedback. They can be sensitive to prompt wording. They can mishandle ambiguity. They can overfit to examples in the prompt. They can reveal private information if an application is built carelessly. They can be used to generate spam, phishing, misinformation, or low-quality content at scale.

Security matters when LLMs connect to tools. A model that can read email, edit files, call APIs, or submit forms needs boundaries. Developers should treat prompts and retrieved documents as untrusted input. A malicious instruction hidden inside a document can try to redirect the model. This is one reason agent systems need permissions, logs, and human approval for sensitive actions.
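
As a rough illustration of permissions, logs, and human approval, the sketch below checks every tool call a model requests before anything runs. The tool names and the three-way decision are hypothetical. A real system would also need authentication, argument validation, and durable logging.

```python
# Hypothetical tool names, chosen only for illustration.
ALLOWED_TOOLS = {"search_docs", "send_email"}
NEEDS_APPROVAL = {"send_email"}   # sensitive actions need a human sign-off


def authorize_tool_call(tool_name, approved_by_human=False, audit_log=None):
    """Decide whether a tool call requested by the model may run."""
    if audit_log is None:
        audit_log = []
    if tool_name not in ALLOWED_TOOLS:
        decision = "denied"                  # not on the allowlist
    elif tool_name in NEEDS_APPROVAL and not approved_by_human:
        decision = "pending_approval"        # wait for a person to confirm
    else:
        decision = "allowed"
    audit_log.append((tool_name, decision))  # record every request
    return decision


log = []
for tool in ["search_docs", "send_email", "delete_files"]:
    print(tool, "->", authorize_tool_call(tool, audit_log=log))
```

The check sits outside the model on purpose: an instruction hidden in a retrieved document can change what the model asks for, but not what the surrounding code permits.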

Privacy also matters. Do not paste secrets, credentials, regulated personal data, or confidential company material into a tool unless you understand its data handling terms and your organization allows that use. The model may not be the only risk. Logs, plugins, retrieval stores, browser tools, and connected apps can all affect exposure.

How to use an LLM well

Start by giving the model a clear job. State the goal, audience, source material, constraints, and output format. Good prompts reduce ambiguity. They do not make the model infallible, but they make errors easier to spot.
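
One way to make this habit repeatable is a small template that assembles the five elements into a prompt. This is only a sketch: the labels and the helper name are illustrative choices, not a required format for any model.

```python
def build_prompt(goal, audience="", source_material="", constraints="", output_format=""):
    """Assemble a prompt from labeled sections, skipping any left empty."""
    sections = [
        ("Goal", goal),
        ("Audience", audience),
        ("Source material", source_material),
        ("Constraints", constraints),
        ("Output format", output_format),
    ]
    return "\n".join(f"{label}: {value}" for label, value in sections if value)


print(build_prompt(
    goal="Summarize the meeting notes below.",
    audience="Engineers who missed the meeting.",
    source_material="(paste notes here)",
    constraints="Do not add decisions that are not in the notes.",
    output_format="Five bullet points, then a list of open questions.",
))
```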

Use source material when accuracy matters. Paste the relevant excerpt, attach the document, or use a RAG-enabled system that retrieves passages from a trusted knowledge base. Ask the model to separate facts from assumptions. Ask it to say when the supplied material does not answer the question.

Break complex work into stages. For example, ask for an outline first, then a draft, then a critique, then a revised version. This makes the work inspectable. It also helps you catch a weak assumption before it becomes embedded in a polished answer.

Prefer structured outputs for operational work. If you need fields, ask for a table or JSON-like structure. If you need a decision, ask for criteria and evidence. If you need code, ask for tests and edge cases. The model can help generate these artifacts, but you still own validation.
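
Owning validation can start with a simple check of the model's output before anything downstream uses it. The sketch below assumes the model was asked for a JSON object with three fields. The field names and types form a hypothetical schema, and the raw strings stand in for model responses.

```python
import json

# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {
    "customer": str,
    "amount": (int, float),
    "currency": str,
}


def parse_model_output(raw_text):
    """Return (data, errors); data is None when validation fails."""
    try:
        data = json.loads(raw_text)
    except json.JSONDecodeError as exc:
        return None, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["expected a JSON object"]
    errors = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in data:
            errors.append(f"missing field: {field}")
        elif not isinstance(data[field], expected):
            errors.append(f"wrong type for field: {field}")
    return (data if not errors else None), errors


print(parse_model_output('{"customer": "Ada", "amount": 12.5, "currency": "EUR"}'))
print(parse_model_output('{"customer": "Ada"}'))
print(parse_model_output("Sure! Here is the JSON you asked for."))
```

A failed check is a signal to retry, repair, or escalate to a person. It confirms the output's shape, not whether the values are true.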

Use the smallest reliable tool for the job. A full LLM is not always necessary. Search may be better for finding a source. A calculator may be better for arithmetic. A database query may be better for exact records. An LLM is strongest when the task needs language understanding, transformation, synthesis, or interaction.

- For writing: provide audience, tone, length, and examples.

- For research: require sources and check the sources yourself.

- For coding: run the code, test edge cases, and review dependencies.

- For business use: define approval rules before connecting tools.

- For learning: ask the model to explain, quiz you, and identify likely misunderstandings.

Frequently asked questions

What does LLM stand for?

LLM stands for large language model. It is a type of AI model trained to process and generate language. The term usually refers to modern deep learning systems that can answer questions, draft text, summarize, translate, code, and follow instructions.

Is ChatGPT an LLM?

ChatGPT is an application powered by large language models, not just a model by itself. OpenAI introduced ChatGPT on November 30, 2022, and described it as fine-tuned from a model in the GPT-3.5 series.[5] The app can include other product features around the model, such as tools, files, voice, images, or search.

Do LLMs understand language?

LLMs model language patterns in a way that can produce useful behavior. Whether that counts as “understanding” depends on how strictly you define the word. For practical use, it is safer to treat an LLM as a powerful pattern-based system that can reason in some contexts and fail badly in others.

Why do LLMs make things up?

LLMs generate likely continuations, not guaranteed facts. If the model lacks enough grounding, it may produce an answer that sounds correct but is false. OpenAI says hallucinations remain a fundamental challenge for all large language models.[10]

What is the difference between an LLM and generative AI?

An LLM is a language-focused model. Generative AI is the broader category of AI that creates new content, including text, images, audio, video, and code. Many generative AI products use LLMs, but not every generative AI system is only an LLM.

Can an LLM access current information?

A base LLM does not automatically know current events after its training period. It can access current or private information only if the product connects it to tools such as search, databases, files, or RAG. RAG connects an external data source to an LLM for domain-specific or up-to-date responses.[9]