GPT stands for Generative Pre-trained Transformer, a family and design pattern for AI models that generate language and other outputs from prompts.[1] A GPT learns broad patterns during pre-training, then later training and product instructions shape it into a useful assistant for conversation, coding, analysis, and writing.[2] The short version is this: a GPT breaks your prompt into tokens, uses a Transformer network to weigh the surrounding context, and predicts the next useful token until it builds a response.[8][9] ChatGPT is an app; GPT is the model technology that can sit inside apps, APIs, agents, and multimodal tools.

Quick definition

A GPT is a general-purpose AI model trained to generate likely continuations from an input. In everyday use, that input is a prompt. The output can be an answer, draft, summary, translation, code sample, plan, or structured data.

GPT belongs to the broader category of large language models, and it is also a major example of generative AI systems. It is not a traditional database. It does not retrieve a verified row and display it unchanged. It generates a response from patterns learned during training plus the text and instructions currently in its context.[8]

The product distinction matters. ChatGPT is the app or interface many people use. GPT is the model family and training approach that can power a chat app, developer API, coding assistant, search-like workflow, or agent. When someone says they are using GPT, they may mean the model. When they say they are using ChatGPT, they usually mean the product.

A simple mental model is autocomplete with a much larger context, a much richer training process, and post-training that teaches the model how to follow instructions. That analogy is incomplete, but it prevents the most common mistake: treating GPT as if it were a person, a live encyclopedia, or a guaranteed fact checker.

What each word in GPT means

The official expansion is Generative Pre-trained Transformer.[1] Each word describes a real part of the system, not just a brand label.

| Part | Plain-English meaning | Why it matters |
|---|---|---|
| Generative | The model produces new token sequences instead of only selecting from fixed categories. | This is why GPT can draft paragraphs, code, outlines, and answers rather than only classify text. |
| Pre-trained | The model first learns broad patterns from large unlabeled text before later adaptation. | OpenAI’s early GPT work described training a Transformer in an unsupervised way and then fine-tuning it for specific tasks.[2] |
| Transformer | The neural network architecture uses attention to weigh relationships among tokens. | The Transformer architecture was introduced in the 2017 paper Attention Is All You Need.[4] |

People sometimes describe GPT as a generative pre-trained model. That phrase is understandable, but the T in the acronym stands for Transformer. The distinction matters because a Transformer is the architecture that made this training pattern unusually effective for language tasks.

OpenAI’s 2018 GPT work combined these ideas: a Transformer model, unsupervised pre-training, and fine-tuning on smaller task datasets.[2] That mix helped turn one model into a flexible language system instead of a separate custom model for every narrow task.
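
To make the attention idea in the table above more concrete, here is a minimal sketch of scaled dot-product attention for a single query, written in plain Python. The vectors are hypothetical toy values chosen for illustration. A real Transformer also uses learned projections, many attention heads, and masking, all of which are left out here.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for one query over a short sequence."""
    scale = math.sqrt(len(query))
    # One score per token: how well does the query match that token's key?
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # The output is a weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Hypothetical 2-dimensional vectors for three tokens (illustration only).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [1.0, 1.0]

weights, output = attend(query, keys, values)
print(weights)  # the third token gets the largest weight
print(output)
```

The third key matches the query best, so its value contributes most to the output. That is the sense in which attention "weighs relationships among tokens".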

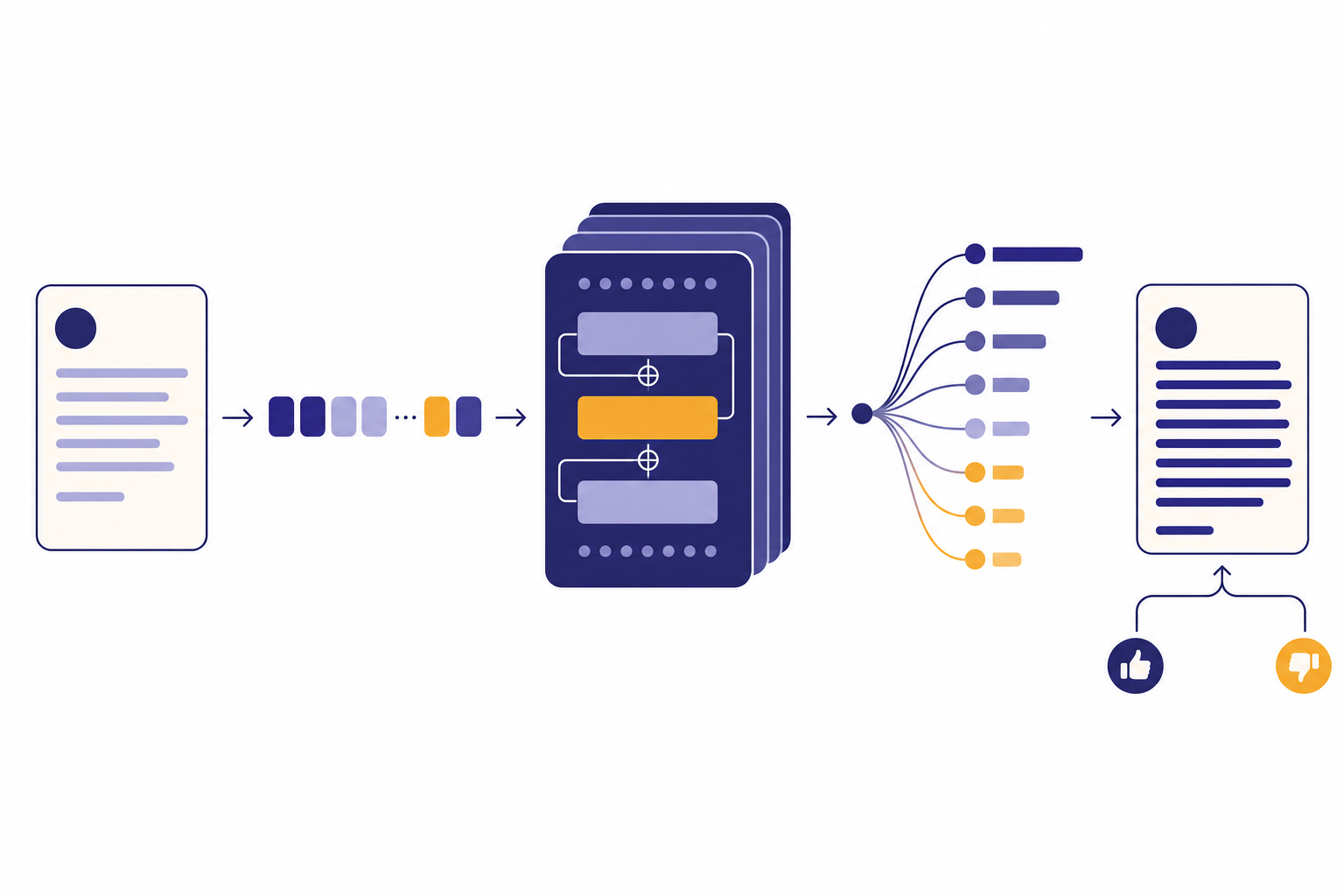

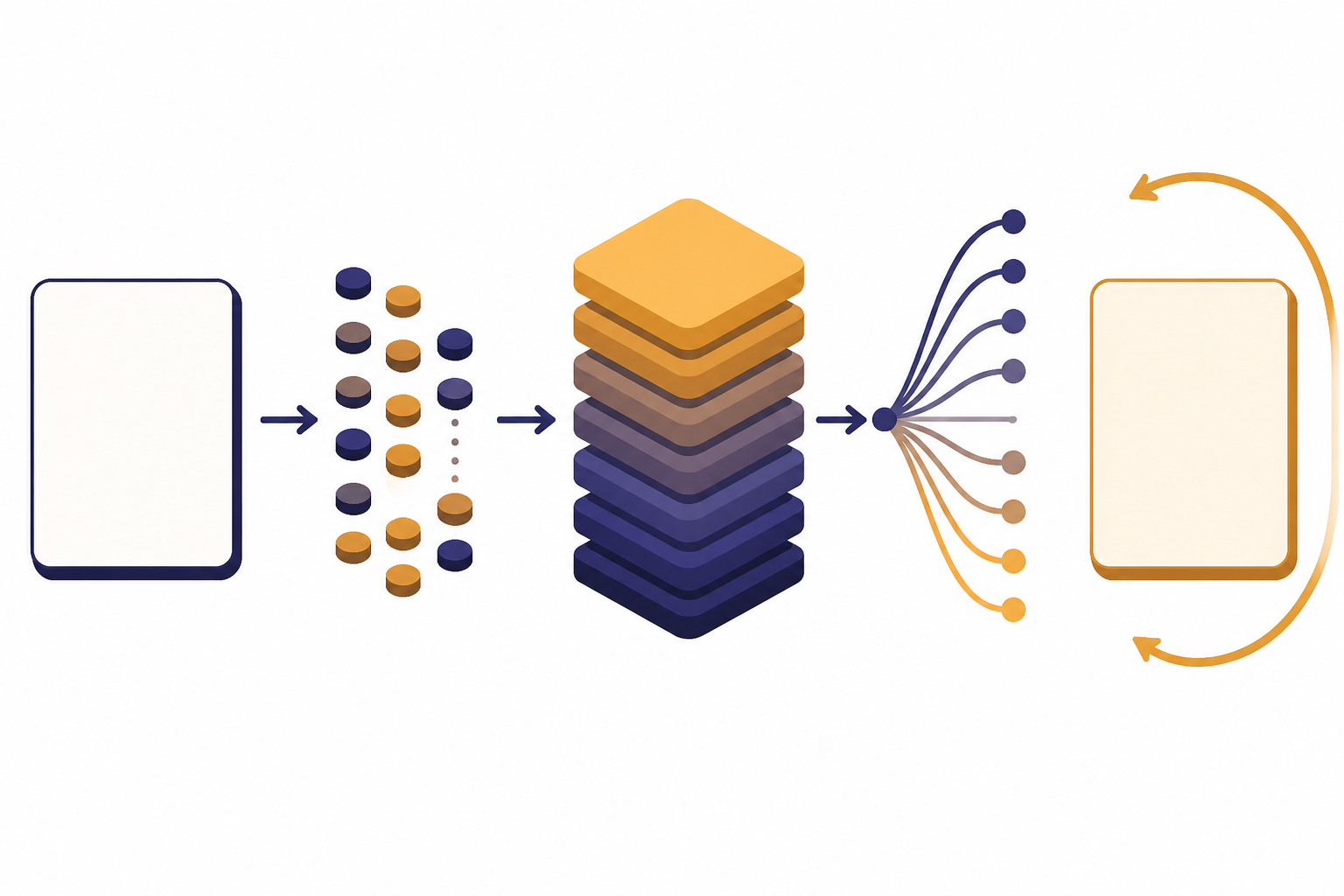

How a GPT model works

A GPT response feels conversational, but the internal process is mechanical. The model receives a context, transforms it into token representations, and generates one token at a time until the answer is complete.

| Stage | What happens | What the user notices |
|---|---|---|
| Prompt intake | Your message and relevant conversation history are prepared as input. | The model appears to remember what you just said. |
| Tokenization | The text is split into tokens, which are the units OpenAI models process.[9] | Very long prompts can hit token limits before they look long to a human. |
| Attention | The Transformer weighs relationships among tokens in the current context.[4] | The model can connect a question to earlier details in the same prompt. |
| Next-token prediction | The model predicts the next token repeatedly. OpenAI describes GPT-4 as pre-trained to predict the next token in a document.[8] | The answer appears word by word or sentence by sentence. |
| Post-training behavior | Additional training and alignment methods make the model more useful for instructions and dialogue.[6] | The model is more likely to follow directions, refuse unsafe requests, and use a helpful style. |
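
The tokenization and next-token prediction stages above can be sketched with a deliberately tiny stand-in model. This is not how a GPT is implemented: the sketch assumes a whitespace tokenizer and a bigram count table in place of subword tokens and a Transformer, and the names `train_bigram` and `generate` are hypothetical. What it does share with a GPT is the autoregressive loop: predict one token, append it, and repeat.

```python
from collections import Counter, defaultdict

def tokenize(text):
    """Toy tokenizer: lowercase words. Real GPT tokenizers use subword units."""
    return text.lower().split()

def train_bigram(corpus):
    """Count which token follows which; a crude stand-in for pre-training."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        tokens = tokenize(sentence) + ["<end>"]
        for current, nxt in zip(tokens, tokens[1:]):
            follows[current][nxt] += 1
    return follows

def generate(follows, prompt, max_new_tokens=10):
    """Greedy autoregressive loop: append the most likely next token each step."""
    tokens = tokenize(prompt)
    if not tokens:
        return ""
    for _ in range(max_new_tokens):
        candidates = follows.get(tokens[-1])
        if not candidates:
            break  # the toy model has never seen this token
        next_token = candidates.most_common(1)[0][0]
        if next_token == "<end>":
            break  # the model predicts that the text is complete
        tokens.append(next_token)
    return " ".join(tokens)

corpus = [
    "the prompt sets the context",
    "a model reads the context",
]
model = train_bigram(corpus)

print(generate(model, "a model"))     # a model reads the context
print(generate(model, "the prompt"))  # the prompt sets the context
```

The same `model` produces different text for different prompts, and calling `generate` never modifies the counts learned in `train_bigram`.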

This is why wording matters. A prompt changes the context the model can use. It does not rewrite the underlying model. For practical guidance, see our guides to prompt engineering basics, tokens in ChatGPT, and context window size limits.
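
A rough sketch of how a token limit shapes the context: the helper below keeps only the most recent messages that fit within a budget. It assumes a whitespace word count as a stand-in for real tokenization, and `fit_to_context` is a hypothetical helper, not part of any OpenAI library. Real products may trim, summarize, or store history differently.

```python
def count_tokens(text):
    """Toy token count: whitespace-separated words (an assumption, not a real tokenizer)."""
    return len(text.split())

def fit_to_context(messages, max_tokens):
    """Keep the most recent messages whose combined toy token count fits the budget."""
    kept = []
    used = 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # this message and everything older no longer fit
        kept.append(message)
        used += cost
    return list(reversed(kept)), used

history = [
    "My name is Ada and I work on compilers.",
    "Please keep answers short.",
    "What should I read next?",
]

context, used = fit_to_context(history, max_tokens=10)
print(context)  # the oldest message is dropped
print(used)     # 9
```

With a budget of 10 toy tokens, the first message falls outside the context, so a model given only `context` would have no way to know the user's name.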

Instruction-following is not the same as raw pre-training. OpenAI’s InstructGPT work used human demonstrations and preference rankings to fine-tune GPT-3 so outputs better matched user intent.[6] That is why modern assistants are not just base autocomplete systems exposed directly to users.

GPT vs. ChatGPT, LLM, and generative AI

GPT overlaps with several AI terms, but the terms are not interchangeable. Use the most specific word that matches what you mean.

| Term | What it means | How it relates to GPT |
|---|---|---|
| Generative AI | The broad category of AI systems that create new outputs. | GPT is one important kind of generative AI, focused heavily on language and related outputs. |
| LLM | A large language model: a neural network trained on large amounts of text to predict and generate language. | GPT is a well-known LLM family. |
| GPT | A Generative Pre-trained Transformer model family and approach.[1] | It is the model technology, not the chat product by itself. |
| ChatGPT | A user-facing product for talking with AI models. | OpenAI released GPT-4 through ChatGPT and the API, showing the difference between model and interface.[7] |
| Transformer | The neural network architecture behind GPT-style language modeling. | GPT uses Transformers, but not every Transformer model is called GPT. |

A useful rule: if you mean the app, say ChatGPT. If you mean the model family, say GPT. If you mean the broader technical class, say LLM. If you mean the broad category of systems that create new content, say generative AI.

The distinction also helps when reading model announcements. A new app feature may not mean a new base model. A new GPT model may appear first in an API, later in a chat product, or only in a limited research preview.

A short GPT timeline

GPT did not arrive fully formed. The term grew out of several research and product steps.

| Date | Milestone | Why it mattered |
|---|---|---|
| June 11, 2018 | OpenAI published work on improving language understanding with unsupervised learning.[2] | The work paired Transformers with unsupervised pre-training, then used fine-tuning for downstream language tasks.[3] |
| May 28, 2020 | OpenAI introduced GPT-3 as an autoregressive language model with 175 billion parameters.[5] | GPT-3 showed stronger few-shot behavior, where tasks could be specified with examples in text rather than task-specific training.[5] |
| January 27, 2022 | OpenAI described InstructGPT, using reinforcement learning from human feedback to better follow user intentions.[6] | This helped move GPT-style systems from raw completion toward assistant-like behavior. |
| March 14, 2023 | OpenAI introduced GPT-4 as a large multimodal model accepting image and text inputs and producing text outputs.[7] | The GPT label expanded beyond text-only interaction. |
| May 13, 2024 | OpenAI announced GPT-4o, a model built for real-time reasoning across audio, vision, and text.[10] | GPT-style systems moved further toward multimodal interaction. |

The pattern is clear. Early GPT work focused on language understanding and transfer learning. Later systems added stronger instruction following, safety work, larger-scale deployment, and multimodal inputs and outputs.

What GPT can and cannot do

GPT is useful because language is a common interface for work. It can transform a rough prompt into a polished draft, summarize long material, write code, classify text, translate between languages, and answer questions using information placed in the prompt. GPT-3 research specifically tested tasks such as translation, question answering, cloze tasks, word unscrambling, and arithmetic from text prompts.[5]
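
Specifying a task with examples in the prompt, the few-shot pattern mentioned in the timeline, can be sketched as simple string assembly. The `Input:` and `Output:` labels and the function name `build_few_shot_prompt` are assumptions for illustration. No particular format is required, and the resulting string would still need to be sent to a model.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble an instruction, worked examples, and a new input into one prompt string."""
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    # Leave the final output blank so the model's continuation fills it in.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)

examples = [("cheese", "fromage"), ("bread", "pain")]
prompt = build_few_shot_prompt("Translate English to French.", examples, "water")
print(prompt)
```

The examples live entirely in the prompt text. Nothing about the model is retrained to perform the translation task.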

Modern GPT systems can also be multimodal when the specific model supports it. GPT-4o, for example, was described by OpenAI as accepting combinations of text, audio, image, and video inputs and generating text, audio, and image outputs.[10] That does not mean every GPT-branded model has the same input and output options.

| Strength | Good use | Watch-out |
|---|---|---|
| Language generation | Drafting emails, outlines, explanations, and scripts. | Polished wording can still contain wrong facts. |
| Text transformation | Summarizing, rewriting, formatting, and extracting structure. | The model may omit details if the prompt is vague. |
| Pattern completion | Generating code, examples, tables, and test cases. | Outputs need testing and review. |
| Context use | Answering based on pasted documents or conversation history. | The model is limited by the available context window.[9] |

The limits are just as important. OpenAI’s GPT-4 technical report says GPT-4 is not fully reliable, can hallucinate, has a limited context window, and does not learn from experience during use.[8] That means GPT output should be checked when accuracy, safety, legal risk, financial decisions, medical judgment, or public communication matter.

Do not treat parameter counts as public facts unless OpenAI publishes them. For GPT-4, OpenAI’s technical report said it did not disclose architecture details including model size, hardware, training compute, dataset construction, or similar information.[8] OpenAI has not published an official parameter count for GPT-4, so figures quoted elsewhere are estimates.

How GPT connects to prompting, fine-tuning, RAG, and agents

Once you understand GPT, the surrounding AI vocabulary becomes easier. Most practical AI systems are GPT plus a surrounding method for steering, grounding, customizing, or acting.

| Method | What it changes | When it helps |
|---|---|---|
| Prompting | Changes the instructions and context given to the model. | Use it when you need better answers without changing the model. Start with prompt engineering basics. |
| RLHF | Uses human preference data to steer model behavior after pre-training.[6] | It helps explain why assistant models follow instructions better than raw base models. See reinforcement learning from human feedback. |
| Fine-tuning | Adapts model behavior with additional examples. | Use it when you need a repeatable style, format, or domain behavior. See fine-tuning in AI. |
| RAG | Adds retrieved external material to the model context. | Use it when answers must cite or use a changing knowledge base. See retrieval-augmented generation. |
| Agents | Wrap the model with tools, steps, memory, and action policies. | Use them when the system must do work across multiple steps. See practical AI agents. |

These methods do different jobs. Prompting is the fastest lever. RAG helps with freshness and source grounding. Fine-tuning helps with consistency. Agents help with multi-step workflows. RLHF and other post-training methods shape the assistant behavior users often expect from a GPT-powered product.
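
As an illustration of the RAG row, the sketch below retrieves the most relevant document for a question and places it in the prompt. It assumes a toy word-overlap score, where production systems usually rely on embeddings or a search index. The documents and the names `score`, `retrieve`, and `build_rag_prompt` are hypothetical.

```python
def score(query, document):
    """Toy relevance score: number of distinct words shared by query and document."""
    return len(set(query.lower().split()) & set(document.lower().split()))

def retrieve(query, documents, top_k=1):
    """Return the top_k documents with the highest toy relevance score."""
    ranked = sorted(documents, key=lambda doc: score(query, doc), reverse=True)
    return ranked[:top_k]

def build_rag_prompt(query, documents, top_k=1):
    """Put the retrieved sources into the prompt ahead of the question."""
    sources = retrieve(query, documents, top_k)
    numbered = [f"Source {i}: {doc}" for i, doc in enumerate(sources, start=1)]
    return "\n".join(
        ["Answer using only the sources below."] + numbered + ["", f"Question: {query}"]
    )

# Hypothetical knowledge base entries (illustration only).
documents = [
    "refunds are processed within 14 days of the request",
    "support is available on weekdays from 9 to 17",
]

prompt = build_rag_prompt("when are refunds processed", documents)
print(prompt)
```

Only the refund policy reaches the model's context, which is how RAG grounds an answer in material the model was never trained on. Updating the knowledge base changes future answers without touching the model.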

Frequently asked questions

What does GPT stand for?

GPT stands for Generative Pre-trained Transformer.[1] The phrase generative pre-trained model describes the idea in plain English, but it is not the exact acronym expansion. The T refers to the Transformer architecture.

Is GPT the same as ChatGPT?

No. GPT is a model family and technical approach. ChatGPT is a product interface for interacting with AI models. A GPT model can power a chat app, API, coding tool, or agent workflow.

Who created GPT?

OpenAI researchers Alec Radford, Karthik Narasimhan, Tim Salimans, and Ilya Sutskever authored the original generative pre-training paper.[3] The Transformer architecture used by GPT came from the 2017 Attention Is All You Need paper by Vaswani and coauthors.[4]

Why does GPT sometimes make things up?

A GPT generates likely continuations; it does not inherently verify every claim against a live source. OpenAI’s GPT-4 report says GPT-4 can hallucinate and is not fully reliable.[8] Use retrieval, citations, tools, and human review for high-stakes work.

Does GPT learn from my prompt?

It can use your prompt and conversation context while generating the current response. That is different from changing the underlying model weights. OpenAI’s GPT-4 report says GPT-4 does not learn from experience during use.[8]

Is every GPT model text-only?

No. GPT-4 was introduced as a multimodal model that accepted image and text inputs and produced text outputs.[7] GPT-4o later expanded the idea with text, audio, image, and video inputs and text, audio, and image outputs.[10] Always check the specific model’s supported inputs and outputs.