GPT stands for Generative Pre-trained Transformer. The acronym describes how this kind of AI model works: it generates new output, starts from broad pre-training on large datasets, and uses the Transformer architecture to process relationships between tokens.[1] In everyday use, GPT usually refers to a family of language models, not one single product. ChatGPT is an application built around GPT-style models, while a GPT model is the underlying system that predicts and produces text, code, and sometimes other media. Understanding the acronym helps explain why these systems can answer questions, draft text, summarize documents, and follow prompts.

GPT means Generative Pre-trained Transformer

The phrase is technical, but each word points to a practical part of the system. Generative means the model can create new output. Pre-trained means it first learns broad patterns before it is adapted for a narrower use. Transformer names the neural network architecture that helps the model weigh relationships across a sequence of tokens.[1]

The acronym is easiest to understand if you treat it as a recipe. A GPT model is trained to look at a sequence and predict what should come next. That sequence might be a sentence, a code snippet, a paragraph, or a longer conversation. The model does not retrieve a stored answer in the way a database does. It calculates likely continuations based on patterns learned during training.
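The idea of predicting a continuation from learned patterns can be illustrated with a toy word-pair counter. This is a minimal sketch, not how a GPT model is built: a real model uses a Transformer network over tokens rather than simple counts, and the corpus and function names below are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigram_counts(corpus):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn raw follow-up counts into probabilities for the next word."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}  # the word never appeared with a follower in training
    return {candidate: n / total for candidate, n in followers.items()}


# Hypothetical three-sentence "training set".
toy_corpus = [
    "the cat sat on the mat",
    "the cat chased the mouse",
    "the dog sat on the rug",
]

counts = train_bigram_counts(toy_corpus)
probabilities = next_word_probabilities(counts, "cat")
print(probabilities)  # {'sat': 0.5, 'chased': 0.5}
```

Nothing here looks up a stored answer. The probabilities for the word after "cat" come entirely from patterns counted in the training sentences, which is the same principle at a vastly smaller scale.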

This is why GPT models can feel flexible. The same underlying model can draft an email, explain a math concept, rewrite a paragraph, or produce code. The prompt changes the task, and the model’s learned language patterns give it a way to respond. For a broader overview of the technology behind the acronym, see our guide to what GPT is.

The three parts of the acronym

The three words in GPT are not branding filler. They describe the model’s behavior, training path, and architecture. The table below gives the plain-English meaning.

| Part of GPT | Plain-English meaning | Why it matters |
|---|---|---|
| Generative | The model creates new text or other output instead of only classifying an input. | It can draft, summarize, explain, translate, and answer in natural language. |
| Pre-trained | The model first learns broad statistical patterns from large training data before later adaptation. | It can handle many tasks without being trained from scratch for each one. |
| Transformer | The model uses a Transformer architecture based on attention mechanisms. | It can track relationships among tokens across a prompt more effectively than older sequence methods. |

Generative means it produces output

A generative model makes something. In a text model, that output is usually a string of tokens that becomes words, punctuation, code, or structured data. This is different from a model that only labels an input, such as marking an email as spam or not spam. GPT models can still classify, but they do it by generating an answer.

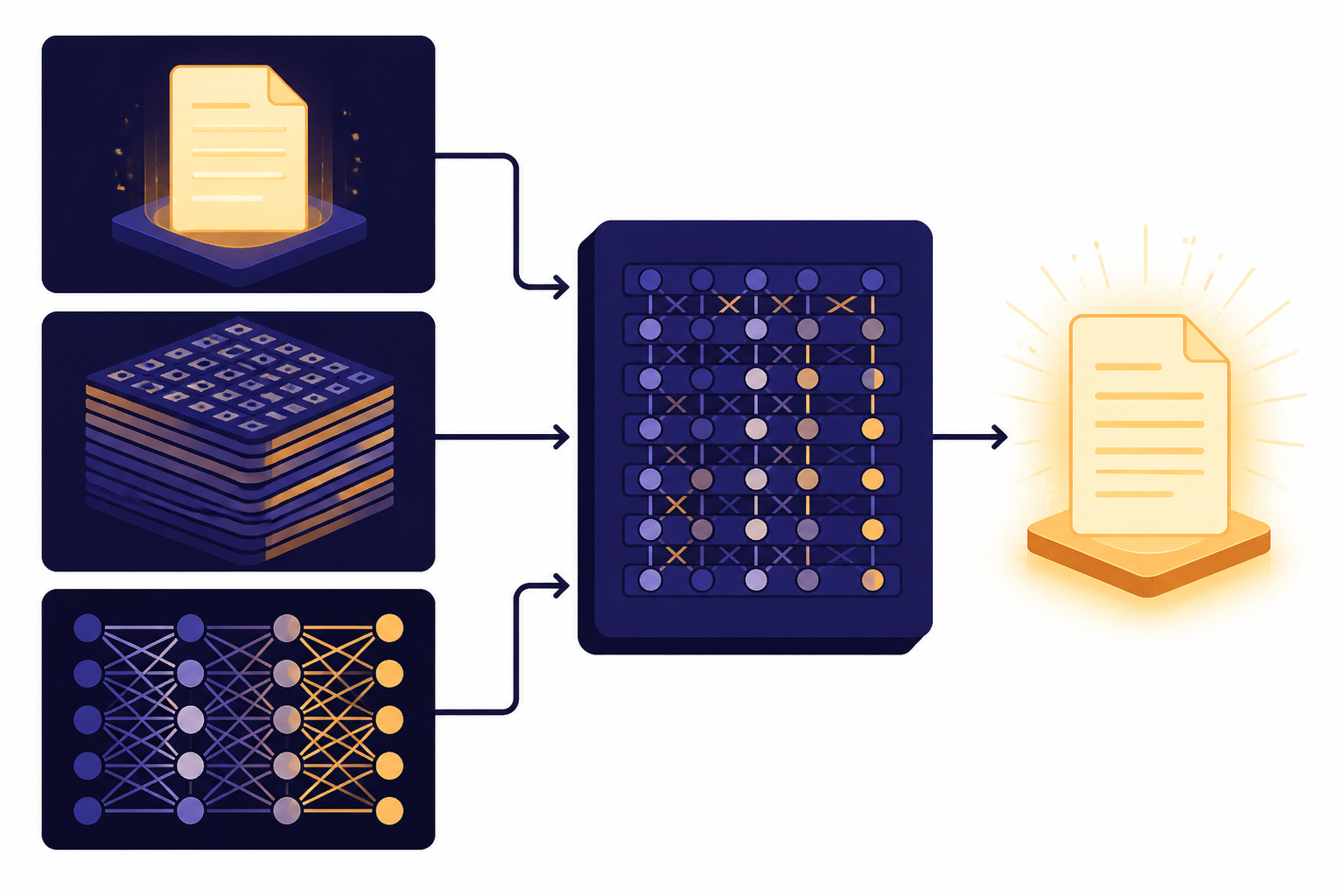

Pre-trained means it starts broad

Pre-training gives the model a broad foundation before it is tuned for specific behavior. OpenAI’s early GPT research described a two-stage approach: first train a Transformer model on a large amount of data using language modeling, then fine-tune it on smaller supervised datasets for specific tasks.[4] That idea explains why a single model can be useful across many prompts.

Transformer means it uses attention

The Transformer architecture came from the 2017 paper Attention Is All You Need. Google Research describes the Transformer as a model that generalized well beyond translation tasks.[3] In practice, attention helps a model decide which parts of the input are most relevant when predicting the next token.
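To make the idea of relevance weighting concrete, here is a minimal sketch of scaled dot-product attention, the core operation described in the Transformer paper, for a single query vector. It is an illustration under simplifying assumptions: the two-dimensional vectors are invented, and a real GPT model adds learned projections, multiple attention heads, and masking of later tokens.

```python
import math


def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for one query over a list of tokens."""
    scale = math.sqrt(len(query))
    # Similarity between the query and each token's key vector.
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # Weighted average of the value vectors.
    dims = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dims)]
    return weights, output


# Hypothetical vectors for three earlier tokens.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [1.0, 0.0]

weights, output = attention(query, keys, values)
print(weights)
print(output)
```

The first and third tokens have keys that align with the query, so they receive larger weights than the second token, and the output leans toward their values.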

GPT is not the same as ChatGPT

GPT and ChatGPT are related, but they are not identical. GPT names a model type or model family. ChatGPT is a product interface where people interact with models in a chat format. OpenAI introduced ChatGPT on November 30, 2022, and described it as a model that interacts conversationally.[10]

A simple analogy helps. GPT is like the engine. ChatGPT is like the car dashboard, controls, and driving experience around that engine. The product adds conversation history, tools, safety systems, file features, voice options, and other user-facing pieces. The model supplies much of the language capability underneath.

| Term | What it refers to | Good way to think about it |
|---|---|---|
| GPT | A Generative Pre-trained Transformer model or model family. | The underlying AI model type. |
| ChatGPT | A chat product made by OpenAI that uses GPT-style models and related systems. | The app or interface people use. |
| Prompt | The instruction or input you give the model. | The task request. |
| Response | The model’s generated output. | The answer, draft, code, or completion. |

If your question is about the full product name, read our separate explanation of what ChatGPT stands for. For a beginner’s overview of the product, start with our guide to what ChatGPT is.

Where the GPT term came from

The GPT acronym grew out of research language, not consumer software language. The word “Transformer” traces to the 2017 Transformer paper from Google Research.[3] OpenAI then used generative pre-training with a Transformer model in its 2018 work on language understanding.[4]

That early research showed a pattern that still matters: train a general language model first, then adapt or prompt it for many downstream tasks. Later GPT models scaled that idea. OpenAI’s GPT-2 work followed in 2019.[5] The GPT-3 paper then described GPT-3 as an autoregressive language model with 175 billion parameters.[6][7]

| Milestone | What happened | Why it matters for the acronym |
|---|---|---|
| 2017 | The Transformer architecture was published in Attention Is All You Need.[3] | It gave GPT the “T.” |
| 2018 | OpenAI published work on improving language understanding with unsupervised learning.[4] | It connected generative pre-training with Transformer-based language models. |
| 2019 | OpenAI published GPT-2 research and a staged release.[5] | It made the GPT naming pattern more visible. |
| 2020 | OpenAI described GPT-3 as a 175 billion-parameter autoregressive language model.[6][7] | It showed how scaling GPT models could broaden task performance. |
| 2022 | OpenAI introduced ChatGPT on November 30, 2022.[10] | It brought GPT-style models into a mainstream chat product. |

Not every later model name reveals its full technical design. For example, OpenAI has not published an official parameter count for GPT-4. The GPT-4 technical report says it does not include details about architecture, model size, hardware, training compute, dataset construction, or similar information.[9]

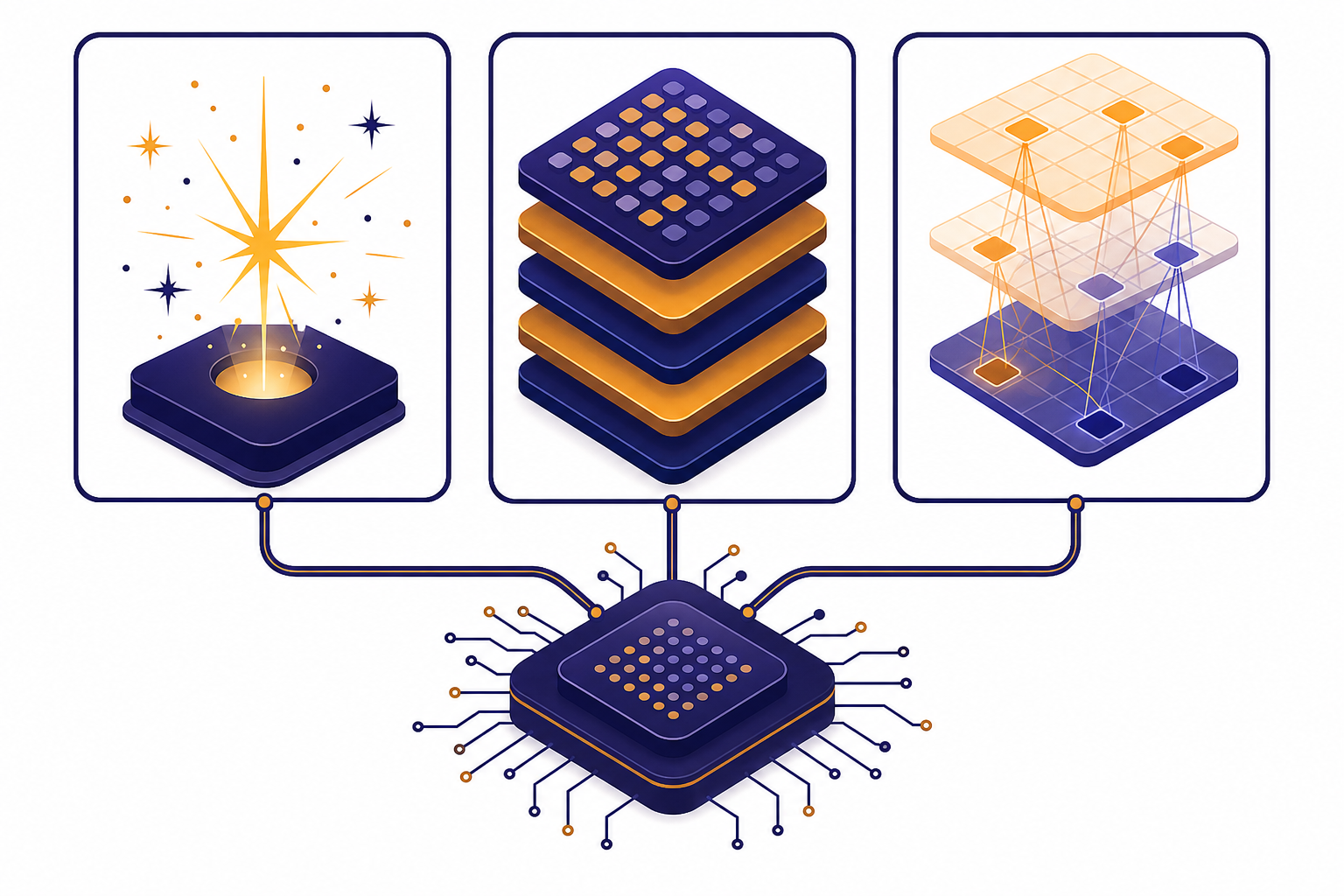

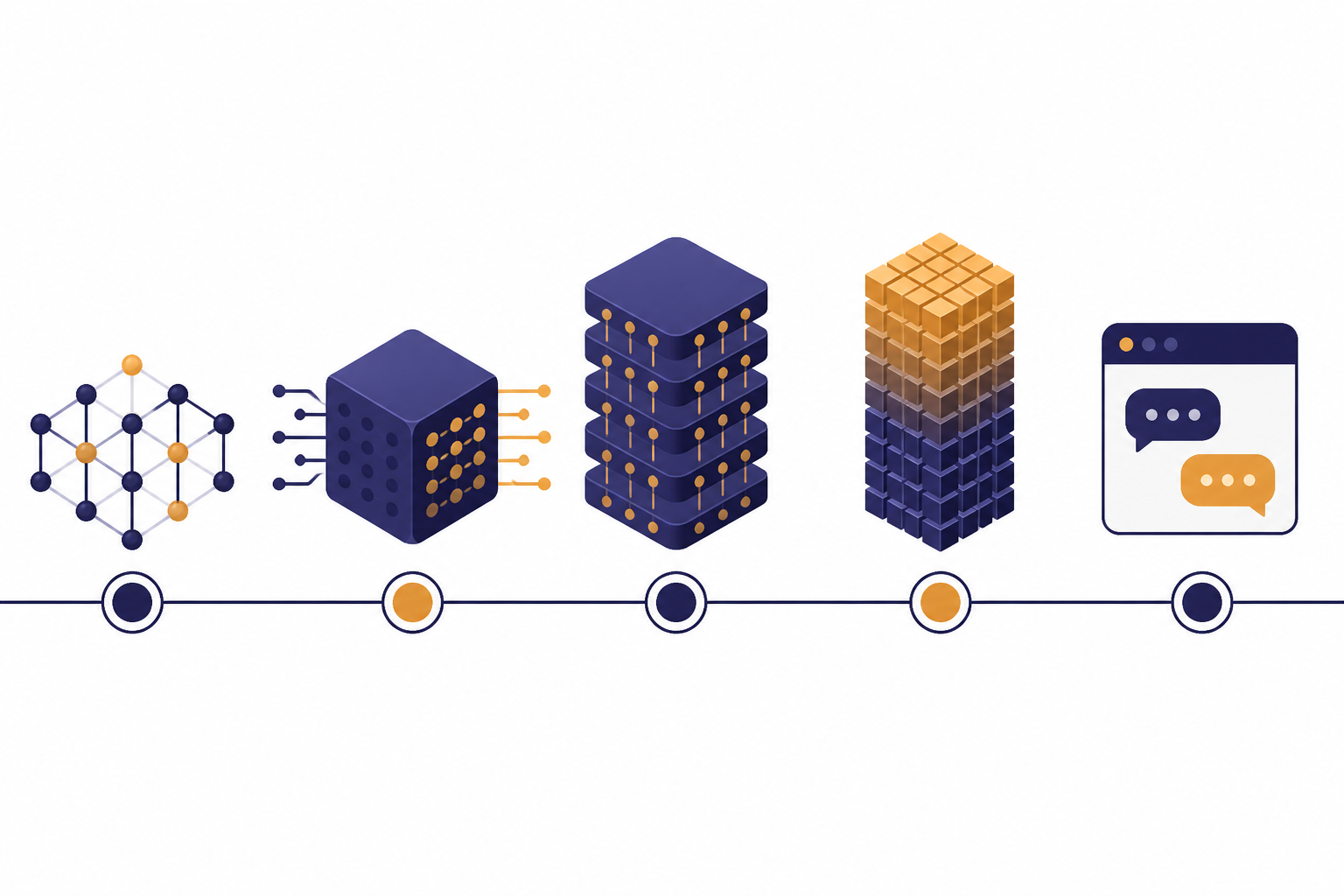

How a GPT model works in plain English

A GPT model works by turning input into tokens, processing those tokens through many learned layers, and generating likely next tokens. OpenAI explains that its models process text using tokens, which can be common character sequences rather than whole words.[11] This is why token limits matter. A model sees a prompt as a token sequence, not as a page the way a person sees it.
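The point that tokens can be character sequences rather than whole words is easier to see with a toy example. The sketch below splits text by greedy longest match against a small, made-up vocabulary. It is an assumption-laden illustration only: production tokenizers learn their vocabularies from data and use different algorithms, and `toy_vocabulary` is hypothetical.

```python
def tokenize(text, vocabulary):
    """Split text into the longest known pieces, left to right."""
    longest = max(len(piece) for piece in vocabulary)
    tokens = []
    position = 0
    while position < len(text):
        # Try the longest candidate first, then shrink.
        for size in range(min(longest, len(text) - position), 0, -1):
            piece = text[position:position + size]
            if piece in vocabulary:
                tokens.append(piece)
                position += size
                break
        else:
            # No known piece matched: emit one character as its own token.
            tokens.append(text[position])
            position += 1
    return tokens


# Hypothetical vocabulary of word fragments.
toy_vocabulary = {"token", "iz", "ation", " ", "un", "believ", "able"}

print(tokenize("tokenization", toy_vocabulary))
# ['token', 'iz', 'ation']
print(len(tokenize("unbelievable tokenization", toy_vocabulary)))
# 7
```

Two words become seven tokens here, which is why a limit counted in tokens does not map neatly onto a word or page count.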

The model then uses the context it has available. The context includes your current prompt and, in a chat product, selected parts of the conversation or attached content. For a deeper explanation of this limit, read our guide to the context window. For the smallest building block, read our guide to tokens in ChatGPT.

The key prediction task is simple to state: given the tokens so far, what token should come next? The answer is not chosen by hand-written rules. It comes from learned probabilities inside the model. When the model repeats this process many times, token by token, the result becomes a paragraph, list, answer, code sample, or other generated output.
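The token-by-token loop can be sketched in a few lines. In this illustration a hand-written score table stands in for the trained network, which is a deliberate simplification: `TOY_LOGITS` is hypothetical and looks only at the previous token, whereas a real GPT model computes scores from the whole context. The loop itself (score, convert to probabilities, pick a token, repeat) is the part that carries over.

```python
import math
import random

# Hypothetical stand-in for a trained model: raw scores for the next token,
# keyed by the previous token only.
TOY_LOGITS = {
    "<start>": {"the": 2.0, "a": 1.0},
    "the": {"cat": 1.5, "dog": 1.2},
    "a": {"cat": 1.0, "dog": 1.0},
    "cat": {"sat": 2.0, "<end>": 0.5},
    "dog": {"sat": 1.0, "<end>": 1.0},
    "sat": {"<end>": 3.0},
}


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits.values())
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def generate(max_tokens=10, greedy=True, seed=0):
    """Produce tokens one at a time until <end> or the length limit."""
    rng = random.Random(seed)
    tokens = ["<start>"]
    for _ in range(max_tokens):
        probabilities = softmax(TOY_LOGITS[tokens[-1]])
        if greedy:
            # Always take the single most likely token.
            next_token = max(probabilities, key=probabilities.get)
        else:
            # Sample in proportion to the probabilities.
            choices = list(probabilities)
            weights = [probabilities[c] for c in choices]
            next_token = rng.choices(choices, weights=weights, k=1)[0]
        if next_token == "<end>":
            break
        tokens.append(next_token)
    return tokens[1:]  # drop the <start> marker


print(" ".join(generate(greedy=True)))  # the cat sat
print(" ".join(generate(greedy=False, seed=42)))
```

Greedy selection always yields the same sentence, while sampling can produce different ones from the same scores. That difference is one reason the same prompt can lead to different responses.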

This also explains a core limitation. A GPT model can produce fluent language without guaranteed truth. It can make a sentence that sounds plausible even when the claim is wrong. That is why high-stakes use needs checking, source review, or a system design that grounds answers in trusted data.

Common mix-ups about GPT

GPT does not mean “general purpose technology”

In AI product discussions, GPT means Generative Pre-trained Transformer.[1] “General purpose technology” is a separate economics phrase. It can describe broad technologies, but it is not what GPT stands for in ChatGPT or OpenAI model names.

GPT is not automatically a chatbot

A GPT model can power a chatbot, but it can also sit behind an API workflow, a writing tool, a code assistant, a search assistant, or an internal document system. The chat interface is one product pattern. The model is the more general capability.

GPT does not guarantee accuracy

The acronym describes architecture and training, not reliability. A GPT model can generate a confident answer that needs verification. Systems such as retrieval-augmented generation can reduce this risk by giving the model relevant source material at answer time.
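The retrieval step can be sketched without any model at all. The example below picks the source that shares the most words with the question and places it in the prompt. It is a rough sketch under stated assumptions: real systems typically use more capable search than word overlap, the function names and prompt wording are hypothetical, and the final call to a model is left out.

```python
import re


def words(text):
    """Lowercase a string and split it into alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = words(question)
    scored = [(len(question_words & words(doc)), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for overlap, doc in scored[:top_k] if overlap > 0]


def build_grounded_prompt(question, documents):
    """Assemble a prompt that includes the retrieved source material."""
    sources = retrieve(question, documents)
    context = "\n".join(f"- {s}" for s in sources) or "- (no matching source found)"
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )


# Hypothetical trusted documents.
toy_documents = [
    "ChatGPT was introduced on November 30, 2022.",
    "The Transformer architecture was published in 2017.",
]

prompt = build_grounded_prompt("When was ChatGPT introduced?", toy_documents)
print(prompt)
```

The model would then generate its answer from a prompt that already contains the relevant source, rather than relying only on patterns learned during training.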

GPT is not the only kind of AI model

GPT-style models are important, but they are only part of the AI landscape. Some systems focus on vision, speech, robotics, recommendation, forecasting, or structured prediction. Many modern products combine several model types behind one interface.

Related concepts worth knowing next

Once you know what GPT stands for, the next useful step is learning the neighboring terms. GPT is one example of generative AI, and most GPT systems are also large language models. Those two labels explain the broader category and the language-focused model class.

Training terms matter too. RLHF explains how human feedback can shape model behavior after pre-training. Fine-tuning explains how a model can be adapted with narrower training examples. These are different from prompting, which changes the instruction at use time rather than changing the model’s learned weights.

Application terms are also useful. RAG describes systems that retrieve relevant information before generating an answer. Prompt engineering describes the skill of writing instructions that get better model behavior. Multimodal AI covers models that work with more than text, such as images, audio, and video.

Finally, the word “agent” has become common in AI tools. An AI agent usually means a system that can take steps toward a goal, use tools, and carry out a workflow with some autonomy. GPT models often provide the language and reasoning layer inside those systems, but the agent includes more than the model alone.

Frequently asked questions

What does GPT stand for in ChatGPT?

GPT stands for Generative Pre-trained Transformer.[1] In ChatGPT, the “Chat” part refers to the conversational interface, while “GPT” points to the model family behind the experience.

Is GPT a model or a company?

GPT is not a company. It is the name of a type of AI model, the Generative Pre-trained Transformer. OpenAI is the company that published influential GPT models and built ChatGPT around GPT-style technology.

Does GPT only generate text?

Early GPT models were mainly language models. Later AI systems can combine text with other inputs and outputs, including images or audio, depending on the product and model. The acronym still comes from the language-model lineage.

Why is it called pre-trained?

It is called pre-trained because the model learns broad patterns before it is adapted for specific tasks or product behavior. OpenAI’s early GPT work described training a Transformer language model first and then fine-tuning it for individual tasks.[4]

What is the Transformer in GPT?

The Transformer is the neural network architecture behind the “T” in GPT. It uses attention mechanisms to process relationships among tokens in a sequence. The architecture traces to the 2017 paper Attention Is All You Need.[3]

Does GPT know facts?

A GPT model can generate factual statements, but it does not know facts the way a database stores records. It predicts likely output from learned patterns and the context it receives. For important claims, check sources or use systems that ground the answer in trusted documents.