Generative AI is artificial intelligence that creates new content from a prompt. It can draft text, answer questions, make images, write code, summarize documents, generate audio, and help shape ideas. The key word is “generate.” Older AI often classified, ranked, predicted, or detected things. Generative AI produces a new output that did not exist in that exact form before. It does this by learning patterns from large amounts of training data, then using those patterns to predict a useful next piece of content. Tools such as ChatGPT made this idea familiar to millions of people after OpenAI introduced ChatGPT on November 30, 2022.[1][4][5]

Simple definition of generative AI

Generative AI is software that creates new material in response to instructions. Those instructions are usually called prompts. A prompt can be a sentence, a question, a document, an image, a voice command, or a mix of inputs.

The output can be practical or creative. You might ask for a polite customer email, a summary of a long report, a Python function, a study plan, a product mockup, or a list of possible names for a new project. The system returns a generated answer rather than pulling one fixed answer from a database.

That distinction matters. A search engine usually helps you find existing pages. A spreadsheet formula calculates from fixed rules. A recommendation system ranks likely choices. Generative AI creates a fresh response based on patterns it learned during training and the context you provide in the prompt. IBM defines generative AI as AI that can create original content such as text, images, video, audio, or software code in response to a user prompt.[1]

Generative AI is not magic and it is not human thought. It does not “know” in the way a person knows. It learns statistical relationships among words, pixels, sounds, code, and other data. When you give it a prompt, it estimates what output would fit the request. That estimate can be useful, fluent, and impressive. It can also be wrong.

If you are learning the broader family of terms, start with what ChatGPT is, what GPT means, and what ChatGPT stands for. Those concepts overlap with generative AI, but they are not identical.

How generative AI works

A generative AI system usually has three broad stages: training, instruction, and generation. Training happens before you use the tool. Instruction happens when you write a prompt. Generation happens when the model produces the answer.

Training teaches patterns

During training, developers feed a model large collections of examples. For a language model, those examples include text. For an image model, they include images and related descriptions. For a multimodal model, they may include more than one kind of input. The model adjusts its internal settings so it can detect patterns in the data. AWS describes generative AI systems as learning complex subject matter such as human language, programming languages, art, chemistry, and biology.[2]

Training does not mean the model stores a neat library of every fact. It means the model learns relationships. In a text model, it learns which words, phrases, structures, and ideas tend to appear together. In an image model, it learns relationships among shapes, colors, textures, and descriptions.
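To make "learning which words tend to appear together" concrete, here is a minimal sketch that counts which word follows which in a tiny made-up corpus. It is only an analogy. The corpus and the `count_next_words` helper are assumptions for illustration, and real models adjust numeric settings inside a neural network rather than keeping a table of counts.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus; real training data is vastly larger.
CORPUS = [
    "the cat sat on the mat",
    "the cat ate the fish",
    "the dog sat on the rug",
]

def count_next_words(sentences):
    """Count how often each word is directly followed by each other word."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        # Pair every word with the word that comes right after it.
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

follows = count_next_words(CORPUS)
print(follows["the"].most_common(1))  # [('cat', 2)]
print(follows["sat"].most_common(1))  # [('on', 2)]
```

Even this toy version shows the idea: nothing stores the sentences as facts, yet the counts capture that "cat" is a likely word after "the" in this corpus.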

Your prompt supplies the task

When you use the model, the prompt tells it what to do. A vague prompt gives the model more room to guess. A specific prompt narrows the task. “Write about onboarding” is broad. “Draft a friendly onboarding email for a new accounting client, under 200 words, with three next steps” gives the model a clearer target.

The prompt is placed into the model’s active working space, often called the context window. That space can include your current message, previous messages, uploaded text, tool results, and system instructions. If you want the deeper version, read our guide to context windows and size limits and our explanation of tokens in ChatGPT.
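A rough sketch can show why a limited working space matters. The example below keeps the system instruction and as many of the newest messages as fit under a limit. Everything here is an assumption for illustration: `fit_to_window` is a hypothetical helper, the word count is a crude stand-in for a real tokenizer, and actual products may trim or summarize context in other ways.

```python
def rough_token_count(text):
    """Crude stand-in for a tokenizer: count whitespace-separated words."""
    return len(text.split())

def fit_to_window(system, messages, limit):
    """Keep the system instruction plus the newest messages that fit in `limit`."""
    budget = limit - rough_token_count(system)
    kept = []
    for message in reversed(messages):  # walk from newest to oldest
        cost = rough_token_count(message)
        if cost > budget:
            break  # this message and everything older is dropped
        kept.append(message)
        budget -= cost
    return [system] + kept[::-1]  # restore chronological order

history = [
    "Please summarize the attached onboarding policy",
    "Here is a short summary of the policy",
    "Now turn it into a friendly email",
]
window = fit_to_window("You are a helpful assistant", history, limit=20)
print(window)
```

With a limit of 20, the oldest message no longer fits, so the model in this sketch would never see it. That is one reason long conversations can "forget" early details.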

The model generates one step at a time

A language model generates text by predicting likely next pieces of text. It repeats that process until it has formed a complete answer. That is why the answer can feel conversational. Because each prediction depends on everything that came before it, wording, order, and small prompt changes can shift the result.
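The step-by-step loop can be sketched with a toy example. The `NEXT_WORD` table below is hand-written and hypothetical. A real language model computes these probabilities with a neural network over a huge vocabulary, but the loop of picking a next piece, appending it, and repeating has the same shape.

```python
import random

# Hypothetical next-word probabilities, written by hand for illustration.
NEXT_WORD = {
    "<start>": {"the": 1.0},
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.7, "slept": 0.3},
    "dog": {"sat": 0.5, "barked": 0.5},
    "sat": {"<end>": 1.0},
    "slept": {"<end>": 1.0},
    "barked": {"<end>": 1.0},
}

def generate(rng=None, max_steps=10):
    """Build a sentence one word at a time.

    With no rng, always take the most likely next word (greedy).
    With an rng, sample in proportion to the probabilities.
    """
    word, output = "<start>", []
    for _ in range(max_steps):
        options = NEXT_WORD[word]
        if rng is None:
            word = max(options, key=options.get)
        else:
            word = rng.choices(list(options), weights=list(options.values()))[0]
        if word == "<end>":
            break
        output.append(word)
    return " ".join(output)

print(generate())                  # greedy choice: the cat sat
print(generate(random.Random(0)))  # sampled; may differ from the greedy result
```

The greedy call always returns the same sentence. The sampled call can return any sentence the table allows, which is a simplified picture of why the same prompt can produce different answers.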

Many modern systems add extra layers around the base model. They may use retrieval to look up outside information, safety classifiers to block harmful requests, tools to run code, or human feedback methods to improve behavior. For those next layers, see RAG explained, RLHF in plain English, and fine-tuning basics.

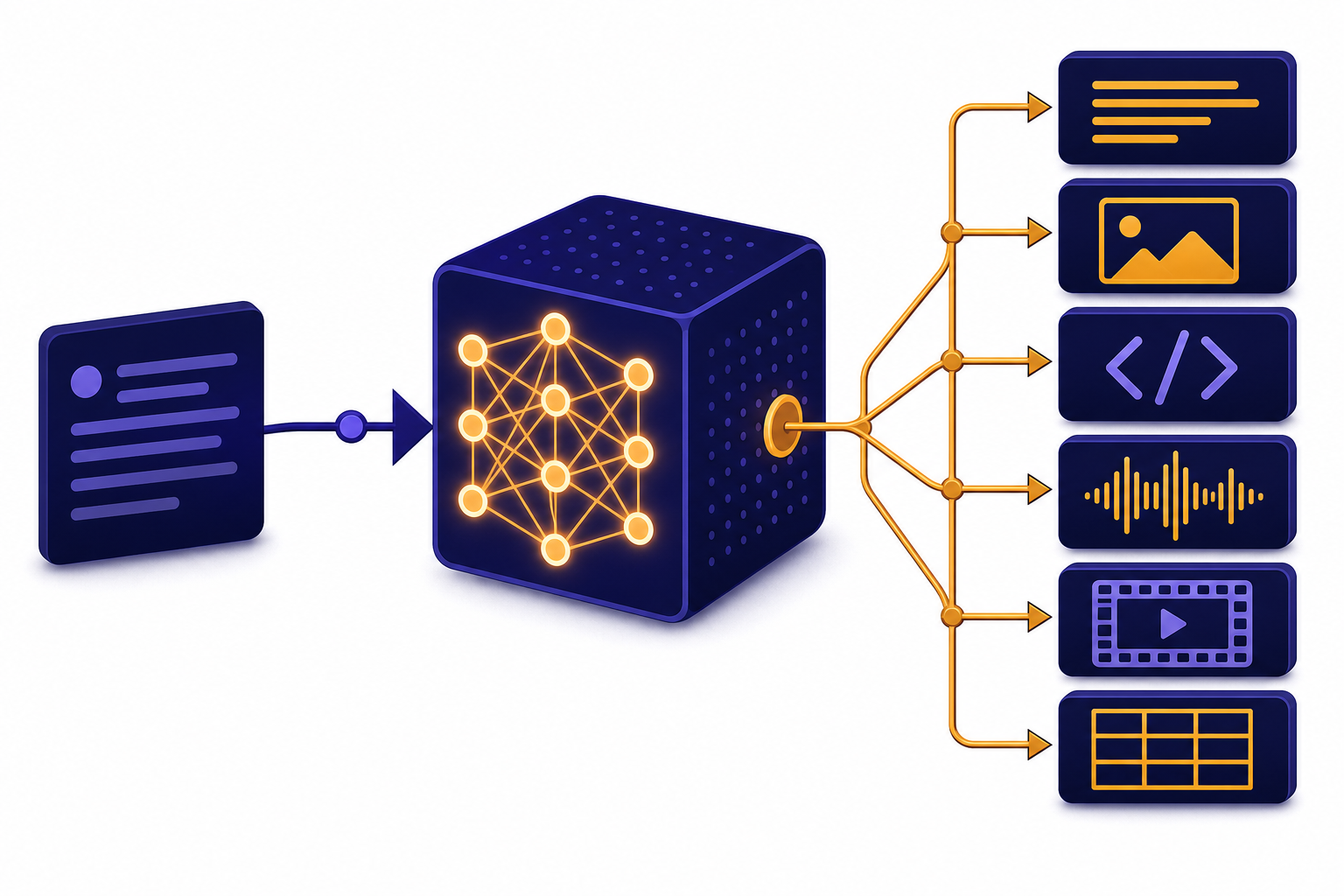

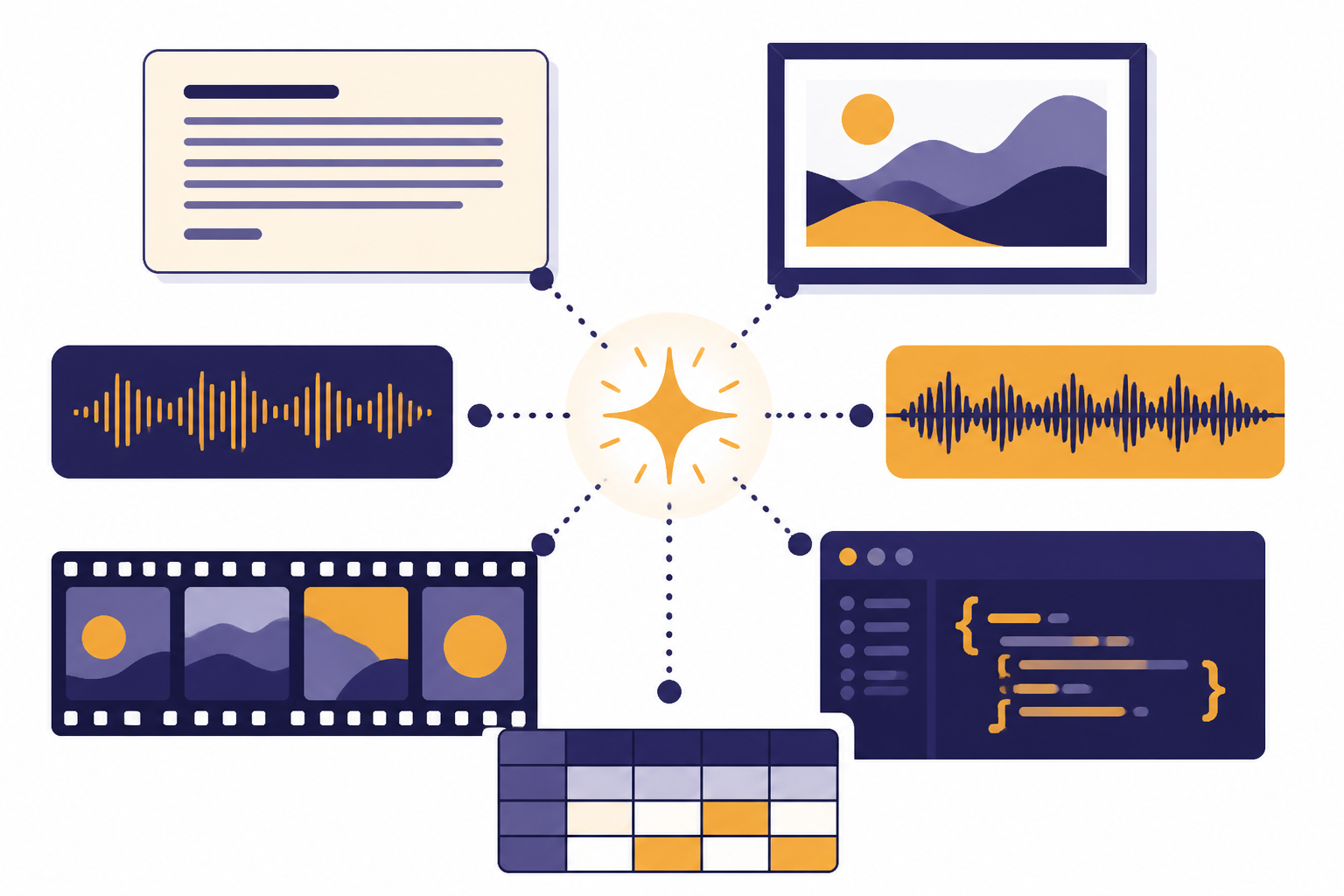

What generative AI can create

Generative AI is not limited to chat. It can produce several kinds of output. IBM lists text, images, video, audio, software code, design, art, simulations, and synthetic data as content areas for generative AI.[1] AWS also describes generative AI as creating conversations, stories, images, videos, and music.[2]

Text is the most familiar output. A model can draft messages, rewrite paragraphs, summarize documents, create outlines, answer questions, and convert rough notes into structured prose. It can also help with tone. For example, it can turn a blunt support reply into a calmer version.

Code generation is another common use. A model can sketch a function, explain an error message, translate logic between languages, or create test cases. Developers still need to review the output. Generated code can contain security problems, outdated library calls, or logic that passes a simple example but fails in production.

Image generation turns written descriptions into pictures or edits existing images. The same general idea applies to audio, video, and design tasks: a user describes the desired output, and the model generates something that fits the description. More advanced systems combine inputs. OpenAI described GPT-4, announced on March 14, 2023, as a large multimodal model that accepts image and text inputs and produces text outputs.[6] For the beginner version, see our guide to multimodal AI.

Generative AI can also create structured material. That includes tables, classification drafts, data-cleaning suggestions, synthetic examples, lesson plans, rubrics, checklists, and workflows. The more structured the request, the easier it is to review the output.

Generative AI vs. other types of AI

Generative AI is one branch of artificial intelligence. It overlaps with machine learning, deep learning, language models, computer vision, and recommendation systems. The easiest way to separate it from other AI is to ask what the system is mainly trying to do.

| Type of AI | Main job | Plain-English example | Typical output |
|---|---|---|---|
| Generative AI | Create new content | Draft a product announcement from bullet points | A new paragraph, image, code file, or audio clip |
| Predictive AI | Estimate what is likely to happen | Predict whether a customer may cancel | A probability, score, or forecast |
| Discriminative AI | Classify or separate inputs | Decide whether an email looks like spam | A label or category |
| Search and retrieval | Find existing information | Return the most relevant policy document | A link, passage, or ranked list |
| Rule-based automation | Follow fixed instructions | Send an alert when inventory drops below a threshold | A triggered action |

The categories often work together. A customer support product might retrieve a help article, use a generative model to draft a reply, use a classifier to detect sentiment, and use rules to decide whether to escalate the ticket. The user may only see one chat box, but several systems can be working behind it.
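The customer support example can be sketched as a small pipeline. Every function below is a hypothetical stand-in: retrieval is a simple word-overlap match, the "generative" step is a fixed template where a real product would call a model, and the classifier is a keyword check. The point is only how the four kinds of system hand work to each other.

```python
import re

# Made-up help articles and sentiment keywords for illustration.
HELP_ARTICLES = {
    "reset password": "Use the 'Forgot password' link on the sign-in page.",
    "update billing": "Open Settings, then Billing, to change your card.",
}
NEGATIVE_WORDS = {"angry", "terrible", "unacceptable"}

def words_in(text):
    """Lowercase the text and return its words as a set, ignoring punctuation."""
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve(ticket):
    """Search and retrieval: pick the article whose topic shares the most words."""
    ticket_words = words_in(ticket)
    best_topic = max(HELP_ARTICLES, key=lambda topic: len(ticket_words & words_in(topic)))
    return HELP_ARTICLES[best_topic]

def draft_reply(article):
    """Generative step, stubbed with a template. A real system would call a model."""
    return f"Thanks for reaching out. {article}"

def classify_sentiment(ticket):
    """Discriminative step: attach a label to the ticket."""
    return "negative" if words_in(ticket) & NEGATIVE_WORDS else "neutral"

def should_escalate(sentiment):
    """Rule-based step: a fixed rule decides whether a human takes over."""
    return sentiment == "negative"

def handle(ticket):
    sentiment = classify_sentiment(ticket)
    return {
        "reply": draft_reply(retrieve(ticket)),
        "sentiment": sentiment,
        "escalate": should_escalate(sentiment),
    }

result = handle("This is unacceptable, I cannot reset my password!")
print(result)
```

The user would see only the drafted reply, while the label and the escalation decision stay behind the scenes.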

Generative AI is strongest when the desired output has room for language, synthesis, examples, explanation, or creative variation. It is weaker when you need guaranteed arithmetic, exact legal interpretation, medical diagnosis, or live facts that the system has not checked. In those cases, it should assist a verified workflow rather than replace one.

Why generative AI makes mistakes

Generative AI can sound confident even when it is wrong. That happens because the model is optimized to produce plausible output, not because it has a built-in guarantee of truth. It may fill gaps with a likely-sounding answer. It may misread your request. It may combine ideas that do not belong together. It may use outdated knowledge if no current source is available.

NIST’s Generative AI Profile was published on July 26, 2024, as a companion to the AI Risk Management Framework, and it focuses on risks that organizations should manage when designing, deploying, or using generative AI systems.[7] The practical lesson for everyday users is simple: treat generated output as a draft until you verify it.

Common failure patterns include fabricated citations, incorrect dates, broken code, missing edge cases, overconfident summaries, biased wording, and privacy leaks caused by pasting sensitive information into the wrong tool. Some outputs are harmless rough drafts. Others can create real damage if copied into contracts, medical advice, financial decisions, security settings, or public statements without review.

Use a higher standard when the stakes are high. Ask the model to show its assumptions. Request sources when factual accuracy matters. Compare the answer against primary documents. Keep private data out unless you know the tool’s data controls. Make a human accountable for the final decision.

Where ChatGPT fits

ChatGPT is a generative AI application. The chat interface is the product you type into. The model is the system that generates the response. OpenAI introduced ChatGPT on November 30, 2022, as a research release designed to gather feedback on its strengths and weaknesses.[4][5]

ChatGPT is not the only generative AI tool. Other companies build chatbots, image generators, coding assistants, document tools, voice systems, and enterprise platforms. The shared idea is generation from prompts. The differences are in the model, interface, available tools, data controls, speed, cost, and safety design.

GPT is one important term inside the ChatGPT world. OpenAI’s API documentation notes that its text generation models are often referred to as generative pre-trained transformers, or GPT models for short.[8] If you want the acronym breakdown, read what does GPT stand for and what does ChatGPT stand for.

Large language models are the core technology behind many text-based generative AI tools. They generate language, explain concepts, write drafts, and transform text. They can be connected to tools that search, calculate, retrieve files, or take actions. Our LLM beginner guide explains that layer in more detail.

How to use generative AI well

Good use starts with the right job. Generative AI is useful for first drafts, explanations, brainstorming, summarization, translation support, code sketches, learning plans, and comparison frameworks. It is less suitable as the only source for facts, the only reviewer for important work, or the final authority in regulated decisions.

Give the model context. Tell it the audience, goal, format, constraints, and source material. Instead of “write a proposal,” say, “Write a one-page proposal for a small accounting firm that wants a client portal. Use a calm professional tone. Include scope, timeline, risks, and next steps.” The second prompt produces a more reviewable answer.

- State the role. Ask for a tutor, editor, analyst, reviewer, or coding assistant.

- Define the audience. A beginner, executive, developer, lawyer, student, or customer needs a different answer.

- Set boundaries. Give length, format, tone, and exclusions.

- Provide source material. Paste the policy, notes, transcript, or requirements you want it to use.

- Ask for uncertainty. Tell the model to mark assumptions and say when it does not know.

- Review the result. Check facts, logic, tone, privacy, and fit before using it.
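The checklist above can be turned into a small reusable template. This is a minimal sketch, not a required format: `build_prompt` and its parameter names are hypothetical, and the exact wording of each line is an assumption you should adapt to your own tool and task.

```python
def build_prompt(task, role=None, audience=None, boundaries=None, source=None):
    """Assemble a prompt from the checklist items; skip any that are not provided."""
    parts = []
    if role:
        parts.append(f"Act as {role}.")
    parts.append(task)
    if audience:
        parts.append(f"Audience: {audience}.")
    if boundaries:
        parts.append(f"Constraints: {boundaries}.")
    if source:
        parts.append(f"Use only this source material:\n{source}")
    # Always ask for uncertainty, as the checklist recommends.
    parts.append("Mark any assumptions and say so when you do not know.")
    return "\n".join(parts)

prompt = build_prompt(
    task="Write a one-page proposal for a client portal.",
    role="an analyst",
    audience="a small accounting firm",
    boundaries="calm professional tone; include scope, timeline, risks, and next steps",
)
print(prompt)
```

The last checklist item, reviewing the result, stays with you. No template can do that step.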

Prompting is a skill, but it is not a secret code. Clear instructions beat clever wording. If the first answer misses, revise the prompt or ask for a different structure. Our prompt engineering guide gives practical patterns for better prompts.

For teams, the bigger question is workflow design. Decide which tasks AI can draft, which tasks require human approval, what data may be entered, what sources count as authoritative, and how errors get reported. A lightweight checklist is better than informal guessing.

Terms to know

Generative AI discussions use a cluster of related terms. These definitions will help you read product pages, model announcements, and AI policies without getting lost.

| Term | Plain-English meaning |
|---|---|
| Prompt | The instruction or input you give the AI system. |
| Model | The trained system that generates, classifies, predicts, or transforms information. |
| Large language model | A model trained to work with language at large scale. |
| Token | A chunk of text the model processes, often a word part rather than a full word. |
| Context window | The active working space the model can use while responding. |
| Multimodal AI | AI that can work with more than one kind of input or output, such as text and images. |
| RAG | Retrieval-augmented generation, a method that adds outside source material before generating an answer. |
| Fine-tuning | Additional training that adapts a model to a narrower task, style, or domain. |
| AI agent | A system that can use tools or steps to pursue a goal, not just return one answer. |
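The idea that a token is "often a word part" is easier to see with a toy example. The sketch below splits words using a small hand-picked vocabulary and a longest-match rule. Both are assumptions for illustration. Real tokenizers learn a much larger vocabulary from data and may split the same words differently.

```python
# Hypothetical, hand-picked vocabulary of word parts.
VOCAB = {"un", "believ", "able", "token", "s"}

def tokenize(word):
    """Split a word into the longest vocabulary pieces, left to right."""
    tokens, i = [], 0
    while i < len(word):
        # Try the longest possible piece first, then shorter ones.
        for j in range(len(word), i, -1):
            if word[i:j] in VOCAB:
                tokens.append(word[i:j])
                i = j
                break
        else:
            # No known piece starts here, so fall back to a single character.
            tokens.append(word[i])
            i += 1
    return tokens

print(tokenize("unbelievable"))  # ['un', 'believ', 'able']
print(tokenize("tokens"))        # ['token', 's']
```

One word became three tokens and another became two, which is why token counts and word counts rarely match.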

Generative AI becomes more understandable when you separate the layers. The model generates. The prompt instructs. The context window limits what the model can see at once. Retrieval can add documents. Fine-tuning can adapt behavior. An agent can connect generation to actions. If you want to go deeper, read what is an AI agent after this article.

Frequently asked questions

Is generative AI the same as ChatGPT?

No. ChatGPT is one generative AI application. Generative AI is the broader category of systems that create new text, images, audio, video, code, or other content from prompts.

Does generative AI copy from the internet?

Not in the simple sense of copying and pasting every answer from a web page. A model learns patterns from training data and then generates new output. Some systems can also retrieve current web or document information, so you should check the tool’s sources and data controls.

Why does generative AI sometimes invent facts?

It generates plausible responses from patterns, and plausible is not the same as true. If the prompt is ambiguous, the needed fact is missing, or the model lacks current information, it may produce a confident wrong answer. Verify important claims against primary sources.

What is the best beginner use for generative AI?

Start with low-risk drafting and learning tasks. Ask it to summarize your own notes, explain a concept at your level, create an outline, or suggest edits to text you already wrote. These uses are easy to review and correct.

Can generative AI replace human workers?

It can automate or speed up parts of many jobs, especially drafting, summarizing, coding assistance, and research preparation. It usually works best as a tool inside a human workflow. People still need to define goals, judge quality, handle exceptions, and take responsibility for final decisions.

Is generative AI safe to use with private information?

It depends on the tool, account settings, organization policy, and data handling terms. Do not paste secrets, personal data, contracts, source code, or regulated information into a tool unless you know how that data is stored and used. When in doubt, remove sensitive details first.