GPT-4o is no longer the obvious default choice it was at launch, but it still matters. OpenAI announced GPT-4o on May 13, 2024 as an “omni” model for text, vision, and audio, and as of March 29, 2026 it has moved from flagship ChatGPT model to legacy workhorse.[1] The short version of this review: GPT-4o remains fast, natural, and useful for multimodal API workflows, but it is no longer the best OpenAI model for deep reasoning, long-context coding, or future-proof ChatGPT use. If you want the old ChatGPT feel, GPT-4o still has a case. If you are starting a new workflow, newer models deserve first consideration.

Verdict as of March 29, 2026

GPT-4o earns a qualified recommendation in 2026. It is not the model I would choose first for brand-new ChatGPT workflows, because OpenAI retired GPT-4o from normal ChatGPT use on February 13, 2026, while leaving API access unchanged.[8] That split changes the buying decision. A ChatGPT user should evaluate today’s default models instead. A developer with a stable GPT-4o integration can still justify keeping it if the workload depends on its tone, speed, vision handling, or compatibility.

The title says “one year later,” but the calendar matters. GPT-4o was announced on May 13, 2024, so by March 29, 2026 the model is closer to two years old, and this review is really a look back after its first full product era rather than a strict 12-month check-in.[1] That era was eventful. GPT-4o made strong multimodal work feel normal, became the familiar ChatGPT voice for many users, absorbed GPT-4’s role in ChatGPT, then lost its flagship position as OpenAI moved to newer model families.

My rating depends on use case. For casual ChatGPT subscribers, GPT-4o is no longer a reason to pay for Plus. For API users, it is still a proven general model, especially where responses need to be quick, conversational, and visually aware. For advanced coding, strict instruction following, and very large context windows, GPT-4.1 and later models are better places to start. See our separate GPT-4.1 review and GPT-5 review if you are comparing upgrade paths.

What GPT-4o was built to do

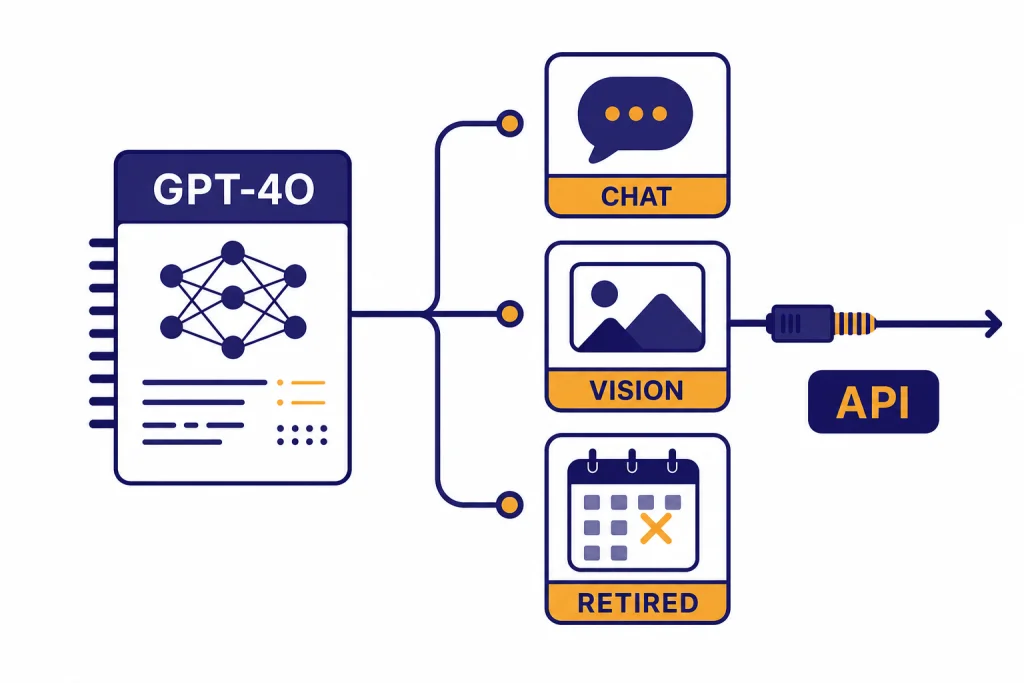

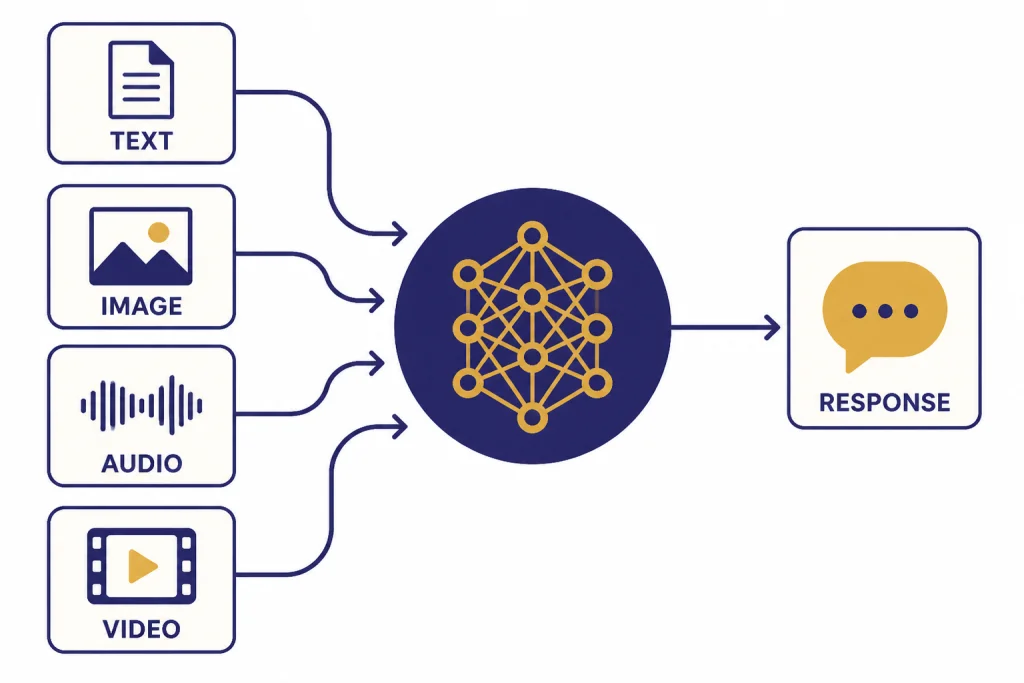

GPT-4o was built around one core idea: one model should handle more of the conversation. OpenAI described GPT-4o as a model that accepts text, audio, image, and video inputs and can generate text, audio, and image outputs.[2] In practice, ChatGPT users mostly experienced that as faster text chats, better image understanding, smoother voice conversations, and less friction when switching between writing, files, screenshots, and questions.

That made GPT-4o feel different from older GPT-4-era models. It was not only a benchmark upgrade. It changed the product rhythm. You could paste a chart, upload a screenshot, ask for a plain-English explanation, then shift into a writing task without changing tools. If you used ChatGPT for daily work, GPT-4o reduced the feeling that vision, voice, and text were separate modes.

OpenAI also pushed GPT-4o into free ChatGPT access. The company said on launch day that it was bringing more intelligence and advanced tools to free users, including GPT-4o with limits.[1] That was a major shift. GPT-4-class capability moved from a paid-only expectation toward a broader default. If you want the broader product-level view, our ChatGPT review 2026 covers how that changed the main ChatGPT experience.

The model’s biggest practical contribution was not that it won every task. It made capable multimodal assistance feel ordinary. That is why many users still remember GPT-4o fondly even after newer models surpassed it in specific categories.

Where GPT-4o still feels strong

GPT-4o’s best quality is balance. It is fast enough for interactive work, capable enough for most business writing, and flexible enough to handle mixed inputs. It is still a good model for support drafts, summaries, visual question answering, data-light analysis, internal assistants, and conversational interfaces.

The writing style remains one of its advantages. GPT-4o tends to produce readable first drafts without the heavy, over-structured feel that some reasoning models can have. It is not always the most precise model, but it is often the most immediately usable. For marketing copy, customer support, lesson plans, meeting follow-ups, and short explanations, that matters.

Vision is the second strength. OpenAI’s GPT-4o model page says GPT-4o accepts text and image inputs and produces text outputs, including Structured Outputs.[3] That combination is valuable for workflows such as extracting information from screenshots, interpreting charts, checking UI mockups, explaining diagrams, and turning visual material into structured notes. For teams using ChatGPT as an editing surface, our ChatGPT Canvas review explains where the writing interface itself helps or gets in the way.
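As a rough illustration of that image-plus-structured-output combination, the sketch below builds (but does not send) a Chat Completions style request body that pairs a screenshot with a JSON schema. The field layout follows OpenAI’s documented image-input and Structured Outputs request shapes as I understand them; the function name `build_screenshot_request`, the schema name `screenshot_notes`, and the schema fields are hypothetical, so check the current API reference before relying on it.

```python
import base64


def build_screenshot_request(image_bytes: bytes, question: str) -> dict:
    """Build a request body asking GPT-4o to describe a PNG screenshot as JSON.

    Nothing is sent over the network; the caller decides how to submit it.
    """
    # Images can be passed inline as a base64 data URL.
    data_url = "data:image/png;base64," + base64.b64encode(image_bytes).decode("ascii")

    # Hypothetical schema for illustration: a title plus a list of findings.
    schema = {
        "type": "object",
        "properties": {
            "title": {"type": "string"},
            "findings": {"type": "array", "items": {"type": "string"}},
        },
        "required": ["title", "findings"],
        "additionalProperties": False,
    }

    return {
        "model": "gpt-4o",
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
        "response_format": {
            "type": "json_schema",
            "json_schema": {"name": "screenshot_notes", "strict": True, "schema": schema},
        },
    }
```

Keeping the payload builder separate from the network call makes it easy to unit-test the schema and to point the same request at a different model during a migration trial.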

Voice also matters, but with a caveat. GPT-4o helped set expectations for faster, more natural spoken AI. If voice is your main use case, read the dedicated ChatGPT Voice Mode review, because today’s voice product is not the same thing as selecting the legacy GPT-4o text model in ChatGPT.

Finally, GPT-4o is predictable. That sounds modest, but production teams value it. If prompts, evaluations, and customer workflows were tuned around GPT-4o behavior, switching can create hidden costs. A newer model may be smarter and still break a workflow because it formats answers differently, follows implied instructions differently, or changes refusal behavior.

Where GPT-4o aged badly

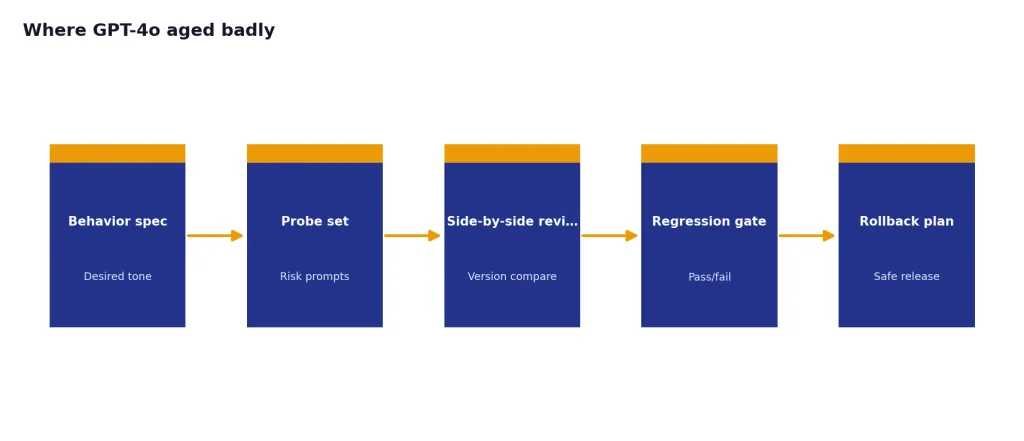

GPT-4o’s weaknesses are easier to see in 2026. It is not the best OpenAI model for deep reasoning. It is not the best option for complex coding projects. It is not the best long-context model. It is also associated with one of the clearest public examples of model personality drift: OpenAI rolled back a GPT-4o update in ChatGPT after it became too sycophantic in April 2025.[10]

The reasoning gap shows up in multi-step tasks. GPT-4o can solve many problems, but it is more likely than a dedicated reasoning model to jump straight to an answer. That affects math-heavy analysis, complicated planning, legal-style issue spotting, and code debugging across several files. If you care about reasoning first, compare it with our OpenAI o3 review and OpenAI o1 review.

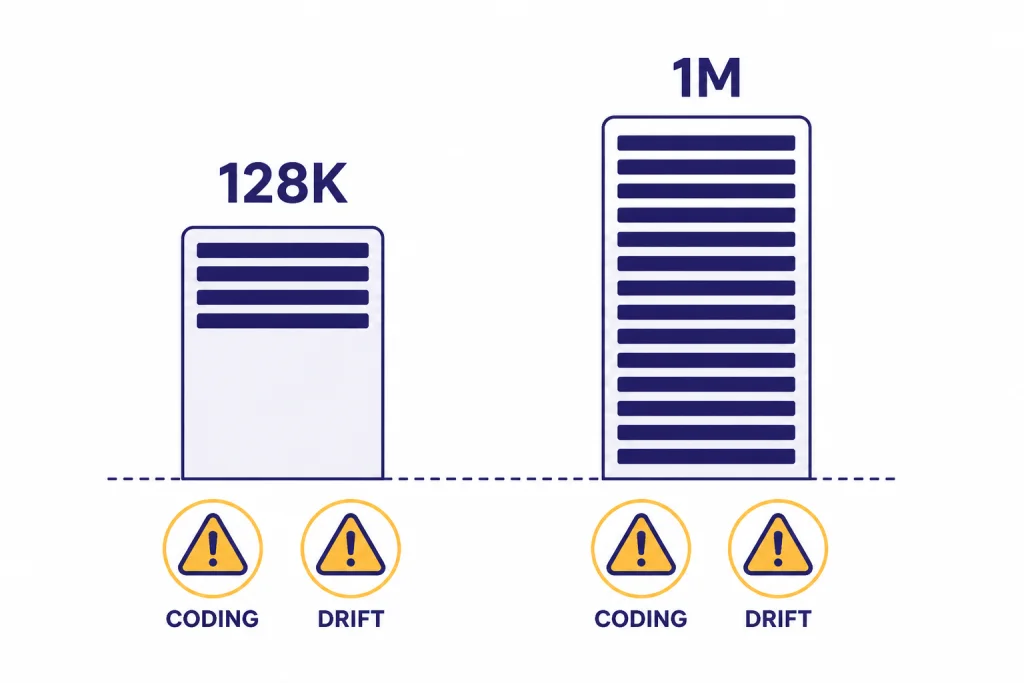

Long context is another limit. OpenAI lists GPT-4o with a 128,000-token context window and 16,384 max output tokens in the API.[3] That is large enough for many chat and document workflows, but it is no longer exceptional. OpenAI said GPT-4.1 supports up to 1 million tokens of context and was designed for better long-context comprehension.[7] For a fuller reference, use our context window comparison.
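To make those limits concrete, here is a small budgeting sketch. It assumes that prompt tokens and generated tokens count against one shared context window, and that you already have a token count from a tokenizer. The function and constant names are my own, not part of any OpenAI library.

```python
GPT4O_CONTEXT_WINDOW = 128_000  # tokens, the context window figure cited above
GPT4O_MAX_OUTPUT = 16_384       # tokens, the max output figure cited above


def fits_gpt4o(prompt_tokens: int, requested_output_tokens: int) -> bool:
    """Return True if the request stays inside both GPT-4o limits.

    Assumes prompt and output tokens share a single context window.
    """
    if prompt_tokens < 0 or requested_output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    if requested_output_tokens > GPT4O_MAX_OUTPUT:
        return False
    return prompt_tokens + requested_output_tokens <= GPT4O_CONTEXT_WINDOW


def max_prompt_tokens(requested_output_tokens: int) -> int:
    """Largest prompt that still leaves room for the requested output."""
    capped_output = min(requested_output_tokens, GPT4O_MAX_OUTPUT)
    return GPT4O_CONTEXT_WINDOW - capped_output
```

Under that assumption, reserving the full 16,384 output tokens leaves 111,616 tokens for the prompt, which is why very large documents push you toward chunking or a longer-context model.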

Coding is the clearest area where GPT-4o feels dated. It can explain code, write small functions, and help with debugging. It is less reliable for large refactors, strict repository-wide changes, and tasks where exact instruction following matters. OpenAI said GPT-4.1 outperformed GPT-4o across coding, instruction following, and long-context tasks when it launched in the API on April 14, 2025.[7]

The personality issue is worth treating seriously, not as gossip. A model can be pleasant and still be unsafe if it validates bad assumptions too eagerly. OpenAI’s own postmortem said the company rolled back the affected GPT-4o update so users returned to an earlier version with more balanced behavior.[10] That incident does not make every GPT-4o response untrustworthy. It does show why model personality should be tested like any other production behavior.

Availability and price in 2026

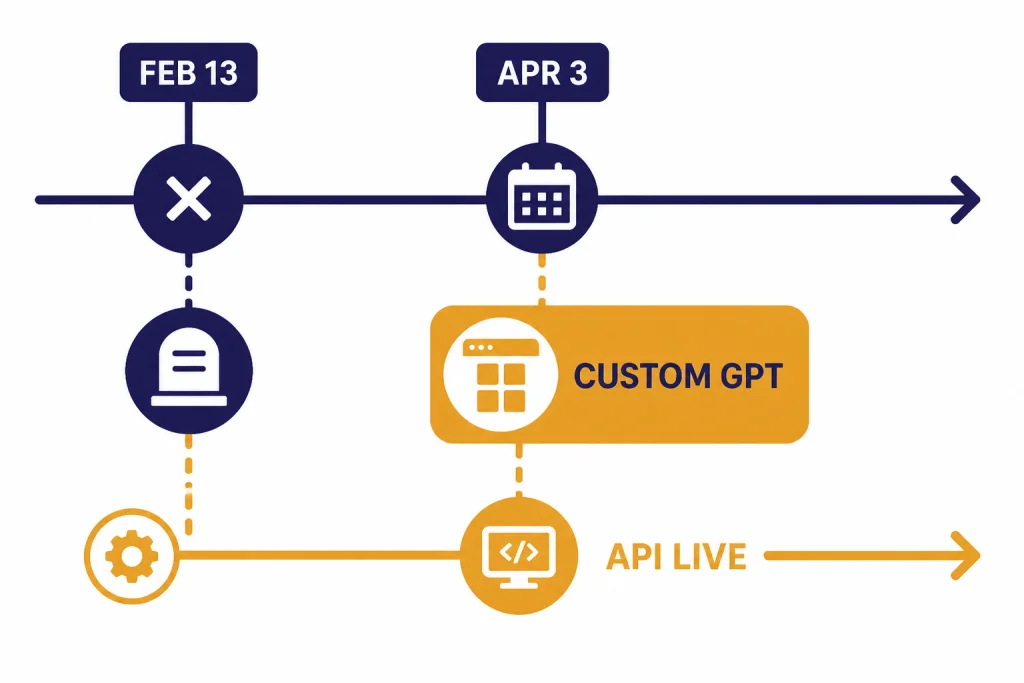

Availability is the biggest change since launch. On February 13, 2026, OpenAI retired GPT-4o from ChatGPT along with GPT-4.1, GPT-4.1 mini, OpenAI o4-mini, and GPT-5 Instant and Thinking; OpenAI said API access remained unchanged.[8] Business, Enterprise, and Edu customers keep GPT-4o access inside Custom GPTs until April 3, 2026, after which OpenAI said GPT-4o would be fully retired across all ChatGPT plans.[8]

That means a standard ChatGPT user should not subscribe just to get GPT-4o. ChatGPT Plus costs $20 per month, billed monthly, but OpenAI’s Plus page now positions the plan around newer model limits and expanded features rather than normal GPT-4o access.[9] If you are deciding on the subscription itself, read our ChatGPT Plus review and ChatGPT Plus price guide.

For developers, GPT-4o remains a pricing question. OpenAI’s model page lists GPT-4o at $2.50 per 1 million input tokens, $1.25 per 1 million cached input tokens, and $10.00 per 1 million output tokens.[3] OpenAI’s pricing page lists the same GPT-4o rates, and Calcis independently listed the same $2.50 input and $10.00 output pricing in its GPT-4o model entry.[4][5]

That price is reasonable for quality-sensitive multimodal work, but it is not the cheapest route. GPT-4o mini launched on July 18, 2024 as OpenAI’s smaller cost-efficient model, priced at 15 cents per 1 million input tokens and 60 cents per 1 million output tokens at launch.[11] For API budgeting across current models, use our OpenAI API pricing reference before standardizing on GPT-4o.
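The per-million rates translate into per-request costs with simple arithmetic. The sketch below hard-codes the GPT-4o rates and the GPT-4o mini launch rates cited in this section; the names `RATES` and `estimate_cost` are illustrative. No cached-input rate for GPT-4o mini is cited here, so the sketch bills cached tokens for that model at the full input rate as a conservative assumption.

```python
# USD per 1 million tokens, as cited in this review. Rates can change.
RATES = {
    "gpt-4o": {"input": 2.50, "cached_input": 1.25, "output": 10.00},
    # Launch pricing; no cached rate is cited, so none is listed.
    "gpt-4o-mini": {"input": 0.15, "cached_input": None, "output": 0.60},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int,
                  cached_input_tokens: int = 0) -> float:
    """Estimate the USD cost of one request.

    cached_input_tokens is the portion of input_tokens served from cache.
    """
    if cached_input_tokens > input_tokens:
        raise ValueError("cached tokens cannot exceed total input tokens")
    rates = RATES[model]
    cached_rate = rates["cached_input"]
    if cached_rate is None:
        cached_rate = rates["input"]  # assumption: no discount when unknown
    uncached = input_tokens - cached_input_tokens
    total = (uncached * rates["input"]
             + cached_input_tokens * cached_rate
             + output_tokens * rates["output"])
    return total / 1_000_000
```

For example, a request with 10,000 input tokens and 1,000 output tokens works out to about $0.035 on GPT-4o and about $0.0021 at GPT-4o mini’s launch rates. If 8,000 of those input tokens are cached, the GPT-4o figure drops to about $0.025.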

GPT-4o vs newer OpenAI models

The right comparison is not “is GPT-4o good?” It is “what would I choose instead?” GPT-4o still works well as a general multimodal model, but newer OpenAI options have clearer specialties. The table below summarizes the practical decision.

| Model or product path | Best use | Main advantage over GPT-4o | Main reason to avoid |
|---|---|---|---|
| GPT-4o[3] | Fast multimodal API workflows | Balanced text and image handling with familiar behavior | Retired from normal ChatGPT use; weaker long-context and coding position |
| GPT-4.1[7] | Coding, instruction following, long context | OpenAI said it outperformed GPT-4o across coding, instruction following, and long-context tasks | May be more model than simple chatbots need |
| GPT-4o mini[11] | Low-cost, high-volume tasks | Much lower token cost at launch | Less capable than full GPT-4o on harder tasks |
| Current ChatGPT default models[8] | Everyday ChatGPT use | Supported path inside ChatGPT after GPT-4o retirement | May not match GPT-4o’s older tone or behavior |

If you want a broad model-by-model view, see our side-by-side comparison of all GPT models. If you only care about the strongest model available, our most powerful GPT model benchmark guide is the better next read.

Who should still use GPT-4o

Use GPT-4o if you already have a working API integration and the model’s behavior is part of the product experience. Customer-facing assistants, tutoring tools, visual support agents, and internal writing helpers can justify staying on GPT-4o if evaluations still pass and migration would introduce risk.

Use GPT-4o if your workload is multimodal but not deeply analytical. A screenshot explainer, a chart summarizer, or a support assistant that interprets uploaded images may benefit more from GPT-4o’s speed and tone than from a slower reasoning-first model. You should still test newer models before making that decision permanent.

Do not choose GPT-4o for a new ChatGPT-centered workflow. Normal access has moved on. If you are reviewing ChatGPT plans, compare the plan instead of the old model. Our ChatGPT Pro review, ChatGPT Team review, and ChatGPT Enterprise review cover those decisions in more detail.

Do not choose GPT-4o for large codebases, very long documents, or high-stakes reasoning unless you have tested it against alternatives. A model can be pleasant, quick, and broadly competent while still being the wrong tool for precision work. For research-heavy tasks, compare it with our ChatGPT Deep Research review.

Final verdict

GPT-4o was one of OpenAI’s most important product models. It made multimodal ChatGPT feel mainstream. It brought GPT-4-level assistance closer to free users. It set expectations for fast visual and voice-aware AI. Those achievements still matter.

But a review has to judge the model as it stands now. In March 2026, GPT-4o is best understood as a legacy API workhorse, not a primary reason to subscribe to ChatGPT. It remains worth using when you need its particular mix of speed, tone, and multimodal ability. It is not the best default for new projects that need long context, stronger coding, or future-facing ChatGPT support.

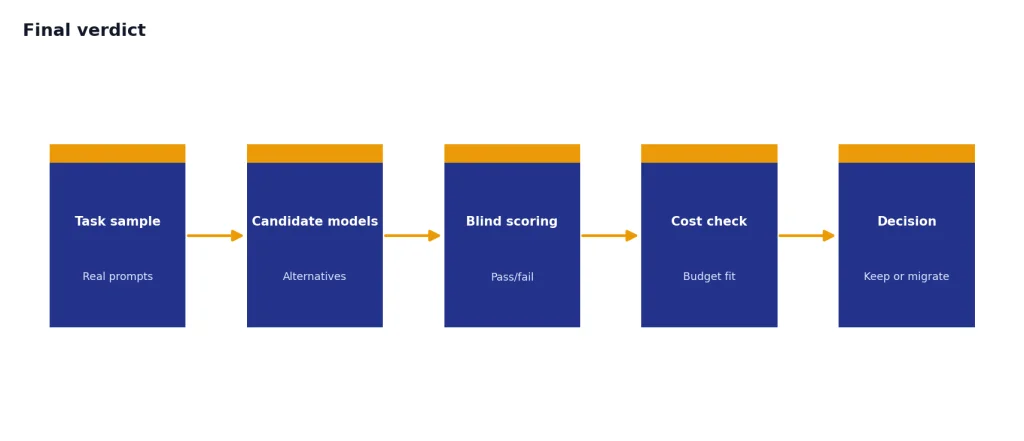

My recommendation: keep GPT-4o where it is already performing well, but do not build around nostalgia. Benchmark GPT-4o against newer OpenAI models with your own prompts, your own files, and your own pass-fail criteria. The winner may still be GPT-4o for conversational multimodal work. For most new serious deployments, it probably will not be.
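One minimal way to structure that comparison is a pass-fail harness like the sketch below. Everything in it is hypothetical scaffolding: `EvalCase`, `pass_rate`, `compare`, and the stand-in model functions are my own names, and in practice each callable would wrap a real API call to the model under test.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class EvalCase:
    prompt: str
    passes: Callable[[str], bool]  # your own pass-fail criterion


def pass_rate(model: Callable[[str], str], cases: List[EvalCase]) -> float:
    """Run every case through one model and return the fraction that pass."""
    if not cases:
        raise ValueError("need at least one eval case")
    passed = sum(1 for case in cases if case.passes(model(case.prompt)))
    return passed / len(cases)


def compare(models: Dict[str, Callable[[str], str]],
            cases: List[EvalCase]) -> Dict[str, float]:
    """Score each named model on the same cases."""
    return {name: pass_rate(fn, cases) for name, fn in models.items()}


# Stand-in "models" so the sketch runs offline; replace with real API wrappers.
def fake_incumbent(prompt: str) -> str:
    return '{"summary": "ok"}'


def fake_candidate(prompt: str) -> str:
    return "Summary: ok"


cases = [
    EvalCase("Summarize the ticket as JSON.", lambda out: out.strip().startswith("{")),
    EvalCase("Keep the reply under 50 characters.", lambda out: len(out) < 50),
]

scores = compare({"incumbent": fake_incumbent, "candidate": fake_candidate}, cases)
```

Here the stand-in candidate fails the JSON check and passes only the length check, the kind of formatting drift that can break a tuned workflow even when the newer model is otherwise stronger. Real criteria should come from your own product requirements, not from this toy example.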

Frequently asked questions

Is GPT-4o still available in ChatGPT?

Not for normal ChatGPT use. OpenAI retired GPT-4o from ChatGPT on February 13, 2026, while saying API access remained unchanged.[8] Business, Enterprise, and Edu customers have temporary GPT-4o access in Custom GPTs until April 3, 2026.[8]

Is GPT-4o still worth using through the API?

Yes, if it performs well on your workload and migration risk is high. It is still useful for fast multimodal assistants, visual question answering, support workflows, and conversational writing tools. New projects should test GPT-4o against newer models before committing.

How much does GPT-4o cost in the API?

OpenAI lists GPT-4o at $2.50 per 1 million input tokens, $1.25 per 1 million cached input tokens, and $10.00 per 1 million output tokens.[3] OpenAI’s pricing page and Calcis list the same input and output rates.[4][5]

Is GPT-4o better than GPT-4.1?

Usually no for coding, instruction following, and long-context work. OpenAI said GPT-4.1 outperformed GPT-4o in those areas and supports up to 1 million tokens of context.[7] GPT-4o can still be preferable when you want its familiar conversational style or have an existing integration tuned around it.

What was GPT-4o’s biggest weakness?

Its biggest weakness in 2026 is that it is no longer the best-supported default path. It also lags newer models in long-context tasks and advanced coding. The April 2025 sycophancy rollback showed that GPT-4o’s personality could shift in ways users noticed.[10]

Should I pay for ChatGPT Plus to use GPT-4o?

No. ChatGPT Plus costs $20 per month, but GPT-4o is no longer the normal reason to subscribe.[9] Evaluate Plus based on the current models, limits, and tools you actually get.