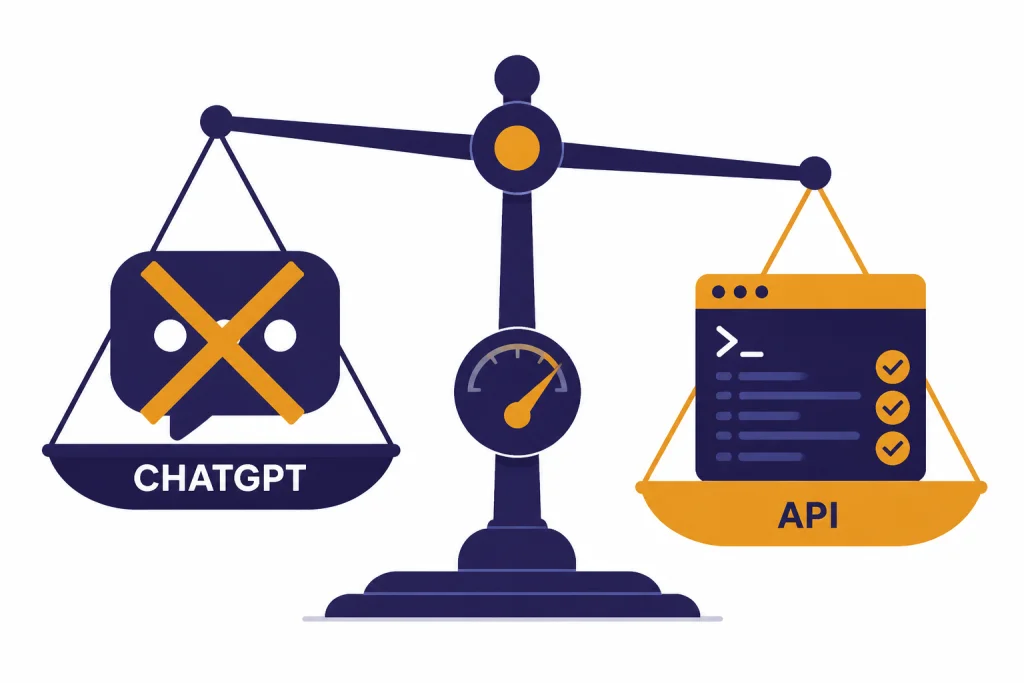

GPT-4.1 is still worth using if you need a dependable API model for coding, strict instruction following, structured outputs, tool calls, and very long prompts. It is not an upgrade option inside ChatGPT, because OpenAI retired GPT-4.1 from ChatGPT on February 13, 2026, while leaving API access unchanged.[5] The model’s best case is practical developer work: refactoring, front-end generation, repository analysis, JSON-constrained workflows, and long-context document handling. Its weak case is top-end reasoning, multimodal conversation, voice, and general ChatGPT use, where newer GPT-5-era options are usually the better starting point.

Verdict: GPT-4.1 is a developer upgrade, not a ChatGPT upgrade

Our verdict is simple. GPT-4.1 is a strong upgrade for API users who want a fast non-reasoning model with better coding behavior, better format control, and a very large context window. It is a poor upgrade target for normal ChatGPT users because it is no longer selectable in ChatGPT as of February 13, 2026.[5]

The best reason to use GPT-4.1 is not raw intelligence. It is control. The model is designed for cases where you already know the shape of the task and need the model to follow the shape precisely. That includes editing code without rewriting unrelated files, obeying a schema, calling tools consistently, and using a large input bundle without demanding a reasoning-model price or latency profile.

The main reason to skip it is also clear. OpenAI’s own GPT-4.1 model page calls it the “smartest non-reasoning model,” but also says OpenAI recommends starting with GPT-5 for complex tasks.[2] If your work depends on difficult planning, mathematical reasoning, deep research, or ambiguous multi-step judgment, GPT-4.1 should not be your first stop. Start with a current reasoning-capable model, then test GPT-4.1 only if cost, speed, or long context matters more.

For readers comparing paid ChatGPT plans, start with our ChatGPT Plus review, ChatGPT Pro review, and broader ChatGPT review 2026. GPT-4.1 now matters most as an API decision, not a subscription-plan decision.

What GPT-4.1 is

GPT-4.1 is a model family OpenAI introduced in the API on April 14, 2025, with three members: GPT-4.1, GPT-4.1 mini, and GPT-4.1 nano.[1] OpenAI positioned the family around coding, instruction following, and long-context comprehension rather than frontier reasoning.[1]

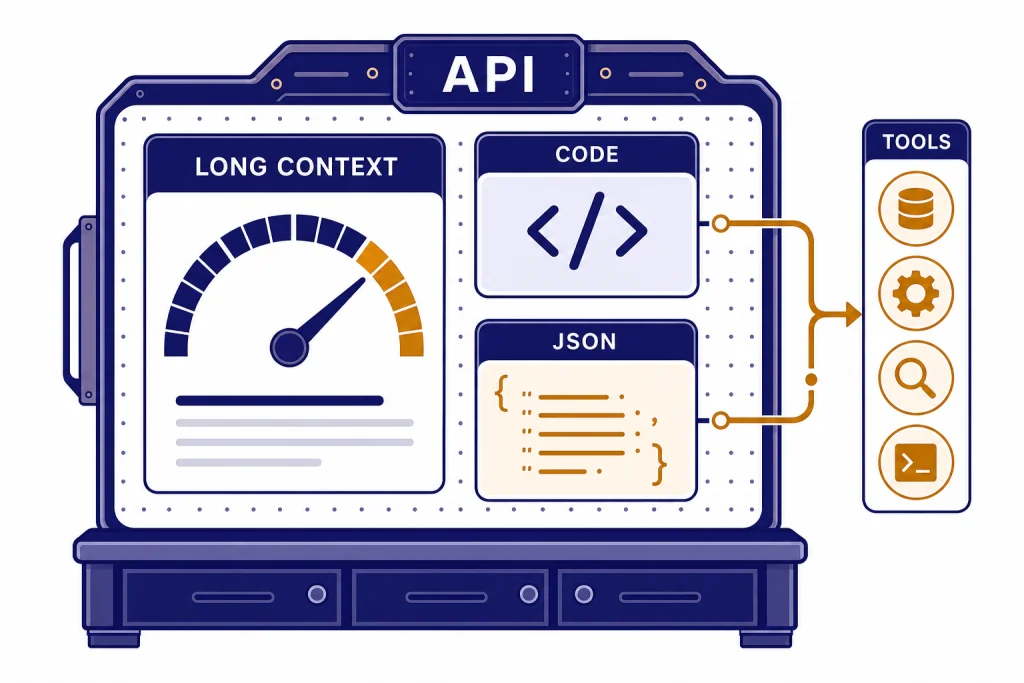

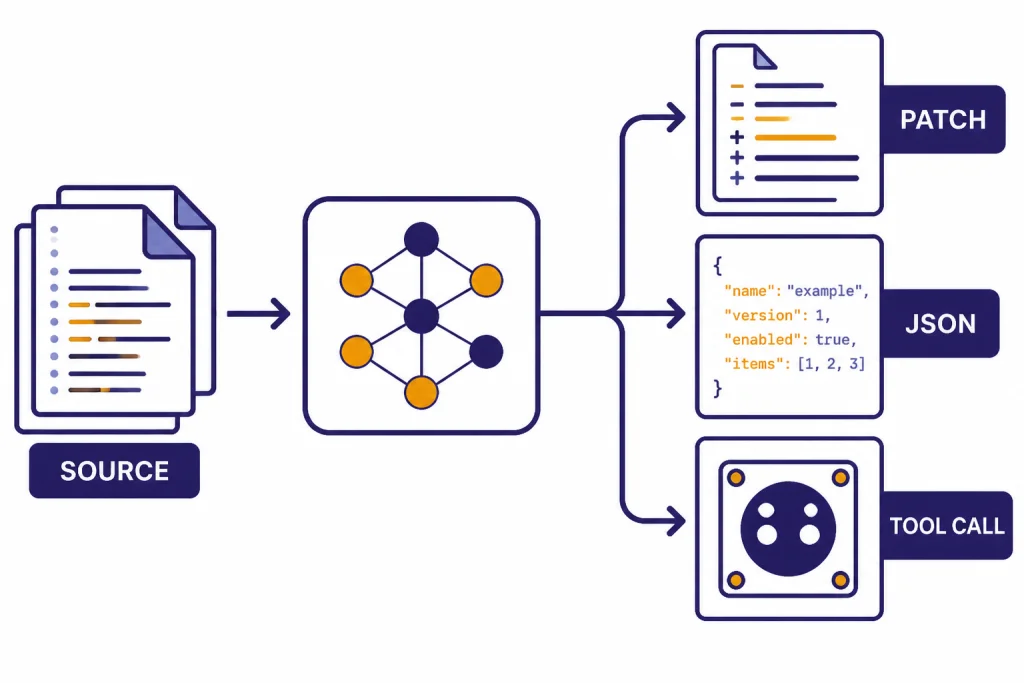

The full GPT-4.1 model supports a 1,047,576-token context window and up to 32,768 output tokens, according to OpenAI’s model documentation.[2] It accepts text and image input and produces text output; its model page lists no audio or video support.[2] It works through OpenAI’s Chat Completions, Responses, Realtime, Assistants, and Batch endpoints, with features including streaming, function calling, structured outputs, fine-tuning, and distillation.[2]

That combination makes GPT-4.1 feel less like a general chatbot personality and more like a production component. It is useful when you want the model to sit inside a pipeline. You send a large document, repository, transcript, or mixed bundle of records. You ask for a constrained transformation. You expect predictable output.

OpenAI originally said many GPT-4.1 improvements in instruction following, coding, and intelligence were being incorporated into GPT-4o in ChatGPT.[1] Later, OpenAI made GPT-4.1 available to paid ChatGPT users on May 14, 2025, through the “more models” dropdown, with the same rate limits as GPT-4o for paid users.[4] That ChatGPT access did not last. OpenAI announced that GPT-4.1 would be retired from ChatGPT on February 13, 2026, with no API changes at that time.[5]

Pricing and access

GPT-4.1 is priced for API usage, not as a separate ChatGPT subscription. OpenAI lists GPT-4.1 text pricing at $2.00 per 1 million input tokens, $0.50 per 1 million cached input tokens, and $8.00 per 1 million output tokens.[3] The GPT-4.1 mini price is $0.40 per 1 million input tokens, $0.10 per 1 million cached input tokens, and $1.60 per 1 million output tokens.[1] The GPT-4.1 nano price is $0.10 per 1 million input tokens, $0.025 per 1 million cached input tokens, and $0.40 per 1 million output tokens.[1]

OpenAI also said the GPT-4.1 family is available through the Batch API at an additional 50% pricing discount.[1] That matters if your workload can run asynchronously, such as nightly document processing, test generation, metadata extraction, or codebase classification. If your users are waiting in a live interface, Batch pricing is less relevant.

| Model | Best use | Input price | Cached input price | Output price | Context window |
|---|---|---|---|---|---|
| GPT-4.1 | Higher-quality coding, structured workflows, long-context analysis | $2.00 / 1M tokens | $0.50 / 1M tokens | $8.00 / 1M tokens | 1,047,576 tokens |
| GPT-4.1 mini | Lower-cost coding help, extraction, classification, everyday app tasks | $0.40 / 1M tokens | $0.10 / 1M tokens | $1.60 / 1M tokens | 1M-token class |
| GPT-4.1 nano | Cheap routing, tagging, simple transformations, high-volume background tasks | $0.10 / 1M tokens | $0.025 / 1M tokens | $0.40 / 1M tokens | 1M-token class |
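To see how these rates combine on a single request, here is a minimal Python sketch of a cost estimate. The per-token prices are copied from the table above and the model IDs are OpenAI's API names; the `estimate_cost` helper is hypothetical, and treating the Batch API discount as a flat 50% on every line item (including cached input) is our assumption, so check OpenAI's pricing page before relying on it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """USD per 1M tokens, copied from the pricing table in this article."""
    input_rate: float
    cached_rate: float
    output_rate: float


PRICES = {
    "gpt-4.1": Price(2.00, 0.50, 8.00),
    "gpt-4.1-mini": Price(0.40, 0.10, 1.60),
    "gpt-4.1-nano": Price(0.10, 0.025, 0.40),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int,
                  cached_tokens: int = 0, batch: bool = False) -> float:
    """Estimate the USD cost of one request.

    cached_tokens is the part of input_tokens billed at the cached rate.
    Assumption: Batch pricing is a flat 50% discount on every line item.
    """
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    p = PRICES[model]
    uncached = input_tokens - cached_tokens
    cost = (uncached * p.input_rate
            + cached_tokens * p.cached_rate
            + output_tokens * p.output_rate) / 1_000_000
    return cost * 0.5 if batch else cost
```

For example, a request with 200,000 input tokens (150,000 of them cached) and a 4,000-token answer on `gpt-4.1` comes to about $0.21 at standard rates, and about $0.10 through Batch if the flat-discount assumption holds.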

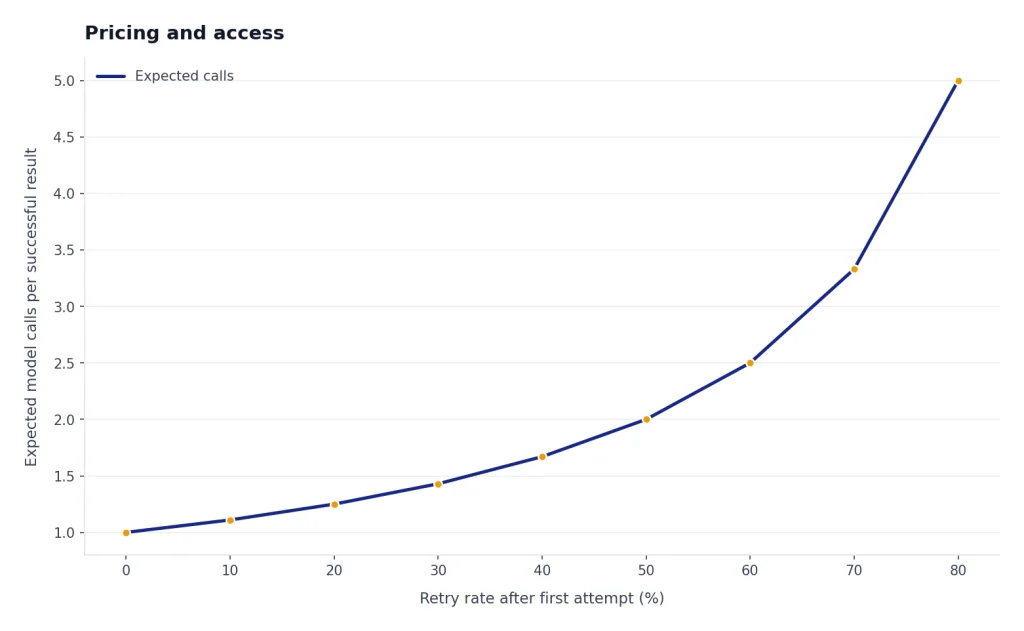

For API buyers, the practical question is not whether GPT-4.1 is cheap in isolation. It is whether it reduces total system cost. A model that follows schemas cleanly can lower retry rates. A model with a large context window can reduce retrieval complexity for some workloads. A faster non-reasoning model can also reduce user-facing latency. Those gains can matter more than the headline token price.
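The retry argument can be made concrete with one formula. Assuming each attempt succeeds independently with the same first-pass probability p, and failed attempts are simply retried, the expected number of attempts is 1/p, so the expected cost per valid result is the per-attempt cost divided by p. The sketch below applies that; the per-attempt costs and success rates are hypothetical placeholders, not measurements of any model.

```python
def cost_per_success(cost_per_attempt: float, success_rate: float) -> float:
    """Expected spend per valid result when failed attempts are retried.

    Assumes independent attempts with a constant success probability, so the
    number of attempts is geometric with mean 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_attempt / success_rate


# Hypothetical numbers for illustration only: a cheaper model that often
# returns malformed output vs. a pricier model that usually gets it right.
cheap = cost_per_success(cost_per_attempt=0.010, success_rate=0.40)
strict = cost_per_success(cost_per_attempt=0.020, success_rate=0.95)
print(f"cheap: ${cheap:.4f}  strict: ${strict:.4f}")
```

With these made-up inputs, the pricier model costs about $0.021 per valid result against $0.025 for the cheaper one. The model ignores engineering time and assumes failures can be detected automatically, which is what a schema or test check provides.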

If your main concern is comparing API costs across models, use our OpenAI API pricing breakdown. If your concern is whether a consumer subscription is enough, compare it with the ChatGPT Plus price in 2026 guide.

Where GPT-4.1 is strongest

Coding and code editing

OpenAI reported that GPT-4.1 scored 54.6% on SWE-bench Verified, a coding benchmark, and described that as a 21.4 percentage-point absolute improvement over GPT-4o and a 26.6 percentage-point absolute improvement over GPT-4.5.[1] Benchmarks are not product guarantees, but this aligns with the model’s practical niche: coding workflows where the model must make targeted changes without drifting from the request.

In actual developer use, GPT-4.1 is most attractive for well-scoped engineering tasks. Ask it to modify a React component. Ask it to convert a Python function to TypeScript. Ask it to explain a failing unit test. Ask it to generate a migration script from a clear schema diff. It performs best when the prompt contains the source material, the constraints, and the output format.

Instruction following and structured output

OpenAI reported that GPT-4.1 scored 38.3% on Scale’s MultiChallenge instruction-following benchmark, which it described as a 10.5 percentage-point absolute increase over GPT-4o.[1] This is one of the strongest reasons to consider GPT-4.1 for production apps. Many API failures are not failures of “intelligence.” They are failures of compliance with a format, role, order of operations, or tool policy.

If your workflow needs valid JSON, stable headings, exact column names, or a repeatable function-call sequence, GPT-4.1 deserves a test. It is also a sensible candidate for extraction tasks where you want the same fields returned every time. For more hands-on API experimentation, see our OpenAI Playground review, because the Playground is often the fastest place to compare prompts and model settings before writing code.
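One way to check “the same fields every time” is to validate each response locally, whichever model produced it. The sketch below builds a JSON Schema in the shape that, as far as we know, OpenAI’s Chat Completions structured-outputs parameter expects (`type: "json_schema"` with `strict: True`; confirm against the current API reference). It makes no API call; it adds a small standard-library check for missing fields, unexpected fields, and wrong types. The ticket fields and the `validate_output` helper are hypothetical.

```python
import json

# Hypothetical extraction schema for a support-ticket triage task.
TICKET_SCHEMA = {
    "type": "object",
    "properties": {
        "category": {"type": "string"},
        "priority": {"type": "integer"},
        "needs_human": {"type": "boolean"},
    },
    "required": ["category", "priority", "needs_human"],
    "additionalProperties": False,
}

# Request-parameter shape for structured outputs in Chat Completions
# (verify against OpenAI's docs; this sketch only builds the dict).
RESPONSE_FORMAT = {
    "type": "json_schema",
    "json_schema": {"name": "ticket", "strict": True, "schema": TICKET_SCHEMA},
}

_JSON_TYPES = {"string": str, "integer": int, "boolean": bool}


def validate_output(raw: str, schema: dict = TICKET_SCHEMA) -> list[str]:
    """Return a list of problems; an empty list means the output passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    problems = []
    props = schema["properties"]
    for name in schema["required"]:
        if name not in data:
            problems.append(f"missing field: {name}")
    for name, value in data.items():
        if name not in props:
            problems.append(f"unexpected field: {name}")
            continue
        expected = _JSON_TYPES[props[name]["type"]]
        # bool is a subclass of int in Python, so reject it for integer fields.
        if not isinstance(value, expected) or (
            expected is int and isinstance(value, bool)
        ):
            problems.append(f"wrong type for {name}")
    return problems
```

Counting how often this list comes back non-empty gives you a valid-output rate you can compare across models and prompt versions.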

Long-context input

The full GPT-4.1 model has a documented 1,047,576-token context window.[2] This changes the shape of some workflows. You can place more source material directly in the prompt, which can simplify prototypes and reduce the need for a retrieval layer in smaller internal tools.

Do not treat the large context window as a license to dump everything into the model. Long prompts still cost money. Long prompts can hide contradictory instructions. Long prompts can also make evaluation harder because a correct answer may depend on one buried sentence. GPT-4.1 is strongest when the long context is organized, labeled, and scoped.
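Here is a minimal sketch of what “organized, labeled, and scoped” can look like in practice. Instructions appear once, outside the documents; each source gets an explicit label and delimiter; and a rough size check runs before anything is sent. The `<source>` tag format, the `build_prompt` helper, and the four-characters-per-token estimate are assumptions. Reserving room for the reply is a cautious choice on our part. Use a real tokenizer (for example OpenAI’s `tiktoken` library) for billing-grade counts.

```python
# Rough heuristic only: English text averages about four characters per token.
CHARS_PER_TOKEN = 4
CONTEXT_LIMIT = 1_047_576  # GPT-4.1 context window per OpenAI's model page


def build_prompt(task: str, sources: dict[str, str],
                 reserve_for_output: int = 32_768) -> str:
    """Wrap each source in labeled delimiters and check a rough size budget."""
    parts = [
        f"Task: {task}",
        "Use only the labeled sources below. Cite sources by their label.",
    ]
    for label, text in sources.items():
        parts.append(f'<source label="{label}">\n{text}\n</source>')
    prompt = "\n\n".join(parts)
    est_tokens = len(prompt) // CHARS_PER_TOKEN
    if est_tokens + reserve_for_output > CONTEXT_LIMIT:
        raise ValueError(
            f"prompt is roughly {est_tokens:,} tokens; trim or split the sources"
        )
    return prompt
```

Keeping labels stable across runs also makes evaluation easier, because a grader can check whether the answer cited the source that actually contains the fact.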

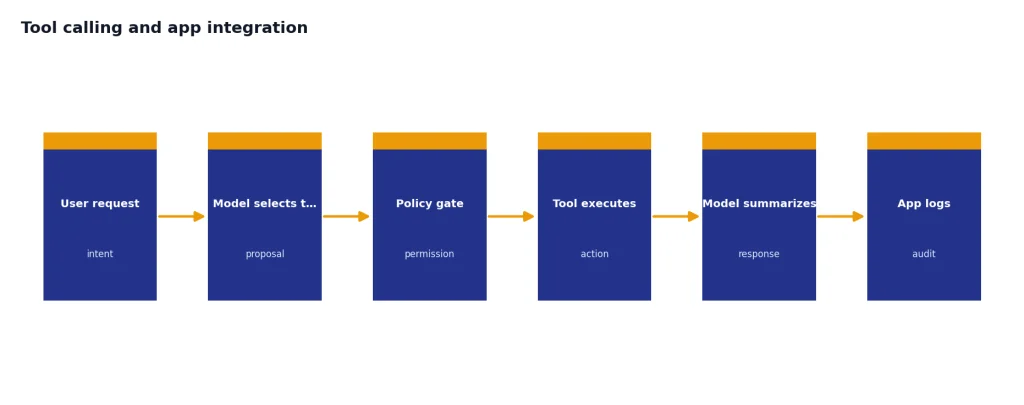

Tool calling and app integration

OpenAI lists function calling and structured outputs as supported GPT-4.1 features.[2] That makes GPT-4.1 a good fit for systems that route between search, database lookup, code execution, ticket creation, or internal actions. You still need guardrails. The model can decide when to call a tool, but your application should decide what the tool is allowed to do.
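A minimal sketch of that division of labor: the model proposes a tool call, and application code checks it against an allowlist and per-tool argument rules before anything runs. The input mirrors the general shape of a function call (a tool name plus a JSON-encoded arguments string) but is not tied to any SDK object. The tool names, validation rules, and `dispatch` helper are all hypothetical.

```python
import json
from typing import Any, Callable


def lookup_order(order_id: str) -> dict[str, Any]:
    # Hypothetical read-only tool; a real one would query a database.
    return {"order_id": order_id, "status": "shipped"}


def create_ticket(summary: str, priority: int) -> dict[str, Any]:
    # Hypothetical write tool.
    return {"ticket": "created", "summary": summary, "priority": priority}


def _valid_lookup(args: dict) -> bool:
    oid = args.get("order_id")
    return set(args) == {"order_id"} and isinstance(oid, str) and oid.isdigit()


def _valid_ticket(args: dict) -> bool:
    summary, priority = args.get("summary"), args.get("priority")
    return (set(args) == {"summary", "priority"}
            and isinstance(summary, str) and len(summary) <= 200
            and type(priority) is int and 1 <= priority <= 3)


# Application-owned policy: which tools exist and which arguments are acceptable.
ALLOWED_TOOLS: dict[str, tuple[Callable[..., Any], Callable[[dict], bool]]] = {
    "lookup_order": (lookup_order, _valid_lookup),
    "create_ticket": (create_ticket, _valid_ticket),
}


def dispatch(name: str, arguments_json: str) -> dict[str, Any]:
    """Run a model-proposed tool call only if policy allows it."""
    if name not in ALLOWED_TOOLS:
        return {"error": f"tool not allowed: {name}"}
    try:
        args = json.loads(arguments_json)
    except json.JSONDecodeError:
        return {"error": "arguments are not valid JSON"}
    func, is_valid = ALLOWED_TOOLS[name]
    if not isinstance(args, dict) or not is_valid(args):
        return {"error": f"arguments rejected for {name}"}
    return func(**args)
```

Returning errors as data, rather than raising, lets you pass the rejection back to the model as a tool result. The model can then correct itself, but it can never bypass the policy.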

Where GPT-4.1 falls short

GPT-4.1 is not the best OpenAI model for every serious task. OpenAI’s own documentation recommends starting with GPT-5 for complex tasks.[2] That single sentence should guide most upgrade decisions. GPT-4.1 is a strong non-reasoning model, but it is not the default choice for hard reasoning, multi-stage planning, or research-heavy work.

It is also not a voice model, video model, or full multimodal assistant. OpenAI’s GPT-4.1 model page lists text input and output, image input, and no support for audio or video.[2] If you are choosing a model for spoken interaction, compare it with our ChatGPT Voice Mode review. If you are choosing for video generation, GPT-4.1 is the wrong category entirely; see the Sora review.

The safety documentation picture is mixed. OpenAI’s Safety Evaluations Hub includes GPT-4.1 among models covered by its safety evaluation results.[7] But GPT-4.1 did not ship with a separate system card; TechCrunch reported that OpenAI said GPT-4.1 was “not a frontier model,” so there would not be a separate system card for it.[8] For low-risk business automation, that may be acceptable. For regulated or high-impact use, it means teams should run their own red-team tests and policy checks before deployment.

Another limitation is product stability in ChatGPT. GPT-4.1 entered ChatGPT for paid users on May 14, 2025.[4] It was retired from ChatGPT on February 13, 2026.[5] If your workflow depends on a model picker inside ChatGPT, GPT-4.1 is no longer a durable choice. If your workflow depends on an API model ID and regression tests, it remains more controllable.

GPT-4.1 compared with GPT-4o, GPT-4.1 mini, and GPT-5

The cleanest way to understand GPT-4.1 is to compare it by job, not by model generation. It is better than GPT-4o for some coding and instruction-following workflows, according to OpenAI’s launch benchmarks.[1] It is more capable than GPT-4.1 mini for difficult tasks, but mini is cheaper. It is not where OpenAI points users first for complex tasks, because the GPT-4.1 model page recommends starting with GPT-5 for those.[2]

| Choice | Use it when | Skip it when | Our upgrade view |
|---|---|---|---|
| GPT-4.1 | You need strong coding, schema compliance, tool calling, or long-context API work | You need the best current reasoning model or ChatGPT model-picker access | Worth testing for developers |
| GPT-4.1 mini | You need lower-cost extraction, classification, routing, or simple coding assistance | The task is subtle, high-stakes, or sensitive to small mistakes | Worth testing for scale |
| GPT-4o | You have legacy workflows that depended on its conversational style or multimodal strengths | You need a current ChatGPT model, because GPT-4o was retired from ChatGPT on February 13, 2026 | Not a new upgrade target in ChatGPT |
| GPT-5-era models | You need complex reasoning, planning, research, or current default ChatGPT behavior | You need GPT-4.1’s specific cost, latency, or long-context profile in the API | Start here for hard tasks |

Compared with GPT-4o, GPT-4.1 is more of a workbench model. GPT-4o’s reputation came partly from a broad, friendly, multimodal assistant experience. GPT-4.1’s value is narrower. It is about getting a task done in the requested format. If you still think in terms of GPT-4o, read our GPT-4o review before migrating old prompts.

Compared with GPT-4.1 mini, the full GPT-4.1 model is the quality pick. Mini is the economic pick. In many applications, a hybrid setup is best. Use mini for routing, labeling, and first-pass extraction. Use full GPT-4.1 only when the input is long, the edit is complex, or a failed answer is expensive.
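The hybrid setup can be expressed as a small routing rule. This is a sketch under stated assumptions: the token threshold, the task labels, and the `Job` and `choose_model` names are hypothetical and should come from your own evaluation. Only the model IDs (`gpt-4.1`, `gpt-4.1-mini`) are OpenAI’s.

```python
from dataclasses import dataclass

# Hypothetical thresholds; tune them against your own test suite.
LONG_INPUT_TOKENS = 100_000
CHEAP_TASKS = {"route", "label", "extract"}


@dataclass
class Job:
    kind: str                          # e.g. "route", "label", "extract", "code_edit"
    input_tokens: int
    failure_is_expensive: bool = False


def choose_model(job: Job) -> str:
    """Mini for routine routing, labeling, and extraction; full GPT-4.1 otherwise."""
    if job.failure_is_expensive or job.input_tokens > LONG_INPUT_TOKENS:
        return "gpt-4.1"
    if job.kind in CHEAP_TASKS:
        return "gpt-4.1-mini"
    # Anything else, such as a multi-file code edit, gets the full model.
    return "gpt-4.1"
```

Keeping the rule in plain code, rather than asking a model to pick a model, makes routing decisions easy to log and to adjust when your measurements change.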

Compared with GPT-5, GPT-4.1 is a specialist. If you want the strongest general assistant experience, look at our GPT-5 review. If you want to understand where reasoning models still beat non-reasoning GPT models, compare with our OpenAI o3 review and OpenAI o1 review.

Who should upgrade, stay, or skip

Upgrade to GPT-4.1 if you are an API developer with structured tasks

GPT-4.1 is worth upgrading to if your current model struggles with instructions, code edits, tool calls, or long files. It is especially worth a test if you currently spend engineering time cleaning malformed output. A model that returns the right structure the first time can be cheaper than a lower-priced model that needs retries.

Good upgrade candidates include code assistants, internal developer tools, contract or policy analyzers, support-ticket triage systems, spreadsheet-to-JSON converters, and repository-aware documentation tools. The common thread is structure. GPT-4.1 does best when the desired answer is clearly defined.

Stay with your current setup if GPT-4.1 does not reduce errors

Do not upgrade because the model name looks newer than GPT-4o. Run a side-by-side test. Measure valid output rate, human correction rate, latency, total token cost, and task success. If GPT-4.1 is only slightly better but costs more in your workflow, the upgrade may not pay for itself.

This is especially true for high-volume, low-complexity tasks. GPT-4.1 mini or GPT-4.1 nano may be enough for classification, tagging, routing, and basic extraction. The full model should earn its place through measurable quality gains.
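A side-by-side test is easier to read as a scorecard. This minimal sketch assumes you log one record per test case per model and aggregates the metrics named in this section: valid output rate, human correction rate, task success, latency, and total cost. The `Trial` fields and the `scorecard` helper are hypothetical; how each flag gets set (schema check, reviewer edit, pass criteria) is up to your test suite.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Trial:
    model: str
    valid_output: bool      # passed format checks (e.g. schema validation)
    human_corrected: bool   # a reviewer had to edit the result
    task_success: bool      # met the task's pass criteria
    latency_s: float
    cost_usd: float


def _rate(flags: list[bool]) -> float:
    return sum(flags) / len(flags)


def scorecard(trials: list[Trial]) -> dict[str, dict[str, float]]:
    """Aggregate per-model rates, mean latency, and total cost."""
    by_model: dict[str, list[Trial]] = {}
    for t in trials:
        by_model.setdefault(t.model, []).append(t)
    return {
        model: {
            "valid_rate": _rate([t.valid_output for t in ts]),
            "correction_rate": _rate([t.human_corrected for t in ts]),
            "success_rate": _rate([t.task_success for t in ts]),
            "mean_latency_s": mean(t.latency_s for t in ts),
            "total_cost_usd": sum(t.cost_usd for t in ts),
        }
        for model, ts in by_model.items()
    }
```

If GPT-4.1’s row improves success and correction rates by less than its extra cost, that is the signal to stay with your current setup.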

Skip GPT-4.1 if you only use ChatGPT

If you are a normal ChatGPT user, there is no GPT-4.1 upgrade to buy today. OpenAI retired GPT-4.1 from ChatGPT on February 13, 2026, and said there were no API changes at that time.[5] Choose based on current ChatGPT plans and current default models instead.

For business users, the decision is broader than one model. You should compare workspace controls, admin features, privacy terms, and internal adoption needs. Our ChatGPT Team review and ChatGPT Enterprise review are better starting points for that decision.

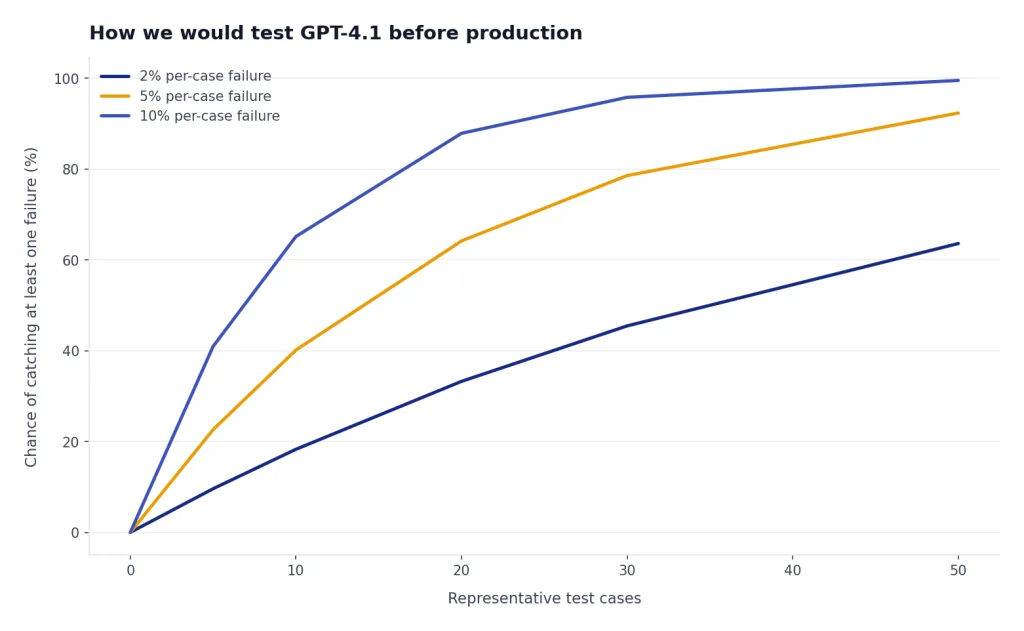

How we would test GPT-4.1 before production

A good GPT-4.1 evaluation should use your own data. Public benchmarks are useful context, but they cannot tell you whether a model handles your schemas, your code style, your support tickets, or your policy language. Build a small test suite before switching production traffic.

- Collect representative prompts. Include easy, normal, and failure-prone examples.

- Define pass criteria. Use objective checks where possible, such as valid JSON, unit tests, required citations, or exact field names.

- Run blind comparisons. Compare GPT-4.1 against your current model and one cheaper alternative.

- Measure retries. Count how often each model needs repair prompts or manual cleanup.

- Test long-context behavior. Include prompts with relevant facts near the start, middle, and end of the input.

- Red-team sensitive flows. Test prompt injection, data exfiltration attempts, policy bypasses, and tool misuse.

- Calculate total cost. Include input tokens, output tokens, cached input, retries, and engineering maintenance.
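For the long-context item in the checklist, one simple approach is to plant the same known fact at the start, middle, and end of otherwise identical filler, then check whether each answer recovers it. This sketch only builds the probes and grades the answers; it does not call a model. The `build_probes` and `grade` helpers are hypothetical, and the case-insensitive substring match is a deliberately naive stand-in for your own answer key.

```python
def build_probes(fact: str, filler: list[str]) -> dict[str, str]:
    """Return one prompt body per position, with `fact` inserted into `filler`."""
    if not filler:
        raise ValueError("filler must contain at least one paragraph")
    positions = {"start": 0, "middle": len(filler) // 2, "end": len(filler)}
    probes = {}
    for name, index in positions.items():
        paragraphs = filler[:index] + [fact] + filler[index:]
        probes[name] = "\n\n".join(paragraphs)
    return probes


def grade(answers: dict[str, str], expected: str) -> dict[str, bool]:
    """Naive check: does each answer contain the expected value?"""
    return {pos: expected.lower() in ans.lower() for pos, ans in answers.items()}
```

A model that passes at the start and end but fails in the middle tells you to restructure long prompts or add retrieval, even when the context window is large enough.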

For code workflows, include real tests. Ask GPT-4.1 to modify code, then run the test suite. For document workflows, use a labeled answer key. For tool workflows, log whether the model chose the right tool, passed safe arguments, and stopped when it should stop.
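The three tool checks in the previous paragraph (right tool, safe arguments, stopping on time) can be scored from logs. This minimal sketch assumes a hypothetical log format in which each case stores the model’s calls in order, each with a tool name and a JSON-encoded arguments string. The expected tool sequence and the `is_safe` rule come from your own test cases, and `score_tool_case` is a hypothetical helper.

```python
import json
from typing import Callable


def _call_is_safe(call: dict, is_safe: Callable[[str, dict], bool]) -> bool:
    try:
        args = json.loads(call["arguments"])
    except json.JSONDecodeError:
        return False
    return isinstance(args, dict) and is_safe(call["name"], args)


def score_tool_case(calls: list[dict], expected_tools: list[str],
                    is_safe: Callable[[str, dict], bool]) -> dict[str, bool]:
    """Score one logged case.

    calls: [{"name": ..., "arguments": "<JSON string>"}, ...] in call order.
    """
    names = [c["name"] for c in calls]
    return {
        # The expected tools must appear first, in order.
        "right_tools": names[: len(expected_tools)] == expected_tools,
        "safe_arguments": all(_call_is_safe(c, is_safe) for c in calls),
        # Extra calls after the expected sequence mean the model did not stop.
        "stopped": len(names) <= len(expected_tools),
    }
```

Scoring the three checks separately shows whether a failure came from tool choice, unsafe arguments, or the model looping on calls, and each of those needs a different fix.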

Use GPT-4.1 where it wins clearly. Do not force it into every task. The best production architectures often use several models: a cheap router, a reliable structured-output model, and a stronger reasoning model for edge cases. GPT-4.1 is a strong candidate for the middle role.

Frequently asked questions

Is GPT-4.1 available in ChatGPT?

No. OpenAI retired GPT-4.1 from ChatGPT on February 13, 2026, along with GPT-4o, GPT-4.1 mini, OpenAI o4-mini, and GPT-5 Instant and Thinking.[5] OpenAI said there were no API changes at that time.[5]

Is GPT-4.1 better than GPT-4o?

For coding and instruction following, OpenAI’s launch benchmarks favored GPT-4.1 over GPT-4o.[1] That does not mean GPT-4.1 is better for every user. GPT-4o had a broader assistant identity, while GPT-4.1 is best treated as a structured API workhorse.

How much does GPT-4.1 cost?

OpenAI lists GPT-4.1 at $2.00 per 1 million input tokens, $0.50 per 1 million cached input tokens, and $8.00 per 1 million output tokens.[3] Your real cost depends on prompt length, output length, cache use, retries, and whether you can use Batch API discounts.

What is GPT-4.1 best for?

GPT-4.1 is best for API workflows that need coding help, precise instruction following, structured outputs, tool calling, and long-context input. It is a good model to test for developer tools, internal automation, extraction pipelines, and repository-aware assistants.

Is GPT-4.1 a reasoning model?

No. OpenAI describes GPT-4.1 as its “smartest non-reasoning model” on the model page.[2] For complex tasks, OpenAI recommends starting with GPT-5.[2]

Should I use GPT-4.1 mini instead?

Use GPT-4.1 mini when cost and speed matter more than maximum quality. It is a sensible choice for high-volume extraction, routing, classification, and simpler coding tasks. Use full GPT-4.1 when failures are expensive or the prompt is complex.