The best prompt engineering course in 2026 is not the longest course or the one with the flashiest certificate. It is the course that matches your daily AI work. For most ChatGPT users, start with a structured beginner course, then practice on real tasks, then move into advanced workflows such as research, coding, data analysis, agents, and evaluation. This guide compares the strongest course paths for beginners, developers, workplace users, and advanced learners. It also gives you a practical 30-day syllabus you can follow without getting stuck in theory or collecting prompt templates you never use.

Best prompt engineering course in 2026

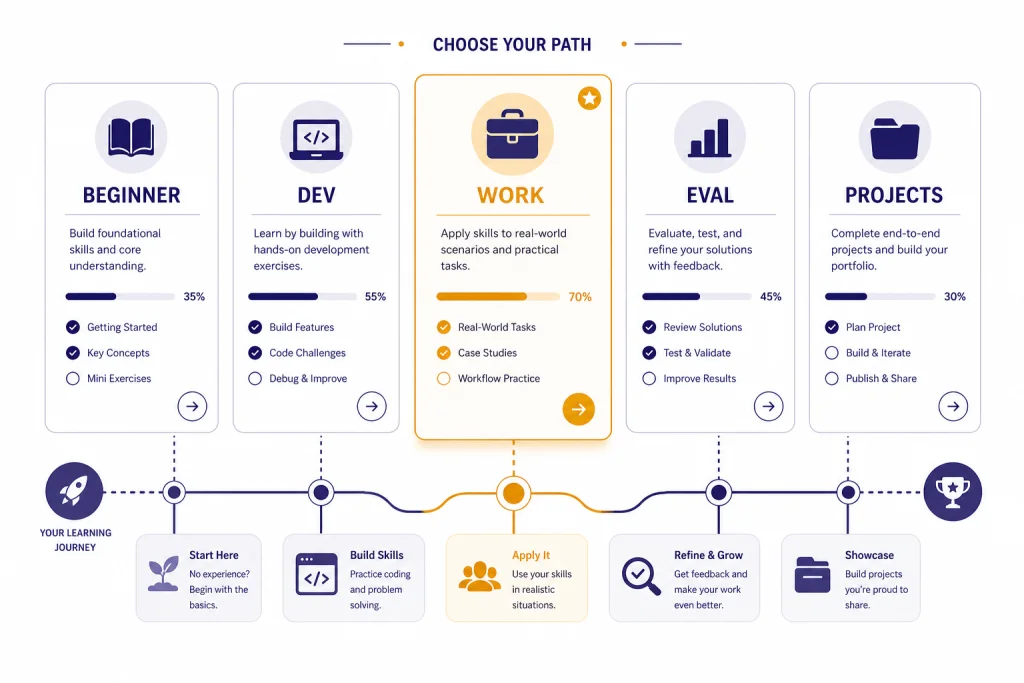

The best prompt engineering course for most readers is a sequence, not a single certificate. Start with a fundamentals course that teaches clear task framing, context, constraints, output format, and iteration. Then add a role-specific course or project path. Prompting for writing, research, coding, data analysis, and agents all share the same foundation, but they fail in different ways.

If you want a fast developer-focused start, DeepLearning.AI’s ChatGPT Prompt Engineering for Developers is a strong first pick because it is beginner level, runs 1 hour and 30 minutes, includes 9 video lessons and 7 code examples, and is taught by Isa Fulford and Andrew Ng.[3] If you want a broader no-code course, Vanderbilt’s Prompt Engineering for ChatGPT on Coursera is beginner level, has 6 modules, and is listed at 2 weeks at 10 hours a week.[4] If you want workplace prompting across writing, analysis, presentations, and expert-partner use cases, Google Prompting Essentials is a 4-course series listed at 1 month at 1 hour a week.[5]

For ChatGPT users, we would not treat course completion as the goal. Treat it as scaffolding. A useful prompt engineering course should make you better at diagnosing weak outputs. You should finish with a reusable system for giving instructions, testing results, saving good prompts, and adapting them to new tools. OpenAI’s own guidance emphasizes clear instructions, useful context, output expectations, and iteration rather than a single perfect prompt formula.[1]

What a good prompt engineering course teaches

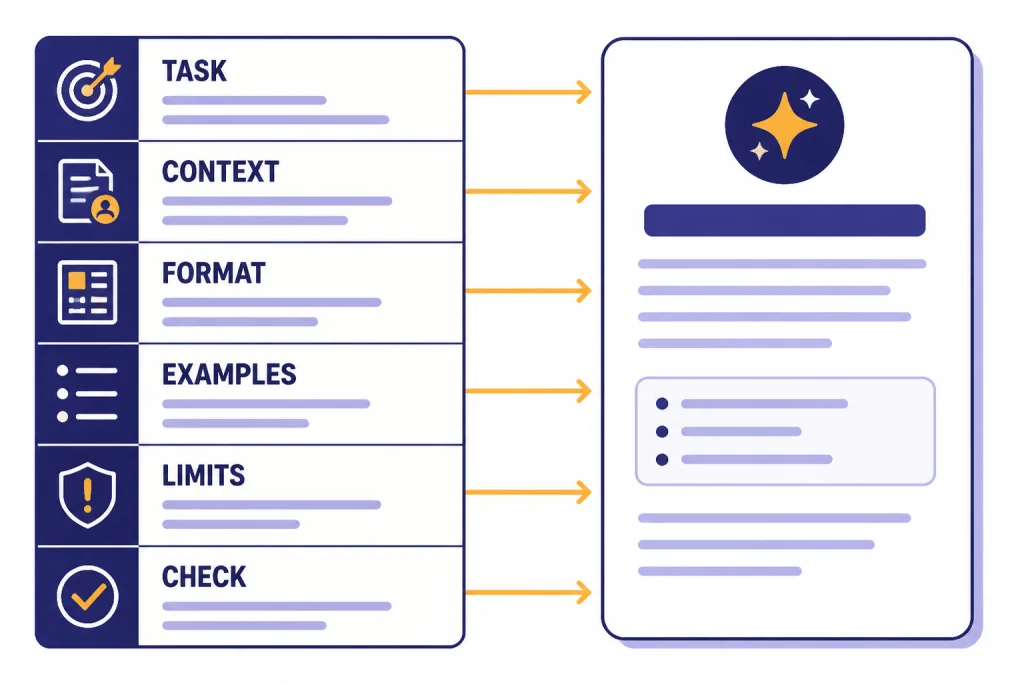

A good prompt engineering course teaches decision-making rather than just handing you a pack of prompts. The skill is knowing what to ask, what context to include, what format to require, what failure modes to test, and when to stop prompting and use a better workflow.

At minimum, your course should cover five habits. First, define the task in plain language. Second, provide the right context without burying the model in irrelevant details. Third, specify the output format. Fourth, ask for alternatives or checks when quality matters. Fifth, iterate against a clear standard. OpenAI’s API prompt engineering guide frames prompt engineering as writing effective instructions so a model consistently generates content that meets your requirements.[2]

Beginner courses should teach structure

Beginners need a repeatable prompt shape. A simple version is: role, task, context, constraints, examples, output format, and review criteria. You do not need every element every time. You need to know which element is missing when the answer is vague, too long, too generic, or formatted incorrectly.

For a beginner, the most important habit is moving from “write something about this” to “draft this specific output for this audience, using this context, in this format, while avoiding these mistakes.” That shift matters more than memorizing names for advanced techniques.
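To make the "which element is missing" habit concrete, here is a minimal Python sketch. The `PromptShape` class and its method names are hypothetical, invented for illustration; they do not come from any course or library. The sketch only shows how the seven elements can be treated as optional slots that you fill in and audit.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class PromptShape:
    """Hypothetical container for the seven prompt elements named above."""
    role: str = ""
    task: str = ""
    context: str = ""
    constraints: str = ""
    examples: str = ""
    output_format: str = ""
    review_criteria: str = ""

    def missing(self) -> List[str]:
        """Return the names of the elements that were left empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Join the filled-in elements into one prompt, skipping empty ones."""
        lines = []
        for f in fields(self):
            value = getattr(self, f.name).strip()
            if value:
                label = f.name.replace("_", " ").capitalize()
                lines.append(f"{label}: {value}")
        return "\n".join(lines)


# A vague request versus a specific one (both are made-up examples).
vague = PromptShape(task="Write something about onboarding.")
specific = PromptShape(
    task="Draft a welcome email for new support hires.",
    context="They start Monday and need laptop pickup details.",
    constraints="Under 150 words, friendly tone, no jargon.",
    output_format="Plain-text email with a subject line.",
)

print(vague.missing())    # every element except the task is missing
print(specific.render())
```

You do not need to fill every slot. The point of `missing()` is to give you a checklist to consult when an answer comes back vague or badly formatted.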

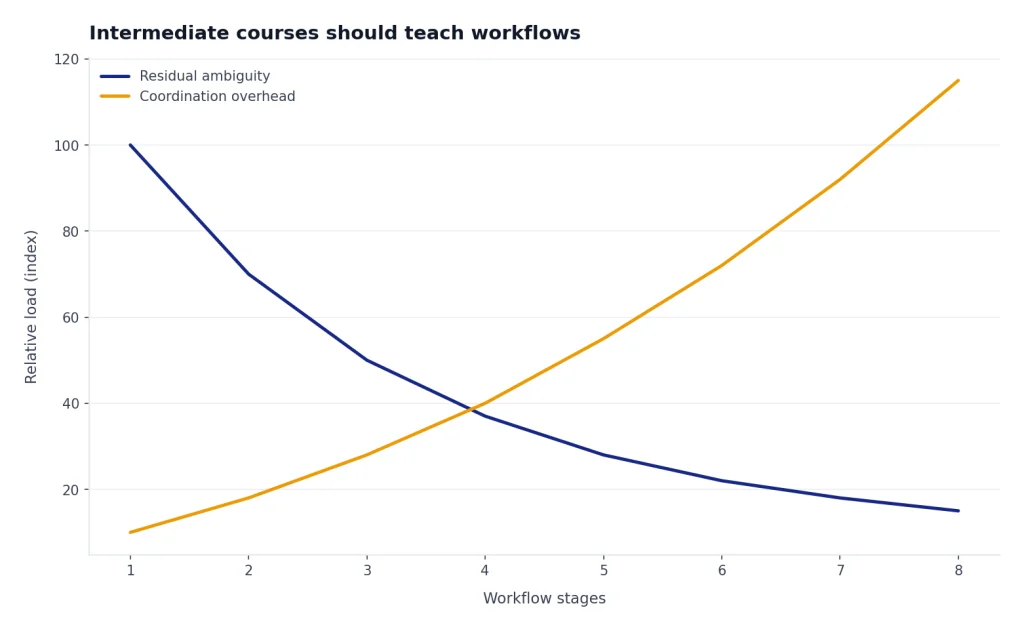

Intermediate courses should teach workflows

Intermediate learners should move beyond single prompts. A real workflow breaks a task into stages. For example, a writing workflow may include brief creation, outline, draft, critique, revision, fact check, and final formatting. A research workflow may include source gathering, claim extraction, contradiction checks, synthesis, and citation cleanup. If research is your main use case, pair this article with our academic research ChatGPT tutorial and deep research project guide.

Course material should also explain when prompting alone is not enough. If you need calculations, structured files, charts, or repeatable analysis, you may need a tool-based workflow. Our data analysis step-by-step tutorial and Code Interpreter mastery guide are better next steps than another generic prompt list.

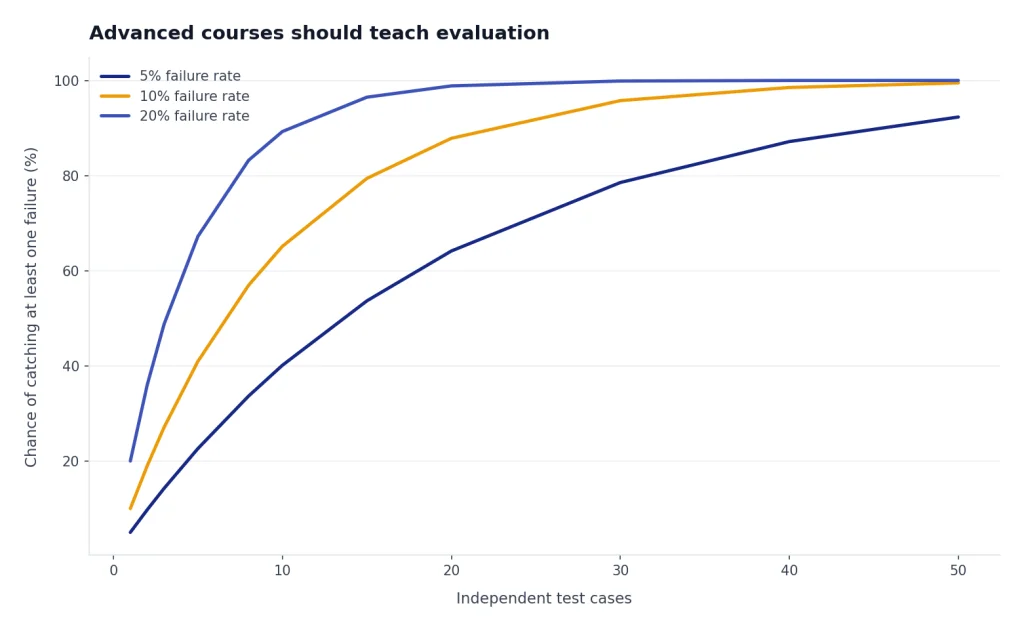

Advanced courses should teach evaluation

Advanced prompt engineering is less about clever wording and more about evaluation. You define what a good answer means, test multiple prompt versions, compare outputs, and document the version that works. Anthropic’s prompt engineering overview starts with success criteria, empirical testing, and a first draft prompt before optimization.[6] That is the right order for serious work.
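The order described above (define success, then test versions, then keep the one that works) can be sketched in a few lines of Python. Everything here is an assumption for illustration: `fake_model` is a stand-in for a real model call, and the success criterion "exactly one sentence" is deliberately simple. With a real model you would replace `fake_model` with your own API call.

```python
from typing import Callable, Dict, List


def pass_rate(
    template: str,
    cases: List[str],
    call_model: Callable[[str], str],
    meets_criteria: Callable[[str], bool],
) -> float:
    """Share of test cases whose output meets the success criterion."""
    passed = 0
    for case in cases:
        output = call_model(template.format(input=case))
        if meets_criteria(output):
            passed += 1
    return passed / len(cases)


def fake_model(prompt: str) -> str:
    """Stand-in model: returns one sentence only if the prompt asks for it."""
    text = prompt.split("TEXT:", 1)[1].strip()
    if "one sentence" in prompt:
        return text.split(".")[0] + "."
    return text


def is_one_sentence(output: str) -> bool:
    """Success criterion, defined before any prompt is tested."""
    return output.count(".") == 1


# Two prompt versions and a tiny made-up test set.
versions: Dict[str, str] = {
    "v1": "Summarize this. TEXT: {input}",
    "v2": "Summarize this in one sentence. TEXT: {input}",
}
cases = [
    "Sales rose. Costs fell. Margins improved.",
    "The launch slipped. QA found two bugs.",
]

results = {name: pass_rate(t, cases, fake_model, is_one_sentence)
           for name, t in versions.items()}
best = max(results, key=results.get)
print(results, "-> keep", best)
```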

For builders, this is where prompt engineering becomes product design. You need prompt versioning, test cases, tool permissions, retrieval design, and fallback behavior. If you build assistants rather than one-off prompts, our custom GPT tutorial and agent mode tutorial will be more useful than another beginner course.

Prompt engineering course comparison

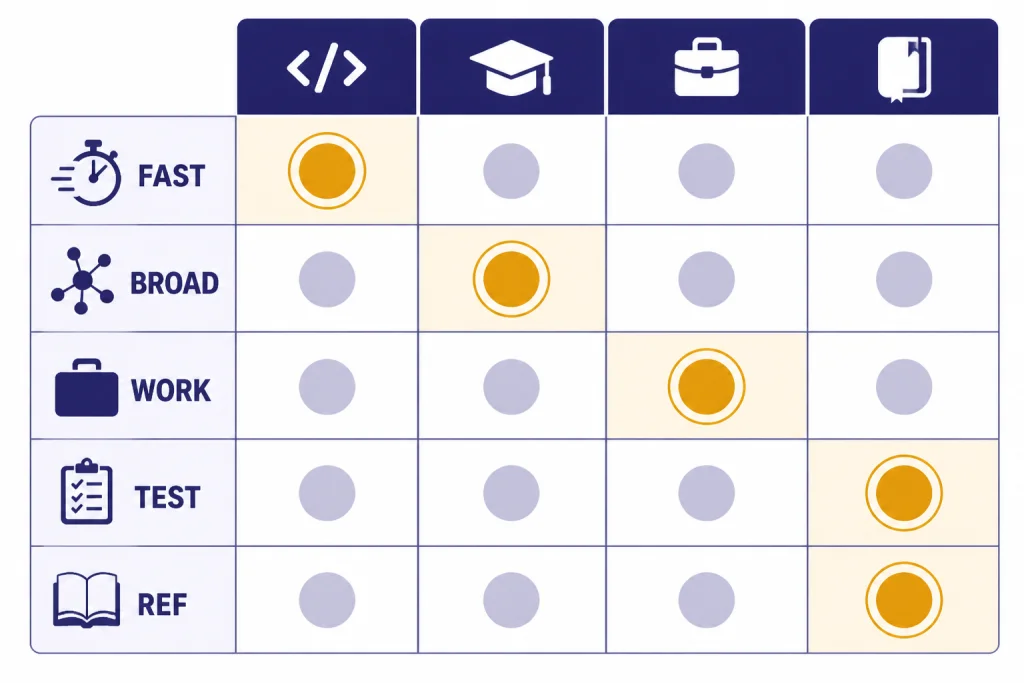

Use this table as a selection guide. The right prompt engineering course depends on whether you want ChatGPT fluency, developer skills, workplace productivity, or an advanced reference library.

| Course or resource | Best for | What it emphasizes | Time or structure | Our verdict |
|---|---|---|---|---|
| DeepLearning.AI: ChatGPT Prompt Engineering for Developers | Developers and technical beginners | Prompting with an API, summarizing, inferring, transforming, expanding, and building a chatbot | 1 hour and 30 minutes, 9 video lessons, 7 code examples[3] | Best fast technical start |
| Vanderbilt: Prompt Engineering for ChatGPT | No-code learners who want a structured university-style course | Prompt patterns, fundamentals, and ChatGPT use across tasks | 6 modules; 2 weeks at 10 hours a week[4] | Best broad beginner course |
| Google Prompting Essentials | Workplace users | A 5-step prompting framework, everyday work tasks, data analysis, presentations, creative partnership, and expert feedback | 4-course series; 1 month at 1 hour a week[5] | Best business productivity path |
| Anthropic prompt engineering docs and tutorial | Intermediate users who want systematic testing | Success criteria, examples, chain prompts, roles, XML tags, and long-context tips | Documentation plus interactive tutorial references[6] | Best free advanced supplement |
| DAIR.AI Prompt Engineering Guide | Researchers and self-directed learners | Papers, learning guides, examples, tools, RAG, context engineering, and AI agents | Reference guide and educational project[8] | Best reference library |

If you are starting from zero, choose one primary course and one practice path. Do not enroll in every course at once. Most courses repeat the same foundations. You learn faster by applying one framework to your own documents, spreadsheets, codebase, marketing plan, or research question.

If your work is writing-heavy, pair your course with our writing better content tutorial. If your work is SEO-heavy, use our SEO workflow tutorial. If you work in spreadsheets, combine prompt engineering with our Excel formulas and pivot tables guide. Prompt engineering becomes useful when it attaches to a real job to be done.

Recommended learning path

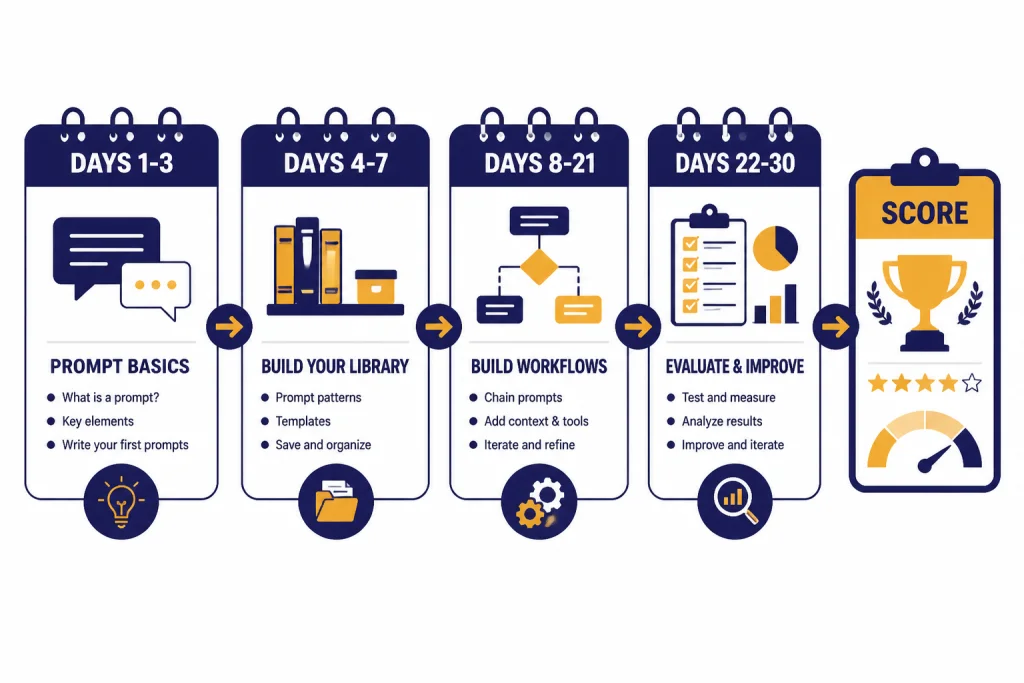

Here is a practical 30-day prompt engineering course plan. It works whether you use a formal course, free documentation, or your own projects. The goal is not to become a prompt collector. The goal is to build a prompt operating system you can reuse.

Days 1-3: Learn the basic prompt shape

Practice the same task with weak and strong prompts. Ask for a summary, then add audience, source material, length, tone, and output format. Compare the results. Save the improved version. This teaches the core lesson: prompt quality is mostly task clarity plus useful constraints.

Use this starter template:

Task: [What you want]

Audience: [Who will use it]

Context: [Relevant facts, source text, files, or assumptions]

Constraints: [Length, tone, exclusions, compliance needs]

Output format: [Table, bullets, memo, JSON, checklist]

Quality bar: [How the answer should be judged]

Days 4-7: Build a reusable prompt library

Create prompts for tasks you repeat. Good candidates include summarizing meetings, rewriting emails, extracting action items, creating outlines, classifying feedback, and reviewing drafts. Store the prompts with notes about what worked and what failed. If you want a dedicated library workflow, use our ChatGPT prompt generator guide.
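One way to store prompts together with notes is a small record per prompt, saved as JSON. The sketch below is an assumption about how you might organize it, not a prescribed format: the `LibraryEntry` class, its field names, and the example notes are all invented for illustration. It reuses the six labels from the starter template.

```python
import json
from dataclasses import asdict, dataclass, field

# The six labels from the starter template; context is filled in per use.
TEMPLATE = (
    "Task: {task}\n"
    "Audience: {audience}\n"
    "Context: {context}\n"
    "Constraints: {constraints}\n"
    "Output format: {output_format}\n"
    "Quality bar: {quality_bar}"
)


@dataclass
class LibraryEntry:
    """Hypothetical library record: a saved prompt plus notes on its history."""
    name: str
    settings: dict                               # everything except the context
    worked: list = field(default_factory=list)   # notes on what helped
    failed: list = field(default_factory=list)   # notes on what went wrong

    def render(self, context: str) -> str:
        """Fill the template with the saved settings and today's context."""
        return TEMPLATE.format(context=context, **self.settings)


entry = LibraryEntry(
    name="meeting-summary",
    settings={
        "task": "Summarize the meeting notes and extract action items.",
        "audience": "Team members who missed the meeting.",
        "constraints": "Under 200 words, neutral tone.",
        "output_format": "Bullets, then an action-item table.",
        "quality_bar": "Every action item has an owner and a date.",
    },
    # Made-up notes, shown only to illustrate the kind of thing worth recording.
    worked=["Asking for owners explicitly reduced vague action items."],
    failed=["Without a word limit the summary ran long."],
)

prompt = entry.render(context="[paste meeting notes here]")
saved = json.dumps(asdict(entry), indent=2)      # could be written to a file
restored = LibraryEntry(**json.loads(saved))     # round-trips without loss
print(prompt)
```

Keeping the notes next to the prompt matters more than the storage format. A spreadsheet or a notes app works just as well if it records the same fields.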

Days 8-14: Learn examples and constraints

Examples are often the fastest way to improve output. Give the model one good example and one bad example. Explain the difference. Then ask it to produce a new output that follows the good pattern and avoids the bad one. This is especially useful for brand voice, sales emails, documentation, data labels, and structured summaries.
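The good-example, bad-example pattern can be assembled mechanically. The function below is a hypothetical helper, and the sample summaries are made up; the sketch only shows the order of the parts: task, good example, bad example, the stated difference, then the new input.

```python
def build_contrast_prompt(task: str, good: str, bad: str,
                          difference: str, new_input: str) -> str:
    """Assemble a prompt that contrasts one good and one bad example."""
    return "\n\n".join([
        f"Task: {task}",
        f"Good example:\n{good}",
        f"Bad example:\n{bad}",
        f"Why the good example is better: {difference}",
        "Now write a new output for this input, following the good pattern "
        f"and avoiding the problems in the bad one:\n{new_input}",
    ])


prompt = build_contrast_prompt(
    task="Write a one-line structured summary of a meeting.",
    good="Decision: ship Friday. Owner: Priya. Risk: QA backlog.",
    bad="We talked about a lot of things and it went well.",
    difference="It names the decision, the owner, and the main risk.",
    new_input="[paste the new meeting notes here]",
)
print(prompt)
```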

Constraints should be testable. “Make it better” is weak. “Use 5 bullets, each under 18 words, with one concrete next step per bullet” is stronger. The numbers in that sentence are not magic. They are checkable requirements.
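Because the constraint above is checkable, part of it can be verified with a few lines of Python. This is a minimal sketch under stated assumptions: `check_bullets` is a hypothetical helper, it assumes bullets start with `- ` or `* `, and it counts words by splitting on whitespace. It does not try to judge whether each bullet contains a concrete next step, which still needs a human or a second review pass.

```python
from typing import List


def check_bullets(text: str, expected_bullets: int = 5,
                  max_words: int = 18) -> List[str]:
    """Return a list of violations; an empty list means the checks pass."""
    bullets = [
        line.strip()[2:].strip()
        for line in text.splitlines()
        if line.strip().startswith(("- ", "* "))
    ]
    problems = []
    if len(bullets) != expected_bullets:
        problems.append(
            f"expected {expected_bullets} bullets, found {len(bullets)}"
        )
    for i, bullet in enumerate(bullets, start=1):
        words = len(bullet.split())
        if words >= max_words:  # "under 18 words" means 17 or fewer
            problems.append(
                f"bullet {i} has {words} words (limit: under {max_words})"
            )
    return problems


# A made-up model output that satisfies the format constraints.
draft = "\n".join([
    "- Email the vendor today to confirm the delivery date.",
    "- Book a 30-minute review with the design team this week.",
    "- Move the launch checklist into the shared tracker.",
    "- Ask finance to approve the revised budget by Friday.",
    "- Send the customer a short status update tomorrow morning.",
])
print(check_bullets(draft))  # → []
```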

Days 15-21: Turn prompts into workflows

Pick one real process and split it into stages. For example, a marketing workflow could include audience research, message angles, draft copy, critique, revision, and final checklist. A coding workflow could include requirements, edge cases, pseudocode, implementation, tests, and review. If coding is your target, read our coding like a 10x engineer tutorial after you finish the basics.

Do not ask for final work too early. Ask for questions first when the task is unclear. Ask for a plan before the draft when the task is complex. Ask for a critique before the final answer when quality matters.
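The "questions, then plan, then draft, then critique" sequence can be expressed as a chain in which each stage sees the earlier results. The sketch below is illustrative only: the stage wording is invented, and `call_model` is a hypothetical callable that you would replace with your own model call. A recording stub stands in for the model so the example runs on its own.

```python
from typing import Callable, Dict, List

# Each stage is (name, template). Templates may refer to the task and to the
# output of any earlier stage by name.
STAGES = [
    ("questions", "List the questions you need answered before starting:\n{task}"),
    ("plan", "Task:\n{task}\n\nOpen questions:\n{questions}\n\nWrite a short plan."),
    ("draft", "Task:\n{task}\n\nPlan:\n{plan}\n\nWrite the draft."),
    ("critique", "Critique this draft against the task.\n\nTask:\n{task}\n\nDraft:\n{draft}"),
    ("final", "Revise the draft using the critique.\n\nDraft:\n{draft}\n\nCritique:\n{critique}"),
]


def run_workflow(task: str, call_model: Callable[[str], str]) -> Dict[str, str]:
    """Run the stages in order, feeding earlier outputs into later prompts."""
    results = {"task": task}
    for name, template in STAGES:
        prompt = template.format(**results)
        results[name] = call_model(prompt)
    return results


# Stub model (an assumption for this sketch): records prompts, returns a label.
calls: List[str] = []


def stub_model(prompt: str) -> str:
    calls.append(prompt)
    return f"output {len(calls)}"


results = run_workflow("Write launch copy for the new pricing page.", stub_model)
print(list(results))
```

In practice you would pause after the questions stage to answer them yourself. The chain is a way to avoid asking for final work too early, not a way to remove yourself from the loop.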

Days 22-30: Add evaluation

Evaluation turns prompt engineering into a reliable skill. Create a small test set of real examples. Run the same prompt against each one. Score the output for accuracy, completeness, format, tone, and usefulness. Revise the prompt only when the test set shows a pattern.

This is the point where many courses stop too soon. A prompt that works once is not a workflow. A prompt that works across varied inputs, produces predictable output, and has known failure modes is a reusable asset.
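A small scoring sheet is enough to see whether a failure is a one-off or a pattern. The sketch below assumes you have already run one prompt against three test cases and scored each output from 1 to 5 on the five dimensions named above. The scores, the pass mark, and the "at least two failures" threshold are all made-up assumptions for illustration.

```python
from collections import Counter
from typing import Dict, List

DIMENSIONS = ["accuracy", "completeness", "format", "tone", "usefulness"]


def failure_patterns(scored_cases: List[dict], pass_mark: int = 3,
                     min_failures: int = 2) -> Dict[str, int]:
    """Count failing cases per dimension and keep only repeated failures."""
    failures = Counter()
    for case in scored_cases:
        for dim in DIMENSIONS:
            if case["scores"][dim] < pass_mark:
                failures[dim] += 1
    return {dim: n for dim, n in failures.items() if n >= min_failures}


# Hypothetical reviewer scores (1 to 5) for one prompt on three real inputs.
scored = [
    {"id": "email-01", "scores": {"accuracy": 5, "completeness": 4,
                                  "format": 2, "tone": 4, "usefulness": 4}},
    {"id": "email-02", "scores": {"accuracy": 4, "completeness": 2,
                                  "format": 2, "tone": 5, "usefulness": 4}},
    {"id": "email-03", "scores": {"accuracy": 5, "completeness": 4,
                                  "format": 1, "tone": 4, "usefulness": 3}},
]

patterns = failure_patterns(scored)
print(patterns)  # → {'format': 3}
```

Here the single completeness failure is ignored, while the format failures appear in every case. That is the signal to revise the output-format instruction rather than rewrite the whole prompt.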

Practice projects that build real skill

The fastest way to finish a prompt engineering course with useful skill is to attach each lesson to a project. Choose one project from this list and complete it while you take the course.

- Personal knowledge assistant: Upload or paste notes, then ask for summaries, action items, flashcards, and gaps.

- Research brief: Ask for a source plan, compare claims, extract evidence, and write an executive summary.

- Writing system: Build prompts for outlines, drafts, critique, style matching, and final edits.

- Spreadsheet helper: Ask for formula explanations, data cleaning steps, pivot table plans, and chart recommendations.

- Customer support classifier: Create labels, provide examples, test edge cases, and review misclassifications.

- Custom assistant: Turn your best prompts into instructions for a custom GPT or reusable workspace assistant.

Each project should produce artifacts. Save the prompt, the output, the critique, the revised prompt, and the final result. This gives you a portfolio of working prompts rather than a certificate with no evidence of skill.

For multimodal work, extend the same discipline to images, voice, and video. The principle is still task, context, constraints, and output expectations. The details change. Our image generation mastery tutorial, voice mode use cases, and AI video tutorial show how prompt design changes when the output is not plain text.

What to avoid when choosing a course

A weak prompt engineering course usually has the same warning signs. It promises secret prompts. It focuses on persona tricks. It sells massive prompt packs without teaching diagnosis. It ignores evaluation. It treats every tool as if it behaves the same. It gives you templates but no practice system.

Be careful with courses that promise a dedicated prompt engineer job from a short certificate. Prompting is valuable, but most employers need prompting inside another skill: writing, analysis, product, support, coding, operations, research, or marketing. A certificate can help show initiative. It does not replace domain skill.

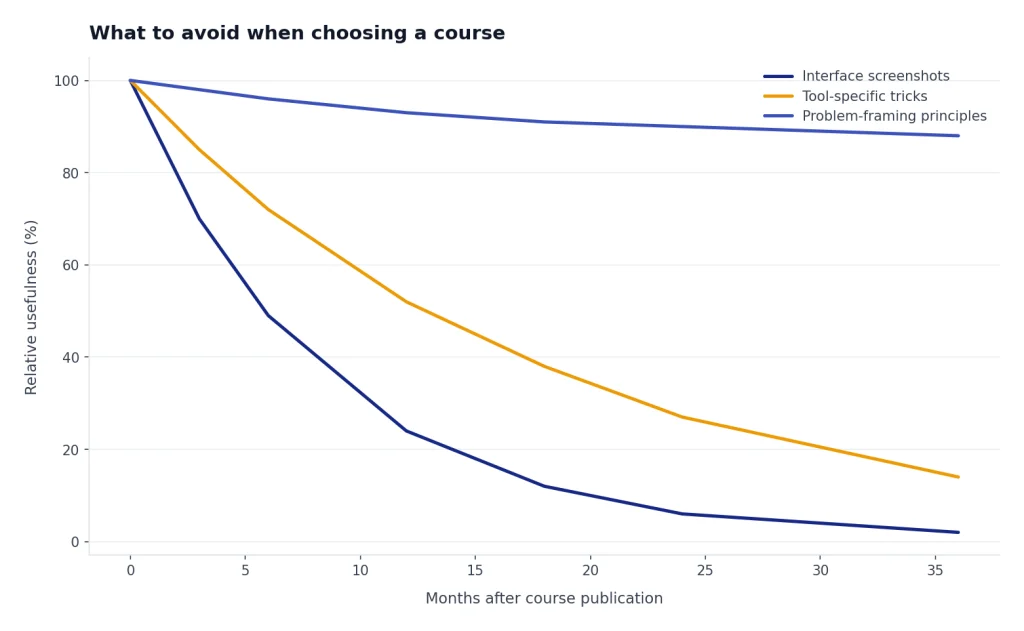

Also avoid courses that do not update their examples. AI tools change quickly. A durable course should teach principles that survive interface changes: clear instructions, grounded context, examples, constraints, decomposition, tool choice, verification, and evaluation. Course screenshots age. Good problem-framing does not.

The best prompt engineering course is the one you finish with working prompts, not the one you finish with notes. Pick one strong course, run the 30-day practice path, and build a small library around the work you actually do.

Frequently asked questions

What is the best prompt engineering course for beginners?

For a broad no-code start, Vanderbilt’s Prompt Engineering for ChatGPT is a strong beginner option because the course page lists it as beginner level with 6 modules.[4] For a shorter technical start, DeepLearning.AI’s course is better if you are comfortable with basic Python and want API examples.[3]

Is prompt engineering still worth learning in 2026?

Yes, but the useful version is not prompt hacking. The useful skill is structured instruction, context selection, workflow design, and evaluation. As models improve, vague prompts work better than they used to, but high-stakes and repeatable work still needs clear requirements and testing.

Do I need to pay for a prompt engineering course?

Not always. You can learn a lot from official documentation, free tutorials, and practice projects. Pay for a course if you need structure, graded exercises, a certificate, or a guided path that helps you stay consistent.

How long does it take to learn prompt engineering?

You can learn the basic prompt structure in a weekend. DeepLearning.AI lists its developer course at 1 hour and 30 minutes, while Vanderbilt’s Coursera course is listed at 2 weeks at 10 hours a week.[3][4] Real competence takes longer because you need practice across messy tasks and failure cases.

Should developers take a different prompt engineering course?

Yes. Developers should learn prompting with APIs, structured outputs, testing, tool use, and evaluation. A general ChatGPT course is useful, but it will not cover enough implementation detail for production systems.

What should I learn after a prompt engineering course?

Learn a workflow tied to your job. Good next steps include research, data analysis, coding, writing, SEO, custom GPTs, or agents. Prompt engineering is the foundation; applied workflow design is where the productivity gains usually appear.