OpenAI lawsuits in 2026 fall into four main buckets: Elon Musk’s challenge to the company’s nonprofit-to-commercial evolution, copyright cases from publishers and authors, product-safety claims tied to ChatGPT conversations, and antitrust disputes involving Microsoft. As of April 24, 2026, the Musk case is the nearest courtroom flashpoint after a judge dismissed his fraud claims but left other claims headed toward trial. The copyright fights are broader and slower, with discovery still shaping what plaintiffs can prove. The safety cases are newer and more emotionally charged. None of these lawsuits has yet produced a final ruling that shuts down ChatGPT or resolves the core legality of training frontier models on copyrighted material.

Where OpenAI lawsuits stand now

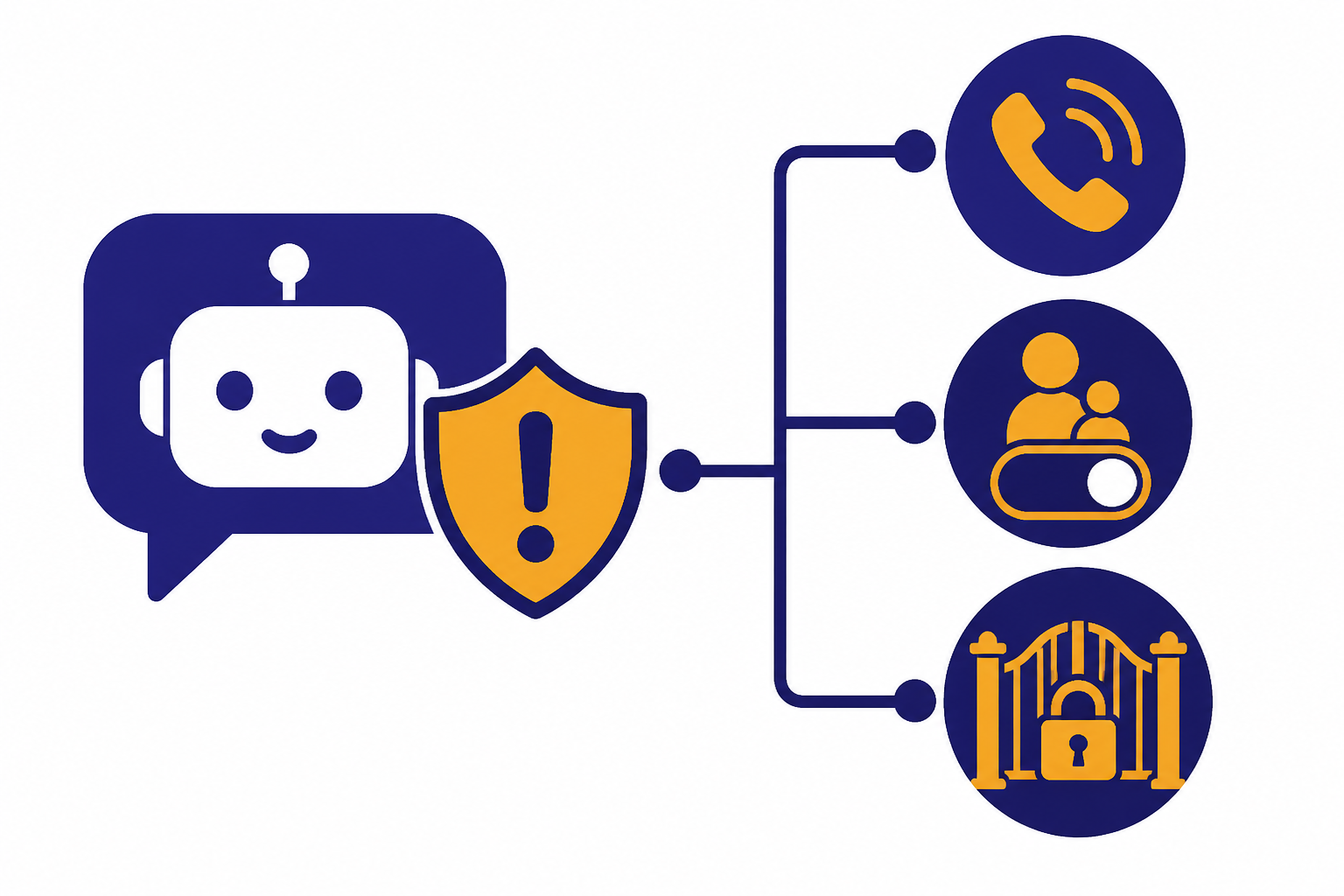

The short version: OpenAI is fighting several legal fronts at once, but they are not all the same kind of risk. Some cases ask whether OpenAI and Microsoft copied protected works without permission. Some ask whether OpenAI’s corporate structure broke promises tied to its original nonprofit mission. Others ask whether ChatGPT harmed users in ways that product-liability law should recognize.

That distinction matters. A copyright ruling could affect training data, licensing costs, model outputs, and discovery obligations. A Musk win could affect governance and the relationship between OpenAI’s nonprofit and commercial entities. A safety case could change product design, age controls, warnings, and crisis-response behavior. An antitrust case could affect how OpenAI and Microsoft structure pricing, distribution, and cloud access.

OpenAI has not published an official figure for the total number of pending lawsuits against it as of April 24, 2026. Public court records and news reports show multiple active matters, but the cases differ in court, claim type, plaintiff group, and procedural posture. For readers tracking company risk, that means the useful question is not whether OpenAI is being sued. It is which lawsuits could force a practical change in ChatGPT, the OpenAI API, licensing, governance, or the Microsoft relationship.

This article focuses on the active matters most likely to affect users, developers, publishers, investors, and people following OpenAI News This Week. It is not legal advice. It is a status map for the major 2026 OpenAI lawsuits that readers keep seeing in headlines.

Quick status map

The cases below are grouped by the practical issue they raise. The same legal fight can touch more than one category. For example, the copyright cases also raise privacy questions because plaintiffs have sought access to ChatGPT conversation data during discovery.

| Legal front | Main issue | Status as of April 24, 2026 | Why it matters |
|---|---|---|---|
| Musk v. OpenAI and related defendants | Whether OpenAI’s shift from nonprofit roots toward commercial structure violated obligations tied to its founding mission | A judge dismissed Musk’s fraud claims on April 24, 2026, but planned to proceed to trial on breach of charitable trust and unjust enrichment claims.[1] | Could affect governance, remedies, and public scrutiny of OpenAI’s structure. |
| Publisher and author copyright cases | Whether OpenAI and Microsoft infringed copyrighted books, news articles, and other content in training or outputs | Several cases are centralized in multidistrict litigation, with discovery disputes continuing in 2026.[7][8] | Could shape licensing, model training evidence, and fair-use defenses. |
| The New York Times and newspaper cases | Use of news content and whether AI products compete with or reproduce publishers’ work | The cases remain active after earlier motion-to-dismiss rulings narrowed but did not eliminate key claims.[6] | Could set practical rules for news content, attribution, and output controls. |
| User safety and mental-health-related claims | Whether ChatGPT design, warnings, or safeguards contributed to harm | Wrongful-death and other product-safety claims are active, and OpenAI has publicly described its litigation approach and product changes.[12][13] | Could affect age gates, parental controls, crisis escalation, and model behavior. |
| Microsoft and antitrust claims | Whether Microsoft and OpenAI arrangements harmed competition or ChatGPT subscribers | Microsoft has asked a court to send subscriber claims to arbitration or dismiss them.[15] | Could affect pricing theories, distribution, and how courts view the OpenAI-Microsoft partnership. |

The Musk case is the near-term headline

The Musk case is the most visible OpenAI lawsuit in April 2026 because it is closest to trial. The dispute centers on whether OpenAI departed from commitments tied to its original nonprofit mission. Musk has argued that OpenAI’s current path conflicts with the public-benefit structure he says he helped fund and support. OpenAI disputes the allegations and has framed the suit as an attempt to damage the organization.

On April 24, 2026, a U.S. judge dismissed Musk’s fraud claims but said the case would continue toward trial on breach of charitable trust and unjust enrichment claims.[1] Earlier reporting had the trial set to begin on April 27, 2026.[3] That timing makes the Musk case the first one many readers should watch if they want near-term courtroom developments rather than long discovery fights.

The remedy fight is as important as the liability fight. In April 2026, Musk amended his position to say any recovered “ill-gotten gains” should go to OpenAI’s charitable nonprofit arm rather than to him personally.[2] He has also sought remedies aimed at OpenAI’s structure and leadership. Those requests are unusual because they go beyond ordinary money damages. They ask the court to influence how OpenAI is organized and controlled.

Readers should separate this lawsuit from everyday questions like whether ChatGPT will disappear. A trial does not mean ChatGPT is shutting down. It means a court will hear evidence about OpenAI’s governance, mission, and restructuring. For the broader company context, see our guide to OpenAI’s CTO and leadership team and our explainer on what OpenAI is.

The case also intersects with the Microsoft relationship because OpenAI’s commercial growth has depended heavily on Microsoft infrastructure and investment. If you are tracking that angle, our OpenAI Microsoft News page follows current partnership updates, while our OpenAI and Microsoft explainer covers the longer-term background of the relationship.

The copyright cases are the structural threat

The copyright cases are slower than the Musk case, but they may be more important for the AI industry. Authors, news publishers, and information companies argue that OpenAI and Microsoft used copyrighted works without permission to train or operate AI products. OpenAI and other AI companies generally argue that model training can be lawful fair use, depending on the facts and the use.

The New York Times sued OpenAI and Microsoft in late 2023, accusing them of using millions of Times articles without permission to train chatbots and build competing AI products.[11] In 2024, a group of regional newspapers also sued OpenAI and Microsoft over alleged misuse of their journalism.[10] The author cases include claims from the Authors Guild and individual writers, and the litigation has produced a long-running discovery fight in the Southern District of New York.[8]

A key procedural event came when multiple OpenAI copyright actions were centralized in multidistrict litigation, known as MDL No. 3143.[7] Centralization does not decide who is right. It helps one court coordinate overlapping discovery and pretrial issues across related cases. That matters because the same factual questions keep appearing: what data was used, how it was processed, whether protected works can be identified in training corpora, and whether outputs reproduce or substitute for the original works.

The cases have already survived in part. An April 2025 Southern District of New York opinion granted some dismissal requests and denied others, leaving important claims alive rather than ending the litigation at the pleading stage.[6] That is why the 2026 fight is largely about evidence. Plaintiffs want records, datasets, output samples, and internal documents. OpenAI wants to limit discovery it views as overbroad, burdensome, or risky for users and business information.

The newest copyright plaintiffs are not limited to book authors and newspapers. In March 2026, Axios reported that Nielsen’s Gracenote sued OpenAI in the Southern District of New York, alleging OpenAI copied and used Gracenote’s entertainment metadata and relational framework for large language models that support products such as ChatGPT.[9] That kind of case widens the copyright debate from books and articles to structured databases and metadata.

The big unresolved question is not just whether a model saw copyrighted material. Courts will also consider whether the use was transformative, whether the use harmed licensing markets, whether outputs substitute for protected works, and whether particular plaintiffs can prove copying of their own protected expression. Those questions will shape future model training, licensing negotiations, and publisher deals. They also explain why copyright litigation may matter more to OpenAI’s cost structure than a single product update. For financial context, read our OpenAI funding history and ChatGPT Stock News coverage.

The New York Times data fight matters for users

The copyright litigation has a user-privacy dimension. OpenAI has publicly objected to data demands in The New York Times case, saying the requested discovery would require it to retain and turn over large volumes of user conversation data. OpenAI said the Times sought a sample of 20 million user conversations in the litigation.[4]

That dispute matters even for users who do not follow copyright law. If courts require AI companies to preserve or produce more conversation data during litigation, it can complicate deletion expectations and privacy promises. OpenAI’s public position is that broad preservation and production demands can conflict with user privacy, data minimization, and ordinary deletion practices.[4] The publishers’ position is that discovery is needed to test whether ChatGPT can reproduce protected works and how OpenAI’s systems behave.

Users should not assume that deleting a chat always means no copy can ever be retained for legal reasons. OpenAI’s own materials note that legal or security obligations can affect retention in some circumstances.[4] That is common in litigation, but it is also why the Times dispute has drawn attention outside copyright circles.

The practical takeaway is simple: do not put sensitive personal, legal, medical, employment, or confidential business information into ChatGPT unless you are comfortable with the service’s data practices and the possibility of legally required retention. For a beginner-friendly explanation of the product itself, see what ChatGPT is. For developers, the same caution applies when sending user data through APIs.

Safety and product-liability cases are growing

The safety cases are different from the copyright cases. They do not ask whether OpenAI copied content. They ask whether ChatGPT was designed, deployed, or warned about in a way that contributed to serious real-world harm. These claims are newer, and courts have not yet supplied a mature rulebook for general-purpose chatbot liability.

In August 2025, the parents of Adam Raine sued OpenAI and Sam Altman after their 16-year-old son died by suicide, alleging ChatGPT played a role in his death.[12] OpenAI has described the death as tragic while disputing legal responsibility and explaining its approach to mental-health-related litigation.[13] The company has also said it continues to improve ChatGPT’s responses in sensitive conversations and to guide users toward real-world support.[13]

On April 10, 2026, TechCrunch reported another suit in which a stalking victim alleged ChatGPT fueled her abuser’s delusions and ignored warnings.[14] That case points to a broader category of claims often described in public debate as “AI psychosis,” dependency, or delusion reinforcement. Those are not settled legal categories. They are factual patterns plaintiffs are trying to fit into product-liability, negligence, failure-to-warn, and consumer-protection theories.

These lawsuits could affect product design faster than copyright cases do. Even before final judgments, litigation can push companies to add crisis routing, parental controls, age-aware experiences, refusal behavior, break reminders, and clearer warnings. OpenAI has already published multiple updates about mental-health-related work, but plaintiffs may argue those changes show earlier safeguards were inadequate. OpenAI may argue the opposite: that it is improving a complex product while users remain responsible for misuse and while no AI system can prevent every harm.

Readers should handle these cases with care. The allegations involve deaths, self-harm, stalking, and mental-health crises. They also involve unresolved legal questions about how much responsibility a general-purpose chatbot provider has when users engage in long, private, emotionally intense conversations. The answer may vary by age, warning signs, product version, account settings, and what the system actually said.

Microsoft and antitrust claims add another layer

OpenAI’s relationship with Microsoft appears in several legal contexts. In copyright suits, Microsoft is often a co-defendant because its Copilot products and cloud partnership are tied to OpenAI technology. In governance disputes, Microsoft’s role is part of the broader story of OpenAI’s commercial evolution. In antitrust claims, plaintiffs focus on whether Microsoft’s arrangements with OpenAI affected competition, output, quality, or prices.

One subscriber antitrust case alleges that the Microsoft-OpenAI relationship harmed ChatGPT Plus subscribers. In April 2026, Microsoft urged a court to send those claims to arbitration or dismiss them, arguing that the alleged injury was too indirect and speculative.[15] That is an early procedural posture, not a final ruling on whether the Microsoft-OpenAI partnership violates antitrust law.

Antitrust cases can be easy to overread. A pending motion to dismiss does not prove the claims are weak, and a complaint that survives one does not prove wrongdoing. The ruling on such a motion only tells readers whether the case moves into more expensive stages such as discovery. For OpenAI, the practical antitrust risk is not only damages. It is whether courts or regulators eventually limit exclusivity, revenue sharing, cloud restrictions, or distribution terms.

This matters for users because partnership structure can affect where models run, how products are bundled, and how quickly features reach enterprise customers. It matters for developers because cloud availability and API distribution can shape reliability and pricing. It matters for investors because any forced change to the Microsoft relationship could change OpenAI’s path to revenue and public-market expectations. Our OpenAI funding round coverage follows that investor angle.

What could change in 2026

The biggest legal developments to watch in 2026 are not all final verdicts. Many will be procedural rulings that change leverage. A discovery ruling can force production of sensitive documents. A class-certification ruling can make a copyright or consumer case much larger. A motion-to-dismiss ruling can narrow claims and reduce settlement value. A preliminary remedy in a governance case can affect company operations before a full appeal.

For users, the most likely visible changes are product changes rather than sudden service interruptions. Safety litigation may push more conservative behavior in mental-health conversations. Copyright litigation may push stronger output filters, citations, licensing deals, or limits on requests for paywalled news and book excerpts. Privacy fights may clarify when deleted chats can be preserved for litigation. Antitrust pressure may affect how OpenAI distributes models through Microsoft and other cloud providers.

For publishers and authors, the key issue is whether courts treat AI training as fair use, infringement, or something that depends heavily on the dataset and market evidence. A broad ruling for OpenAI would strengthen the position of AI labs. A broad ruling for plaintiffs would accelerate licensing markets and could make training frontier models more expensive. A narrow ruling could leave both sides with uncertainty.

For OpenAI watchers, the legal calendar now sits beside the product calendar. Model launches, data deals, governance changes, and safety updates all land in a litigation environment. That is why major product coverage, such as ChatGPT updates 2026, increasingly needs a legal footnote. The lawsuits are not a side story anymore. They are part of how the company will operate.

Frequently asked questions

Is ChatGPT shutting down because of the OpenAI lawsuits?

No public court order as of April 24, 2026, requires ChatGPT to shut down. The lawsuits could affect governance, data practices, licensing, safety features, or business terms, but a lawsuit heading to trial is not the same as a shutdown. For a separate status check, see our page on whether ChatGPT is shutting down.

Which OpenAI lawsuit is most important right now?

The Musk case is the most immediate because trial proceedings were set to begin in late April 2026 after the judge dismissed fraud claims but allowed other claims to continue.[1][3] The copyright cases may be more important long term because they could shape training-data rules and licensing economics.

What is the main issue in the copyright lawsuits?

The central issue is whether OpenAI and Microsoft unlawfully used protected works to train or operate AI systems, or whether those uses are protected by fair use. Plaintiffs also focus on outputs, market harm, and removal of copyright-management information. OpenAI disputes the claims and has defended its position publicly.[5]

Do the lawsuits affect deleted ChatGPT conversations?

They can. OpenAI has objected to discovery demands in The New York Times case and said some legal obligations can affect data retention even when users delete chats.[4] Users should treat sensitive information carefully and read the current data controls before relying on deletion alone.

Are the mental-health lawsuits about copyright?

No. The mental-health and safety cases focus on product design, warnings, safeguards, and alleged real-world harm. They raise different legal theories from the publisher and author lawsuits, and they may lead to different remedies such as age controls, safer crisis responses, or warnings.

Has any court decided that OpenAI training is fair use?

Not in a final, industry-wide way that resolves all OpenAI copyright litigation. Some related AI copyright rulings have discussed fair use, and OpenAI points to favorable reasoning in its public materials.[5] The OpenAI publisher and author cases still require case-specific evidence and rulings.