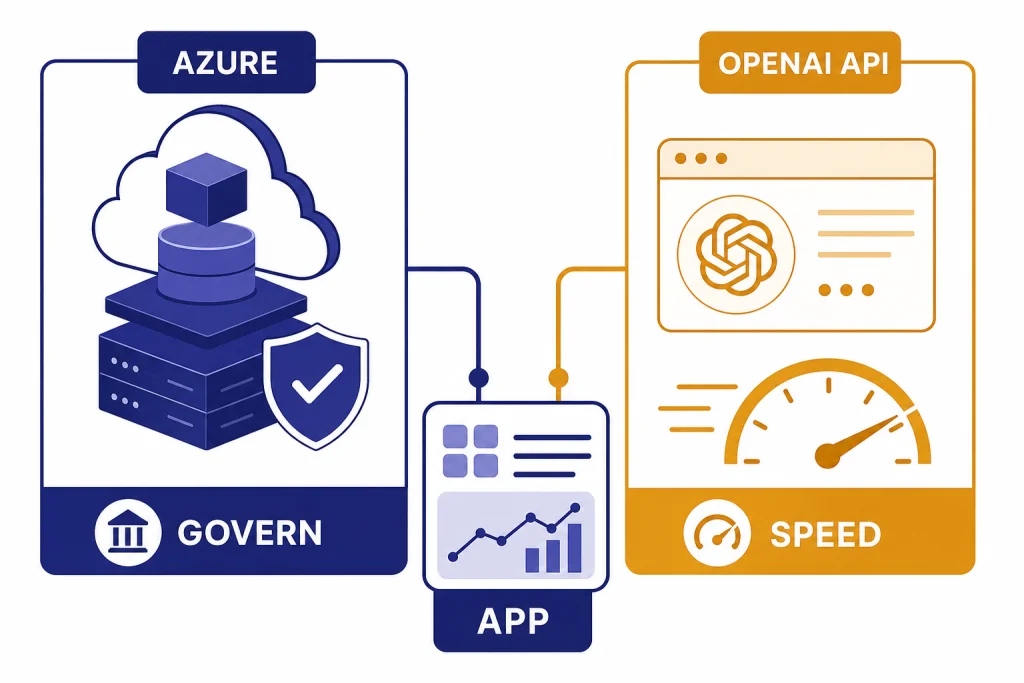

Azure OpenAI Service is usually the better choice for companies already standardized on Microsoft Azure, especially when procurement, networking, regional deployment, identity, and compliance controls matter. The OpenAI API is usually the better choice for teams that want the most direct OpenAI developer experience, faster access to OpenAI platform features, and simpler setup outside the Azure ecosystem. Both can power serious production apps. The right choice depends less on model quality and more on where your engineering, security, finance, and operations teams already work. This guide compares Azure OpenAI Service and the OpenAI API across deployment, pricing, model access, data handling, quotas, and common buyer scenarios.

Quick verdict

Choose Azure OpenAI Service if your organization already runs production workloads in Azure, uses Microsoft Entra ID, needs Azure-native networking and monitoring, or wants AI consumption to flow through existing Azure procurement. Microsoft describes Azure OpenAI models as part of Microsoft Foundry, hosted in Microsoft’s Azure environment rather than through the OpenAI API service.[3]

Choose the OpenAI API if your team wants the most direct OpenAI platform path, a smaller administrative surface, and faster experimentation with OpenAI’s own developer features. OpenAI’s platform documentation says API data is not used to train or improve OpenAI models unless you explicitly opt in, and its API pricing page lists standard rates, Batch, Flex, Priority processing, and data residency options.[9][8]

The short version: Azure OpenAI Service is an enterprise cloud integration decision. The OpenAI API is a product velocity and developer-platform decision. If you want the broader company context behind this split, read our backgrounder on OpenAI and Microsoft.

What each service is

Azure OpenAI Service is Microsoft’s Azure-hosted route to OpenAI models inside Microsoft Foundry (formerly Azure AI Foundry) and related Azure services. Microsoft’s documentation describes Azure OpenAI as providing access to OpenAI models through REST APIs and SDKs for languages such as Python, C#, JavaScript, Java, and Go.[1] It is managed as an Azure resource, so Azure subscriptions, regions, role assignments, quota requests, deployments, monitoring, private networking patterns, and Microsoft billing shape the operational experience.

The OpenAI API is OpenAI’s direct developer platform. You create an OpenAI organization or project, call OpenAI endpoints, manage keys and usage in OpenAI’s platform, and follow OpenAI’s service terms and platform documentation. It is usually simpler for a startup, prototype, independent developer, or multi-cloud product team that does not need Azure-native controls on day one.

The services overlap because both can expose OpenAI model families. They differ because they are operated, governed, billed, and integrated through different clouds. A team evaluating OpenAI API pricing should treat Azure OpenAI as a related but separate purchasing and deployment path, not merely another endpoint name.

Side-by-side comparison

The table below gives the practical difference for most teams. It is intentionally operational. Model benchmarks rarely decide this purchase by themselves.

| Decision area | Azure OpenAI Service | OpenAI API | Best fit |
|---|---|---|---|
| Cloud home | Runs as an Azure resource inside Microsoft’s cloud environment. | Runs through OpenAI’s platform. | Azure for Microsoft-standardized enterprises; OpenAI API for direct platform use. |
| Identity and access | Fits Azure subscriptions, Azure role-based access, and enterprise cloud governance. | Uses OpenAI organization, project, and API controls. | Azure if your security team already governs Azure workloads. |
| Model access | Depends on Azure region, deployment type, and Microsoft availability. | Depends on OpenAI platform availability and account access. | OpenAI API if fastest direct OpenAI feature access matters most. |
| Capacity planning | Uses Azure quota, deployments, regional capacity, and optional provisioned throughput. | Uses OpenAI platform limits and service tiers. | Azure for predictable enterprise capacity planning; OpenAI API for simpler pay-as-you-go starts. |
| Data governance | Microsoft says prompts, completions, embeddings, and training data are not available to OpenAI or other Azure Direct Model providers.[3] | OpenAI says API data is not used for model training unless the customer opts in.[9] | Azure for Azure compliance alignment; OpenAI API for direct OpenAI controls. |
| Billing | Azure billing, pricing calculator, reservations, and enterprise agreements. | OpenAI platform pricing, service tiers, and OpenAI sales options. | Choose the path your finance and procurement teams can approve faster. |

Models and feature access

Model access is the most visible comparison, but it is also the easiest place to oversimplify. Azure OpenAI Service can expose many OpenAI models, but availability varies by region and deployment type. Microsoft’s model page lists Azure-hosted OpenAI families including GPT-5 series, GPT-4.1 series, o-series reasoning models, GPT-4o models, embeddings, image generation, audio, and other model categories.[1] It also shows specific model versions and region availability, which means a model can be generally documented but not available in the exact region or deployment mode your app needs.[1]

The OpenAI API is the source platform for OpenAI’s own model and tool releases. On March 5, 2026, OpenAI announced GPT-5.4 for ChatGPT, the API, and Codex, and described API availability for gpt-5.4 and gpt-5.4-pro.[7] OpenAI also said GPT-5.4 supports up to a 1M-token context in the API and Codex, with standard context behavior and special handling for longer requests.[7] If your team builds directly around new OpenAI platform capabilities, the OpenAI API often provides the cleanest path.

For many production apps, the model list is less important than deployment certainty. If a customer support bot, contract review tool, or internal search assistant only needs a stable model with predictable latency, Azure’s deployment controls may matter more than immediate access to the newest OpenAI feature. If your product differentiates on frontier agent behavior, tool use, or the newest multimodal API feature, the direct OpenAI path may be easier to justify.

If you are comparing agent stacks, also read our OpenAI Agents SDK guide and OpenAI Agent Builder overview. Those articles focus on how OpenAI’s direct tools fit into application architecture.

Pricing and capacity

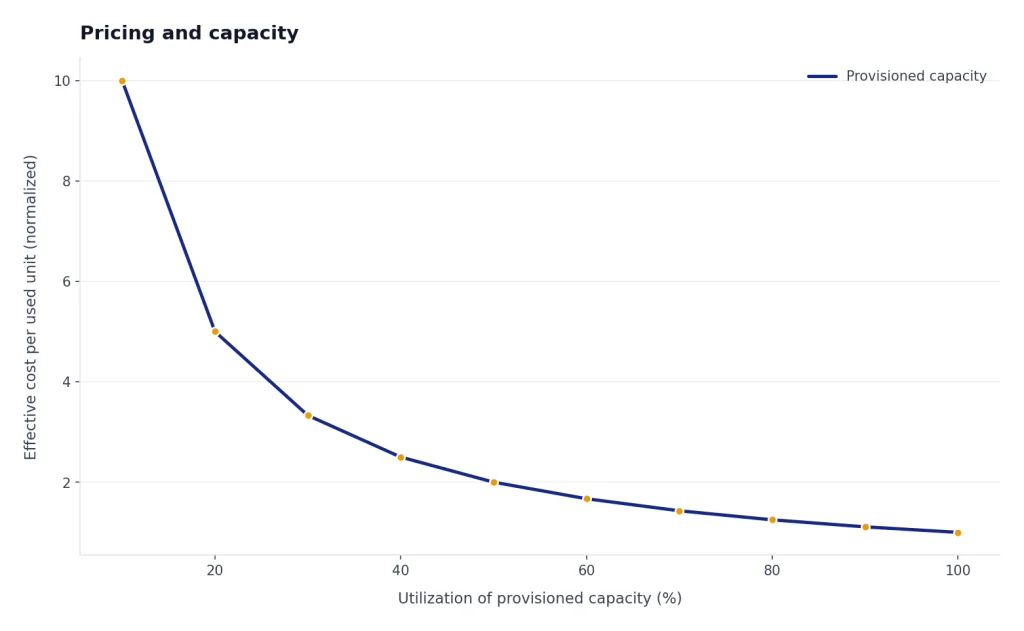

Do not compare only the headline token price. The full cost picture includes token rates, batch discounts, regional processing choices, provisioned capacity, quota approvals, engineering time, monitoring, retries, and procurement friction.

OpenAI’s pricing page listed gpt-5.4 at $2.50 per 1M input tokens, $0.25 per 1M cached input tokens, and $15.00 per 1M output tokens, with standard processing rates applying to context lengths under 270K.[8] OpenAI’s GPT-5.4 announcement gave the same gpt-5.4 rates and listed gpt-5.4-pro at $30 per 1M input tokens and $180 per 1M output tokens.[7] OpenAI also describes Batch as saving 50% on inputs and outputs, while Flex trades lower cost for slower responses and occasional resource unavailability.[8]
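To turn those rates into a per-request estimate, a minimal sketch like the one below can help. It hard-codes the gpt-5.4 rates quoted above, assumes `cached_tokens` is the cached subset of `input_tokens`, treats `input_tokens` as a proxy for context length, and applies the 50% Batch saving to every rate. `TokenRates` and `request_cost` are illustrative names, not part of any OpenAI SDK, and Flex pricing is not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenRates:
    """USD per 1M tokens. Values below are the listed gpt-5.4 rates; re-check before use."""
    input_per_m: float
    cached_input_per_m: float
    output_per_m: float


GPT_5_4 = TokenRates(input_per_m=2.50, cached_input_per_m=0.25, output_per_m=15.00)

# Standard rates are described as applying to contexts under 270K tokens.
STANDARD_CONTEXT_LIMIT = 270_000


def request_cost(rates: TokenRates, input_tokens: int, cached_tokens: int,
                 output_tokens: int, batch: bool = False) -> float:
    """Estimate the USD cost of one request (assumption: cached_tokens <= input_tokens)."""
    if cached_tokens > input_tokens:
        raise ValueError("cached_tokens cannot exceed input_tokens")
    if input_tokens >= STANDARD_CONTEXT_LIMIT:
        # Longer contexts are priced differently; this sketch does not model them.
        raise ValueError("standard rates only cover contexts under 270K tokens")
    uncached = input_tokens - cached_tokens
    cost = (
        uncached * rates.input_per_m
        + cached_tokens * rates.cached_input_per_m
        + output_tokens * rates.output_per_m
    ) / 1_000_000
    # Assumption: Batch halves input and output charges alike.
    return cost * 0.5 if batch else cost
```

For a request with 10,000 input tokens (4,000 of them cached) and 1,000 output tokens, `request_cost(GPT_5_4, 10_000, 4_000, 1_000)` comes to about $0.031, and about $0.0155 with `batch=True`. Multiplying by expected monthly volume gives a first-pass token budget before retries and evaluation overhead are added.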

Azure pricing is structured through Microsoft’s pricing pages, Azure billing, and the Azure pricing calculator. Microsoft’s Azure OpenAI pricing page describes token-based model pricing and a provisioned option where customers allocate throughput for deployments and are charged an hourly rate per model regardless of usage, with potential savings through monthly and annual reservations.[6] Microsoft’s provisioned throughput documentation says the feature lets you specify required throughput, after which Azure allocates model processing capacity and keeps it ready.[4]
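Because provisioned capacity is billed hourly whether or not it is used, the useful question is how much monthly token volume it takes before provisioned capacity beats token-based billing. The sketch below computes that break-even point. The hourly rate, the blended per-1M-token rate, the reservation discount, and the 730-hour month are all placeholder assumptions, not Azure prices; pull real figures from Microsoft’s pricing page or the Azure pricing calculator.

```python
HOURS_PER_MONTH = 730  # common approximation for monthly cloud estimates (assumption)


def monthly_provisioned_cost(hourly_rate: float, hours: float = HOURS_PER_MONTH,
                             reservation_discount: float = 0.0) -> float:
    """Provisioned capacity is charged per hour regardless of how many tokens flow through it."""
    return hourly_rate * hours * (1 - reservation_discount)


def monthly_token_cost(million_tokens: float, blended_rate_per_m: float) -> float:
    """Token-based (pay-as-you-go) cost for a month's volume at a blended input/output rate."""
    return million_tokens * blended_rate_per_m


def break_even_million_tokens(hourly_rate: float, blended_rate_per_m: float,
                              reservation_discount: float = 0.0) -> float:
    """Monthly volume (in millions of tokens) where both billing models cost the same."""
    fixed = monthly_provisioned_cost(hourly_rate, reservation_discount=reservation_discount)
    return fixed / blended_rate_per_m
```

With a hypothetical $10/hour deployment and a hypothetical $5 blended rate per 1M tokens, `break_even_million_tokens(10.0, 5.0)` returns 1460.0, and a 20% reservation discount lowers it to 1168.0. The comparison only holds if the provisioned deployment can actually serve that volume, so check its throughput ceiling as well as its price.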

Capacity behaves differently on Azure. Microsoft’s quota documentation says Azure OpenAI quota is scoped at the Azure subscription level, with tokens-per-minute and requests-per-minute limits defined per region, per subscription, and per model or deployment type.[2] The same page gives an example in which a gpt-4.1 Global Standard model quota of 5 million TPM and 5,000 RPM would apply as a dedicated quota pool in each region where that model or deployment type is available.[2] Microsoft also lists service limits such as 30 Azure OpenAI resources per region per Azure subscription and 32 maximum standard deployments per resource.[2]
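Since each region holds its own quota pool, a rough capacity plan asks how many regions a peak load needs. The sketch below uses the gpt-4.1 Global Standard example figures above and assumes traffic splits evenly across regions, the model is available in enough regions, and `tokens_per_request` already includes the output allowance you reserve per call. `RegionalQuota` and `regions_needed` are hypothetical helpers.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionalQuota:
    tpm: int  # tokens per minute for one model/deployment type in one region
    rpm: int  # requests per minute for the same scope


# Example figures from Microsoft's quota documentation (gpt-4.1 Global Standard).
GPT_4_1_GLOBAL_STANDARD = RegionalQuota(tpm=5_000_000, rpm=5_000)


def regions_needed(quota: RegionalQuota, peak_rpm: int, tokens_per_request: int) -> int:
    """Minimum number of regional quota pools to cover a peak load.

    Both limits apply, so the binding one (tokens or requests) decides.
    """
    peak_tpm = peak_rpm * tokens_per_request
    by_tokens = math.ceil(peak_tpm / quota.tpm)
    by_requests = math.ceil(peak_rpm / quota.rpm)
    return max(1, by_tokens, by_requests)
```

A workload peaking at 3,000 requests per minute with about 4,000 tokens each needs three regions (token-bound), while 12,000 short requests of about 200 tokens also needs three (request-bound). The sketch ignores the other service limits Microsoft lists, such as 30 resources per region per subscription and 32 standard deployments per resource.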

Azure also offers dynamic quota. Microsoft says dynamic quota can let a standard deployment use more quota when extra capacity is available, but it is not predictable and may return HTTP 429 responses when limits are reached.[5] That makes application-level throttling and backoff important. For direct OpenAI troubleshooting patterns, see our OpenAI API Errors reference and our OpenAI Batch API explainer.
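Application-level backoff for HTTP 429 responses can be sketched in a few lines. The version below retries with capped exponential backoff and full jitter, and honors a server-supplied retry delay when one is present. `RateLimitedError` and `call_with_backoff` are hypothetical; official SDKs raise their own exception types (the `openai` Python package, for example, raises `openai.RateLimitError`) and may already retry a few times on their own, so check client settings before layering this on top.

```python
import random
import time


class RateLimitedError(Exception):
    """Hypothetical error that a client wrapper raises on an HTTP 429 response."""

    def __init__(self, retry_after=None):
        super().__init__("HTTP 429: rate limited")
        self.retry_after = retry_after  # seconds, if the response supplied a hint


def call_with_backoff(fn, max_attempts=5, base_delay=1.0, max_delay=30.0, sleep=time.sleep):
    """Call fn(), retrying on RateLimitedError with capped exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitedError as err:
            if attempt == max_attempts - 1:
                raise  # out of retries: surface the 429 to the caller
            if err.retry_after is not None:
                delay = err.retry_after  # prefer the server's hint when present
            else:
                # Full jitter: random delay up to the capped exponential bound.
                delay = random.uniform(0, min(max_delay, base_delay * 2 ** attempt))
            sleep(delay)
```

The `sleep` parameter exists so tests can record delays instead of waiting. Jitter matters when many workers hit the same quota pool, because it keeps them from retrying in lockstep.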

Security, data, and compliance

For many enterprises, security and compliance alignment is the strongest reason to choose Azure OpenAI Service. Microsoft says Azure Direct Models, including Azure OpenAI models, are hosted in Microsoft’s Azure environment and do not interact with services operated by OpenAI, such as ChatGPT or the OpenAI API.[3] Microsoft also states that prompts, completions, embeddings, and training data are not available to other customers, are not available to OpenAI or other Azure Direct Model providers, and are not used to train generative AI foundation models without permission or instruction.[3]

The OpenAI API also has business data protections. OpenAI says API data is not used to train or improve OpenAI models unless the customer explicitly opts in.[9] OpenAI’s business data page says business data is encrypted at rest and in transit, using AES-256 at rest and TLS 1.2 or higher in transit.[10] It also says eligible API customers can use data residency options for sensitive customer content at rest and may select U.S. or Europe processing on supported API endpoints.[10]

The practical question is who your auditors, lawyers, and security reviewers already know how to assess. If your controls map to Azure Policy, Microsoft Defender, Azure Monitor, private networking, Microsoft contracts, and existing cloud compliance evidence, Azure OpenAI will usually shorten review cycles. If your application is already built outside Azure, or if your buyer accepts OpenAI’s enterprise privacy and API terms directly, the OpenAI API can be simpler.

This distinction matters for company-level analysis too. The Azure route reflects the broader strategic partnership covered in our OpenAI and Microsoft breakdown, while OpenAI’s own platform roadmap connects more directly to the company’s independent developer strategy, which our OpenAI history covers in more depth.

Developer experience

The OpenAI API is usually faster to start. A developer can create an account, generate a key, follow OpenAI’s docs, and build a prototype with fewer cloud-administration steps. The product surface is centered on OpenAI’s own models, tools, pricing, projects, data controls, and usage dashboards. That is helpful when a small team wants to ship quickly and does not need to coordinate with cloud platform teams.

Azure OpenAI Service adds setup work, but that work has value in larger organizations. You create Azure resources, choose deployment types, assign quota, configure access, and connect monitoring through Azure tooling. This can feel heavier during prototyping. In production, it can feel safer because the AI workload becomes one more governed Azure service instead of a separate vendor platform with separate access and cost controls.

Endpoint patterns also differ. Azure OpenAI typically uses Azure resource endpoints, deployments, API versions, and Azure-specific SDK configuration. The OpenAI API uses OpenAI’s platform endpoints and model identifiers directly. If your codebase is already abstracted behind a model provider layer, switching is manageable. If your app calls provider-specific features directly, expect migration work.
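The difference in endpoint shape is easiest to see by building the two HTTP requests side by side without sending them. This sketch follows the classic Azure pattern (resource-specific host, deployment name in the path, `api-version` query parameter, `api-key` header) and the direct OpenAI pattern (one host, model in the body, bearer token). The resource name, deployment name, and API version in the usage note are placeholders, and Microsoft has since introduced newer v1-style Azure endpoints, so confirm the current URL format in the Azure docs.

```python
from dataclasses import dataclass


@dataclass
class HttpRequest:
    url: str
    headers: dict
    body: dict


def openai_chat_request(api_key: str, model: str, messages: list) -> HttpRequest:
    # Direct OpenAI API: one platform endpoint; the model is chosen in the request body.
    return HttpRequest(
        url="https://api.openai.com/v1/chat/completions",
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        body={"model": model, "messages": messages},
    )


def azure_chat_request(api_key: str, resource: str, deployment: str,
                       api_version: str, messages: list) -> HttpRequest:
    # Azure OpenAI (classic pattern): the deployment name in the path selects the model,
    # and every call pins an explicit api-version.
    url = (
        f"https://{resource}.openai.azure.com/openai/deployments/"
        f"{deployment}/chat/completions?api-version={api_version}"
    )
    return HttpRequest(
        url=url,
        headers={"api-key": api_key, "Content-Type": "application/json"},
        body={"messages": messages},
    )
```

For example, `azure_chat_request(key, "contoso-ai", "support-bot-prod", "2024-10-21", msgs)` (all hypothetical values) targets `https://contoso-ai.openai.azure.com/openai/deployments/support-bot-prod/chat/completions?api-version=2024-10-21`. A provider layer that hides these differences behind one function is what makes switching "manageable".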

For exploratory prompting and quick model testing, the OpenAI Playground is still a useful companion to the direct API path. For production monitoring, keep the OpenAI Status Page handy if you depend on OpenAI’s platform directly.

Migration and hybrid use

You do not have to choose only one path forever. Many teams start with the OpenAI API for speed, then add Azure OpenAI Service when enterprise controls become the blocking issue. Others start on Azure because procurement requires it, then test direct OpenAI API features in a separate research environment.

A hybrid architecture works best when you keep provider-specific details behind a narrow interface. Store prompts, tool definitions, evaluation sets, and safety policies separately from the client code that calls the model. Log model name, deployment name, latency, input tokens, output tokens, failure mode, and user-visible result quality. That gives you enough evidence to decide whether a migration improves the product rather than merely changing the invoice.
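One way to keep provider details behind a narrow interface is a small protocol plus a wrapper that records the fields listed above on every call. In this sketch, `ModelProvider`, `CallLog`, `logged_complete`, and `EchoProvider` are hypothetical names; a real provider class would wrap an OpenAI or Azure client inside `complete()`, and `quality_score` is left for a later evaluation step to fill in.

```python
import time
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass
class Completion:
    text: str
    input_tokens: int
    output_tokens: int


@dataclass
class CallLog:
    provider: str
    model: str
    deployment: Optional[str]  # Azure deployment name; None for the direct OpenAI API
    latency_s: float
    input_tokens: int
    output_tokens: int
    failure_mode: Optional[str]  # exception class name on failure; None on success
    quality_score: Optional[float] = None  # filled in later by your evaluation step


class ModelProvider(Protocol):
    name: str
    model: str
    deployment: Optional[str]

    def complete(self, prompt: str) -> Completion: ...


def logged_complete(provider: ModelProvider, prompt: str, logs: list) -> Optional[Completion]:
    """Call the provider, append a CallLog entry, and return None on failure."""
    start = time.perf_counter()
    try:
        result, failure = provider.complete(prompt), None
    except Exception as err:  # record the failure mode instead of crashing the caller
        result, failure = None, type(err).__name__
    logs.append(CallLog(
        provider=provider.name,
        model=provider.model,
        deployment=provider.deployment,
        latency_s=time.perf_counter() - start,
        input_tokens=result.input_tokens if result else 0,
        output_tokens=result.output_tokens if result else 0,
        failure_mode=failure,
    ))
    return result


class EchoProvider:
    """Stand-in provider for tests; counts whitespace-separated words as 'tokens'."""

    def __init__(self, name: str, model: str, deployment: Optional[str] = None):
        self.name, self.model, self.deployment = name, model, deployment

    def complete(self, prompt: str) -> Completion:
        return Completion(text=prompt.upper(), input_tokens=len(prompt.split()), output_tokens=1)
```

Because every call produces the same `CallLog` shape regardless of provider, the logs from an OpenAI-backed run and an Azure-backed run can be compared directly when deciding whether a migration is worth it.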

The hardest migrations involve features that are not exact matches: tool calling behavior, image and audio inputs, retrieval patterns, response schemas, long-context behavior, batch jobs, and agentic workflows. If you use advanced OpenAI platform features, confirm Azure parity before committing. If you use Azure-specific controls such as private networking or provisioned throughput, confirm the direct OpenAI API can satisfy your risk and capacity requirements before moving away.

Decision framework

Use this checklist when the decision is stuck between engineering preference and enterprise requirements.

- Choose Azure OpenAI Service when the workload is enterprise production, the company already runs on Azure, private networking is important, cloud spend must go through Microsoft, or security review depends on Azure governance.

- Choose the OpenAI API when speed, direct OpenAI feature access, simpler setup, or cross-cloud portability matters more than Azure integration.

- Use both when research teams need direct OpenAI experiments while production teams need Azure-governed deployments.

- Delay the decision when you have not measured task quality, latency, token usage, and failure rates on your own prompts. Vendor choice cannot replace evaluation.

For a regulated enterprise, Azure OpenAI Service is often the default starting point. For a software company building an AI-native product, the OpenAI API is often the default starting point. For everyone else, run the same evaluation set through both, calculate total cost per successful task, and ask which platform your organization can operate reliably for the next year.
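Total cost per successful task is simple arithmetic, but it often reverses a per-token comparison. The sketch below divides all spend on an evaluation run (including failed attempts and retries) by the number of tasks that passed your own success criteria. `EvalResult` and `cost_per_successful_task` are illustrative names, and the figures in the usage note are made up.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class EvalResult:
    cost_usd: float  # all spend for this task attempt, including retries
    succeeded: bool  # judged by your own evaluation criteria


def cost_per_successful_task(results: Iterable[EvalResult]) -> float:
    """Total spend divided by successful tasks; infinite if nothing succeeded."""
    results = list(results)
    successes = sum(r.succeeded for r in results)
    if successes == 0:
        return float("inf")
    return sum(r.cost_usd for r in results) / successes
```

With hypothetical numbers, a setup that costs $0.03 per task and passes 4 of 4 comes out at $0.03 per success, while one that costs $0.02 per task but passes only 2 of 4 comes out at $0.04. Running the same evaluation set through both platforms and comparing this figure keeps the decision anchored to outcomes rather than list prices.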

Frequently asked questions

Is Azure OpenAI Service the same as the OpenAI API?

No. They can expose overlapping OpenAI model families, but they are different services. Azure OpenAI Service is operated through Microsoft Azure and governed through Azure resources, regions, quota, and billing. The OpenAI API is OpenAI’s direct developer platform.

Does Azure OpenAI send my prompts to OpenAI?

Microsoft says Azure Direct Models, including Azure OpenAI models, are hosted in Microsoft’s Azure environment and do not interact with services operated by OpenAI, such as ChatGPT or the OpenAI API.[3] Microsoft also says prompts, completions, embeddings, and training data are not available to OpenAI or other Azure Direct Model providers.[3]

Is the OpenAI API data used for training?

OpenAI says data sent to the OpenAI API is not used to train or improve OpenAI models unless the customer explicitly opts in.[9] OpenAI also says API abuse monitoring logs are retained for up to 30 days by default unless longer retention is required by law or needed to protect services or third parties.[9]

Which option is cheaper?

It depends on workload shape and procurement. OpenAI publishes direct token pricing and service tiers, including Batch discounts and Flex processing.[8] Azure pricing can include token-based usage, provisioned throughput, Azure reservations, and enterprise agreements, so the better comparison is total cost per successful task.

Which option gets new OpenAI models first?

The direct OpenAI API is usually the cleanest path to OpenAI’s own newest platform releases. Azure OpenAI model access depends on Microsoft availability, region, and deployment type. Always check the model and region tables before promising a specific model in an Azure production plan.

Can I switch from OpenAI API to Azure OpenAI later?

Yes, but the effort depends on how much provider-specific functionality you used. Basic text calls are easier to port than agent workflows, tool integrations, batch jobs, long-context features, or multimodal pipelines. Build a provider abstraction early if you think a future migration is likely.