OpenAI news today centers on GPT-5.5, which launched in ChatGPT and Codex on April 23, 2026, then became available in the API on April 24, 2026. The week also brought ChatGPT Images 2.0, ChatGPT for Clinicians in the United States, OpenAI Privacy Filter, and a new GPT-5.5 Bio Bug Bounty. The practical takeaway is clear. OpenAI is moving from chat responses toward agentic work across coding, research, healthcare, image generation, and privacy infrastructure. This briefing separates confirmed product changes from speculation, explains who each update affects, and flags what developers, ChatGPT users, and enterprise teams should watch next.

Top OpenAI news today

The biggest confirmed OpenAI update today is API availability for GPT-5.5 and GPT-5.5 Pro. OpenAI announced GPT-5.5 on April 23, 2026, and added an April 24 update to the launch post stating that GPT-5.5 and GPT-5.5 Pro are now available in the API.[1] That matters because the release is no longer only a ChatGPT and Codex rollout. Developers can now begin testing the new model in production-style workflows, subject to their own safety, cost, and latency checks.

The week’s news is unusually dense. OpenAI also introduced ChatGPT Images 2.0 on April 21, 2026, launched ChatGPT for Clinicians on April 22, 2026, released OpenAI Privacy Filter on April 22, 2026, and opened a GPT-5.5 Bio Bug Bounty on April 23, 2026.[6][7][9][5] If you track longer arcs, compare this briefing with “OpenAI news this week” and the running “ChatGPT updates 2026” changelog.

| Story | Confirmed status on April 24, 2026 | Who should care first | Why it matters |
|---|---|---|---|
| GPT-5.5 API access | Available in the API after the April 24 update.[1] | Developers and enterprise AI teams | Teams can test the model outside ChatGPT and Codex. |
| GPT-5.5 in ChatGPT and Codex | Rolling out to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex.[1] | Paid ChatGPT users and coding teams | OpenAI is positioning it for multi-step work, tool use, and coding. |
| GPT-5.5 Pro | Rolling out to Pro, Business, and Enterprise users in ChatGPT.[1] | Power users and high-accuracy workflows | OpenAI describes it as the option for harder questions and higher-accuracy work. |
| ChatGPT for Clinicians | Free for verified U.S. physicians, nurse practitioners, physician assistants, and pharmacists.[8] | Healthcare professionals | It gives clinicians a separate workspace for evidence review, documentation, and medical research. |
| ChatGPT Images 2.0 | Introduced in ChatGPT on April 21, 2026.[6] | Creators, marketers, designers, and product teams | It expands OpenAI’s image generation stack. |
| OpenAI Privacy Filter | Released as an open-weight model for detecting and redacting PII in text.[9] | Developers handling sensitive text | It gives teams a local-first option for masking personal data. |

GPT-5.5 is the main story

GPT-5.5 is OpenAI’s headline release of the week. OpenAI describes the model as built for complex work such as writing and debugging code, researching online, analyzing data, creating documents and spreadsheets, operating software, and moving across tools until a task is finished.[1] That wording matters. OpenAI is not presenting GPT-5.5 as a simple chatbot quality bump. It is pitching the model as a more capable worker inside tool-rich environments.

For ChatGPT users, the important split is between GPT-5.5 Thinking and GPT-5.5 Pro. GPT-5.5 Thinking is available to Plus, Pro, Business, and Enterprise users, while GPT-5.5 Pro is available to Pro, Business, and Enterprise users.[1] For context on how this fits into the broader model family, see “All GPT models compared side by side” and “Context window sizes for every GPT model.”

For developers, the cost is now concrete. OpenAI’s API pricing page lists GPT-5.5 at $5.00 per 1 million input tokens, $0.50 per 1 million cached input tokens, and $30.00 per 1 million output tokens.[2] The same pricing page lists GPT-5.4 at $2.50 per 1 million input tokens, $0.25 per 1 million cached input tokens, and $15.00 per 1 million output tokens.[2] That means GPT-5.5 is priced at double GPT-5.4’s standard input and output token rates, before workload-specific token savings are considered.
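The comparison above can be turned into a per-request estimate. The following is a minimal sketch, assuming the rates quoted in this section; the dictionary keys are plain labels for this example and are not confirmed API model identifiers, and the token counts are invented for illustration.

```python
# Per-million-token rates in USD, copied from the figures quoted above.
# The keys are labels for this sketch, not confirmed API model identifiers.
RATES = {
    "gpt-5.5": {"input": 5.00, "cached_input": 0.50, "output": 30.00},
    "gpt-5.4": {"input": 2.50, "cached_input": 0.25, "output": 15.00},
}


def request_cost(model, input_tokens, output_tokens, cached_tokens=0):
    """Estimate the USD cost of one request.

    cached_tokens is the portion of input_tokens billed at the cached rate.
    """
    if cached_tokens > input_tokens:
        raise ValueError("cached_tokens cannot exceed input_tokens")
    rates = RATES[model]
    fresh_tokens = input_tokens - cached_tokens
    total = (
        fresh_tokens * rates["input"]
        + cached_tokens * rates["cached_input"]
        + output_tokens * rates["output"]
    )
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical request: 20K input tokens (half cached), 2K output tokens.
    for model in RATES:
        cost = request_cost(model, 20_000, 2_000, cached_tokens=10_000)
        print(f"{model}: ${cost:.4f}")
```

Because every listed rate doubles, the same request costs exactly twice as much on GPT-5.5 unless the newer model uses fewer tokens to finish the job.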

OpenAI’s launch post says GPT-5.5 is available in Codex with a 400K context window, and that API developers get a 1M context window.[1] Those are large numbers, but they do not remove the need for prompt discipline. Larger context can improve long-document and long-codebase work, but it can also increase cost if teams push full transcripts, logs, or repositories into every request. If your main concern is API cost, keep OpenAI API pricing close while you test.
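To see how quickly input spend grows with prompt size, here is a rough sketch using the GPT-5.5 API input rate quoted earlier in this briefing. It assumes uncached input billed per token through the API; the prompt sizes and the request count are illustrative, not measured workloads.

```python
# USD per 1 million input tokens for GPT-5.5, as quoted earlier in this briefing.
INPUT_RATE_PER_MILLION = 5.00


def input_cost(tokens_per_request, requests):
    """USD spent on uncached input tokens across a batch of requests."""
    return tokens_per_request * requests * INPUT_RATE_PER_MILLION / 1_000_000


if __name__ == "__main__":
    # Illustrative prompt sizes: a trimmed prompt versus very large contexts.
    for label, tokens in [
        ("20K-token prompt", 20_000),
        ("400K-token prompt", 400_000),
        ("1M-token prompt", 1_000_000),
    ]:
        print(f"{label}: ${input_cost(tokens, requests=100):,.2f} per 100 requests")
```

Under these assumptions, filling a 1M-token window on every call costs 50 times as much in input tokens as a 20K-token prompt, which is the case for trimming context before scaling up.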

OpenAI also says GPT-5.5 in Codex can use Fast mode, generating tokens 1.5x faster for 2.5x the cost.[1] That is a specialized trade-off. It may fit interactive coding sessions where response time affects developer flow. It is harder to justify for background jobs, nightly analysis, or batch document processing.
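One way to reason about that trade-off is to ask what an hour of waiting would have to be worth for Fast mode to pay for itself. The sketch below uses only the 1.5x and 2.5x figures from the launch post. It assumes the whole wait is token generation and that the 2.5x multiplier applies to the entire cost of the task; the baseline time and cost are hypothetical.

```python
SPEEDUP = 1.5          # tokens generated 1.5x faster, per the launch post
COST_MULTIPLIER = 2.5  # at 2.5x the cost, per the launch post


def fast_mode_tradeoff(baseline_seconds, baseline_cost):
    """Return (seconds_saved, extra_cost) for one task run in Fast mode."""
    fast_seconds = baseline_seconds / SPEEDUP
    fast_cost = baseline_cost * COST_MULTIPLIER
    return baseline_seconds - fast_seconds, fast_cost - baseline_cost


def break_even_hourly_value(baseline_seconds, baseline_cost):
    """Hourly value of waiting time at which Fast mode pays for itself."""
    seconds_saved, extra_cost = fast_mode_tradeoff(baseline_seconds, baseline_cost)
    return extra_cost / (seconds_saved / 3600)


if __name__ == "__main__":
    # Hypothetical task: 60 seconds and $0.10 in standard mode.
    value = break_even_hourly_value(60, 0.10)
    print(f"Break-even value of waiting time: ${value:.2f} per hour")
```

For that hypothetical task, Fast mode saves 20 seconds for an extra $0.15, so it breaks even when the waiting time is worth about $27 per hour. An unattended batch job has no one waiting, which is why the premium is harder to justify there.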

The prudent path is not to switch every workflow on day one. Start with tasks where GPT-5.5’s claimed strengths match the failure mode you already see: code repair that spans many files, research that needs tool use, spreadsheet-like analysis, or agentic workflows that require checking intermediate work. Keep simpler prompts on lower-cost models until your logs show a clear gain.

ChatGPT for Clinicians expands OpenAI’s healthcare push

ChatGPT for Clinicians is one of the week’s most consequential product launches because it moves ChatGPT into a regulated, high-stakes professional setting. OpenAI says the product is free for verified clinicians in the United States, including physicians, nurse practitioners, physician assistants, and pharmacists.[8] It is designed for individual clinicians rather than full organization-wide deployment.[8]

The feature set is specific. OpenAI’s help article says ChatGPT for Clinicians includes trusted clinical search with citations, deep research across medical literature, pre-built skills, clinician-specific starter prompts, documentation support, CME support, custom GPTs, projects, connected apps, memory, and canvas.[8] Image generation is not supported in ChatGPT for Clinicians.[8]

The privacy boundary is also specific. OpenAI says content shared with ChatGPT for Clinicians is not used to train OpenAI’s models.[8] OpenAI also tells clinicians not to share protected health information in ChatGPT for Clinicians unless a Business Associate Agreement is in place and the user is authorized to sign one for the account.[8] That distinction is important. A clinical interface is not the same thing as blanket permission to paste patient-identifying data into a consumer-style workspace.

OpenAI’s launch post says physician advisors tested 6,924 conversations before release and rated 99.6% of responses as safe and accurate overall.[7] Those figures are useful, but they should not be read as a guarantee for every clinical situation. OpenAI’s own help article says the product is intended to support, not replace, professional judgment.[8]

This launch also matters for non-healthcare readers. It shows how OpenAI is packaging ChatGPT by profession, not only by model. The same pattern could appear in legal, finance, education, and engineering products. For company-level context, read “What is OpenAI? Company mission explained” and “OpenAI’s CTO and leadership team.”

Images 2.0 and Privacy Filter show a broader platform shift

GPT-5.5 is the headline, but it is not the only signal. ChatGPT Images 2.0 arrived on April 21, 2026.[6] OpenAI’s release notes say ChatGPT Images 2.0 is available on all ChatGPT plans, while images with thinking are available on all paid ChatGPT plans when users select a Thinking or Pro model.[3] That pairing links image generation with reasoning models rather than treating image creation as a separate toy feature.

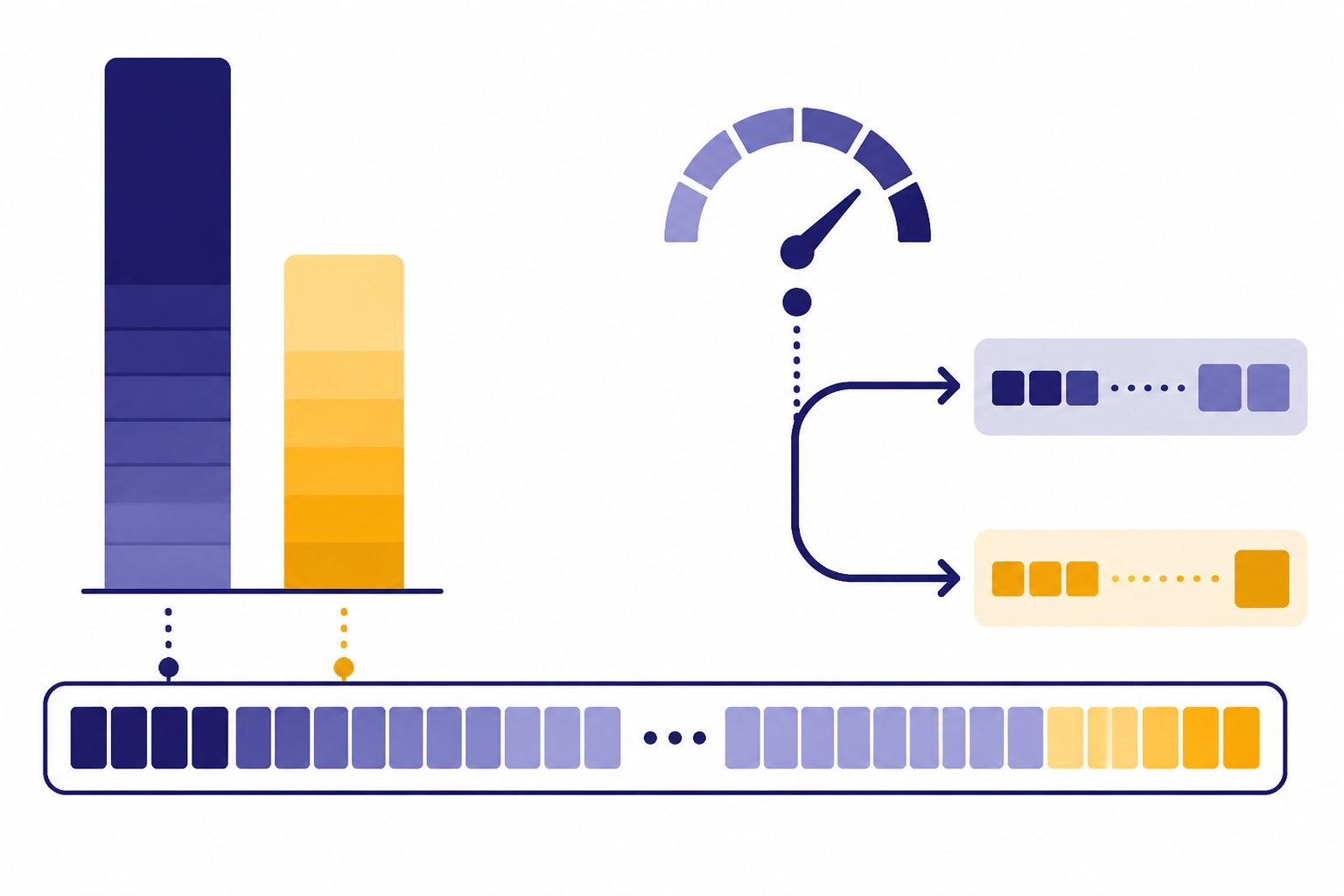

The Images 2.0 system card says the model adds stronger world knowledge, instruction following, and the ability to generate detail and complexity such as dense text.[10] It also says thinking mode can add reasoning and tool use to the image generation process, including live web search data and multiple images from a single prompt.[10] That makes the feature more relevant for research-backed visuals, product mockups, and structured creative work.

OpenAI Privacy Filter points in a different direction. OpenAI released it on April 22, 2026, as an open-weight model for detecting and redacting personally identifiable information in text.[9] OpenAI says it can run locally, allowing PII to be masked or redacted without leaving a user’s machine.[9] This is a developer infrastructure release, not a consumer feature. It fits teams that need to process logs, support tickets, transcripts, forms, and internal documents before sending content to a larger model.
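The mask-before-sending step can be illustrated with a deliberately simple stand-in. The sketch below does not call OpenAI Privacy Filter, whose interface is not described in this briefing. It uses two hand-written regular expressions, and the `redact` function and pattern names are hypothetical. It shows only where redaction sits in a pipeline, not how a model-based detector works.

```python
import re

# Illustration-only patterns: a regex stand-in, not OpenAI Privacy Filter.
# They will miss many real-world formats that a trained detector could catch.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text):
    """Replace each pattern match with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    ticket = "Customer jane.doe@example.com called from 555-010-1234 about a refund."
    # Redact locally first; only the masked text would be sent to a larger model.
    print(redact(ticket))
```

In a real deployment the regular expressions would be replaced by the locally run detector, while the surrounding flow (redact first, send second) stays the same.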

Taken together, Images 2.0 and Privacy Filter show OpenAI building both ends of the workflow. One end helps users create richer outputs. The other helps developers reduce privacy risk before inputs reach a model. That is the platform story behind the product headlines.

Safety and access are now part of the product story

OpenAI no longer treats safety notes as a footnote after a model launch. The GPT-5.5 system card says OpenAI evaluated the model across its safety and preparedness frameworks, including targeted red-teaming for advanced cybersecurity and biology capabilities, and collected feedback from nearly 200 early-access partners before release.[4] The launch post repeats that GPT-5.5 ships with additional safeguards and says API deployment required different safeguards for serving at scale.[1]

The GPT-5.5 Bio Bug Bounty makes that concrete. OpenAI opened applications on April 23, 2026, and says they close on June 22, 2026.[5] Testing begins on April 28, 2026, and ends on July 27, 2026.[5] The bounty is focused on one universal jailbreak that can answer five bio safety questions from a clean chat without prompting moderation.[5] The top reward is $25,000 for the first true universal jailbreak that clears all five questions.[5]

For users, the practical lesson is simple. Availability can differ by plan, product surface, workspace settings, and risk category. A model may appear in ChatGPT before it appears in the API. A feature may be available to paid users but not free users. A workspace may disable or delay access. That is why rumor-based screenshots often age badly.

This also affects enterprise procurement. Security teams should ask whether a feature is available in their exact workspace, whether data is used for training, what admin controls exist, and whether the intended use requires a special agreement. For legal and governance background, track “OpenAI lawsuits 2026,” “OpenAI Microsoft news,” and “OpenAI funding round.”

How to read OpenAI news without getting misled

OpenAI news moves quickly, and not every viral post deserves the same weight. Use a source ladder. Start with OpenAI’s blog, Help Center, pricing page, system cards, and developer documentation. Then use reputable news reporting for business context, competitive context, lawsuits, and investor activity. Treat screenshots, social posts, and anonymous claims as leads, not facts.

Model names deserve extra caution. A model name in a dropdown is not the same thing as a general release. A model name in a benchmark table is not the same thing as API availability. A model name in a leaked prompt is not the same thing as pricing, limits, or long-term support. For example, GPT-5.5 was announced on April 23, 2026, but OpenAI’s launch post added a separate April 24 update saying API availability had begun.[1] That one-day distinction matters for developers.

Pricing deserves the same discipline. Use OpenAI’s pricing page for live API rates. Use your own logs for total cost. A higher token price can still be worth it if the model uses fewer tokens, completes tasks with fewer retries, or reduces human review time. The reverse is also true. A better benchmark score does not automatically lower your bill.
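The point about retries and review time can be made concrete with a small expected-cost calculation. This is a sketch under stated assumptions: attempts are independent, failed attempts are retried until one succeeds, and every attempt incurs the same human review cost. The success rates and the review cost are invented for illustration and are not measured results for any model.

```python
def cost_per_completed_task(cost_per_attempt, success_rate, review_cost=0.0):
    """Expected USD per completed task when failed attempts are retried.

    With independent attempts, the expected number of attempts is
    1 / success_rate. review_cost is a per-attempt human checking cost.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return (cost_per_attempt + review_cost) / success_rate


if __name__ == "__main__":
    review = 0.10  # hypothetical human review cost per attempt, in USD

    # Scenario A: the pricier model succeeds far more often.
    cheaper = cost_per_completed_task(0.0575, success_rate=0.40, review_cost=review)
    pricier = cost_per_completed_task(0.1150, success_rate=0.90, review_cost=review)
    print(f"A: cheaper model ${cheaper:.3f}, pricier model ${pricier:.3f}")

    # Scenario B: the gap in success rates is small.
    cheaper = cost_per_completed_task(0.0575, success_rate=0.80, review_cost=review)
    print(f"B: cheaper model ${cheaper:.3f}, pricier model ${pricier:.3f}")
```

In scenario A the model with double the token price is cheaper per finished task; in scenario B it is not. Only a team's own logs can say which scenario applies.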

Consumer rumors need a different filter. If you see a claim that ChatGPT is disappearing, becoming paid-only, or losing a popular model, look for a Help Center notice or an official release note before reacting. Our separate explainer, “Is ChatGPT shutting down? The real answer,” covers that pattern in more detail.

What to watch next

The next watch item is GPT-5.5 adoption. Developers will test whether the new model justifies its API price in real workloads. Coding teams will compare GPT-5.5 in Codex against GPT-5.4, GPT-5.4 mini, and rival coding agents. Enterprise buyers will ask whether higher per-token costs translate into fewer failed tasks, fewer escalations, and faster project completion.

The second watch item is healthcare. ChatGPT for Clinicians is free at launch for verified U.S. clinicians, but healthcare institutions often need centralized controls, organization-wide deployment, and compliance agreements.[8] If clinician adoption is strong, expect more attention on the line between individual professional use and managed healthcare deployments.

The third watch item is the product cadence. OpenAI has stacked model releases, image upgrades, privacy tooling, and professional workspaces into a short window. That pace makes it harder for teams to rely on static model comparisons. Bookmark the official release notes, and use our GPT-5 launch coverage as a baseline for how the current generation has evolved.

The fourth watch item is access policy. The GPT-5.5 Bio Bug Bounty runs beyond today’s release window, with testing scheduled from April 28, 2026, through July 27, 2026.[5] Findings from safety testing may influence future model controls, API gating, or documentation. That does not mean access will be pulled. It means frontier model launches now come with a longer post-release safety process.

Frequently asked questions

What is the biggest OpenAI news today?

The biggest OpenAI news today is that GPT-5.5 and GPT-5.5 Pro are now available in the API after launching in ChatGPT and Codex on April 23, 2026.[1] This turns GPT-5.5 from a user-facing release into a developer-facing release.

Is GPT-5.5 available to all ChatGPT users?

No. OpenAI says GPT-5.5 is rolling out to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex, while GPT-5.5 Pro is rolling out to Pro, Business, and Enterprise users in ChatGPT.[1] Availability can also depend on workspace settings.

How much does GPT-5.5 cost in the API?

OpenAI lists GPT-5.5 at $5.00 per 1 million input tokens, $0.50 per 1 million cached input tokens, and $30.00 per 1 million output tokens.[2] Teams should compare that with their own usage logs before changing default models.

What is ChatGPT for Clinicians?

ChatGPT for Clinicians is a separate ChatGPT workspace for verified clinicians in the United States. OpenAI says it supports evidence review, documentation, medical research, trusted clinical search, citations, deep research, and CME support.[8] It is meant to support professional judgment, not replace it.

What changed with ChatGPT Images 2.0?

OpenAI introduced ChatGPT Images 2.0 on April 21, 2026.[6] OpenAI’s release notes say the new image model is available on all ChatGPT plans, while images with thinking are available on paid plans when users select a Thinking or Pro model.[3]

Is every OpenAI rumor worth covering?

No. The best first check is an official OpenAI source such as the blog, Help Center, pricing page, system card, or developer documentation. Third-party reports can add useful context, but screenshots and social posts should not be treated as confirmed product facts.