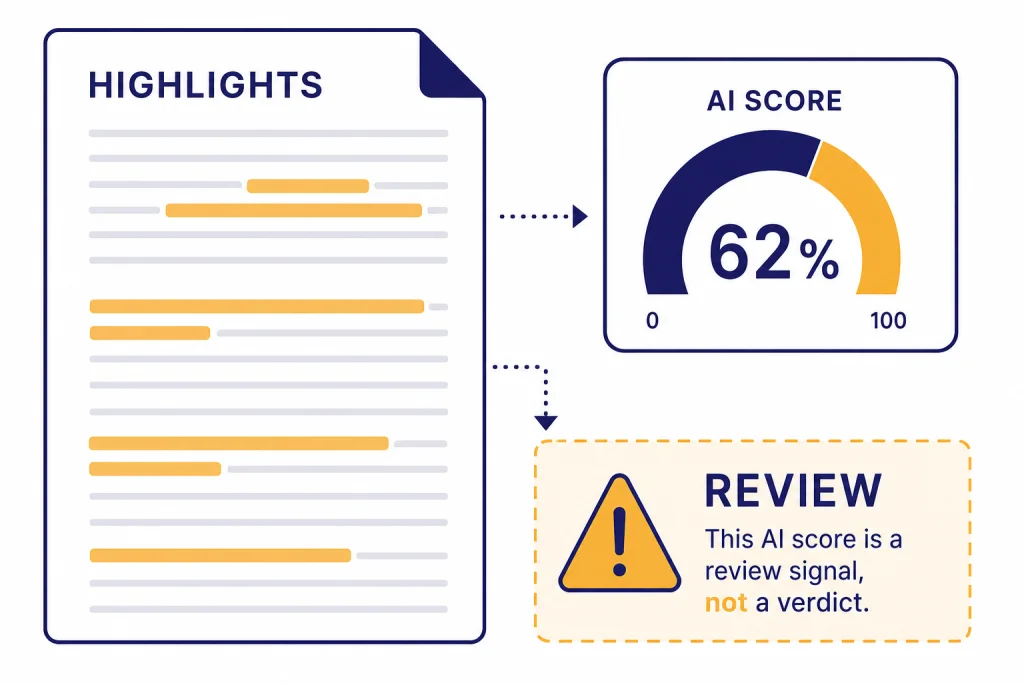

Turnitin’s AI detector is one of the most important AI-writing checks in education, but it should not be treated as a verdict. In this Turnitin AI detector review, the short answer is that Turnitin is useful as a screening signal for long-form academic prose, especially when the score is high and the surrounding evidence supports it. It is not accurate enough to stand alone in an academic misconduct case. Turnitin’s own guidance says the model may misidentify human-written, AI-generated, and AI-paraphrased text, and that instructors should not use it as the sole basis for adverse action.[1] The best use is cautious: review the report, compare it with drafts and process evidence, then talk with the student before making a decision.

Quick verdict

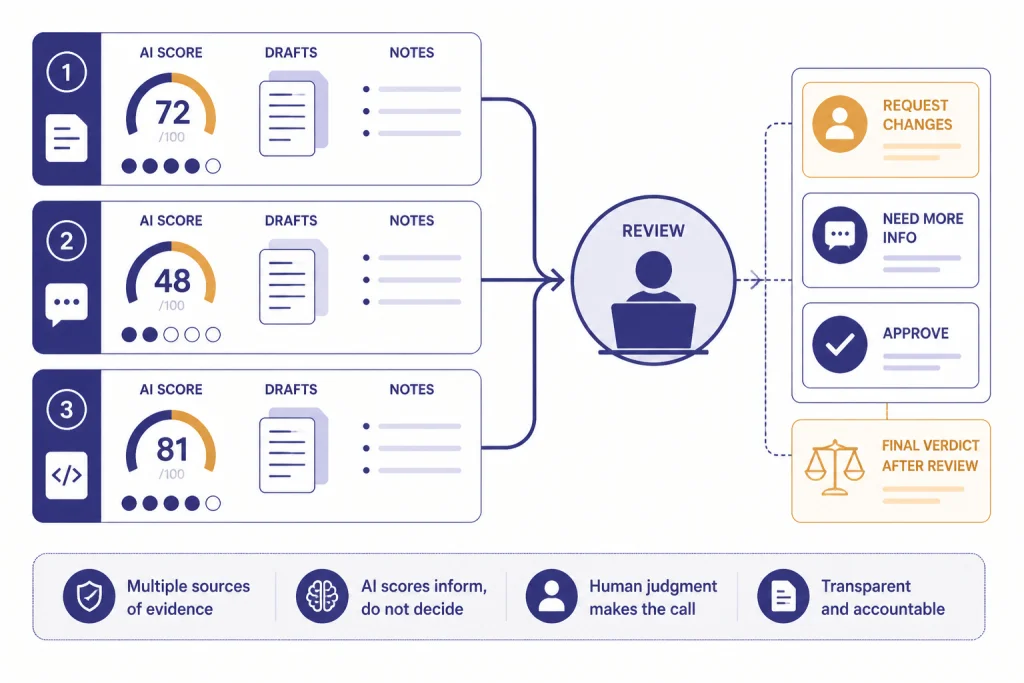

Turnitin’s AI detector is strongest when it is used as part of a broader academic integrity workflow. It is weak when schools treat the score as a direct accusation. The product is integrated into Turnitin’s Similarity Report, so it fits institutions that already use Turnitin for plagiarism review. That integration is the main advantage over standalone AI detectors.

The problem is not that Turnitin is useless. It is that AI detection is probabilistic. A score can look precise without proving intent, authorship, or misconduct. Turnitin’s documentation states that the AI writing score is separate from the similarity score and represents qualifying prose that the model determines could be AI-generated, AI-paraphrased, or modified by bypasser tools.[1] That is a useful lead for a teacher. It is not a complete case.

Our verdict: Turnitin is one of the better institutional AI detectors, but schools should use it only with a written policy, human review, and a clear appeal process. If you are comparing broader classroom options, start with our guide to Best AI Detectors for Teachers and Schools and our separate roundup of AI and traditional plagiarism checkers.

What Turnitin’s AI detector checks

Turnitin’s AI detector reviews submitted text for patterns that its model associates with generative AI writing. In the current AI Writing Report, Turnitin describes its detector as looking for text that might have been prepared by large language models, chatbots, word spinners, and bypasser tools.[1] The report appears within the Turnitin workflow, but it is not the same as plagiarism detection.

This distinction matters. A plagiarism report points to matching sources. An AI report does not point to the source that supposedly generated the passage. It highlights text that the model predicts is likely AI-related. That makes it less evidentiary than a source-match report and more dependent on instructor judgment.

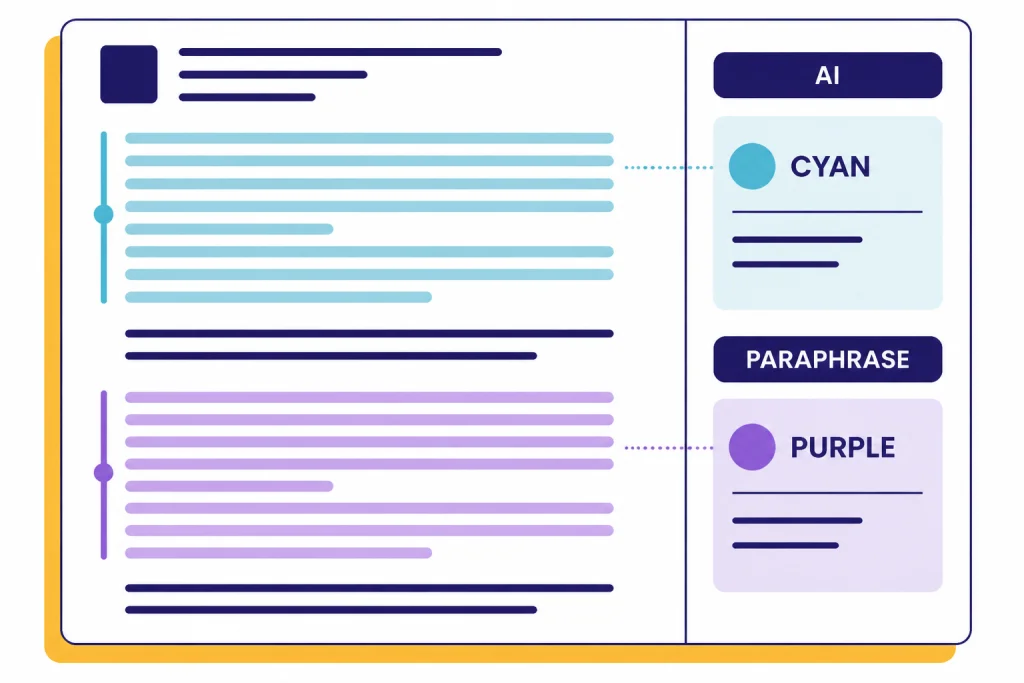

The report separates likely AI-generated text from likely AI-paraphrased text. Turnitin’s guide says likely AI-generated text, including text likely modified by a bypasser, is highlighted in cyan, while likely AI-generated text that was also likely AI-paraphrased is highlighted in purple.[1] This is helpful because a student may use an AI system to draft text, then run it through a paraphraser or rewriting tool. It also creates a new risk: instructors may read color-coded highlights as certainty when they are still model predictions.

Turnitin’s release notes show that the detector has changed repeatedly since launch. The company added AI paraphrasing detection in December 2023, changed low-score handling in July 2024, added Japanese AI writing detection in April 2025, added bypasser-tool detection in August 2025, and updated the AI writing model again on February 12, 2026.[3] This history is important because a report generated before a model update may not match a report generated after resubmission.

How accurate Turnitin says it is

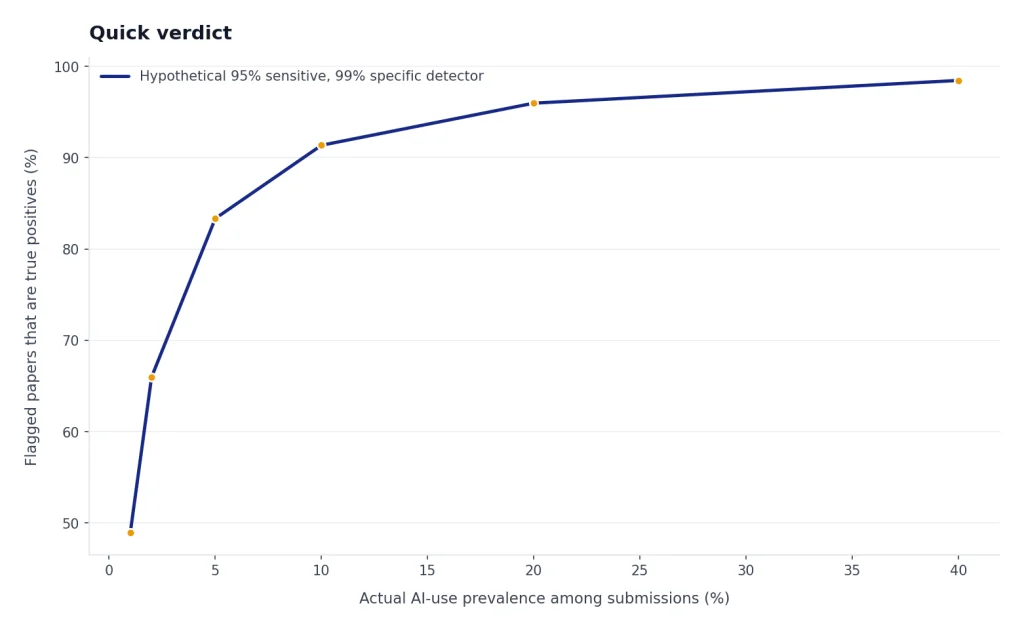

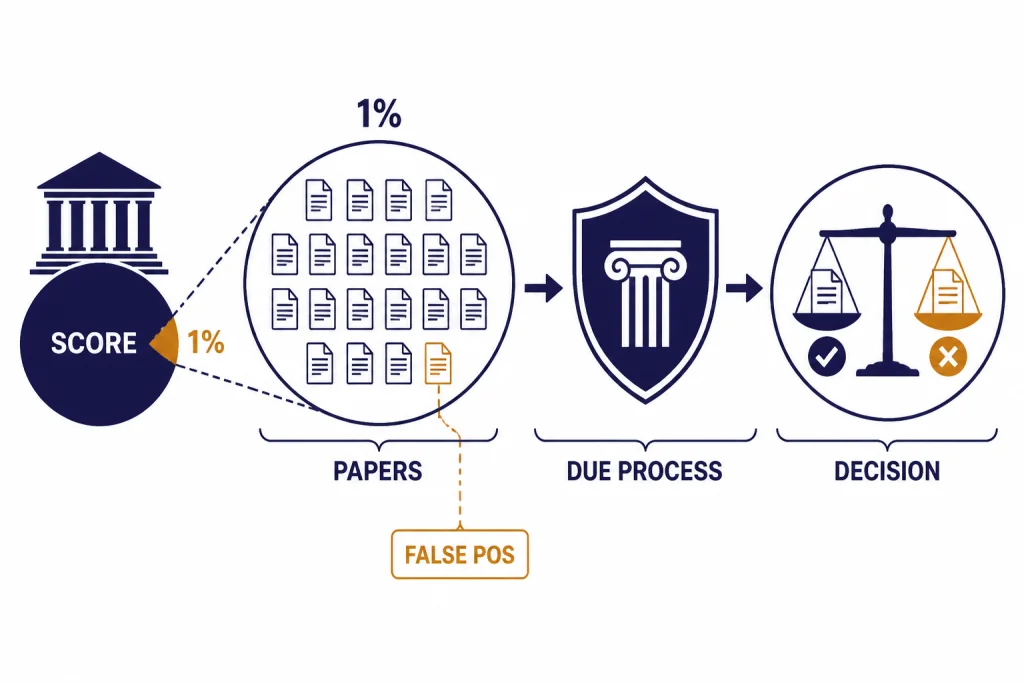

Turnitin has published several accuracy-related statements, but readers should separate marketing claims, technical claims, and product guidance. In a 2023 false-positive explainer, Turnitin said its document-level false positive rate was less than 1% for documents with 20% or more AI writing, while its sentence-level false positive rate was around 4%.[4] Those are not the same metric.

The document-level number asks whether a whole human-written document is incorrectly flagged as containing AI writing. The sentence-level number asks whether individual human-written sentences are incorrectly flagged. In a classroom, both matter. A small number of sentence-level errors can still create anxiety if the highlighted passage sits in a conclusion, introduction, or key paragraph.

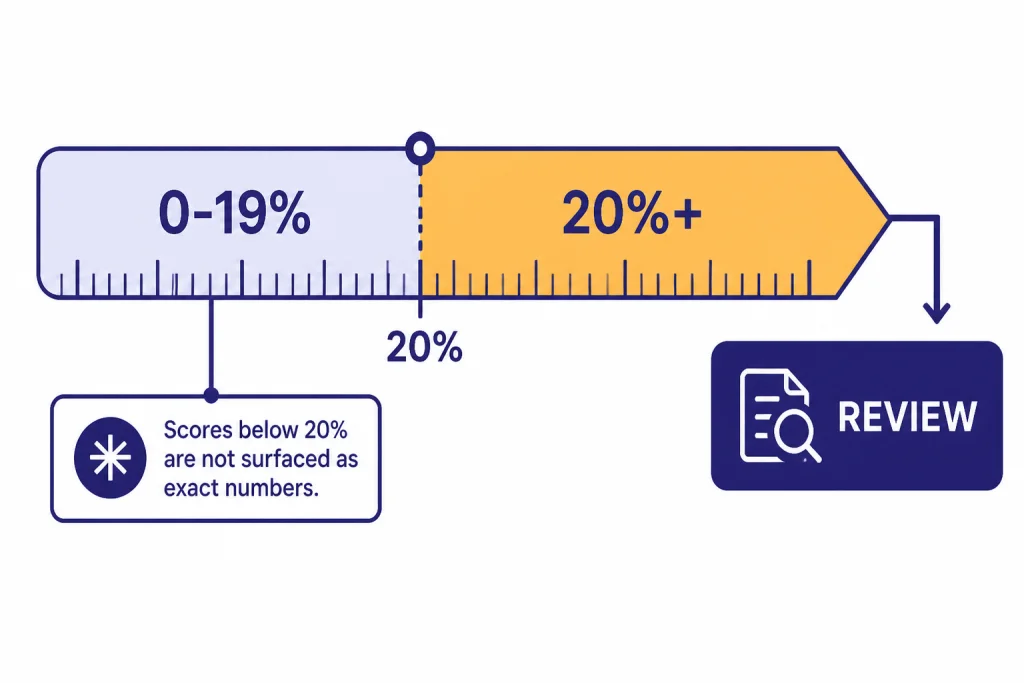

Turnitin’s own interface now avoids surfacing exact scores below 20%. Its guide says scores above 0% and below the 20% threshold are shown as an asterisk rather than a specific percentage, because Turnitin found a higher incidence of false positives in the 0% to 19% range.[1] That is a sensible product change. It is also an admission that low scores are too easy to overinterpret.
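The display rule described above can be sketched in a few lines. This is only an illustration, assuming the raw score is a whole number from 0 to 100; `displayed_ai_score` is a hypothetical helper and not part of any Turnitin API, and the rendering of a score of exactly 0% is an assumption drawn from the wording "above 0%".

```python
def displayed_ai_score(score_percent: int) -> str:
    """Return the label a report would show for a raw AI score (0-100).

    Hypothetical helper that mirrors the rule quoted in the text:
    scores above 0% and below 20% appear as an asterisk.
    """
    if not 0 <= score_percent <= 100:
        raise ValueError("score must be between 0 and 100")
    if 0 < score_percent < 20:
        # Low band: no exact number is surfaced.
        return "*"
    # Assumption: 0% and anything at or above 20% is shown as a number.
    return f"{score_percent}%"


for raw in (0, 7, 19, 20, 63):
    print(raw, "->", displayed_ai_score(raw))
```

The point of the sketch is that two very different raw scores, such as 2% and 19%, collapse into the same asterisk, so an instructor cannot rank low-band reports against each other.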

| Claim or signal | What it means | How to use it |
|---|---|---|
| Less than 1% document-level false positive rate for documents with 20% or more AI writing[4] | Turnitin says fully human documents are rarely flagged at the document level under that condition. | Treat as a confidence claim, not proof for a specific student. |
| Around 4% sentence-level false positive rate[4] | Individual sentences can be wrongly highlighted even when the overall document-level rate is lower. | Do not treat one highlighted passage as enough evidence. |
| No exact score shown below 20%[1] | Turnitin hides low numeric scores because false positives are more likely in that band. | Do not escalate low-score asterisk reports without other evidence. |
| Model updates do not retroactively change old reports[3] | A prior report can reflect an older model. | Be careful when comparing reports across semesters or resubmissions. |

If a school publishes a threshold policy, it should explain what each score range triggers. A high score can justify a conversation. A high score should not automatically trigger a penalty. A low score should not be used to clear or condemn a paper because low-score reporting has its own reliability limits.

What independent evidence shows

Independent testing supports a mixed conclusion. Turnitin often performs better than many public AI detectors, but the broader research literature warns against using AI detectors as stand-alone evidence. A 2023 study in the International Journal for Educational Integrity tested 14 detection systems, including Turnitin and PlagiarismCheck, the commercial systems in the sample that are used in academic settings.[6] In that study’s accuracy table, Turnitin ranked first with 81% accuracy across the tested document categories.[6]

That result is favorable to Turnitin, but it is not the same as near-perfect accuracy. The same study concluded that detection tools have serious limitations and are not suitable as sole evidence in academic misconduct investigations.[6] That is the most important takeaway for schools. Turnitin may be the strongest tool in a weak category.

OpenAI’s own experience is a useful warning. OpenAI discontinued its AI Text Classifier on July 20, 2023 because of a low rate of accuracy.[9] That does not prove Turnitin is inaccurate. It shows that even the company behind major generative AI systems treated text detection as a difficult, unreliable problem when used as a general-purpose classifier.

Turnitin’s scale is also unusual. In its April 9, 2024 anniversary announcement, the company said its AI writing detector had reviewed more than 200 million papers since the April 2023 launch.[10] Scale can improve product iteration, but it does not eliminate the need for due process. Large systems can still make consequential mistakes.

False positives and why they matter

A false positive happens when human-written text is incorrectly labeled as AI-generated. In an academic setting, that error is not minor. It can affect grades, scholarships, disciplinary records, and student trust. This is the central issue in any serious Turnitin AI detector review.

Turnitin acknowledges false positives in its own guidance. Its AI Writing Report guide says the model may misidentify human-written, AI-generated, and AI-paraphrased text, and should not be used as the sole basis for adverse action.[1] Its false-positive explainer also states that a nonzero false positive rate means instructors need to apply professional judgment and assignment context.[4]

Institutions have responded differently to that risk. Vanderbilt University disabled Turnitin’s AI detection tool on August 16, 2023 after several months of use and testing.[7] Vanderbilt noted that Turnitin’s 1% false positive claim would translate to about 750 incorrectly labeled papers if applied to 75,000 papers submitted at Vanderbilt in 2022.[7] The exact math depends on what is counted as a false positive, but the policy point is clear: small percentages become large numbers at institutional scale.
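The arithmetic behind the Vanderbilt figure is simple to reproduce. The sketch below assumes, as that calculation does, that the stated rate applies uniformly to every paper and that every paper is human-written; `expected_false_flags` is a hypothetical name used only for this illustration.

```python
def expected_false_flags(human_papers: int, false_positive_rate: float) -> int:
    """Expected number of human-written papers wrongly flagged, rounded."""
    return round(human_papers * false_positive_rate)


# Vanderbilt's example: a 1% rate applied to 75,000 papers.
print(expected_false_flags(75_000, 0.01))  # 750

# The same rate at other hypothetical submission volumes.
for volume in (500, 5_000, 200_000):
    print(volume, "papers ->", expected_false_flags(volume, 0.01), "false flags")
```

A rate that sounds negligible for a single class still produces a steady stream of wrongly flagged students once it is multiplied across a whole institution.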

The University of British Columbia also affirmed its decision not to enable Turnitin’s AI-detection feature in August 2023. UBC said Turnitin’s high reliability claim had not been independently evaluated and that the feature’s effectiveness remained unclear.[8] That does not mean every institution should disable the tool. It does mean schools should decide openly, not by default.

False positives also raise equity concerns. Students who write in highly formal prose, use templated academic structures, rely on grammar tools, or write in English as an additional language may be more exposed to detector suspicion. The safest policy is to require corroborating evidence: drafts, revision history, notes, oral explanation, citation trail, and consistency with prior work.

Report limits and file requirements

Turnitin’s AI detector does not evaluate every submission. Its file requirements are specific. A submission must be under 100 MB, include at least 300 words of prose text, contain no more than 30,000 words, use a supported language, and be submitted as .docx, .pdf, .txt, or .rtf.[2] The supported languages listed in Turnitin’s file requirements are English, Spanish, and Japanese.[2]
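Those requirements can be expressed as a simple pre-submission checklist. The following is a rough sketch, not Turnitin’s implementation: the `Submission` record and `eligibility_problems` function are hypothetical, the word counts are assumed to be supplied by the caller, and treating 100 MB as 100 × 1024 × 1024 bytes is an assumption.

```python
from dataclasses import dataclass
from pathlib import Path

# Limits as quoted in the text; the byte size of "100 MB" is an assumption.
MAX_FILE_BYTES = 100 * 1024 * 1024
MIN_PROSE_WORDS = 300
MAX_TOTAL_WORDS = 30_000
SUPPORTED_EXTENSIONS = {".docx", ".pdf", ".txt", ".rtf"}
SUPPORTED_LANGUAGES = {"english", "spanish", "japanese"}


@dataclass
class Submission:
    """Hypothetical record describing one uploaded file."""
    filename: str
    size_bytes: int
    prose_words: int
    total_words: int
    language: str


def eligibility_problems(sub: Submission) -> list[str]:
    """Return the reasons a submission falls outside the stated limits."""
    problems = []
    if sub.size_bytes >= MAX_FILE_BYTES:
        problems.append("file is not under 100 MB")
    if sub.prose_words < MIN_PROSE_WORDS:
        problems.append("fewer than 300 words of prose")
    if sub.total_words > MAX_TOTAL_WORDS:
        problems.append("more than 30,000 words")
    if sub.language.lower() not in SUPPORTED_LANGUAGES:
        problems.append("unsupported language")
    if Path(sub.filename).suffix.lower() not in SUPPORTED_EXTENSIONS:
        problems.append("unsupported file type")
    return problems


essay = Submission("essay.docx", 48_000, 1_850, 1_900, "English")
post = Submission("reply.md", 2_000, 120, 120, "English")
print(eligibility_problems(essay))  # []
print(eligibility_problems(post))
```

An empty list means the submission meets the quoted limits. It does not mean the resulting AI report will be reliable for that assignment type.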

Those limits affect classroom use. A lab report with tables, code, bullet points, and short answers may not be a good fit. Turnitin says the model does not reliably detect AI-generated text in non-prose formats such as poetry, scripts, code, bullet points, tables, or annotated bibliographies.[1] Teachers should not expect the same reliability for every assignment type.

There is also a timing issue. Turnitin’s help center says an AI indicator may show as unavailable when a submission was made before the AI writing detection release on April 4, 2023, or when the submission does not meet file requirements.[2] Schools comparing old and new submissions should account for that difference.

Turnitin’s AI detection is not a consumer self-serve product. Turnitin’s help center tells administrators interested in adding AI Writing Detection to speak with their Turnitin account manager.[11] There is no published official figure for institutional pricing, because pricing is handled through Turnitin contracts.

| Submission type | Good fit for Turnitin AI review | Reason |
|---|---|---|
| Essay or dissertation chapter | Usually yes | Long-form prose is the main supported use case. |
| Short discussion post | Often no | May not reach the 300-word prose minimum.[2] |
| Code assignment | No | Turnitin says the model does not reliably detect AI-generated code.[1] |
| Annotated bibliography | Weak fit | Turnitin lists annotated bibliographies among formats the model does not reliably detect.[1] |
| Mixed tables and bullet points | Weak fit | Non-prose text may not count as qualifying text.[1] |

Best use for teachers and schools

The best use of Turnitin AI detection is as a triage signal. A report should start a review, not end one. Schools should define what happens after a report appears, who reviews it, what evidence is collected, and how students can respond.

- Use written policy. Tell students whether AI assistance is allowed, where it must be disclosed, and how AI reports are handled.

- Require more than a score. Ask for drafts, outlines, source notes, document history, and a short explanation of the writing process.

- Separate plagiarism from AI writing. A similarity match is source evidence. An AI score is a probability signal.

- Avoid automatic penalties. Turnitin’s own guidance says the model should not be the sole basis for adverse action.[1]

- Review assignment design. Use in-class writing, staged drafts, oral defenses, and process portfolios where authorship matters.

Teachers who need a broader technology stack should compare Turnitin with other classroom tools, not only other detectors. For writing support, see our review of AI writing tools compared in 2026. For research-heavy assignments, our guide to AI research tools for academics can help schools distinguish acceptable research assistance from undisclosed authorship. For long readings, our roundup of AI summarizer tools for long documents is more relevant than an authorship detector.

For institutions, the most defensible approach is to treat Turnitin AI as one part of assessment design. If a course bans all AI use, the instructor still needs a fair way to investigate suspected misuse. If a course allows limited AI use, the policy should say what disclosure looks like. A detector cannot solve that policy problem.

What students should do if flagged

If Turnitin flags your work, do not panic and do not try to “humanize” the paper after submission. That can make the situation look worse. Focus on evidence that shows your writing process.

- Save the original file, drafts, notes, outline, bibliography, and version history.

- Write a short timeline explaining when you researched, drafted, revised, and edited the assignment.

- Identify any allowed tools you used, such as grammar checkers, citation managers, translation aids, or AI brainstorming tools.

- Ask the instructor which parts were flagged and whether the score was above the reporting threshold.

- Request a chance to discuss the paper, sources, and argument in person or by video.

Do not rely on a free online AI detector to “prove” Turnitin is wrong. Different detectors use different models and thresholds. A clean result from another tool may be useful context, but it is not definitive. The stronger evidence is process evidence: drafts, document history, source notes, and your ability to explain your own choices.

Students should also understand the difference between AI assistance and undisclosed AI authorship. A teacher may permit brainstorming but prohibit generated paragraphs. Another may allow grammar revision but require disclosure. The tool result matters less than the course policy and your ability to show what you did.

How it compares with alternatives

Turnitin’s main advantage is institutional integration. Many schools already use Turnitin for similarity checking, so the AI report appears inside a familiar workflow. Standalone detectors can be easier to access, but they usually lack the same institutional audit trail and assignment context.

Turnitin’s main disadvantage is opacity. Instructors see a score and highlights, but they do not see a source, a reproducible generation trace, or a full explanation of the model’s reasoning. That makes the tool less transparent than plagiarism matching. It also means two schools can use the same report in very different ways.

| Option | Best use | Main weakness |
|---|---|---|
| Turnitin AI detector | Institutional review of long-form academic prose | Probabilistic score can be overread as proof. |
| Standalone AI detectors | Quick informal screening | Often lack school policy context and may disagree with Turnitin. |
| Traditional plagiarism checkers | Finding matching sources and copied passages | They do not prove whether AI generated original text. |
| Process-based assessment | Showing authorship through drafts, notes, and revision history | Requires more instructor planning and grading time. |

If your concern is copied text, compare tools in our best plagiarism checkers breakdown. If your concern is authentic writing development, combine detector review with better assignment design. If your concern is tool choice across departments, our AI detector for teachers guide is the better starting point.

Turnitin also should not be confused with tools that generate, transform, or assist writing. A ChatGPT prompt generator, an AI resume builder, and an AI translation tool all raise different disclosure questions. Detection is only one part of a much larger policy conversation about acceptable AI use.

Frequently asked questions

Is Turnitin’s AI detector accurate?

It can be useful, but it is not definitive. Turnitin says its tool has a low document-level false positive rate under certain conditions, while independent research still warns that AI detectors should not be used as sole misconduct evidence.[4][6] Treat the score as a signal that needs human review.

Can Turnitin falsely accuse a student of using AI?

Yes. Turnitin’s own guide says its AI writing model may misidentify human-written, AI-generated, and AI-paraphrased text.[1] That is why instructors should review drafts, assignment context, and student explanations before taking action.

What does a low Turnitin AI score mean?

Turnitin no longer surfaces exact AI scores below 20% in new reports. It shows an asterisk instead because false positives are more likely in the 0% to 19% range.[1] A low-score signal should not be treated as strong evidence by itself.

Does Turnitin detect paraphrased AI writing?

Turnitin says its AI Writing Report can identify likely AI-generated text and likely AI-generated text that was also AI-paraphrased.[1] Its release notes show that AI paraphrasing detection was incorporated into the AI writing capability in December 2023.[3] This still remains a prediction, not proof of intent.

Can students access the Turnitin AI detector directly?

Usually no. Turnitin AI Writing Detection is an institutional feature, and Turnitin tells administrators to contact their account manager to add it to a license.[11] Students normally see results only if their institution and instructor make them available.

Should schools disable Turnitin AI detection?

Not necessarily. Some institutions, including Vanderbilt and UBC, chose not to use or continue using it because of reliability and due process concerns.[7][8] Other schools may keep it if they pair it with clear policy, human review, and an appeal process.