Scribbr AI Detector is useful as a quick screening tool, but it is not accurate enough to prove that a student, employee, freelancer, or applicant used AI. Scribbr says its free detector checks for AI-like writing patterns and returns a percentage score, while its premium detector adds broader model coverage and document-level reporting.[1][2] The best reading of the evidence is practical: Scribbr can flag text worth reviewing, especially obvious AI drafts, but its own guidance and broader AI-detection research warn against treating any score as definitive proof.[3][5]

Verdict: Scribbr is helpful, not conclusive

This Scribbr AI Detector review has a simple conclusion. Scribbr is a good first-pass checker for low-stakes review. It is not a courtroom-grade authorship test. If your goal is to decide whether a paragraph needs closer review, Scribbr can help. If your goal is to accuse someone of misconduct, reject a submission, or penalize a writer, a Scribbr percentage is not enough.

Scribbr’s own public pages say no AI detector can guarantee complete accuracy and recommend scanning longer text rather than isolated sentences or short paragraphs.[1] That caveat matters. AI detectors do not know who wrote a document. They infer probability from patterns. Those patterns can overlap with human academic writing, edited prose, non-native English writing, template-heavy content, and highly predictable subject matter.

My practical rating is conditional. For teachers, Scribbr is a useful signal when combined with draft history, assignment fit, source checking, and a student conversation. For publishers, it can help screen obvious machine-written submissions before deeper editing. For students and writers, it is best used as a self-check, not as a reason to rewrite honest work into awkward prose. If you need a school-focused comparison, see our Best AI Detectors for Teachers and Schools guide.

What Scribbr AI Detector checks

Scribbr AI Detector checks whether text resembles AI-generated or AI-refined writing. Scribbr says AI detectors measure characteristics such as sentence structure and length, word choice, predictability, perplexity, and burstiness rather than comparing the text against a database of known sources.[1][2] That makes it different from a plagiarism checker. A plagiarism checker looks for similarity to existing documents. An AI detector looks for statistical writing patterns.

The report uses a percentage-style score. Scribbr’s FAQ describes the AI score as a number from 0% to 100% that estimates how much of the text contains content likely written or refined using AI tools.[1] The help center also describes report categories for AI-generated, AI-generated and AI-refined, human-written and AI-refined, and human-written text.[2] That category split is more helpful than a single binary label because many real documents now involve mixed workflows.

Scribbr says its detector is built for popular AI tools including ChatGPT, Gemini, and Copilot, and its help article lists ChatGPT, Gemini, Claude, Bard, and Bing Chat among the systems covered by the premium detector.[1][2] The tool is therefore aimed at text authorship review, not image detection, video detection, source verification, or citation auditing. If your problem is copied text rather than AI-like style, start with our Best Plagiarism Checkers roundup instead.

Language support is documented inconsistently across Scribbr’s own pages. The main detector page mentions support for multiple languages including German, French, and Spanish, while Scribbr’s help center says the detector currently supports English, German, and French and offers a separate Dutch detector.[1][2] Scribbr also has a separate FAQ saying the detector can analyze English, Spanish, German, and French.[9] As of April 2026, I would treat English, German, and French as the safest documented options and test Spanish support before relying on it for a policy decision.

How accurate is Scribbr AI Detector?

Scribbr looks comparatively strong in Scribbr’s own published detector test, but that is not the same as independent proof. Scribbr’s comparison article says Scribbr Premium scored 84% overall accuracy, Scribbr Free scored 78%, and QuillBot Free also scored 78% in its test set.[3] It also says Scribbr Premium detected 60% of mixed, paraphrased, or human-combined AI texts in that test.[3] Those numbers support a cautious conclusion: Scribbr can be good relative to other detectors, but even the stronger result leaves room for missed AI text and incorrect suspicion.

The harder cases are the cases that matter most. A raw AI draft is easier to detect than a document that has been revised by a human, blended with original writing, translated, paraphrased, or heavily edited. Scribbr’s own comparison notes that AI text combined with human text or paraphrased text is hard to detect, and that detectors can produce false positives.[3] That is why a score should start a review, not finish one.

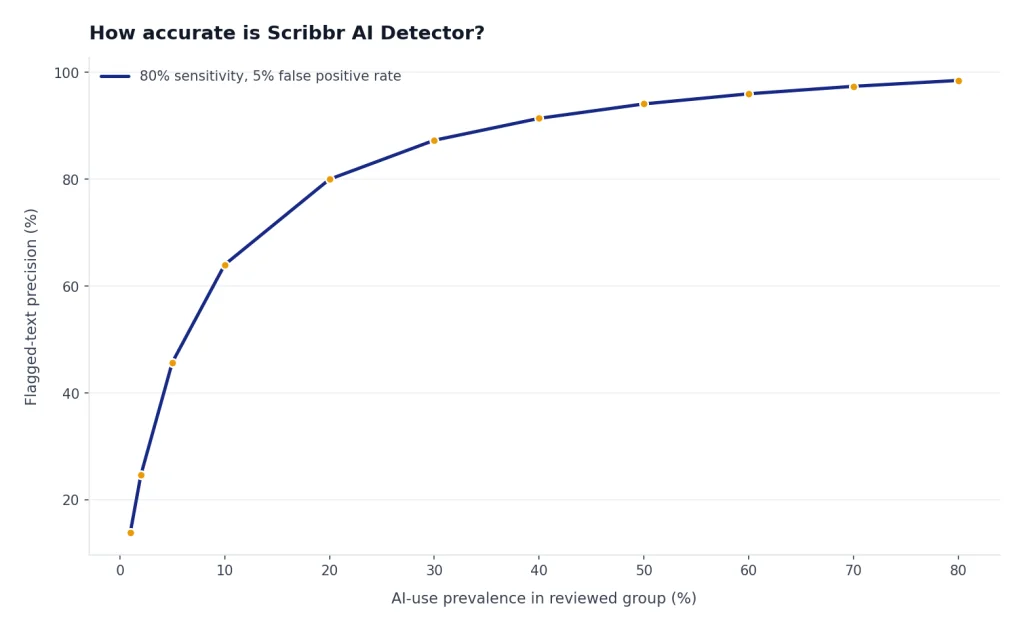

There is also a base-rate problem. In a classroom where most students wrote honestly, even a low false-positive rate can create serious harm because the suspicious group may include many innocent writers. Turnitin’s AI writing guidance makes the same operational point in stronger terms: its AI score may misidentify both human and AI-generated text and should not be used as the sole basis for adverse action against a student.[8] That principle applies to Scribbr as well, even though Scribbr and Turnitin are different tools.

OpenAI’s own history also shows why text detection is hard. OpenAI shut down its AI classifier on July 20, 2023, because of a low rate of accuracy; its published evaluation said the classifier correctly identified 26% of AI-written text as likely AI-written and incorrectly labeled human-written text as AI-written 9% of the time.[6] OpenAI’s old classifier is not Scribbr’s detector, but it is useful context. The organization that built ChatGPT still found AI text classification difficult enough to retire a public detector.
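
The base-rate problem can be made concrete with a short calculation. The sketch below is illustrative only: it borrows the 26% detection rate and 9% false-positive rate that OpenAI published for its retired classifier, not figures for Scribbr, and it assumes a hypothetical batch of 1,000 essays in which 10% were AI-written. The function name and the 10% share are assumptions made for the example.

```python
def flagged_breakdown(total, ai_share, true_positive_rate, false_positive_rate):
    """Split flagged documents into correctly and wrongly flagged groups.

    All inputs are illustrative assumptions, not measured Scribbr figures.
    """
    ai_docs = total * ai_share
    human_docs = total - ai_docs
    flagged_ai = ai_docs * true_positive_rate        # AI text correctly flagged
    flagged_human = human_docs * false_positive_rate  # human text wrongly flagged
    flagged = flagged_ai + flagged_human
    # Share of flagged documents that really are AI-written.
    precision = flagged_ai / flagged if flagged else 0.0
    return flagged_ai, flagged_human, precision


# Hypothetical batch: 1,000 essays, 10% AI-written, using the rates
# OpenAI reported for its retired classifier (26% and 9%).
flagged_ai, flagged_human, precision = flagged_breakdown(1000, 0.10, 0.26, 0.09)
print(round(flagged_ai), round(flagged_human), round(precision, 2))
```

Under those assumed inputs, roughly three out of four flagged essays (81 of 107) would belong to writers who did not use AI. A better detector or a different share of AI-written work changes the figure, which is the point: the same score means different things in different classrooms.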

Non-native English writing deserves special care. A widely cited arXiv study found that several GPT detectors consistently misclassified non-native English writing samples as AI-generated, while native English writing samples were identified more accurately.[7] This is one reason schools should not convert an AI score into an automatic penalty. Formal, careful, or language-learner writing can look statistically “AI-like” even when it is authentic.

Free vs premium Scribbr AI Detector

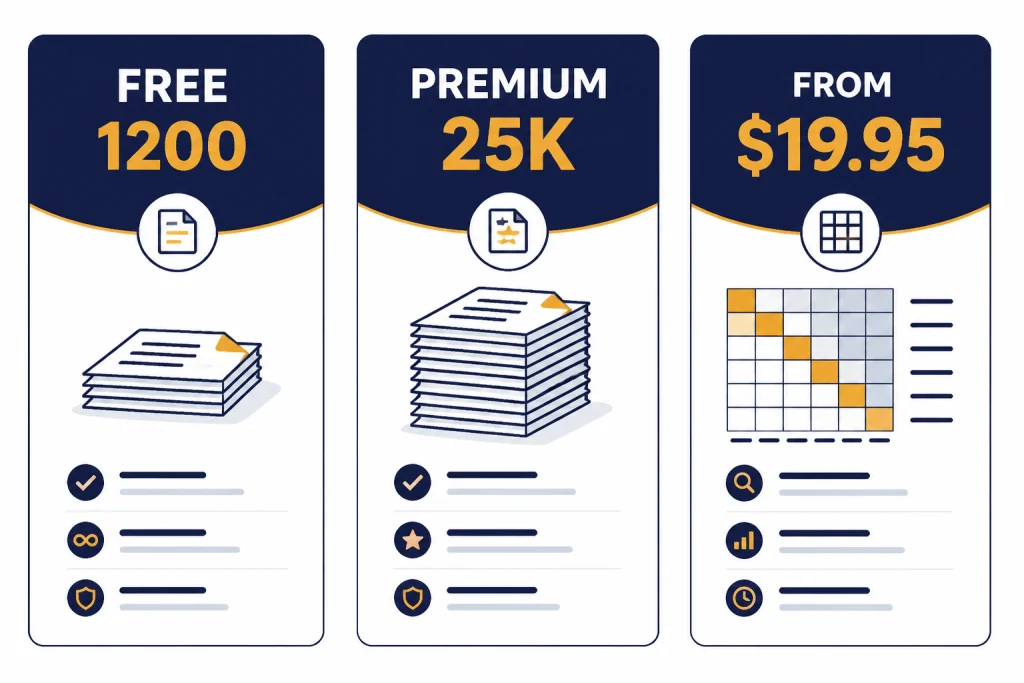

Scribbr has a free detector and a premium detector tied to its plagiarism-checking workflow. The free version is attractive because it requires no sign-up and allows repeated checks, but Scribbr’s main page lists a limit of up to 1,200 words per free submission.[1] Scribbr’s older comparison article mentions a 500-word free limit, which appears inconsistent with the current main detector page.[3] As of April 2026, the safer figure to rely on is the 1,200-word limit shown on Scribbr’s current detector page.

The premium documentation has another important limit. Scribbr’s help center says the AI Detector can scan documents up to 25,000 words, and if a document is larger, only the first 25,000 words are scanned.[2] Scribbr’s comparison article says the premium AI check is included with a plagiarism check that costs between $19.95 and $39.95.[3] Scribbr’s FAQ also lists the premium plagiarism report as starting from $19.95 and says it includes a precise AI writing percentage.[4]

| Feature | Scribbr Free AI Detector | Scribbr Premium AI Detector | What it means |
|---|---|---|---|
| Cost | Free | Bundled with premium plagiarism reporting from $19.95 | Free is enough for quick screening; premium makes more sense when you also need plagiarism review. |
| Submission size | Up to 1,200 words per submission on the current detector page | Help center says documents are scanned up to 25,000 words | Long papers need premium or section-by-section free checks. |
| Model coverage | Scribbr says GPT-2, GPT-3, and GPT-3.5 with average accuracy | Scribbr says premium has high accuracy and broader coverage including GPT-4 and other known models | Premium is the better fit for newer-model detection claims. |
| Output | Percentage-style AI score | AI score plus report categories in the premium workflow | Categories are more useful than a single score when text is mixed or edited. |
| Best use | Quick self-checks and low-stakes screening | Academic document review where plagiarism checking is also needed | Neither version should be treated as proof by itself. |
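
For the section-by-section route mentioned in the table, one approach is to split a long document on paragraph boundaries and pack paragraphs into chunks that stay under the free limit. This is a minimal sketch, assuming the 1,200-word limit from Scribbr’s current detector page and assuming that splitting on whitespace approximates how Scribbr counts words (it may not). `chunk_by_paragraph` is a hypothetical helper, not part of any Scribbr tool or API.

```python
def chunk_by_paragraph(text, max_words=1200):
    """Pack paragraphs into chunks of at most max_words whitespace-separated words.

    max_words=1200 mirrors the free limit on Scribbr's detector page; the
    word-counting rule here is an assumption and may differ from Scribbr's.
    """
    chunks, current, count = [], [], 0

    def flush():
        nonlocal current, count
        if current:
            chunks.append("\n\n".join(current))
            current, count = [], 0

    for para in text.split("\n\n"):
        words = para.split()
        # A single paragraph over the limit is cut on word boundaries.
        while len(words) > max_words:
            flush()
            chunks.append(" ".join(words[:max_words]))
            words = words[max_words:]
        if not words:
            continue
        # Start a new chunk when this paragraph would exceed the limit.
        if count + len(words) > max_words:
            flush()
        current.append(" ".join(words))
        count += len(words)

    flush()
    return chunks


# Usage: three 500-word paragraphs become one 1,000-word chunk and one 500-word chunk.
sample = "\n\n".join(" ".join(["word"] * 500) for _ in range(3))
for i, chunk in enumerate(chunk_by_paragraph(sample), start=1):
    print(i, len(chunk.split()))
```

Packing chunks close to the limit keeps each one long, in line with Scribbr’s advice to scan longer text rather than short passages. Per-chunk scores are not equivalent to one document-level score, so treat them as pointers to passages worth rereading.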

The premium version is most appealing when you already need a plagiarism report. If you only want a quick AI probability check, the free version may be enough. If you need to compare plagiarism tools more broadly, use our best plagiarism checker comparison. If you are evaluating broader writing workflows, our AI writing tools compared in 2026 guide may be more relevant than an AI detector alone.

How to use Scribbr without overreacting

The safest way to use Scribbr is to treat it as one signal in a larger review process. Start with the document itself. Look for a mismatch between the assignment and the answer, fabricated citations, unsupported claims, sudden style shifts, missing process notes, or sections the author cannot explain. Then use Scribbr to identify passages worth a closer look.

For teachers, the review should include process evidence. Ask for outlines, notes, draft history, source annotations, or a short oral explanation of the argument. A student who can explain why each source was used and how the draft changed over time should not be treated the same as a student who cannot describe the paper. AI detection can support that conversation, but it cannot replace it. Our guide to AI detectors for teachers covers this workflow in more detail.
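
The idea of treating the score as one signal among several can be sketched as a small decision rule. Everything in this example is hypothetical: the field names, the 50% review threshold, and the wording of the outcomes are assumptions chosen for illustration, not Scribbr guidance or a recommended policy. The one deliberate design choice is that no branch returns a penalty.

```python
from dataclasses import dataclass


@dataclass
class ReviewEvidence:
    detector_score: float       # 0-100 percentage reported by the detector
    has_draft_history: bool     # outlines, notes, or version history exist
    can_explain_argument: bool  # author can talk through the paper
    sources_check_out: bool     # citations exist and support the claims


def next_step(evidence, review_threshold=50.0):
    """Suggest a next review step; the threshold is an arbitrary assumption."""
    # Fabricated or unsupported citations are a problem regardless of the score.
    if not evidence.sources_check_out:
        return "audit sources manually"
    process_ok = evidence.has_draft_history and evidence.can_explain_argument
    # A high score only prompts a conversation, and only when process evidence is missing.
    if evidence.detector_score >= review_threshold and not process_ok:
        return "request process evidence and talk to the author"
    return "no further action"


# Usage: a high score backed by drafts and a clear explanation needs no follow-up.
print(next_step(ReviewEvidence(85.0, True, True, True)))
```

In this sketch the detector score can start a review, but process evidence decides how it ends.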

For editors and publishers, Scribbr is most useful as a triage tool. A high score can justify deeper editorial review, source verification, and author follow-up. It should not trigger automatic rejection. Many legitimate writers use grammar tools, translation aids, citation tools, and AI summarizers during research and revision. If your workflow includes summarization or research support, see our guides to AI summarizer tools for long documents and AI research tools for academics.

For students and job applicants, do not chase a 0% score. A detector can flag polished human writing, especially if the prose is formal, repetitive, or formulaic. Instead, preserve proof of authorship. Keep drafts. Use version history. Save notes. Track sources. If you use AI within your institution’s rules, disclose it in the format your school requires. If you use translation or language support, our AI translation tools tested guide can help you choose tools that fit your policy environment.

When to choose a different tool

Choose a different tool if your main question is not “does this sound AI-generated?” Scribbr is not a source verifier. It does not tell you whether a citation exists. It does not prove plagiarism. It does not show a full writing-process timeline. It does not verify whether a student used ChatGPT in a permitted or prohibited way.

Use a plagiarism checker when you need similarity evidence. Use a citation checker or manual source audit when you need to verify references. Use version history when authorship is disputed. Use classroom discussion when a student’s understanding matters. Use a prompt or drafting tool when your goal is better writing, not detection. For that last use case, our ChatGPT prompt generator tools roundup is a better starting point.

Scribbr is strongest for academic users who already know Scribbr’s plagiarism and citation ecosystem. It is weaker for organizations that need audit trails, policy enforcement, team dashboards, API access, or calibrated thresholds across departments. For those buyers, the right comparison set includes education-focused AI detectors, plagiarism platforms, authorship-tracking tools, and document-management workflows rather than only free web checkers.

The bottom line is not that Scribbr is bad. It is that AI detection is an uncertain category. Scribbr gives a useful probability signal, especially for obvious cases. It does not give certainty. Any policy that treats a detector percentage as final evidence claims more certainty than the technology supports.

Frequently asked questions

Is Scribbr AI Detector accurate?

Scribbr appears reasonably accurate compared with many free detectors, especially for obvious AI-generated text. Scribbr’s own comparison says its premium detector scored 84% and its free detector scored 78% in that test.[3] That is still not enough for proof, because both false positives and false negatives occur.

Can Scribbr AI Detector prove that a student used ChatGPT?

No. Scribbr can provide a probability signal, but it cannot prove who wrote a document or which tool was used. For academic misconduct, combine detector output with drafts, version history, assignment fit, source review, and a conversation with the student.

What does a high Scribbr AI score mean?

A high score means Scribbr found patterns that it associates with AI-generated or AI-refined text. It does not automatically mean the author cheated or violated a policy. It should trigger closer review, not automatic punishment.

Is Scribbr AI Detector free?

Yes, Scribbr offers a free AI detector with repeated checks and no sign-up requirement. Scribbr’s current detector page lists a limit of up to 1,200 words per free submission.[1] Premium AI detection is tied to Scribbr’s premium plagiarism workflow, which Scribbr lists as starting from $19.95.[4]

Does Scribbr detect plagiarism too?

The AI detector itself does not detect plagiarism. Scribbr says its AI content checker identifies AI-generated, AI-refined, and human-written content, while its plagiarism checker is the tool for similarity checking.[4] Use both only when you need both authorship-pattern review and source-similarity review.

Should writers rewrite text just to lower a Scribbr score?

Not automatically. If the writing is genuinely yours, first preserve drafts, notes, sources, and version history. Rewrite for clarity and originality, not merely to satisfy a detector that may be wrong.