Copyleaks is a serious AI detector for organizations that need more than a quick paste-and-check tool. It combines AI detection, plagiarism checking, reports, browser access, Google Docs support, LMS integrations, and API options. That makes it a better fit for schools, publishers, agencies, and compliance teams than for one-off curiosity checks. The main caution is important: Copyleaks scores should guide review, not replace human judgment. AI detection remains probabilistic, and even strong detectors can misread polished, formulaic, translated, edited, or non-native writing. This Copyleaks review explains where the tool is useful, what it costs, and how to use it without overtrusting a score.

Verdict

Copyleaks is best for readers who need an AI detector inside a broader originality workflow. It is not just a public AI checker. Copyleaks positions the product around AI detection, plagiarism detection, explainable reporting, integrations, and institutional controls. Its official AI Detector page says it supports AI detection in more than 30 languages, detects content from models such as GPT-5, GPT-4, ChatGPT, Claude, Gemini, DeepSeek, Llama, Bloom, Rytr, and Jasper, and can identify mixed human and AI-generated text within a report.[1]

Our take: Copyleaks is a good shortlist candidate for schools, editors, and teams that need reports, auditability, and repeatable review procedures. It is a poor fit if you want a simple yes-or-no verdict on whether a person cheated, copied, or used ChatGPT. If you are comparing detectors for classrooms, start with Best AI Detectors for Teachers and Schools. If you care about copied source material as much as AI use, compare it with Best Plagiarism Checkers.

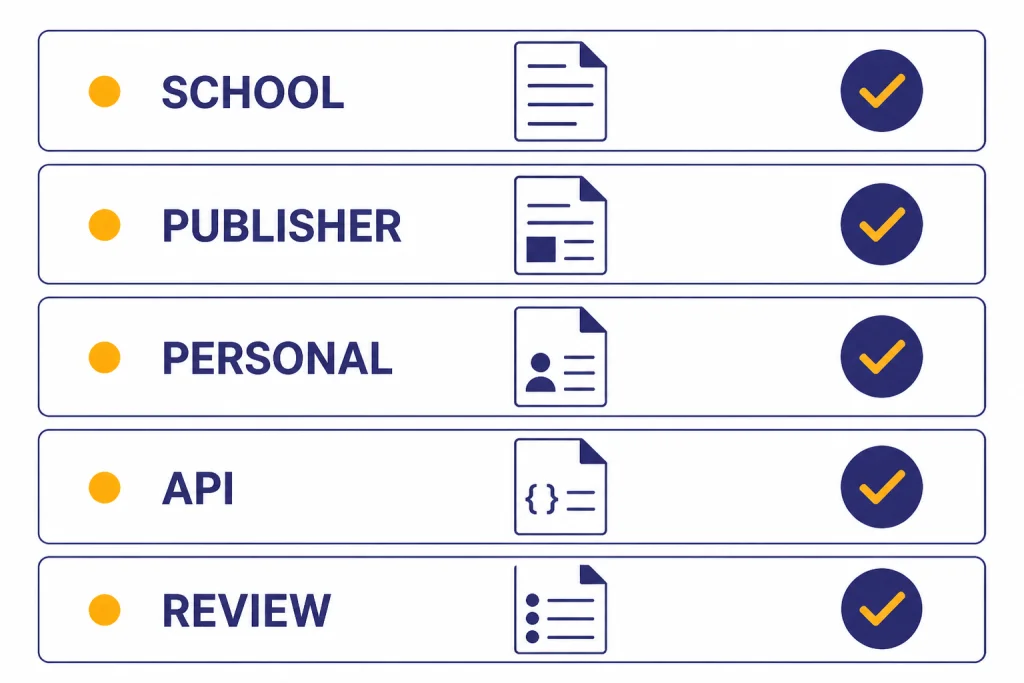

| Use case | Copyleaks fit | Why |
|---|---|---|
| School assignment review | Strong, with policy safeguards | LMS support, AI detection, plagiarism checking, and reports fit academic workflows. |
| Publisher content intake | Strong | Teams can combine AI signals with plagiarism checks and editorial review. |
| One-off personal checking | Mixed | The free credits help, but paid plans may be more than casual users need.[1] |
| Disciplinary proof | Weak on its own | A detector score is evidence for review, not proof of authorship. |
| Developer integration | Strong | The API supports document workflows, hosted reports, and SDKs.[12] |

How Copyleaks works

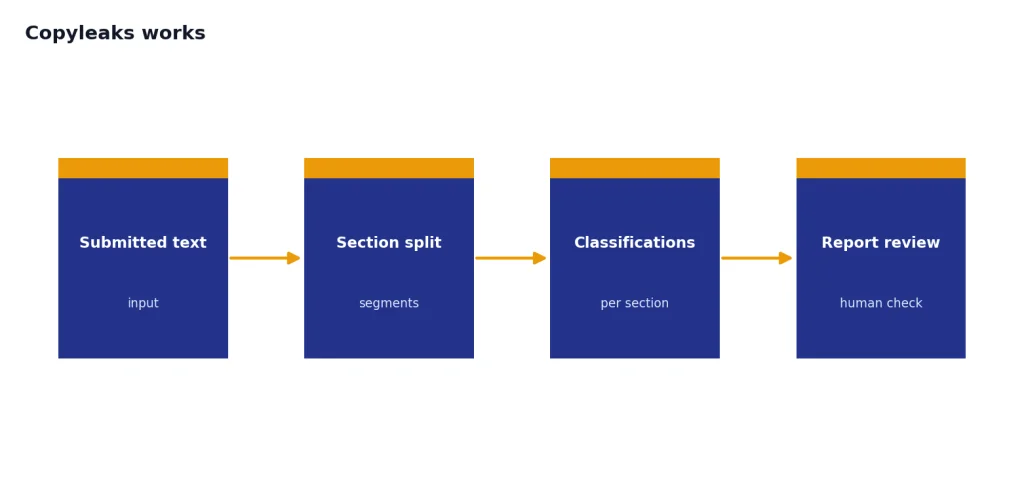

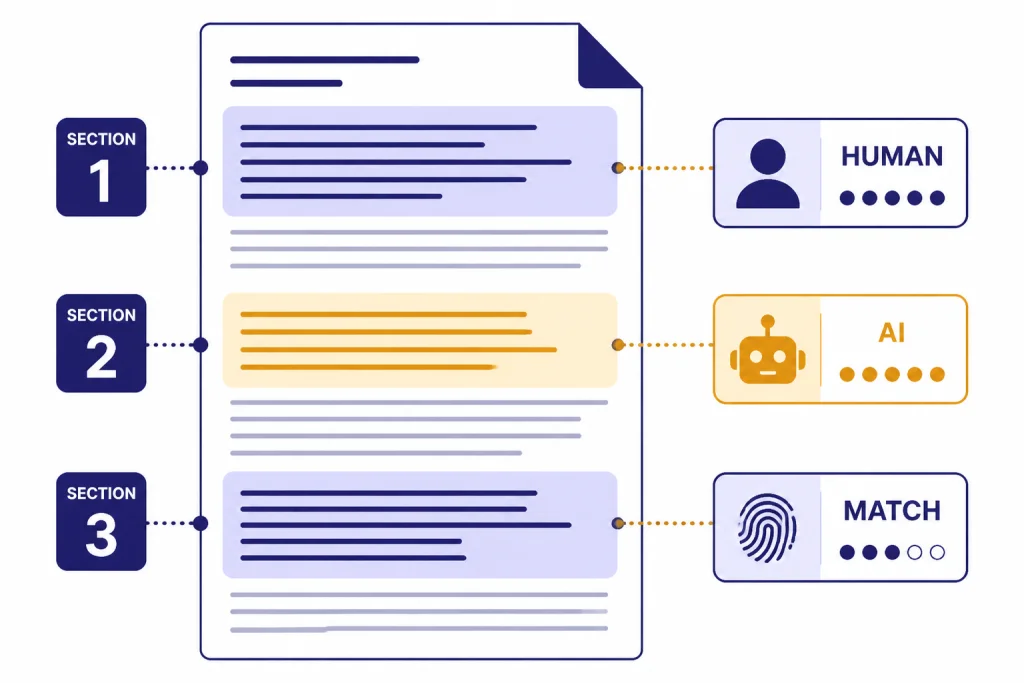

Copyleaks analyzes text for patterns associated with machine-generated writing and returns classifications that can be reviewed at the document and section level. Its API documentation says the AI Content Detection endpoint receives submitted text, then returns classifications, with text classification divided into sections so different parts of a document can receive different classifications.[5]
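
To make the section-level idea concrete, here is a minimal Python sketch of how a review tool might summarize per-section results as a share of words per classification. The `Section` record, its field names, and the label strings are hypothetical stand-ins chosen for illustration; they are not the Copyleaks response schema, so check the API documentation for the real structure.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Section:
    """Hypothetical per-section result; NOT the real Copyleaks schema."""
    label: str       # assumed label names, e.g. "human" or "ai"
    word_count: int  # number of words the section covers


def share_by_label(sections: list[Section]) -> dict[str, float]:
    """Return the fraction of all words assigned to each label."""
    totals: dict[str, int] = defaultdict(int)
    for section in sections:
        totals[section.label] += section.word_count
    total_words = sum(totals.values())
    if total_words == 0:
        return {}  # nothing to summarize
    return {label: words / total_words for label, words in totals.items()}


# Made-up example: a 1,000-word draft with one flagged middle section.
report = [
    Section("human", 400),
    Section("ai", 250),
    Section("human", 350),
]
shares = share_by_label(report)
print(shares)
```

A summary like this is only a pointer to where a reviewer should look first. It says nothing about why a section was classified the way it was.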

The practical value is that a reviewer does not have to treat a whole paper, blog post, or report as one black-box score. A mixed document can show human-written sections, suspected AI-generated sections, and plagiarism matches in the same workflow. That is useful for teachers, editors, and compliance reviewers because the next step is targeted review, not automatic punishment.

Copyleaks also supports more than web pasting. Its technical documentation defines one page as up to 250 words for billing, lists common document types such as PDF, DOCX, TXT, CSV, PPTX, XLSX, EPUB, and source-code file types for plagiarism workflows, and lists a maximum document length of 2,000 pages or 500K words.[4]

For developers, Copyleaks offers API workflows for plagiarism detection, AI-generated text detection, grammar checking, hosted reports, open-source report modules, AI image detection, and moderation. The documentation lists official SDKs for Python, JavaScript, Java, C#, PHP, and Ruby.[12] If you are evaluating writing tools more broadly, our guide to the best AI writing tools compared in 2026 covers tools that create and edit text rather than detect it.

Accuracy evidence

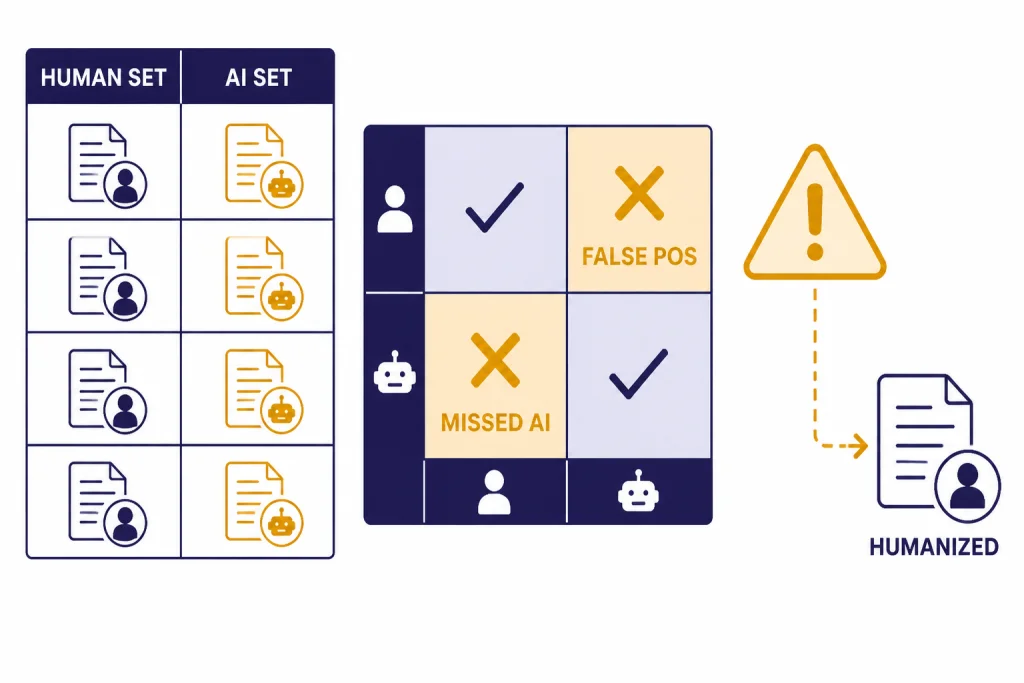

Copyleaks makes strong accuracy claims, and some independent research has ranked it well. Its official AI Detector page says the system reaches over 99% accuracy and claims a 0.03% likelihood that human-written content is mistakenly labeled as AI-generated.[1] Treat those as vendor claims, not universal guarantees. Accuracy depends on the text, language, length, model, editing history, and the way a reviewer interprets the report.

Copyleaks published a V10 testing methodology with a test date of October 16, 2025, and a publish date of November 12, 2025.[3] In the Data Science Team test described there, Copyleaks reports 300,000 human-written texts and 200,000 AI-generated texts, with TPR of 0.988 and TNR of 0.999 on the combined dataset.[3] That is a large internal test, and it is helpful because the company discloses the model version, dates, dataset counts, and evaluation metrics.
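
The reported rates can be turned into approximate counts, and into the question reviewers usually care about: if a document is flagged, how likely is it to be AI-generated? The sketch below is plain arithmetic on the rounded figures quoted above, not a result from Copyleaks. The 5% and 1% base rates are hypothetical values chosen to show how the answer shifts when AI text is rarer than in the test set, where 200,000 of 500,000 texts (40%) were AI-generated.

```python
def implied_counts(n_human: int, n_ai: int, tpr: float, tnr: float) -> dict[str, float]:
    """Convert reported rates into approximate confusion-matrix counts."""
    true_pos = n_ai * tpr      # AI texts flagged as AI
    true_neg = n_human * tnr   # human texts cleared as human
    return {
        "true_pos": true_pos,
        "false_neg": n_ai - true_pos,     # AI texts missed
        "true_neg": true_neg,
        "false_pos": n_human - true_neg,  # human texts wrongly flagged
        "accuracy": (true_pos + true_neg) / (n_human + n_ai),
    }


def probability_ai_given_flag(tpr: float, tnr: float, base_rate: float) -> float:
    """Bayes' rule: P(text is AI | detector flagged it)."""
    if not 0.0 < base_rate < 1.0:
        raise ValueError("base_rate must be strictly between 0 and 1")
    flagged_ai = tpr * base_rate
    flagged_human = (1.0 - tnr) * (1.0 - base_rate)
    if flagged_ai + flagged_human == 0:
        raise ValueError("detector never flags anything at these rates")
    return flagged_ai / (flagged_ai + flagged_human)


# Figures as reported in the V10 methodology (rounded by the vendor).
counts = implied_counts(n_human=300_000, n_ai=200_000, tpr=0.988, tnr=0.999)
print({name: round(value, 4) for name, value in counts.items()})

# Hypothetical base rates: the test-set mix, then two rarer-AI scenarios.
for base_rate in (0.40, 0.05, 0.01):
    share = probability_ai_given_flag(0.988, 0.999, base_rate)
    print(f"base rate {base_rate:.0%}: P(AI | flagged) = {share:.3f}")
```

On these figures, the test set implies roughly 300 human texts wrongly flagged and 2,400 AI texts missed, for an overall accuracy of about 99.46%. If only 1% of submissions were AI-generated, about 91% of flagged documents would actually be AI under the same rates, which is one reason a flag should open a review and not close one.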

Independent work is more useful for buyers because it is less controlled by the vendor. A 2023 arXiv study of eight publicly available LLM-generated text detectors in computing education found Copyleaks to be the most accurate detector in that test, while also warning that all detectors were less accurate with code, non-English languages, and paraphrasing tools.[8] Another arXiv study on DeepSeek-generated text found that Copyleaks and QuillBot were near-perfect on original and paraphrased DeepSeek text in its test, but humanization reduced Copyleaks accuracy to 71%.[9]

The pattern is clear. Copyleaks is one of the stronger detectors on the kinds of text these benchmarks cover well, such as unedited prose from mainstream models. It is less reliable when the text has been heavily humanized, paraphrased, translated, shortened, or changed to imitate a different writing profile. That does not make Copyleaks useless. It means the result should trigger a review process.

| Evidence type | What it supports | What it does not prove |
|---|---|---|
| Vendor accuracy page | Copyleaks’ own claim of over 99% accuracy and 0.03% false-positive likelihood.[1] | Performance on every school, publisher, language, genre, or adversarial rewrite. |
| Copyleaks V10 methodology | Large internal English-language testing with disclosed model version and metrics.[3] | Independent real-world performance across all customer contexts. |
| Computing education study | Strong relative performance versus other detectors in one academic benchmark.[8] | Reliable detection of code, paraphrased work, or all non-English writing. |
| DeepSeek study | Strong detection on original and paraphrased DeepSeek samples, with weaker performance after humanization.[9] | That humanized AI text can always be detected. |

Pricing and plan limits

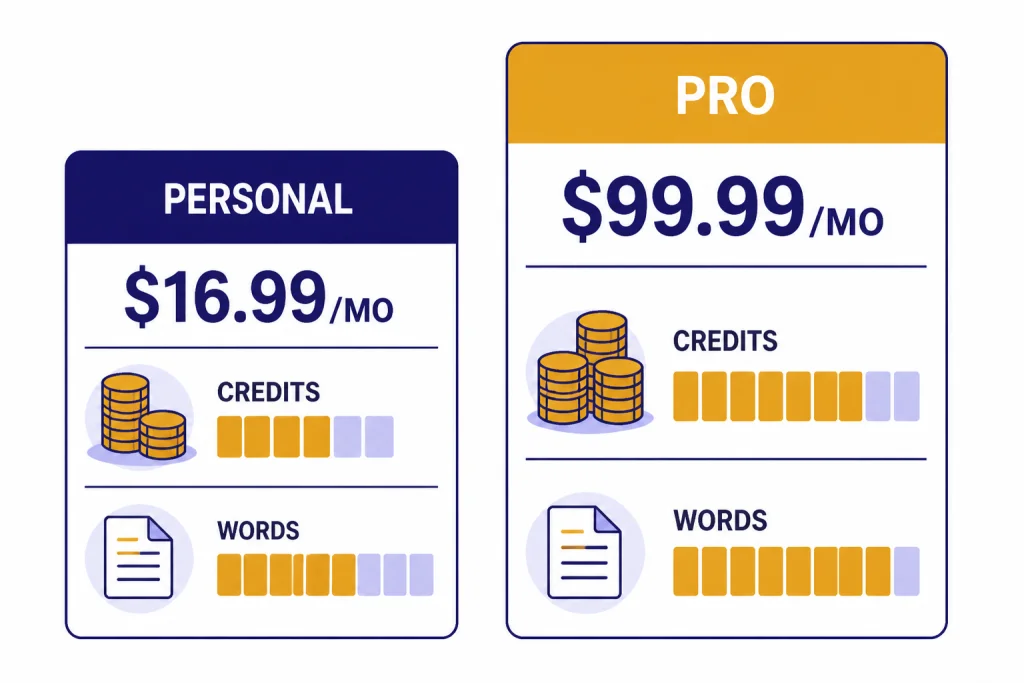

Copyleaks uses subscription plans for individual users and custom pricing for education and enterprise customers. The Personal plan is listed at $16.99 per month or $13.99 per month when billed annually, and the Pro plan is listed at $99.99 per month or $74.99 per month when billed annually.[2] Both paid individual plans include access to AI detection and plagiarism detection.[2]

Credits matter more than the monthly price. Copyleaks says Personal includes 100 scan credits for up to 25,000 words per month, and Pro includes 1,000 scan credits for up to 250,000 words per month.[2] The pricing FAQ says one credit covers 250 words or less.[2] Because a scan of fewer than 250 words still appears to use a full credit, many short, separate items can exhaust the allowance well before you reach the monthly word cap.
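
A rough way to estimate credit usage is sketched below in Python. It assumes each scan is rounded up to whole credits of 250 words, which is an interpretation of the pricing FAQ and not a documented billing formula. The two workloads are made up.

```python
import math

WORDS_PER_CREDIT = 250  # pricing FAQ: one credit covers 250 words or less


def credits_for_document(word_count: int) -> int:
    """Credits for one scan, ASSUMING each scan rounds up to whole credits."""
    if word_count <= 0:
        return 0  # assumption: nothing to scan, nothing charged
    return math.ceil(word_count / WORDS_PER_CREDIT)


def credits_for_batch(word_counts: list[int]) -> int:
    """Total credits when every document is scanned separately."""
    return sum(credits_for_document(words) for words in word_counts)


# The same 6,000 words, split two ways (made-up workloads).
short_posts = [60] * 100   # 100 short items of 60 words each
long_reports = [3000] * 2  # 2 long documents of 3,000 words each

print(credits_for_batch(short_posts))
print(credits_for_batch(long_reports))
```

Under that assumption, the 100 short posts use all 100 credits of a Personal plan, while the two long reports use 24, even though both workloads total 6,000 words.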

| Plan | Monthly price | Annual-billing price | Included monthly scan volume | Best fit |
|---|---|---|---|---|
| Personal | $16.99/month[2] | $13.99/month[2] | 100 credits, up to 25,000 words[2] | Individual writers, tutors, light editorial review |
| Pro | $99.99/month[2] | $74.99/month[2] | 1,000 credits, up to 250,000 words[2] | Small teams, agencies, frequent reviewers |
| Education | Custom[2] | Custom[2] | Customized during onboarding[2] | Schools, colleges, districts |
| Enterprise | Custom[2] | Custom[2] | Customized during onboarding[2] | Publishers, platforms, compliance teams |

The cancellation terms deserve attention. Copyleaks says subscriptions renew automatically unless canceled before the next billing cycle, canceling stops future renewals but does not immediately end current access, and refunds are only issued if the request is submitted within the first 10 days of the billing cycle and no pages or credits have been used.[2] If you only need occasional checks, compare the effective cost per reviewed document before choosing a paid plan.
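
The effective cost per reviewed document is simple to compute once you know how many documents you actually review each month. The Python sketch below uses the listed prices and assumes the workload fits within the plan's credits. The document volumes are hypothetical.

```python
# Listed prices in USD: (monthly billing, per-month price when billed annually).
PLANS = {
    "Personal": (16.99, 13.99),
    "Pro": (99.99, 74.99),
}


def cost_per_document(plan: str, documents_per_month: int,
                      annual_billing: bool = False) -> float:
    """Plan price divided by documents reviewed; ignores credit limits."""
    if documents_per_month <= 0:
        raise ValueError("documents_per_month must be positive")
    monthly_price, annual_price = PLANS[plan]
    price = annual_price if annual_billing else monthly_price
    return price / documents_per_month


# Hypothetical monthly volumes for an occasional, regular, and heavy user.
for docs in (5, 20, 100):
    monthly = cost_per_document("Personal", docs)
    annual = cost_per_document("Personal", docs, annual_billing=True)
    print(f"{docs:>3} docs/month: ${monthly:.2f} monthly, ${annual:.2f} annual billing")
```

The fewer documents you review, the more each one costs, so occasional users should weigh the free credits against a subscription that renews automatically.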

Best use cases

Schools and universities

Copyleaks fits schools because it can sit inside institutional workflows. Its LMS integration page lists Brightspace, Canvas, Google Classroom, Moodle, Blackboard, Edsby, Sakai, and Schoology.[6] That matters because teachers should not have to copy student work into a separate detector one document at a time.

The strongest academic use is not automatic accusation. It is triage. A high AI score can prompt a teacher to review drafts, compare writing history, ask the student to explain their process, and check whether the assignment instructions allowed AI assistance. For policy design, pair this review with our guide to the best AI detectors for teachers and schools.

Publishers and content teams

Copyleaks can help editors screen contributor submissions, SEO drafts, syndicated content, and outsourced writing. It is especially useful when AI detection and plagiarism detection need to live in the same report. For teams buying adjacent tools, Best AI Summarizer Tools for Long Documents and Best AI Research Tools for Academics cover tools that may appear earlier in the content workflow.

Software platforms and internal systems

Copyleaks is also a platform product. The API documentation says the default account rate is 10 requests per second and that API rate-limit errors return HTTP 429, after which the host cannot authenticate with the API for 5 minutes.[4] Those limits are manageable for many internal review tools, but high-volume platforms should verify rate limits, retention settings, and support terms before implementation.
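
Given those limits, an internal tool may want to pace its own requests so it never triggers the lockout. The Python sketch below spaces calls evenly and pauses for the full five minutes after an HTTP 429. The `send` callable is a hypothetical placeholder for whatever function performs one request and returns the status code; it is not part of any Copyleaks SDK. The fake clock exists only so the demo runs without sleeping.

```python
import time
from typing import Callable

MAX_REQUESTS_PER_SECOND = 10  # default account rate cited above
LOCKOUT_SECONDS = 5 * 60      # lockout after an HTTP 429, as cited above


class ThrottledSender:
    """Spaces calls evenly and waits out the lockout after a 429.

    `send` is a hypothetical callable: one request in, HTTP status out.
    """

    def __init__(self, send: Callable[[str], int],
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self._send = send
        self._clock = clock
        self._sleep = sleep
        self._min_interval = 1.0 / MAX_REQUESTS_PER_SECOND
        self._next_allowed = 0.0  # earliest time the next request may go out

    def submit(self, payload: str) -> int:
        wait = self._next_allowed - self._clock()
        if wait > 0:
            self._sleep(wait)
        status = self._send(payload)
        now = self._clock()
        if status == 429:
            # Rate limit hit: stay silent for the whole lockout window.
            self._next_allowed = now + LOCKOUT_SECONDS
        else:
            self._next_allowed = now + self._min_interval
        return status


class FakeClock:
    """Test double: sleeping just advances the clock."""

    def __init__(self) -> None:
        self.now = 0.0

    def time(self) -> float:
        return self.now

    def sleep(self, seconds: float) -> None:
        self.now += seconds


# Demo: the second call is rejected, so the third waits out the lockout.
clock = FakeClock()
statuses = iter([200, 429, 200])
sender = ThrottledSender(lambda payload: next(statuses), clock.time, clock.sleep)
results = [sender.submit(f"doc-{i}") for i in range(3)]
print(results, round(clock.now, 1))
```

The sender does not retry on its own. The caller decides whether to resubmit a rejected payload, and a production client would probably add a safety margin below the documented rate.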

Developers should also note a documentation mismatch around code. The public AI Detector page says the detector can analyze and identify AI-generated code.[1] However, Copyleaks release notes say that on December 16, 2025, AI code detection capabilities were deprecated from submit endpoints, while natural-language AI Content Detection remains supported.[11] If source-code detection is central to your purchase, confirm the current product path with Copyleaks sales before buying.

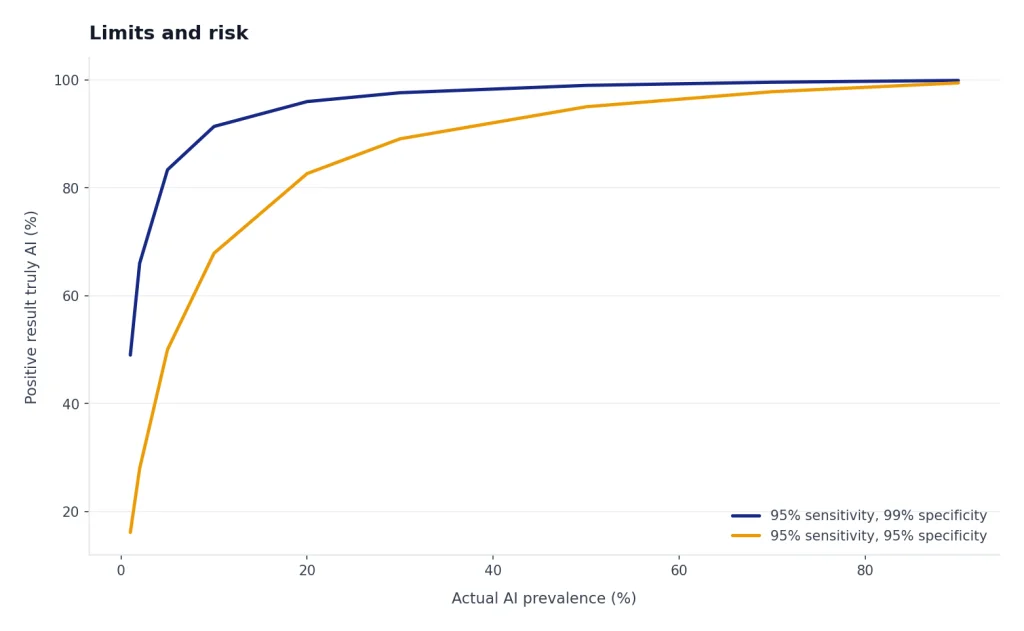

Limits and risk

The biggest risk in any Copyleaks-assisted review is overconfidence. AI detectors estimate likely authorship signals. They do not observe the writing process. They do not know whether a student used an outline, a grammar checker, an AI rewrite, a human editor, a translation tool, or no assistance at all.

OpenAI’s own discontinued AI Text Classifier is a useful cautionary example. OpenAI said the classifier was no longer available as of July 20, 2023, due to its low rate of accuracy; in its evaluation, it identified 26% of AI-written text as likely AI-written and incorrectly labeled human-written text as AI-written 9% of the time.[10] OpenAI also warned that classifiers can be unreliable on short text, can confidently mislabel human writing, can perform worse outside recommended languages, and can be evaded by edited AI-written text.[10]

Copyleaks is not OpenAI’s old classifier, and the evidence above suggests it performs better in several benchmarks. The caution still applies. A detector score should be one input. Strong review processes use drafts, version history, citations, assignment design, interviews, and writing samples from the same author. If the issue is plagiarism rather than AI use, use a plagiarism-first workflow and compare options in our Best Plagiarism Checkers breakdown.

Privacy and compliance also matter. Copyleaks says it has SOC 2 and SOC 3 certification, that its SOC 3 audit was performed by KPMG, and that it is committed to GDPR practices including encryption and allowing users to delete uploaded content.[7] Those are useful signals, but institutions should still review contracts, data-processing terms, retention settings, student privacy obligations, and whether uploaded work becomes part of any shared comparison database.

Alternatives

Copyleaks is not the only AI detector worth evaluating. The right alternative depends on the environment. A classroom needs policy tools and review history. A publisher needs workflow speed and plagiarism checks. A developer needs API stability. A solo writer may need only a low-cost sanity check.

| Option | Best reason to consider it | Main caution |
|---|---|---|
| Copyleaks | Best fit when AI detection, plagiarism checking, LMS, API, and reporting need to work together. | Scores still need human review, and code-detection support should be confirmed.[11] |
| Turnitin-style academic systems | Best when a school already standardizes submission, grading, and similarity reports in one platform. | Institutional convenience can make users overtrust automated signals. |
| GPTZero-style standalone detectors | Best for quick checks and lighter workflows. | May not provide the same originality, LMS, and governance stack. |
| Originality-focused publisher tools | Best for editorial teams that primarily screen web content and outsourced writing. | May be less suitable for schools that need LMS administration. |
| No detector | Best when the organization uses process-based assessment, drafts, oral defense, and version history. | Harder to scale across large document volumes. |

If your real need is content production rather than detection, look at Best ChatGPT Prompt Generator Tools, AI Resume Builder Tools Compared, and OpenAI Token Counter Tools. Those tools solve different problems, but they often appear in the same writing and review stack.

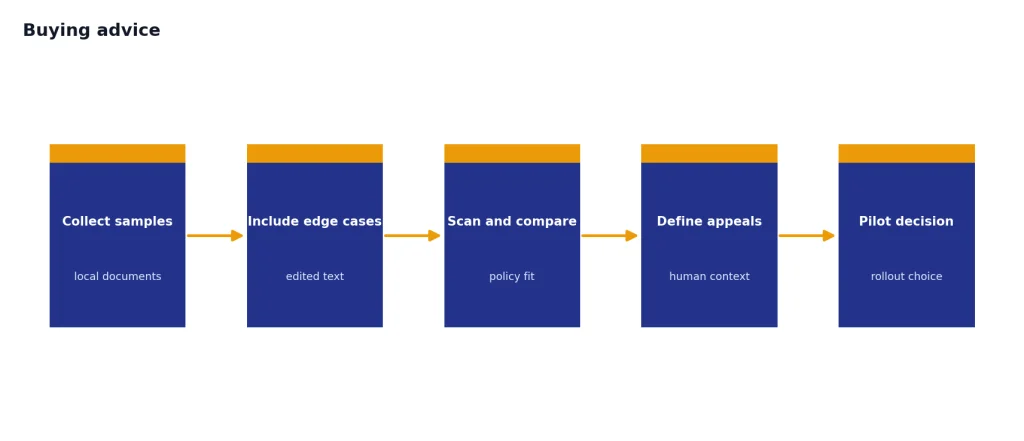

Buying advice

Choose Copyleaks if you need a serious originality review system and you are willing to build a fair review process around it. The tool is strongest when it flags risk, shows where the risk appears, and gives reviewers enough context to investigate. It is weakest when an organization treats a percentage as a verdict.

Before buying, test Copyleaks on documents from your own environment. Use known human writing, known AI-generated writing, edited AI writing, translated writing, citation-heavy writing, formulaic writing, and short submissions. Compare results against your policy. If your organization cannot explain how a person can appeal or contextualize a score, it is not ready to use any detector in a high-stakes workflow.
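
One way to structure such a pilot is to record, for each test document, its category, whether it was actually AI-generated, and whether the detector flagged it, then compare error rates per category. The Python sketch below uses made-up records and hypothetical category names. A real pilot needs far more documents per category before the rates mean anything.

```python
from collections import defaultdict

# Each record: (category, is_actually_ai, detector_flagged_ai). Made-up data.
pilot_results = [
    ("known human", False, False),
    ("known human", False, False),
    ("formulaic human", False, True),
    ("formulaic human", False, False),
    ("known AI", True, True),
    ("known AI", True, True),
    ("edited AI", True, False),
    ("edited AI", True, True),
]


def error_rates_by_category(results):
    """Per-category false-positive and false-negative rates.

    A rate is None when the category has no documents of the relevant kind.
    """
    counts = defaultdict(lambda: {"fp": 0, "fn": 0, "human": 0, "ai": 0})
    for category, is_ai, flagged in results:
        bucket = counts[category]
        if is_ai:
            bucket["ai"] += 1
            if not flagged:
                bucket["fn"] += 1  # AI text the detector missed
        else:
            bucket["human"] += 1
            if flagged:
                bucket["fp"] += 1  # human text wrongly flagged
    summary = {}
    for category, b in counts.items():
        summary[category] = {
            "false_positive_rate": b["fp"] / b["human"] if b["human"] else None,
            "false_negative_rate": b["fn"] / b["ai"] if b["ai"] else None,
        }
    return summary


rates = error_rates_by_category(pilot_results)
for category, result in rates.items():
    print(category, result)
```

Breaking results out by category matters because an acceptable overall error rate can hide a high false-positive rate on one kind of writing, such as formulaic or translated work.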

For individual buyers, the Personal plan is the natural starting point. For schools and platforms, skip the casual plan comparison and talk to sales about LMS, API, data handling, retention, and admin controls. For publishers, run a pilot with real contributor material before standardizing on a detector. A good Copyleaks deployment reduces review friction. It should not reduce fairness.

Frequently asked questions

Is Copyleaks accurate?

Copyleaks reports over 99% accuracy and a 0.03% likelihood of human-written content being mislabeled as AI-generated.[1] Independent studies have also found strong performance in some benchmarks.[8] Do not treat that as a universal guarantee. Test it on your own document types before using it for decisions that affect students, employees, or contractors.

Can Copyleaks detect ChatGPT?

Copyleaks says it can detect content from GPT-5, GPT-4, ChatGPT, Claude, Gemini, DeepSeek, Llama, Bloom, Rytr, Jasper, and other systems.[1] That claim means the detector is trained to recognize signals associated with those outputs. It does not mean every edited or humanized ChatGPT passage will be caught.

How much does Copyleaks cost?

The Personal plan is listed at $16.99 per month or $13.99 per month when billed annually, and the Pro plan is listed at $99.99 per month or $74.99 per month when billed annually.[2] Education and Enterprise pricing is custom.[2] Check the current pricing page before purchase because SaaS pricing can change.

Is Copyleaks safe for student work?

Copyleaks says it has SOC 2 and SOC 3 certification and follows GDPR practices.[7] That is a good starting point, but schools should still review the contract, data-processing terms, retention settings, and student privacy requirements. Safety is not just a vendor claim; it depends on configuration and policy.

Can Copyleaks replace plagiarism checking?

No. AI detection and plagiarism detection answer different questions. AI detection estimates whether text resembles machine-generated writing, while plagiarism detection looks for copied or closely matched source material. Copyleaks can combine both in a single workflow, which is one reason it is useful for schools and publishers.[2]

Should a teacher punish a student based only on a Copyleaks score?

No. A Copyleaks score should start a review, not end it. Teachers should examine drafts, version history, citations, the assignment prompt, the student’s prior writing, and the student’s explanation. This is especially important because even strong detectors can produce false positives and false negatives.