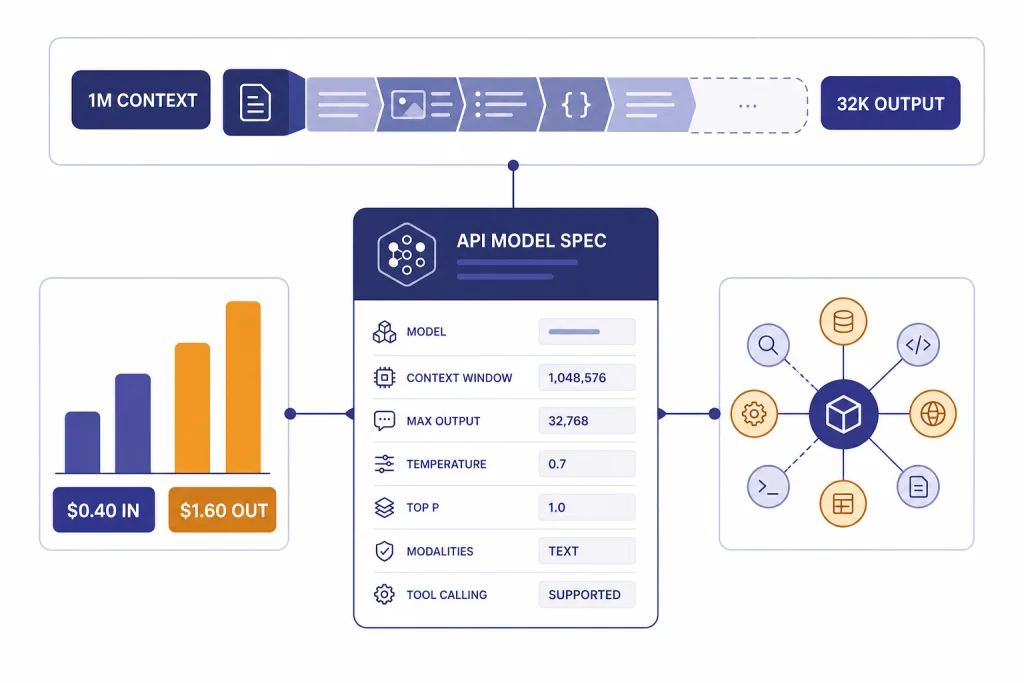

GPT-4.1 mini is OpenAI’s smaller, faster member of the GPT-4.1 family. It is built for developers who need strong instruction following, tool calling, coding support, and long-context processing at a lower cost than the full GPT-4.1 model. The API model supports text input and output, image input, a 1,047,576-token context window, and up to 32,768 output tokens.[2] It is best suited for production assistants, structured extraction, codebase Q&A, support automation, and high-volume workflows where the full GPT-4.1 model is not necessary. It is not the best choice for heavy reasoning or native audio and video generation.

What GPT-4.1 mini is

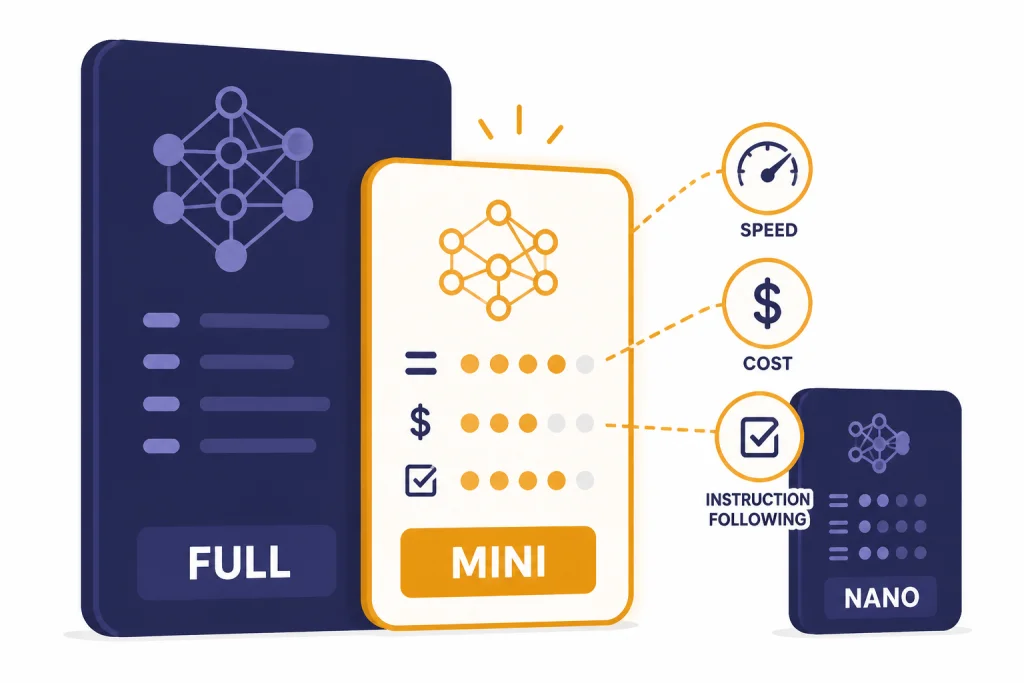

GPT-4.1 mini is the cost-and-speed tier of OpenAI’s GPT-4.1 API family. OpenAI launched GPT-4.1, GPT-4.1 mini, and GPT-4.1 nano in the API on April 14, 2025.[1] The model sits between the full GPT-4.1 model and GPT-4.1 nano. It aims to keep much of the family’s instruction-following and long-context behavior while lowering latency and token cost.

The practical way to think about GPT-4.1 mini is simple. Use it when you need a general-purpose API model that can follow detailed instructions, call tools, return structured data, and read very large inputs. Move up to a stronger model when the task depends on deep reasoning, difficult math, high-stakes analysis, or nuanced creative judgment. Move down to a smaller model when the task is mostly classification, tagging, or simple extraction.

For broader model selection, start with our side-by-side comparison of all GPT models. If your decision is mostly about speed, compare it with the fastest GPT model. If your decision is mostly about cost, see our OpenAI API pricing guide.

Core specs

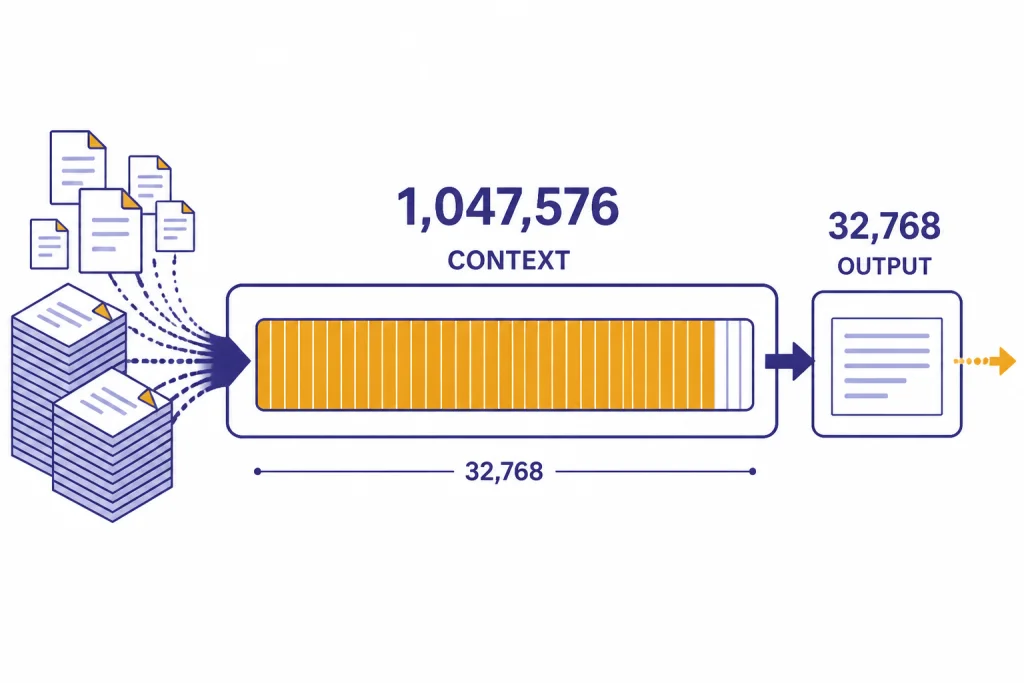

GPT-4.1 mini’s defining spec is its long context window. OpenAI’s model page lists a 1,047,576-token context window and a 32,768-token maximum output length.[2] OpenAI’s launch post described the GPT-4.1 family as supporting up to 1 million tokens of context, up from 128,000 for earlier GPT-4o models.[1] For developers, that changes the design space. You can pass long documents, large code files, extensive conversation history, or multiple retrieved passages without splitting every task into small chunks.
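
Before sending a very large prompt, it helps to check that the input plus the output you reserve fits inside the published limits. The sketch below assumes a rough rule of thumb of about 4 characters per token for English text; that heuristic is an assumption, not GPT-4.1 mini's actual tokenizer. It also assumes input and output count against the same window.

```python
# GPT-4.1 mini limits as listed on OpenAI's model page (cited above).
CONTEXT_WINDOW = 1_047_576
MAX_OUTPUT = 32_768


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token count. About 4 characters per token is a common rule of
    thumb for English; it is an assumption, not the model's tokenizer."""
    return int(len(text) / chars_per_token) + 1


def fits_in_context(prompt: str, max_output_tokens: int) -> bool:
    """True if the estimated prompt plus the reserved output budget stays
    inside the context window (assumes both share the same window)."""
    if not 0 < max_output_tokens <= MAX_OUTPUT:
        raise ValueError("max_output_tokens must be between 1 and 32,768")
    return estimate_tokens(prompt) + max_output_tokens <= CONTEXT_WINDOW
```

For exact counts, swap the character heuristic for a real tokenizer such as the tiktoken library.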

The model accepts text and image input and returns text output.[2] The model page lists audio and video as unsupported modalities.[2] If your workflow depends on speech transcription, image generation, or video generation, pair GPT-4.1 mini with a specialized model such as Whisper, DALL-E 3, or Sora instead of treating it as an all-media model.

| Spec | GPT-4.1 mini | What it means in practice |
|---|---|---|
| Context window | 1,047,576 tokens[2] | Large enough for long documents, multi-file code context, and extensive retrieval payloads. |
| Maximum output | 32,768 tokens[2] | Long responses are possible, but you should still set output limits for cost control. |
| Knowledge cutoff | June 1, 2024[2] | The model does not inherently know later events unless you provide context or use retrieval. |
| Input modalities | Text and image[2] | Useful for text-heavy work and image understanding, not native audio or video pipelines. |
| Output modality | Text[2] | Use other models for image, voice, or video output. |
| Supported features | Streaming, function calling, structured outputs, fine-tuning, and predicted outputs[2] | Suitable for production API apps that need reliable formatting and tool use. |

OpenAI has not published an official parameter count for GPT-4.1 mini. Treat any claimed parameter number from third-party posts as an estimate unless OpenAI publishes it.

If context size is your main selection factor, check our reference of context window sizes for every GPT model before you build around a maximum prompt size.

Pricing and cost

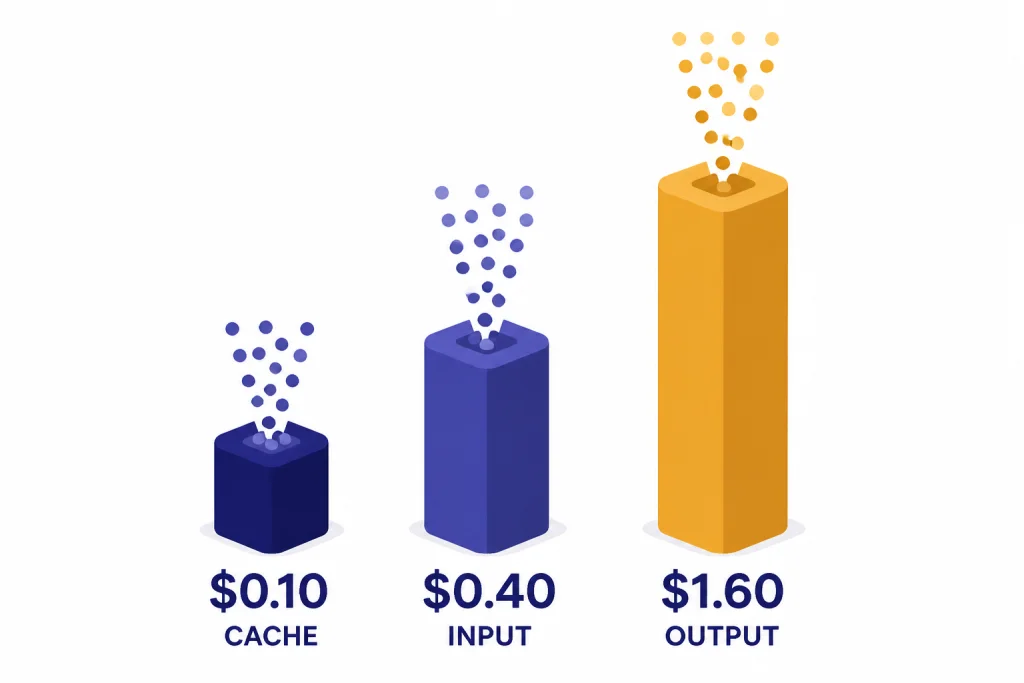

OpenAI lists GPT-4.1 mini at $0.40 per 1 million input tokens, $0.10 per 1 million cached input tokens, and $1.60 per 1 million output tokens.[2] OpenAI’s GPT-4.1 launch post published the same prices and described a 50% Batch API discount for the GPT-4.1 family.[1] A third-party pricing tracker also listed GPT-4.1 mini at $0.40 per 1 million input tokens and $1.60 per 1 million output tokens in early 2026, which corroborates the public rate.[6]

The most important budgeting point is that output tokens cost 4 times as much as input tokens at the standard GPT-4.1 mini API rate. A short classifier with a large input and a tiny label output can be very cheap. A writing assistant that produces long drafts can cost more than expected, even if the prompt is small.
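
A quick way to see the input/output asymmetry is to price two requests with the rates quoted above. This is a minimal sketch; the token counts are illustrative, and the Batch discount is assumed to apply uniformly to every token type.

```python
# Standard GPT-4.1 mini API rates in USD per 1M tokens (quoted above).
INPUT_PER_M = 0.40
CACHED_INPUT_PER_M = 0.10
OUTPUT_PER_M = 1.60
BATCH_DISCOUNT = 0.50  # assumption: applied uniformly to all token types


def request_cost(input_tokens: int, output_tokens: int,
                 cached_tokens: int = 0, batch: bool = False) -> float:
    """Estimated USD cost of one request. cached_tokens is the portion of
    input_tokens billed at the cached-input rate."""
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_tokens
    cost = (uncached * INPUT_PER_M
            + cached_tokens * CACHED_INPUT_PER_M
            + output_tokens * OUTPUT_PER_M) / 1_000_000
    return cost * (1 - BATCH_DISCOUNT) if batch else cost


# Large input, tiny label: 10,000 tokens in, 5 tokens out.
classifier = request_cost(10_000, 5)
# Small prompt, long draft: 1,000 tokens in, 4,000 tokens out.
drafter = request_cost(1_000, 4_000)
```

With these illustrative numbers, the drafting call costs about $0.0068 versus about $0.0040 for the classifier, even though its prompt is ten times smaller.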

| Token type | Price | Cost control tactic |
|---|---|---|
| Input | $0.40 per 1M tokens[2] | Trim irrelevant context and use retrieval instead of pasting full archives. |
| Cached input | $0.10 per 1M tokens[2] | Put stable system prompts, schemas, and repeated context in cache-friendly positions. |
| Output | $1.60 per 1M tokens[2] | Set explicit response lengths and request structured fields instead of prose when possible. |

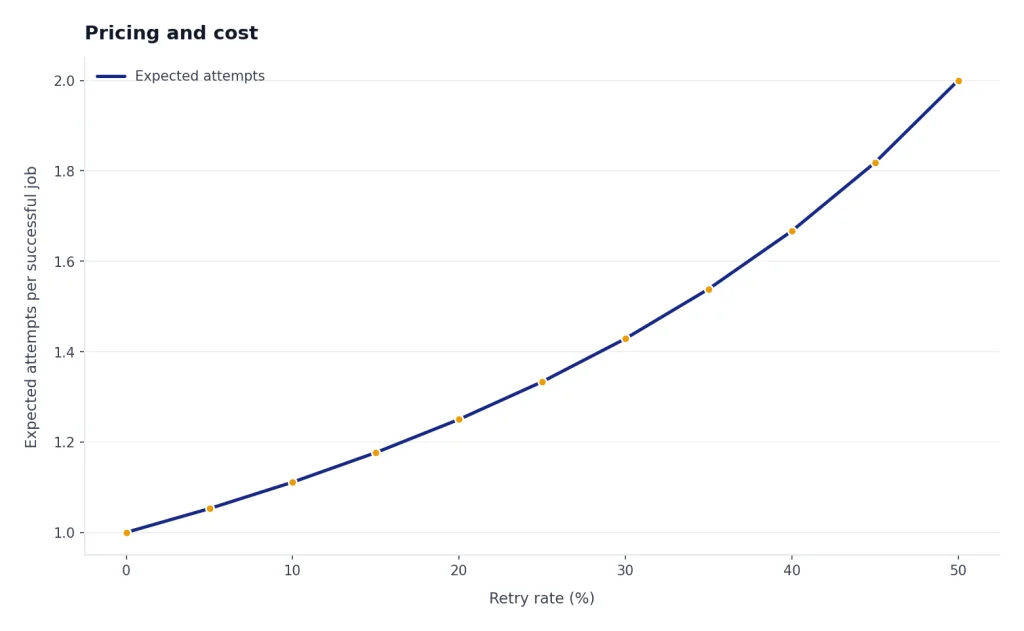

For high-volume workloads, test GPT-4.1 mini against smaller and newer alternatives with your own prompts. A model that is cheaper per token can still cost more if it needs retries, longer prompts, or more validation. Our cheapest GPT model comparison is a better starting point if price is the main constraint.
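
The retry point can be made concrete with a simple expected-cost calculation. This sketch assumes each attempt passes validation independently with the same probability and that failures are retried until one passes, so the expected number of attempts is 1 divided by the success rate. The per-attempt costs and success rates are hypothetical.

```python
def cost_per_success(cost_per_attempt: float, success_rate: float) -> float:
    """Expected spend per accepted result when failed attempts are retried
    until one passes. Assumes independent attempts with a fixed success
    probability, so expected attempts = 1 / success_rate."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_attempt / success_rate


# Hypothetical comparison: a pricier model that passes 95% of the time
# versus a cheaper one that passes only half the time.
reliable = cost_per_success(0.0010, 0.95)  # about $0.00105 per accepted result
flaky = cost_per_success(0.0006, 0.50)     # $0.00120 per accepted result
```

This ignores the cost of running validation and the extra latency of retries, both of which push further in the same direction.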

Benchmarks and performance

OpenAI’s published GPT-4.1 benchmark table reports GPT-4.1 mini at 87.5% on MMLU, 65.0% on GPQA Diamond, 23.6% on SWE-bench Verified, and 34.7% on Aider’s polyglot benchmark.[1] These numbers show a capable general model, not a top reasoning specialist. In the same OpenAI table, the full GPT-4.1 model scores 90.2% on MMLU and 54.6% on SWE-bench Verified, while GPT-4.1 nano scores 80.1% on MMLU and 9.8% on Aider’s polyglot benchmark.[1]

Benchmarks should not be read as a universal ranking. GPT-4.1 mini may beat a larger model on a narrow extraction task because it follows the schema more consistently. It may lose on a hard planning task because it does not reason as deeply as a dedicated reasoning model. Build a small evaluation set from your own tickets, documents, prompts, and code diffs before committing to it.

| Task type | Expected fit | Why |
|---|---|---|
| Instruction-following assistant | Strong | OpenAI describes GPT-4.1 mini as excelling at instruction following and tool calling.[2] |
| Code review and codebase Q&A | Good | The long context window helps when the model must inspect many files. |
| Hard algorithmic repair | Mixed | Use your own tests or compare with a stronger coding model from our best GPT model for coding guide. |
| High-volume classification | Good, but test nano too | GPT-4.1 nano may be cheaper for low-risk labels, while mini gives more headroom. |
| Deep reasoning | Not ideal | Use a reasoning-focused model when correctness depends on multi-step deliberation. |

For creative drafting, GPT-4.1 mini can be useful when you value cost and formatting control. For final editorial tone, long-form style, and sensitive rewriting, compare it with our best GPT model for writing recommendations.

Best use cases

GPT-4.1 mini works best when the task has clear instructions, a defined output shape, and enough context to justify using a long-context model. It is a practical production model rather than a novelty model.

Long-document analysis

Use GPT-4.1 mini for contract summaries, policy comparisons, research-note synthesis, meeting transcript cleanup, and knowledge-base consolidation. Its context window lets you pass large source bundles, but you should still ask for grounded answers with citations to the provided text. Long context does not remove the need for source discipline.
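
One way to enforce source discipline is to number each passage in the prompt and then check that every citation in the answer points at a real passage. This is a minimal sketch; the prompt wording and helper names are illustrative assumptions, not an OpenAI-prescribed format.

```python
import re


def build_grounded_prompt(question: str, passages: list) -> str:
    """Number each source passage so the model can cite it as [1], [2], ..."""
    sources = "\n\n".join(
        f"[{i}] {text.strip()}" for i, text in enumerate(passages, start=1)
    )
    return (
        "Answer using only the numbered sources below. After each claim, cite "
        "the supporting source number in brackets, for example [2]. If the "
        "sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )


def invalid_citations(answer: str, n_sources: int) -> set:
    """Citation numbers in the answer that do not match any provided source."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    return {n for n in cited if not 1 <= n <= n_sources}
```

A non-empty result from `invalid_citations` is a cheap signal to retry or flag the answer for review. It does not prove that valid citations actually support the claims.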

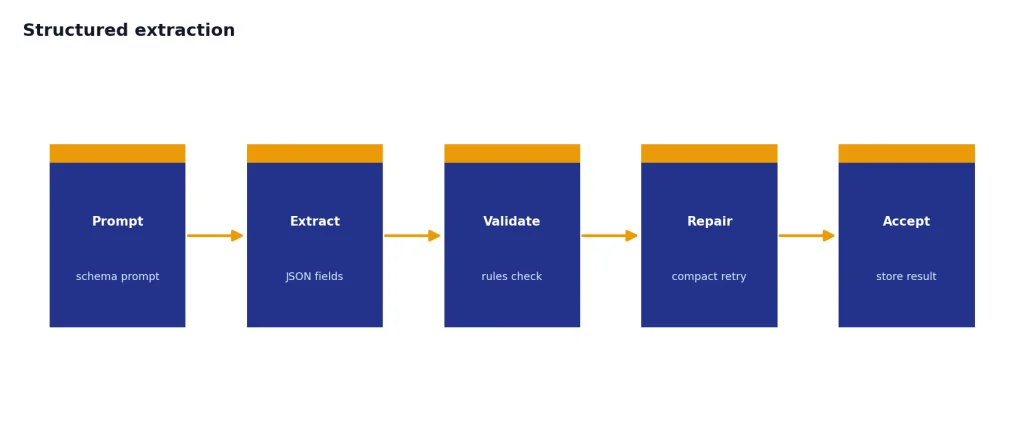

Structured extraction

The model is a good fit for turning messy inputs into JSON, tables, tags, summaries, or routing decisions. Pair structured outputs with validation. If the schema fails, retry with a compact repair prompt rather than sending the entire job again.
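
The validate-then-repair loop can be sketched without any API dependency. In this example, `call_model` is a placeholder for your own wrapper around the API (prompt in, text out), and the ticket schema is hypothetical. Even with structured outputs enabled, semantic checks such as allowed values still belong in your own code.

```python
import json
from typing import Callable, Optional, Tuple

# Hypothetical ticket schema used for illustration.
REQUIRED_FIELDS = {"customer_id": str, "priority": str, "summary": str}
ALLOWED_PRIORITIES = {"low", "medium", "high"}


def validate(raw: str) -> Tuple[Optional[dict], Optional[str]]:
    """Return (data, None) on success or (None, error message) on failure."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, f"invalid JSON: {exc.msg}"
    if not isinstance(data, dict):
        return None, "top-level value must be a JSON object"
    for field, expected in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected):
            return None, f"field '{field}' is missing or not a {expected.__name__}"
    if data["priority"] not in ALLOWED_PRIORITIES:
        return None, "priority must be one of: low, medium, high"
    return data, None


def repair_prompt(bad_output: str, error: str) -> str:
    """Compact follow-up: only the error and the bad output, not the full job."""
    return (
        f"Your previous output failed validation: {error}. "
        "Return only corrected JSON with the keys customer_id, priority, summary.\n"
        f"Previous output:\n{bad_output}"
    )


def extract_with_repair(call_model: Callable[[str], str], job_prompt: str,
                        max_repairs: int = 2) -> dict:
    """Run the job once, then send compact repair prompts until valid."""
    raw = call_model(job_prompt)
    data, error = validate(raw)
    repairs = 0
    while data is None and repairs < max_repairs:
        raw = call_model(repair_prompt(raw, error))
        data, error = validate(raw)
        repairs += 1
    if data is None:
        raise ValueError(f"extraction failed after {max_repairs} repairs: {error}")
    return data
```

The repair prompt carries only the failing output and the error message, so a retry costs far fewer input tokens than resending a long source document.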

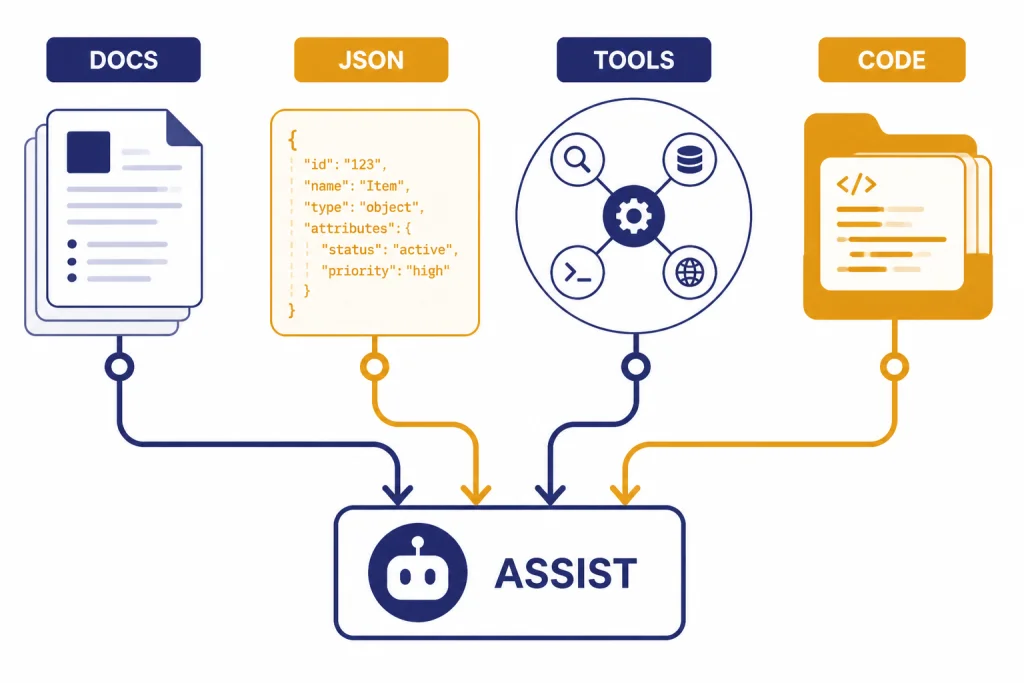

Tool-calling agents

OpenAI lists function calling and structured outputs as supported GPT-4.1 mini features.[2] That makes it suitable for support bots, internal operations assistants, CRM workflows, and data-entry automations. Keep tools narrow. The model should choose among safe, well-described actions, not improvise broad permissions.
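
"Keep tools narrow" translates into two things in code: a tightly described tool schema and a dispatcher that only runs allowlisted handlers. This sketch assumes the flat function-tool shape used by the Responses API (`type`, `name`, `description`, `parameters`, `strict`). Chat Completions nests these fields under a `function` key, so check the current API reference. The `lookup_order` tool and its handler are hypothetical.

```python
import json


def lookup_order(order_id: str) -> dict:
    # Hypothetical read-only handler; a real one would query your order system.
    return {"order_id": order_id, "status": "shipped"}


TOOLS = [{
    "type": "function",
    "name": "lookup_order",
    "description": "Look up the shipping status of one order by its ID. Read-only.",
    "parameters": {
        "type": "object",
        "properties": {"order_id": {"type": "string", "description": "Order ID"}},
        "required": ["order_id"],
        "additionalProperties": False,
    },
    "strict": True,
}]

# Only tools listed here can ever be executed, whatever name the model emits.
HANDLERS = {"lookup_order": lookup_order}


def dispatch(name: str, arguments_json: str) -> str:
    """Run an allowlisted tool call and return a JSON string for the model."""
    handler = HANDLERS.get(name)
    if handler is None:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        args = json.loads(arguments_json)
    except json.JSONDecodeError:
        return json.dumps({"error": "arguments were not valid JSON"})
    spec = next(t for t in TOOLS if t["name"] == name)
    allowed = set(spec["parameters"]["properties"])
    required = set(spec["parameters"]["required"])
    # Reject unexpected or missing argument names (value types are not checked here).
    if not isinstance(args, dict) or set(args) - allowed or required - set(args):
        return json.dumps({"error": "arguments do not match the tool schema"})
    return json.dumps(handler(**args))
```

Returning errors as JSON, instead of raising, lets the model see what went wrong and choose another action.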

Codebase Q&A and lightweight coding

GPT-4.1 mini can answer questions across multiple files, explain unfamiliar modules, draft tests, and help with small refactors. It is less compelling for difficult bug hunts where a stronger coding or reasoning model may save time. Use it as a first-pass model, then escalate when tests fail or the change touches critical systems.

Customer support and internal help desks

The model is well matched to support triage, answer drafting, policy lookup, and escalation summaries. The right architecture is retrieval plus GPT-4.1 mini, not a giant static prompt. Store the current policy in your retrieval layer so the model’s June 1, 2024 knowledge cutoff does not become a product bug.[2]
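
The retrieval step only needs to pick the few current policy passages relevant to a ticket and hand them to GPT-4.1 mini. The sketch below uses plain word overlap as a stand-in for a real retriever such as embeddings or a search index. The policy store and its IDs are hypothetical.

```python
import re


def terms(text: str) -> set:
    """Lowercased alphanumeric words; a stand-in for real tokenization."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, passages: dict, k: int = 3) -> list:
    """Return up to k passage IDs ranked by word overlap with the query.
    Passages with no overlap are dropped; ties break alphabetically by ID."""
    q = terms(query)
    scored = [(len(q & terms(body)), pid) for pid, body in passages.items()]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [pid for _, pid in ranked[:k]]


# Hypothetical policy store; edit entries here when a policy changes, and the
# next request picks up the new text without any model change.
POLICIES = {
    "refunds-2025": "Refunds are issued within 14 days of a return.",
    "shipping": "Orders ship within 2 business days.",
    "privacy": "We never sell customer data.",
}
```

The selected passages can then be placed in the prompt with source IDs, so answers reflect the stored policy rather than what the model learned before its cutoff.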

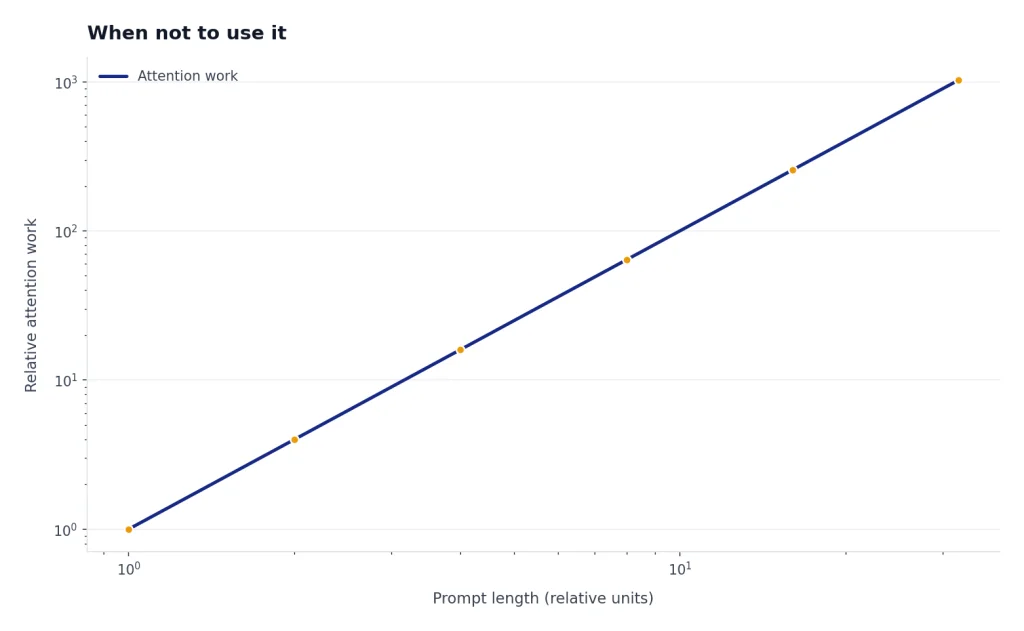

When not to use it

Do not choose GPT-4.1 mini only because it has a large context window. A large prompt can still be slow, expensive, and harder to audit. Use retrieval, chunk ranking, and summaries when they produce a smaller and more relevant prompt.

Avoid it for native audio, native video, and image generation workflows. GPT-4.1 mini’s model page lists text output, with image as an input modality, and audio and video as unsupported.[2] For image generation, start with the best GPT model for image generation. For video generation, consider Sora 2 or consult a dedicated video-model guide.

Be careful with high-stakes domains. A smaller model can be efficient, but cost is not the only variable. For legal, medical, financial, security, and safety-sensitive decisions, use human review, stronger models where appropriate, narrow tools, and measurable evaluations.

Do not use GPT-4.1 mini as a substitute for a reasoning model when the task requires deliberate multi-step analysis. If your prompt includes phrases such as “prove,” “derive,” “optimize,” “debug a failing distributed system,” or “choose the safest treatment,” you should test a reasoning model such as OpenAI o4-mini or a stronger current alternative.

API access and implementation notes

OpenAI lists GPT-4.1 mini on the Responses API, Chat Completions API, Realtime API, Assistants API, Batch API, and fine-tuning endpoint.[2] For new applications, the Responses API is usually the cleaner default because it is designed around modern tool use and multimodal inputs. Existing systems that already use Chat Completions can still integrate the model through that endpoint.

OpenAI lists the alias gpt-4.1-mini and the dated snapshot gpt-4.1-mini-2025-04-14.[2] Use the dated snapshot when you need repeatable behavior for an evaluation, regulated workflow, or carefully tuned prompt. Use the alias when you want to follow OpenAI’s current default version and can tolerate behavior changes over time.
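
Choosing between alias and snapshot can be a single switch in the code that builds your request. This sketch only assembles keyword arguments for a Responses API call (`model`, `instructions`, `input`, `max_output_tokens`). The instruction text and default output limit are illustrative assumptions, and the commented lines show how the payload might be sent with the official Python SDK.

```python
ALIAS = "gpt-4.1-mini"
SNAPSHOT = "gpt-4.1-mini-2025-04-14"


def build_request(user_input: str, *, pinned: bool,
                  max_output_tokens: int = 1024) -> dict:
    """Keyword arguments for a Responses API call. pinned=True uses the dated
    snapshot for repeatable behavior; pinned=False follows the alias."""
    return {
        "model": SNAPSHOT if pinned else ALIAS,
        "instructions": "You are a concise support assistant.",  # illustrative
        "input": user_input,
        "max_output_tokens": max_output_tokens,  # explicit cap for cost control
    }


# Sending it (requires the openai package and OPENAI_API_KEY):
# from openai import OpenAI
# client = OpenAI()
# response = client.responses.create(**build_request("Where is my order?", pinned=True))
# print(response.output_text)
```

Keeping the pin decision in one place makes it easy to run evaluations against the snapshot while a separate environment tracks the alias.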

A practical implementation pattern is a three-step router. First, send simple labels and extraction jobs to a cheaper model. Second, send normal assistant, tool-calling, and long-context tasks to GPT-4.1 mini. Third, escalate failed or high-risk jobs to a stronger model. The router should use observable signals: input length, required output format, confidence checks, validation failures, and user tier.
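
The three-step router can be expressed as a small rule function over observable job signals. This is a sketch under stated assumptions: the task names, the 8,000-token threshold, and the escalation model are hypothetical placeholders, and confidence checks and user tier are left out for brevity. Substitute the tiers and thresholds your own evaluation supports.

```python
from dataclasses import dataclass

CHEAP_MODEL = "gpt-4.1-nano"
DEFAULT_MODEL = "gpt-4.1-mini"
STRONG_MODEL = "o4-mini"  # placeholder escalation target; use what you evaluate

SIMPLE_TASKS = {"classify", "tag", "extract"}  # hypothetical task labels
SHORT_INPUT_LIMIT = 8_000                       # hypothetical threshold in tokens


@dataclass
class Job:
    task: str
    input_tokens: int
    needs_tools: bool = False
    high_risk: bool = False
    validation_failures: int = 0


def route(job: Job) -> str:
    # Step 3 first: failed or high-risk jobs always escalate.
    if job.high_risk or job.validation_failures > 0:
        return STRONG_MODEL
    # Step 1: short, simple, tool-free jobs go to the cheapest tier.
    if (job.task in SIMPLE_TASKS and not job.needs_tools
            and job.input_tokens < SHORT_INPUT_LIMIT):
        return CHEAP_MODEL
    # Step 2: everything else goes to GPT-4.1 mini.
    return DEFAULT_MODEL
```

Checking escalation conditions first ensures a failed job never falls back to a cheaper tier.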

Test prompts in the OpenAI Playground before you commit code. Build a small benchmark with passing examples, failing examples, long examples, and adversarial examples. Measure schema validity, factual grounding, latency, token use, and retry rate. A cheap model with a high retry rate may not be cheap in production.
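
Once each benchmark case has been run, the metrics listed above reduce to a few aggregates. This sketch assumes you record one result per case; how you judge grounding (human review or a separate check) is up to you, and the field names are illustrative.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Run:
    case_id: str
    schema_valid: bool
    grounded: bool       # judged against the source text by a reviewer or check
    latency_s: float
    input_tokens: int
    output_tokens: int
    attempts: int        # 1 means no retry was needed


def summarize(runs: list) -> dict:
    """Aggregate benchmark results into the metrics worth tracking."""
    if not runs:
        raise ValueError("no runs to summarize")
    n = len(runs)
    return {
        "schema_validity": sum(r.schema_valid for r in runs) / n,
        "grounding_rate": sum(r.grounded for r in runs) / n,
        "median_latency_s": median(r.latency_s for r in runs),
        "avg_total_tokens": sum(r.input_tokens + r.output_tokens for r in runs) / n,
        "retry_rate": sum(r.attempts > 1 for r in runs) / n,
    }
```

Running the same cases against two models and comparing these summaries side by side makes the retry-rate tradeoff visible before it shows up on the bill.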

ChatGPT availability

GPT-4.1 mini’s API availability and ChatGPT availability are separate. OpenAI’s ChatGPT model release notes say GPT-4.1 mini replaced GPT-4o mini in ChatGPT for all users on May 14, 2025.[4] The same release notes say OpenAI retired GPT-4o, GPT-4.1, GPT-4.1 mini, and OpenAI o4-mini from ChatGPT on February 13, 2026, with no API changes at that time.[4]

That means this guide is mainly relevant for API users, product teams, and developers maintaining systems that call GPT-4.1 mini directly. If you are choosing a model inside ChatGPT, the available picker may differ from the API model catalog. If you are choosing a subscription for personal use, compare model access and price separately in our ChatGPT Plus price in 2026 guide.

There was also some early confusion around safety documentation. TechCrunch reported in April 2025 that GPT-4.1 did not ship with a separate system card, citing OpenAI’s position that GPT-4.1 was not a frontier model.[5] OpenAI’s later ChatGPT release notes say GPT-4.1 and GPT-4.1 mini went through standard safety evaluations and pointed readers to the Safety Evaluations Hub.[4] The safest reading is that OpenAI did safety evaluation work, but did not publish a traditional standalone system card for the GPT-4.1 family at launch.

Frequently asked questions

Is GPT-4.1 mini the same as GPT-4o mini?

No. GPT-4.1 mini is a member of the GPT-4.1 family, while GPT-4o mini belongs to the GPT-4o family. OpenAI said GPT-4.1 mini replaced GPT-4o mini in ChatGPT for all users on May 14, 2025, before later retiring GPT-4.1 mini from ChatGPT on February 13, 2026.[4]

How large is the GPT-4.1 mini context window?

OpenAI lists GPT-4.1 mini with a 1,047,576-token context window.[2] The launch post describes the GPT-4.1 family as supporting up to 1 million tokens of context.[1] In practice, you should still send the smallest relevant context that solves the task.

How much does GPT-4.1 mini cost?

OpenAI lists the standard GPT-4.1 mini API price at $0.40 per 1 million input tokens, $0.10 per 1 million cached input tokens, and $1.60 per 1 million output tokens.[2] OpenAI’s GPT-4.1 launch post published the same figures.[1] Your actual bill depends on prompt size, output length, caching, retries, and batch usage.

Does GPT-4.1 mini support images?

Yes, but only as input. OpenAI’s model page lists text and image input, with text output.[2] Use a dedicated image generation model if you need to create or edit images.

Is GPT-4.1 mini good for coding?

It is useful for code explanation, codebase Q&A, small refactors, tests, and tool-assisted development. OpenAI’s benchmark table reports GPT-4.1 mini at 23.6% on SWE-bench Verified and 34.7% on Aider’s polyglot benchmark.[1] For hard bug fixes or complex architecture work, compare it against stronger coding models on your own repository.

Is GPT-4.1 mini still available in ChatGPT?

Not as of this article’s publication date. OpenAI’s release notes say GPT-4.1 mini was retired from ChatGPT on February 13, 2026, while adding that there were no API changes at that time.[4] API users should check the current model catalog before deploying new production work.