OpenAI o3-mini is a small reasoning model built for coding, math, science, and other technical tasks where step-by-step problem solving matters more than broad multimodal capability. OpenAI released it on January 31, 2025, with access in ChatGPT and the API, and priced the API at $1.10 per 1 million input tokens, $0.55 per 1 million cached input tokens, and $4.40 per 1 million output tokens.[1][2] As of this guide’s date, April 16, 2026, o3-mini is best treated as a legacy cost-efficient reasoning option: useful for existing API workloads, but no longer the center of OpenAI’s ChatGPT reasoning lineup after later o-series releases.[5]

Quick verdict

OpenAI o3-mini made the most sense when you needed reasoning quality above a standard small chat model but did not want to pay for a full frontier reasoning model. It was positioned for STEM work, especially coding, math, and scientific reasoning, and it added production-friendly API features that earlier small reasoning models lacked.[2]

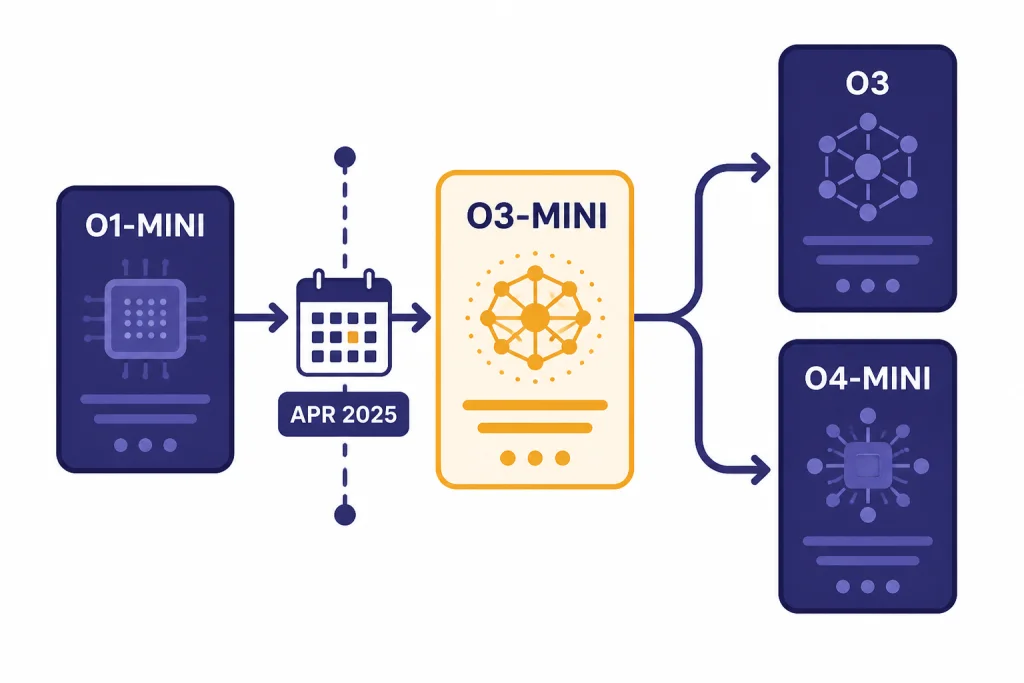

The model is less attractive as a new default in 2026. On April 16, 2025, OpenAI replaced o3-mini and o3-mini-high in the ChatGPT model selector with newer o-series options.[5] If you are maintaining an existing API integration, o3-mini can still be worth understanding. If you are choosing a model for a new application, compare it against OpenAI o3 and OpenAI o4-mini, and check our side-by-side comparison of all GPT models, before committing.

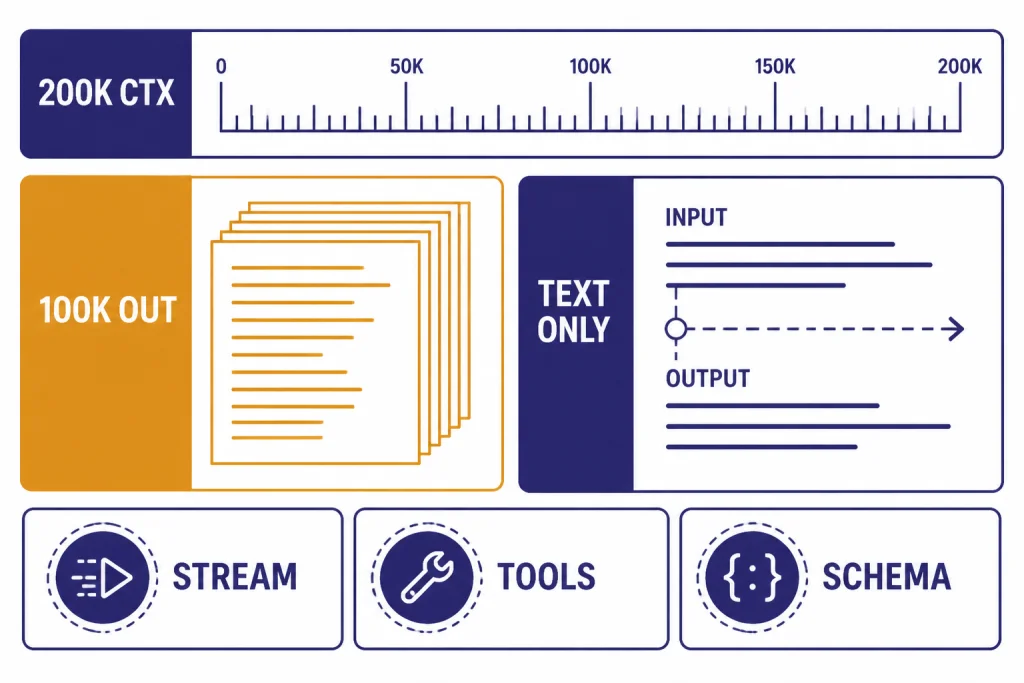

The short version: o3-mini is a technical reasoning workhorse. It is text-only. It supports long context, tool use through function calling, structured outputs, streaming, and Batch API workflows.[1] It is not the right choice for image understanding, audio, video, or the newest ChatGPT reasoning experience.

OpenAI o3-mini specs

OpenAI’s API documentation lists o3-mini as a small alternative to o3 with a 200,000-token context window, 100,000 maximum output tokens, text input and text output, and a knowledge cutoff of October 1, 2023.[1] Those details matter because o3-mini can handle large prompts, but it should not be treated as a current-events model unless you connect it to retrieval, search, or your own data.

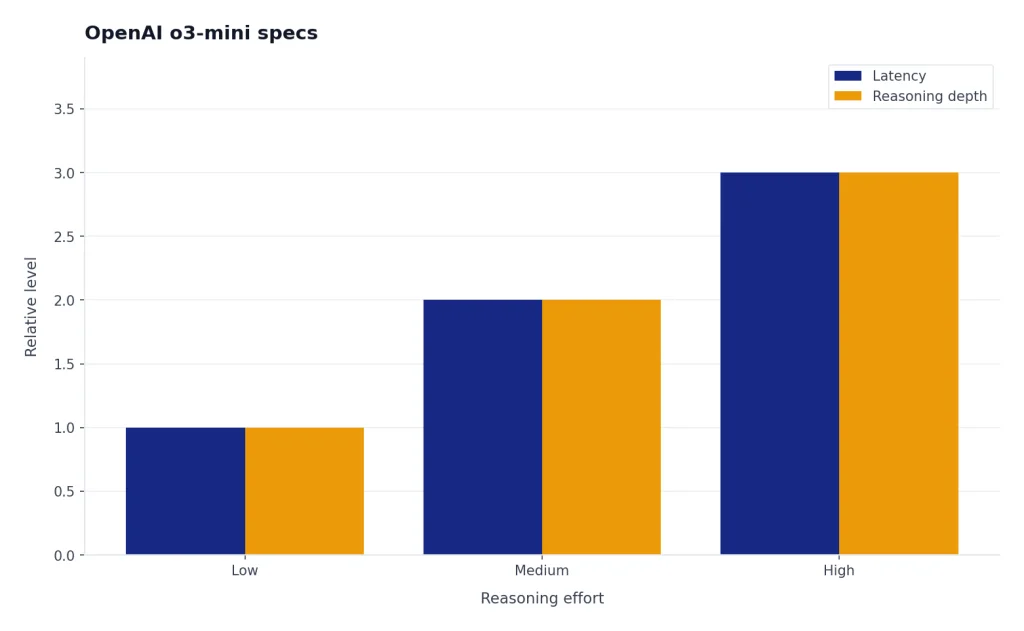

OpenAI’s launch note said developers could choose low, medium, or high reasoning effort, trading latency for depth.[2] In ChatGPT at launch, o3-mini used medium reasoning effort, while paid users could select o3-mini-high for a slower but stronger response path.[2]
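As a minimal sketch of how the effort setting might be passed, assuming the Chat Completions API and its `reasoning_effort` parameter: the helper name `build_o3_mini_request` and the default token cap are illustrative choices, not values OpenAI prescribes. The helper only assembles the request arguments and sends nothing.

```python
from typing import Any

VALID_EFFORTS = ("low", "medium", "high")


def build_o3_mini_request(prompt: str, effort: str = "medium",
                          max_completion_tokens: int = 4_000) -> dict[str, Any]:
    """Build keyword arguments for a Chat Completions call to o3-mini.

    Hypothetical helper: it assembles the request but does not send it.
    """
    if effort not in VALID_EFFORTS:
        raise ValueError(f"effort must be one of {VALID_EFFORTS}, got {effort!r}")
    return {
        "model": "o3-mini",
        "reasoning_effort": effort,  # low = faster, high = deeper reasoning
        # Caps visible output plus hidden reasoning tokens.
        "max_completion_tokens": max_completion_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }


request = build_o3_mini_request("Find the bug in this binary search.", effort="high")
# With the official Python SDK installed, the call would look like:
#   from openai import OpenAI
#   OpenAI().chat.completions.create(**request)
```

Keeping the effort level as an explicit argument makes it easy to run the same prompt at each setting and compare latency and answer quality before fixing a default.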

| Spec | OpenAI o3-mini | What it means |
|---|---|---|
| Model family | o-series reasoning | Built to spend extra compute on hard reasoning steps |
| Context window | 200,000 tokens | Can ingest long code, policy, or research prompts |
| Maximum output | 100,000 tokens | Can produce very long answers when allowed |
| Modalities | Text input and text output | No native image, audio, or video support |
| Knowledge cutoff | October 1, 2023 | Use retrieval for newer information |
| Developer features | Streaming, function calling, Structured Outputs, Batch API | Usable in production workflows that need predictable outputs |
| Fine-tuning | Not supported | Use prompting, retrieval, or another model if customization is required |

For context-window planning across the model lineup, see our context window sizes for every GPT model. If your task depends on images rather than text, start with GPT-4 Vision or our guide to the best GPT model for image generation instead.

Pricing and rate limits

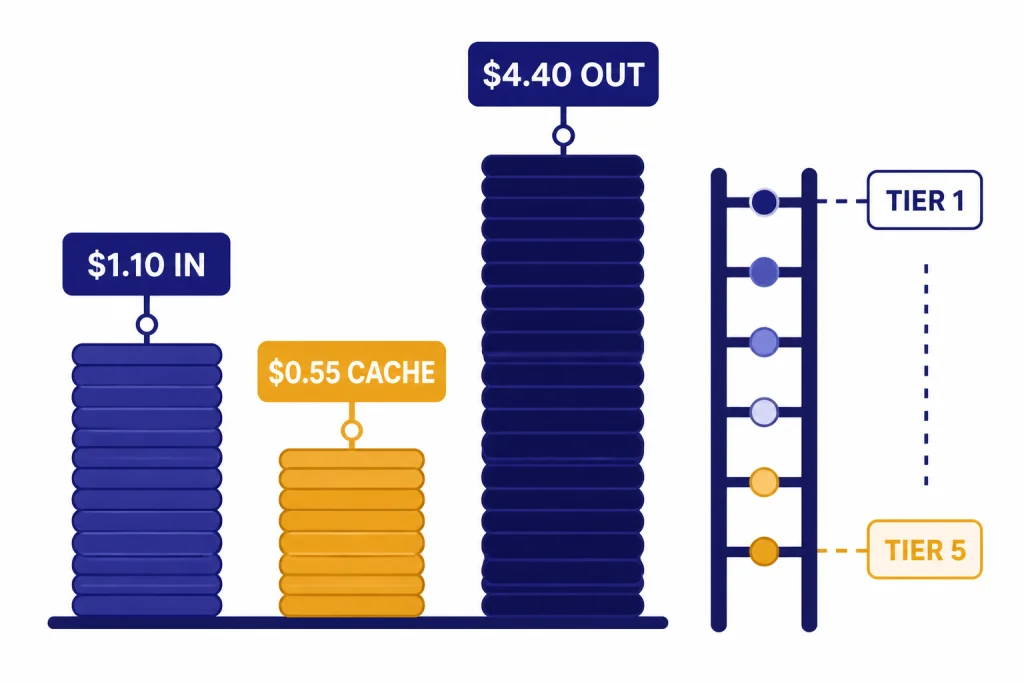

OpenAI lists o3-mini pricing at $1.10 per 1 million input tokens, $0.55 per 1 million cached input tokens, and $4.40 per 1 million output tokens.[1] A third-party pricing tracker also listed $1.10 input and $4.40 output pricing for o3-mini across providers, which corroborates the headline token rates.[6]

The output side is the main cost driver. A workload that sends short prompts but asks for long reasoning-heavy answers can cost more than expected because output tokens are priced at 4 times the input-token rate.[1] Reasoning models can also spend hidden reasoning tokens internally, so test with real prompts before estimating production spend.

| Token type | Price per 1 million tokens | Planning note |
|---|---|---|
| Input | $1.10 | Text you send to the model |
| Cached input | $0.55 | Reusable prompt content when caching applies |
| Output | $4.40 | Text the model generates |
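To see why output dominates, the rates above can be turned into a rough per-request estimate. This is a sketch under stated assumptions: the function name `estimate_cost_usd` is hypothetical, the token counts are made up for illustration, and hidden reasoning tokens are assumed to be billed as output, so they should be included in `output_tokens`.

```python
# Prices in USD per 1 million tokens, as listed in this guide.
INPUT_PRICE = 1.10
CACHED_INPUT_PRICE = 0.55
OUTPUT_PRICE = 4.40


def estimate_cost_usd(input_tokens: int, output_tokens: int,
                      cached_input_tokens: int = 0) -> float:
    """Estimate the cost of one o3-mini request in USD.

    `input_tokens` counts uncached input only. `output_tokens` should include
    hidden reasoning tokens, assuming they are billed as output.
    """
    total = (input_tokens * INPUT_PRICE
             + cached_input_tokens * CACHED_INPUT_PRICE
             + output_tokens * OUTPUT_PRICE)
    return total / 1_000_000


# A short prompt with a long, reasoning-heavy answer: output dominates.
short_prompt_long_answer = estimate_cost_usd(input_tokens=500, output_tokens=8_000)
# A long prompt with a short answer costs less despite more total tokens.
long_prompt_short_answer = estimate_cost_usd(input_tokens=20_000, output_tokens=500)
print(f"{short_prompt_long_answer:.4f} vs {long_prompt_short_answer:.4f}")
```

In practice, read the token counts from the usage data returned with each response rather than guessing them, then feed the measured numbers into an estimate like this one.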

OpenAI’s model page lists rate limits by API usage tier. Tier 1 is shown at 1,000 requests per minute and 100,000 tokens per minute, while Tier 5 is shown at 30,000 requests per minute and 150,000,000 tokens per minute.[1] Treat those as account-tier limits, not a guarantee for every organization. If you are comparing total API spend, use our OpenAI API pricing guide and the cheapest GPT model comparison.
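The two limits interact: whichever one runs out first caps throughput. The sketch below uses the tier figures quoted above and a hypothetical average of 2,000 tokens per request; the helper name is illustrative.

```python
def max_requests_per_minute(rpm_limit: int, tpm_limit: int,
                            tokens_per_request: int) -> int:
    """Return the sustainable request rate under both RPM and TPM limits."""
    if tokens_per_request <= 0:
        raise ValueError("tokens_per_request must be positive")
    return min(rpm_limit, tpm_limit // tokens_per_request)


# Tier 1 figures from the model page: 1,000 RPM and 100,000 TPM.
tier1 = max_requests_per_minute(1_000, 100_000, tokens_per_request=2_000)
# Tier 5 figures: 30,000 RPM and 150,000,000 TPM.
tier5 = max_requests_per_minute(30_000, 150_000_000, tokens_per_request=2_000)
print(tier1, tier5)  # 50 30000
```

At Tier 1 the token limit binds long before the request limit, which is typical for reasoning workloads with long prompts or long answers.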

Tool use can add separate costs. OpenAI’s pricing page lists web search at $10.00 per 1,000 calls, with search content tokens billed at model rates for reasoning-model web search preview usage.[7] That can matter if you pair o3-mini with live retrieval for current information.

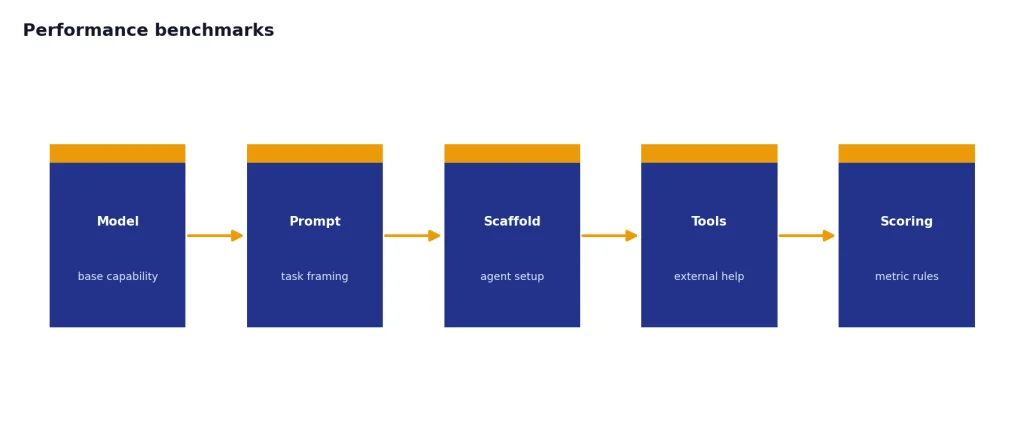

Performance benchmarks

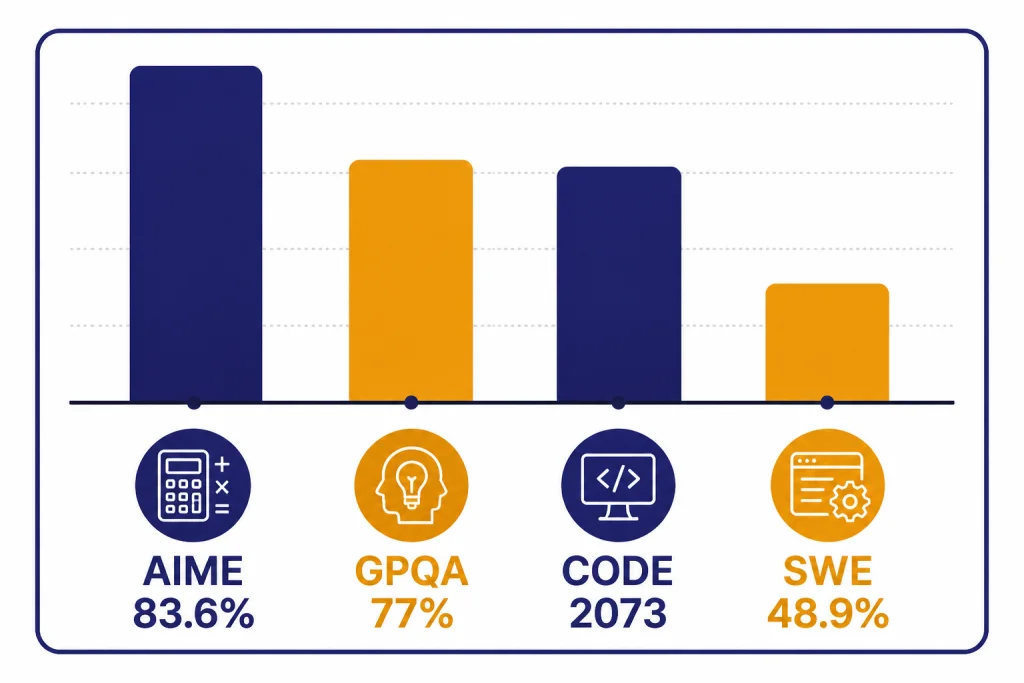

OpenAI presented o3-mini as strongest in STEM work. In its launch benchmarks, o3-mini-high reached 83.6% accuracy on AIME 2024, 77.0% on GPQA Diamond, 2,073 Elo on Codeforces, and 48.9% on SWE-bench Verified.[2] Those numbers are useful, but they should not be read as a universal ranking for every task.

The same OpenAI release said expert testers preferred o3-mini over o1-mini 56% of the time and observed a 39% reduction in major errors on difficult real-world questions.[2] OpenAI also reported that o3-mini delivered responses 24% faster than o1-mini in A/B testing, with an average response time of 7.7 seconds compared with 10.16 seconds.[2]
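The 24% figure is consistent with the two response times if "faster" is read as a relative reduction in average response time. That reading is an assumption, not a formula stated in the release.

```python
def relative_reduction(new: float, old: float) -> float:
    """Fraction by which `new` is smaller than `old`."""
    return (old - new) / old


reduction = relative_reduction(new=7.7, old=10.16)
print(f"{reduction:.1%}")  # 24.2%
```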

The system card adds important caution. OpenAI wrote that exact production performance can vary based on system updates, final parameters, system prompts, and other factors.[3] It also reported a separate SWE-bench Verified result of 39% with the Agentless scaffold and 61% with an internal tools scaffold for o3-mini.[3] That spread shows why benchmark setup matters as much as model name.

| Benchmark or evaluation | Reported o3-mini result | Best interpretation |
|---|---|---|
| AIME 2024 | 83.6% for o3-mini-high | Strong competition math performance |
| GPQA Diamond | 77.0% for o3-mini-high | Strong expert-level science QA result |
| Codeforces | 2,073 Elo for o3-mini-high | Competitive programming strength |
| SWE-bench Verified | 48.9% in launch chart; 39% to 61% in system-card setups | Good coding-agent potential, sensitive to scaffolding |
| A/B response speed | 7.7 seconds average versus 10.16 seconds for o1-mini | Faster than o1-mini in OpenAI’s test |

If your priority is raw model strength, compare this with our most powerful GPT model benchmark guide. If latency is the deciding factor, use our fastest GPT model comparison rather than relying only on reasoning benchmarks.

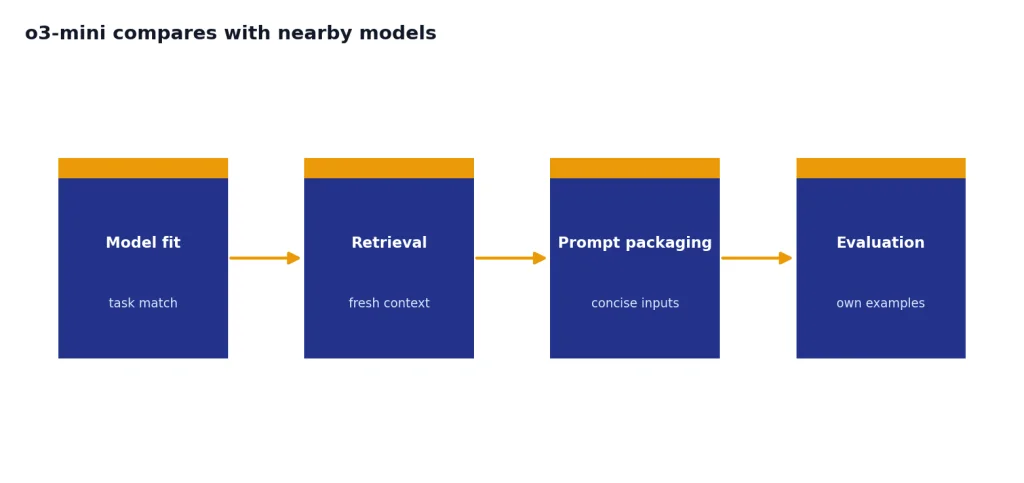

How o3-mini compares with nearby models

o3-mini sits between low-cost general chat models and heavier reasoning models. It is more specialized than a general-purpose mini chat model, but it is not the broadest or newest reasoning option. The right comparison depends on whether you care most about cost, code reasoning, latency, tool use, or multimodal input.

| Model path | Use it when | Avoid it when |
|---|---|---|
| o3-mini | You need cost-efficient text reasoning for coding, math, or structured technical answers | You need image input, audio, video, or the newest ChatGPT reasoning model |
| o1-mini | You are maintaining older workflows that already depend on it | You want OpenAI’s later small reasoning improvements |
| o3 | You need stronger reasoning than o3-mini and can accept higher cost or latency | Your task is routine and price-sensitive |
| o4-mini | You want a later small reasoning model in the o-series path | You must preserve exact o3-mini behavior in an existing integration |
| GPT-4o mini-style models | You need inexpensive general chat, extraction, or high-throughput text tasks | You need deeper multi-step reasoning on hard technical prompts |

For coding, o3-mini is still worth testing against newer models because its launch results were strong on software tasks. Our guide to the best GPT model for coding is the better place to compare current code-generation choices. For writing and editing, a reasoning model is often unnecessary; see our guide to the best GPT model for writing instead.

Do not choose o3-mini only because it has a large context window. A long context window helps with large repositories, multi-document prompts, and structured analysis, but it does not guarantee the best answer. The best workflow usually combines a suitable model, retrieval, concise prompt packaging, and evaluation on your own examples.
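Prompt packaging starts with a budget check. The sketch below assumes the prompt and the reserved output share the 200,000-token window and uses a crude estimate of four characters per token; both the ratio and the helper names are assumptions, and a real tokenizer should replace the estimate for anything that matters.

```python
CONTEXT_WINDOW = 200_000  # tokens, per the o3-mini model page
MAX_OUTPUT = 100_000      # tokens, per the o3-mini model page


def rough_token_count(text: str) -> int:
    """Very rough estimate: about 4 characters per token for English text.

    This ratio is an assumption; use a real tokenizer for billing or limits.
    """
    return max(1, len(text) // 4)


def fits_in_context(prompt_tokens: int, reserved_output_tokens: int) -> bool:
    """Check that the prompt plus reserved output fits in the context window."""
    if reserved_output_tokens > MAX_OUTPUT:
        return False
    return prompt_tokens + reserved_output_tokens <= CONTEXT_WINDOW


prompt_tokens = rough_token_count("x" * 600_000)  # 150,000 by the rough estimate
print(fits_in_context(prompt_tokens, reserved_output_tokens=25_000))  # True
print(fits_in_context(prompt_tokens, reserved_output_tokens=60_000))  # False
```

Remember that the reserved output has to leave room for hidden reasoning tokens as well as the visible answer, so a generous reservation is usually safer than a tight one.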

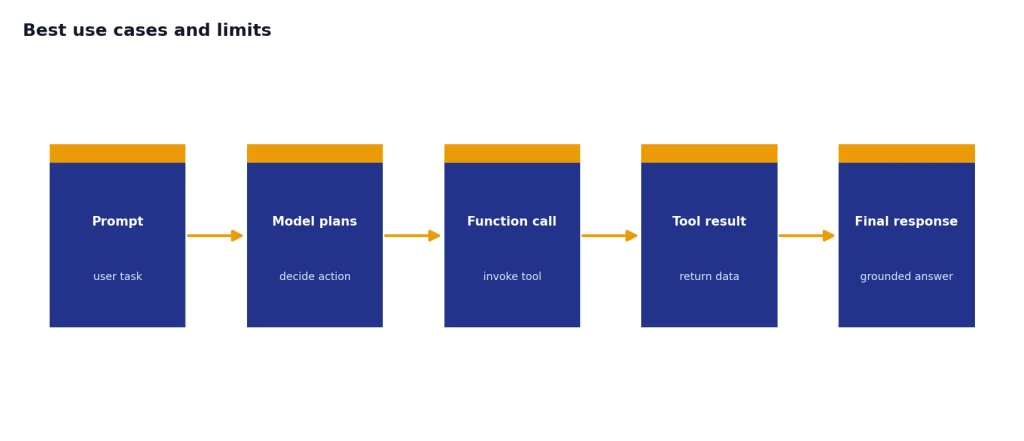

Best use cases and limits

o3-mini is strongest when the prompt rewards deliberate reasoning. Good fits include debugging a difficult function, explaining a proof, checking a SQL query, generating a structured test plan, comparing scientific arguments, and extracting rules from a long technical document. It is also practical when you need function calling or Structured Outputs in a reasoning workflow.[1]

- Code review: Ask it to identify failure modes, edge cases, and test gaps.

- Math and science tutoring: Use it for stepwise explanations, but verify final answers on high-stakes work.

- Technical extraction: Pair the 200,000-token context window with a schema for long documents.[1]

- Agentic workflows: Use function calling when the model must call tools instead of only writing prose.

- Batch analysis: Use the Batch API when latency is less important than cost and throughput.[1]
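The extraction and batch items above can be combined. The following sketch builds Batch API input lines that request schema-constrained output through Chat Completions. The schema, the prompt wording, the document IDs, and the helper name `batch_line` are all illustrative; only the overall request shape (one JSON object per line with `custom_id`, `method`, `url`, and `body`) follows the Batch API input format.

```python
import json
from typing import Any

# Illustrative schema for pulling rules out of a technical document.
RULES_SCHEMA: dict[str, Any] = {
    "type": "object",
    "properties": {
        "rules": {
            "type": "array",
            "items": {
                "type": "object",
                "properties": {
                    "id": {"type": "string"},
                    "text": {"type": "string"},
                },
                "required": ["id", "text"],
                "additionalProperties": False,
            },
        },
    },
    "required": ["rules"],
    "additionalProperties": False,
}


def batch_line(custom_id: str, document: str) -> str:
    """Serialize one Batch API request as a single JSONL line."""
    body = {
        "model": "o3-mini",
        "messages": [
            {"role": "user",
             "content": f"Extract every rule from this document:\n\n{document}"},
        ],
        "response_format": {
            "type": "json_schema",
            "json_schema": {"name": "rules", "strict": True, "schema": RULES_SCHEMA},
        },
    }
    request = {"custom_id": custom_id, "method": "POST",
               "url": "/v1/chat/completions", "body": body}
    return json.dumps(request)


# Hypothetical documents keyed by an ID used to match results later.
docs = {"doc-1": "Section 1: Badges must be worn.",
        "doc-2": "Section 2: No food in labs."}
jsonl = "\n".join(batch_line(doc_id, text) for doc_id, text in docs.items())
```

The resulting `jsonl` string is what would be written to a file and uploaded as the batch input; the `custom_id` values are how each result is matched back to its document.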

Its limits are just as important. According to OpenAI’s API model page, o3-mini does not support image, audio, or video input.[1] It is not a speech model like Whisper, an image model like DALL-E 3, or a video model like Sora. It also cannot be fine-tuned, according to the same documentation.[1]

The system card classifies o3-mini as Medium overall risk before mitigation, with Medium scores for persuasion, CBRN, and model autonomy, and Low for cybersecurity.[3] That does not mean typical users should avoid it. It means developers should keep normal safeguards: scoped tools, permission checks, logging, red-team prompts, and human review for consequential domains.
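Scoped tools, permission checks, and logging can be sketched as a small dispatcher that sits between the model and your functions. Everything here is hypothetical (the tool names, the permission strings, and the `dispatch_tool_call` helper); the only API detail it relies on is that function-call arguments arrive from the model as a JSON string.

```python
import json
import logging
from typing import Any, Callable

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("tool_guard")


def lookup_order(order_id: str) -> dict[str, Any]:
    """Hypothetical read-only tool."""
    return {"order_id": order_id, "status": "shipped"}


def refund_order(order_id: str) -> dict[str, Any]:
    """Hypothetical tool with side effects; needs an explicit permission."""
    return {"order_id": order_id, "refunded": True}


# Registry of allowed tools and the permission each one requires.
TOOLS: dict[str, tuple[Callable[..., dict[str, Any]], str]] = {
    "lookup_order": (lookup_order, "orders:read"),
    "refund_order": (refund_order, "orders:refund"),
}


def dispatch_tool_call(name: str, arguments_json: str,
                       granted: set[str]) -> dict[str, Any]:
    """Run a model-requested tool only if it is registered and permitted."""
    if name not in TOOLS:
        log.warning("rejected unknown tool %s", name)
        return {"error": f"unknown tool: {name}"}
    func, required = TOOLS[name]
    if required not in granted:
        log.warning("denied %s: missing permission %s", name, required)
        return {"error": f"permission denied: {required}"}
    args = json.loads(arguments_json)  # arguments arrive as a JSON string
    log.info("running %s with %s", name, args)
    return func(**args)


allowed = dispatch_tool_call("lookup_order", '{"order_id": "A1"}', {"orders:read"})
denied = dispatch_tool_call("refund_order", '{"order_id": "A1"}', {"orders:read"})
print(allowed)
print(denied)
```

Returning an error object instead of raising lets the refusal be passed back to the model as the tool result, while the log keeps a record for later review.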

Migration notes for 2026

If you used o3-mini in ChatGPT at launch, the main change is that later o-series models took its place. OpenAI said on April 16, 2025, that ChatGPT Plus, Pro, and Team users would see o3, o4-mini, and o4-mini-high in the model selector, replacing o1, o3-mini, and o3-mini-high.[5] For ChatGPT users, that means o3-mini is mostly a historical model choice.

If you used o3-mini through the API, check the current model documentation before creating a new dependency. OpenAI’s model page still documents the o3-mini alias and the o3-mini-2025-01-31 snapshot, but the page also marks the snapshot as deprecated.[1] For production systems, pinning a specific snapshot, testing replacements, and monitoring output drift are safer than assuming an older model will remain the best fit indefinitely.

- For exact legacy behavior: keep regression tests around your existing prompts and schemas.

- For stronger reasoning: test OpenAI o3 or OpenAI o3-pro.

- For lower-cost modern reasoning: evaluate OpenAI o4-mini against your own tasks.

- For broad model selection: start with our side-by-side comparison of all GPT models.

A good migration test set should include successful examples, known failures, edge cases, long-context prompts, tool-calling prompts, and cost measurements. Do not judge a reasoning model only by one benchmark or one impressive answer.
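A migration test set like this can be run with a very small harness. In the sketch below the two "models" are fake functions that stand in for real API calls, and the cases, names, and checks are invented for illustration; in a real harness each `ModelFn` would wrap a call to the legacy or candidate model.

```python
from dataclasses import dataclass
from typing import Callable

ModelFn = Callable[[str], str]  # stand-in for a real API call


@dataclass
class Case:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True when the answer is acceptable


def failed_cases(model: ModelFn, cases: list[Case]) -> list[str]:
    """Names of the cases whose output does not satisfy the check."""
    return [case.name for case in cases if not case.check(model(case.prompt))]


def pass_rate(model: ModelFn, cases: list[Case]) -> float:
    """Fraction of cases whose output satisfies its check."""
    return 1 - len(failed_cases(model, cases)) / len(cases)


def legacy_model(prompt: str) -> str:
    """Fake model used only to make the sketch runnable."""
    return {"What is 2 + 2?": "4", "Reverse 'abc'.": "cba"}.get(prompt, "")


def candidate_model(prompt: str) -> str:
    """Fake replacement model that regresses on one case."""
    return {"What is 2 + 2?": "4", "Reverse 'abc'.": "abc"}.get(prompt, "")


CASES = [
    Case("arithmetic", "What is 2 + 2?", lambda out: out.strip() == "4"),
    Case("string edge case", "Reverse 'abc'.", lambda out: "cba" in out),
]

for label, model in [("legacy", legacy_model), ("candidate", candidate_model)]:
    print(label, pass_rate(model, CASES), failed_cases(model, CASES))
```

Listing the failing case names alongside the pass rate matters more than the rate itself, because a replacement model can match the overall score while breaking a different set of prompts.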

Frequently asked questions

What is OpenAI o3-mini?

OpenAI o3-mini is a small o-series reasoning model for technical tasks such as coding, math, and science. OpenAI released it on January 31, 2025, for ChatGPT and API users.[2] It is text-only and designed to trade some extra reasoning time for better problem solving.

How much does o3-mini cost in the API?

OpenAI lists o3-mini at $1.10 per 1 million input tokens, $0.55 per 1 million cached input tokens, and $4.40 per 1 million output tokens.[1] Output-heavy reasoning prompts can cost more than short extraction tasks, so measure real usage before budgeting.

What is the o3-mini context window?

OpenAI’s model page lists a 200,000-token context window and 100,000 maximum output tokens for o3-mini.[1] That makes it suitable for long technical prompts, but long context still needs careful prompt design.

Does o3-mini support images?

No. OpenAI’s o3-mini API documentation lists text input and text output, with image, audio, and video not supported.[1] Use a multimodal model if your task requires visual reasoning.

Is o3-mini better than o1-mini?

OpenAI reported that o3-mini outperformed o1-mini on advanced STEM tasks and that expert evaluators preferred o3-mini responses over o1-mini 56% of the time.[4] It also reported lower latency than o1-mini in its launch testing.[2] For current model selection, compare against newer o-series models too.

Should I use o3-mini in 2026?

Use o3-mini in 2026 mainly for existing API workloads, compatibility testing, or cost-efficient text reasoning where it already performs well. For new ChatGPT workflows, newer reasoning models have replaced it in the model selector.[5] For new API workflows, test o3-mini against current alternatives before choosing it.