As of April 15, 2026, the best GPT model for coding for most professional developers is GPT-5.4, because it combines strong reasoning, Codex integration, tool use, and public API access in one model. GPT-5.3-Codex remains the specialist pick for long-running agentic coding inside Codex, and GPT-5.3-Codex-Spark is the low-latency choice for rapid interactive edits. GPT-4.1 is still useful when you need a cheaper API model with long context and reliable code diffs. The right choice depends less on any single benchmark score than on the work pattern: repo-wide debugging, quick autocomplete, architecture planning, test repair, or production API usage.

Quick answer

If you want one default answer, use GPT-5.4. OpenAI released GPT-5.4 on March 5, 2026 and described it as a frontier model for professional work across reasoning, coding, and agentic workflows.[1] It is the safest recommendation for teams that need a model available across ChatGPT, the API, and Codex rather than a model locked to one interface.

Use GPT-5.3-Codex when the job is a long-running software agent task inside Codex. OpenAI said GPT-5.3-Codex was its most capable agentic coding model when it launched on February 5, 2026, and positioned it for research, tool use, and complex execution.[2] Use GPT-5.3-Codex-Spark when the main problem is speed during real-time coding sessions. OpenAI described it as a research preview built for near-instant coding interaction, reaching more than 1,000 tokens per second under its low-latency serving setup.[3]

Use GPT-4.1 only when price, predictable API behavior, or legacy integration matters more than frontier reasoning. It was launched in the API on April 14, 2025 with improvements for coding, instruction following, and long context.[4] For a broader model-by-model view beyond coding, see all GPT models compared side by side.

What changed for coding models in 2026

The biggest change is that OpenAI’s coding story is no longer just “ask a chatbot to write code.” The strongest models now target agentic workflows: reading a repository, planning changes, editing files, using tools, running tests, and staying steerable while the job is in progress. GPT-5.4 is important because it merged the recent Codex coding line back into a mainline reasoning model that is available in ChatGPT, the API, and Codex.[1]

That shift changes how developers should choose a model. A model that writes a clean function in isolation is not always the best model for a broken monorepo. A model that is fast enough for line-by-line editing may not be the best model for a multi-hour refactor. A model with a huge context window may still need careful file selection, summaries, and tests. If your main constraint is repository size, pair this article with our context window comparison.

GPT-5.3-Codex also raised the floor for coding agents. OpenAI reported that GPT-5.3-Codex improved on GPT-5.2-Codex, combined coding strength with GPT-5.2-style reasoning and knowledge work, and ran 25% faster for Codex users.[2] GPT-5.4 then became the better general recommendation because it brought much of that capability into a broader model with public API pricing.[1]

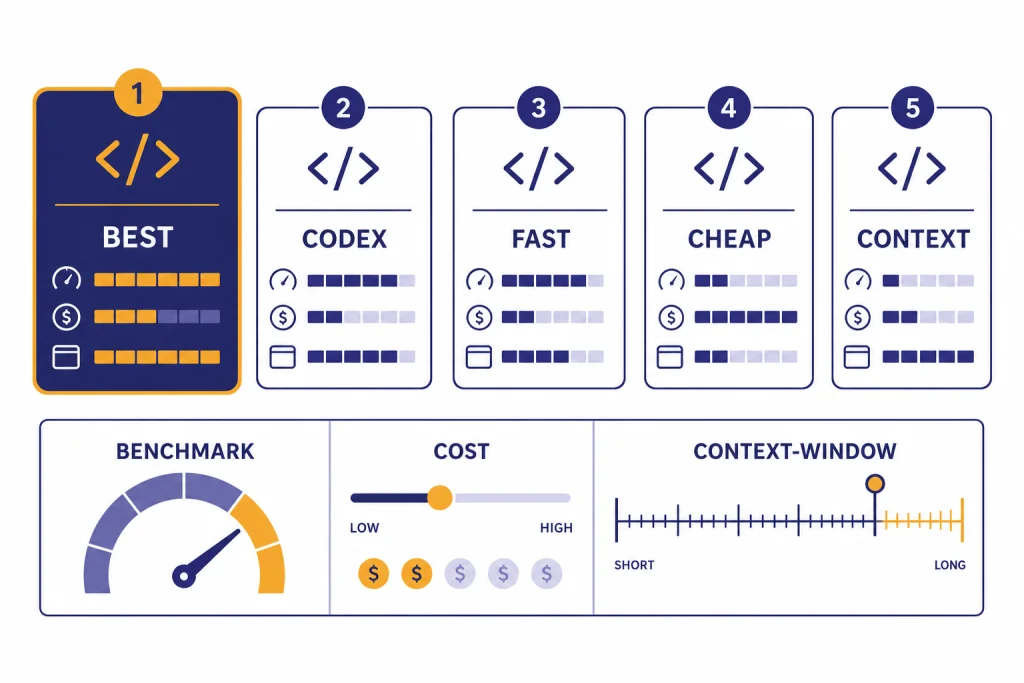

Coding model comparison table

This table ranks the practical choices as of April 15, 2026. It is not a universal benchmark leaderboard. It weights availability, workflow fit, cost visibility, latency, and coding-agent behavior.

| Model | Best coding use | Why pick it | Public price or access note | Key caution |
|---|---|---|---|---|
| GPT-5.4 | Default professional coding model | Strong coding, reasoning, tool use, Codex support, and API access | $2.50 per 1M input tokens and $15 per 1M output tokens in the API.[1] | More expensive than older non-frontier models. |
| GPT-5.4 Pro | Hard architecture, debugging, and research-heavy software tasks | Maximum-performance version for complex tasks | $30 per 1M input tokens and $180 per 1M output tokens in the API.[1] | Use only when the task justifies the cost. |
| GPT-5.3-Codex | Long-running agentic work inside Codex | Specialized for Codex, code review, execution, and multi-step developer workflows | OpenAI said it was available in paid ChatGPT plans where Codex is available and was working toward API access at launch.[2] | Not the simplest pick for ordinary API integrations. |
| GPT-5.3-Codex-Spark | Fast interactive editing | Designed for low-latency real-time coding loops | Research preview for ChatGPT Pro users and select API design partners at launch.[3] | Text-only at launch, with a 128k context window.[3] |
| GPT-4.1 | Lower-cost API coding, diffs, and long-context utility | Good code diffs, lower cost, and API availability | $2.00 per 1M input tokens and $8.00 per 1M output tokens.[4] | Older than GPT-5.4 and weaker for frontier agentic tasks. |

The table also shows why “best” is not the same as “most powerful.” GPT-5.4 Pro may be the strongest option for some work, but GPT-5.4 is the better daily default. For a pure capability ranking, see our most powerful GPT model breakdown. For budget-first production planning, compare it with the cheapest GPT model guide and our OpenAI API pricing reference.

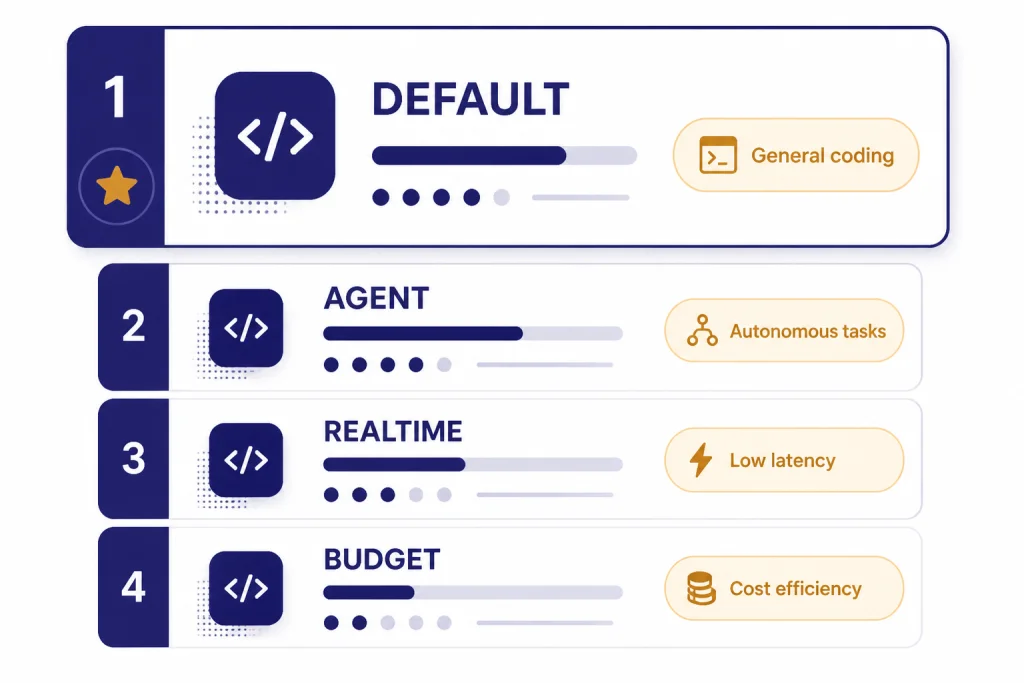

Pick the model by coding task

For everyday app development

Choose GPT-5.4. It is the best balance for building features, explaining code, generating tests, reviewing pull requests, and debugging production-style issues. It is also easier to standardize across a team because the same model is available in ChatGPT, the API, and Codex.[1]

For long-running Codex agents

Choose GPT-5.3-Codex when the work happens inside Codex and requires the model to stay on task over many steps. Good examples include migrating a service, chasing test failures across packages, converting a codebase to a new pattern, or drafting implementation plans with intermediate checkpoints. OpenAI framed GPT-5.3-Codex as a shift from code writing and review toward broader computer-based developer work.[2]

For live pair programming

Choose GPT-5.3-Codex-Spark if you have access and the session is highly interactive. It fits small edits, UI refinements, quick targeted patches, and situations where you want to interrupt or redirect the model frequently. Its launch post emphasized real-time coding, fast iteration, and a 128k context window rather than maximum long-horizon reasoning.[3] If latency is your main criterion, also read our fastest GPT model comparison.

For API products with predictable spend

Choose GPT-4.1 or GPT-5.4 depending on quality needs. GPT-4.1 is cheaper than GPT-5.4 on both input tokens ($2.00 versus $2.50 per 1M) and output tokens ($8.00 versus $15 per 1M).[4][1] GPT-5.4 is still the better choice when the product depends on tool use, deeper reasoning, or hard codebase repair.

For architecture and hard debugging

Start with GPT-5.4. Move to GPT-5.4 Pro only for high-value, complex tasks where a wrong answer is expensive or where repeated failed attempts would cost more than the model upgrade. Use Pro for design reviews, incident postmortems, cross-service refactors, or security-sensitive reasoning. Do not use it for routine boilerplate.
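The task-by-task recommendations above can be condensed into a small routing function. This is a minimal sketch. It assumes you classify each request yourself into one of five hypothetical `Task` categories. The returned strings are this article's model names, not verified API model identifiers, so map them to whatever IDs your account actually exposes.

```python
from enum import Enum


class Task(Enum):
    # Hypothetical task categories mirroring the subsections above.
    EVERYDAY = "everyday app development"
    LONG_RUNNING_AGENT = "long-running Codex agent"
    LIVE_PAIRING = "live pair programming"
    PREDICTABLE_SPEND_API = "API product with predictable spend"
    HARD_DEBUGGING = "architecture and hard debugging"


def choose_model(
    task: Task,
    *,
    in_codex: bool = False,
    has_spark_access: bool = False,
    needs_deep_reasoning: bool = False,
    high_stakes: bool = False,
) -> str:
    """Return the article's recommended model name for a task (illustrative only)."""
    if task is Task.LONG_RUNNING_AGENT and in_codex:
        return "GPT-5.3-Codex"
    if task is Task.LIVE_PAIRING and has_spark_access:
        return "GPT-5.3-Codex-Spark"
    if task is Task.PREDICTABLE_SPEND_API and not needs_deep_reasoning:
        return "GPT-4.1"
    if task is Task.HARD_DEBUGGING and high_stakes:
        # Escalate to Pro only when a wrong answer is expensive.
        return "GPT-5.4 Pro"
    # Default, and the fallback when a specialist model is unavailable.
    return "GPT-5.4"
```

The function falls back to GPT-5.4 whenever a specialist model is unavailable. This mirrors the advice to treat GPT-5.4 as the default.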

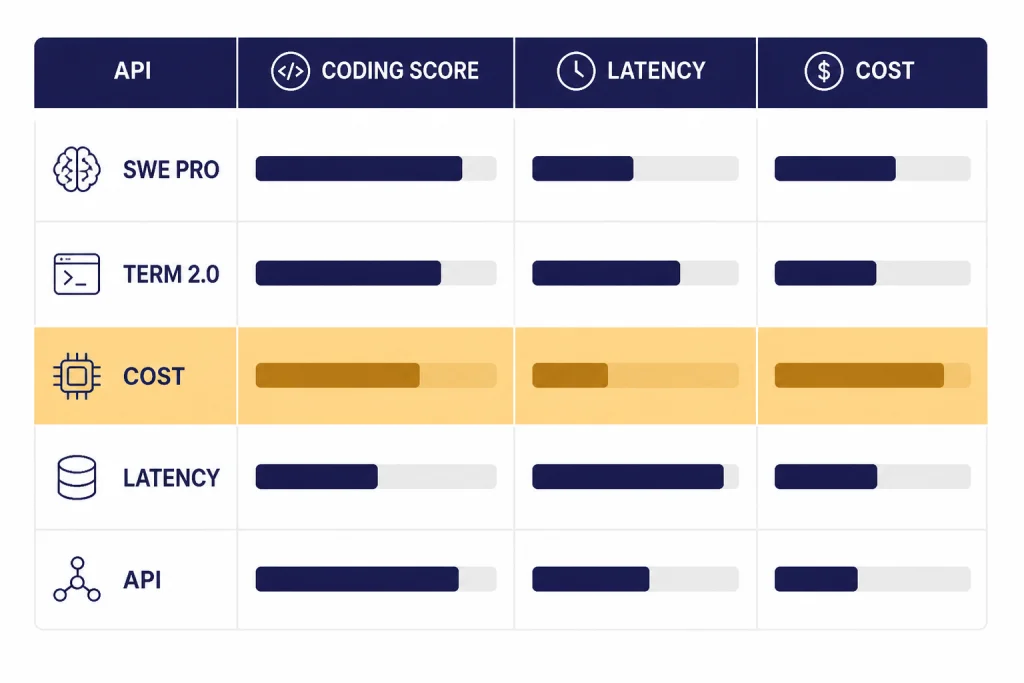

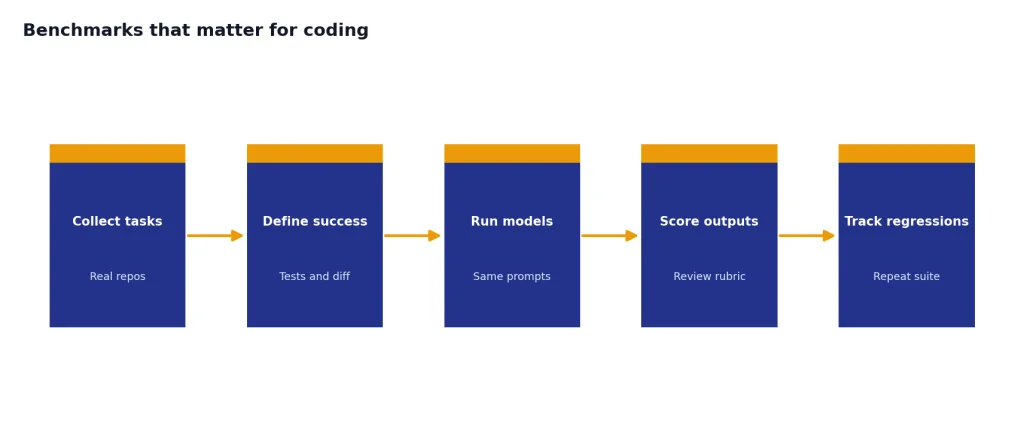

Benchmarks that matter for coding

For coding, benchmark names matter. SWE-bench Verified was useful for measuring autonomous software engineering progress. However, OpenAI said in February 2026 that it no longer recommends SWE-bench Verified for frontier coding launches and now recommends SWE-Bench Pro instead.[5] That is why this comparison gives more weight to SWE-Bench Pro, Terminal-Bench, OSWorld-style computer-use evaluations, and real workflow availability.

GPT-5.4 scored 57.7% on SWE-Bench Pro Public in OpenAI’s launch evaluation, while GPT-5.3-Codex scored 56.8% and GPT-5.2 scored 55.6% in the same table.[1] GPT-5.3-Codex led GPT-5.4 on Terminal-Bench 2.0 in that same GPT-5.4 launch table, with 77.3% versus 75.1%.[1] This is a good example of why the best daily model is not always the top model on every coding-adjacent benchmark.

Benchmarks should guide your shortlist, not replace your own test set. A good internal evaluation uses real repositories, real failing tests, your lint rules, your security constraints, and your code review standards. If you build developer tools on top of OpenAI models, create a small private benchmark with tasks such as “fix flaky test,” “add migration,” “convert one component pattern,” “explain regression,” and “produce minimal diff.”
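A private benchmark does not need heavy tooling to start. The sketch below makes two assumptions. First, each task carries its own pass/fail `check` function. Second, each model is wrapped in a hypothetical `solve(prompt) -> str` callable. In a real harness, `check` would apply the model's patch to a clean checkout and run your tests, linters, and security rules, rather than inspecting text.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalTask:
    name: str                      # e.g. "fix flaky test", "produce minimal diff"
    prompt: str                    # the exact prompt sent to every model
    check: Callable[[str], bool]   # True if the model's output passes your check


def pass_rate(solve: Callable[[str], str], tasks: list[EvalTask]) -> float:
    """Fraction of tasks whose model output passes the task's check."""
    if not tasks:
        raise ValueError("need at least one task")
    passed = sum(1 for task in tasks if task.check(solve(task.prompt)))
    return passed / len(tasks)


def compare(models: dict[str, Callable[[str], str]], tasks: list[EvalTask]) -> dict[str, float]:
    """Run the same private task set against each model wrapper."""
    return {name: pass_rate(solve, tasks) for name, solve in models.items()}


# Toy example with text-only checks; replace with patch-and-run-tests checks in practice.
example_tasks = [
    EvalTask("produce minimal diff", "Rename foo to bar in utils.py",
             lambda out: out.count("\n") < 20),
    EvalTask("explain regression", "Why did p95 latency rise after the last deploy?",
             lambda out: "cache" in out.lower()),
]
```

Keeping the prompts fixed across models is the design point. Pass rates are only comparable when every model sees the same task set.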

Cost, context, and latency trade-offs

Cost matters because coding workloads can create large input and output volumes. GPT-5.4 costs $2.50 per 1M input tokens, $0.25 per 1M cached input tokens, and $15 per 1M output tokens in OpenAI’s launch pricing table.[1] GPT-5.4 Pro costs $30 per 1M input tokens and $180 per 1M output tokens, so it should be reserved for tasks where the extra reasoning quality changes the outcome.[1]
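The per-token prices quoted above translate into per-request costs with simple arithmetic. This sketch hard-codes the prices cited in this article. GPT-5.4's cached-input rate is the only cached price given here, so the other models reject cached tokens rather than guess a price. Check OpenAI's current pricing before relying on these numbers.

```python
from __future__ import annotations

# USD per 1M tokens, as quoted in this article. None = no price given here.
PRICES_PER_1M: dict[str, dict[str, float | None]] = {
    "GPT-5.4": {"input": 2.50, "cached_input": 0.25, "output": 15.00},
    "GPT-5.4 Pro": {"input": 30.00, "cached_input": None, "output": 180.00},
    "GPT-4.1": {"input": 2.00, "cached_input": None, "output": 8.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int,
                 cached_input_tokens: int = 0) -> float:
    """Estimated USD cost of one request; cached tokens are a subset of input tokens."""
    prices = PRICES_PER_1M[model]
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    cached_rate = prices["cached_input"]
    if cached_input_tokens and cached_rate is None:
        raise ValueError(f"no cached-input price recorded for {model}")
    uncached_tokens = input_tokens - cached_input_tokens
    total = (
        uncached_tokens * prices["input"]
        + cached_input_tokens * (cached_rate or 0.0)
        + output_tokens * prices["output"]
    )
    return total / 1_000_000
```

Take a request with 100K input tokens and 10K output tokens. It costs $0.40 on GPT-5.4, $0.28 on GPT-4.1, and $4.80 on GPT-5.4 Pro. If 80K of those GPT-5.4 input tokens are cached, the cost drops to $0.22.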

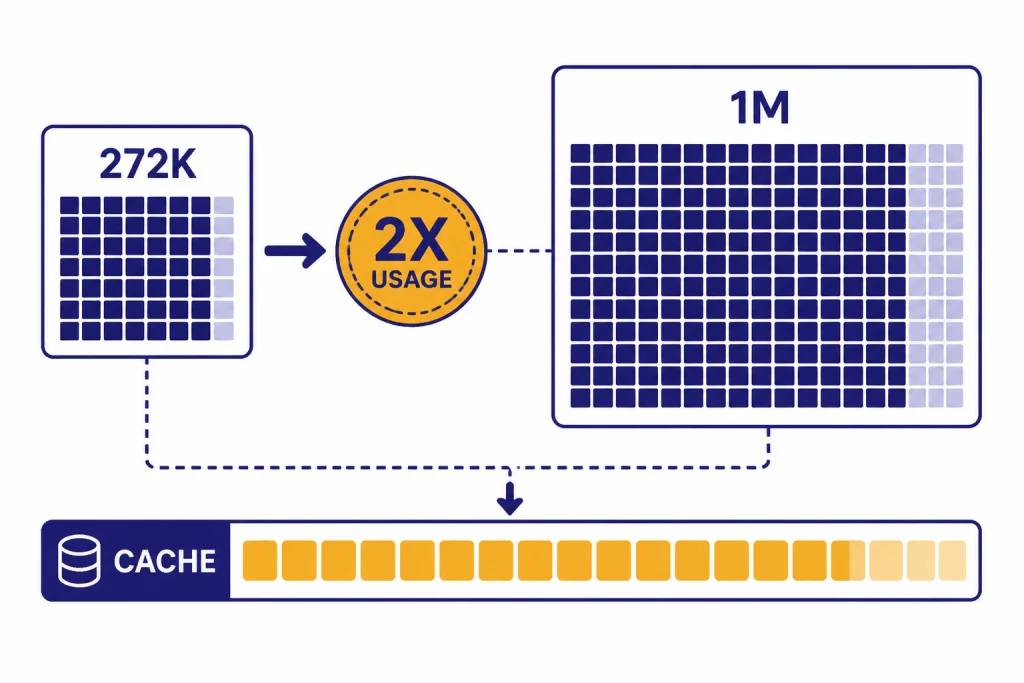

Context is also not a free substitute for retrieval and planning. GPT-5.4 in Codex included experimental support for a 1M context window, but OpenAI said requests above the standard 272K context window counted against usage limits at 2x the normal rate.[1] In the same release, OpenAI’s long-context evaluations showed performance dropping at deeper ranges, including MRCR v2 8-needle accuracy of 57.5% at 256K–512K and 36.6% at 512K–1M.[1]
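The 2x rule can be expressed as a small accounting helper. This sketches the rule as described above; it is not OpenAI's actual metering. It makes three assumptions:

- "272K" means 272,000 tokens.
- "1M" means 1,000,000 tokens.
- The whole request is counted at the doubled rate, not just the tokens past the threshold.

```python
from __future__ import annotations

STANDARD_CONTEXT_TOKENS = 272_000      # assumption: "272K" read as 272,000 tokens
EXPERIMENTAL_MAX_TOKENS = 1_000_000    # assumption: "1M" read as 1,000,000 tokens


def codex_usage_multiplier(context_tokens: int) -> float:
    """Usage-limit rate for one request: 1.0 normally, 2.0 above the standard window."""
    if context_tokens < 0:
        raise ValueError("context_tokens must be non-negative")
    if context_tokens > EXPERIMENTAL_MAX_TOKENS:
        raise ValueError("request exceeds the experimental 1M-token window")
    return 2.0 if context_tokens > STANDARD_CONTEXT_TOKENS else 1.0


def session_usage(request_sizes: list[int]) -> float:
    """Total usage in 'normal request' units across a session of requests."""
    return sum(codex_usage_multiplier(size) for size in request_sizes)
```

Under these assumptions, a session of three requests at 100K, 300K, and 50K tokens consumes 4.0 units instead of 3.0.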

The practical lesson is simple. Give the model the files it needs, not the entire company history. Use repository maps, failing tests, dependency graphs, and short design notes. Cache repeated context where possible. Ask for minimal diffs unless you truly need full-file rewrites. For more detail on how context windows differ across models, use this guide to context window sizes for every GPT model.
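Choosing the files the model needs can be treated as a budgeted selection problem. The sketch below greedily fills a token budget in priority order: failing tests first, then files from the stack trace, then neighbors. The `Candidate` type, the priority scheme, and the four-characters-per-token estimate are all assumptions for illustration. A real tokenizer would give more accurate counts.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Candidate:
    path: str
    text: str
    priority: int  # hypothetical: 0 = failing test, 1 = in stack trace, 2 = neighbor, 3 = background


def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token.
    return max(1, len(text) // 4)


def select_context(candidates: list[Candidate], budget_tokens: int) -> list[str]:
    """Greedily pick files by priority (then size) until the token budget is spent."""
    chosen: list[str] = []
    used = 0
    for cand in sorted(candidates, key=lambda c: (c.priority, estimate_tokens(c.text))):
        cost = estimate_tokens(cand.text)
        if used + cost <= budget_tokens:
            chosen.append(cand.path)
            used += cost
        # Files that do not fit are skipped; smaller lower-priority files may still fit.
    return chosen
```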

A practical workflow for developers

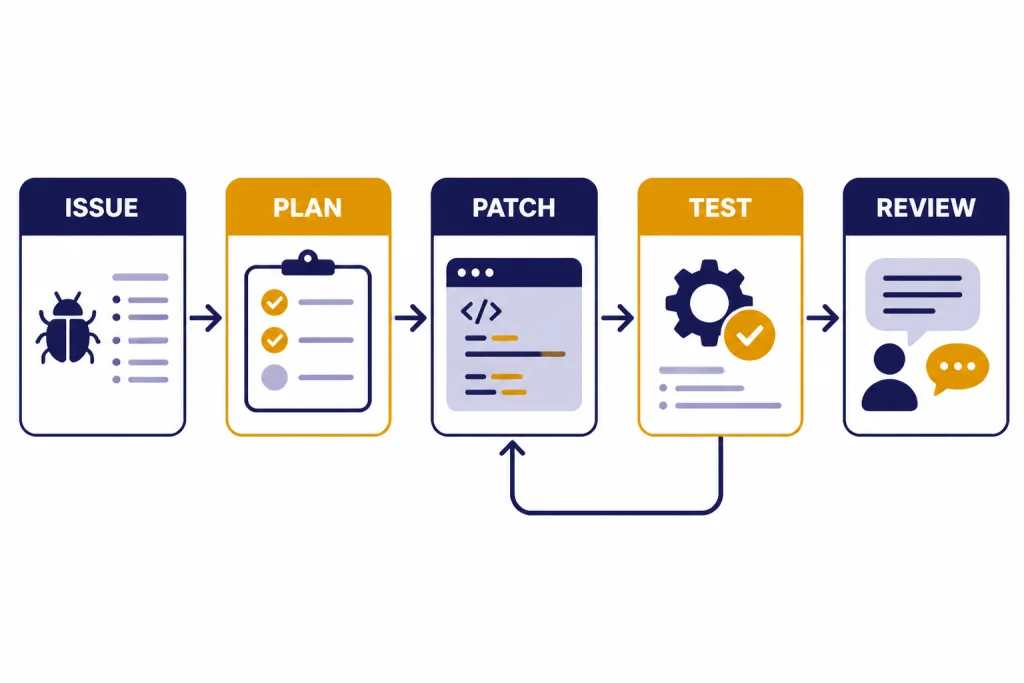

A strong model still needs a disciplined workflow. The best pattern is to separate planning, editing, and verification. Start by asking the model to inspect the problem and propose a plan. Then ask for a narrow patch. Then run tests and feed back exact failures. Finally, ask for a review of the final diff against the original requirement.

- Give the issue first. Include the bug report, expected behavior, actual behavior, and relevant stack trace.

- Give the repository map. Point to the likely files, test directories, and framework conventions.

- Ask for a plan before code. This catches wrong assumptions before the model edits too much.

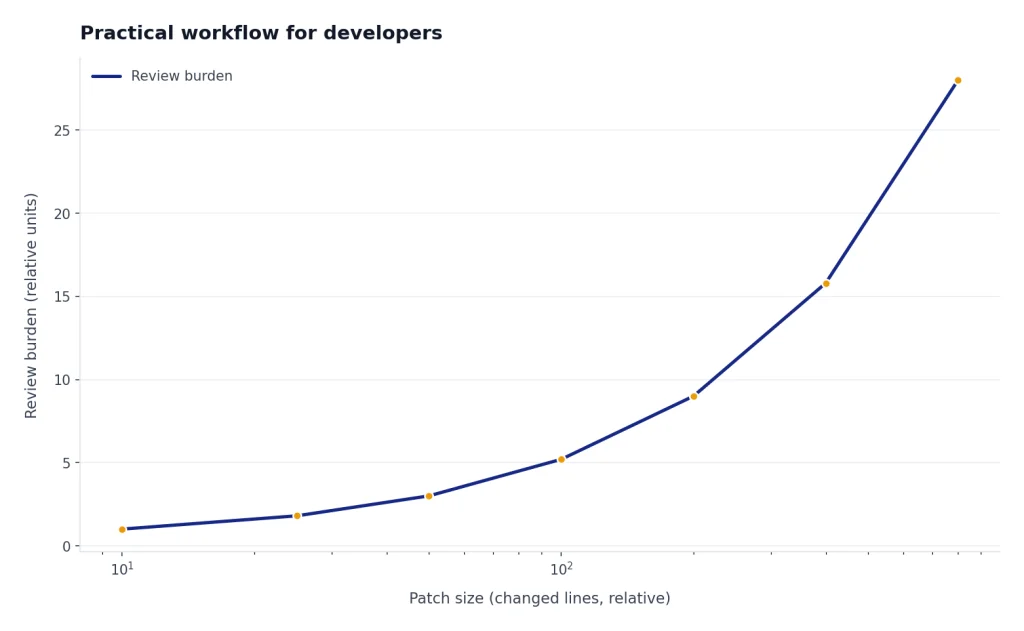

- Prefer minimal diffs. Smaller patches are easier to review and cheaper to generate.

- Run tests outside the model. Treat the model’s test claims as suggestions until your toolchain verifies them.

- Ask for risk notes. Have the model name migrations, backward-compatibility risks, security concerns, and edge cases.
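The plan, patch, test, and review loop can be wired together in a few dozen lines. In this sketch, `ask`, `apply_patch`, and `test` are hypothetical callables you supply. Examples:

- `ask`: a wrapper around your model client.
- `apply_patch`: a `git apply` step on a clean checkout.
- `test`: `run_tests(["pytest", "-q"])`.

Only `subprocess.run` is a real library call. Test output is fed back verbatim, and the final diff is reviewed against the original requirement.

```python
from __future__ import annotations

import subprocess
from dataclasses import dataclass
from typing import Callable


@dataclass
class Issue:
    report: str       # bug report or requirement
    expected: str     # expected behavior
    actual: str       # actual behavior
    stack_trace: str
    repo_map: str     # likely files, test directories, framework conventions


def run_tests(cmd: list[str]) -> tuple[bool, str]:
    """Run the real test suite outside the model and capture its exact output."""
    proc = subprocess.run(cmd, capture_output=True, text=True)
    return proc.returncode == 0, proc.stdout + proc.stderr


def fix_issue(
    issue: Issue,
    ask: Callable[[str], str],              # hypothetical model wrapper: prompt -> reply
    apply_patch: Callable[[str], None],     # hypothetical: applies a diff to a clean checkout
    test: Callable[[], tuple[bool, str]],   # e.g. lambda: run_tests(["pytest", "-q"])
    max_rounds: int = 3,
) -> tuple[str, str]:
    context = (
        f"Issue: {issue.report}\nExpected: {issue.expected}\nActual: {issue.actual}\n"
        f"Stack trace:\n{issue.stack_trace}\nRepository map:\n{issue.repo_map}"
    )
    # 1. Plan before code, to catch wrong assumptions early.
    plan = ask(f"{context}\n\nPropose a plan. Do not write code yet.")
    feedback = ""
    for _ in range(max_rounds):
        # 2. Ask for a narrow patch, including exact test failures from the last round.
        patch = ask(f"{context}\n\nPlan:\n{plan}\n{feedback}\nReturn a minimal diff only.")
        apply_patch(patch)
        # 3. Verify with your own toolchain, not the model's claims.
        ok, output = test()
        if ok:
            # 4. Review the final diff against the original requirement and collect risk notes.
            review = ask(
                f"Requirement:\n{issue.report}\n\nFinal diff:\n{patch}\n\n"
                "Review the diff and list migration, backward-compatibility, security, and edge-case risks."
            )
            return patch, review
        feedback = f"Tests failed with this exact output:\n{output}\n"
    raise RuntimeError("tests still failing after max_rounds; hand off to a human reviewer")
```

A bounded `max_rounds` keeps a stuck loop from burning tokens. When it is exhausted, the issue goes back to a person instead of the model.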

This workflow works with GPT-5.4, GPT-5.3-Codex, and GPT-4.1. GPT-5.3-Codex is better when the workflow runs inside Codex and the agent can operate over many steps. GPT-4.1 is still useful when you need structured diffs, long context, and lower API cost; OpenAI specifically trained GPT-4.1 to follow diff formats more reliably.[4] For older-model context, see GPT-5.3 and GPT-5.2.
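A simple way to enforce "prefer minimal diffs" is to measure a patch before accepting it. This sketch uses Python's standard `difflib.unified_diff` to build a unified diff. It then counts added and removed lines by walking the hunk headers, so removed lines that themselves begin with `--` are not mistaken for file headers. The 40-line threshold is an arbitrary assumption; tune it for your codebase.

```python
import difflib
import re

# Matches hunk headers such as "@@ -1,2 +1 @@"; a missing count means 1.
HUNK_HEADER = re.compile(r"^@@ -\d+(?:,(\d+))? \+\d+(?:,(\d+))? @@")


def _lines(text: str) -> list:
    # Ensure a trailing newline so difflib never glues two diff lines together.
    if text and not text.endswith("\n"):
        text += "\n"
    return text.splitlines(keepends=True)


def unified_diff(old: str, new: str, path: str) -> str:
    return "".join(difflib.unified_diff(_lines(old), _lines(new),
                                        fromfile=f"a/{path}", tofile=f"b/{path}"))


def changed_line_count(diff: str) -> int:
    """Count added and removed lines, using hunk headers to skip file headers."""
    changed = 0
    old_left = new_left = 0  # lines still expected in the current hunk
    for line in diff.splitlines():
        if old_left == 0 and new_left == 0:
            match = HUNK_HEADER.match(line)
            if match:
                old_left = int(match.group(1) or 1)
                new_left = int(match.group(2) or 1)
            continue  # "---"/"+++" headers and other metadata outside hunks
        if line.startswith("\\"):
            continue  # "\ No newline at end of file"
        if line.startswith("-"):
            changed += 1
            old_left -= 1
        elif line.startswith("+"):
            changed += 1
            new_left -= 1
        else:
            old_left -= 1  # context line counts toward both sides
            new_left -= 1
    return changed


def is_minimal(diff: str, max_changed_lines: int = 40) -> bool:
    return changed_line_count(diff) <= max_changed_lines
```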

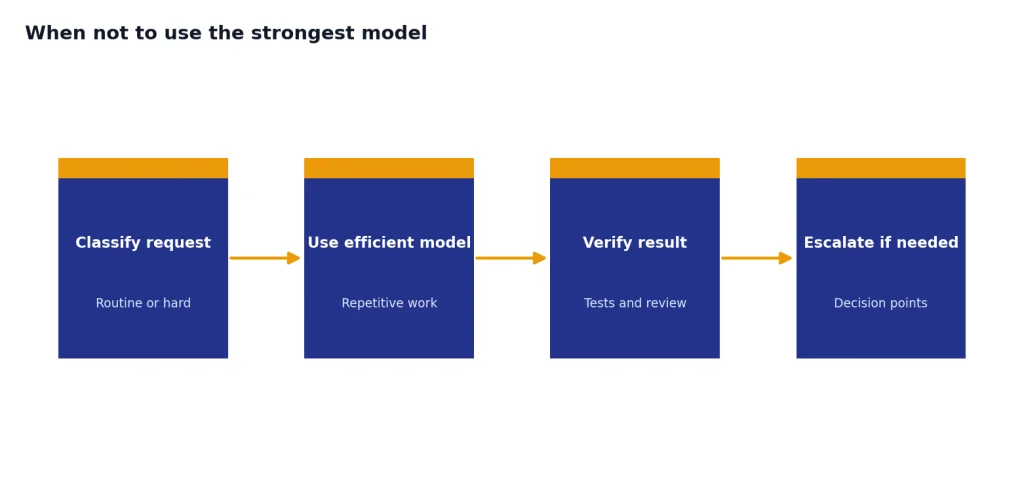

When not to use the strongest model

Do not use GPT-5.4 Pro for every coding request. It can be the right tool for hard architecture work, but it is wasteful for formatting, simple unit tests, type annotations, short scripts, and routine documentation. Use a smaller or cheaper model for repetitive tasks and reserve the frontier model for the decision points.

Do not use a coding model as your only reviewer. It can miss subtle business logic, hidden security assumptions, deployment constraints, or licensing issues. Human review, automated tests, static analysis, dependency scanning, and staging environments still matter.

Do not assume a 1M-token context window means the model understood every file equally. Large context helps, but targeted context still wins. The best results come from pairing the model with good repository indexing, precise prompts, and repeatable verification.

Finally, do not confuse coding with other model categories. The best model for code is not automatically the best model for images, voice, or video. For adjacent use cases, see our guides to best GPT model for writing, best GPT model for image generation, and GPT-4 Vision.

Frequently asked questions

What is the best GPT model for coding in 2026?

As of April 15, 2026, GPT-5.4 is the best default GPT model for coding. It has strong coding and reasoning performance, public API access, and Codex support. GPT-5.3-Codex is better for some long-running Codex-agent work, but GPT-5.4 is the easier general recommendation.[1]

Is GPT-5.3-Codex better than GPT-5.4 for coding?

Sometimes. GPT-5.3-Codex was built specifically for Codex-style agentic coding and scored higher than GPT-5.4 on Terminal-Bench 2.0 in OpenAI’s GPT-5.4 release table.[1] GPT-5.4 is still the better default when you need one model across ChatGPT, Codex, and the API.

Is GPT-5.3-Codex-Spark the fastest coding model?

It is the best choice in this group for real-time interactive coding if you have access. OpenAI said GPT-5.3-Codex-Spark was designed for near-instant coding interaction, reaching more than 1,000 tokens per second on its low-latency setup.[3] It is not the best pick for every long-running task.

Should I still use GPT-4.1 for coding?

Yes, when cost and predictable API behavior matter more than frontier capability. GPT-4.1 is useful for code diffs, long-context tasks, and lower-cost production workloads. It is not the best choice for the hardest agentic debugging or architecture tasks.

Do coding benchmarks prove which model is best?

No. Benchmarks are useful filters, but they do not replace testing on your own repositories. OpenAI has said it no longer recommends SWE-bench Verified for frontier coding launches and recommends SWE-Bench Pro instead.[5] Your own failing tests and review standards should decide the final model choice.

Which model should startups use for a coding assistant API?

Start with GPT-5.4 for quality-sensitive coding features and evaluate GPT-4.1 for cheaper background tasks. Use caching, retrieval, and minimal diffs to control cost. Add GPT-5.4 Pro only for premium workflows where accuracy on hard tasks justifies the higher token price.