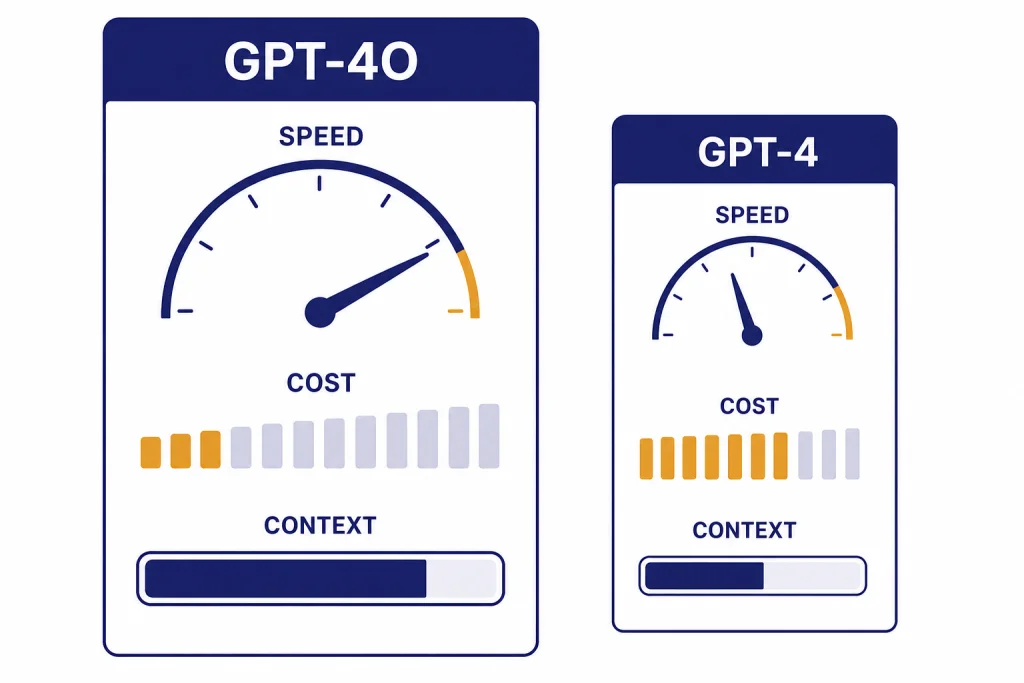

GPT-4o is a better choice than GPT-4 for almost every practical workload that still depends on these legacy OpenAI models. It is faster, supports text and image input in the API, has a much larger context window, and costs far less per token than the older GPT-4 API model.[1][2][3] GPT-4 still matters as a reference point because it set the first widely used GPT-4 quality baseline in March 2023, but it is now best treated as an older comparison model rather than a default pick.[4] As of this article’s publication date, GPT-4o has also been retired from normal ChatGPT model selection, while remaining available through the API.[6]

Bottom line

The short version of GPT-4o vs GPT-4 is simple. GPT-4o wins on speed, cost, context length, and multimodal usability. GPT-4 wins only if you need to preserve behavior in an old integration that was tuned against the original GPT-4 family.

OpenAI described GPT-4o as an omni model that can reason across audio, vision, and text, and said it matched GPT-4 Turbo performance on English text and code while improving non-English language performance.[1] That does not mean every GPT-4o answer is better than every GPT-4 answer. It means GPT-4o is the more practical model for most live products because it gives comparable or better quality with much better efficiency.

OpenAI’s current model documentation lists GPT-4o as a high-intelligence model with text and image input, text output, a 128,000-token context window, and a 16,384-token maximum output.[2] The same documentation lists GPT-4 as an older high-intelligence model with text-only input and output, an 8,192-token context window, and an 8,192-token maximum output.[3] Those differences change how each model feels in real work. GPT-4o can hold more source material, scan images, and return longer structured outputs. GPT-4 feels narrower and more expensive.

| Category | GPT-4o | GPT-4 | Winner |

|---|---|---|---|

| Best use | General text, coding, image understanding, multilingual work, API products | Legacy apps that need original GPT-4 behavior | GPT-4o |

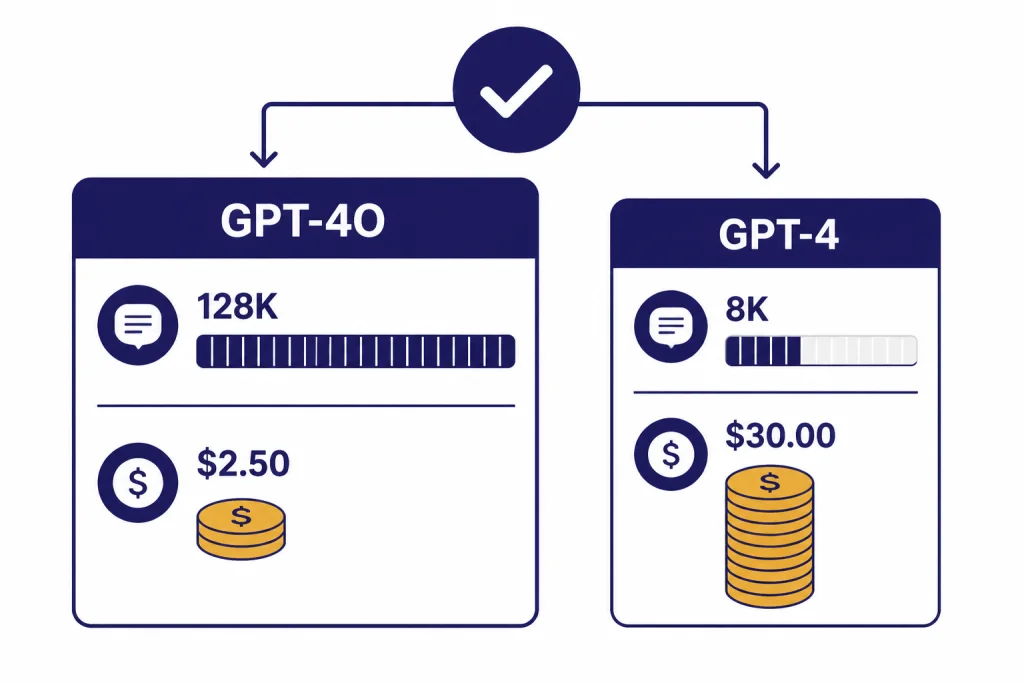

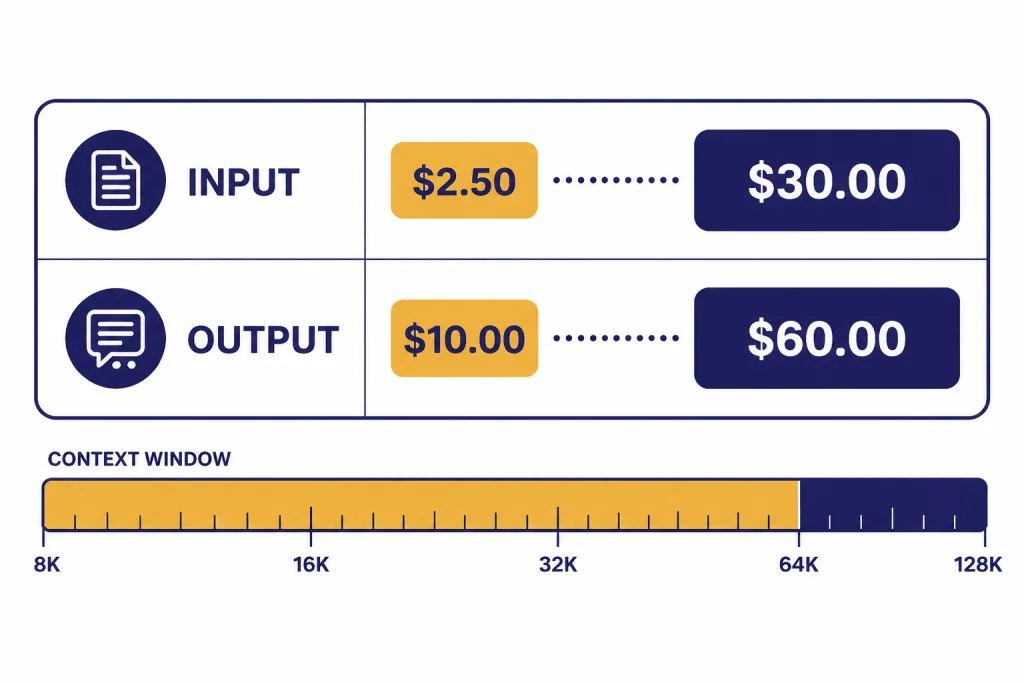

| API input price | $2.50 per 1M tokens | $30.00 per 1M tokens | GPT-4o |

| API output price | $10.00 per 1M tokens | $60.00 per 1M tokens | GPT-4o |

| Context window | 128,000 tokens | 8,192 tokens | GPT-4o |

| Max output | 16,384 tokens | 8,192 tokens | GPT-4o |

| Image input | Supported | Not supported in the listed GPT-4 API model | GPT-4o |

| Behavior stability | Better for modern builds | Useful for old prompts tuned to GPT-4 | Depends |

The table above uses OpenAI’s API model pages for the token prices, context windows, max output limits, modalities, and feature status.[2][3] If you are comparing newer OpenAI generations too, read our GPT-5 vs GPT-4o guide after this one. For a broader model lineup, use all GPT models compared side by side.

Speed test: GPT-4o is built for lower latency

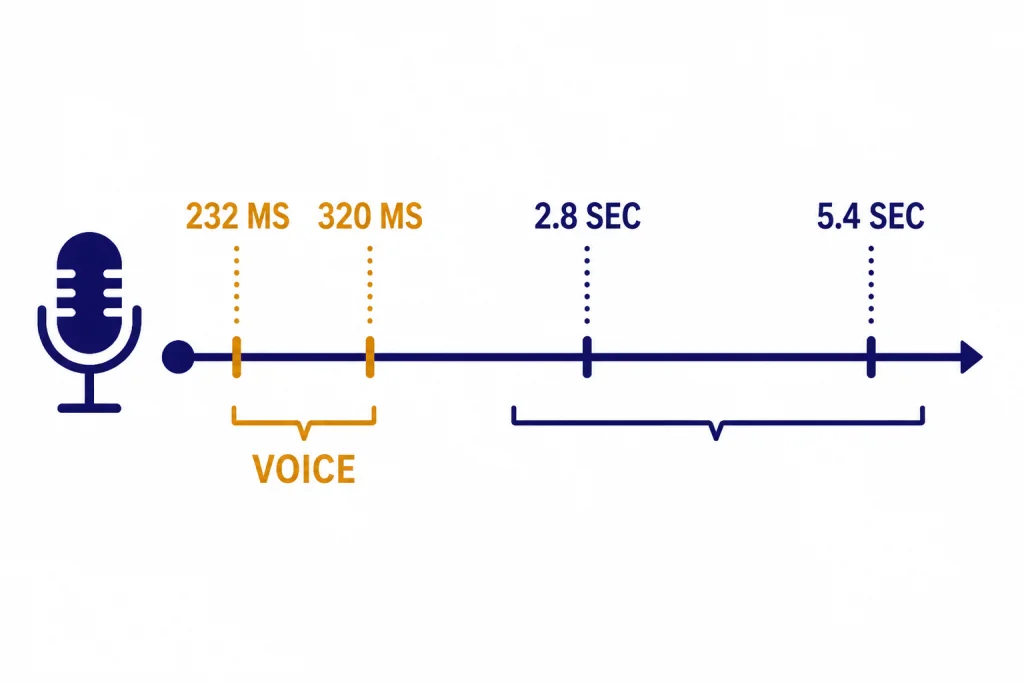

GPT-4o’s speed advantage is not just a small interface improvement. OpenAI said GPT-4o can respond to audio inputs in as little as 232 milliseconds, with a 320-millisecond average, and compared that timing to human conversational response time.[1] The same launch article said the previous Voice Mode pipeline had average latencies of 2.8 seconds with GPT-3.5 and 5.4 seconds with GPT-4.[1]

That comparison matters because GPT-4 used a pipeline for voice interaction. Audio had to be transcribed, the text model had to answer, and another model had to convert text back to audio. OpenAI said that pipeline meant the main GPT-4 model could not directly observe tone, multiple speakers, or background noise.[1] GPT-4o was trained end-to-end across text, vision, and audio, so the architecture was designed for faster multimodal interaction from the beginning.[1]

For text-only API work, the picture is similar, although the published comparison is indirect. OpenAI said GPT-4o was 2x faster than GPT-4 Turbo in the API and had 5x higher rate limits at launch.[1] That statement compares GPT-4o with GPT-4 Turbo, not the original GPT-4 model. Still, it shows the direction clearly: GPT-4o was built as the efficient GPT-4-class model, while the original GPT-4 API model is now listed as older.

In real use, this affects the product feel. A chat support assistant can stream a draft answer sooner. A coding assistant can return a larger patch without forcing the user to wait through a slow first token. A document workflow can include more context and still feel interactive. If raw latency is your top priority, also compare this article with our fastest GPT model benchmark page.
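To put a number on "streams sooner", you can time the first streamed chunk separately from the full response. The sketch below is a minimal, model-agnostic timer. `fake_stream` is a hypothetical stand-in for a real streaming response, and its delays are invented purely for illustration.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_stream(chunks: Iterable[str]) -> Tuple[float, float, str]:
    """Return (seconds_to_first_chunk, total_seconds, full_text) for a stream."""
    start = time.perf_counter()
    first_chunk_at = None
    parts = []
    for chunk in chunks:
        if first_chunk_at is None:
            first_chunk_at = time.perf_counter() - start
        parts.append(chunk)
    total = time.perf_counter() - start
    # An empty stream has no first chunk; report the total time instead.
    if first_chunk_at is None:
        first_chunk_at = total
    return first_chunk_at, total, "".join(parts)


def fake_stream(delay_first: float, delay_rest: float) -> Iterator[str]:
    """Hypothetical stand-in for a streaming API response."""
    time.sleep(delay_first)
    yield "Hello"
    for piece in [", ", "world"]:
        time.sleep(delay_rest)
        yield piece


ttft, total, text = measure_stream(fake_stream(0.05, 0.01))
print(f"first chunk after {ttft:.3f}s, done after {total:.3f}s: {text!r}")
```

In a real comparison you would pass each model's streamed text chunks to `measure_stream` and compare the first-chunk times across the same prompts.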

GPT-4 can still be acceptable for batch jobs where nobody is waiting on the screen. It is harder to justify for interactive apps. Users notice delay before they notice small stylistic differences, especially in chat, voice, and workflow automation.

Quality test: where GPT-4o improves, matches, or trails

GPT-4o is not a pure quality-only upgrade over GPT-4 in every possible prompt. It is better understood as a GPT-4-class model optimized for speed, multimodality, multilingual work, and broader access. OpenAI said GPT-4o achieved GPT-4 Turbo-level performance on text, reasoning, and coding while setting new high watermarks on multilingual, audio, and vision capabilities.[1]

That framing is important. If you test a short English reasoning prompt, GPT-4o and GPT-4 may both produce strong answers. If you test image-heavy work, mixed-language documents, voice interaction, or long source packets, GPT-4o has structural advantages. The older GPT-4 API model is text-only in OpenAI’s current model page, while GPT-4o accepts text and image input.[2][3]

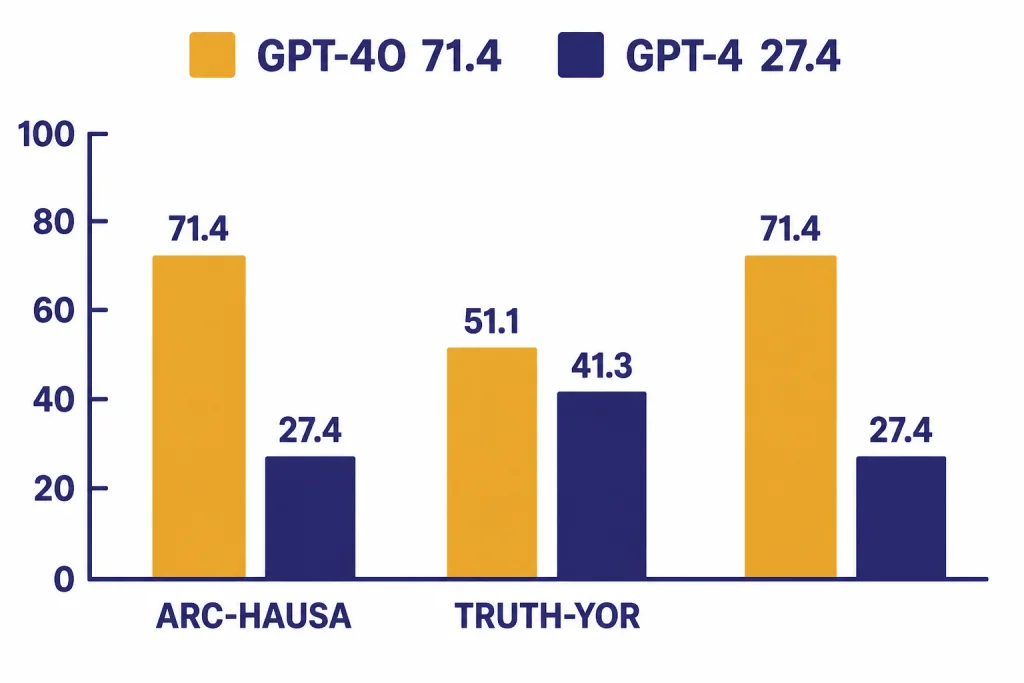

The clearest published quality gap appears in underrepresented language evaluations from the GPT-4o System Card. On translated ARC-Easy in Hausa, OpenAI reported GPT-4 at 27.4% accuracy and GPT-4o at 71.4% accuracy.[5] On translated TruthfulQA in Yoruba, OpenAI reported GPT-4 at 41.3% accuracy and GPT-4o at 51.1% accuracy.[5] On Uhura-Eval in Hausa, OpenAI reported GPT-4 at 41.9% accuracy and GPT-4o at 59.4% accuracy.[5]

Medical and clinical benchmarks also show GPT-4o ahead of GPT-4 Turbo in several published tests. The GPT-4o System Card reported MedQA USMLE 4-option zero-shot accuracy improving from 0.78 for GPT-4 Turbo (GPT-4T, May 2024) to 0.89 for GPT-4o, and MMLU Clinical Knowledge zero-shot improving from 0.85 to 0.92.[5] These are benchmark results, not a license to use either model as a doctor. They show that GPT-4o did not trade away all high-end quality to gain speed.

For English writing, editing, summarization, and general analysis, the quality difference is often less dramatic than the efficiency difference. GPT-4 can still sound careful and polished. GPT-4o is usually more responsive and better suited to multi-input work. If your application depends on deep deliberate reasoning rather than quick GPT-style response, compare GPT vs the o-Series and OpenAI o1 vs o3 before deciding.

The main quality risk with GPT-4o is not that it is weak. It is that migrations can change tone, refusal style, formatting, and edge-case behavior. If an old workflow was heavily prompt-tuned for GPT-4, run a regression set before switching. Include your hardest prompts, not only happy-path examples.

Cost, context, and API features

Cost is where GPT-4o makes GPT-4 look dated. OpenAI’s GPT-4o model page lists text input at $2.50 per 1M tokens, cached input at $1.25 per 1M tokens, and output at $10.00 per 1M tokens.[2] OpenAI’s GPT-4 model page lists input at $30.00 per 1M tokens and output at $60.00 per 1M tokens.[3] OpenAI’s prompt caching article separately lists the same GPT-4o prices for the gpt-4o-2024-08-06 snapshot, which corroborates the GPT-4o pricing figures.[7]
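The per-token prices are easier to compare as a per-request cost. The sketch below uses only the prices quoted in this article. The request size (10,000 input tokens, 1,000 output tokens) is an assumed example, and the function name is illustrative.

```python
# Prices in USD per 1M tokens, as quoted in this article.
PRICES = {
    "gpt-4o": {"input": 2.50, "cached_input": 1.25, "output": 10.00},
    "gpt-4": {"input": 30.00, "output": 60.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int,
                 cached_tokens: int = 0) -> float:
    """Estimate the USD cost of one request at the article's listed prices."""
    price = PRICES[model]
    # Cached tokens are billed at the cached rate when the model lists one.
    cached_rate = price.get("cached_input", price["input"])
    uncached = input_tokens - cached_tokens
    total = (uncached * price["input"]
             + cached_tokens * cached_rate
             + output_tokens * price["output"])
    return total / 1_000_000


for model in PRICES:
    cost = request_cost(model, input_tokens=10_000, output_tokens=1_000)
    print(f"{model}: ${cost:.4f} per request")
```

For that assumed request shape, the result is about $0.035 on GPT-4o versus $0.36 on GPT-4, roughly a tenfold difference before any caching discount.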

The context gap is just as important. GPT-4o is listed with a 128,000-token context window and 16,384-token maximum output.[2] GPT-4 is listed with an 8,192-token context window and 8,192-token maximum output.[3] If your prompt includes long files, transcripts, code repositories, contracts, or many retrieved passages, GPT-4o gives you much more room before you need chunking or retrieval tricks.
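A rough way to see when chunking becomes necessary is to compute how many requests a document needs under each limit. This sketch uses the limits quoted above and assumes that prompt and completion tokens share one context window. The 200-token instruction overhead and the 60,000-token document are hypothetical values.

```python
import math

# Limits in tokens, as quoted in this article.
LIMITS = {
    "gpt-4o": {"context": 128_000, "max_output": 16_384},
    "gpt-4": {"context": 8_192, "max_output": 8_192},
}


def input_budget(model: str, reserved_output: int) -> int:
    """Tokens left for the prompt after reserving room for the answer."""
    limits = LIMITS[model]
    if reserved_output > limits["max_output"]:
        raise ValueError(f"{model} cannot return {reserved_output} tokens")
    # Assumption: prompt and completion share the same context window.
    return limits["context"] - reserved_output


def chunks_needed(model: str, document_tokens: int, reserved_output: int,
                  instruction_tokens: int = 200) -> int:
    """How many separate requests a document needs if split to fit."""
    per_request = input_budget(model, reserved_output) - instruction_tokens
    if per_request <= 0:
        raise ValueError("no room left for document text")
    return math.ceil(document_tokens / per_request)


for model in LIMITS:
    n = chunks_needed(model, document_tokens=60_000, reserved_output=1_000)
    print(f"{model}: {n} request(s) for a 60,000-token document")
```

Under these assumptions the document fits in a single GPT-4o request but needs nine GPT-4 requests, each of which loses the context held in the others.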

Feature support also favors GPT-4o. OpenAI’s model page lists GPT-4o support for streaming, function calling, structured outputs, fine-tuning, and predicted outputs.[2] The GPT-4 page lists streaming and fine-tuning as supported, but function calling, structured outputs, and predicted outputs as not supported.[3] For developers building tools, that changes the integration design. GPT-4o is easier to use for typed JSON responses, tool calls, and production workflows that need predictable output shapes.
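As an illustration of what "predictable output shapes" means in practice, the sketch below builds a request payload that asks for schema-bound JSON and then checks a reply against that schema. The `response_format` shape follows OpenAI's `json_schema` response format as we understand it, so verify it against the current API reference before relying on it. The ticket schema, the function names, and the sample reply are all hypothetical, and nothing is sent over the network.

```python
import json

# JSON Schema for the reply shape; field names are hypothetical examples.
TICKET_SCHEMA = {
    "type": "object",
    "properties": {
        "category": {"type": "string", "enum": ["billing", "bug", "other"]},
        "summary": {"type": "string"},
    },
    "required": ["category", "summary"],
    "additionalProperties": False,
}


def build_request(user_text: str) -> dict:
    """Build a Chat Completions-style payload asking for schema-bound JSON."""
    return {
        "model": "gpt-4o",
        "messages": [
            {"role": "system", "content": "Classify the support ticket."},
            {"role": "user", "content": user_text},
        ],
        "response_format": {
            "type": "json_schema",
            "json_schema": {"name": "ticket", "strict": True,
                            "schema": TICKET_SCHEMA},
        },
    }


def parse_reply(raw: str) -> dict:
    """Parse a reply and check the schema's required keys and category enum."""
    data = json.loads(raw)
    missing = [k for k in TICKET_SCHEMA["required"] if k not in data]
    if missing:
        raise ValueError(f"missing keys: {missing}")
    allowed = TICKET_SCHEMA["properties"]["category"]["enum"]
    if data["category"] not in allowed:
        raise ValueError(f"unexpected category: {data['category']}")
    return data


payload = build_request("I was charged twice this month.")
print(json.dumps(payload["response_format"], indent=2))
# Hand-written sample reply, standing in for real model output.
print(parse_reply('{"category": "billing", "summary": "Double charge"}'))
```

Keeping a local check like `parse_reply` is a reasonable safeguard even when the API enforces the schema, because it also covers replies from models or code paths that do not.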

That does not mean GPT-4o is always the cheapest OpenAI option. Smaller models can cost less, and newer models may be better for some workloads. But inside this direct GPT-4o vs GPT-4 comparison, GPT-4o is the obvious cost winner. For a current price-by-model view, use our OpenAI API pricing guide.

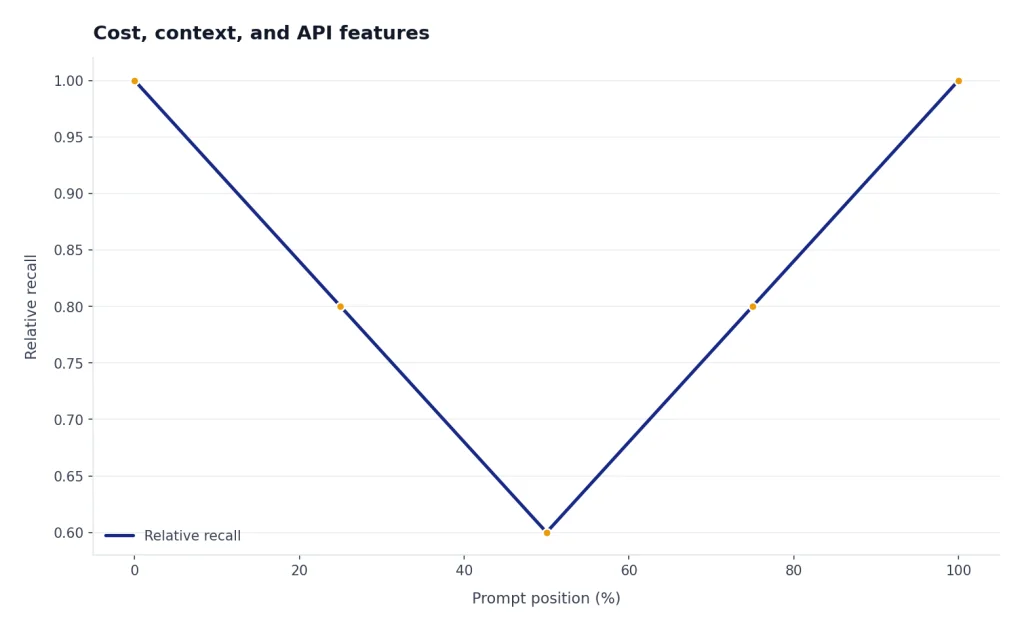

Context size also affects accuracy in practice. A larger window lets you include more relevant source material, but it does not guarantee that the model will use every detail perfectly. Long-context testing should check recall at the beginning, middle, and end of the prompt. For more detail on token limits across model families, see our context window sizes for every GPT model reference.
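One simple way to run that kind of position check is to plant a known fact at different points in a long prompt and ask the model to retrieve it. The sketch below only builds the probes and a crude scoring helper. The filler text, the planted fact, and the function names are hypothetical, and the model call itself is left out.

```python
def build_probe(filler: list[str], fact: str, position: str) -> str:
    """Place one known fact at the start, middle, or end of filler text."""
    index = {"start": 0, "middle": len(filler) // 2, "end": len(filler)}[position]
    lines = filler[:index] + [fact] + filler[index:]
    return "\n".join(lines)


def recalled(answer: str, expected: str) -> bool:
    """Crude check: did the model's answer contain the planted value?"""
    return expected.lower() in answer.lower()


# Hypothetical filler and fact; real tests would use your own documents.
filler = [f"Paragraph {i}: routine log entry." for i in range(1000)]
fact = "The vault code is 7341."

for position in ("start", "middle", "end"):
    prompt = build_probe(filler, fact, position)
    line_no = prompt.split("\n").index(fact)
    print(f"{position}: fact on line {line_no} of {len(filler) + 1}")
```

Each probe would then be sent with a question such as "What is the vault code?" and scored with `recalled(answer, "7341")`, so recall can be compared by position.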

ChatGPT availability in 2026

This comparison needs a 2026 caveat. OpenAI’s Help Center says GPT-4o and several other models were deprecated in ChatGPT on February 13, 2026, and that those models continue to be available in the API.[6] It also says ChatGPT Business, Enterprise, and Edu customers retain GPT-4o access within Custom GPTs until April 3, 2026, after which GPT-4o is fully retired across ChatGPT plans.[6]

That means most readers should not treat GPT-4o vs GPT-4 as a normal ChatGPT model-picker decision. It is mainly an API, migration, and legacy-support comparison. If you are choosing a consumer ChatGPT plan, plan-level access matters more than this old model pair. Use ChatGPT Free vs Plus vs Pro, ChatGPT Plus vs Team, or ChatGPT Pro vs Team for current subscription decisions.

For developers, the API question remains relevant. OpenAI’s current model pages still list both GPT-4o and GPT-4, with GPT-4o positioned as fast, intelligent, and flexible, and GPT-4 positioned as an older high-intelligence GPT model.[2][3] If you maintain an old GPT-4 integration, you should decide whether compatibility is worth the higher token cost and smaller context window.

If you are starting a new project, do not start with GPT-4 unless you have a specific compatibility reason. Build against a newer model first, then use GPT-4o only if your product, evaluation suite, or vendor requirement points there. If your comparison set includes GPT-4 Turbo, read GPT-4 vs GPT-4 Turbo before making a final migration plan.

Which model should you use?

Use GPT-4o over GPT-4 if your workload includes any of the following: long context, image input, multilingual text, structured outputs, function calling, or interactive response speed. Those are not edge cases. They are core requirements for many modern AI products.

Keep GPT-4 only when you are maintaining an old integration whose behavior has already been validated with GPT-4. In that case, migration risk may be more expensive than token cost for a short period. But even then, the right move is usually to create a test set and plan a controlled migration rather than freezing forever on GPT-4.

For a practical migration test, collect representative prompts from production. Include short user questions, long documents, structured-output calls, refusal-sensitive prompts, coding tasks, and multilingual cases if your users need them. Compare answer quality, latency, output format validity, cost per completed task, and failure mode. GPT-4o should win most of those categories, but your own workload is the final judge.
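The comparison described above can be sketched as a small harness that runs the same prompts through two models and records latency and output-format validity. The model functions here are hypothetical stubs so that the example runs offline. In a real test they would wrap your actual API calls, and you would add quality scoring and per-task cost.

```python
import json
import time
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Result:
    latency_s: float
    valid_json: bool


def is_valid_json(text: str) -> bool:
    """Return True when the reply parses as JSON."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


def run_suite(call_model: Callable[[str], str], prompts: List[str]) -> List[Result]:
    """Run every prompt through one model; record latency and format validity."""
    results = []
    for prompt in prompts:
        start = time.perf_counter()
        reply = call_model(prompt)
        results.append(Result(time.perf_counter() - start, is_valid_json(reply)))
    return results


def summarize(results: List[Result]) -> Dict[str, float]:
    """Average the per-prompt measurements for one model."""
    return {
        "mean_latency_s": sum(r.latency_s for r in results) / len(results),
        "valid_json_rate": sum(r.valid_json for r in results) / len(results),
    }


# Hypothetical stand-ins; replace with functions that call each real model.
def old_model(prompt: str) -> str:
    return '{"answer": "ok"}' if "json" in prompt else "Sure, here you go"


def new_model(prompt: str) -> str:
    return '{"answer": "ok"}'


prompts = ["Reply in json: status?", "Summarize this contract."]
for name, fn in [("old", old_model), ("new", new_model)]:
    print(name, summarize(run_suite(fn, prompts)))
```

Passing the model call in as a plain function keeps the harness independent of any SDK, so the same prompt set can be replayed against each candidate model.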

If you want a smaller and cheaper model in the same family, read GPT-4o vs GPT-4o mini. If you are deciding between legacy GPT-4-era models and newer OpenAI systems, start with GPT-4 vs GPT-5 instead.

Our recommendation is direct: choose GPT-4o for legacy API work unless you have a proven reason not to. Choose GPT-4 only for compatibility. Choose a newer model family when the project is new and you are not constrained by legacy prompts.

Frequently asked questions

Is GPT-4o better than GPT-4?

Yes, for most practical uses. GPT-4o is faster, cheaper, more multimodal, and has a much larger context window than the listed GPT-4 API model.[2][3] GPT-4 may still be useful when an old workflow was tuned specifically to its behavior.

Is GPT-4o faster than GPT-4?

Yes. OpenAI said GPT-4o could respond to audio inputs in as little as 232 milliseconds with a 320-millisecond average, while the earlier GPT-4 voice pipeline averaged 5.4 seconds.[1] OpenAI also said GPT-4o was 2x faster than GPT-4 Turbo in the API at launch.[1]

Does GPT-4o cost less than GPT-4?

Yes. OpenAI lists GPT-4o at $2.50 per 1M input tokens and $10.00 per 1M output tokens.[2] It lists GPT-4 at $30.00 per 1M input tokens and $60.00 per 1M output tokens.[3]

Can GPT-4o handle images?

Yes. OpenAI’s GPT-4o model page lists text and image as supported inputs and text as the output.[2] The listed GPT-4 API model is text-only in OpenAI’s current model documentation.[3]

Can I still use GPT-4o in ChatGPT?

Usually no. OpenAI says GPT-4o was deprecated in ChatGPT on February 13, 2026, while continuing to be available in the API.[6] Business, Enterprise, and Edu customers had temporary Custom GPT access through April 3, 2026.[6]

Should developers migrate from GPT-4 to GPT-4o?

Most should. GPT-4o has lower token prices, a larger context window, image input, structured outputs, and function calling support.[2] Keep GPT-4 only if your app depends on old GPT-4 behavior and you have not finished regression testing.