GPT-4o is worth the upgrade over GPT-4o mini when answer quality, vision reasoning, multilingual nuance, or high-value user interactions matter more than token cost. GPT-4o mini is the better default for high-volume automation, classification, tagging, extraction, simple support flows, and other focused tasks where speed and cost control matter. The key catch in 2026 is availability. OpenAI has retired GPT-4o and GPT-4o mini from ChatGPT, while API access remains unchanged.[5] So this comparison is mainly for developers, teams maintaining older workflows, and buyers deciding whether a GPT-4o API call is justified over a cheaper mini-model call.

Quick verdict

Use GPT-4o when the task is ambiguous, visual, customer-facing, multilingual, or expensive to get wrong. Use GPT-4o mini when the task is repetitive, well-scoped, and easy to verify. OpenAI describes GPT-4o as a high-intelligence flagship model for most tasks, while GPT-4o mini is described as a fast, affordable small model for focused tasks.[1][2]

The simple rule is this: start with GPT-4o mini, then upgrade only the calls that fail quality checks. That is usually better than sending every request to GPT-4o. If you are comparing current ChatGPT subscriptions rather than API models, read our ChatGPT Free vs Plus vs Pro guide instead, because GPT-4o and GPT-4o mini are no longer the main ChatGPT upgrade path.[5]

| Question | Better choice | Why |

|---|---|---|

| Best quality for complex prompts | GPT-4o | OpenAI positions it as the higher-intelligence flagship option.[1] |

| Lowest cost at scale | GPT-4o mini | Its listed API input and output prices are far lower.[2] |

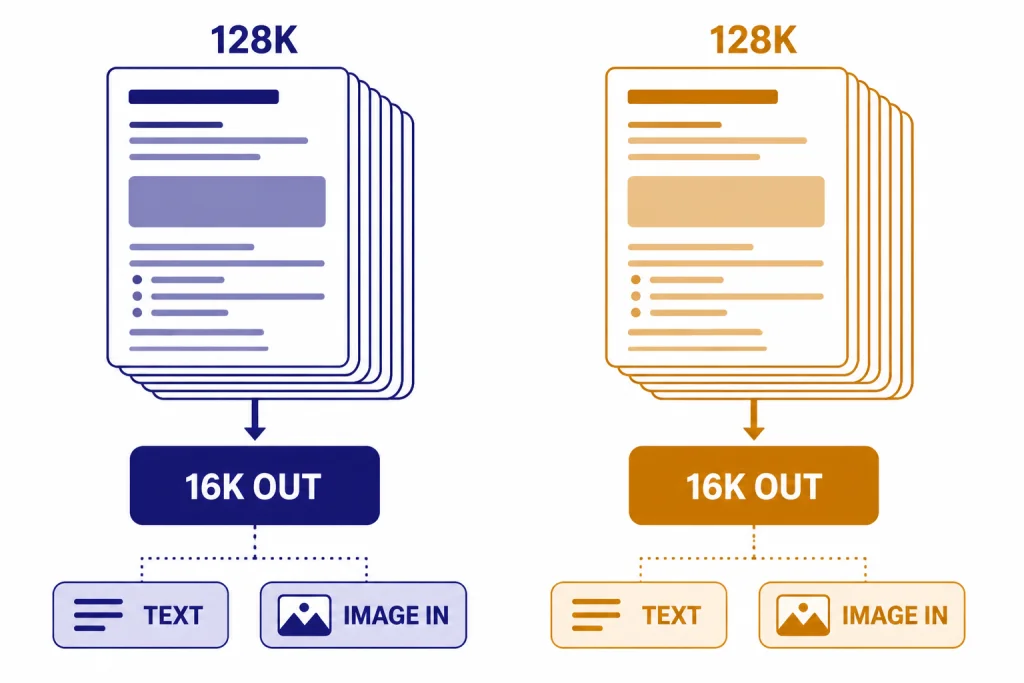

| Long context | Tie | Both model docs list a 128,000-token context window.[1][2] |

| Large single response | Tie | Both model docs list 16,384 max output tokens.[1][2] |

| Simple extraction, routing, tags | GPT-4o mini | Its lower price makes repeated focused calls easier to justify. |

| High-stakes final answer | GPT-4o | The quality margin is usually worth the added cost. |

ChatGPT and API availability in 2026

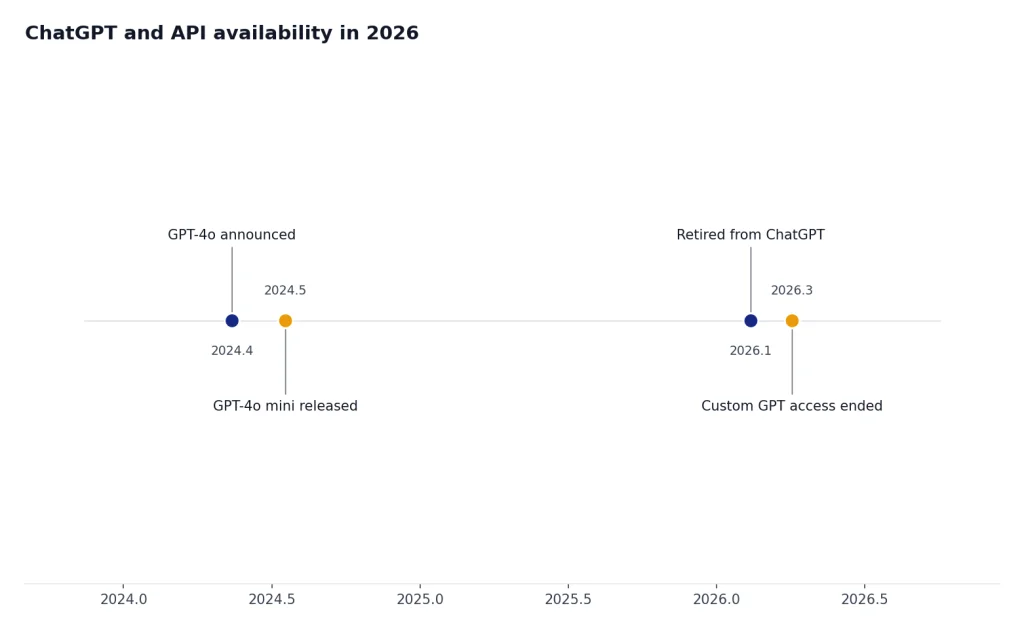

The most important update is that this is no longer a normal ChatGPT model-picker decision. OpenAI’s Help Center says that, as of February 13, 2026, GPT-4o and several other legacy models were retired from ChatGPT, and that API access remained unchanged.[5] The same Help Center page also says Business, Enterprise, and Edu customers retained GPT-4o access inside Custom GPTs only until April 3, 2026.[5]

That means “upgrade” has two different meanings. In ChatGPT, users should compare current plans and current models, not assume GPT-4o is the paid upgrade over GPT-4o mini. For that path, see ChatGPT Plus vs Team, ChatGPT Pro vs Team, or ChatGPT Team vs Enterprise.

In the API, the comparison still matters. The GPT-4o API model page lists the model, its context window, max output, pricing, endpoints, and supported features.[1] The GPT-4o mini API model page does the same for the smaller model.[2] If you are building or maintaining a product, the practical question is not whether GPT-4o is “better.” It is whether it is better enough for the specific request.

Cost difference

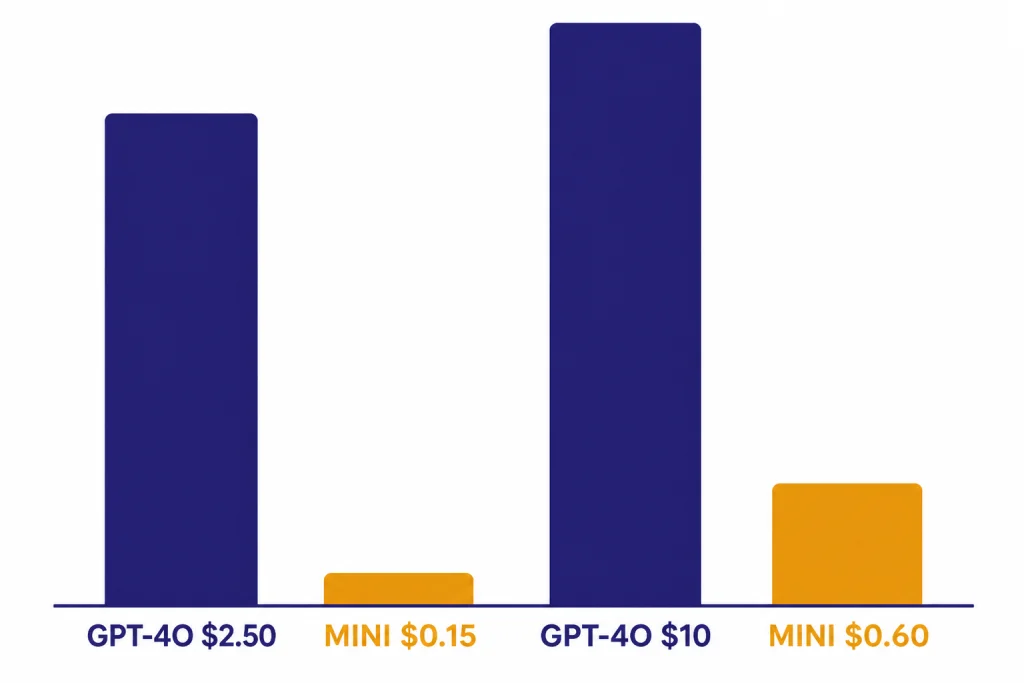

GPT-4o costs $2.50 per 1 million input tokens, $1.25 per 1 million cached input tokens, and $10.00 per 1 million output tokens in OpenAI’s listed API model page.[1] GPT-4o mini costs $0.15 per 1 million input tokens, $0.075 per 1 million cached input tokens, and $0.60 per 1 million output tokens.[2]

That makes GPT-4o about 16.7 times as expensive as GPT-4o mini for both standard input tokens ($2.50 versus $0.15) and standard output tokens ($10.00 versus $0.60), based on OpenAI’s listed prices.[1][2] The ratio is the same for cached input tokens. This difference dominates the decision for products that send millions of small requests.

| Example API workload | GPT-4o estimated token cost | GPT-4o mini estimated token cost | Practical takeaway |
|---|---|---|---|
| 1 million input tokens and 250,000 output tokens | $5.00 | $0.30 | Mini saves $4.70 before any quality review. |
| 10 million input tokens and 2.5 million output tokens | $50.00 | $3.00 | Mini can make bulk processing economically viable. |
| 100 million input tokens and 25 million output tokens | $500.00 | $30.00 | Routing matters more than small prompt optimizations. |

These examples use only the listed text-token prices and a fixed 4:1 input-to-output ratio. They do not include tool calls, storage, retries, fine-tuning, moderation, or platform overhead. For broader price comparisons across current models, use our OpenAI API pricing reference.
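The arithmetic behind the table can be reproduced with a few lines of Python. This is a minimal sketch that assumes the per-million-token prices quoted above are still current. The function name `estimate_cost` and the dictionary layout are illustrative choices, not part of any OpenAI SDK.

```python
# Listed per-1M-token prices quoted in this article, in USD.
# Check the model pages before relying on these in a budget.
PRICES_PER_MILLION = {
    "gpt-4o": {"input": 2.50, "cached_input": 1.25, "output": 10.00},
    "gpt-4o-mini": {"input": 0.15, "cached_input": 0.075, "output": 0.60},
}


def estimate_cost(model, input_tokens, output_tokens, cached_input_tokens=0):
    """Estimate text-token cost in USD.

    Hypothetical helper: cached tokens are passed separately and are
    not included in input_tokens.
    """
    price = PRICES_PER_MILLION[model]
    total = (
        input_tokens * price["input"]
        + cached_input_tokens * price["cached_input"]
        + output_tokens * price["output"]
    )
    return total / 1_000_000


if __name__ == "__main__":
    workloads = [
        (1_000_000, 250_000),
        (10_000_000, 2_500_000),
        (100_000_000, 25_000_000),
    ]
    for inp, out in workloads:
        full = estimate_cost("gpt-4o", inp, out)
        mini = estimate_cost("gpt-4o-mini", inp, out)
        print(f"{inp:,} in / {out:,} out: GPT-4o ${full:,.2f}, mini ${mini:,.2f}")
```

Swapping in your own measured input and output token counts is more useful than the fixed 4:1 ratio, because output-heavy workloads shift the total toward the higher output price.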

Capability differences

Both models support text and image input with text output in the API, according to OpenAI’s model pages.[1][2] Both pages also list Structured Outputs, function calling, streaming, fine-tuning, and predicted outputs as supported features.[1][2] On paper, that makes the feature checklist look very similar.

The difference is not mainly the context window. Both model pages list a 128,000-token context window and a 16,384-token max output.[1][2] The difference is how reliably each model uses that context, follows nuanced instructions, weighs evidence, and handles messy inputs. A big context window does not guarantee strong reasoning across the whole prompt.
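Because the limits are identical, one pre-flight check covers both models. The sketch below assumes that prompt tokens and generated tokens share the same context window, and that you already have a token count from your own tokenizer. `fits_limits` is a hypothetical helper name.

```python
CONTEXT_WINDOW = 128_000     # tokens, as listed on both model pages
MAX_OUTPUT_TOKENS = 16_384   # tokens, as listed on both model pages


def fits_limits(prompt_tokens: int, requested_output_tokens: int) -> bool:
    """Return True if a request stays within the listed limits.

    Assumption: prompt and output tokens count against the same
    context window.
    """
    if requested_output_tokens > MAX_OUTPUT_TOKENS:
        return False
    return prompt_tokens + requested_output_tokens <= CONTEXT_WINDOW
```

Passing this check only means the request is within the listed limits. It says nothing about how well either model will use a prompt of that size.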

OpenAI’s original GPT-4o announcement said GPT-4o accepts combinations of text, audio, image, and video inputs and can generate combinations of text, audio, and image outputs.[3] However, the current GPT-4o API model page lists only text and image input with text output.[1] Treat the specific API model page as the safer source when you are deciding what to implement.

If you need a wider map of model families, see all GPT models compared side by side. If your question is mainly about context limits, use our context window comparison.

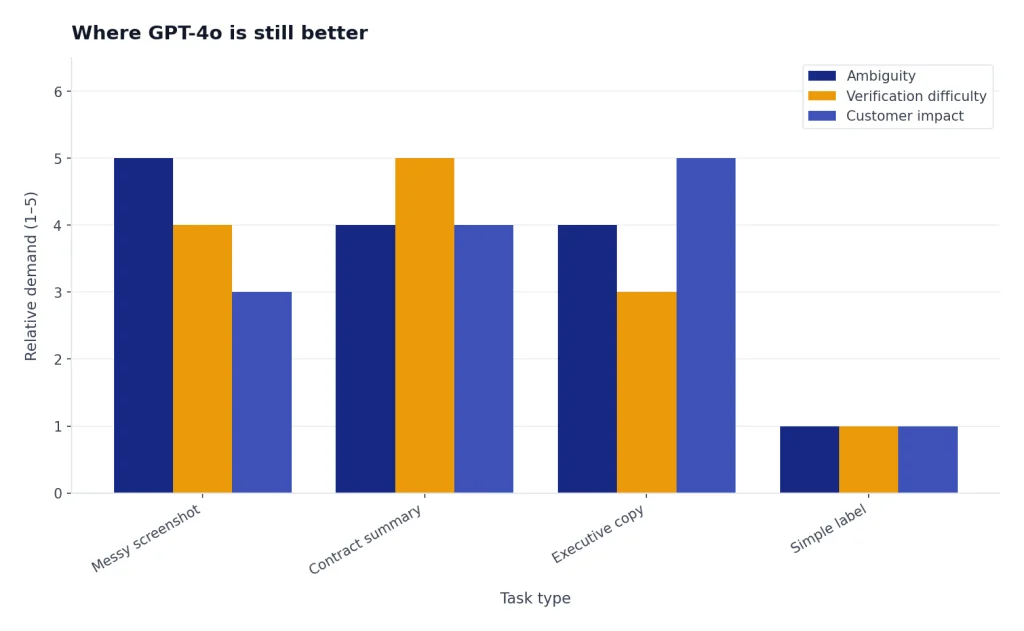

Where GPT-4o is still better

GPT-4o is the better choice when the task involves judgment. Examples include interpreting a messy screenshot, reconciling conflicting notes, revising executive copy, reviewing a contract summary, or explaining a technical failure to a customer. These tasks are not only about producing text. They require prioritization and restraint.

OpenAI’s GPT-4o launch post said the model matched GPT-4 Turbo performance on English text and code, improved on non-English text, and was especially better at vision and audio understanding than existing models at launch.[3] Its system card also describes GPT-4o as an end-to-end omni model trained across text, vision, and audio, with safety evaluations focused heavily on speech-to-speech while also covering text and image capabilities.[7]

In practical testing, the upgrade is most noticeable when prompts are under-specified. GPT-4o is usually better at asking the right clarifying question, preserving intent during rewriting, and avoiding brittle literalism. GPT-4o mini can complete many of the same jobs, but it is more likely to need tighter templates, narrower instructions, and post-processing.

For coding, GPT-4o is also the safer choice when the model must reason across architecture, logs, tests, and ambiguous requirements. For quick code labeling or simple snippets, GPT-4o mini is often enough. If you are comparing reasoning-first models rather than general GPT models, read GPT vs the o-Series or OpenAI o1 vs o3.

Where GPT-4o mini is the smarter choice

GPT-4o mini is strongest when the task can be reduced to a clear instruction and a predictable output shape. Good examples include sentiment labels, support-ticket routing, product attribute extraction, email triage, search-query rewriting, tag generation, translation drafts, and first-pass summaries. These tasks benefit from cost efficiency more than marginal reasoning gains.

OpenAI’s GPT-4o mini release said the model scored 82.0% on MMLU, 87.0% on MGSM, 87.2% on HumanEval, and 59.4% on MMMU in the evaluations OpenAI reported.[4] The same release positioned GPT-4o mini for low-cost, low-latency applications that chain multiple calls, pass large context, or respond quickly in customer support settings.[4]

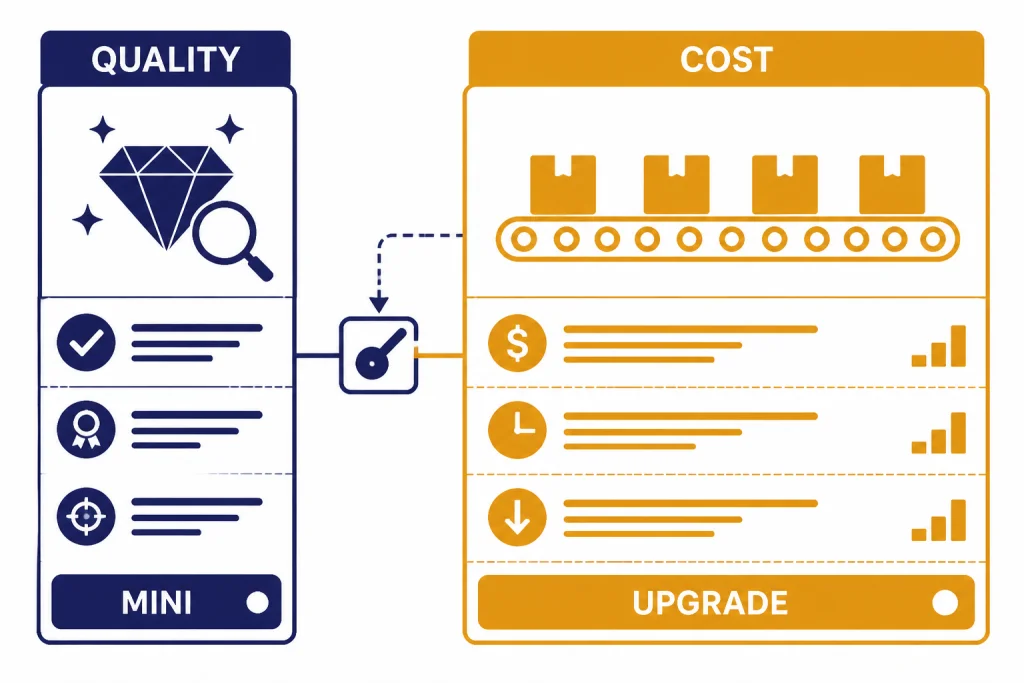

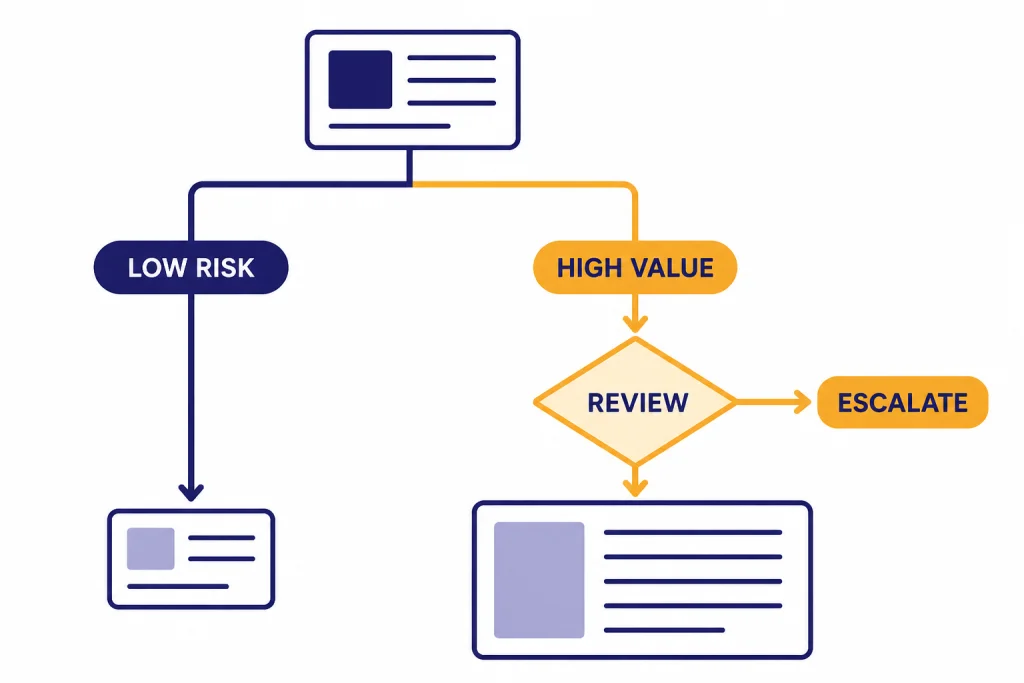

The best mini-model workflows add guardrails. Use structured outputs. Validate fields. Retry only failed rows. Send low-confidence cases to GPT-4o or a human reviewer. This pattern usually beats a one-model strategy because most production workloads contain a mix of easy and hard requests.
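The guardrail pattern above can be sketched as follows. Everything here is illustrative: `call_model` is a hypothetical callable you would wrap around your own API client, the label set is invented, and the self-reported `confidence` field is an assumption about your output schema, not something the models provide by default.

```python
from typing import Any, Callable, Dict, List

ALLOWED_LABELS = {"billing", "technical", "other"}  # hypothetical label set


def is_valid(result: Any) -> bool:
    """Validate the assumed output shape: a known label and a 0-1 confidence."""
    if not isinstance(result, dict):
        return False
    label = result.get("label")
    confidence = result.get("confidence")
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        return False
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return False
    return 0.0 <= confidence <= 1.0


def process_rows(
    rows: List[str],
    call_model: Callable[[str, str], Any],
    min_confidence: float = 0.8,
    max_retries: int = 1,
) -> List[Dict[str, Any]]:
    results = []
    for row in rows:
        answer = None
        # Retry only this row, and only while its output fails validation.
        for _ in range(1 + max_retries):
            candidate = call_model("gpt-4o-mini", row)
            if is_valid(candidate):
                answer = candidate
                break
        model_used = "gpt-4o-mini"
        # Escalate rows that never validated or that report low confidence.
        if answer is None or answer["confidence"] < min_confidence:
            answer = call_model("gpt-4o", row)
            model_used = "gpt-4o"
        results.append({
            "row": row,
            "model": model_used,
            "answer": answer,
            # If even the escalated answer is malformed, flag it for a person.
            "needs_human": not is_valid(answer),
        })
    return results
```

The threshold and retry count are placeholders. In practice they are worth tuning against a labeled sample, since a self-reported confidence score is only a rough signal.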

GPT-4o mini is also useful as a drafting layer. It can create the first summary, extract the entities, or normalize the data. GPT-4o can then handle the final answer, escalation, or customer-visible explanation. This two-step approach is especially useful in support, compliance review, and sales operations.
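A rough sketch of that two-step flow is shown below. It assumes a hypothetical `call_model(model, prompt)` callable that returns text, and the prompt wording is only an example.

```python
from typing import Callable


def draft_then_finalize(ticket_text: str, call_model: Callable[[str, str], str]) -> str:
    """Run a cheap drafting pass, then hand the draft to the larger model."""
    # Step 1: the smaller model produces working notes.
    draft = call_model(
        "gpt-4o-mini",
        "Summarize the issue and list the key entities:\n" + ticket_text,
    )
    # Step 2: the larger model writes the customer-visible text and sees
    # the original input too, so it can catch errors in the draft.
    final_prompt = (
        "Write the customer-facing reply. Use the draft notes, "
        "but verify them against the original ticket.\n\n"
        f"Draft notes:\n{draft}\n\nOriginal ticket:\n{ticket_text}"
    )
    return call_model("gpt-4o", final_prompt)
```

Passing the original text alongside the draft costs extra input tokens on the second call, but it keeps the final step from inheriting a mistake made during drafting.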

Decision guide

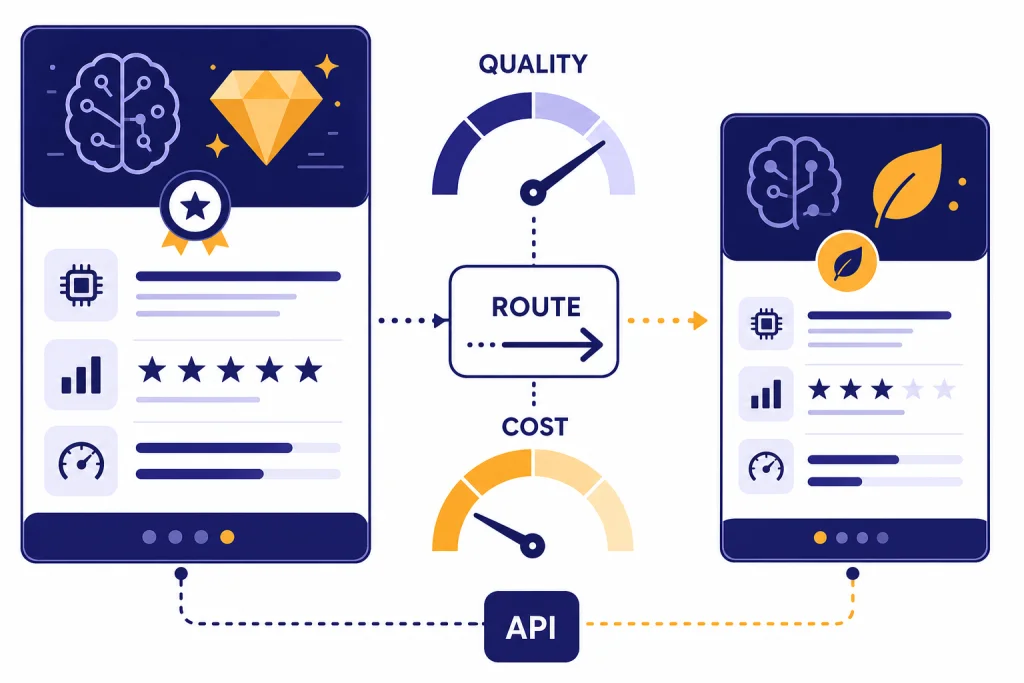

The best answer is not “always upgrade.” The best answer is “route.” Use GPT-4o mini as the default for low-risk requests, then route selected cases to GPT-4o when the request is valuable, vague, visual, or hard to verify. This keeps cost predictable without forcing your hardest tasks through the weaker model.

- Choose GPT-4o mini first for classification, extraction, tagging, routing, templated replies, and bulk processing.

- Upgrade to GPT-4o for nuanced writing, complex analysis, image-heavy tasks, multilingual nuance, and final customer-facing responses.

- Use both when you can let mini handle the first pass and GPT-4o handle exceptions or final review.

- Do not use either blindly for regulated, medical, legal, or financial decisions without domain review and appropriate controls.

A simple production rule works well: if the output is cheap to check, use GPT-4o mini. If the output is expensive to check, expensive to correct, or directly visible to an important user, use GPT-4o. If you are choosing among newer OpenAI generations, compare this with GPT-5 vs GPT-4o and GPT-5 vs GPT-5.1.
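Written as code, the production rule is a single conditional. The three flags are hypothetical inputs that your application would have to supply, for example from request metadata.

```python
def choose_model(
    cheap_to_check: bool,
    expensive_to_correct: bool = False,
    important_user_visible: bool = False,
) -> str:
    """Return the model ID suggested by the rule of thumb above."""
    if not cheap_to_check or expensive_to_correct or important_user_visible:
        return "gpt-4o"
    return "gpt-4o-mini"
```

For instance, `choose_model(cheap_to_check=True)` selects the mini model, while any request flagged as expensive to correct goes to GPT-4o regardless of the other flags.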

For most API teams, GPT-4o mini should be the baseline. GPT-4o should be the escalation model. That is the cleanest way to make the upgrade worth paying for.
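To see what escalation does to the bill, it helps to estimate a blended cost. The sketch below assumes every request first runs on GPT-4o mini and that escalated requests have the same token profile as the rest. Both are simplifications, and `blended_cost` is a hypothetical helper.

```python
def blended_cost(mini_cost_all: float, gpt4o_cost_all: float, escalation_rate: float) -> float:
    """Estimate total cost when a fraction of requests is re-run on GPT-4o.

    mini_cost_all: cost of running the whole workload on GPT-4o mini.
    gpt4o_cost_all: cost of running the whole workload on GPT-4o.
    escalation_rate: fraction of requests escalated, between 0 and 1.
    """
    if not 0.0 <= escalation_rate <= 1.0:
        raise ValueError("escalation_rate must be between 0 and 1")
    # Every request pays for the mini pass; escalated ones also pay for GPT-4o.
    return mini_cost_all + escalation_rate * gpt4o_cost_all


if __name__ == "__main__":
    # Figures from the cost table: 10M input and 2.5M output tokens.
    total = blended_cost(mini_cost_all=3.00, gpt4o_cost_all=50.00, escalation_rate=0.10)
    print(f"Blended cost at 10% escalation: ${total:.2f}")
```

Under these assumptions, escalating 10% of the 10-million-input-token workload costs about $8.00, compared with $50.00 for sending everything to GPT-4o. The estimate ignores retries and any extra prompt text added on escalation.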

Frequently asked questions

Is GPT-4o better than GPT-4o mini?

Yes, GPT-4o is the stronger model for complex, ambiguous, visual, and customer-facing work. GPT-4o mini is not meant to beat it on overall quality. It is meant to deliver acceptable quality at much lower cost for focused tasks.

Is GPT-4o mini good enough for production?

Yes, if the workflow is narrow and you validate the output. It is a good fit for extraction, labeling, routing, and structured transformations. It is a poor fit for tasks where a subtle mistake can create legal, financial, safety, or customer-trust problems.

Are GPT-4o and GPT-4o mini still available in ChatGPT?

Not as normal ChatGPT choices as of this article’s publication date. OpenAI says GPT-4o and other legacy models were retired from ChatGPT on February 13, 2026, and that API access remained unchanged.[5] Business, Enterprise, and Edu Custom GPT access to GPT-4o ended after April 3, 2026.[5]

Do GPT-4o and GPT-4o mini have the same context window?

OpenAI’s API model pages list a 128,000-token context window for both GPT-4o and GPT-4o mini.[1][2] That does not mean they perform equally on long prompts. GPT-4o is usually the safer choice when the model must synthesize many parts of a large context.

Why not use GPT-4o for everything?

Cost is the main reason. GPT-4o’s listed standard input and output token prices are about 16.7 times GPT-4o mini’s listed prices.[1][2] At production scale, that difference can be larger than the value of the quality improvement on easy tasks.

What is the best upgrade strategy?

Use GPT-4o mini for the first pass and route exceptions to GPT-4o. Escalate when confidence is low, the prompt includes images, the user is high value, or the answer will be published without review. This gives you most of the cost benefit without giving up quality where it matters.