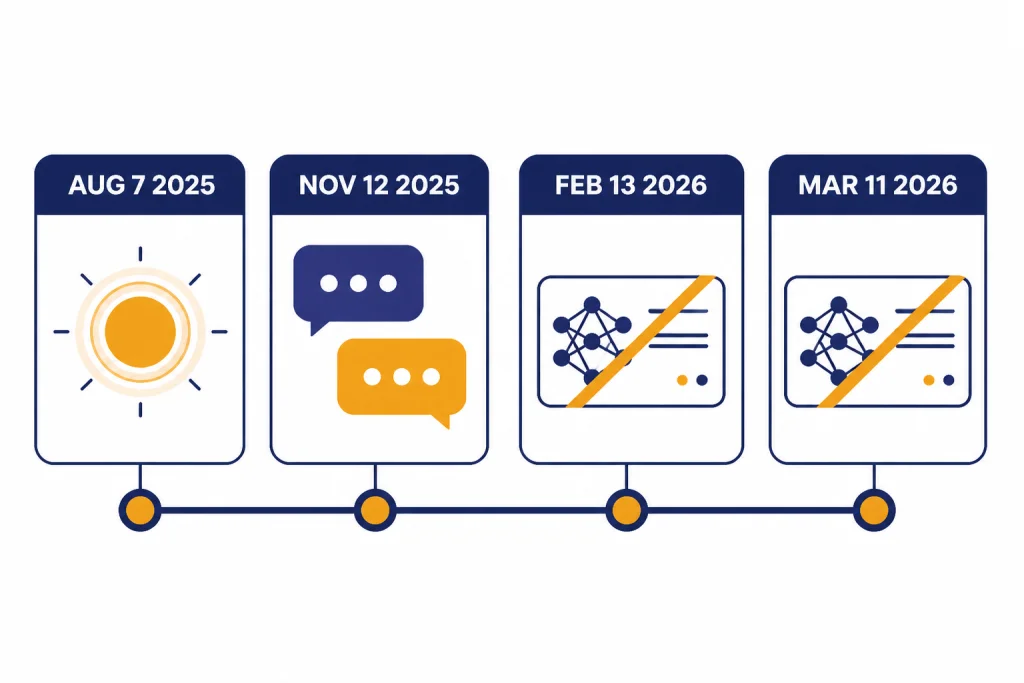

GPT-5.1 was not a full reset of GPT-5. It was a practical revision: faster on simple work, more adaptive about reasoning time, better at coding workflows, more conversational in ChatGPT, and cheaper to operate in long-running sessions when prompt caching applies. OpenAI introduced GPT-5 on August 7, 2025, then updated ChatGPT to GPT-5.1 Instant and GPT-5.1 Thinking on November 12, 2025, followed by GPT-5.1 in the API on November 13, 2025.[1][3][4] As of April 19, 2026, GPT-5.1 is no longer selectable in ChatGPT, but OpenAI says it remains available through the API.[7]

Quick verdict

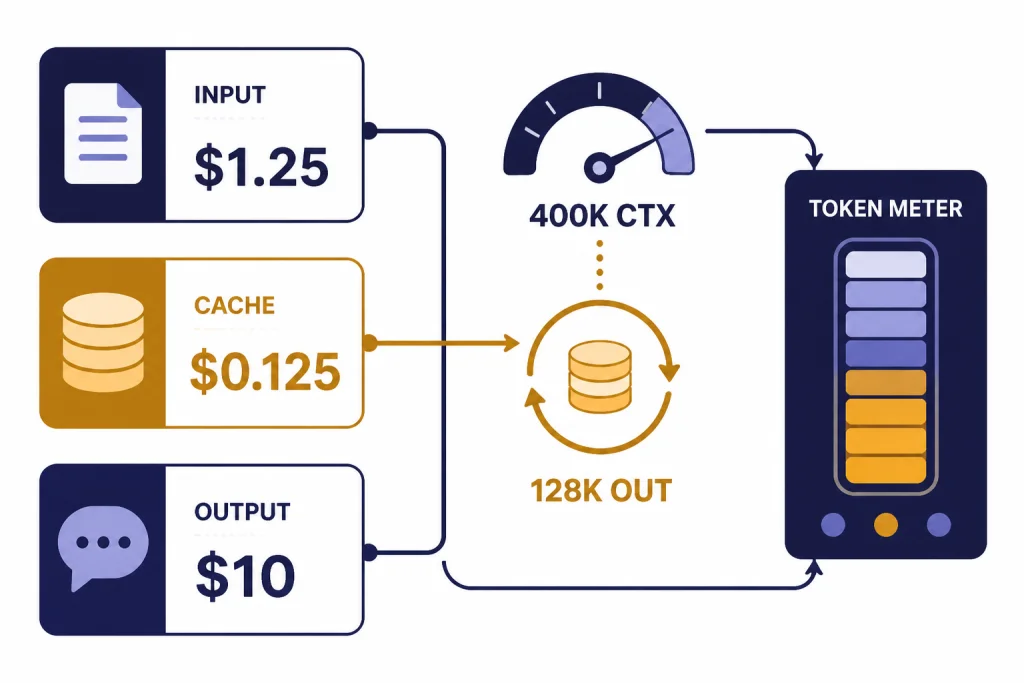

The short version: GPT-5.1 was a better everyday production model than GPT-5 for many API teams, but it was not a simple across-the-board benchmark sweep. It kept the same documented API list pricing and the same documented 400,000-token context window and 128,000-token max output limit as GPT-5.[5][6] The real changes were behavior and controls: adaptive reasoning, a no-reasoning mode, longer prompt-cache retention, stronger coding ergonomics, and a more conversational ChatGPT personality.[3][4]

If you use ChatGPT, the comparison is now mostly historical. OpenAI retired GPT-5 Instant and GPT-5 Thinking from ChatGPT on February 13, 2026, and retired GPT-5.1 Instant, GPT-5.1 Thinking, and GPT-5.1 Pro from ChatGPT on March 11, 2026.[7] If you use the API, the comparison still matters: OpenAI says GPT-5.1 remains available through the API, and at the GPT-5.1 launch it said it had no current plans to deprecate GPT-5 in the API.[4][7] For a broader map of the model family, see all GPT models compared side by side.

- Choose GPT-5.1 in the API when latency, tool calling, code editing, or conversational polish matter.

- Keep GPT-5 in the API when your evals are already validated and the risk of behavior drift matters more than new features.

- Do not look for GPT-5.1 in the ChatGPT model picker; it is no longer offered there. Use the current Instant, Thinking, and Pro choices exposed in ChatGPT instead.[7]

Availability changed more than the name suggests

The most important current detail is availability. GPT-5 and GPT-5.1 are easy to compare on paper, but they no longer occupy the same place in ChatGPT. GPT-5 launched as the new default ChatGPT system in August 2025, while GPT-5.1 later replaced GPT-5 Instant and GPT-5 Thinking with GPT-5.1 Instant and GPT-5.1 Thinking in November 2025.[1][3] That made GPT-5.1 feel like a normal in-product upgrade at launch.

By April 19, 2026, the ChatGPT story is different. OpenAI says GPT-5 Instant and GPT-5 Thinking were retired from ChatGPT on February 13, 2026, while GPT-5.1 Instant, GPT-5.1 Thinking, and GPT-5.1 Pro were retired from ChatGPT on March 11, 2026.[7] OpenAI also says these models continue to be available through the API.[7] If your main question is plan access rather than model behavior, start with our ChatGPT plan comparison.

| Surface | GPT-5 status | GPT-5.1 status | Practical effect |
|---|---|---|---|
| ChatGPT | GPT-5 Instant and GPT-5 Thinking retired on February 13, 2026.[7] | GPT-5.1 Instant, Thinking, and Pro retired on March 11, 2026.[7] | Use the current ChatGPT model picker, not the old labels. |
| OpenAI API | At the GPT-5.1 launch, OpenAI said it had no current plans to deprecate GPT-5 in the API.[4] | GPT-5.1 and gpt-5.1-chat-latest were released to developers on all paid API tiers.[4] | API teams can still compare behavior in their own evals. |
| Legacy comparison | Useful as the original GPT-5 baseline. | Useful as the first major GPT-5 refinement. | The comparison explains what changed between the first and second GPT-5-era releases. |

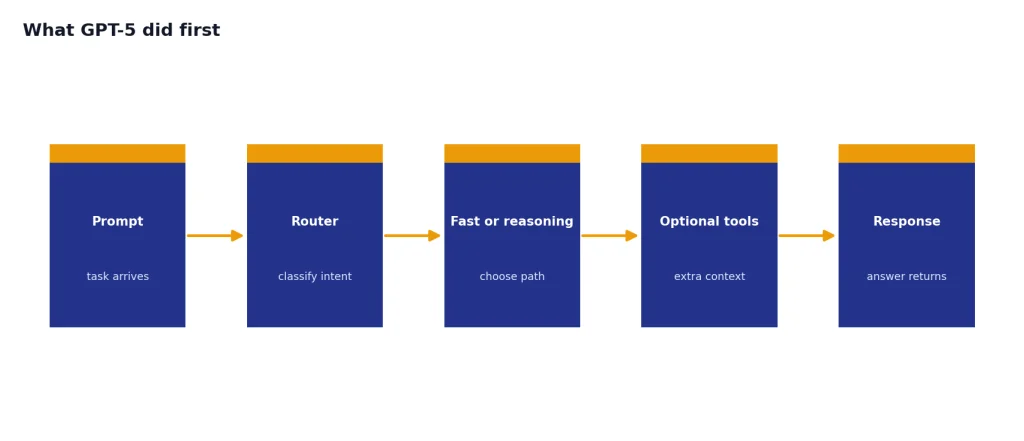

What GPT-5 did first

GPT-5 mattered because it simplified OpenAI’s product story. OpenAI described GPT-5 as a unified system with a smart, efficient model, a deeper reasoning model called GPT-5 thinking, and a real-time router that decides which path to use based on the task, tools, complexity, and user intent.[1] That architecture bridged fast chat models and slower reasoning models. If you want the model-family background, our GPT vs the o-Series explainer covers the reasoning split in more detail.

GPT-5 also reset expectations for coding, writing, health, visual perception, instruction following, and hallucination reduction compared with prior OpenAI models.[1] In the API, OpenAI released three GPT-5 sizes: gpt-5, gpt-5-mini, and gpt-5-nano.[2] The non-reasoning ChatGPT variant was also exposed as gpt-5-chat-latest.[2] For context on the earlier upgrade path, see GPT-4 vs GPT-5 upgrade path and our GPT-5 vs GPT-4o benchmark context.

The main thing GPT-5 established was the auto-switching model experience. Users no longer had to decide between a fast general model and a reasoning model for every prompt. GPT-5 made that routing a core product behavior, then GPT-5.1 tuned how that behavior felt and how much compute it used.[1][4]

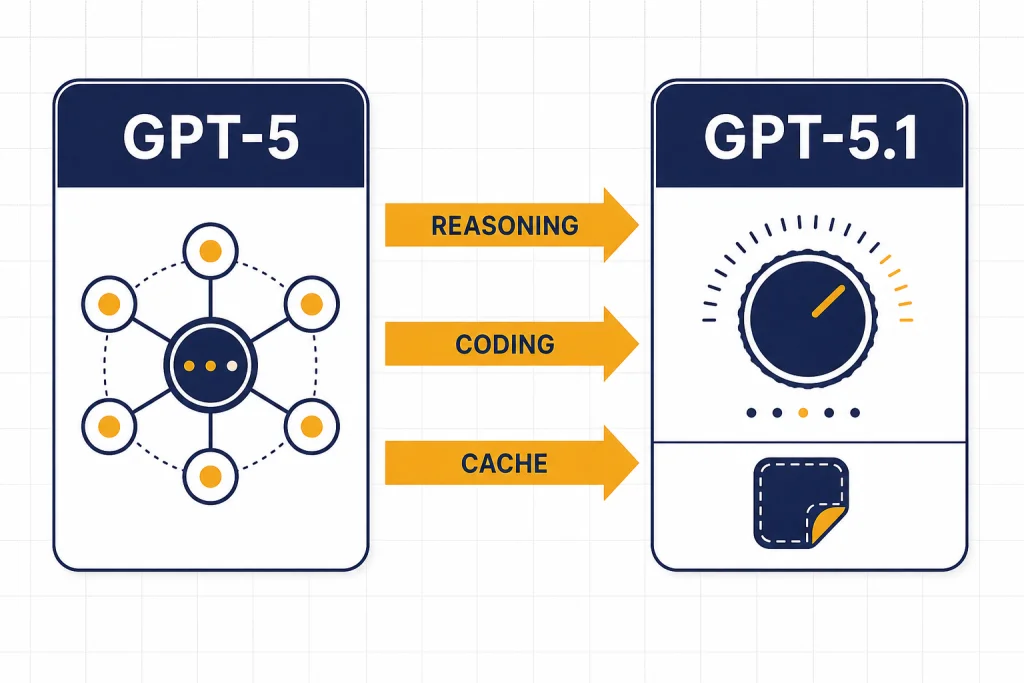

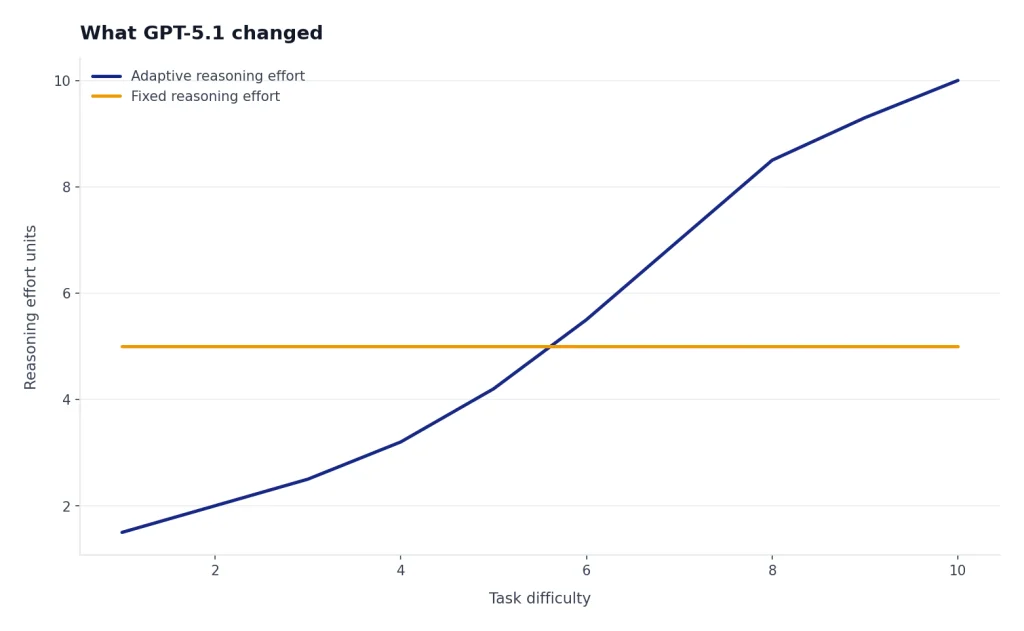

What GPT-5.1 changed

GPT-5.1 changed the operating profile more than the model label suggests. OpenAI positioned GPT-5.1 as the next GPT-5-series model for agentic and coding tasks, with dynamic reasoning that spends less effort on simple work and more effort on difficult tasks.[4] In practice, that meant GPT-5.1 tried to reduce overthinking on easy requests without giving up deeper work where deeper reasoning helped.

Reasoning controls became more flexible

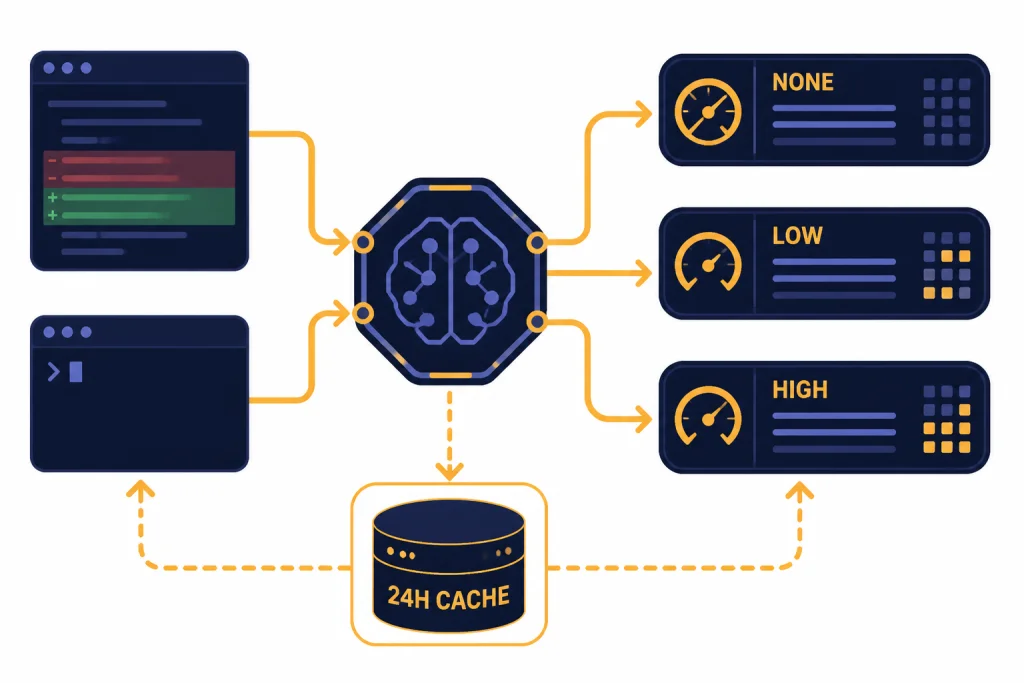

GPT-5 supported minimal, low, medium, and high reasoning effort in the API docs.[6] GPT-5.1 changed that control surface to none, low, medium, and high, with none described as the default on the GPT-5.1 model page.[5] OpenAI also said the new no-reasoning mode was meant for latency-sensitive use cases that do not need deep thinking.[4] That is the most concrete API-level difference between GPT-5 and GPT-5.1.
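
A minimal sketch of how a caller might guard the reasoning-effort setting per model, assuming the effort values described above and a Responses-style request shape. The `ALLOWED_EFFORT` table and the `build_request` and `lowest_effort` helpers are hypothetical names used for illustration; they are not part of any SDK.

```python
# Effort levels per model as described in this article (an assumption here;
# check the current model pages before relying on them).
ALLOWED_EFFORT = {
    "gpt-5": ("minimal", "low", "medium", "high"),
    "gpt-5.1": ("none", "low", "medium", "high"),
}


def build_request(model: str, prompt: str, effort: str) -> dict:
    """Return keyword arguments for a Responses-style API call.

    Raises ValueError when the effort level is not listed for the model,
    so a stale "minimal" setting fails loudly after a switch to GPT-5.1.
    """
    allowed = ALLOWED_EFFORT.get(model)
    if allowed is None:
        raise ValueError(f"unknown model: {model}")
    if effort not in allowed:
        raise ValueError(
            f"{model} does not list effort {effort!r}; allowed: {allowed}"
        )
    return {"model": model, "input": prompt, "reasoning": {"effort": effort}}


def lowest_effort(model: str) -> str:
    """Return the lowest listed effort level for a model."""
    return ALLOWED_EFFORT[model][0]
```

With the OpenAI Python SDK, the returned dictionary would typically be unpacked into a call such as `client.responses.create(**kwargs)`; verify the parameter names against the current API reference before relying on this shape.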

ChatGPT became more conversational

In ChatGPT, OpenAI said GPT-5.1 Instant used light adaptive reasoning for harder questions while staying fast, and GPT-5.1 Thinking adapted its thinking time more precisely for complex tasks with clearer, less jargony responses.[3] OpenAI also updated tone controls with presets such as Default, Friendly, Efficient, Professional, Candid, and Quirky, and said GPT-5.1 models followed custom instructions and style preferences more reliably.[3] This was a product-experience change, not just a raw intelligence change.

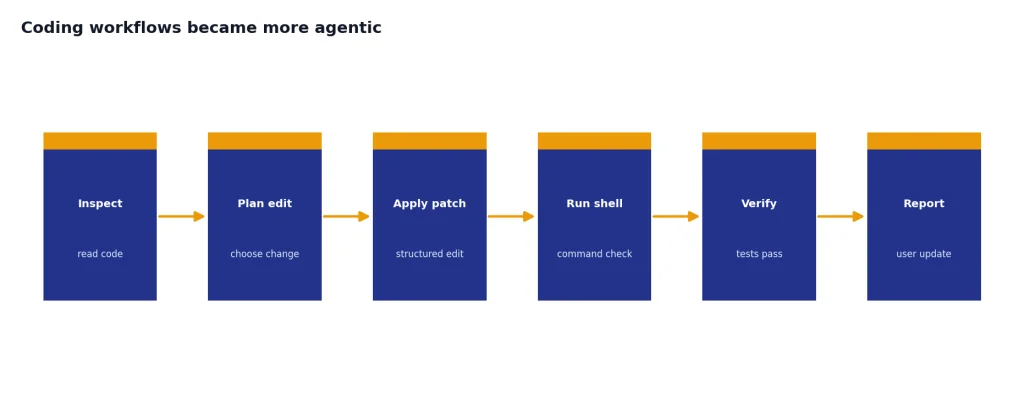

Coding workflows became more agentic

OpenAI said GPT-5.1 built on GPT-5’s coding strengths with a more steerable coding personality, less overthinking, improved code quality, better user-facing updates during tool calls, and more functional frontend designs, especially at low reasoning effort.[4] It also introduced two developer tools: apply_patch for structured code edits and shell for controlled command-line interaction.[4] That matters most for coding agents, IDE integrations, and systems that need the model to inspect, modify, and verify code in a loop.

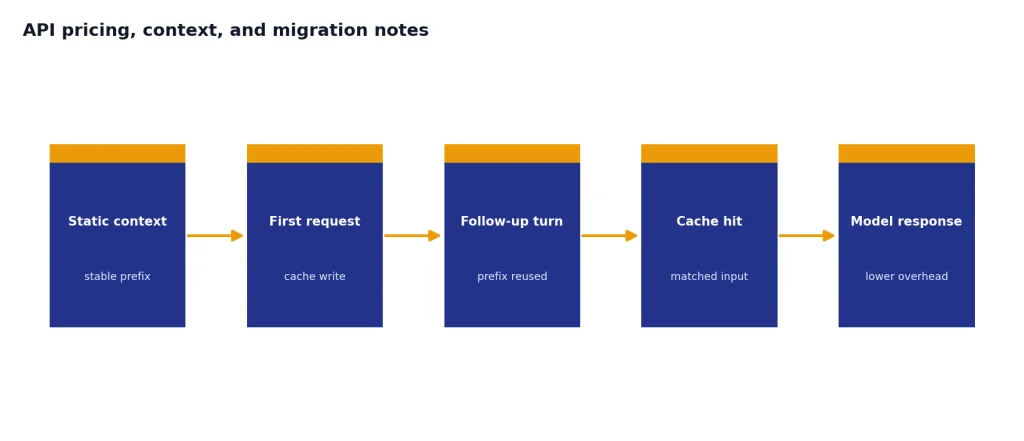

Prompt caching became more useful

GPT-5.1 added extended prompt caching with up to 24-hour cache retention.[4] OpenAI said cached input token pricing remained 90% cheaper than uncached input tokens, with no additional charge for cache writes or storage.[4] This did not change the headline model price, but it could change real cost for long-running chats, coding sessions, and retrieval workflows that reuse large static context.

GPT-5 vs GPT-5.1 comparison table

The clearest way to read GPT-5 vs GPT-5.1 is to separate architecture, availability, controls, and price. GPT-5 set the unified-router baseline. GPT-5.1 refined that baseline with more adaptive compute and better production ergonomics.

| Category | GPT-5 | GPT-5.1 | What actually changed |
|---|---|---|---|
| Original launch role | Unified ChatGPT system with fast and deeper-reasoning paths.[1] | Update to GPT-5 with GPT-5.1 Instant and GPT-5.1 Thinking in ChatGPT.[3] | GPT-5.1 refined the same product direction rather than replacing it with a new category. |
| API model names | gpt-5, gpt-5-mini, gpt-5-nano, and gpt-5-chat-latest.[2] | gpt-5.1 and gpt-5.1-chat-latest, plus GPT-5.1 Codex variants.[4] | GPT-5.1 added a newer default target for many API workloads. |
| Reasoning effort | minimal, low, medium, and high.[6] | none, low, medium, and high.[5] | The new no-reasoning mode made low-latency GPT-5.1 use more practical. |
| Documented context and output | 400,000-token context window and 128,000 max output tokens.[6] | 400,000-token context window and 128,000 max output tokens.[5] | No documented API context-window increase between the two. |
| Documented text API price | $1.25 per 1M input tokens, $0.125 per 1M cached input tokens, and $10.00 per 1M output tokens.[6] | $1.25 per 1M input tokens, $0.125 per 1M cached input tokens, and $10.00 per 1M output tokens.[5] | The list price stayed the same; cost differences come from efficiency and caching. |
| Knowledge cutoff in model docs | September 30, 2024.[6] | September 30, 2024.[5] | GPT-5.1 was not documented as a newer-knowledge model on its API model page. |
| ChatGPT status as of April 19, 2026 | GPT-5 Instant and GPT-5 Thinking retired from ChatGPT.[7] | GPT-5.1 Instant, GPT-5.1 Thinking, and GPT-5.1 Pro retired from ChatGPT.[7] | The live comparison is mainly an API and historical comparison. |

Performance and benchmark changes

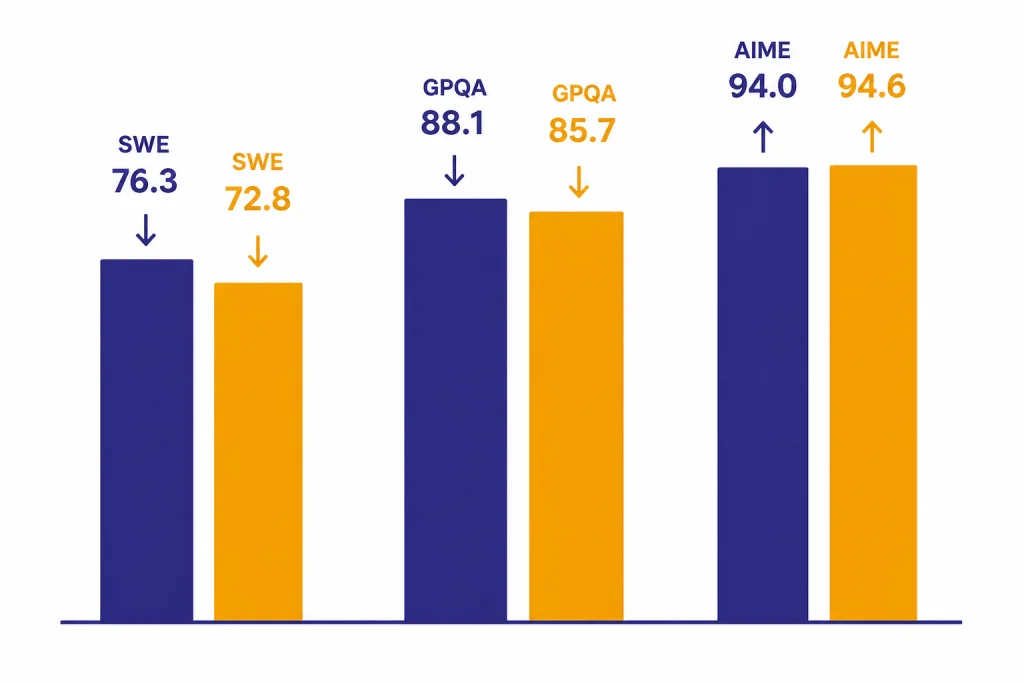

OpenAI’s GPT-5.1 developer appendix shows a mixed but mostly positive benchmark picture at high reasoning effort. GPT-5.1 improved on several coding, science, multimodal, and agentic-service benchmarks, but GPT-5 remained ahead on some rows. The takeaway is not that GPT-5.1 won everything, but that it improved the balance of intelligence, speed, and usability for many real workflows.[4]

| OpenAI evaluation | GPT-5.1 high | GPT-5 high | Direction | Source |
|---|---|---|---|---|
| SWE-bench Verified, all 500 problems | 76.3% | 72.8% | GPT-5.1 higher | [4] |
| GPQA Diamond, no tools | 88.1% | 85.7% | GPT-5.1 higher | [4] |
| AIME 2025, no tools | 94.0% | 94.6% | GPT-5 higher | [4] |
| FrontierMath, with Python tool | 26.7% | 26.3% | GPT-5.1 higher | [4] |
| MMMU | 85.4% | 84.2% | GPT-5.1 higher | [4] |
| Tau 2-bench Airline | 67.0% | 62.6% | GPT-5.1 higher | [4] |
| Tau 2-bench Telecom | 95.6% | 96.7% | GPT-5 higher | [4] |
| Tau 2-bench Retail | 77.9% | 81.1% | GPT-5 higher | [4] |
| BrowseComp Long Context 128k | 90.0% | 90.0% | No change | [4] |
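
To make the "mixed but mostly positive" reading concrete, the rows of the table above can be tallied with a few lines of Python. This is only an illustrative sketch: the `ROWS` list copies the reported percentages from the table, and `tally` and `deltas` are hypothetical helper names.

```python
# (benchmark, GPT-5.1 high, GPT-5 high) in percent, copied from the table above.
ROWS = [
    ("SWE-bench Verified", 76.3, 72.8),
    ("GPQA Diamond", 88.1, 85.7),
    ("AIME 2025", 94.0, 94.6),
    ("FrontierMath", 26.7, 26.3),
    ("MMMU", 85.4, 84.2),
    ("Tau 2-bench Airline", 67.0, 62.6),
    ("Tau 2-bench Telecom", 95.6, 96.7),
    ("Tau 2-bench Retail", 77.9, 81.1),
    ("BrowseComp Long Context 128k", 90.0, 90.0),
]


def tally(rows):
    """Count how many rows each model leads, plus ties."""
    counts = {"gpt-5.1": 0, "gpt-5": 0, "tie": 0}
    for _, new, old in rows:
        if new > old:
            counts["gpt-5.1"] += 1
        elif new < old:
            counts["gpt-5"] += 1
        else:
            counts["tie"] += 1
    return counts


def deltas(rows):
    """Return GPT-5.1 minus GPT-5 in percentage points, rounded to 0.1."""
    return {name: round(new - old, 1) for name, new, old in rows}
```

On these nine rows, GPT-5.1 leads five, GPT-5 leads three, and one is tied. The largest GPT-5.1 gain is 4.4 points on Tau 2-bench Airline, and the largest GPT-5 lead is 3.2 points on Tau 2-bench Retail.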

The safety story is also nuanced. OpenAI’s GPT-5.1 system-card addendum says GPT-5.1 Thinking and GPT-5.1 Instant showed comparable safety performance to their GPT-5 predecessors on challenging evaluations, while noting some light regressions for GPT-5.1 Thinking in categories involving harassment, hateful language, and disallowed sexual content.[8] That is another reason to run domain-specific evals before switching production systems. If speed is your main concern, compare this article with our fastest GPT model tests.

API pricing, context, and migration notes

For API teams, GPT-5.1 was not a pricing shock. The GPT-5.1 model page lists $1.25 per 1M input tokens, $0.125 per 1M cached input tokens, and $10.00 per 1M output tokens.[5] The GPT-5 model page lists the same amounts.[6] Both pages also list a 400,000-token context window, 128,000 max output tokens, text and image input, text output, and no audio or video support.[5][6] For a broader cost table, use our OpenAI API pricing guide, and for context details see the context window guide.

The practical cost difference is therefore not the sticker price. It is how often GPT-5.1 can avoid unnecessary reasoning, how much follow-up traffic can hit cached context, and whether better tool behavior reduces retries. OpenAI’s 24-hour prompt-cache retention for GPT-5.1 is the biggest concrete cost lever for long sessions that reuse large context blocks.[4]
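
A rough sketch of how cache hits change per-request cost at the list prices quoted in this article. The `request_cost` helper and the example token counts are hypothetical, and actual billing may differ from this simplified arithmetic.

```python
from decimal import Decimal

# List prices in USD per 1M tokens, as quoted in this article.
INPUT_PER_M = Decimal("1.25")
CACHED_INPUT_PER_M = Decimal("0.125")
OUTPUT_PER_M = Decimal("10.00")
MILLION = Decimal(1_000_000)


def request_cost(input_tokens: int, cached_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the USD cost of one request.

    cached_tokens is the portion of input_tokens served from the prompt cache.
    """
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_tokens
    total = (
        uncached * INPUT_PER_M
        + cached_tokens * CACHED_INPUT_PER_M
        + output_tokens * OUTPUT_PER_M
    )
    return total / MILLION


# Hypothetical request: 100,000 input tokens and 2,000 output tokens.
cold = request_cost(100_000, 0, 2_000)       # no cache hit
warm = request_cost(100_000, 90_000, 2_000)  # 90,000 input tokens cached
```

In this example the cold request comes to $0.145 and the warm request to $0.04375, so the saving comes entirely from the cached share of the input rather than from any change in list price.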

Migration checklist

- Run your own evals first. GPT-5.1 improves many OpenAI-reported benchmarks, but not all of them.[4]

- Replace old reasoning assumptions. GPT-5's lowest effort setting was minimal; GPT-5.1 added a none setting for latency-sensitive calls.[5][6]

- Test low-latency tool calls separately. No-reasoning mode can be attractive for routers, search helpers, and customer-support tools that do not need deep analysis.[4]

- Use extended caching where the prompt is stable. Product manuals, codebase summaries, policy documents, and large retrieval context can benefit when reused across turns.[4]

- Sandbox coding tools. The apply_patch and shell tools are useful for agentic coding, but they should be gated by permissions, review, and environment isolation.[4]

- Keep a GPT-5 fallback during rollout. At the GPT-5.1 launch, OpenAI said it had no current plans to deprecate GPT-5 in the API, so teams had room to transition deliberately.[4]
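
As one illustration of the permission gating mentioned in the checklist, the sketch below screens a model-proposed command before anything is executed. The allowlist, the metacharacter set, and the `is_command_allowed` helper are hypothetical; a real deployment would define its own policy and still run approved commands inside an isolated environment.

```python
import shlex

# Hypothetical policy: only these executables may be run by the agent.
ALLOWED_COMMANDS = frozenset({"ls", "cat", "git", "pytest"})

# Characters that enable chaining, redirection, or substitution in a shell.
SHELL_METACHARS = frozenset(";&|<>$`\n")


def is_command_allowed(command: str) -> bool:
    """Return True only for a single allowlisted command with plain arguments."""
    # Reject anything that could chain or substitute commands.
    if any(ch in SHELL_METACHARS for ch in command):
        return False
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Unbalanced quotes or similar parse errors are treated as unsafe.
        return False
    return bool(tokens) and tokens[0] in ALLOWED_COMMANDS
```

A check like this is deliberately conservative and is not a sandbox on its own. It is best combined with running the parsed argument list directly rather than through a shell, plus human review for any command that modifies state.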

Use-case recommendations

For new API projects, GPT-5.1 is the better default starting point unless your internal tests say otherwise. It gives you the same listed price and documented context size as GPT-5, plus more flexible reasoning controls, extended prompt caching, and coding-specific tools.[4][5][6] That makes it especially attractive for short tool calls, customer-facing assistants, code review flows, and agents that need to move quickly without spending reasoning tokens on every step.

For existing GPT-5 deployments, do not migrate only because the name is newer. Move when you can measure gains in latency, completion quality, tool success, token use, or user satisfaction. Keep GPT-5 for audited workflows that are already passing validation, especially where a style or safety change would require a new review cycle. GPT-5.1 is a better target for many cases, but not a substitute for release engineering.

For ChatGPT users, the right lesson is simpler. GPT-5.1 explained the direction of later ChatGPT models: faster everyday answers, more controllable reasoning, and better conversational tone. As of April 19, 2026, it is not a model you should expect to select directly in ChatGPT, because OpenAI says the GPT-5.1 ChatGPT models were retired on March 11, 2026.[7]

Frequently asked questions

Is GPT-5.1 a completely new generation?

No. OpenAI described GPT-5.1 as the next model in the GPT-5 series, not as a separate GPT-6-style generation.[4] The practical changes were adaptive reasoning, no-reasoning mode, better coding workflows, longer prompt-cache retention, and improved ChatGPT tone behavior.

Is GPT-5.1 still available in ChatGPT?

No, not as a selectable ChatGPT model as of April 19, 2026. OpenAI says GPT-5.1 Instant, GPT-5.1 Thinking, and GPT-5.1 Pro were retired from ChatGPT on March 11, 2026.[7] Existing conversations and GPTs were moved to corresponding newer models.[7]

Did GPT-5.1 cost more than GPT-5 in the API?

No. The GPT-5 and GPT-5.1 model pages list the same text-token rates: $1.25 per 1M input tokens, $0.125 per 1M cached input tokens, and $10.00 per 1M output tokens.[5][6] Your real bill can still change if GPT-5.1 uses fewer reasoning tokens, gets more cache hits, or reduces retries.

Did the context window increase from GPT-5 to GPT-5.1?

No documented API increase appears on the model pages. Both GPT-5 and GPT-5.1 are listed with a 400,000-token context window and 128,000 max output tokens.[5][6] The bigger difference is GPT-5.1’s 24-hour prompt-cache retention option for reusable context.[4]

Is GPT-5.1 better for coding?

Usually, yes, based on OpenAI’s reported coding features and SWE-bench results. GPT-5.1 scored 76.3% on SWE-bench Verified versus 72.8% for GPT-5 at high reasoning effort.[4] It also added the apply_patch and shell tools for more reliable coding-agent workflows.[4]

Did OpenAI publish parameter counts for GPT-5 or GPT-5.1?

OpenAI has not published an official parameter-count figure for either model in the primary sources reviewed for this article. Treat any unsourced parameter-count claims as speculation. For practical model choice, benchmark your own tasks instead of relying on guessed model size.