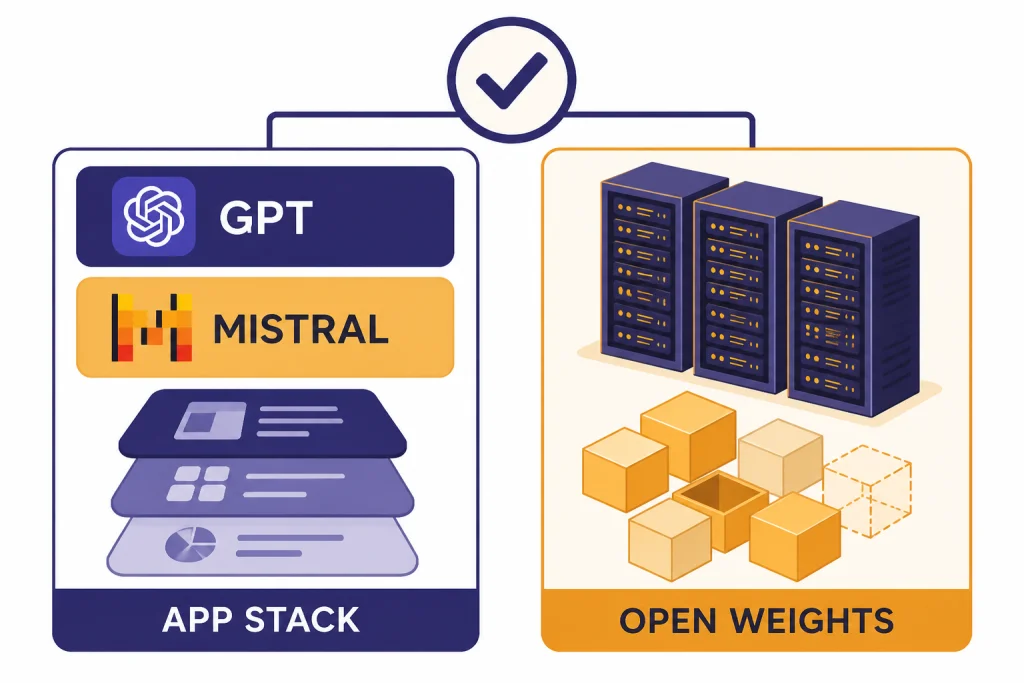

GPT still has the stronger default case for people who want the best integrated assistant, deeper agent workflows, and frontier reasoning inside ChatGPT, Codex, and the OpenAI API. Mistral is the better fit when you need open weights, European deployment posture, lower token prices, or more control over customization. The practical GPT vs. Mistral decision is not “which lab is smarter.” It is whether you want a polished closed platform or a more open model stack that you can run, tune, and govern closer to your own infrastructure. As of this comparison, GPT-5.4 is OpenAI’s relevant frontier reference point, while Mistral Large 3 is Mistral’s strongest open-weight challenger.[1][4]

Quick verdict

Choose GPT if you want the strongest all-purpose assistant experience with mature tooling around chat, coding, documents, image input, search, agents, and API orchestration. GPT-5.4 launched across ChatGPT, the API, and Codex on March 5, 2026, with OpenAI positioning it for professional work, coding, computer use, tool use, long context, and multimodal reasoning.[1] That makes GPT the safer default for users who do not want to assemble their own stack.

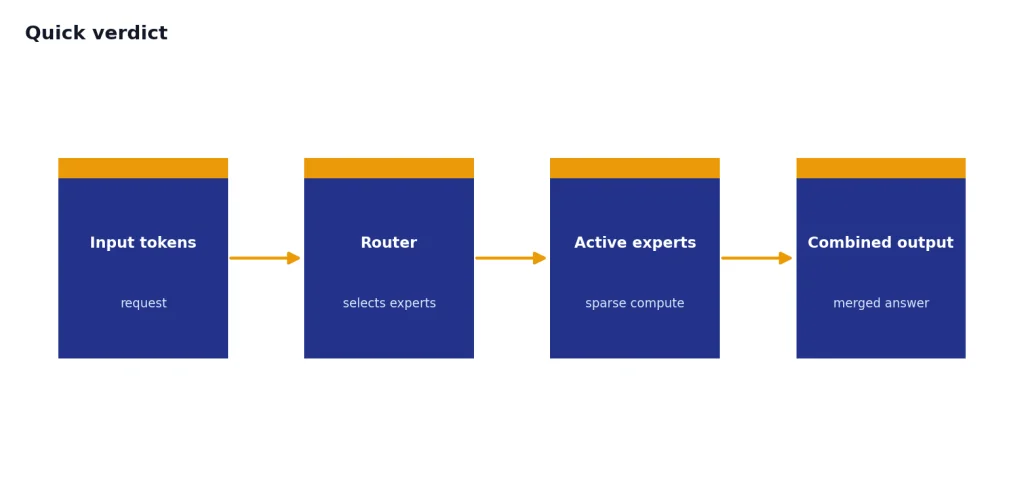

Choose Mistral if openness, cost, and deployment control matter more than having the most integrated consumer assistant. Mistral Large 3 is open weight, multimodal, and built as a sparse mixture-of-experts model with 41B active parameters and 675B total parameters.[5] Mistral also emphasizes European infrastructure, open releases, enterprise customization, and multilingual use cases. If you are also comparing other open model families, read our GPT vs Llama and GPT vs Qwen comparisons.

The short answer: GPT wins for most individuals, knowledge workers, and teams that want dependable end-to-end product quality. Mistral wins for developers, regulated organizations, EU-focused buyers, and teams that want open-weight models they can inspect, host, and adapt.

The model lineups compared

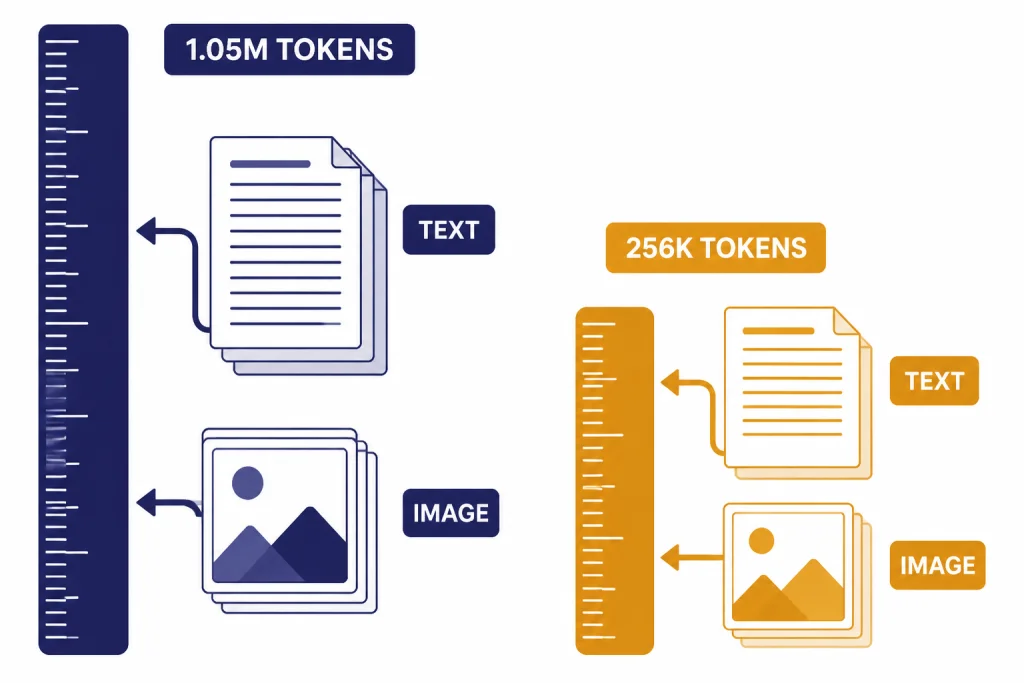

“GPT” is not one model. It is a family of OpenAI models delivered through ChatGPT, Codex, and the API. For this comparison, GPT-5.4 is the main frontier reference because it was the newest broadly relevant GPT release available before this article’s publication date. OpenAI’s model documentation lists GPT-5.4 with a 1,050,000-token context window, 128,000 maximum output tokens, text and image input, and text output.[2]

“Mistral” is also a portfolio. The practical comparison centers on Mistral Large 3 for frontier open-weight work, Mistral Small 4 for efficient general use, Mistral Medium 3.1 for managed frontier-class multimodal work, and specialist models such as Codestral, Devstral, Voxtral, and OCR models. Mistral’s model overview listed Mistral Large 3, Mistral Small 4, Mistral Medium 3.1, Ministral 3 models, Magistral models, Devstral 2, Codestral, Voxtral, and OCR models in its 2026 catalog.[6]

The key difference is packaging. OpenAI sells a vertically integrated experience: ChatGPT for users, Codex for coding, and a developer platform for APIs and agents. Mistral sells a more modular stack: Le Chat for users, Studio and API access for builders, and open weights for self-hosting or third-party deployment. If you need a broader map of OpenAI’s own model lineup, see all GPT models compared side by side.

| Category | GPT reference | Mistral reference | Practical takeaway |
|---|---|---|---|
| Frontier model | GPT-5.4, released March 5, 2026.[1] | Mistral Large 3, released as part of Mistral 3.[4] | GPT is stronger as an integrated product. Mistral is stronger for open deployment. |
| Open weights | No public GPT-5.4 weights.[2] | Mistral Large 3 is open weight under Apache 2.0.[4] | Mistral is the clear choice if self-hosting is required. |
| API context | 1,050,000-token context window for GPT-5.4.[2] | 256k context on the Mistral Large 3 model card.[5] | GPT has the larger published API context window. |
| API price | $2.50 input and $15.00 output per 1M tokens for GPT-5.4.[2] | $0.50 input and $1.50 output per 1M tokens for Mistral Large 3.[5] | Mistral is much cheaper per token for this flagship comparison. |
| Best user app | ChatGPT. | Le Chat. | ChatGPT is broader. Le Chat is improving quickly and has a European-first identity. |

Quality, reasoning, and coding

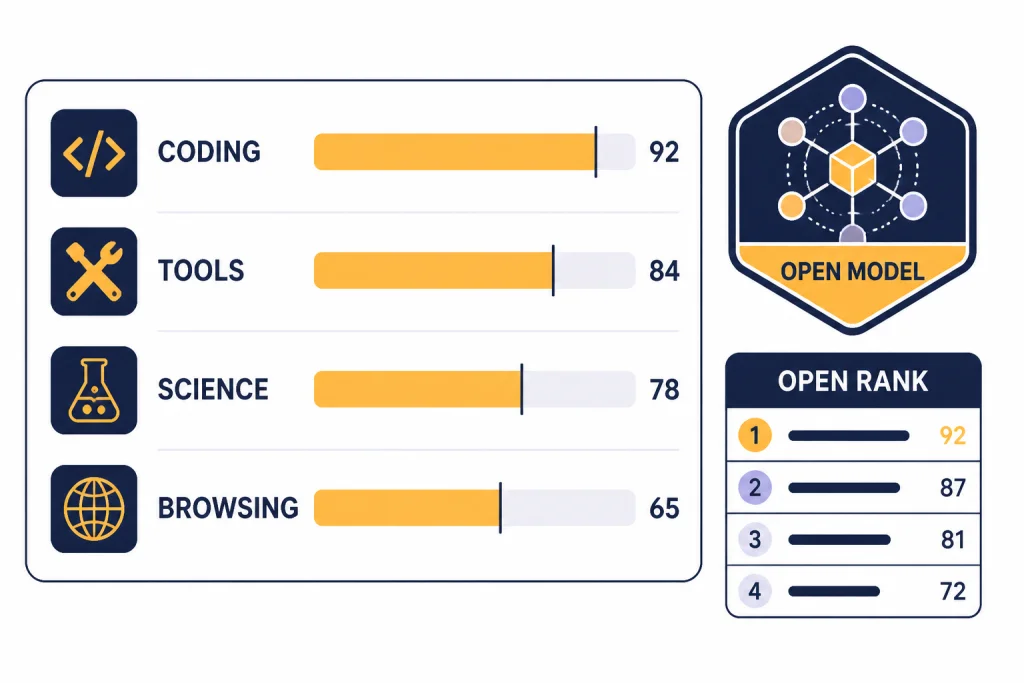

GPT has the edge for complex reasoning and coding because OpenAI has built the model, tooling, and application layer around long-running professional tasks. In OpenAI’s own GPT-5.4 evaluations, GPT-5.4 scored 57.7% on SWE-Bench Pro Public, 75.1% on Terminal-Bench 2.0, 82.7% on BrowseComp, and 92.8% on GPQA Diamond.[1] Vendor benchmarks are not neutral field tests, but they do show where OpenAI optimized the model: software engineering, tool use, professional analysis, visual understanding, and hard reasoning.

Mistral Large 3 is best understood differently. Mistral does not present it as a closed frontier assistant that beats every proprietary model. It presents it as a state-of-the-art open model with strong multilingual, multimodal, and enterprise customization value. Mistral says Large 3 debuted at number 2 in the OSS non-reasoning category and number 6 among OSS models overall on the LMArena leaderboard.[4] That is the right framing: Mistral is not merely a chatbot alternative. It is an open model option for organizations that care about control.

In hands-on workflow terms, GPT usually feels more reliable when the task has many moving parts: debug this failing test suite, inspect this spreadsheet, summarize these documents, call tools, revise the answer, and produce a final artifact. Mistral often shines when the task is narrower, multilingual, cost-sensitive, or tied to a controlled deployment environment. If coding speed matters more than model ideology, compare this article with our fastest GPT model guide and our GPT vs the o-Series breakdown.

The most honest verdict is that GPT is still easier to trust as a general reasoning assistant. Mistral is easier to justify when the organization has a reason not to depend fully on a closed U.S. platform.

Context windows and modalities

Context is one of GPT’s clearest advantages in this comparison. OpenAI’s GPT-5.4 model page lists a 1,050,000-token context window and 128,000 maximum output tokens.[2] That matters for long contracts, source repositories, audit trails, product documentation, and multi-file analysis. It does not guarantee perfect recall, but it gives developers and analysts more room before they need chunking, retrieval, or summarization layers.

Mistral Large 3’s model card lists a 256k context window.[5] Mistral’s separate known-limitations page lists “Mistral Large” at 131,072 tokens, which appears to refer to the broader or older Mistral Large category rather than the Large 3 model card.[8] Because those two official Mistral pages use different labels, use the Mistral Large 3 model card when you are choosing that specific model, and validate the effective limit in your deployment before production.
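One way to make that validation concrete is a pre-flight check that compares a measured prompt size against each published limit. The sketch below is illustrative only: the dictionary keys are hypothetical labels rather than real API model IDs, "256k" is read as 256,000 tokens, and it assumes that reserved output tokens count against the same window. Token counts should come from the provider's own tokenizer, which this sketch does not implement.

```python
# Published context limits quoted in this article (tokens).
# Keys are illustrative labels, not real API model identifiers.
CONTEXT_WINDOWS = {
    "gpt-5.4": 1_050_000,
    "mistral-large-3 (model card)": 256_000,      # assumes "256k" means 256,000
    "mistral-large (limitations page)": 131_072,
}


def fits_in_context(prompt_tokens: int, reserved_output_tokens: int, window: int) -> bool:
    """Return True if the prompt plus the reserved output budget fits the window.

    Assumption: output tokens share the same window as the prompt.
    """
    return prompt_tokens + reserved_output_tokens <= window


if __name__ == "__main__":
    # Hypothetical request: a 200,000-token prompt with 8,000 tokens reserved for output.
    for label, window in CONTEXT_WINDOWS.items():
        verdict = "fits" if fits_in_context(200_000, 8_000, window) else "needs chunking or retrieval"
        print(f"{label}: {verdict}")
```

Checking against the smaller published figure as well as the model card figure is a conservative choice: if a workload only fits under the larger number, that is a signal to confirm the effective limit in the actual deployment first.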

Modalities are close, but the product experience differs. GPT-5.4 supports text and image input with text output in the API.[2] Mistral Large 3 is also presented as a multimodal model. Its model card lists chat completions, function calling, agents and conversations, structured outputs, OCR, document Q&A, FIM, embeddings, moderations, transcriptions, and text to speech as platform capabilities available alongside the model.[5]

If your workload depends mainly on long-context ingestion, GPT has the cleaner published advantage. If your workload depends on combining text, vision, OCR, and open deployment choices, Mistral becomes more interesting. For more detail on token windows across OpenAI models, see our context window comparison.

Pricing and cost control

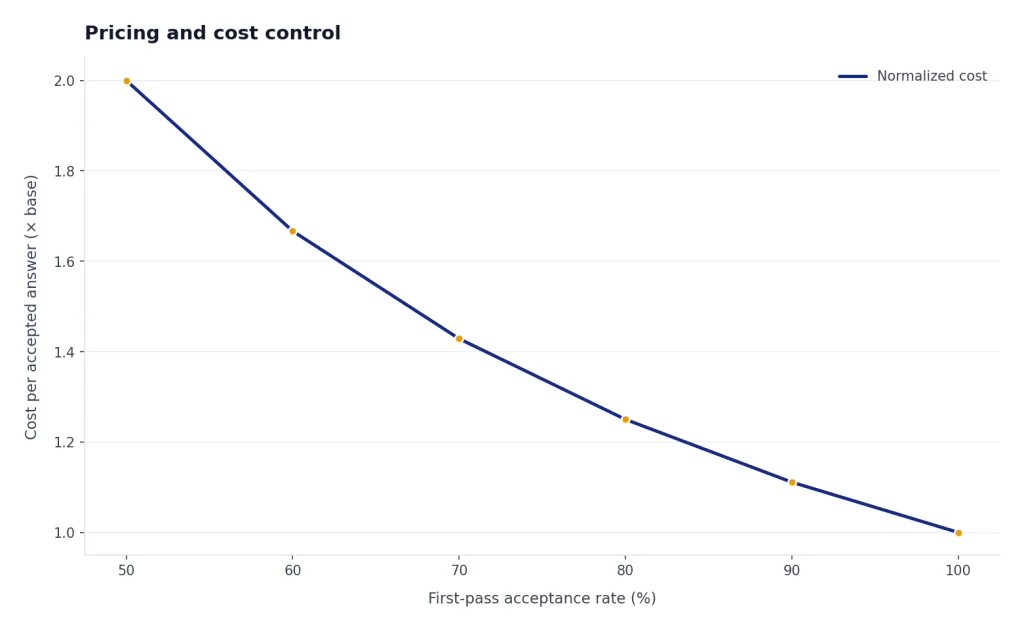

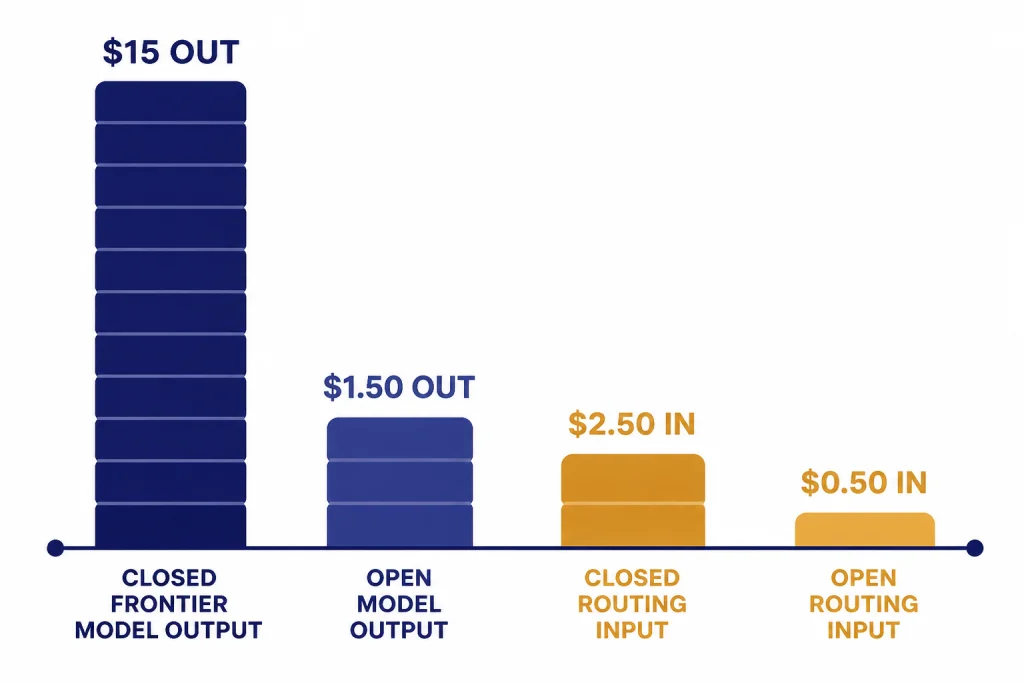

Mistral’s most obvious advantage is API cost. Mistral Large 3 is listed at $0.50 per 1M input tokens and $1.50 per 1M output tokens.[5] GPT-5.4 is listed at $2.50 per 1M input tokens and $15.00 per 1M output tokens.[2] On token price alone, Mistral is far cheaper for high-volume applications, especially when responses are long.
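To see how the list prices translate into a bill, the following minimal sketch applies them to a made-up workload. The prices are the ones quoted in this article; the request volume and token counts are hypothetical, and the dictionary keys are illustrative labels rather than real API model IDs.

```python
# List prices per 1M tokens in USD, as quoted in this article.
PRICES_PER_MILLION = {
    "gpt-5.4": {"input": 2.50, "output": 15.00},
    "mistral-large-3": {"input": 0.50, "output": 1.50},
}


def token_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the list-price cost in USD for the given token volumes."""
    price = PRICES_PER_MILLION[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 1M requests, each with 2,000 input and 500 output tokens.
    requests = 1_000_000
    input_tokens = requests * 2_000
    output_tokens = requests * 500
    for model in PRICES_PER_MILLION:
        print(f"{model}: ${token_cost(model, input_tokens, output_tokens):,.2f}")
```

Under these assumed volumes the list-price totals are $12,500 for GPT-5.4 and $1,750 for Mistral Large 3, roughly a sevenfold gap. The gap widens as the share of output tokens grows, because the output price difference (10x) is larger than the input price difference (5x).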

OpenAI narrows that gap with smaller models. GPT-5.4 mini has a 400k context window and costs $0.75 per 1M input tokens and $4.50 per 1M output tokens.[3] GPT-5.4 nano costs $0.20 per 1M input tokens and $1.25 per 1M output tokens.[3] Those options make the GPT stack more flexible for routing. A developer can send hard tasks to GPT-5.4 and routine work to smaller GPT models. Mistral can do a similar thing with its own small and specialist models, but the choice depends on whether you need OpenAI’s tools or Mistral’s openness.

Do not compare only sticker prices. GPT may save time if it needs fewer retries, handles tool calls better, or produces cleaner first drafts. Mistral may save money if you can self-host, batch work, tune prompts tightly, or accept a little more application engineering. The right economic test is cost per accepted answer, not cost per token. If you are budgeting OpenAI workloads, pair this section with our OpenAI API pricing guide.
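The "cost per accepted answer" idea can be sketched as a simple ratio. This is a simplified model, not a benchmark: it assumes every attempt costs the same, attempts are retried until one is accepted, and each attempt is accepted with the same independent probability, so the expected number of attempts is 1 divided by the acceptance rate. The acceptance rates below are invented for illustration, and the model ignores review time and engineering cost.

```python
def cost_per_accepted_answer(cost_per_attempt: float, acceptance_rate: float) -> float:
    """Expected spend per accepted answer when retrying until one is accepted."""
    if not 0.0 < acceptance_rate <= 1.0:
        raise ValueError("acceptance_rate must be in (0, 1]")
    return cost_per_attempt / acceptance_rate


def break_even_acceptance_rate(cheap_cost: float, pricey_cost: float,
                               pricey_acceptance_rate: float) -> float:
    """Acceptance rate the cheaper model needs to match the pricier model's
    cost per accepted answer."""
    return cheap_cost * pricey_acceptance_rate / pricey_cost


if __name__ == "__main__":
    # Per-attempt list-price cost of a hypothetical 2,000-input / 500-output token request.
    gpt_attempt = (2_000 * 2.50 + 500 * 15.00) / 1_000_000      # GPT-5.4 prices from this article
    mistral_attempt = (2_000 * 0.50 + 500 * 1.50) / 1_000_000   # Mistral Large 3 prices

    # Hypothetical acceptance rates; measure your own on real tasks.
    print(cost_per_accepted_answer(gpt_attempt, 0.90))
    print(cost_per_accepted_answer(mistral_attempt, 0.60))
    print(break_even_acceptance_rate(mistral_attempt, gpt_attempt, 0.90))
```

The break-even function is the more useful half in practice: it tells you how low the cheaper model's acceptance rate could fall before its token-price advantage disappears under this model.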

| Model | Input price | Output price | Published context | Best use |
|---|---|---|---|---|
| GPT-5.4 | $2.50 per 1M tokens[2] | $15.00 per 1M tokens[2] | 1,050,000 tokens[2] | Complex professional work and agent workflows. |
| GPT-5.4 mini | $0.75 per 1M tokens[3] | $4.50 per 1M tokens[3] | 400k tokens[3] | High-volume coding, tool use, and subagent tasks. |
| GPT-5.4 nano | $0.20 per 1M tokens[3] | $1.25 per 1M tokens[3] | Not the flagship context tier. | Low-cost routing and routine automation. |
| Mistral Large 3 | $0.50 per 1M tokens[5] | $1.50 per 1M tokens[5] | 256k tokens[5] | Open-weight enterprise workloads and cheaper frontier-style inference. |

Apps, tools, and daily workflow

For most readers, the app layer matters as much as the base model. ChatGPT is the more mature general assistant. It has a large ecosystem of consumer and business features, and GPT-5.4 was released into ChatGPT, the API, and Codex at the same time.[1] That makes it easier to move from casual question to document analysis, coding, image reasoning, and agent work without switching vendors.

Le Chat is Mistral’s answer. Mistral describes Le Chat as a conversational AI assistant available on the web, iOS, and Android, with chat, research, document analysis, image generation, code interpretation, voice, custom agents, connectors, libraries, memories, and Canvas-style editing.[9][10] It is not just a demo window for Mistral models. It is becoming a real workspace.

Le Chat’s pricing is aggressive. Mistral’s pricing page lists Free, Pro, Team, and Enterprise plans, with Pro at $14.99 and Team at $24.99 per month before taxes.[7] The Free plan includes Le Chat access, Mistral models, up to 500 memories, image generation, projects, and more than 40 enterprise connectors.[7] Pro adds higher limits, extended thinking, deep research reports, up to 15GB of document storage, up to 1,000 projects, Mistral Vibe for all-day coding, support, and state-of-the-art image generation.[7]

Le Chat also has voice mode across web and mobile apps. Mistral says voice mode supports English, French, Spanish, German, Russian, Chinese, Japanese, Italian, Portuguese, Dutch, Arabic, Hindi, and Korean.[11] That reinforces Mistral’s European and multilingual positioning. GPT still has the stronger mainstream assistant ecosystem, but Le Chat is no longer a minor side project.

If you are choosing based on a consumer plan rather than an API, compare this with our ChatGPT Free vs Plus vs Pro guide. If you mainly want alternatives to ChatGPT, start with our AI chatbot alternatives list.

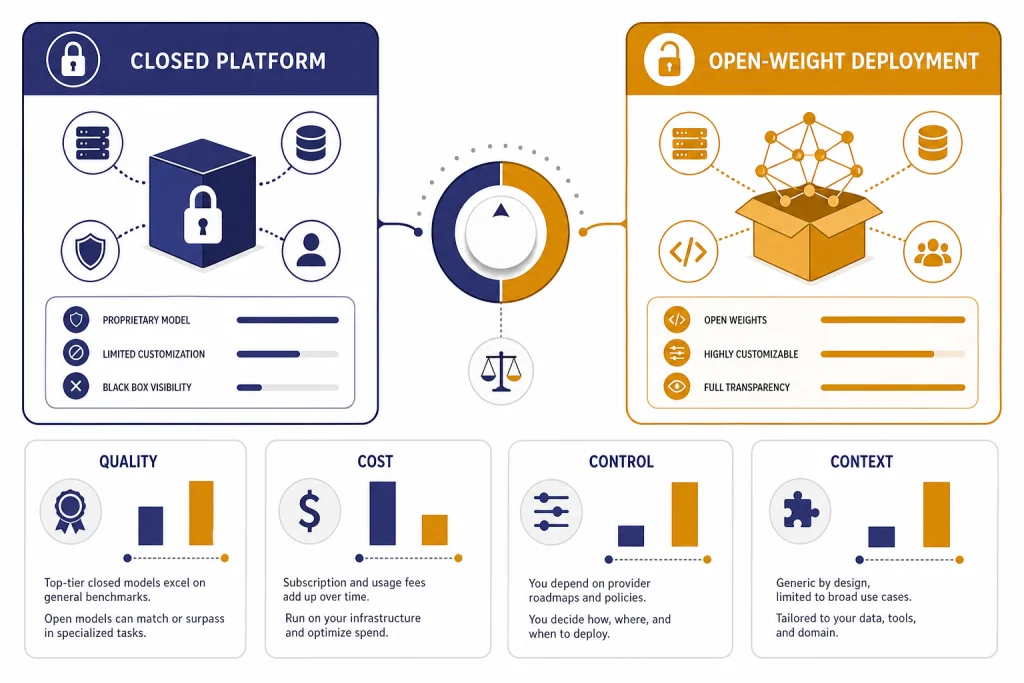

Open weights, privacy, and control

This is where Mistral has its strongest argument. Mistral Large 3 is open weight under Apache 2.0, while GPT-5.4 is a closed OpenAI model.[4][2] For some buyers, that settles the debate. They need to run models on their own infrastructure, inspect deployment behavior, satisfy procurement rules, reduce vendor concentration, or keep sensitive workloads in a chosen region.

Mistral also says the Mistral API is served from EU data centers by default.[8] That does not automatically solve compliance, and it does not replace legal review. It does give European organizations a cleaner starting point than a platform whose default operational center is outside the EU. For finance, public sector, defense, healthcare, and industrial companies, that can be more important than a benchmark lead.

OpenAI’s counterargument is managed capability. Many teams do not want to maintain inference clusters, patch serving infrastructure, monitor open model behavior, or build their own app layer. They want secure managed tools, model upgrades, API documentation, agent features, and predictable product support. In that world, GPT’s closed nature is a tradeoff, not an automatic defect.

The decision turns on organizational maturity. If your team has machine learning operations, security review, evaluation harnesses, and deployment expertise, Mistral gives you more control. If your team wants capability with less engineering overhead, GPT is the safer route.

Which should you use?

Use GPT when accuracy, tool reliability, and product completeness matter most. It is the stronger choice for executives, analysts, writers, product managers, lawyers, consultants, students, and developers who want one assistant that can handle a wide range of tasks with minimal setup. It is also the better default for coding agents if your workflow already depends on Codex or OpenAI’s API ecosystem.

Use Mistral when cost, openness, European deployment, or model sovereignty matters most. It is the stronger choice for teams building internal assistants, domain-specific agents, EU-first deployments, or high-volume applications where per-token economics dominate. It is also attractive if your company wants an open-weight fallback to reduce dependence on closed frontier APIs.

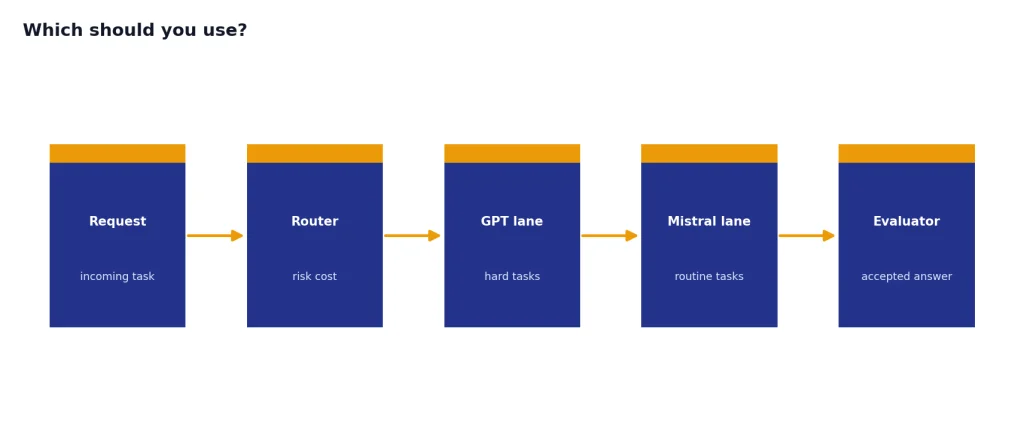

Use both if you can route intelligently. Many teams should not choose a single winner. A practical architecture sends high-risk reasoning, complex code review, and agent orchestration to GPT, while sending cheaper classification, summarization, translation, extraction, and internal knowledge tasks to Mistral. That hybrid pattern often beats brand loyalty.
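The routing split described above can be sketched as a small lookup. Everything named here is hypothetical: the task labels, the model labels (which are placeholders, not real API model IDs), and the choice to send unrecognized tasks to the GPT side on the assumption that unknown work should be treated as higher risk. A real router would also need the actual client calls, which are left out.

```python
# Placeholder model labels, not real API model identifiers.
GPT_MODEL = "gpt-frontier"
MISTRAL_MODEL = "mistral-open"

# Task categories taken from the split described in the text; the label
# spellings themselves are invented for this sketch.
GPT_TASKS = {"high_risk_reasoning", "complex_code_review", "agent_orchestration"}
MISTRAL_TASKS = {
    "classification",
    "summarization",
    "translation",
    "extraction",
    "internal_knowledge",
}


def route(task_type: str, default: str = GPT_MODEL) -> str:
    """Pick a model label for a task type; unknown tasks fall back to `default`."""
    if task_type in GPT_TASKS:
        return GPT_MODEL
    if task_type in MISTRAL_TASKS:
        return MISTRAL_MODEL
    return default


if __name__ == "__main__":
    for task in ("translation", "agent_orchestration", "something_new"):
        print(f"{task} -> {route(task)}")
```

Teams that optimize mainly for cost could flip the default to the cheaper model and escalate only when an answer fails validation. Which default is right depends on how expensive a wrong answer is in your workflow.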

For most individual readers, GPT remains the better everyday assistant. For developers and organizations, Mistral is a more serious challenger than its market share suggests. It is not “ChatGPT but French.” It is a different bet: open, cheaper, modular, and easier to deploy under your own rules.

If your real decision is search rather than model choice, read ChatGPT vs Google Search. If you are comparing more closed assistant ecosystems, see GPT vs Microsoft Copilot.

Frequently asked questions

Is Mistral better than GPT?

Mistral is better when you need open weights, lower API costs, European deployment defaults, or more control over hosting and customization. GPT is better for most everyday users who want the strongest integrated assistant and mature tooling. The better choice depends on whether you value product completeness or deployment control more.

Which model should developers compare: GPT-5.4 or Mistral Large 3?

For a frontier comparison, GPT-5.4 and Mistral Large 3 are the most useful pair in this article. GPT-5.4 is OpenAI’s professional-work model with a 1,050,000-token context window in the API.[2] Mistral Large 3 is Mistral’s open-weight flagship with 41B active parameters and 675B total parameters.[5]

Is Mistral Large 3 open source?

Mistral describes Mistral Large 3 as an open-weight model released under Apache 2.0.[4] “Open weight” means the model weights are available, but it does not mean every training detail, dataset, or internal process is public. For most deployment decisions, the important point is that you can run or adapt it outside Mistral’s hosted service.

Is Mistral cheaper than GPT?

For the flagship API comparison here, yes. Mistral Large 3 is listed at $0.50 per 1M input tokens and $1.50 per 1M output tokens, while GPT-5.4 is listed at $2.50 per 1M input tokens and $15.00 per 1M output tokens.[5][2] Actual cost can still vary by retries, prompt length, routing, caching, and infrastructure.

Which is better for French and European organizations?

Mistral has a stronger European positioning and says its API is served from EU data centers by default.[8] It also emphasizes multilingual models and open deployment. GPT can still be the stronger model choice for many tasks, but Mistral may fit procurement, sovereignty, and compliance conversations more naturally.

Should I replace ChatGPT with Le Chat?

Most users should try Le Chat before replacing ChatGPT. Le Chat now supports chat, research, document work, code interpretation, custom agents, voice, connectors, libraries, memories, and Canvas-style editing.[9][10] ChatGPT still has the stronger ecosystem, but Le Chat is credible if you want a Mistral-native assistant.