The GPT-5.2 release is OpenAI’s December 11, 2025 upgrade to the GPT-5 line, focused less on chatty personality and more on professional work, long-context reasoning, coding, tool use, and safer sensitive-conversation handling.[1][8] The release introduced ChatGPT-5.2 Instant, ChatGPT-5.2 Thinking, and ChatGPT-5.2 Pro, with matching API names for developers and higher token pricing than GPT-5.1.[1][3] The most important practical change is that GPT-5.2 is built for longer, messier tasks: spreadsheets, presentations, codebases, research documents, and multi-step workflows where accuracy matters more than a fast first draft.[1][5]

GPT-5.2 release: the short answer

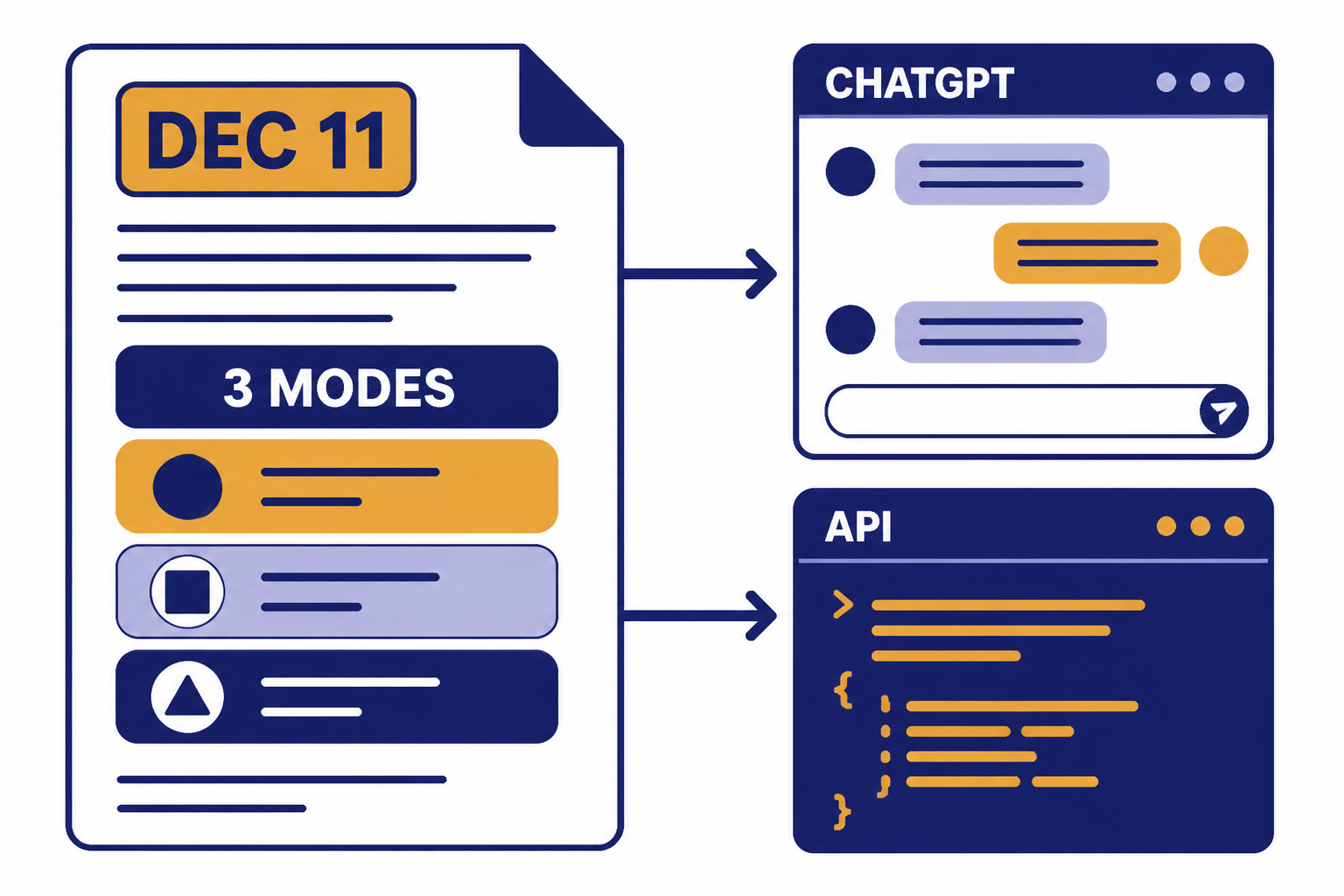

OpenAI released GPT-5.2 on December 11, 2025 as the next GPT-5-series upgrade after GPT-5.1.[1][8] It is a work-focused release with three ChatGPT modes, three API names, stronger long-context performance, higher benchmark scores, and more expensive API pricing than GPT-5.1.[1][3]

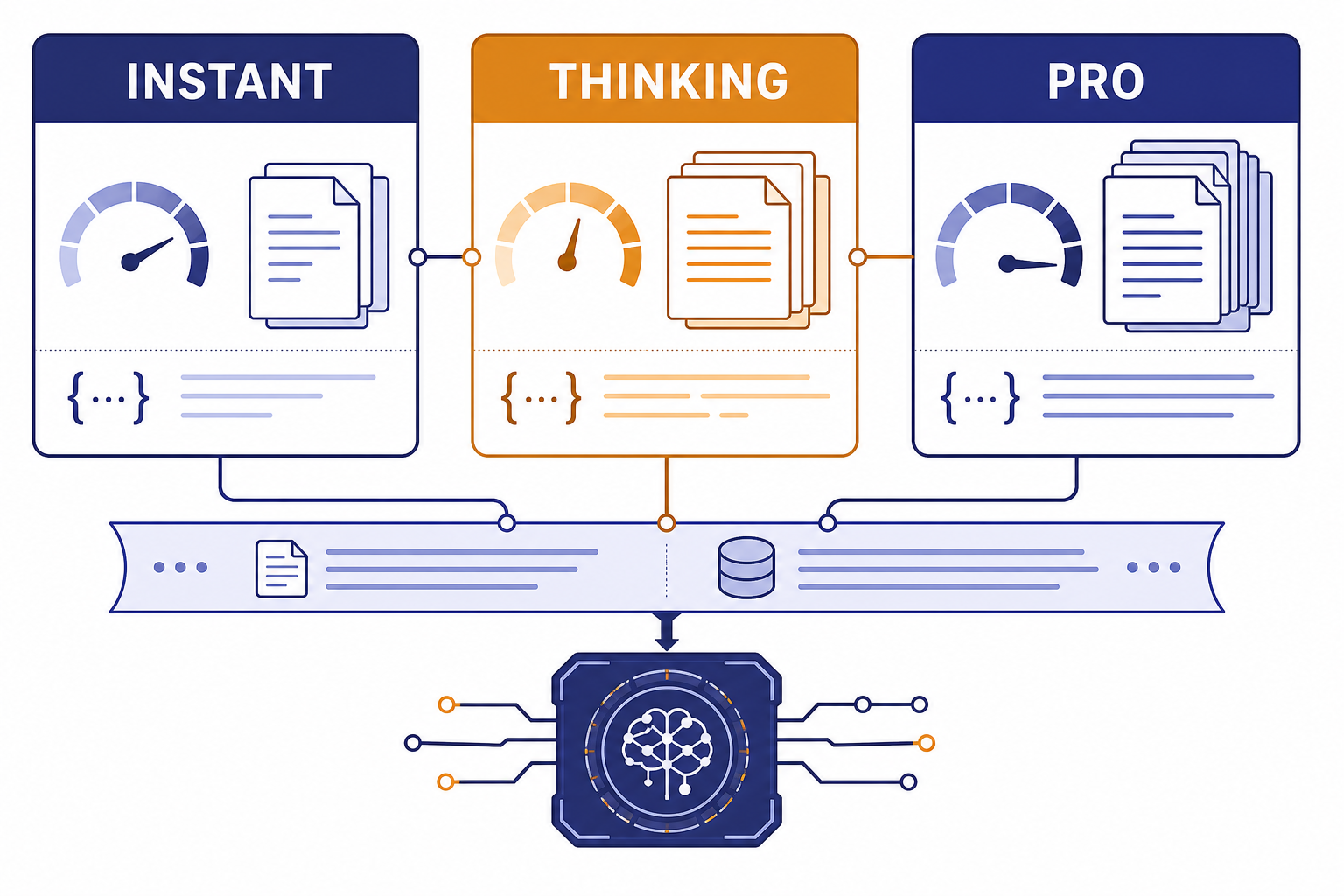

The short version is simple: use GPT-5.2 Instant for everyday answers, GPT-5.2 Thinking for hard reasoning and document-heavy work, and GPT-5.2 Pro when answer quality is worth a longer wait.[1][7] Developers should treat GPT-5.2 as a higher-capability but higher-cost model and run their own evaluations before replacing GPT-5.1 in production.[1][5]

What OpenAI released

The GPT-5.2 release covered both ChatGPT and the OpenAI API.[1][3] In ChatGPT, OpenAI described three user-facing options: ChatGPT-5.2 Instant, ChatGPT-5.2 Thinking, and ChatGPT-5.2 Pro.[1][7] In the API, OpenAI mapped those to gpt-5.2-chat-latest, gpt-5.2, and gpt-5.2-pro.[1][3]

The naming matters because OpenAI is separating user experience from developer control. ChatGPT users see a simplified model picker. Developers see model IDs, reasoning settings, token prices, context limits, and endpoint support. If you track model changes for work, keep both sets of names in your notes.

The release also fits a pattern OpenAI started with GPT-5.1: more frequent GPT-5-series updates rather than a single static model for a long period.[1][2] If you want the broader model lineup, see our side-by-side GPT model comparison and our GPT-5.1 update explainer.

| ChatGPT name | API name | Best fit | Key note |
|---|---|---|---|
| ChatGPT-5.2 Instant[1][7] | gpt-5.2-chat-latest[1][3] | Fast everyday work, writing, translation, how-to help | Designed as the fast workhorse mode |
| ChatGPT-5.2 Thinking[1][7] | gpt-5.2[1][3] | Coding, analysis, long documents, multi-step reasoning | Supports configurable reasoning effort, including xhigh in the API[1][3] |
| ChatGPT-5.2 Pro[1][7] | gpt-5.2-pro[1][3] | Hard questions where quality matters more than latency | Available through the Responses API as the higher-compute option[1][3] |
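For teams that keep both sets of names in code or notes, the table can be captured as a small lookup. This is only a sketch: the dictionary contents come from the table above, while the helper name `api_model_for` is hypothetical and not part of any OpenAI library.

```python
# ChatGPT picker names mapped to API model IDs, as listed in the table above.
CHATGPT_TO_API = {
    "ChatGPT-5.2 Instant": "gpt-5.2-chat-latest",
    "ChatGPT-5.2 Thinking": "gpt-5.2",
    "ChatGPT-5.2 Pro": "gpt-5.2-pro",
}


def api_model_for(chatgpt_name: str) -> str:
    """Return the API model ID for a ChatGPT picker name (hypothetical helper)."""
    try:
        return CHATGPT_TO_API[chatgpt_name]
    except KeyError:
        raise ValueError(f"Unknown ChatGPT model name: {chatgpt_name!r}") from None


if __name__ == "__main__":
    for name in CHATGPT_TO_API:
        print(f"{name} -> {api_model_for(name)}")
```

Raising on an unknown name, rather than falling back to a default, makes a renamed or retired model surface as an error instead of a silent misroute.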

Headline GPT-5.2 features

OpenAI positioned GPT-5.2 around professional knowledge work rather than a single flashy capability.[1][6] The release emphasized spreadsheet creation, presentation building, code writing, image understanding, long-context work, tool use, and multi-step projects.[1][9] That makes GPT-5.2 less like a novelty chatbot release and more like an infrastructure update for people who already use ChatGPT or the API inside a workflow.

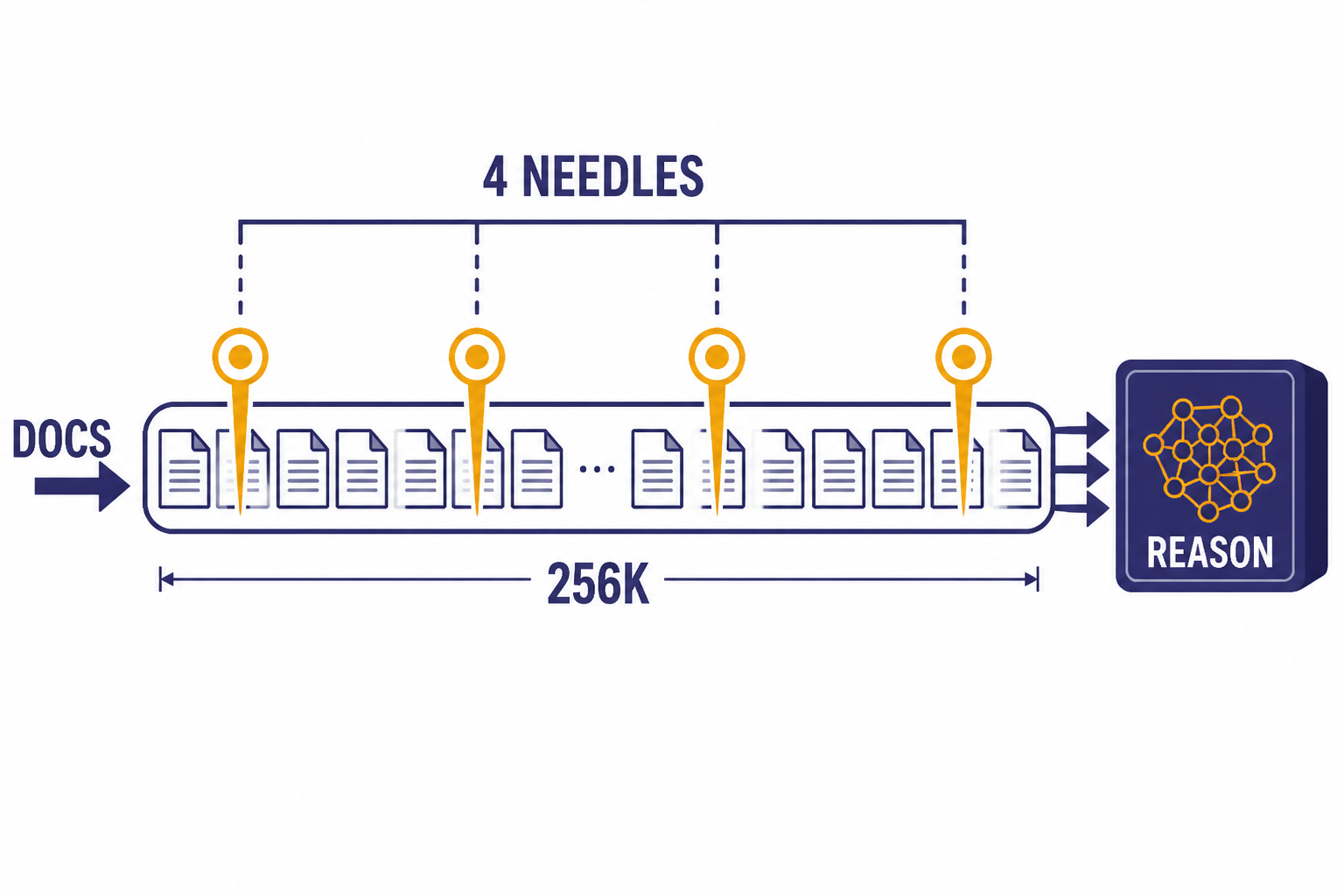

Better long-context reasoning

Long context is one of the strongest GPT-5.2 claims. OpenAI said GPT-5.2 Thinking reached near 100% accuracy on its 4-needle MRCR variant out to 256,000 tokens, and the API model page lists a 400,000-token context window with a 128,000-token maximum output.[1][3] For readers comparing context limits, our context window size guide tracks how those figures fit across GPT models.

Stronger agentic and tool-use behavior

OpenAI’s launch post says GPT-5.2 Thinking reached 98.7% on Tau2-bench Telecom, a tool-use benchmark for long, multi-turn tasks.[1][9] The separate GPT-5.2 prompting guide describes the model as suited for enterprise and agentic workloads, with stronger instruction following, token efficiency, structured reasoning, tool grounding, and multimodal understanding.[5][1]

Cleaner professional outputs

The release notes call out clearer answers for information-seeking questions, how-to tasks, technical writing, translation, studying, and career guidance.[2][1] In practice, that means GPT-5.2’s default style is meant to put the important information earlier and reduce wandering explanations. That is useful for business users, but it also explains why some early users described the model as more corporate or less playful.[12][7]

Spreadsheet and presentation work

OpenAI repeatedly framed GPT-5.2 as a model for spreadsheets and presentations.[1][9] It also reported an internal investment-banking spreadsheet task score of 68.4% for GPT-5.2 Thinking versus 59.1% for GPT-5.1, and 71.7% for GPT-5.2 Pro.[1][9] Treat those as OpenAI-reported internal results, not a universal guarantee. If you use GPT-5.2 for financial, legal, or compliance work, require citations, spreadsheet formulas, and human review.

Benchmark results and what they mean

OpenAI published a wide benchmark appendix for GPT-5.2, and several secondary reports focused on the same theme: GPT-5.2 Thinking and GPT-5.2 Pro improved most visibly on professional work, coding, math, tool use, and abstract reasoning.[1][7] Benchmarks are useful directional signals. They are not a substitute for testing the model on your own documents, codebase, data formats, and latency requirements.

The most cited number is GDPval. OpenAI reported that GPT-5.2 Thinking beat or tied top industry professionals on 70.9% of GDPval comparisons, and GPT-5.2 Pro reached 74.1%.[1][9] Computerworld also reported the 70.9% figure and described GDPval as a benchmark covering knowledge-work tasks across 44 occupations.[9][1]

| Evaluation | GPT-5.2 Thinking | GPT-5.2 Pro | Comparison point |
|---|---|---|---|
| GDPval wins or ties | 70.9%[1][9] | 74.1%[1][9] | GPT-5 listed at 38.8% in OpenAI’s appendix[1][9] |
| SWE-Bench Pro, public | 55.6%[1][7] | OpenAI did not publish a corroborated figure | GPT-5.1 Thinking at 50.8%[1][7] |
| SWE-bench Verified | 80.0%[1][7] | OpenAI did not publish a corroborated figure | GPT-5.1 Thinking at 76.3%[1][7] |
| GPQA Diamond, no tools | 92.4%[1][7] | 93.2%[1][7] | GPT-5.1 Thinking at 88.1%[1][7] |
| AIME 2025, no tools | 100.0%[1][7] | 100.0%[1][7] | GPT-5.1 Thinking at 94.0%[1][7] |
| ARC-AGI-2 Verified | 52.9%[1][7] | 54.2% at high[1][7] | GPT-5.1 Thinking at 17.6%[1][7] |

The benchmark story is not “GPT-5.2 is best at everything.” It is that OpenAI optimized for a particular class of work: tasks with many constraints, long context, tool calls, and a need to preserve accuracy over multiple steps.[1][5] That is why the release matters most to analysts, developers, researchers, operations teams, and advanced ChatGPT users.

There is also a caveat. OpenAI said benchmark runs used maximum available reasoning effort in the API for GPT-5.2 Thinking and Pro, except for professional evaluations, where GPT-5.2 Thinking used the maximum available effort in ChatGPT Pro.[1][3] A Plus user relying on fast ChatGPT responses may not see the same output quality as a benchmark run at maximum reasoning effort.

ChatGPT availability and model names

OpenAI said GPT-5.2 began rolling out in ChatGPT on December 11, 2025, starting with paid plans including Plus, Pro, Go, Business, and Enterprise.[1][8] Reuters also reported the same December 11, 2025 launch timing and paid-plan rollout.[8][1]

The ChatGPT model names can be confusing because “Instant,” “Thinking,” and “Pro” describe behavior as much as product packaging.[1][7] Instant is the everyday fast mode. Thinking is the deeper reasoning mode for harder prompts. Pro is the high-compute option for difficult questions where latency is less important than answer quality.

OpenAI also said GPT-5.1 would remain available to paid users for three months under legacy models after the GPT-5.2 launch, then be sunset from ChatGPT.[1][2] That is a product availability statement, not an API deprecation statement. OpenAI separately said it had no current plans to deprecate GPT-5.1, GPT-5, or GPT-4.1 in the API at the time of the GPT-5.2 launch.[1][3]

If your main question is subscription value rather than model capability, start with our ChatGPT Plus price guide. If your question is which model is strongest overall, see our most powerful GPT model benchmark roundup.

API pricing and developer details

For developers, the GPT-5.2 release is a pricing and evaluation decision as much as a model upgrade.[1][3] OpenAI priced gpt-5.2 and gpt-5.2-chat-latest at $1.75 per 1 million input tokens, $0.175 per 1 million cached input tokens, and $14.00 per 1 million output tokens.[1][3] OpenAI priced gpt-5.2-pro at $21.00 per 1 million input tokens and $168.00 per 1 million output tokens, with no cached-input price listed in the launch table.[1][3]

Those prices are higher than GPT-5.1 in OpenAI’s launch table, where gpt-5.1 and gpt-5.1-chat-latest were listed at $1.25 per 1 million input tokens and $10.00 per 1 million output tokens.[1][3] OpenAI’s argument is that GPT-5.2 can still be cheaper at a target quality level when its stronger reasoning and token efficiency reduce retries or failed agent steps.[1][5] You should verify that in your own workload before migrating.
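One rough way to test the "cheaper at a target quality level" argument is to compare expected spend per successful task rather than per call. The sketch below uses the launch-table prices quoted above. The token counts and success rates are hypothetical placeholders, and dividing by the success rate assumes independent retries until one succeeds, which is a simplification.

```python
# Launch-table prices in USD per 1 million tokens, as quoted above.
PRICES = {
    "gpt-5.1": {"input": 1.25, "output": 10.00},
    "gpt-5.2": {"input": 1.75, "output": 14.00},
}


def attempt_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single call, ignoring cached-input discounts."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


def cost_per_success(model: str, input_tokens: int, output_tokens: int,
                     success_rate: float) -> float:
    """Expected spend per successful task.

    ASSUMPTION: failed attempts are retried independently until one succeeds,
    so the expected number of attempts is 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return attempt_cost(model, input_tokens, output_tokens) / success_rate


if __name__ == "__main__":
    # Hypothetical workload and success rates; replace with measured values.
    old = cost_per_success("gpt-5.1", 10_000, 2_000, success_rate=0.60)
    new = cost_per_success("gpt-5.2", 10_000, 2_000, success_rate=0.90)
    print(f"gpt-5.1: ${old:.4f} per success, gpt-5.2: ${new:.4f} per success")
```

Because both the input and output prices are 1.4 times the GPT-5.1 prices, GPT-5.2 breaks even at equal token counts only if it succeeds about 1.4 times as often, or if it uses roughly 29% fewer tokens per task.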

| API model | Input price | Cached input price | Output price | Primary use |
|---|---|---|---|---|
| gpt-5.2[1][3] | $1.75 / 1M tokens[1][3] | $0.175 / 1M tokens[1][3] | $14.00 / 1M tokens[1][3] | Reasoning, coding, analysis, agentic workflows |
| gpt-5.2-chat-latest[1][3] | $1.75 / 1M tokens[1][3] | $0.175 / 1M tokens[1][3] | $14.00 / 1M tokens[1][3] | Fast ChatGPT-like responses |
| gpt-5.2-pro[1][3] | $21.00 / 1M tokens[1][3] | OpenAI listed no cached-input price[1][3] | $168.00 / 1M tokens[1][3] | Highest-quality difficult responses |

The API model page also lists a 400,000-token context window, 128,000 maximum output tokens, an August 31, 2025 knowledge cutoff, and support for text input/output plus image input for GPT-5.2.[3][1] That combination is important for document analysis and multimodal review, but it does not mean every request should use the full context. Long prompts are expensive, and long outputs can dominate cost.
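To see how prompt length, caching, and output length drive the bill, a per-request estimate can be sketched from the prices and limits quoted above. Two things here are assumptions rather than documented behavior: that input and output tokens share the 400,000-token window, and that cached tokens on gpt-5.2-pro are billed at the full input rate because no cached price was listed. The function name `estimate_cost` is hypothetical.

```python
from typing import Optional

CONTEXT_WINDOW = 400_000     # tokens, from the model page figures quoted above
MAX_OUTPUT_TOKENS = 128_000  # tokens

# USD per 1 million tokens, from the launch pricing table.
PRICES = {
    "gpt-5.2": {"input": 1.75, "cached_input": 0.175, "output": 14.00},
    "gpt-5.2-chat-latest": {"input": 1.75, "cached_input": 0.175, "output": 14.00},
    "gpt-5.2-pro": {"input": 21.00, "cached_input": None, "output": 168.00},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int,
                  cached_input_tokens: int = 0) -> float:
    """Estimate the USD cost of one request, rejecting over-limit requests."""
    if output_tokens > MAX_OUTPUT_TOKENS:
        raise ValueError("output exceeds the maximum output limit")
    # ASSUMPTION: input and output tokens share the same context window.
    if input_tokens + output_tokens > CONTEXT_WINDOW:
        raise ValueError("request does not fit in the context window")
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached tokens must be between 0 and input_tokens")

    p = PRICES[model]
    # ASSUMPTION: with no listed cached price, bill cached tokens at full rate.
    cached_rate: Optional[float] = p["cached_input"]
    if cached_rate is None:
        cached_rate = p["input"]

    uncached = input_tokens - cached_input_tokens
    total = (uncached * p["input"]
             + cached_input_tokens * cached_rate
             + output_tokens * p["output"])
    return total / 1_000_000


if __name__ == "__main__":
    print(f"${estimate_cost('gpt-5.2', 200_000, 10_000):.4f} without caching")
    print(f"${estimate_cost('gpt-5.2', 200_000, 10_000, 150_000):.4f} with caching")
```

Token counts must come from a tokenizer or from the usage figures the API returns; this sketch does not count tokens itself.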

A practical migration plan is to keep GPT-5.1 or a smaller GPT-5 model for simple tasks, route complex tasks to GPT-5.2 Thinking, and reserve GPT-5.2 Pro for cases where the cost of a wrong answer is higher than the cost of the model call. For broader model cost comparisons, see our OpenAI API pricing breakdown.
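The migration plan above can be written down as a literal decision rule. This is a minimal sketch: the `Task` fields and the `choose_model` function are hypothetical, and how a team decides that a task is complex, or what a wrong answer costs, is left to the caller.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical description of one unit of work to route."""
    is_complex: bool                # caller's own judgment or classifier output
    wrong_answer_cost_usd: float    # estimated business cost of a bad answer
    pro_call_cost_usd: float        # estimated cost of one gpt-5.2-pro call


def choose_model(task: Task) -> str:
    """Encode the plan: simple -> GPT-5.1, complex -> GPT-5.2, high stakes -> Pro."""
    if not task.is_complex:
        return "gpt-5.1"
    if task.wrong_answer_cost_usd > task.pro_call_cost_usd:
        return "gpt-5.2-pro"
    return "gpt-5.2"


if __name__ == "__main__":
    print(choose_model(Task(False, 0.0, 2.0)))    # simple task
    print(choose_model(Task(True, 0.50, 2.0)))    # complex, low stakes
    print(choose_model(Task(True, 500.0, 2.0)))   # complex, high stakes
```

In practice a team would probably weight the cost of a wrong answer by how likely each model is to get it wrong, since almost any business error costs more than a single call.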

Safety, system card, and known limits

OpenAI published a GPT-5.2 system card update on December 11, 2025.[4][1] The company said the overall safety mitigation approach for GPT-5.2 was largely the same as the approach described in the GPT-5 and GPT-5.1 system cards.[4][1] It also identified GPT-5.2 Instant as gpt-5.2-instant and GPT-5.2 Thinking as gpt-5.2-thinking inside the safety documentation.[4][1]

OpenAI’s launch post says GPT-5.2 improved responses in sensitive conversations involving suicide, self-harm, mental health distress, and emotional reliance on the model.[1][4] The same post reported mental-health evaluation scores of 0.995 for GPT-5.2 Instant and 0.915 for GPT-5.2 Thinking, compared with 0.883 for GPT-5.1 Instant and 0.684 for GPT-5.1 Thinking.[1][4] These are OpenAI-reported evaluation scores, not independent clinical validation.

The safety story also has a user-experience tradeoff. Some early users praised GPT-5.2’s stronger professional tone, while others complained that the model felt overly cautious, corporate, or less emotionally natural.[12][7] That is not unusual for a major model update. A model can improve on safety and benchmark reliability while also feeling worse for certain conversational or creative use cases.

OpenAI’s own prompting guide recommends explicit self-checking for legal, financial, compliance, and safety-sensitive contexts, including scanning for unstated assumptions and ungrounded numbers.[5][1] That is the right default. GPT-5.2 can reduce error rates, but it still requires human review for high-stakes work.
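A prompt-level self-check can be backed by a cheap mechanical one. The sketch below flags numbers that appear in a model answer but nowhere in the source material. It is not taken from OpenAI's guide: the function name is hypothetical, the regular expression is deliberately simple, and it cannot tell a legitimately derived figure (a sum or a percentage change) from an invented one, so a human still has to review what it flags.

```python
import re
from typing import List

# Matches integers, decimals, thousands-separated numbers, and percentages.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def ungrounded_numbers(answer: str, source: str) -> List[str]:
    """Return numbers found in `answer` that never appear in `source`."""
    source_numbers = set(NUMBER.findall(source))
    return [n for n in NUMBER.findall(answer) if n not in source_numbers]


if __name__ == "__main__":
    source = "Revenue was 1,250 in 2024, up 12%."
    answer = "Revenue rose 12% to 1,250, beating the 1,100 target."
    print(ungrounded_numbers(answer, source))
```

Matching is purely textual, so "12%" and "12 percent" count as different; normalizing formats before comparison would reduce false alarms.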

Analysis: the routing-versus-control tradeoff

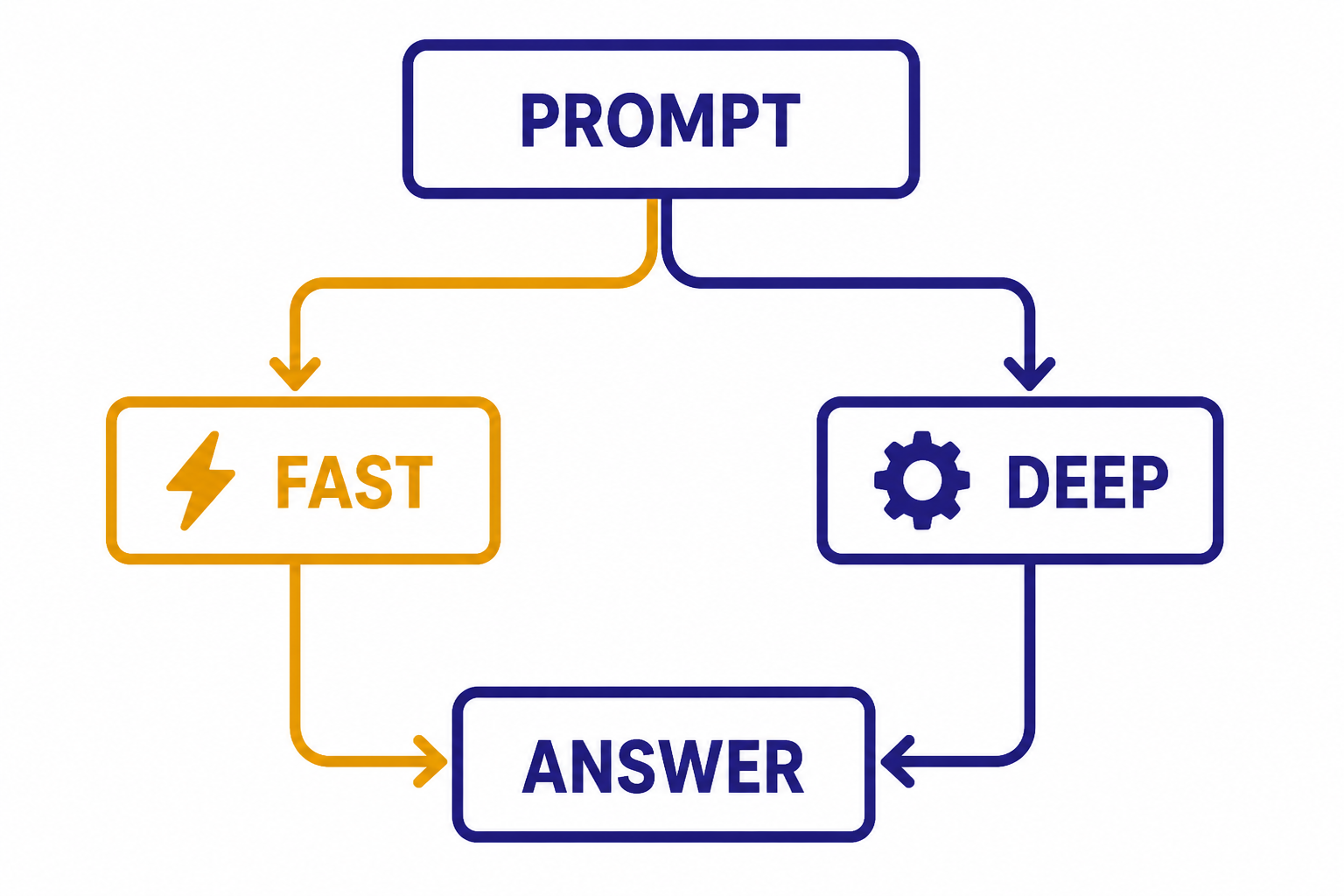

The most important GPT-5.2 product pattern is not a benchmark. It is OpenAI’s shift toward adaptive intelligence: faster models for ordinary tasks, deeper reasoning for hard tasks, and Pro compute for the most difficult prompts.[1][7] This is good for most users because they do not want to choose a model for every question. It is frustrating for power users because the most important quality setting becomes partly invisible.

That creates a routing-versus-control tradeoff. Routing improves convenience and cost efficiency. Control improves predictability. GPT-5.2 sits in the middle: ChatGPT users get simpler choices, while API users get model IDs and reasoning-effort settings.[1][3]

For everyday users, the best rule is to start with Instant and switch to Thinking when a task has more than one hard constraint. Examples include “compare these contracts,” “debug this failing test,” “build a spreadsheet model from this source data,” or “summarize these files and find contradictions.” For teams, the better rule is to define routing policies. A support bot, data-analysis assistant, and code-review agent should not all use the same default model settings.
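A team routing policy can be as simple as a table of per-application defaults. The sketch below is illustrative only: the application names, token caps, and effort choices are hypothetical, and which reasoning-effort values each model accepts should be checked against the API documentation (the release material mentions xhigh for gpt-5.2).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RoutingPolicy:
    """Default model settings for one application (hypothetical structure)."""
    model: str
    reasoning_effort: Optional[str]  # None means "leave the API default"
    max_output_tokens: int


# Illustrative defaults; tune these from your own evaluations.
POLICIES = {
    "support_bot": RoutingPolicy("gpt-5.2-chat-latest", None, 1_000),
    "data_analysis": RoutingPolicy("gpt-5.2", "high", 8_000),
    "code_review": RoutingPolicy("gpt-5.2", "medium", 4_000),
}


def policy_for(application: str) -> RoutingPolicy:
    """Look up an application's policy, failing loudly if none is defined."""
    if application not in POLICIES:
        raise KeyError(f"No routing policy defined for {application!r}")
    return POLICIES[application]


if __name__ == "__main__":
    for app in POLICIES:
        print(app, policy_for(app))
```

Keeping the policies in one place makes it easier to review cost and latency trade-offs per application instead of changing a single global default.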

The release also shows how OpenAI is aiming ChatGPT deeper into workplace software. The company highlighted partners and infrastructure, including Microsoft and NVIDIA, and said Azure data centers and NVIDIA GPUs such as H100, H200, and GB200-NVL72 underpinned training infrastructure.[1][11] For more context on the business side, read our OpenAI and Microsoft partnership analysis and our OpenAI leadership guide.

Who should use GPT-5.2

GPT-5.2 is most compelling for users who already push ChatGPT beyond quick drafting.[1][5] If your workflow includes long files, multi-step reasoning, code repositories, tool use, data analysis, or document synthesis, GPT-5.2 Thinking is the default place to test. If your workflow is mostly short emails, summaries, and brainstorming, the difference may be less dramatic.

- Use GPT-5.2 Instant for quick answers, rewrites, translations, outlines, and simple how-to questions.[1][2]

- Use GPT-5.2 Thinking for coding, research synthesis, spreadsheets, long documents, and tasks with conflicting constraints.[1][5]

- Use GPT-5.2 Pro for high-value analysis, hard technical questions, and cases where waiting longer is acceptable.[1][3]

- Do not use GPT-5.2 alone for medical, legal, financial, or safety-critical decisions without expert review.[5][4]

Developers should run a small controlled evaluation before changing production defaults. Measure task success, total token cost, latency, refusal rate, formatting reliability, and user satisfaction. A model that wins benchmarks can still lose in your product if it is too slow, too expensive, or too cautious for the job.
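The measurements listed above can be rolled into one summary per model so candidates are compared on the same footing. This is a minimal sketch with hypothetical field names; user satisfaction is omitted because it usually comes from a separate survey or feedback channel, and how each run is judged a success is up to your own grader.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List


@dataclass(frozen=True)
class RunResult:
    """Outcome of one evaluation task for one model (hypothetical schema)."""
    succeeded: bool
    refused: bool
    well_formatted: bool
    cost_usd: float
    latency_s: float


def summarize(results: List[RunResult]) -> Dict[str, float]:
    """Aggregate per-task results into the metrics named above."""
    if not results:
        raise ValueError("no results to summarize")
    n = len(results)
    return {
        "success_rate": sum(r.succeeded for r in results) / n,
        "refusal_rate": sum(r.refused for r in results) / n,
        "format_ok_rate": sum(r.well_formatted for r in results) / n,
        "total_cost_usd": sum(r.cost_usd for r in results),
        "median_latency_s": median(r.latency_s for r in results),
    }


if __name__ == "__main__":
    # Made-up sample data, only to show the shape of the output.
    sample = [
        RunResult(True, False, True, 0.04, 6.0),
        RunResult(True, False, True, 0.05, 8.0),
        RunResult(False, True, True, 0.01, 2.0),
        RunResult(True, False, False, 0.06, 10.0),
    ]
    for metric, value in summarize(sample).items():
        print(f"{metric}: {value:.3f}")
```

Running the same task set through GPT-5.1 and GPT-5.2 and comparing the two summaries gives a direct view of whether the quality gain justifies the added cost and latency.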

For product watchers, GPT-5.2 also connects to OpenAI’s broader 2025 and 2026 strategy. The same push toward richer, more agentic experiences appears in releases such as ChatGPT Atlas and Sora 2, even though those products serve different use cases.

Frequently asked questions

When was GPT-5.2 released?

OpenAI released GPT-5.2 on December 11, 2025.[1][8] The rollout began in ChatGPT with paid plans, and the API models were also made available on launch day.[1][8] The release came after GPT-5.1 and continued OpenAI’s pattern of more frequent GPT-5-series updates.[1][2]

What are the main GPT-5.2 models?

The main GPT-5.2 ChatGPT modes are ChatGPT-5.2 Instant, ChatGPT-5.2 Thinking, and ChatGPT-5.2 Pro.[1][7] Their API names are gpt-5.2-chat-latest, gpt-5.2, and gpt-5.2-pro.[1][3] Instant is the fast mode, Thinking is the deeper reasoning model, and Pro is the higher-compute option for difficult questions.[1][7]

How much does GPT-5.2 cost in the API?

OpenAI listed gpt-5.2 and gpt-5.2-chat-latest at $1.75 per 1 million input tokens and $14.00 per 1 million output tokens.[1][3] Cached input was listed at $0.175 per 1 million tokens, a 90% discount from the standard input price.[1][3] OpenAI listed gpt-5.2-pro at $21.00 per 1 million input tokens and $168.00 per 1 million output tokens.[1][3]

Is GPT-5.2 better than GPT-5.1?

OpenAI’s published benchmarks show GPT-5.2 ahead of GPT-5.1 on several major evaluations.[1][7] Examples include SWE-bench Verified at 80.0% for GPT-5.2 Thinking versus 76.3% for GPT-5.1 Thinking, and AIME 2025 at 100.0% versus 94.0%.[1][7] That said, user experience depends on mode, reasoning effort, latency tolerance, and the type of prompt.

What is GPT-5.2’s context window?

The OpenAI API model page lists GPT-5.2 with a 400,000-token context window and a 128,000-token maximum output limit.[3][1] OpenAI’s launch post also says GPT-5.2 Thinking performed strongly on long-context evaluations, including near 100% accuracy on a 4-needle MRCR variant out to 256,000 tokens.[1][3] Large context windows are useful, but they also increase cost and make prompt organization more important.

Does GPT-5.2 support images?

The GPT-5.2 API model page lists text input/output and image input, while audio is listed as not supported on that page.[3][1] OpenAI’s launch post also highlighted better image understanding, including spatial comprehension in examples comparing GPT-5.2 with GPT-5.1.[1][7] For image generation, use the dedicated image models rather than assuming GPT-5.2 itself is the image generator.

What is GPT-5.2-Codex?

GPT-5.2-Codex is a coding-focused version OpenAI announced on December 18, 2025.[10][4] OpenAI described it as its most advanced agentic coding model at that time, with stronger long-context understanding, reliable tool calling, factuality, native compaction, and Windows-environment performance.[10][4] It is related to GPT-5.2, but it is a separate Codex-focused release rather than the main ChatGPT-5.2 model picker option.[10][1]

Bottom line

GPT-5.2 is a serious upgrade for professional work, not just a new model label.[1][6] Its strongest case is long-context analysis, coding, spreadsheets, tool-heavy agents, and careful reasoning where GPT-5.1 required more retries or manual repair.[1][5]

The next thing to watch is whether users experience the same gains in everyday ChatGPT that OpenAI reports in maximum-effort benchmarks. The model’s value will depend on routing, reasoning effort, latency, and cost as much as raw scores.