The Sora 2 launch turned OpenAI’s video work from a research showcase into a consumer product: a video-and-audio generation model, a standalone Sora app, a social feed, and consent-based cameo tools all arrived together on September 30, 2025.[1][7] That is why the launch mattered. It made short AI video feel less like a studio experiment and more like a daily creative format. As of this article’s April 24, 2026 publication date, though, the story has a sharp ending: OpenAI says the Sora web and app experiences will be discontinued on April 26, 2026, while the Sora API will be discontinued on September 24, 2026.[6][14]

What Sora 2 launched

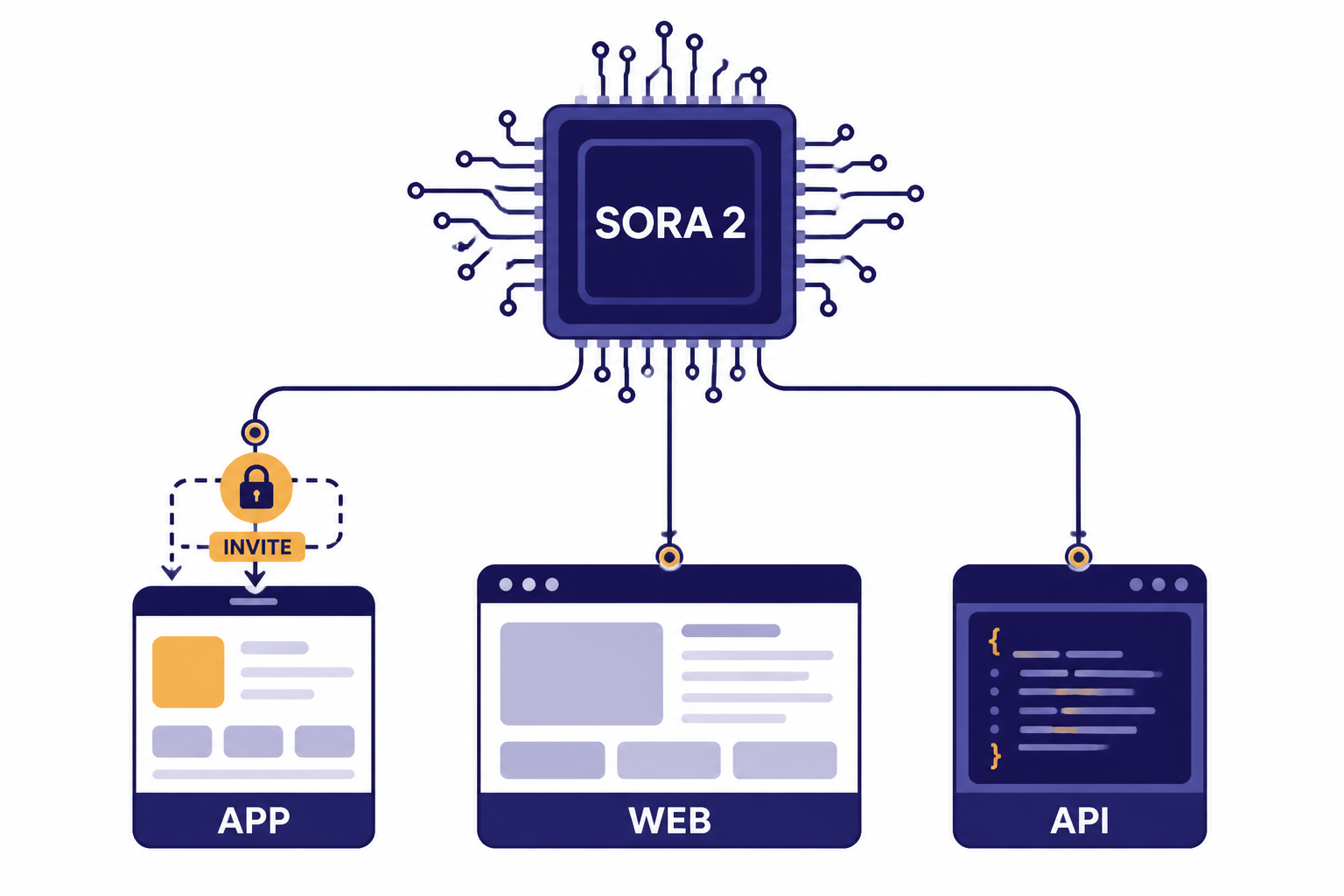

Sora 2 launched as OpenAI’s flagship video-and-audio generation model on September 30, 2025, paired with a new Sora iOS app and web access through sora.com for invited users.[1][8] The headline was not just better clips. The bigger shift was packaging generation, sharing, remixing, and personal likeness controls into one consumer app.

OpenAI described Sora 2 as more physically accurate, more realistic, more controllable, and able to generate synchronized dialogue and sound effects.[1][2] The company started the rollout in the United States and Canada, made the app invite-based, and said Sora 2 would be available for free at launch subject to compute limits.[1][7]

The launch also created a new competitive frame for OpenAI. The company was no longer just showing a model that could make impressive samples. It was testing whether generated video could become a social medium. Readers saw the same product pattern in other OpenAI launches, from our ChatGPT Atlas launch breakdown to our take on the GPT-5.3 release: OpenAI increasingly ships models inside workflows, not as isolated demos.

Why the launch mattered

Sora 2 mattered because it crossed three thresholds at once: video, audio, and distribution. Earlier text-to-video systems could impress in short demos, but they often broke down when motion, continuity, sound, and user control had to work together. OpenAI’s launch framed Sora 2 as a step toward models that simulate physical dynamics, not just generate plausible frames.[1][2]

The mainstream piece came from the Sora app. OpenAI made the model available through a feed-like consumer product where people could generate, remix, and share clips with friends.[4][9] That changed the user from “prompt engineer testing a model” to “creator posting a clip.” It also changed the risk profile, because the app encouraged public sharing of realistic synthetic media.

The launch arrived after OpenAI’s original Sora preview in February 2024 and after Sora became publicly available to ChatGPT Plus and Pro users in late 2024.[1][12] Sora 2 was the first time OpenAI combined the model with a social product built around everyday creation. For readers tracking the broader model race, this sat alongside the company’s continuing GPT updates, covered in our guide to the GPT-5.2 release notes and features and in our GPT-5.1 update breakdown.

| Launch element | What OpenAI shipped | Why it changed the market |
|---|---|---|
| Sora 2 model | Video and audio generation with improved realism, physics, controllability, dialogue, and sound effects.[1][2] | It moved OpenAI’s video product beyond silent visual generation. |
| Sora app | An invite-based iOS app for generating, remixing, sharing, and following friends.[4][8] | It put AI video into a consumer social format. |
| Cameos | A likeness feature based on a short video-and-audio recording for identity verification and capture.[1][4] | It made personal identity part of the creative workflow. |
| Provenance signals | Visible watermarks, invisible provenance signals, and C2PA metadata at launch.[3][2] | It acknowledged that realistic AI video needed traceability. |
| Initial rollout | United States and Canada first, with invite access and planned expansion.[1][7] | It let OpenAI limit scale while testing demand and safety. |

What changed from Sora 1

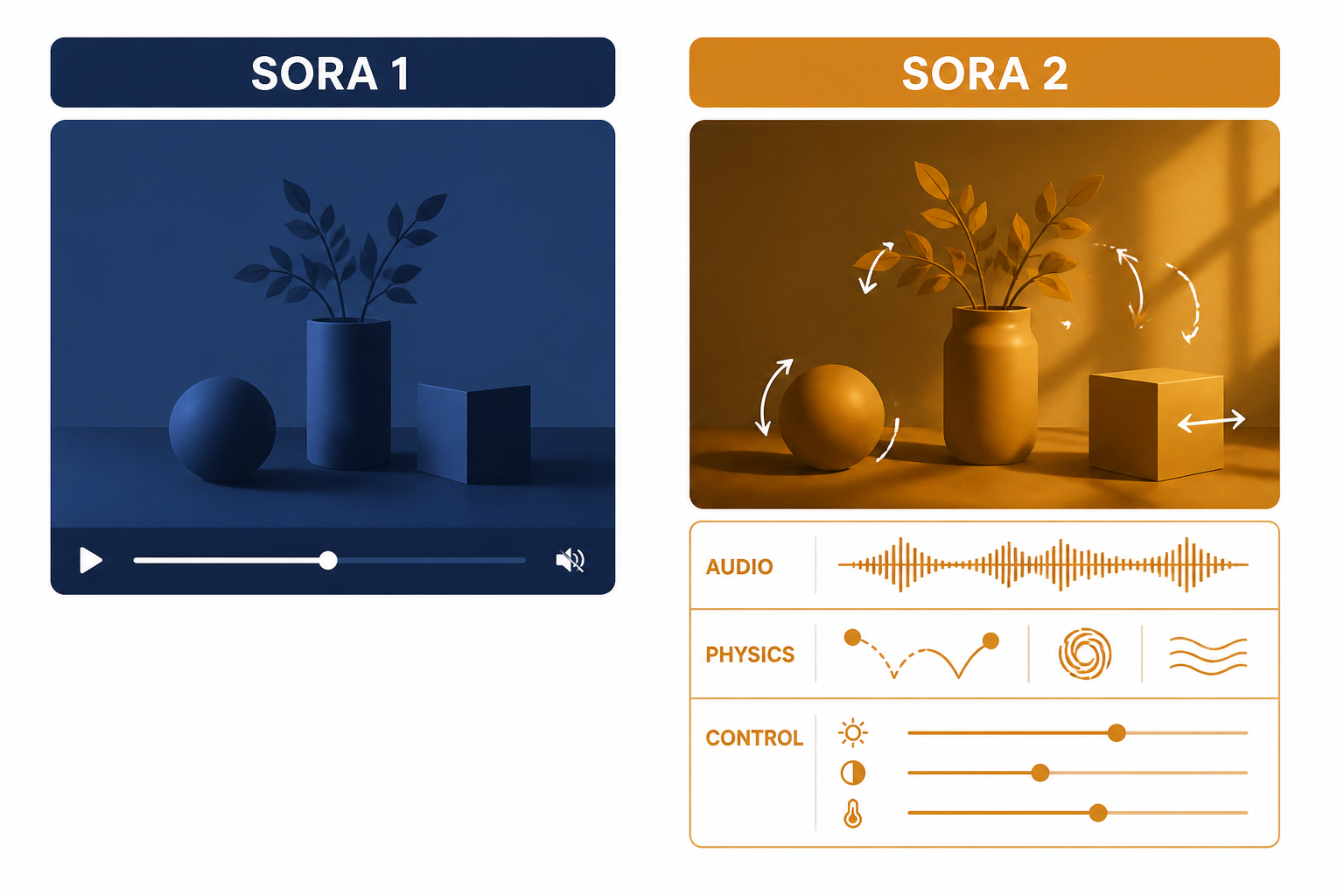

OpenAI positioned the original Sora model from February 2024 as the moment when video generation began to look workable, while Sora 2 was presented as a much larger step toward controllable, audio-synchronized, physics-aware generation.[1][2] The company’s public examples emphasized motion that respected real-world dynamics more often than earlier systems, such as objects reacting rather than simply morphing or teleporting.

The most visible product difference was sound. Sora 2 could generate background soundscapes, speech, and sound effects along with the video.[1][2] That matters because silent AI video often feels like a visual effect, while synchronized sound makes the output closer to a finished social clip or storyboard.

The second difference was steerability. OpenAI said Sora 2 followed user direction with high fidelity and supported a wider stylistic range.[2][1] In practical terms, that meant users could ask for a scene with a specific camera move, tone, character action, or environment and expect fewer prompt-to-output mismatches than with the earlier system.

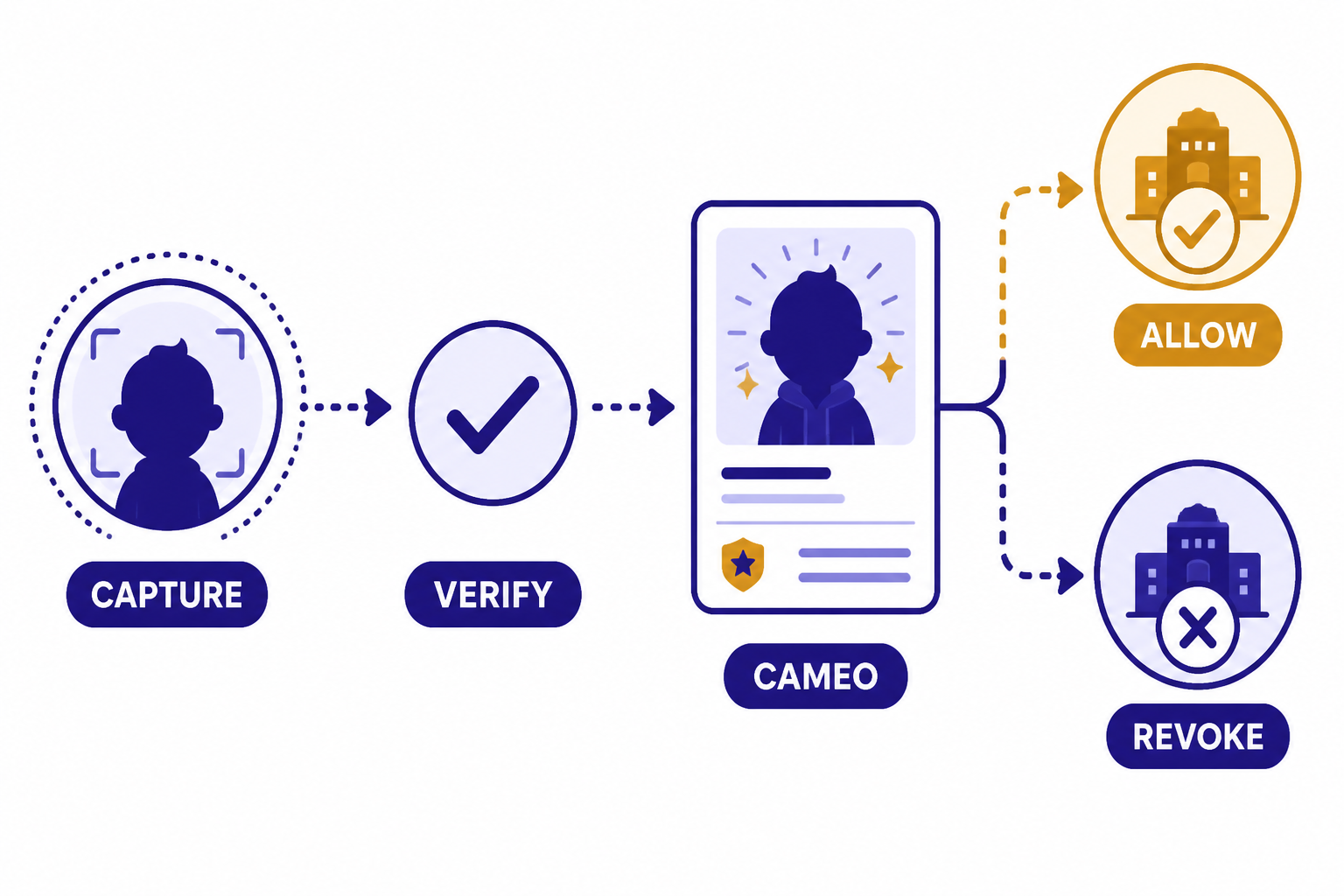

The third difference was identity. Sora 2’s app-centered launch introduced cameos, which let users place themselves or authorized friends into generated scenes after a one-time capture flow.[1][4] That feature made Sora 2 less like a generic video generator and more like a personal media engine.

| Area | Original Sora | Sora 2 launch | Reader takeaway |
|---|---|---|---|
| Release context | Original Sora was introduced publicly in February 2024.[1][12] | Sora 2 launched on September 30, 2025.[1][7] | The gap shows how quickly OpenAI moved from preview to social product. |
| Media type | Video generation was the core showcase.[1][12] | Video plus synchronized dialogue, sound effects, and soundscapes.[1][2] | Audio made the output feel more complete. |
| Distribution | Sora lived mainly as a model and web creation surface.[12][4] | Sora 2 launched with a standalone iOS app and social sharing features.[4][8] | The app was the mainstreaming move. |
| Identity tools | Personal likeness was not the defining product loop.[12][3] | Cameos used consent-based capture and sharing controls.[1][3] | Likeness became both a feature and a safety challenge. |
| Safety framing | Sora had watermarking and policy controls in earlier form.[12][3] | Sora 2 launched with visible watermarks, C2PA metadata, internal tracing tools, and system-card mitigations.[3][2] | OpenAI anticipated higher misuse pressure from realistic social video. |

How the Sora app worked

The Sora app used an existing OpenAI account and asked users to sign in with the same credentials they used for ChatGPT.[4] During onboarding, OpenAI said users would enter a birthday for age-appropriate protections, choose a username, optionally add a profile photo, and find friends to follow.[4] During the invite phase, users might also need an invite code.[4][8]

The core creation loop was simple. A user generated a short video from text or an image, remixed videos they liked, and shared work with friends.[4][9] That loop was closer to a social app than a professional video editor. The product choice made sense for mainstream adoption because most users do not want a timeline, node graph, or rendering pipeline before they can make a clip.

Cameos were the app’s defining feature. OpenAI said a user could create a character of themselves after a short one-time video-and-audio recording that verified identity and captured likeness.[1][4] The user could decide who was allowed to use that character and revoke access later.[3][4]
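The grant-and-revoke model described above can be pictured as a small permission record. The following is a minimal sketch for illustration only: the `Cameo` class, its fields, and its method names are hypothetical and do not reflect how OpenAI implemented the feature.

```python
from dataclasses import dataclass, field


@dataclass
class Cameo:
    """Hypothetical record of one person's captured likeness."""

    owner: str
    # Users the owner has explicitly allowed to use the likeness.
    allowed_users: set[str] = field(default_factory=set)

    def grant(self, user: str) -> None:
        """Allow another user to place this likeness in their videos."""
        self.allowed_users.add(user)

    def revoke(self, user: str) -> None:
        """Withdraw a previously granted permission (no-op if absent)."""
        self.allowed_users.discard(user)

    def can_use(self, user: str) -> bool:
        """The owner can always use the likeness; others need a grant."""
        return user == self.owner or user in self.allowed_users


cameo = Cameo(owner="alice")
cameo.grant("bob")
print(cameo.can_use("bob"))  # True
cameo.revoke("bob")
print(cameo.can_use("bob"))  # False
```

The point of the sketch is that access is denied by default and that revocation takes effect on the next check, which is the behavior the consent framing implies.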

This is also where the launch became culturally important. A social feed of entirely generated clips can spread jokes, memes, fictional scenes, and believable-looking synthetic footage much faster than a model hidden behind a web form. Readers comparing OpenAI’s product packaging with other platform decisions should also see our coverage of OpenAI and Microsoft, because distribution partnerships and compute access shape how quickly these tools reach ordinary users.

Safety and rights controls

OpenAI launched Sora 2 with a safety story built around provenance, consent, moderation, and restricted uploads.[2][3] The company said every Sora video included visible and invisible provenance signals, all outputs carried a visible watermark at launch, and videos embedded C2PA metadata.[3][2] OpenAI also said it maintained internal reverse-image and audio search tools to trace Sora videos back to the system with high accuracy.[3]

The system card identified risks that were especially relevant to the Sora app: nonconsensual use of likeness and misleading generations.[2][3] OpenAI said its early deployment used limited invitations, restricted image uploads featuring photorealistic people, restricted all video uploads, and applied stringent safeguards for content involving minors.[2][3]

The rights story was more turbulent. Within days of the September 30, 2025 launch, reporting said OpenAI planned more granular controls for rightsholders after the app generated videos involving recognizable copyrighted characters.[10][11] That sequence became an early lesson in mainstream AI video: once a tool is social, policy decisions become public events, not back-office settings.

Likeness controls also faced real-world pressure. In October 2025, OpenAI, SAG-AFTRA, Bryan Cranston, United Talent Agency, Creative Artists Agency, and the Association of Talent Agents issued a joint statement after concerns about Sora-generated depictions of Cranston.[12][10] The case showed that consent tools are necessary but not sufficient. They also need enforcement, user education, appeals, and rapid fixes when a model or moderation system misses the intended boundary.

Analysis: the mainstream video tradeoff

The Sora 2 launch revealed a pattern I would call the “consumerization-risk squeeze.” The easier an AI video system becomes to use, the more valuable it becomes for normal creators, and the more dangerous it becomes for impersonation, spam, harassment, and copyright conflict. Sora 2’s app was powerful because it removed friction. That same removed friction made every safety decision more consequential.

The tradeoff is not simply safety versus creativity. It is speed versus accountability. A feed-based product rewards fast generation, remixing, and sharing. A rights- and likeness-safe product requires verification, permission checks, provenance, moderation, and takedown workflows. Those systems can coexist, but only if the product treats governance as part of the core experience rather than as a separate compliance layer.

Sora 2 also showed why video is different from text. A misleading paragraph can do damage, but a realistic video of a person doing or saying something can travel through social networks with far less context. That is why watermarking and C2PA metadata were central to OpenAI’s launch materials.[3][2] It is also why users should treat generated video as a publishing medium, not just a private toy.

For creators, the decision framework is straightforward. Use AI video when the output is fictional, authorized, clearly labeled, and not presented as evidence of real events. Avoid it when a clip could plausibly harm a real person, imply endorsement, recreate a copyrighted character without permission, or confuse viewers about whether something happened. For a broader model-selection view, see our side-by-side comparison of all GPT models and our guide to context window sizes for every GPT model. The same principle applies across modalities: choose the model and workflow that match the risk of the task.
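The creator checklist above can be written as a simple yes-or-no check. This is a sketch of the article’s own framework, not an official policy tool: the `ClipPlan` fields and the `should_publish` function are names invented here for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClipPlan:
    """Hypothetical self-assessment a creator fills in before posting."""

    # "Use it when" conditions from the checklist.
    fictional: bool
    authorized: bool
    clearly_labeled: bool
    presented_as_evidence: bool
    # "Avoid it when" red flags from the checklist.
    could_harm_real_person: bool = False
    implies_endorsement: bool = False
    uses_unlicensed_character: bool = False
    could_confuse_viewers: bool = False


def should_publish(plan: ClipPlan) -> bool:
    """Return True only if every requirement holds and no red flag is set."""
    meets_requirements = (
        plan.fictional
        and plan.authorized
        and plan.clearly_labeled
        and not plan.presented_as_evidence
    )
    has_red_flag = (
        plan.could_harm_real_person
        or plan.implies_endorsement
        or plan.uses_unlicensed_character
        or plan.could_confuse_viewers
    )
    return meets_requirements and not has_red_flag


parody = ClipPlan(fictional=True, authorized=True,
                  clearly_labeled=True, presented_as_evidence=False)
fake_ad = ClipPlan(fictional=True, authorized=True,
                   clearly_labeled=True, presented_as_evidence=False,
                   implies_endorsement=True)
print(should_publish(parody))   # True
print(should_publish(fake_ad))  # False
```

A single red flag is enough to block publication in this sketch, which mirrors the framing that risk, not creative intent, decides the outcome.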

Pricing, credits, and access

At launch, OpenAI said Sora 2 would initially be free, with generous limits subject to compute constraints.[1][7] It also said ChatGPT Pro users would get access to an experimental, higher-quality Sora 2 Pro model on sora.com, with app support planned later.[1][4] Exact plan limits have not been independently corroborated, so readers should treat availability as account- and rollout-dependent.

OpenAI later documented a credit system for Sora usage. Its help article listed Sora 2 10-second generations at 10 credits, Sora 2 15-second generations at 20 credits, and several Sora 2 Pro options on the web, including 10-second standard-resolution generations at 40 credits and 15-second high-resolution generations at 500 credits.[5] Those credit costs come only from OpenAI’s own help documentation and have not been independently corroborated.

| Video option | OpenAI-listed unit | OpenAI-listed credits | Important caveat |
|---|---|---|---|
| Sora 2, 10 seconds | 1 video generation | 10 credits[5] | Figure comes from OpenAI’s help article and is not independently corroborated. |
| Sora 2, 15 seconds | 2 video generations | 20 credits[5] | OpenAI says longer videos may cost more credits per second. |
| Sora 2, 25 seconds | 3 video generations | 30 credits[5] | Listed under Sora 2 Pro availability notes in the help article. |
| Sora 2 Pro, standard resolution, 10 seconds | 4 video generations | 40 credits[5] | OpenAI says Sora 2 Pro options were available only on Sora Web at that point. |
| Sora 2 Pro, standard resolution, 15 seconds | 8 video generations | 80 credits[5] | Useful for comparing relative compute cost. |
| Sora 2 Pro, high resolution, 15 seconds | 50 video generations | 500 credits[5] | The highest listed credit cost in the cited OpenAI help table. |

The pricing lesson is that generated video is compute-intensive. A credit table makes that visible. A higher-resolution, longer, or Pro-tier clip can cost many times more than a short standard generation. Readers comparing costs across OpenAI products should keep our guides to OpenAI API pricing and the ChatGPT Plus price in 2026 nearby, because consumer subscriptions and metered add-ons answer different needs.
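To make the relative costs concrete, the short sketch below takes the durations and credit counts from the credit table above and derives credits per second and a cost multiple against the 10-second Sora 2 baseline. The function names and the choice of baseline are assumptions made for this illustration; only the durations and credit counts come from the cited help article.[5]

```python
# (option, seconds, credits) as listed in the credit table above.
CREDIT_TABLE = [
    ("Sora 2", 10, 10),
    ("Sora 2", 15, 20),
    ("Sora 2", 25, 30),
    ("Sora 2 Pro, standard resolution", 10, 40),
    ("Sora 2 Pro, standard resolution", 15, 80),
    ("Sora 2 Pro, high resolution", 15, 500),
]

BASELINE_CREDITS = 10  # the 10-second Sora 2 generation


def credits_per_second(seconds: int, credits: int) -> float:
    """Average credit cost for each second of generated video."""
    return credits / seconds


def relative_cost(credits: int, baseline: int = BASELINE_CREDITS) -> float:
    """How many baseline generations one clip costs."""
    return credits / baseline


for option, seconds, credits in CREDIT_TABLE:
    rate = credits_per_second(seconds, credits)
    multiple = relative_cost(credits)
    print(f"{option}, {seconds}s: {rate:.2f} credits/s, {multiple:.0f}x baseline")
```

On these figures, the 15-second high-resolution Pro clip works out to about 33 credits per second, or 50 times the cost of the baseline generation.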

Shutdown timeline

The Sora 2 launch looked like a mainstreaming moment in September 2025. By March 24, 2026, OpenAI had announced plans to discontinue Sora, according to contemporaneous reporting and OpenAI’s own help-center shutdown guidance.[6][13] As of April 24, 2026, OpenAI’s help page says the Sora web and app experiences will be discontinued on April 26, 2026, and the Sora API will be discontinued on September 24, 2026.[6][14]

That does not erase the importance of the launch. It changes the lesson. Sora 2 proved that AI video could attract mainstream attention, but it also showed how hard it is to sustain a consumer video platform when compute costs, rights management, safety reviews, and product-market fit all collide. The technology may continue in future OpenAI systems even if the Sora-branded app ends.

| Date | Event | Source-grounded significance |
|---|---|---|
| February 2024 | OpenAI introduced the original Sora model.[1][12] | It established OpenAI as a leading text-to-video player. |
| September 30, 2025 | OpenAI launched Sora 2 and the Sora app.[1][7] | Video generation moved into an invite-based social product. |
| October 2025 | Rights and likeness concerns escalated around generated characters and public figures.[10][12] | The launch forced fast policy responses from OpenAI. |
| March 24, 2026 | OpenAI announced plans to discontinue Sora, according to coverage of the company’s announcement.[13][9] | The mainstream product experiment began winding down. |
| April 26, 2026 | OpenAI says Sora web and app experiences will be discontinued.[6][14] | Consumer Sora access ends after this date. |
| September 24, 2026 | OpenAI says the Sora API will be discontinued.[6][14] | Developer access has a later sunset than the consumer app. |
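For readers planning exports or API migrations, the dates in the timeline above translate into concrete day counts. The sketch below uses Python’s standard `datetime` module; the constant names and the `days_between` helper are chosen here for illustration, and the dates are the ones cited in this article.

```python
from datetime import date

LAUNCH = date(2025, 9, 30)             # Sora 2 and the Sora app launch
PUBLICATION = date(2026, 4, 24)        # this article's publication date
CONSUMER_SHUTDOWN = date(2026, 4, 26)  # web and app experiences end
API_SHUTDOWN = date(2026, 9, 24)       # API access ends


def days_between(start: date, end: date) -> int:
    """Whole days from start to end (negative if end is earlier)."""
    return (end - start).days


print(days_between(LAUNCH, CONSUMER_SHUTDOWN))        # 208
print(days_between(PUBLICATION, CONSUMER_SHUTDOWN))   # 2
print(days_between(PUBLICATION, API_SHUTDOWN))        # 153
print(days_between(CONSUMER_SHUTDOWN, API_SHUTDOWN))  # 151
```

In other words, the consumer product is set to last 208 days from launch, and developers get roughly five more months than app users.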

Frequently asked questions

When did the Sora 2 launch happen?

OpenAI launched Sora 2 on September 30, 2025.[1][7] The launch included the Sora 2 video-and-audio generation model and a standalone Sora iOS app.[1][8] The initial rollout was invite-based and began in the United States and Canada.[1][7]

What made Sora 2 different from the first Sora?

Sora 2 added synchronized dialogue, sound effects, stronger realism, better controllability, and more accurate physical behavior compared with prior systems, according to OpenAI.[1][2] The first Sora model was introduced in February 2024, while Sora 2 launched on September 30, 2025.[1][7] The other major difference was distribution: Sora 2 arrived with a social app, not just a model showcase.[4][8]

What were Sora cameos?

Cameos let a user create a reusable likeness after a short one-time video-and-audio recording in the Sora app.[1][4] OpenAI said the recording verified identity and captured the user’s image and voice likeness.[1][3] Users could decide who could use their cameo and revoke access later.[3][4]

Was Sora 2 free?

OpenAI said Sora 2 would initially be available for free, with generous limits that were still subject to compute constraints.[1][7] ChatGPT Pro users were also slated to get access to the higher-quality Sora 2 Pro model on sora.com.[1][4] Later OpenAI help documentation listed credit costs for some Sora 2 and Sora 2 Pro generations, but exact plan limits have not been independently corroborated.[5]

Did Sora 2 include watermarks?

Yes. OpenAI said every Sora video included visible and invisible provenance signals, and that all outputs carried a visible watermark at launch.[3][2] The company also said Sora videos embedded C2PA metadata.[3][2] These measures were designed to help distinguish AI-generated videos from camera-captured footage.

Why did Sora 2 become controversial?

The controversy centered on realistic synthetic video, likeness misuse, and copyrighted characters. OpenAI’s own system card identified nonconsensual likeness use and misleading generations as risks.[2][3] Within days of the September 30, 2025 launch, reporting said OpenAI was moving toward more granular rightsholder controls after Sora-generated videos raised copyright concerns.[10][11]

Is Sora 2 still available on April 24, 2026?

As of April 24, 2026, OpenAI’s help documentation says Sora web and app experiences will be discontinued on April 26, 2026.[6][14] The same guidance says the Sora API will be discontinued on September 24, 2026.[6][14] Users who need their existing Sora content should export it before the consumer shutdown window closes.[6]

Bottom line

The Sora 2 launch was the moment AI video became a mainstream consumer product, not just a technical demo. It combined a stronger video-and-audio model, a social app, cameos, watermarking, and rights controls into one package.[1][3]

The shutdown timeline makes the story more useful, not less. Sora 2 showed what consumer AI video can become, and it showed why any future version will need clearer economics, stronger rights management, and a better answer for trust from the first day.