Sora is OpenAI’s video generation model family for creating short AI videos from prompts and uploaded media. OpenAI first previewed Sora on February 15, 2024,[3][4] then released Sora Turbo as a standalone product for ChatGPT Plus and Pro users on December 9, 2024.[1][2] As of March 13, 2026, the current Sora generation is Sora 2, which OpenAI announced on September 30, 2025 with synchronized audio, stronger physics, and a dedicated Sora app.[8][10] Sora is best understood as a creative video system, not a general GPT model: it turns language and visual inputs into video, while text reasoning, coding, and writing still belong to OpenAI’s GPT and o-series models.

What is Sora?

Sora is OpenAI’s video generation model for producing video from text, image, and video inputs.[5][7] It is separate from the GPT text models covered in the guide to all GPT models compared side by side, and it should be evaluated by motion quality, controllability, safety controls, and editing workflow rather than by text benchmarks. The original Sora preview proved the research direction; Sora Turbo made it usable as a product; Sora 2 added audio and a more app-centered creation loop.[3][1][8]

The short answer: use Sora when the output you need is a visual scene in motion. Use a GPT model when the output you need is language, code, analysis, or structured text. Use an image model such as DALL-E 3 when one still image is enough.

Sora timeline and versions

OpenAI introduced Sora in stages. The February 2024 preview showed a research model capable of generating video up to a minute long, but it was not broadly available to the public at that point.[3][4] The December 2024 product release used Sora Turbo, which OpenAI described as faster than the February preview and available through Sora.com for ChatGPT Plus and Pro users.[1][6] Sora 2 followed on September 30, 2025 with video-and-audio generation, a standalone app, and stronger world-simulation claims.[8][10]

| Milestone | Date | What changed | Reader takeaway |
|---|---|---|---|
| Research preview | February 15, 2024[3][4] | OpenAI showed Sora as a text-to-video research model and described its world-simulation approach. | Impressive capability, but not a normal consumer product. |
| Sora Turbo product launch | December 9, 2024[1][6] | OpenAI released Sora at Sora.com for ChatGPT Plus and Pro users. | Users could generate and edit short AI videos in a dedicated interface. |
| Sora 2 launch | September 30, 2025[8][10] | OpenAI added synchronized audio, better physics, stronger controllability, and a new Sora app. | Sora moved from silent clip generation toward social, character, and sound-based video creation. |

This matters because many Sora discussions mix the research preview, Sora Turbo, and Sora 2. A clip limit, app feature, or safety rule may apply to one version but not another. If you are comparing the latest system against Runway or Google Veo, start with the dedicated comparison guides for Sora vs Runway and Sora vs Google Veo.

How Sora works

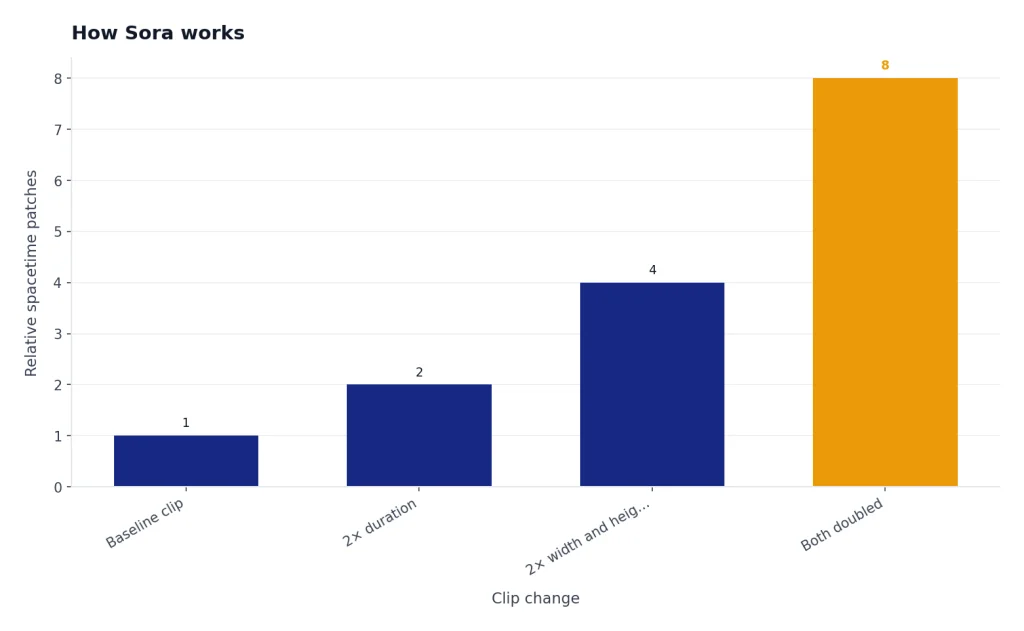

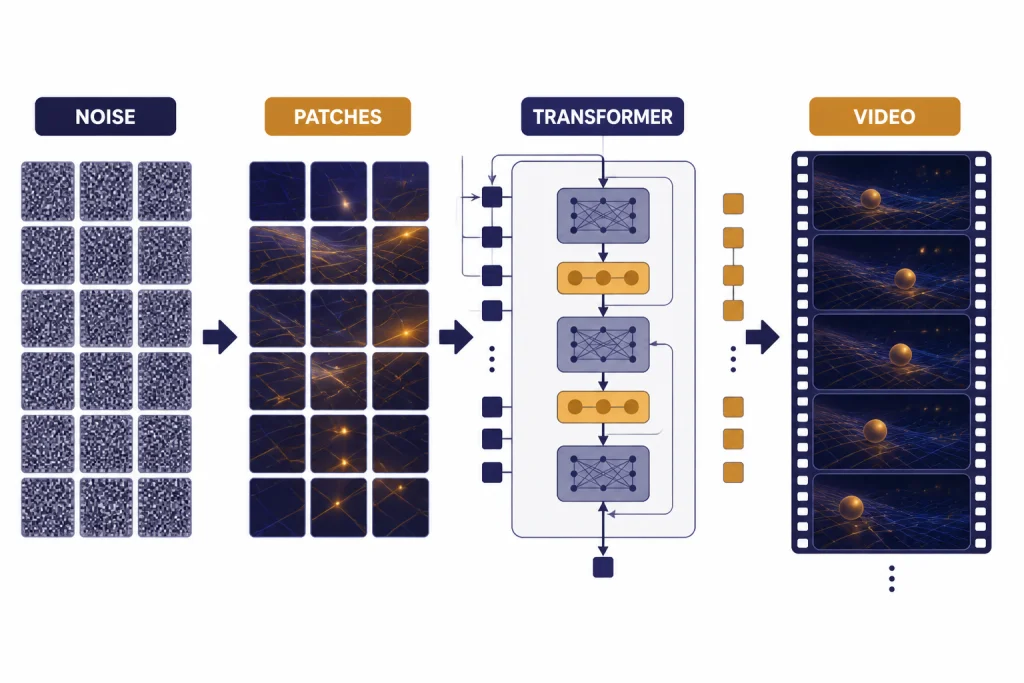

OpenAI describes Sora as a diffusion model that starts from noisy video-like data and gradually transforms it into a clean video that matches the prompt.[5][3] The important architectural idea is that Sora represents visual data as spacetime patches. Those patches act like tokens for a transformer, except they represent compressed pieces of video across space and time rather than fragments of text.[3][5]

That design explains why Sora can work across different visual formats. OpenAI says the model can generate video in widescreen, vertical, or square aspect ratios, and the original product page listed generation up to 1080p resolution and up to 20 seconds long.[1][6] The same general idea also explains why Sora can animate an image, extend a video, remix a scene, or blend supplied assets rather than only creating clips from scratch.[1][7]
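To make the patch idea concrete, here is a minimal sketch that counts how many spacetime patches a clip would yield. The patch sizes, the example latent dimensions, and the names `PatchGrid` and `patch_grid` are illustrative assumptions: OpenAI has not published Sora’s actual values, and this is not its implementation. The point is only that one routine handles widescreen, vertical, and square inputs and hands a transformer a token sequence whose length depends on the clip’s shape.

```python
from dataclasses import dataclass
from math import ceil


@dataclass(frozen=True)
class PatchGrid:
    """Number of patches along the time, height, and width axes."""
    t: int
    h: int
    w: int

    @property
    def tokens(self) -> int:
        # Each spacetime patch becomes one token in the transformer's sequence.
        return self.t * self.h * self.w


def patch_grid(frames: int, height: int, width: int,
               patch_t: int = 4, patch_h: int = 16, patch_w: int = 16) -> PatchGrid:
    """Count spacetime patches for a (frames, height, width) latent volume.

    Patch sizes are assumed for illustration, not published Sora values.
    Ceiling division models padding each axis up to a whole patch.
    """
    return PatchGrid(
        t=ceil(frames / patch_t),
        h=ceil(height / patch_h),
        w=ceil(width / patch_w),
    )


# The same function covers all three aspect ratios; only the grid shape changes.
wide = patch_grid(frames=48, height=72, width=128)
tall = patch_grid(frames=48, height=128, width=72)
square = patch_grid(frames=48, height=96, width=96)

for name, grid in (("wide", wide), ("tall", tall), ("square", square)):
    print(name, grid, grid.tokens)
```

Under these assumed sizes, doubling the clip length doubles the token count, which is one intuition for why longer or higher-resolution generations cost more compute.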

The term “world simulator” should be read carefully. OpenAI’s research post argues that scaling video generation produces useful simulation behaviors, but the company also states that Sora can fail at physics and complex actions over longer durations.[3][1] In practice, Sora can preserve visual continuity better than older video tools in many scenes, but it still does not understand the world the way a physical simulator or robotics engine does.

What you can create with Sora

Sora is strongest when the requested video can be described visually and kept within a short, focused scene. Product teams can make concept clips. Educators can make visual explainers. Designers can test motion direction. Creators can generate backgrounds, transitions, stylized shots, and mood boards. If your task is still-image-first, see the guide to the best GPT model for image generation before spending video generation time.

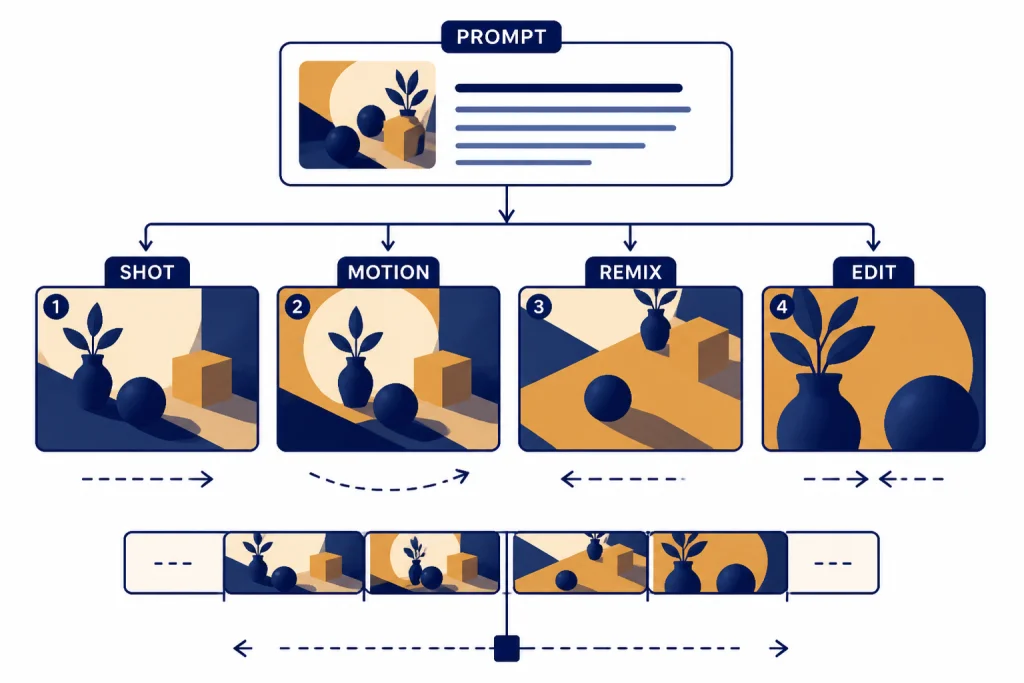

The Sora editor supports prompt-based generation from text, and OpenAI’s help material says users can upload an image or video file as an initial prompt where the product allows it.[7][1] OpenAI also describes editing features such as extending, remixing, blending, and storyboarding.[1][7] Sora 2 expands the creative surface by adding synchronized dialogue, sound effects, background soundscapes, and a character-like cameo workflow built around user consent.[8][10]

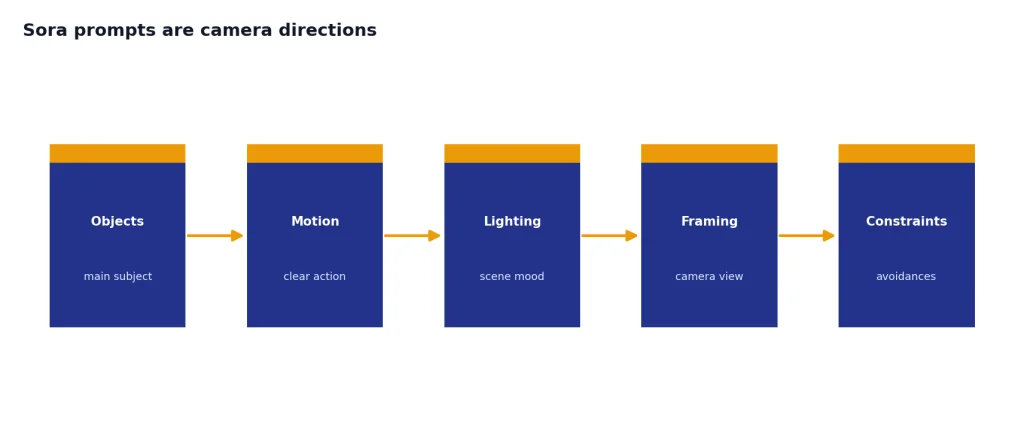

Good Sora prompts are camera directions, not essays

A weak prompt says: “make a futuristic product video.” A better prompt says: “slow macro shot of a translucent handheld device on a white table, soft studio light, camera pushes in, shallow depth of field, no people.” The second prompt gives Sora concrete objects, motion, lighting, and framing. That matters because video models must solve scene layout, subject consistency, camera movement, and temporal continuity at the same time.

For best results, keep one primary subject, one clear action, and one camera style. Ask for shorter scenes when the action is physically complex. Use a language model (see the guide to the best GPT model for writing) to draft prompt variants, then test them in Sora as separate takes rather than cramming every idea into one prompt.
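The “one subject, one action, one camera style” rule can be sketched as a small prompt builder. Everything here is a hypothetical helper for organizing your own prompts: `ShotPrompt` and `takes` are not part of any Sora or OpenAI API, and the field names are assumptions. The sketch only produces text that you would paste into Sora yourself.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ShotPrompt:
    """One shot: one subject, one motion, one look (hypothetical helper)."""
    framing: str                      # e.g. "slow macro shot"
    subject: str                      # the single primary subject
    motion: str                       # one camera move or subject action
    lighting: str = ""
    extras: tuple[str, ...] = ()      # lens or style notes
    exclude: tuple[str, ...] = ()     # things to keep out of frame

    def render(self) -> str:
        parts = [f"{self.framing} of {self.subject}", self.lighting, self.motion]
        parts += list(self.extras)
        parts += [f"no {item}" for item in self.exclude]
        # Skip empty fields so optional parts do not leave stray commas.
        return ", ".join(p for p in parts if p)


def takes(base: ShotPrompt, motions: list[str]) -> list[str]:
    """Return one prompt per motion so each idea is tested as its own take."""
    return [replace(base, motion=m).render() for m in motions]


base = ShotPrompt(
    framing="slow macro shot",
    subject="a translucent handheld device on a white table",
    motion="camera pushes in",
    lighting="soft studio light",
    extras=("shallow depth of field",),
    exclude=("people",),
)

for prompt in takes(base, ["camera pushes in", "camera orbits slowly", "static locked-off shot"]):
    print(prompt)
```

Varying a single field per take makes it easier to tell which change improved or hurt a generation.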

Sora access, pricing, and limits

Sora access has changed across versions, plans, and regions, so the safe rule is to check the current Sora help page before buying a plan only for video. OpenAI’s December 9, 2024 launch post said Sora was included with ChatGPT Plus at no additional cost and that the Pro plan offered more usage, higher resolutions, and longer durations.[1][6] OpenAI’s ChatGPT pricing announcement lists ChatGPT Plus at $20 per month and ChatGPT Pro at $200 per month, and TechRadar separately described the same $20-to-$200 spread in February 2026.[11][12]

Published Sora limits also vary by product generation. For the Sora Turbo launch, OpenAI said users could generate videos up to 1080p resolution and up to 20 seconds long.[1][6] The launch post also said Plus users could generate up to 50 videos at 480p resolution, or fewer videos at 720p, each month; that allowance rests on the launch post alone and is not corroborated by a second source here.[1] Treat any Sora quota as plan-specific and subject to change.
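As a rough worked example of what those figures imply, the sketch below divides the $20 Plus price by the number of clips actually made. It deliberately assumes the whole subscription is attributed to Sora, which overstates the per-clip cost because Plus also covers other ChatGPT features, and it uses the launch-era 480p cap of 50 videos, which may no longer apply. The function name and constants are illustrative.

```python
def cost_per_video(monthly_price_usd: float, videos_made: int) -> float:
    """Effective price per clip if the whole plan fee is attributed to video."""
    if videos_made <= 0:
        raise ValueError("videos_made must be positive")
    return monthly_price_usd / videos_made


PLUS_PRICE_USD = 20.0   # Plus price cited in this article
PLUS_480P_CAP = 50      # launch-era 480p allowance; an assumption today

for made in (PLUS_480P_CAP, 25, 10):
    print(f"{made:>2} videos -> ${cost_per_video(PLUS_PRICE_USD, made):.2f} each")
```

At the full launch-era cap this works out to about $0.40 per 480p clip, rising to $2.00 per clip if only 10 are made, so light users pay far more per video than the headline price suggests.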

| Plan or route | Published price | Sora relevance | Best fit |
|---|---|---|---|
| ChatGPT Plus | $20 per month[11][12] | OpenAI’s launch post included Sora in Plus at no extra cost.[1][9] | Casual creation and testing prompts. |
| ChatGPT Pro | $200 per month[11][12] | OpenAI described Pro as offering more Sora usage, higher resolutions, and longer durations.[1][6] | Creators who need more frequent video iteration. |
| Sora app or web | Included through eligible access routes, depending on plan[7][8] | OpenAI’s Sora 2 materials describe a standalone Sora app and Sora.com access.[8][10] | Prompting, feeds, remixing, and social-style workflows. |

If you are evaluating cost rather than creativity, compare Sora against API-priced models and text tools using the guides to OpenAI API pricing and the cheapest GPT model. Video generation is compute-heavy, and a low monthly text-model price does not mean unlimited practical video production.

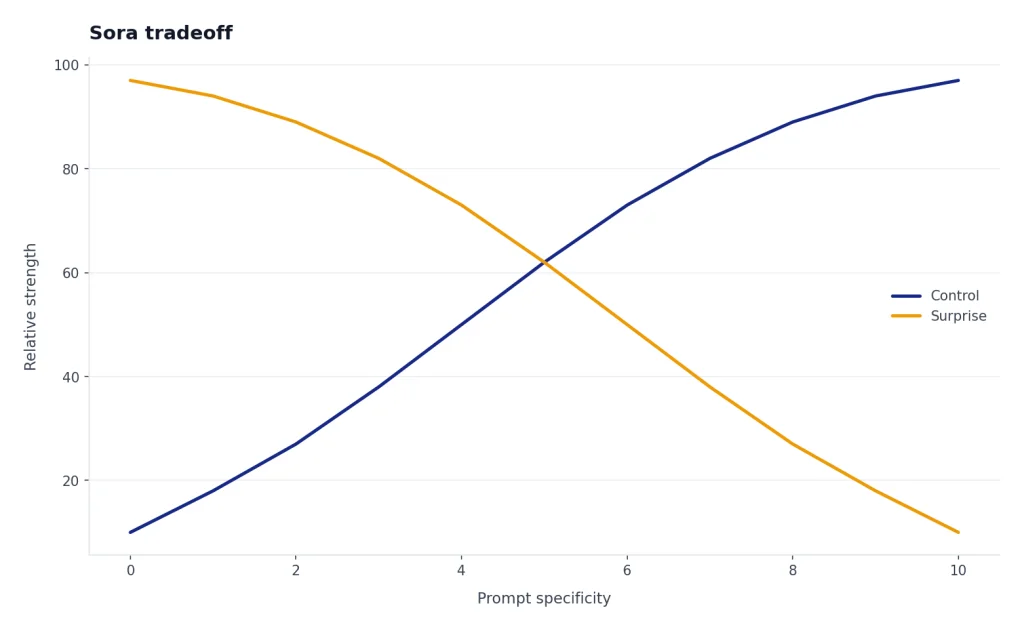

Original analysis: the Sora tradeoff

Sora’s core tradeoff is not quality versus price. It is control versus surprise. The model can produce striking scenes from a compact prompt, but the same generative freedom makes exact continuity, exact brand compliance, exact physics, and exact character behavior hard to guarantee.

That creates a practical decision framework. Use Sora for ideation when a near-miss is still useful. Use it for previsualization when a director, designer, or founder needs to see a scene before commissioning production. Use it for short clips where small artifacts will not damage trust. Do not use it as the only production step for medical, legal, financial, political, or identity-sensitive content.

The same pattern appears in OpenAI’s own language. The company emphasizes storytelling and creative expression, while also warning that the deployed Sora version has limitations in physics and complex actions.[1][5] Sora 2 improves realism and controllability, but the Sora 2 system card still treats likeness, misleading generations, and photorealistic people as special risk areas.[9][14]

The best workflow is therefore layered. Generate several Sora drafts. Pick the version with the best motion. Use conventional editing tools to trim, add titles, normalize audio, and enforce brand rules. For scripts, voiceover, subtitles, and planning, pair Sora with the right language or speech model, such as Whisper for transcription and a writing model for script revisions.

Safety, provenance, and likeness controls

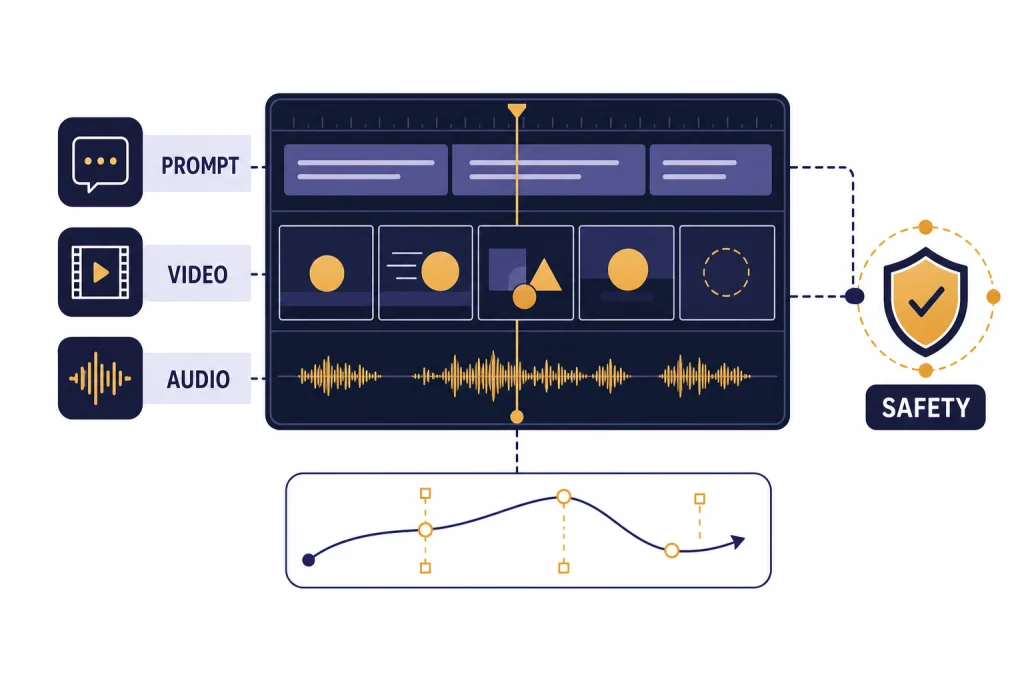

Sora creates obvious misuse risks because realistic moving images are easier to misread than text. OpenAI’s December 2024 launch post says all Sora-generated videos include C2PA metadata, and it also describes visible watermarks by default plus an internal search tool for identifying whether content came from Sora.[1][5] AP reported at launch that OpenAI limited how most users could depict people while monitoring for misuse.[2][1]

The Sora system card says the model uses text, image, and video inputs and generates new video output.[5][7] It also says OpenAI tested safety mitigations with external red teamers from September through December 2024 and ran more than 15,000 generations; that count comes from the system card alone and is not independently corroborated here.[5] The same system-card family identifies child safety, sexual deepfakes, violence, misinformation, and likeness misuse as risk areas.[5][14]

Sora 2 raised the stakes because it added synchronized audio and a cameo-style identity workflow.[8][10] OpenAI’s Sora 2 system card says initial deployment included limited invitations, restrictions on image uploads featuring photorealistic people, restrictions on video uploads, and stricter thresholds involving minors.[9][14] Those controls are not just policy details. They define which professional uses are safe enough to attempt.

Sora vs. other OpenAI models

Sora belongs in OpenAI’s multimodal stack, but it does not replace GPT, DALL-E, Whisper, or reasoning models. It generates video. GPT models generate and transform language. Image models generate still visuals. Whisper transcribes audio. Reasoning models such as OpenAI o3 or OpenAI o4-mini are better fits for hard planning, math, coding, or step-by-step analysis.

| Model family | Main output | Use it for | Do not use it for |
|---|---|---|---|
| Sora / Sora 2[1][8] | Short AI video, with Sora 2 adding audio[8][10] | Motion concepts, scene generation, visual storytelling. | Precise factual explanations, long documents, code. |
| GPT models | Text, structured output, multimodal analysis depending on model | Writing, summarizing, planning, coding, prompt drafting. | Final video rendering. |
| DALL-E 3 | Still images | Illustrations, thumbnails, visual concepts. | Motion, timing, dialogue, or sequence control. |
| Whisper | Speech-to-text | Transcripts, captions, searchable audio archives. | Generating new video. |

The right comparison depends on the job. If you need a model to understand an image, read the GPT-4 Vision guide. If you need fast text responses, start with the fastest GPT model guide. If you need maximum reasoning strength, see the most powerful GPT model guide. If you need video, Sora is the relevant branch.

Who should use Sora?

Sora is useful for people who benefit from fast visual iteration. Filmmakers can test camera language. Marketers can explore campaign directions before a shoot. Product teams can mock up interface motion. Teachers can make short visual explanations. Game and experience designers can explore mood, movement, and environmental concepts.

Sora is a poor fit when the video must be legally exact, physically exact, or identity-sensitive. Do not rely on it to depict a real person without consent. Do not use it as a source of factual evidence. Do not assume a generated object obeys engineering constraints. OpenAI’s own launch post says the deployed Sora version can produce unrealistic physics and struggle with complex actions over long durations.[1][5]

The strongest professional pattern is to treat Sora as a preproduction and iteration tool. It compresses the distance between idea and draft. It does not remove the need for human review, rights clearance, editing, accessibility checks, or brand approval.

Frequently asked questions

When did OpenAI release Sora?

OpenAI first previewed Sora on February 15, 2024 as a research model.[3][4] It released Sora Turbo as a product on December 9, 2024 for ChatGPT Plus and Pro users.[1][6] Sora 2 followed on September 30, 2025 with synchronized audio and a dedicated Sora app.[8][10]

What is the difference between Sora and Sora 2?

Sora is the original OpenAI video model family, while Sora 2 is the later generation announced on September 30, 2025.[8][10] OpenAI says Sora 2 improves physics, realism, steerability, and stylistic range, and adds synchronized dialogue and sound effects.[8][9] If you want the latest version, see the dedicated guide to Sora 2.

How long can Sora videos be?

For the Sora Turbo product launch, OpenAI said users could generate videos up to 20 seconds long and up to 1080p resolution.[1][6] The February 2024 research preview showed capability up to a minute, but that was not the same as the public product limit.[3][4] Always check the current Sora help page because limits can differ by plan, version, and region.[7][1]

Does Sora cost extra?

OpenAI’s December 9, 2024 launch post said Sora was included with ChatGPT Plus at no additional cost and that Pro offered more usage.[1][6] OpenAI listed ChatGPT Plus at $20 per month and ChatGPT Pro at $200 per month, with TechRadar independently describing the same $20 and $200 plan gap in February 2026.[11][12] Do not buy a plan only for Sora without checking the current quota shown on your account.

Can Sora generate videos of real people?

Sora has likeness controls because realistic video can be used for impersonation. AP reported at the December 2024 launch that OpenAI limited how most users could depict people, while OpenAI said it was focused on misappropriation of likeness and deepfake risks.[2][1] Sora 2 added a cameo-style workflow based on a one-time video-and-audio recording to verify identity and capture likeness.[8][10]

Is Sora a GPT model?

No. Sora uses transformer ideas, but OpenAI describes it as a video generation model and diffusion model, not as a GPT text model.[5][3] GPT models are better for writing, reasoning, coding, and structured answers. For text-model tradeoffs, see the guides to context window sizes for every GPT model and the best GPT model for coding.

What are Sora’s biggest limitations?

OpenAI says the deployed Sora version can generate unrealistic physics and struggle with complex actions over long durations.[1][5] That means you should expect some failed takes, especially with hands, collisions, tools, sports, choreography, and multi-character action. Sora is strongest for short, visually clear scenes where a human editor can choose the best generation.

Bottom line

Sora is OpenAI’s video branch: powerful for short visual scenes, especially when paired with a disciplined prompt and human editing. Sora 2 makes the model family more useful by adding synchronized audio and stronger control, but it also increases the need for consent, provenance, and careful review.[8][9]

Watch the Sora line for three things next: lower-cost iteration, better continuity across longer clips, and clearer rights controls for people, brands, and copyrighted styles. Those will determine whether Sora remains mainly a creative drafting tool or becomes a dependable production system.