Originality.ai is worth the subscription if you run a publishing workflow, manage freelance writers, or need one place to check AI likelihood, plagiarism, readability, grammar, and basic fact claims before content goes live. It is less compelling for casual writers who only need an occasional AI scan, and it should not be used as final proof that a student, employee, or freelancer used AI. The strongest case for Originality.ai is operational: it gives editors a repeatable review step with reports, website scans, Google Docs support, and team features. The weak point is the same one shared by every AI detector: false positives and false negatives still happen.

Verdict: who should subscribe

The short answer from this Originality.ai review: subscribe if you need a content quality checkpoint, not a courtroom verdict. Originality.ai fits publishers, SEO agencies, editorial teams, marketplace operators, and site owners who buy or accept large volumes of text from other people. It helps these teams standardize review before publication.

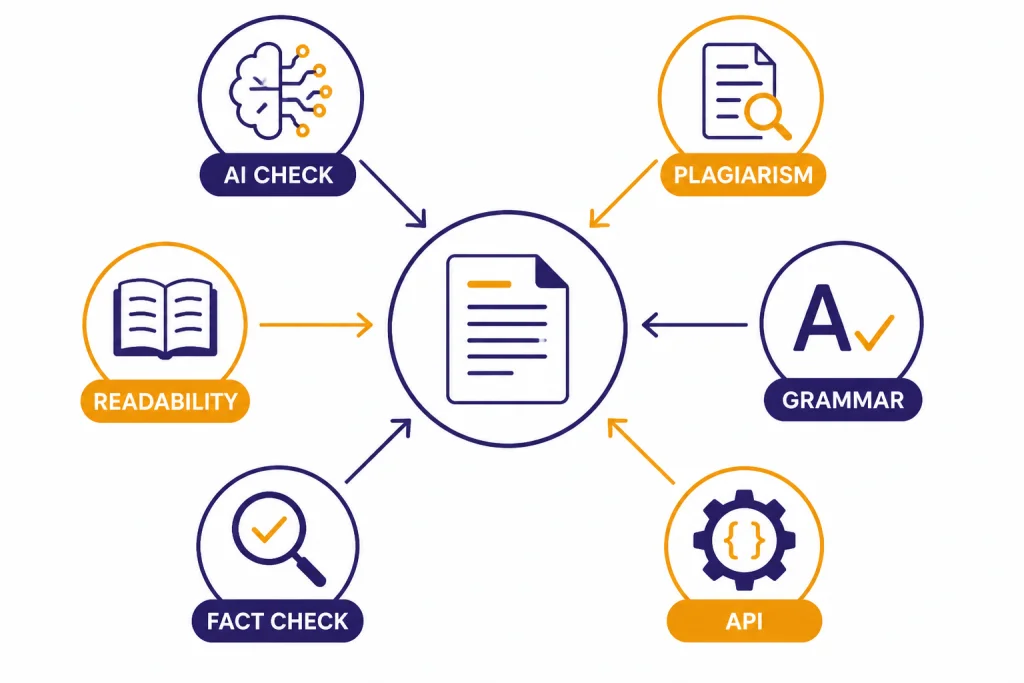

It is also useful if you already use separate tools for plagiarism, AI detection, readability, and grammar. Originality.ai combines those checks in one workspace. That matters when your real cost is editor time, not just subscription price. If you are comparing it with broader writing suites, see our comparison of the best AI writing tools in 2026 for a wider view.

Do not subscribe if your only goal is to prove that a person used AI. AI detection is probabilistic. A score can support an editorial review, but it should not replace authorship evidence, version history, source checks, or a conversation with the writer. This is especially important in schools. If your main use case is classroom policy, start with our guide to AI detectors for teachers before paying for any detector.

What Originality.ai does

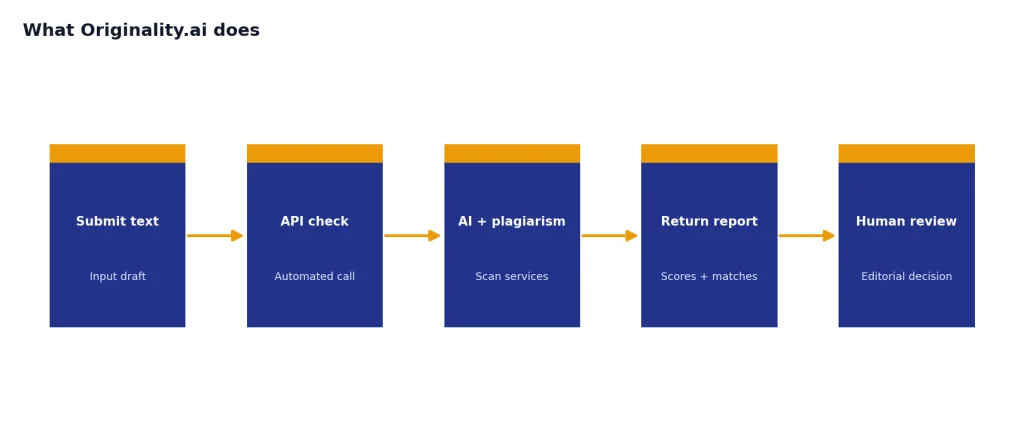

Originality.ai is a browser-based content review platform. Its pricing page lists AI Checker, Plagiarism Checker, Readability Checker, Grammar and Spelling Checker, Fact Checking Aid, SEO Content Optimizer, Chrome extension, WordPress plugin, shareable reports, and scan history among the product features available across its plans.[1]

The AI checker estimates whether text is likely human-written, AI-written, or AI-edited. Originality.ai’s own accuracy page names its Lite Version 1.0.2, Turbo 3.0.2, and Academic 0.0.5 detection models, and says those models were launched as part of a next-generation release in September 2025.[6] That matters because the tool is not a single static detector. It is a set of models tuned for different risk tolerances.

The plagiarism checker compares submitted text against public web content. Originality.ai’s help article says the plagiarism scan detects exact or closely matching text, highlights matched sections, links to original sources, displays a similarity percentage, and is limited to 10,000 words across the platform, Moodle integration, Chrome extension, and public API.[3] If plagiarism is your main concern, compare it with the tools in our best plagiarism checkers guide, because traditional plagiarism tools and AI detectors solve different problems.
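Because the plagiarism scan caps out at 10,000 words, a longer manuscript has to be split before it is scanned. The Python sketch below shows one simple way to do that. It assumes whitespace splitting is a close enough word count (Originality.ai may count words slightly differently), and the function name is our own, not part of any Originality.ai tool.

```python
def split_for_plagiarism_scan(text: str, max_words: int = 10_000) -> list[str]:
    """Split text into consecutive chunks of at most max_words words.

    Words are counted by whitespace splitting, which is an assumption and
    may differ slightly from how Originality.ai counts words. Chunks are
    rejoined with single spaces, so original line breaks are not kept.
    """
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    words = text.split()
    return [
        " ".join(words[i : i + max_words])
        for i in range(0, len(words), max_words)
    ]


if __name__ == "__main__":
    manuscript = "word " * 23_500
    chunks = split_for_plagiarism_scan(manuscript)
    print([len(c.split()) for c in chunks])  # [10000, 10000, 3500]
```

Fixed-size splitting can cut a copied passage in half at a chunk boundary, so in practice you may prefer to break at the nearest paragraph break below the limit.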

The Chrome extension is a practical differentiator. Originality.ai says the extension works in Google Docs and Chrome, can scan Google Docs, run page scans, create read-only shareable reports, replay how a Google Doc was written character by character, and show contributor activity.[4] That is more useful than a pasted-text detector when you need process evidence.

The API is for teams that want automated checks. Originality.ai’s API help article says developers and product teams can integrate AI detection and plagiarism checking into existing workflows, applications, or publishing systems.[5] If you are building your own moderation or publishing pipeline, pair that research with our guides to OpenAI API pricing and API cost calculator tools so your total review cost stays visible.

Originality.ai pricing and credits

Originality.ai uses a credit system. Its pricing page says all prices are in USD, the Pay as you go plan costs $30 as a one-time payment, includes 3,000 credits, and unused credits expire after 2 years.[1] Scribbr’s pricing FAQ also reports the $30 pay-as-you-go option with 3,000 credits.[2]

The Pro plan costs $14.95 per month when billed monthly, or $12.95 per month when billed yearly, and includes 2,000 credits per month.[1] Scribbr corroborates the $14.95 base subscription and 2,000 monthly credits.[2] The Enterprise plan costs $179 per month when billed monthly, or $136.58 per month when billed yearly, and includes 15,000 credits per month.[1]

The credit math is the key buying decision. Originality.ai says 1 credit equals 100 words for AI checks, and its FAQ says scanning 100 words for both AI and plagiarism uses 2 credits.[1] Scribbr reports that a credit can be used to check 100 words for plagiarism and AI detection, or 10 words for fact checking.[2]
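To turn those rates into a budget, it helps to estimate credits per document. This Python sketch uses the rates above: 100 words per credit for the AI check, 100 words per credit for plagiarism (inferred from the 2-credit figure for a combined 100-word scan), and 10 words per credit for fact checking (from Scribbr’s FAQ). Rounding a partial block up to a whole credit is our assumption, not documented billing behavior.

```python
import math

# Words covered by one credit, per check type, as described above.
# The plagiarism rate is inferred and the fact-checking rate comes from
# Scribbr; treat both as assumptions to confirm against your own invoices.
WORDS_PER_CREDIT = {
    "ai": 100,
    "plagiarism": 100,
    "fact_check": 10,
}


def estimate_credits(word_count: int, checks: list[str]) -> int:
    """Estimate credits for one document across the selected checks.

    Partial blocks are rounded up to a whole credit (an assumption).
    """
    total = 0
    for check in checks:
        total += math.ceil(word_count / WORDS_PER_CREDIT[check])
    return total


if __name__ == "__main__":
    # A 1,500-word article scanned for AI and plagiarism.
    print(estimate_credits(1_500, ["ai", "plagiarism"]))  # 30
```

At 30 credits per 1,500-word article, Pro’s 2,000 monthly credits cover 66 such articles before the allowance runs out.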

| Plan | Best fit | Price | Credits | Important limitation |
|---|---|---|---|---|
| Pay as you go | Occasional publisher checks | $30 one time | 3,000 credits | Credits expire after 2 years |
| Pro | Individuals and small teams | $14.95 monthly, or $12.95 monthly when billed yearly | 2,000 monthly credits | Subscription credits expire monthly |
| Enterprise | Agencies and publishers | $179 monthly, or $136.58 monthly when billed yearly | 15,000 monthly credits | Higher commitment, but includes stronger workflow features |

For most buyers, Pay as you go is the safest first purchase. It lets you test real documents without committing to a recurring plan. Pro makes sense when you scan content every week. Enterprise is only worth it if the API, priority support, longer scan history, and higher credit allowance directly support your publishing operation.
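Because subscription credits expire, the cheapest plan depends on how many credits you actually use, not just the sticker price. This Python sketch computes the effective cost per used credit from the prices in the table above, treating unused credits as wasted. The `Plan` class and `cost_per_used_credit` function are illustrative names, and the sketch does not model buying extra credits once an allowance runs out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    price_usd: float  # one-time price, or monthly price for subscriptions
    credits: int      # credits per purchase, or per month for subscriptions


# Prices and credit allowances from the pricing table above.
PLANS = [
    Plan("Pay as you go", 30.00, 3_000),
    Plan("Pro (monthly billing)", 14.95, 2_000),
    Plan("Pro (yearly billing)", 12.95, 2_000),
    Plan("Enterprise (monthly billing)", 179.00, 15_000),
    Plan("Enterprise (yearly billing)", 136.58, 15_000),
]


def cost_per_used_credit(plan: Plan, credits_used: int) -> float:
    """Effective USD per credit actually used before the credits expire.

    Unused credits count as wasted. Usage above the allowance is capped,
    since extra credits would have to be bought separately.
    """
    if credits_used <= 0:
        raise ValueError("credits_used must be positive")
    return plan.price_usd / min(credits_used, plan.credits)


if __name__ == "__main__":
    for plan in PLANS:
        rate = cost_per_used_credit(plan, plan.credits)
        print(f"{plan.name}: ${rate:.4f} per credit at full use")
```

At full use, yearly Pro works out to about $0.0065 per credit versus $0.01 for Pay as you go. A team that uses only 600 credits a month on monthly Pro pays about $0.025 per credit actually used, which supports starting with Pay as you go until your volume is clear.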

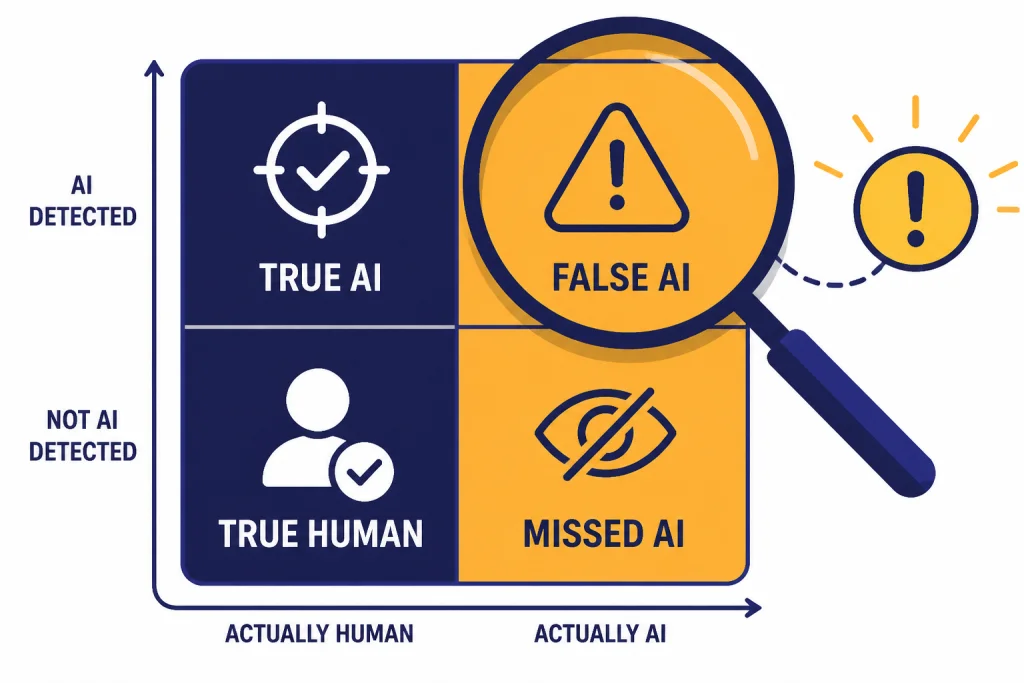

Accuracy, false positives, and what the score means

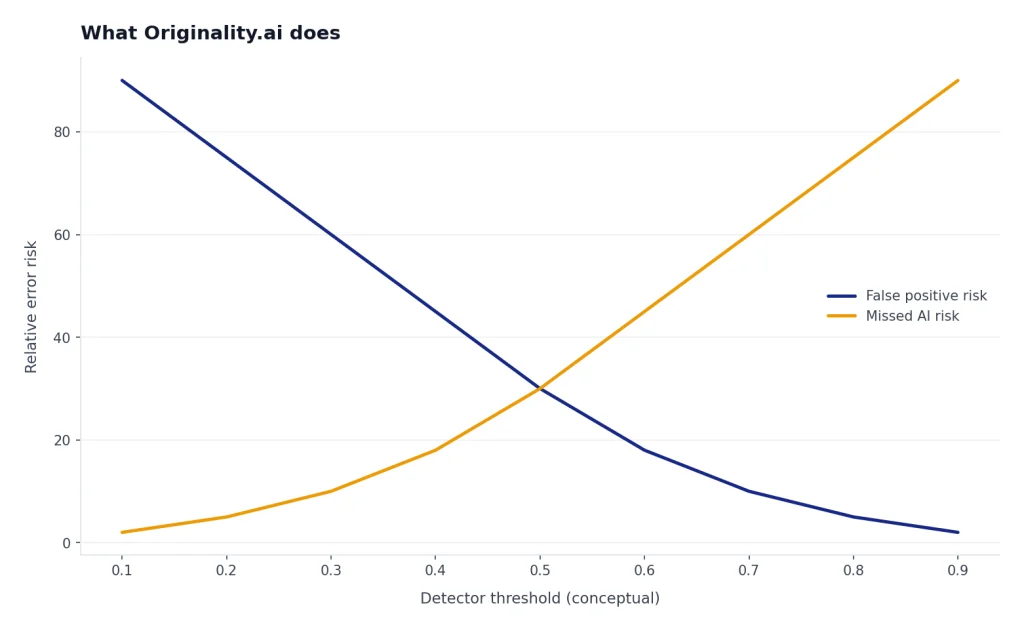

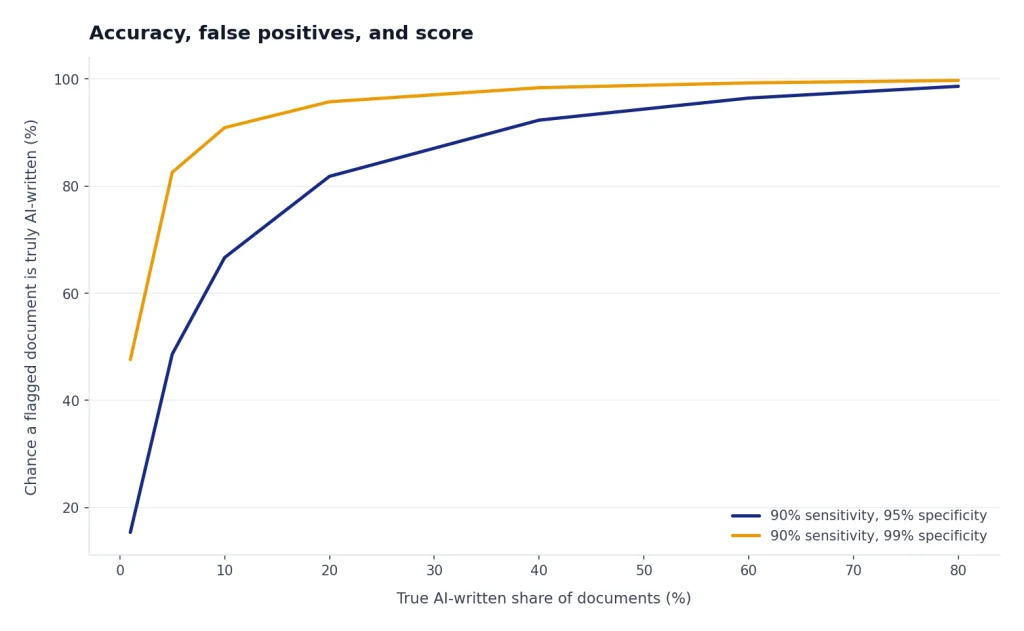

Originality.ai’s own accuracy claims are strong. The company says Lite Version 1.0.2 has 99% accuracy on leading flagship AI models and a 0.5% false positive rate, Turbo 3.0.2 has 99%+ accuracy and a 1.5% false positive rate, and Academic 0.0.5 has 99%+ accuracy with less than 1% false positives.[6] Treat those as vendor claims, not independent proof.

Independent tests are more mixed. Scribbr’s review found Originality.ai had 76% overall accuracy in its curated test set and could detect paraphraser use 60% of the time, but also reported false positive concerns.[7] In Scribbr’s broader detector comparison, Originality.ai ranked fourth out of 12 tools, with 76% accuracy and 1 false positive in that test.[8]

Academic research also supports a cautious view. A Frontiers in Education study found Originality.ai had no false negatives in its tested set, but it also flagged human writing as AI at a 17.6% false positive rate.[9] The same study found that counting a text as suspicious only when both GPTZero and Originality.ai flagged it reduced the false positive rate to 5.2%, but the authors still concluded that AI detectors cannot be relied on as the only metric for student AI use.[9]
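The “both detectors must agree” rule from that study is simple to express, and a toy example shows why it lowers false positives. The Python sketch below uses made-up flags for ten known human-written texts; they are illustrative only and are not the study’s data. Because the combined rule only flags texts that both detectors already flagged, its false positive rate can never exceed either detector’s rate on its own. The same logic means it can also miss more AI-written text.

```python
def false_positive_rate(flags_on_human_texts: list[bool]) -> float:
    """Share of known human-written texts that were flagged as AI."""
    if not flags_on_human_texts:
        raise ValueError("need at least one human-written text")
    return sum(flags_on_human_texts) / len(flags_on_human_texts)


def both_flag(first: list[bool], second: list[bool]) -> list[bool]:
    """Flag a text only when both detectors flagged it (logical AND)."""
    if len(first) != len(second):
        raise ValueError("flag lists must cover the same texts")
    return [a and b for a, b in zip(first, second)]


if __name__ == "__main__":
    # Hypothetical flags for ten human-written texts (True = flagged as AI).
    originality = [True, True, True] + [False] * 7
    gptzero = [True, False, False, True] + [False] * 6

    print(false_positive_rate(originality))  # 0.3
    print(false_positive_rate(gptzero))  # 0.2
    print(false_positive_rate(both_flag(gptzero, originality)))  # 0.1
```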

That pattern matches the broader state of the field. Tech & Learning reported on University of Chicago research comparing commercial and open-source detectors, including Originality.ai and GPTZero, and noted that GPTZero had a lower false positive rate while Originality.ai was better at distinguishing AI from human text in that study.[10] OpenAI’s own history is another warning. OpenAI discontinued its AI classifier on July 20, 2023, citing its low rate of accuracy, and OpenAI said the classifier should not be used as a primary decision-making tool.[11]

The practical takeaway is not that Originality.ai is useless. It is that the score needs context. A high AI score on a thin, formulaic, or heavily edited document should start a review. It should not end one. The tool is strongest when used across patterns: repeated scores from the same writer, suspicious similarity, missing sources, inconsistent voice, and no visible drafting history.

How to use Originality.ai responsibly

A good Originality.ai workflow starts before the scan. Tell writers what is allowed. Some teams ban undisclosed AI drafting. Others allow AI brainstorming, outlining, grammar cleanup, or summarization. The detector cannot enforce a policy you never wrote.

Use the scan as one layer. For an outsourced article, check AI likelihood, plagiarism, source quality, factual claims, and readability. If the content uses pasted statistics or medical, legal, financial, or technical claims, verify those claims manually. Originality.ai’s fact checker can help triage claims, but no automated checker should be the final authority.

Keep the document trail. If the Chrome extension’s Google Docs replay is available, use it alongside the score. Draft history can show whether a document developed over time or appeared as a large pasted block. For academic settings, that process evidence is often more informative than a percentage.

For editors, the best workflow looks like this:

- Run the AI and plagiarism scans on the final draft.

- Review highlighted passages instead of only the top-line score.

- Check matched plagiarism sources and citations.

- Ask the writer for notes, sources, or draft history when the score conflicts with the assignment.

- Track repeated problems by writer, client, or content type.
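The last step, tracking repeated problems, is easy to automate once scan results are logged. This Python sketch assumes you record each scan with a writer name, an AI likelihood percentage, and a plagiarism similarity percentage. The `ScanResult` fields and the 80% and 10% thresholds are hypothetical placeholders to tune for your own content, not Originality.ai defaults or its export format. The output is a list of writers to talk to, not evidence of misconduct.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ScanResult:
    """One scanned draft (hypothetical record format)."""
    writer: str
    ai_score: float        # AI likelihood as a 0-100 percentage (assumed scale)
    similarity_pct: float  # plagiarism similarity percentage


def writers_to_review(
    results: list[ScanResult],
    ai_threshold: float = 80.0,
    similarity_threshold: float = 10.0,
    min_flags: int = 2,
) -> list[str]:
    """Return writers whose drafts were flagged at least min_flags times.

    A draft counts as flagged if either score reaches its threshold.
    """
    flags = Counter()
    for r in results:
        if r.ai_score >= ai_threshold or r.similarity_pct >= similarity_threshold:
            flags[r.writer] += 1
    return sorted(w for w, n in flags.items() if n >= min_flags)


if __name__ == "__main__":
    history = [
        ScanResult("alice", 92.0, 3.0),
        ScanResult("alice", 85.0, 1.0),
        ScanResult("bob", 40.0, 2.0),
        ScanResult("bob", 88.0, 0.0),
        ScanResult("cara", 20.0, 15.0),
        ScanResult("cara", 10.0, 12.0),
    ]
    print(writers_to_review(history))  # ['alice', 'cara']
```

Requiring repeated flags rather than a single high score reflects the pattern-based review described above: one flagged draft from bob is noted, but only alice and cara cross the follow-up bar.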

This is also where companion tools matter. A token counter tool can estimate text volume before scanning. AI summarizer tools can help editors review long source documents, but summarized source checks still require judgment. For academic literature workflows, compare Originality.ai with AI research tools for academics, because detection is only one part of research integrity.

Originality.ai alternatives

Originality.ai competes with AI detectors, plagiarism checkers, writing assistants, and broader editorial tools. The right alternative depends on whether you care most about AI detection, plagiarism, classroom workflows, or writer productivity.

| Tool type | Better choice when | Tradeoff |
|---|---|---|
| Originality.ai | You manage publishing workflows and want AI, plagiarism, readability, reports, Google Docs support, and team features in one place | Paid credits and probabilistic AI scores |
| Scribbr premium AI Detector | You want a detector that Scribbr’s comparison ranked above Originality.ai in its test set | Less focused on publisher operations |
| QuillBot AI Detector | You need fast, lightweight AI checks and do not need a full editorial workflow | Not a replacement for plagiarism and process review |
| Traditional plagiarism checker | Your main risk is copied text, missing attribution, or source overlap | Does not prove whether a passage was AI-written |
| Writing assistant | Your goal is to improve drafts, grammar, tone, or structure | May make human writing look more uniform to detectors |

If you are building a full content stack, do not treat Originality.ai as the whole stack. Pair it with clear briefs, source documentation, a plagiarism policy, and editor review. If you are comparing writing and generation tools rather than detection tools, see our guide to ChatGPT prompt generator tools for prompt workflows and our comparison of AI resume builder tools for a narrower writing use case.

Pros and cons

What Originality.ai does well

- It fits real editorial workflows. Reports, tags, website scans, Google Docs support, and team features make it more operational than a simple paste-in detector.

- It combines multiple checks. AI detection, plagiarism, readability, grammar, and fact-checking support can reduce tool switching.

- It gives editors review artifacts. Shareable reports and scan history help teams document why a draft needs follow-up.

- It supports scaled use. The API and Enterprise plan make sense for agencies, marketplaces, and publishers with repeated scans.

Where Originality.ai falls short

- It can be wrong. Independent tests and academic work show false positives remain a real risk.

- The credit system needs planning. Monthly subscription credits expire at the end of each month, while pay-as-you-go credits expire after 2 years.[1]

- It is not ideal as a student discipline tool. A detector score alone is too weak for high-stakes accusations.

- It may be overkill for occasional checks. A writer checking one short document may not need the full platform.

Our bottom line: Originality.ai is a strong subscription when the buyer is a publisher or agency that needs repeatable review, not a person chasing certainty. Use it to reduce risk, prioritize human review, and document editorial decisions. Do not use it as a final judgment on authorship.

Frequently asked questions

Is Originality.ai worth paying for?

Yes, if you publish or review enough content that a repeatable scan workflow saves editor time. It is most useful for agencies, site owners, and publishers managing outside writers. It is less useful if you only need a one-off AI check.

How much does Originality.ai cost?

Originality.ai’s Pay as you go plan costs $30 for 3,000 credits, Pro costs $14.95 per month with 2,000 monthly credits, and Enterprise costs $179 per month with 15,000 monthly credits.[1] Annual billing lowers Pro to $12.95 per month and Enterprise to $136.58 per month.[1]

Can Originality.ai prove that text was written by AI?

No. Originality.ai estimates likelihood based on patterns in text. Independent research and reviews show that false positives and false negatives can happen, so the score should be treated as evidence for review, not proof of misconduct.[9]

Is Originality.ai good for teachers?

It can be useful as one signal, especially if paired with draft history and a clear AI policy. It should not be the only basis for grading penalties or academic discipline. The risk of false positives is too high for that use.

Does Originality.ai check plagiarism too?

Yes. Originality.ai’s plagiarism checker detects exact or closely matching text, highlights matched sections, provides source links, and displays a similarity percentage.[3] That makes it more useful for publishers than an AI-only detector.

What is the best alternative to Originality.ai?

For AI detection alone, Scribbr’s comparison ranked its premium detector above Originality.ai in the tested set.[8] For plagiarism-first work, a dedicated plagiarism checker may be a better fit. For writing improvement, use a writing assistant instead of a detector.