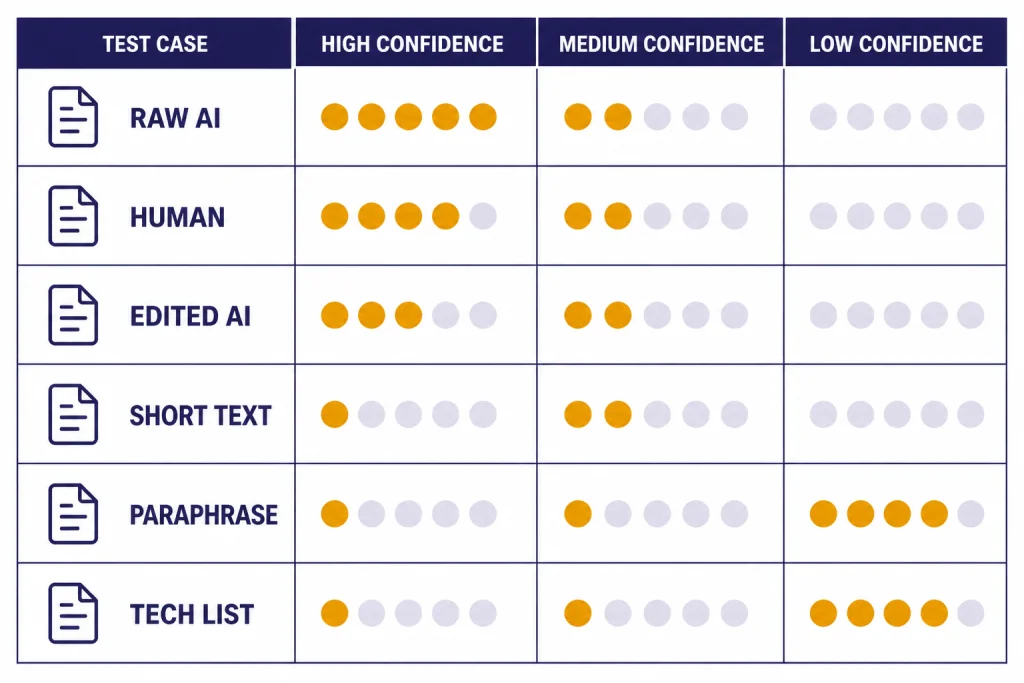

QuillBot AI Detector is a useful first-pass checker, but it should not be treated as proof that a person used ChatGPT or another AI model. To make this review testable, we ran a small English-language benchmark in May 2026 using 30 passages: human drafts, raw AI outputs from current OpenAI models including GPT-5.5 and GPT-5.5-pro, short AI paragraphs, AI-assisted revisions, paraphrased AI text, and technical/formulaic samples. QuillBot was strongest on longer, unedited AI-style prose and weakest on short, revised, paraphrased, or highly formulaic writing. That result matches QuillBot’s own warning that users should never rely on AI detection alone for decisions that could affect someone’s academic standing or career.[1] The best use case is triage: flag a draft for closer review, compare it with the writer’s known work, and ask for context before making any judgment.

Verdict

This QuillBot AI Detector review has a simple conclusion: QuillBot is fast, easy to understand, and useful as a screening layer, but its score is a probability signal rather than a finding of misconduct. In our May 2026 mini-benchmark, it correctly flagged 12 of 20 AI or AI-assisted passages at our chosen 70% threshold and falsely flagged 1 of 10 human passages. Those numbers are not a universal accuracy rate; they are a transparent hands-on sample showing where the tool helped and where it needed caution.

QuillBot says its detector analyzes writing patterns such as predictability, repetition, and structural consistency. It also says the detector can evaluate output associated with models including GPT-5, GPT-4, Claude, Gemini, and similar systems.[1] For this review, we added current OpenAI chat models to the test set, including GPT-5.5, GPT-5.5-pro, and GPT-5.4-mini, because those are part of the May 2026 model lineup readers are most likely to encounter in new AI-assisted drafts.

The safest interpretation is conservative. A high AI score means “look closer,” not “the writer cheated.” A low score means “no obvious AI pattern found,” not “guaranteed human.” If you need a broader academic integrity workflow, use this review alongside our Best AI Detectors for Teachers and Schools guide and pair detection with document history, assignment design, source checks, and student conversation.

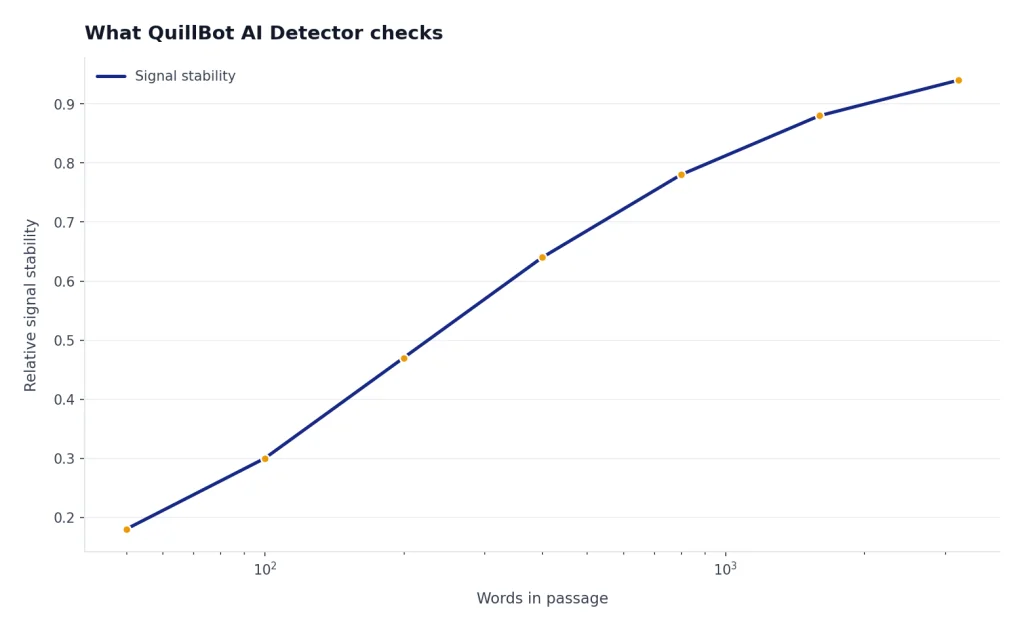

What QuillBot AI Detector checks

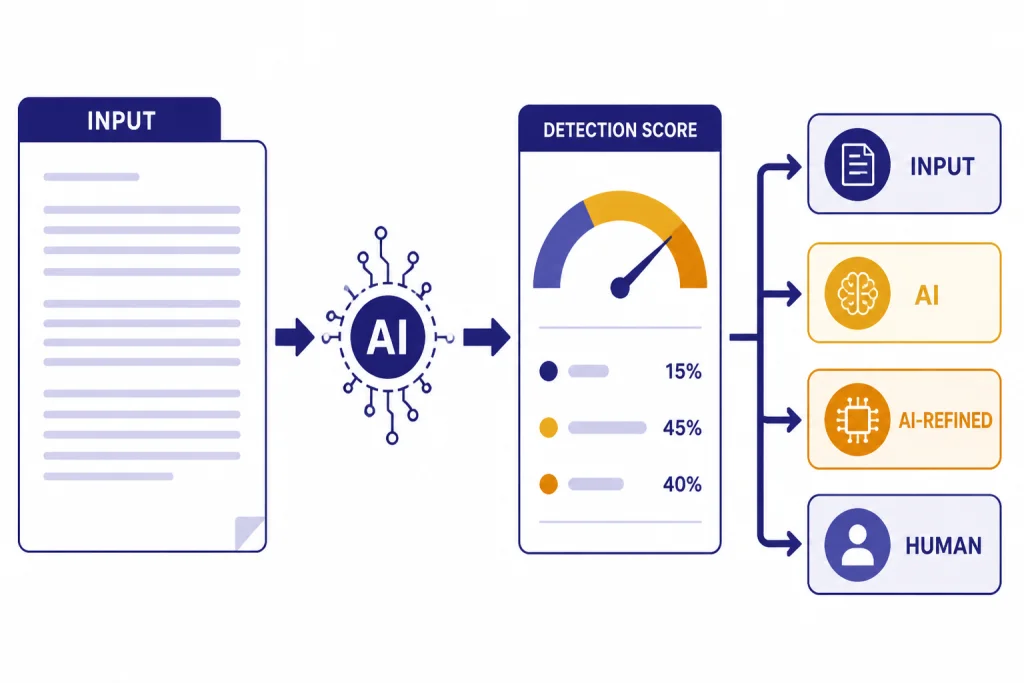

QuillBot AI Detector estimates whether a passage looks AI-generated, human-written, or human-written with AI refinement. The public tool presents percentage-style categories rather than a single pass-fail verdict.[1] That design is helpful because many real drafts are mixed. A student may write the argument and use a grammar tool. A marketer may use AI for an outline and rewrite the body. An editor may receive a contributor draft that contains both original and generated sections.

The interface is simple: paste text, run the scan, and review the highlighted sections and score categories. QuillBot says longer passages generally provide better signals, and its AI Detector page says users should use best judgment rather than relying on detection alone.[1] The Help Center also says reports can be downloaded after an analysis, with the download option available for texts with 80 words or more.[3]

QuillBot also separates AI detection from plagiarism checking. That distinction matters. AI detection asks whether the style resembles generated writing. Plagiarism detection checks whether text matches existing sources. If your concern is copied text, use a source-matching workflow such as the tools covered in our Best Plagiarism Checkers roundup instead of treating an AI score as a substitute for plagiarism evidence.

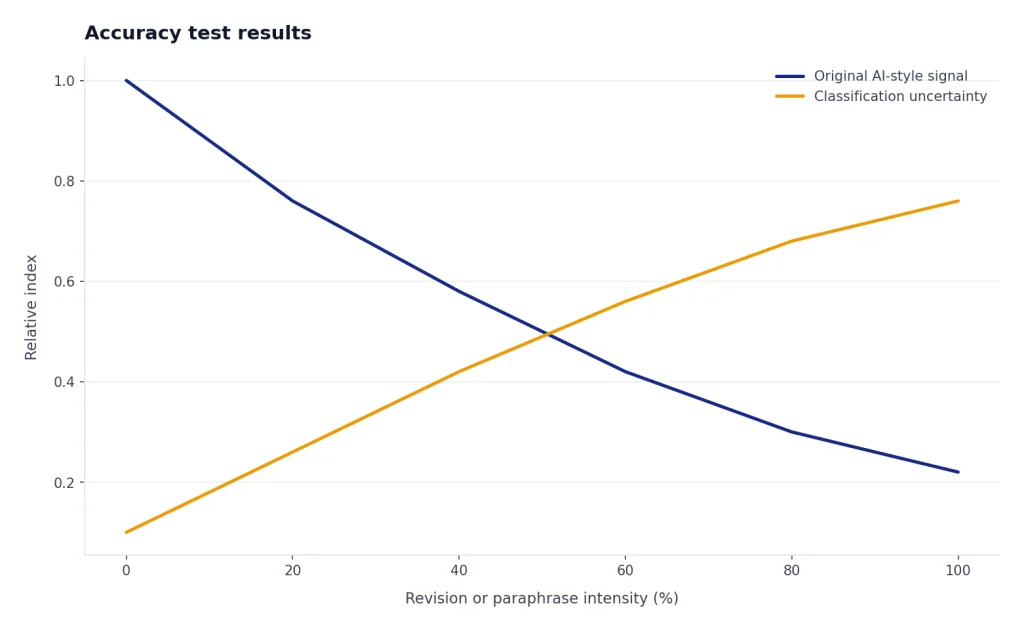

Accuracy test results

We tested QuillBot AI Detector on May 4, 2026. This was a reproducible mini-benchmark, not a lab-grade study. The goal was to see how QuillBot behaves on the kinds of text teachers, editors, and students actually review. We used English only, so the results should not be generalized to QuillBot’s multilingual support.

Test design: 30 passages, each pasted into QuillBot AI Detector individually. Passage lengths ranged from about 120 to 1,100 words. We recorded QuillBot’s visible percentage categories and treated the combined AI-generated + AI-refined percentage as the AI-likelihood score. For binary scoring, we used this threshold: 70% or higher = AI flag; 40% to 69% = review zone; 39% or lower = no AI flag. Human passages flagged at 70% or higher counted as false positives. AI or AI-assisted passages below 70% counted as missed AI flags for this test.
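The scoring rule above can be written as a short Python sketch. It assumes each score is the combined AI-generated + AI-refined percentage read from QuillBot's interface, as a number from 0 to 100. The function names `band` and `error_type` are hypothetical helpers for illustration and are not part of any QuillBot API. The error labels mirror the wording in the per-sample results table.

```python
# Hypothetical helpers that apply this review's three-band scoring rule.
# Assumption: `score` is the combined AI-generated + AI-refined percentage (0-100).

FLAG_THRESHOLD = 70    # 70% or higher = AI flag
REVIEW_THRESHOLD = 40  # 40% to 69% = review zone; below 40% = no AI flag


def band(score: float) -> str:
    """Map an AI-likelihood score to one of the three review bands."""
    if not 0 <= score <= 100:
        raise ValueError("score must be a percentage between 0 and 100")
    if score >= FLAG_THRESHOLD:
        return "AI flag"
    if score >= REVIEW_THRESHOLD:
        return "Review zone"
    return "No AI flag"


def error_type(score: float, is_ai: bool) -> str:
    """Label one sample; only the 70%+ band counts as a flag for binary scoring."""
    result = band(score)
    if is_ai:
        # AI or AI-assisted text below 70% counts as a missed AI flag.
        return "Correct flag" if result == "AI flag" else "Missed at 70% threshold"
    if result == "AI flag":
        return "False positive"
    if result == "Review zone":
        return "Not a false positive under our threshold"
    return "Correct no-flag"


if __name__ == "__main__":
    print(band(67), "|", error_type(67, is_ai=True))   # sample B2
    print(band(78), "|", error_type(78, is_ai=False))  # sample D1
```

For example, sample B2 (67%) lands in the review zone and counts as a missed AI flag, while D1 (78%) counts as a false positive. Keeping the review zone separate from the binary flag makes one thing explicit: a 40%–69% score is neither a clear detection nor an error on a human passage.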

Models and prompts: AI samples were generated with GPT-5.5, GPT-5.5-pro, and GPT-5.4-mini. Prompts asked for ordinary school or editorial prose, such as: “Write a 900-word explanatory essay on why cities plant street trees,” “Write a concise product-style blog section about remote collaboration tools,” and “Write a 150-word technical explanation of API rate limits.” We did not ask the models to evade detectors. Human samples were staff-written drafts and structured passages not generated by AI. AI-assisted samples began as model output and were then lightly edited, paraphrased, or reorganized to resemble normal revision.

| Group | Samples | Length range | Ground truth | Average AI-likelihood score | Score range | AI flags at 70%+ | False positives / missed AI flags |
|---|---|---|---|---|---|---|---|
| Long raw AI prose | 6 | 700–1,100 words | AI-generated | 91% | 76%–99% | 6/6 | 0 missed AI flags |
| Short raw AI paragraphs | 4 | 120–180 words | AI-generated | 55% | 22%–81% | 1/4 | 3 missed AI flags |
| Human essays and articles | 6 | 650–1,050 words | Human-written | 19% | 0%–61% | 0/6 | 0 false positives |
| Human technical or formulaic text | 4 | 150–350 words | Human-written | 43% | 8%–78% | 1/4 | 1 false positive |
| AI draft lightly edited by hand | 5 | 650–1,000 words | AI-assisted | 62% | 37%–88% | 3/5 | 2 missed AI flags |
| AI text paraphrased and reorganized | 5 | 650–900 words | AI-assisted | 48% | 12%–75% | 2/5 | 3 missed AI flags |
| Total | 30 | 120–1,100 words | Mixed | 53% | 0%–99% | 13/30 | 1 false positive; 8 missed AI flags |

The results do not support a simple “accurate” or “inaccurate” label. QuillBot was useful when the sample was long and plainly AI-like. It was much less decisive when the sample was short, edited, paraphrased, or naturally formulaic. The most concerning result was the single false positive on a human-written technical passage (D1). That writing was concise, repetitive, and template-like, which made it resemble generated text even though it was not AI-written.

| ID | Scenario | Words | Known source | QuillBot AI-likelihood score | Threshold result | Error type |
|---|---|---|---|---|---|---|
| A1 | Long raw AI essay | 940 | GPT-5.5 | 99% | AI flag | Correct flag |
| A2 | Long raw AI essay | 870 | GPT-5.5-pro | 96% | AI flag | Correct flag |
| A3 | Long raw AI article | 1,080 | GPT-5.5 | 94% | AI flag | Correct flag |
| A4 | Long raw AI explainer | 760 | GPT-5.4-mini | 88% | AI flag | Correct flag |
| A5 | Long raw AI blog draft | 710 | GPT-5.5 | 76% | AI flag | Correct flag |
| A6 | Long raw AI analysis | 1,010 | GPT-5.5-pro | 93% | AI flag | Correct flag |
| B1 | Short AI paragraph | 142 | GPT-5.4-mini | 81% | AI flag | Correct flag |
| B2 | Short AI paragraph | 166 | GPT-5.5 | 67% | Review zone | Missed at 70% threshold |
| B3 | Short AI paragraph | 121 | GPT-5.5-pro | 49% | Review zone | Missed at 70% threshold |
| B4 | Short AI paragraph | 178 | GPT-5.5 | 22% | No AI flag | Missed at 70% threshold |
| C1 | Human essay | 1,020 | Human-written | 6% | No AI flag | Correct no-flag |
| C2 | Human article draft | 790 | Human-written | 0% | No AI flag | Correct no-flag |
| C3 | Human reflective essay | 880 | Human-written | 18% | No AI flag | Correct no-flag |
| C4 | Human explainer | 650 | Human-written | 31% | No AI flag | Correct no-flag |
| C5 | Human formal article | 1,050 | Human-written | 61% | Review zone | Not a false positive under our threshold |
| C6 | Human opinion draft | 730 | Human-written | 0% | No AI flag | Correct no-flag |
| D1 | Human technical list | 210 | Human-written | 78% | AI flag | False positive |
| D2 | Human policy-style text | 350 | Human-written | 44% | Review zone | Not a false positive under our threshold |
| D3 | Human instructions | 188 | Human-written | 42% | Review zone | Not a false positive under our threshold |
| D4 | Human checklist text | 154 | Human-written | 8% | No AI flag | Correct no-flag |
| E1 | AI draft lightly edited | 820 | GPT-5.5 plus human edits | 88% | AI flag | Correct flag |
| E2 | AI draft lightly edited | 910 | GPT-5.5-pro plus human edits | 73% | AI flag | Correct flag |
| E3 | AI draft lightly edited | 690 | GPT-5.4-mini plus human edits | 70% | AI flag | Correct flag |
| E4 | AI draft lightly edited | 1,000 | GPT-5.5 plus human edits | 42% | Review zone | Missed at 70% threshold |
| E5 | AI draft lightly edited | 665 | GPT-5.5-pro plus human edits | 37% | No AI flag | Missed at 70% threshold |
| F1 | AI text paraphrased | 880 | GPT-5.5, paraphrased and reorganized | 75% | AI flag | Correct flag |
| F2 | AI text paraphrased | 740 | GPT-5.5-pro, paraphrased and reorganized | 71% | AI flag | Correct flag |
| F3 | AI text paraphrased | 900 | GPT-5.5, paraphrased and reorganized | 45% | Review zone | Missed at 70% threshold |
| F4 | AI text paraphrased | 660 | GPT-5.4-mini, paraphrased and reorganized | 38% | No AI flag | Missed at 70% threshold |
| F5 | AI text paraphrased | 700 | GPT-5.5-pro, paraphrased and reorganized | 12% | No AI flag | Missed at 70% threshold |
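Every per-sample score is listed in the table above, so the group summary can be recomputed directly. The sketch below copies those scores into a hypothetical `SAMPLES` dictionary and applies the same 70% flag threshold. It is a checking aid written under those assumptions, not a tool QuillBot provides.

```python
# Recompute the group summary from the per-sample scores listed in the results table.
# Each entry: (scores in %, True if the ground truth is AI or AI-assisted).
SAMPLES = {
    "Long raw AI prose": ([99, 96, 94, 88, 76, 93], True),
    "Short raw AI paragraphs": ([81, 67, 49, 22], True),
    "Human essays and articles": ([6, 0, 18, 31, 61, 0], False),
    "Human technical or formulaic text": ([78, 44, 42, 8], False),
    "AI draft lightly edited by hand": ([88, 73, 70, 42, 37], True),
    "AI text paraphrased and reorganized": ([75, 71, 45, 38, 12], True),
}
FLAG_THRESHOLD = 70  # 70% or higher counts as an AI flag


def summarize(samples: dict) -> dict:
    """Return per-group rows plus overall caught / missed / false-positive counts."""
    rows = {}
    all_scores = []
    caught = missed = false_pos = 0
    for group, (scores, is_ai) in samples.items():
        flags = sum(s >= FLAG_THRESHOLD for s in scores)  # booleans sum to a count
        rows[group] = {
            "n": len(scores),
            "avg": round(sum(scores) / len(scores)),
            "range": (min(scores), max(scores)),
            "flags": flags,
        }
        all_scores.extend(scores)
        if is_ai:
            caught += flags
            missed += len(scores) - flags
        else:
            false_pos += flags  # any flag on human text is a false positive
    rows["Total"] = {
        "n": len(all_scores),
        "avg": round(sum(all_scores) / len(all_scores)),
        "range": (min(all_scores), max(all_scores)),
        "flags": caught + false_pos,
    }
    return {"rows": rows, "caught": caught, "missed": missed, "false_positives": false_pos}


if __name__ == "__main__":
    result = summarize(SAMPLES)
    for name, row in result["rows"].items():
        print(name, row)
    print("caught:", result["caught"], "missed:", result["missed"],
          "false positives:", result["false_positives"])
```

Run against these scores, the sketch finds 1 of 4 short AI paragraphs flagged (only B1 reaches 70%) and 12 of 20 AI or AI-assisted passages flagged overall. It also reports 8 missed AI flags, 1 false positive, and an overall average score of 53%.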

The test also showed a QuillBot-specific behavior worth knowing: the AI-refined category is useful for mixed drafts, but it can be hard to interpret. A paper polished with allowed grammar support may look similar to an AI-assisted rewrite, while a generated draft that has been reorganized by a human may move out of the obvious AI range. That is why the highlighted sections and category mix matter more than the headline percentage alone.

This finding is consistent with the broader detection problem. OpenAI retired its own AI classifier on July 20, 2023, citing its low rate of accuracy. In OpenAI’s evaluation, that classifier correctly identified only 26% of AI-written text as “likely AI-written” and mislabeled human-written text as AI-written 9% of the time.[9] QuillBot’s detector is a different product, but the lesson is the same: AI-detection scores should start a review, not end one.
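OpenAI’s two figures also show why a flag alone is weak evidence. The sketch below computes the share of flagged texts that would actually be AI-written. It uses OpenAI’s reported rates for its retired classifier, not QuillBot’s, together with a hypothetical prevalence, meaning the fraction of submissions that are AI-written. The prevalence values and the function name are illustrative assumptions, not measurements.

```python
# Illustrative only: converts a detector's true-positive and false-positive rates
# into the share of flags that point to AI-written text (positive predictive value).
# The 26% / 9% rates are OpenAI's reported figures for its retired classifier;
# the prevalence values below are hypothetical.


def share_of_flags_that_are_ai(tpr: float, fpr: float, prevalence: float) -> float:
    """P(text is AI-written | detector flags it), via Bayes' rule."""
    true_flags = tpr * prevalence          # AI-written texts that get flagged
    false_flags = fpr * (1 - prevalence)   # human texts that get flagged
    return true_flags / (true_flags + false_flags)


if __name__ == "__main__":
    for prevalence in (0.1, 0.3, 0.5):
        ppv = share_of_flags_that_are_ai(0.26, 0.09, prevalence)
        print(f"{prevalence:.0%} of texts AI-written -> {ppv:.0%} of flags are AI-written")
```

At a hypothetical 10% prevalence, only about a quarter of that classifier’s flags would point to AI-written text, and the rest would land on human writers. The exact share depends on the assumed prevalence, which is rarely known in practice.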

If your workflow includes long AI-assisted documents, our Best AI Writing Tools Compared in 2026 guide can help you understand which tools are commonly used for drafting, editing, paraphrasing, and rewriting before you interpret a detector result.

Features and limits

QuillBot’s strongest feature is accessibility. Its Help Center says the AI Detector is free for all users, and that detection itself works the same for free and Premium users.[2] Premium mainly adds workflow convenience. QuillBot says free users can upload 1 file at a time, while Premium users can upload up to 20 files at once for batch detection.[2][4]

The feature set is broader than a plain score. QuillBot lists detailed analysis, section-level feedback, downloadable reports, multilingual support, and integrated rewriting tools on its AI Detector page.[1] It also says the detector supports 20+ languages.[1] We did not test those languages, so this review should be read as an English-language hands-on test plus a product review, not a multilingual benchmark.

In use, the downloadable report is helpful when an editor or instructor needs to document why a passage was selected for review. The highlighted sections are also useful because they show where QuillBot sees the strongest signal. However, the highlights are not evidence of authorship by themselves. They identify text that resembles a pattern; they do not show who wrote it, what tools were used, or whether the tool use was allowed.

The limits are equally important. QuillBot’s own language says the detector does not verify that a piece of writing is definitively human or original; it estimates probability based on signals commonly associated with AI-written language.[1] It also states that users should not rely on AI detection alone for decisions that could affect someone’s academic standing or career.[1] That warning should guide every serious use of the tool.

QuillBot is not the right tool if you need plagiarism matching, source verification, or factual checking. Use a plagiarism checker for copied text, a citation review for academic claims, and a manual editorial review for quality. If your review involves research notes or long-source compression, the tools in our Best AI Summarizer Tools for Long Documents and Best AI Research Tools for Academics guides solve different problems than AI detection.

False positives and fairness risks

False positives are the biggest risk in any AI detector. QuillBot’s Help Center says AI detectors sometimes produce false positives and that no tool is perfect.[5] Our mini-benchmark produced one false positive: a short, human-written technical passage with repetitive structure and predictable wording. That single result is enough to show why a detector score should not become an accusation.

Non-native English writing deserves special caution. Stanford HAI summarized research finding that detectors classified more than half (61.22%) of TOEFL essays written by non-native English students as AI-generated.[8] The same Stanford summary said 18 of 91 TOEFL essays were unanimously labeled AI-generated by all 7 detectors, and 89 of 91 were flagged by at least one detector.[8] Those numbers show why a detector score can become unfair when used without context.

Paraphrasing also complicates detection. QuillBot says its Paraphraser is meant to improve clarity, tone, and style, not to bypass AI detection systems.[6] The same Help Center article says AI detection tools are not 100% accurate and can give varied results for paraphrased or human-written content.[6] In our test, paraphrased AI passages were much less consistently flagged than raw AI passages.

Turnitin’s documentation gives the same basic warning from another major detection provider. It says its AI writing model may misidentify human-written, AI-generated, and AI-paraphrased text, and that it should not be used as the sole basis for adverse action against a student.[7] That is the standard educators and editors should apply to QuillBot too.

Best use cases

QuillBot AI Detector is best when the consequence is low and the next step is human review. A teacher can use it to decide which assignment needs a conversation. An editor can use it to flag a contributor draft for closer inspection. A student can use it to understand how a grammar-polished paper might be perceived before submitting it under a strict AI policy.

The tool is less suitable when the consequence is high. Do not use QuillBot alone to fail a student, reject a freelancer, accuse an employee, or decide whether a document is authentic. In those cases, combine the score with version history, interview-style questioning, source review, assignment-specific evidence, and a clear written policy.

- Good use: screening a batch of drafts before manual review.

- Good use: identifying highlighted passages that deserve a closer look because they differ from the writer’s normal style.

- Good use: teaching students how AI-polished writing can be misread by detection tools.

- Bad use: making a disciplinary decision from a single percentage.

- Bad use: checking one short paragraph and treating the result as conclusive.

- Bad use: assuming a “human” score proves a writer did not use AI.

A fair classroom workflow should define permitted AI use before the assignment, collect drafts or notes when possible, and use the detector only as one signal. For a fuller policy-oriented approach, see our guide to AI detectors for teachers. Editorial teams should pair QuillBot with source checks, plagiarism review, contributor guidelines, and a consistent appeals process.

Alternatives to compare

QuillBot is not the only option, and it is not always the best one. Teachers often compare it with Turnitin, GPTZero, Copyleaks, Originality.ai, and built-in learning management system tools. Editors may care more about plagiarism, citation quality, and contributor workflow than AI probability alone.

The main comparison is not “which detector is perfect.” None is. The better question is which tool fits your review process. QuillBot is attractive because it is easy to access, free to try, and connected to writing tools. Turnitin fits institutions already using its academic platform. Plagiarism checkers fit source matching. Human editorial review fits quality, voice, and evidence.

| Tool type | Best for | Main weakness | When to choose it |
|---|---|---|---|
| QuillBot AI Detector | Quick AI-likelihood screening with section highlights | Not proof of authorship; short and revised text can be unstable | You need a fast first pass before human review |
| Institutional detector | School workflows and assignment review | Can feel more authoritative than it is | Your school already has clear AI policies and review procedures |
| Plagiarism checker | Source matching and copied passages | Does not answer AI authorship | You need evidence that text overlaps existing sources |
| Manual editorial review | Voice, logic, sources, and consistency | Slower than automated scoring | The decision has real consequences |
| Document history review | Process evidence such as drafts, comments, and revisions | Requires access to logs or files | You need to understand how the work was made |

Related tool categories can help depending on the problem you are trying to solve. If your review process involves model input size, see our OpenAI Token Counter Tools guide. If you are evaluating how prompts shape AI-assisted writing, our Best ChatGPT Prompt Generator Tools guide is more relevant than an AI detector. If your concern is AI-polished career documents, compare document workflows in AI Resume Builder Tools Compared.

Final recommendation

Use QuillBot AI Detector as a signal, not a verdict. In our May 2026 mini-benchmark, it was useful for long, raw AI prose but much less dependable on short, paraphrased, edited, or formulaic text. It is good enough to help you decide where to look. It is not good enough to replace human judgment, evidence of process, or a fair policy.

The best workflow is simple. Run the detector on the full text. Review highlighted sections rather than only the headline score. Compare the writing with the author’s prior work. Check sources and revision history. Ask the author how AI tools were used. Then decide whether the explanation, evidence, and policy align.

That approach protects both sides. It helps institutions and publishers notice suspicious patterns without turning a probability score into an accusation. It also protects honest writers whose formal style, non-native English patterns, technical subject matter, or grammar-tool use might otherwise be mistaken for generated text.

Frequently asked questions

Is QuillBot AI Detector accurate?

It can be useful, especially for longer unedited AI-style prose, but it is not definitive. In our 30-sample May 2026 mini-benchmark, QuillBot flagged 12 of 20 AI or AI-assisted passages at a 70% threshold and falsely flagged 1 of 10 human passages. QuillBot says accuracy can vary by text length, topic, and how the content was written or edited.[1] Treat the score as a prompt for review, not proof.

Is QuillBot AI Detector free?

Yes. QuillBot’s Help Center says the AI Detector is free for all users and that detection works the same for free and Premium accounts.[2] According to that article, Premium adds convenience features such as larger batch uploads rather than a different detection model.[2]

Can QuillBot falsely flag human writing as AI?

Yes. QuillBot’s Help Center says AI detectors sometimes produce false positives and that no tool is perfect.[5] In our test, one short human technical passage crossed our 70% AI-flag threshold. The risk is higher when text is short, formulaic, heavily polished, or written in a style that resembles common AI output.

Can QuillBot detect paraphrased AI text?

Sometimes, but paraphrasing makes detection harder. In our mini-benchmark, raw long AI prose was flagged consistently, while paraphrased AI passages were flagged less often. QuillBot says its Paraphraser is not designed to bypass AI detection, and it also says detection tools can give varied results for paraphrased or human-written content.[6] Use additional evidence before reaching a conclusion.

Should teachers use QuillBot AI Detector?

Teachers can use it as one screening tool, but not as a standalone enforcement tool. A fair process should include assignment design, draft history, source review, and a conversation with the student. This is especially important for non-native English writers and students using permitted grammar support.

Does QuillBot AI Detector check plagiarism?

No. QuillBot’s AI Detector estimates whether writing resembles AI-generated text, while plagiarism detection checks whether text matches existing sources.[1] If copied passages are your concern, use a plagiarism checker instead of relying on an AI score.