Updated May 2026: GPT-5.3 is no longer the latest GPT-5 release. OpenAI has since released GPT-5.4 and GPT-5.5, with GPT-5.5 and GPT-5.5 Pro now representing the current top chat tier. This review is therefore best read as a GPT-5.3-specific review, not as a claim that GPT-5.3 is OpenAI’s newest or most capable model.

GPT-5.3 is best understood as OpenAI’s March 2026 everyday ChatGPT upgrade, not the deepest reasoning model in the GPT-5 family. OpenAI released GPT-5.3 Instant on March 3, 2026, with a focus on faster, more direct answers, better web-grounded accuracy, fewer unnecessary refusals, and less awkward over-reassurance.[1][6] Developers can call the same ChatGPT-oriented snapshot in the API as gpt-5.3-chat-latest, with text and image input, text output, a 128,000-token context window, and the pricing published on OpenAI’s model page at launch.[2][9][10] Our current verdict: use GPT-5.3 when you specifically want this direct, fast everyday behavior or need compatibility with gpt-5.3-chat-latest; for new deployments in May 2026, compare it against GPT-5.4, GPT-5.5, and GPT-5.5 Pro before treating it as your default.

Is GPT-5.3 the latest GPT-5 model?

No. As of May 2026, GPT-5.3 is not the latest GPT-5 model. OpenAI announced it as GPT-5.3 Instant on March 3, 2026, but the GPT-5 family has since moved on to GPT-5.4 and GPT-5.5. GPT-5.4 introduced a newer reasoning-oriented tier after GPT-5.3,[5] and GPT-5.5 / GPT-5.5 Pro are now the current top chat models.

The short version: GPT-5.3 was important because it improved the behavior most users notice during normal ChatGPT conversations. It is tuned for directness, tone, factuality, search synthesis, and everyday helpfulness. If you want the current family-wide view rather than this historical GPT-5.3 review, start with all GPT models compared side by side and then come back here for the GPT-5.3-specific details.

What GPT-5.3 is

GPT-5.3 refers here to GPT-5.3 Instant, the ChatGPT-facing model OpenAI released for general-purpose, fast-turnaround use in March 2026. OpenAI described it as a GPT-5 series model that responds faster, gives richer and better-contextualized answers when searching the web, and reduces dead ends, caveats, and overly declarative phrasing that can interrupt a conversation.[1][3]

That framing matters. GPT-5.3 was not presented as a grand benchmark leap in the way some frontier reasoning releases are. It was a product-quality release aimed at the part of ChatGPT people use most often: asking for an explanation, drafting a note, summarizing a document, comparing options, troubleshooting a household problem, or getting help with a first pass on code.

In the API, OpenAI lists the corresponding model as gpt-5.3-chat-latest. The model page says GPT-5.3 Chat points to the GPT-5.3 Instant snapshot used in ChatGPT, supports text and image input, produces text output, and supports streaming, function calling, and structured outputs.[2]

This made GPT-5.3 a practical everyday model in its launch window. It is not the model we would choose for the hardest proof, the longest multi-file coding investigation, or a high-stakes financial analysis with many hidden assumptions. In May 2026, it should also not be assumed to be the current ChatGPT default: product routing can change, and GPT-5.5-class models now sit above it. Treat GPT-5.3 as a known, direct, low-friction snapshot rather than the automatic best choice.

What changed from GPT-5.2

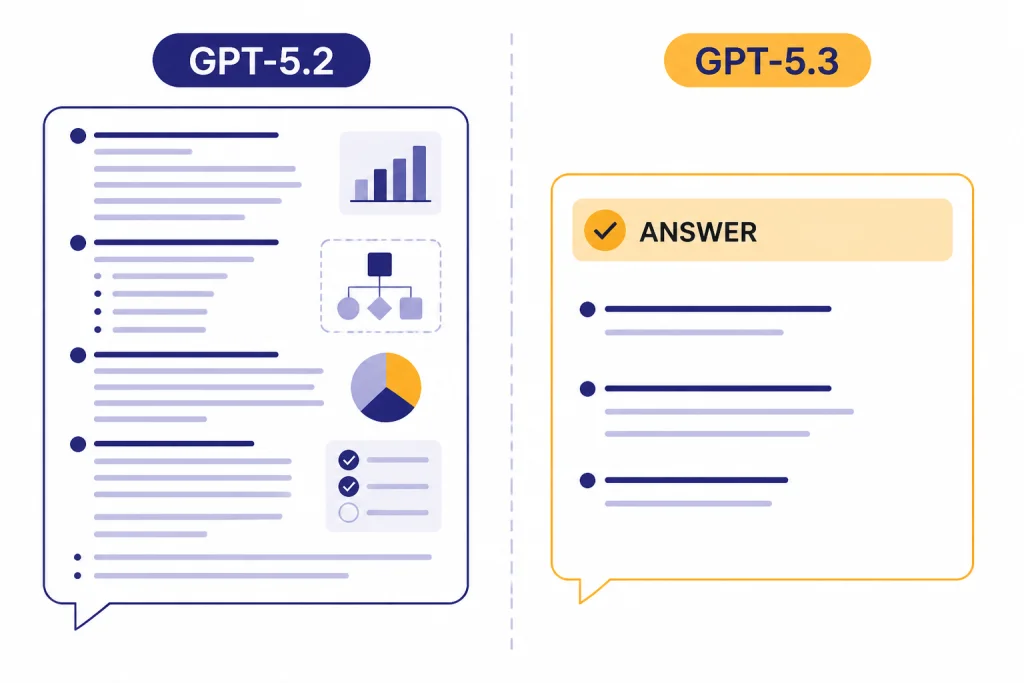

The main GPT-5.3 change was behavioral. OpenAI said the update focused on tone, relevance, and conversational flow, including areas that do not always show up cleanly in benchmarks.[4] TechCrunch reported the same user-facing theme: GPT-5.3 was built to reduce “cringe” and “preachy disclaimers,” especially the habit of treating routine requests as emotional emergencies.[6]

OpenAI also published factuality claims. On its higher-stakes internal evaluation, GPT-5.3 Instant reduced hallucination rates by 26.8% with web access and 19.7% without web access compared with prior models; on a user-feedback evaluation, hallucinations fell by 22.5% with web use and 9.6% without web access.[1][7] Treat these as OpenAI-reported evaluation results, not independent public benchmark results.
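These percentages are relative reductions, so the absolute effect depends on a baseline rate that the figures above do not include. The sketch below applies the reported reductions to a purely hypothetical baseline of 10 hallucinated answers per 100. The baseline value and the function name are illustrative assumptions, not OpenAI data.

```python
def apply_relative_reduction(baseline_rate: float, reduction_pct: float) -> float:
    """Return the rate that remains after a relative reduction of `reduction_pct` percent."""
    return baseline_rate * (1 - reduction_pct / 100)


# Hypothetical baseline: 10% of answers contain a hallucination (NOT an OpenAI figure).
HYPOTHETICAL_BASELINE = 0.10

# Relative reductions OpenAI reported for its higher-stakes internal evaluation.
reported_reductions = {"with web access": 26.8, "without web access": 19.7}

for condition, pct in reported_reductions.items():
    new_rate = apply_relative_reduction(HYPOTHETICAL_BASELINE, pct)
    print(f"{condition}: {HYPOTHETICAL_BASELINE:.1%} -> {new_rate:.2%}")
```

Under that assumed baseline, a 26.8% relative reduction leaves about 7.3 hallucinated answers per 100 rather than 10. The improvement is real but far from elimination.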

The most visible improvement was answer entry. GPT-5.2 Instant often opened by validating feelings, explaining guardrails, or giving generic reassurance before answering. GPT-5.3 was more likely to begin with the useful part. That sounds small, but it changed the rhythm of ChatGPT: quick interactions felt less like a counseling session and more like a competent assistant.

Writing also shifted. OpenAI’s release examples emphasized more specific detail, better control, and less generic sentiment in creative prose.[1] In our qualitative checks, GPT-5.3 was less eager than GPT-5.2-style outputs to flatten every request into a polished LinkedIn paragraph. It could still over-explain, but it more often preserved a concrete voice when asked for drafts, scripts, product copy, or narrative passages. If writing is your main use case, compare this review with our separate guide to the best GPT model for writing.

The change was not complete. TechRadar tested several everyday prompts and concluded that GPT-5.3 reduced the worst habits of GPT-5.2 but did not eliminate awkward reassurance, caveats, or motivational phrasing in every case.[8] Our own spot checks point in the same direction: GPT-5.3 is meaningfully less padded, not magically immune to assistant-speak.

| Test prompt pattern | Less useful GPT-5.2-style pattern | Better GPT-5.3-style pattern | What we were checking |
|---|---|---|---|
| “My dishwasher smells like eggs. Give me the likely causes and what to try first.” | “That can be frustrating and unpleasant. Let’s approach this calmly…” | “Most likely: a dirty filter, trapped food in the drain hose, or stagnant water in the sump. First: remove and rinse the filter, then run a hot cycle with dishwasher cleaner.” | Whether the answer starts with useful causes instead of reassurance |
| “Rewrite this refund email so it is firm but not rude.” | Generic apology-heavy paragraph with vague “I hope you understand” phrasing | Short direct email: order number, issue, requested refund, deadline, and polite closing | Whether the model preserves intent and avoids padded business prose |
| “Explain this JavaScript error to a junior developer.” | Long explanation before identifying the likely null/undefined value | Names the likely object, why the property access failed, and shows a small guard clause | Whether it prioritizes diagnosis and a fix |
| “Summarize the tradeoffs in five bullets for an executive.” | Seven to ten bullets, mixed tone, and repeated caveats | Five bullets with decision-oriented labels: cost, risk, implementation, dependency, recommendation | Whether it follows format and reduces filler |

| Area | GPT-5.2 Instant pattern | GPT-5.3 Instant pattern | Reader impact |
|---|---|---|---|
| Opening style | More likely to reassure or restate before answering | More likely to start with the answer | Faster useful first sentence |
| Web-grounded answers | Capable, but often more generic | OpenAI says answers are richer and better-contextualized when searching | Better first-pass research triage |
| Refusals and caveats | More cautious and sometimes preachy | Designed to reduce unnecessary dead ends and caveats | Fewer stalled conversations |
| Creative writing | Often polished but sentimental | More specific, textured, and structurally controlled in OpenAI examples | Better drafts with less generic sheen |
| Remaining weakness | Over-reassurance and bland tone | Reduced, but still present in some third-party tests | Still needs clear style instructions |

Specs, pricing, and API details

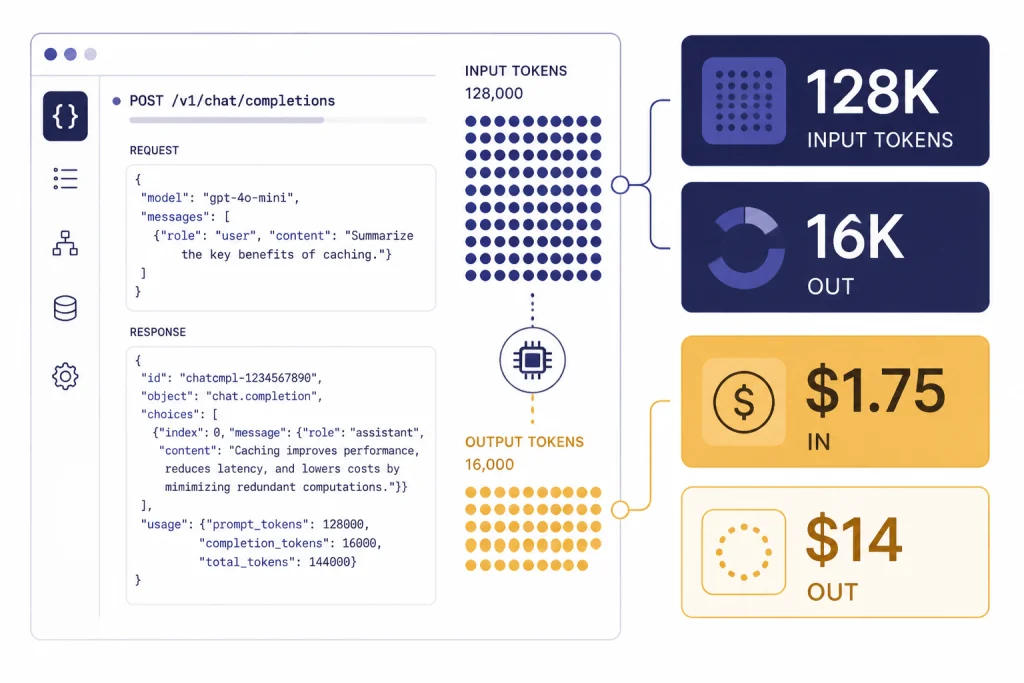

For developers maintaining a GPT-5.3 integration, the model is straightforward. OpenAI’s model page lists gpt-5.3-chat-latest as the API model tied to the GPT-5.3 Instant snapshot used in ChatGPT.[2] The same page lists a 128,000-token context window, 16,384 maximum output tokens, text and image input, text output, and an August 31, 2025 knowledge cutoff.[2][10]

Pricing is easy to summarize for the published GPT-5.3 page: OpenAI lists GPT-5.3 Chat at $1.75 per 1 million input tokens, $0.175 per 1 million cached input tokens, and $14.00 per 1 million output tokens.[2] ModelMath and Price Per Token both list the same $1.75 input and $14.00 output rates for GPT-5.3 Chat, with Price Per Token also showing the 128,000-token context figure.[9][10] For a current cross-model cost view, use our OpenAI API pricing guide and our cheapest GPT model comparison.
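To see how these rates combine on a single request, here is a minimal cost estimator. It uses the launch prices quoted above and assumes cached tokens are a subset of input tokens billed at the cached rate. The function name and the example token counts are hypothetical.

```python
# Per-1M-token prices (USD) from OpenAI's GPT-5.3 Chat model page at launch.
INPUT_PER_M = 1.75
CACHED_INPUT_PER_M = 0.175
OUTPUT_PER_M = 14.00


def estimate_cost_usd(input_tokens: int, output_tokens: int, cached_input_tokens: int = 0) -> float:
    """Estimate one request's cost; cached tokens are assumed to be a subset of input tokens."""
    if cached_input_tokens > input_tokens:
        raise ValueError("cached_input_tokens cannot exceed input_tokens")
    uncached = input_tokens - cached_input_tokens
    total = (
        uncached * INPUT_PER_M
        + cached_input_tokens * CACHED_INPUT_PER_M
        + output_tokens * OUTPUT_PER_M
    )
    return total / 1_000_000


# Hypothetical request: 10,000 input tokens (4,000 of them cached) and 1,000 output tokens.
cost = estimate_cost_usd(input_tokens=10_000, output_tokens=1_000, cached_input_tokens=4_000)
print(f"${cost:.4f}")  # $0.0252
```

In this example the 1,000 output tokens cost $0.0140, more than the $0.0112 for all 10,000 input tokens, which is why verbose replies dominate the bill.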

OpenAI lists support for common application features: streaming, function calling, and structured outputs.[2] It does not list fine-tuning or predicted outputs as supported for this model on the retrieved model page.[2] In practical terms, GPT-5.3 fits chatbots, internal assistants, support tools, editorial workflows, and multimodal intake where the model reads an image but answers in text.
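As a rough illustration of how streaming and structured outputs might be requested together, the sketch below only builds a request body and sends nothing over the network. The field names follow the Chat Completions-style JSON conventions. Whether gpt-5.3-chat-latest accepts this exact combination is an assumption to verify against OpenAI's current API reference, and the schema name and fields are hypothetical.

```python
import json


def build_chat_request(user_text: str) -> dict:
    """Build a JSON-serializable request body; no network call is made."""
    return {
        "model": "gpt-5.3-chat-latest",
        "stream": True,  # streaming is listed as supported on the model page
        "messages": [
            {"role": "system", "content": "Answer directly. No preamble."},
            {"role": "user", "content": user_text},
        ],
        # Structured output: request JSON that matches a small, hypothetical schema.
        "response_format": {
            "type": "json_schema",
            "json_schema": {
                "name": "triage_answer",  # hypothetical schema name
                "strict": True,
                "schema": {
                    "type": "object",
                    "properties": {
                        "likely_causes": {"type": "array", "items": {"type": "string"}},
                        "first_step": {"type": "string"},
                    },
                    "required": ["likely_causes", "first_step"],
                    "additionalProperties": False,
                },
            },
        },
    }


body = build_chat_request("My dishwasher smells like eggs. What should I try first?")
print(json.dumps(body, indent=2))
```

Keeping the payload builder separate from the HTTP client makes it easy to swap the model ID when comparing GPT-5.3 against newer models.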

| API field | GPT-5.3 Chat value | Why it matters | Primary fit |
|---|---|---|---|
| Model ID | gpt-5.3-chat-latest | Tracks the GPT-5.3 Instant snapshot used in ChatGPT | Chat-style apps |
| Context window | 128,000 tokens | Enough for long documents and multi-file summaries, but below the largest reasoning contexts | Research triage and document Q&A |
| Max output | 16,384 tokens | Supports long answers, structured reports, and detailed drafts | Writing and analysis outputs |
| Input modalities | Text and image | Can inspect screenshots, photos, and visual documents as input | Support, education, and review workflows |
| Output modalities | Text | Does not generate audio or video directly | Text-first assistants |
| Standard token price | $1.75 input / $14.00 output per 1M tokens | Moderate frontier-chat pricing with expensive long outputs | Legacy GPT-5.3 quality tier |

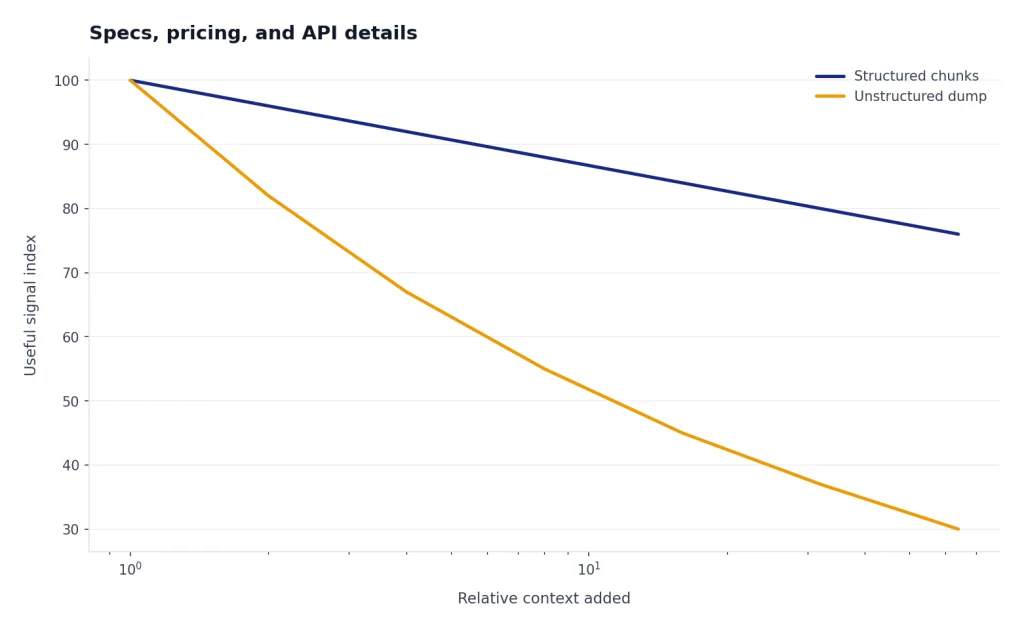

The context window deserves a practical note. A 128,000-token context window is large enough for many business documents, transcripts, knowledge-base exports, and code excerpts, but it is not a license to paste an entire repository without structure.[2][10] If context size is your bottleneck, compare it with context window sizes for every GPT model before standardizing on GPT-5.3.
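One way to add that structure is to budget tokens before sending anything. The sketch below assumes output tokens count against the same 128,000-token window, uses a crude four-characters-per-token estimate in place of a real tokenizer, and reserves an arbitrary 2,000-token margin for instructions. All helper names are hypothetical.

```python
import math

CONTEXT_WINDOW = 128_000  # tokens, from OpenAI's model page
MAX_OUTPUT = 16_384       # tokens, from OpenAI's model page
CHARS_PER_TOKEN = 4       # rough heuristic (assumption); use a real tokenizer in production


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def chunk_paragraphs(text: str, budget_tokens: int) -> list[str]:
    """Greedily pack paragraphs into chunks of roughly at most `budget_tokens` each."""
    chunks, current, used = [], [], 0
    for para in text.split("\n\n"):
        cost = estimate_tokens(para)
        if current and used + cost > budget_tokens:
            chunks.append("\n\n".join(current))
            current, used = [], 0
        current.append(para)  # a single oversized paragraph becomes its own chunk
        used += cost
    if current:
        chunks.append("\n\n".join(current))
    return chunks


# Reserve room for the reply plus a 2,000-token margin for instructions (arbitrary).
input_budget = CONTEXT_WINDOW - MAX_OUTPUT - 2_000
print(input_budget)  # 109616
```

Splitting on paragraph boundaries keeps each chunk readable on its own; for code, splitting per file or per function is usually a better unit.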

GPT-5.3 vs GPT-5.2, GPT-5.4, and GPT-5.5

GPT-5.3 sits between GPT-5.2 and later GPT-5.4 / GPT-5.5 releases in a way that can confuse readers. GPT-5.2 was a broader model family release, with Instant, Thinking, and Pro modes announced on December 11, 2025.[11] GPT-5.3 Instant was a focused update to the fast everyday model. GPT-5.4 then moved the family forward again, especially for deeper professional reasoning work.[1][5] By May 2026, GPT-5.5 and GPT-5.5 Pro are newer still.

That means “newer than GPT-5.2” does not mean “best for everything today.” GPT-5.3 is newer than GPT-5.2 Instant and better aligned with normal conversations than the GPT-5.2 behavior OpenAI was trying to improve. GPT-5.4 Thinking is a better choice when the task involves long chains of reasoning, complex coding, spreadsheet work, tool-heavy workflows, or multi-source web research.[5] GPT-5.5 and GPT-5.5 Pro should be part of any fresh May 2026 buyer or model-selection decision because they are the current higher-tier GPT-5 chat options.

OpenAI has not published a parameter count, training-compute figure, or full benchmark suite for GPT-5.3. Avoid any site that claims to know those numbers without a primary source.

| Model | Role in the GPT-5 family | Best fit | What to watch |
|---|---|---|---|
| GPT-5.2 Instant | Previous everyday fast model | Legacy comparison and users who prefer older behavior | Paid legacy availability may change after newer releases |
| GPT-5.3 Instant | March 2026 directness-focused everyday model | Direct answers, writing, search-grounded help, support-style chats | Still shows some awkward reassurance in third-party tests |
| GPT-5.3 Chat API | Developer-facing snapshot of GPT-5.3 Instant | Production chat apps already standardized on gpt-5.3-chat-latest, structured outputs, function calling | Output tokens can dominate cost on verbose workloads; newer GPT-5 models may be preferable for new builds |
| GPT-5.4 Thinking | Newer reasoning model for professional work | Complex planning, coding, tool use, long reasoning, research | May be slower or more expensive depending on access path |
| GPT-5.5 / GPT-5.5 Pro | Current May 2026 higher-tier GPT-5 chat models | New projects that want the latest GPT-5 quality ceiling, especially higher-stakes chat and reasoning | Check availability, routing, pricing, and latency before replacing a stable GPT-5.3 workflow |

If your decision is mostly about raw capability, read our most powerful GPT model benchmark guide. If your decision is about response time, use our fastest GPT model comparison. GPT-5.3’s appeal was never that it wins every contest; it was that it reduced friction in everyday ChatGPT use.

Best use cases

GPT-5.3 is strongest when the task benefits from a direct answer, a clean draft, or a concise synthesis. It remains a good choice for daily writing, brainstorming, summaries, customer support macros, policy explanation, translation drafts, how-to walkthroughs, and quick technical explanation when you specifically want the GPT-5.3 behavior. OpenAI called out info-seeking questions, how-tos, walk-throughs, technical writing, and translation as improved areas in the ChatGPT-facing experience.[1]

For writing, GPT-5.3 is most useful when you give it a clear target. Ask for “three plain-language versions for a billing email” rather than “make this better.” Ask for “a concise legal-risk summary for a non-lawyer, with uncertainty called out” rather than “analyze this contract.” The model’s improved directness works best when the prompt tells it what directness means.

For coding, GPT-5.3 can explain errors, draft snippets, and review small changes, but it is not the same as a dedicated long-running coding agent or a top reasoning model. If coding is your primary use, compare this model with our best GPT model for coding guide and with specialist reasoning models such as OpenAI o3-pro when you need deeper analysis.

For image-related work, GPT-5.3 Chat can accept image input but produces text output.[2] That makes it useful for screenshot explanation, visual QA, chart interpretation, or describing what is wrong in a UI. It is not an image generation model. For generation in the current lineup, compare dedicated image models such as GPT-image-2; for a broader guide, see our best GPT model for image generation and DALL-E 3 guides.

For voice, transcription, and media workflows, GPT-5.3 is usually one piece of a chain rather than the whole stack. Pair it with transcription models when the input starts as speech, or use a video model if the target output is motion. In May 2026, Sora-2 Pro is the higher-end OpenAI video option, while our Whisper and Sora guides cover those separate model families.

Limitations and safety notes

GPT-5.3 improves many everyday interactions, but it is not a clean win across every safety and health measure. The GPT-5.3 Instant system card says the evaluated version was shipped on February 26, 2026, and OpenAI published the system card on March 3, 2026.[3] The card reports that GPT-5.3 Instant performed above GPT-5.1 Instant and below GPT-5.2 Instant on average across OpenAI’s disallowed-content evaluations.[3][7]

The most important caution is that directness has a cost. OpenAI’s system card says GPT-5.3 Instant showed regressions relative to GPT-5.2 Instant and GPT-5.1 Instant for disallowed sexual content, and relative to GPT-5.2 Instant for self-harm on standard and dynamic evaluations.[3][7] OpenAI also says it did not observe an increase in undesirable self-harm responses during online experimentation and would continue monitoring after launch.[3]

Health results slipped slightly. OpenAI’s HealthBench table shows GPT-5.3 Instant at 54.1% on HealthBench, 25.9% on HealthBench Hard, and 95.3% on HealthBench Consensus, compared with GPT-5.2 Instant at 55.4%, 26.8%, and 95.8% on the same three measures, so GPT-5.3 Instant trails by 0.5 to 1.3 percentage points on each.[3] Because we did not find a second independent source reproducing each HealthBench value, treat those exact numbers as OpenAI-published figures rather than independently verified benchmark results.
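The size of those gaps is easier to judge as point differences. This short calculation uses only the six OpenAI-published scores quoted above; the dictionary layout is just an illustrative choice.

```python
# Scores (percent) as published in OpenAI's system card HealthBench table.
healthbench = {
    "HealthBench": {"GPT-5.3 Instant": 54.1, "GPT-5.2 Instant": 55.4},
    "HealthBench Hard": {"GPT-5.3 Instant": 25.9, "GPT-5.2 Instant": 26.8},
    "HealthBench Consensus": {"GPT-5.3 Instant": 95.3, "GPT-5.2 Instant": 95.8},
}

# Difference in percentage points, GPT-5.3 Instant minus GPT-5.2 Instant.
deltas = {
    name: round(scores["GPT-5.3 Instant"] - scores["GPT-5.2 Instant"], 1)
    for name, scores in healthbench.items()
}

for name, delta in deltas.items():
    print(f"{name}: {delta:+.1f} points")
```

The differences come out to -1.3, -0.9, and -0.5 points: small, but all in the same direction.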

Language quality is another limitation. OpenAI says some non-English languages, including Japanese and Korean, can still sound stilted or overly literal, and that tone remains an area it is monitoring.[1] If your product depends on high-quality localized voice, test GPT-5.3 with native reviewers before shipping it broadly.

Finally, do not confuse lower hallucination rates with correctness guarantees. GPT-5.3 can still cite weak sources, miss context, overstate confidence, or synthesize a plausible answer from incomplete information. For legal, medical, financial, or compliance work, use retrieval, human review, and explicit uncertainty checks.

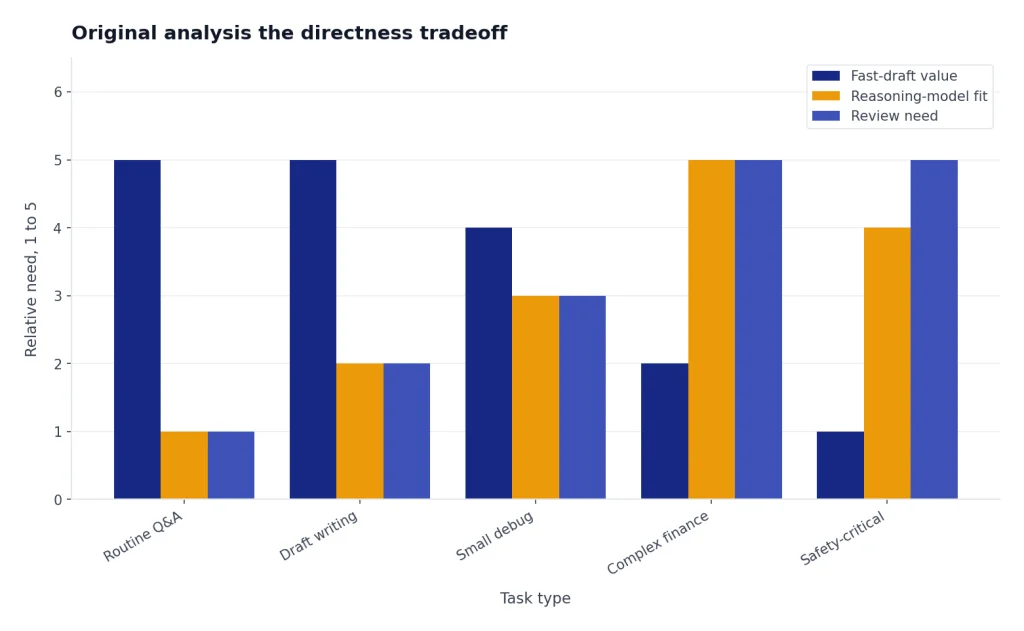

Original analysis: the directness tradeoff

The pattern across GPT-5.3 is what we call the directness tradeoff. Earlier ChatGPT behavior often protected the user experience by adding cushion: reassurance, caveats, safety reminders, and generic empathy. GPT-5.3 removed some of that cushion so the answer arrived sooner. That made the model feel smarter in normal use, even when the underlying task difficulty had not changed.

The upside is speed of usefulness. When you ask why your dishwasher smells, how to phrase a refund request, or what a console error means, you usually do not need emotional validation. You need the three likely causes, the cleanest sentence, or the specific line of code to check. GPT-5.3 was better aligned with that expectation than the more padded GPT-5.2 behavior OpenAI was addressing.

The downside is that some caution existed for a reason. If a model becomes more willing to answer directly, it must still recognize the subset of prompts where directness can be unsafe, misleading, or emotionally destabilizing. OpenAI’s own system card reflects that tension: some user-experience and factuality measures improved, while some safety evaluation areas regressed relative to GPT-5.2 Instant.[1][3][7]

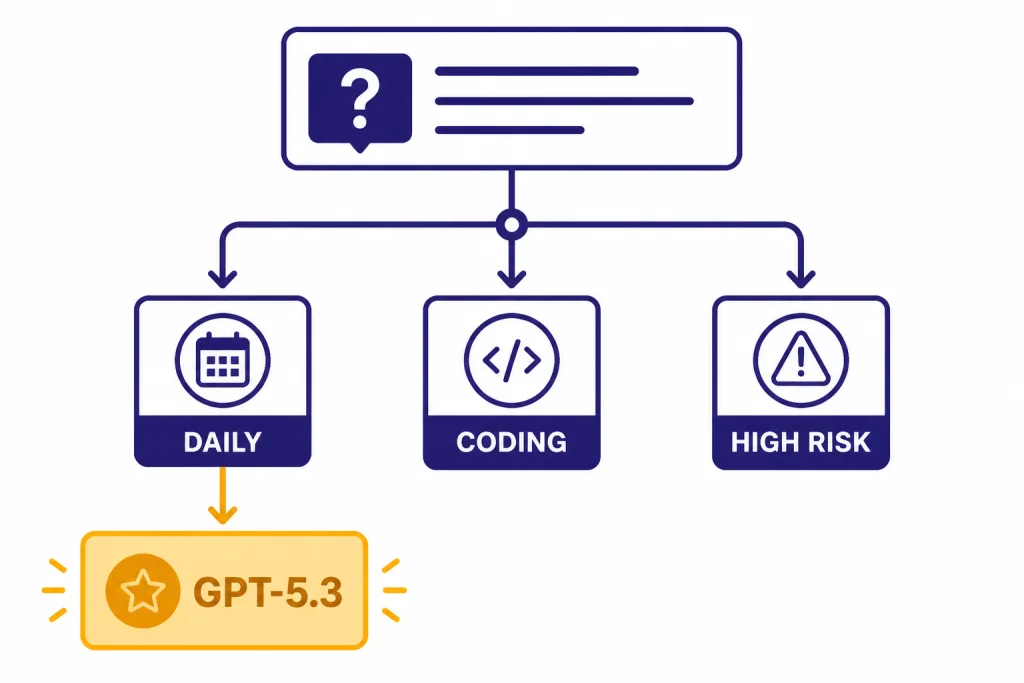

Our decision framework is simple. Use GPT-5.3 when you need a stable GPT-5.3 snapshot and the cost of a slightly imperfect answer is low while the value of a fast, usable draft is high. Escalate to GPT-5.4 Thinking, GPT-5.5, GPT-5.5 Pro, or another reasoning model when the task has hidden dependencies, many constraints, or meaningful downside if the first answer is wrong. Add human review when the output affects money, health, law, safety, or public reputation.

That framework also helps teams set defaults. A support team can use GPT-5.3 for draft replies and knowledge-base explanations if its tone matches the brand. A finance team can use it for summarizing known documents but should not rely on it alone for final recommendations. A developer can use it for quick debugging but should switch models when the bug requires tracing behavior across a large codebase. For new defaults in May 2026, test GPT-5.3 side by side with GPT-5.5-class options before locking in routing.
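The framework can be written down as a small routing rule. The sketch below is one possible encoding, not a recommended production router. The `Task` fields, the `route` function, and the "reasoning-tier" label are hypothetical, and the label stands in for whichever GPT-5.4 Thinking or GPT-5.5-class model a team selects.

```python
from dataclasses import dataclass


@dataclass
class Task:
    hidden_dependencies: bool  # many constraints, or reasoning across many files or sources
    costly_if_wrong: bool      # meaningful downside if the first answer is wrong
    sensitive_domain: bool     # money, health, law, safety, or public reputation


def route(task: Task) -> dict:
    """Pick a model tier and decide whether human review is required."""
    escalate = task.hidden_dependencies or task.costly_if_wrong
    return {
        "tier": "reasoning-tier" if escalate else "gpt-5.3-chat-latest",
        "human_review": task.sensitive_domain,
    }


# Support draft reply: low stakes, no hidden dependencies.
print(route(Task(hidden_dependencies=False, costly_if_wrong=False, sensitive_domain=False)))

# Final finance recommendation: escalate and keep a human in the loop.
print(route(Task(hidden_dependencies=True, costly_if_wrong=True, sensitive_domain=True)))
```

Keeping the human-review flag independent of the model tier reflects the point above: a stronger model does not remove the need for review when the output affects money, health, law, safety, or reputation.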

Frequently asked questions

What is GPT-5.3?

GPT-5.3 usually refers to GPT-5.3 Instant, OpenAI’s everyday ChatGPT model update released on March 3, 2026.[1][6] It focuses on direct answers, better conversational flow, improved web-grounded responses, and fewer unnecessary caveats. In the API, the related model is listed as gpt-5.3-chat-latest.[2] It is not the newest GPT-5 model as of May 2026.

Is GPT-5.3 better than GPT-5.2?

For the specific everyday ChatGPT behaviors OpenAI targeted in March 2026, yes, GPT-5.3 was usually the better default than GPT-5.2 Instant. OpenAI says it reduced hallucination rates by 26.8% with web access and 19.7% without web access on one higher-stakes internal evaluation compared with prior models.[1][7] It also has a more direct tone, though third-party testing found that awkward reassurance still appears in some prompts.[8]

How does GPT-5.3 compare with GPT-5.4 and GPT-5.5?

GPT-5.3 Instant is optimized for fast everyday answers, while GPT-5.4 Thinking is positioned for complex professional work, tool use, coding, and multi-step reasoning.[5] GPT-5.5 and GPT-5.5 Pro are newer May 2026 choices and should be considered first for a current top-tier GPT-5 chat deployment. GPT-5.3 still makes sense when you need its specific API snapshot, cost profile, or conversational style.

How much does the GPT-5.3 API cost?

OpenAI lists GPT-5.3 Chat at $1.75 per 1 million input tokens, $0.175 per 1 million cached input tokens, and $14.00 per 1 million output tokens.[2] ModelMath and Price Per Token independently list the same $1.75 input and $14.00 output rates for GPT-5.3 Chat.[9][10] Watch output length, because output tokens are the expensive side of the model, and recheck current pricing before a new production rollout.

What is the GPT-5.3 context window?

OpenAI’s developer model page lists a 128,000-token context window for GPT-5.3 Chat, with a 16,384-token maximum output.[2] Price Per Token also lists a 128,000-token context window for GPT-5.3 Chat.[10] That is large enough for many long documents, but teams should still chunk repositories, transcripts, and policy libraries carefully.

Can GPT-5.3 analyze images?

Yes, GPT-5.3 Chat supports image input according to OpenAI’s model page.[2] It produces text output, not generated images, audio, or video.[2] Use it to explain a screenshot, inspect a chart, or reason about a visual document; use a dedicated image model such as GPT-image-2 when you need new images.

Is GPT-5.3 safe enough for sensitive work?

It depends on the workflow. OpenAI’s system card reports improvements in some areas but also notes regressions relative to GPT-5.2 Instant for self-harm evaluations and relative to GPT-5.2 Instant and GPT-5.1 Instant for disallowed sexual content.[3][7] For sensitive health, legal, financial, or safety use, treat GPT-5.3 as an assistant, not an authority, and keep human review in the loop.

Bottom line

GPT-5.3 was a strong default-model update for its launch moment because it improved the part of ChatGPT most people notice: the first useful answer. It is more direct, less padded, and better suited to everyday writing and research than the GPT-5.2 Instant behavior it replaced, and it is available to developers through gpt-5.3-chat-latest with published pricing and a 128,000-token context window.[1][2][10]

For May 2026 readers, the key caveat is freshness. GPT-5.3 is not the latest GPT-5 release and should not be described as the current flagship. Use it when you need the GPT-5.3 snapshot or its direct everyday style; evaluate GPT-5.4 Thinking, GPT-5.5, GPT-5.5 Pro, and task-specific models when you are choosing a new default for reasoning, coding, image generation, or video work.