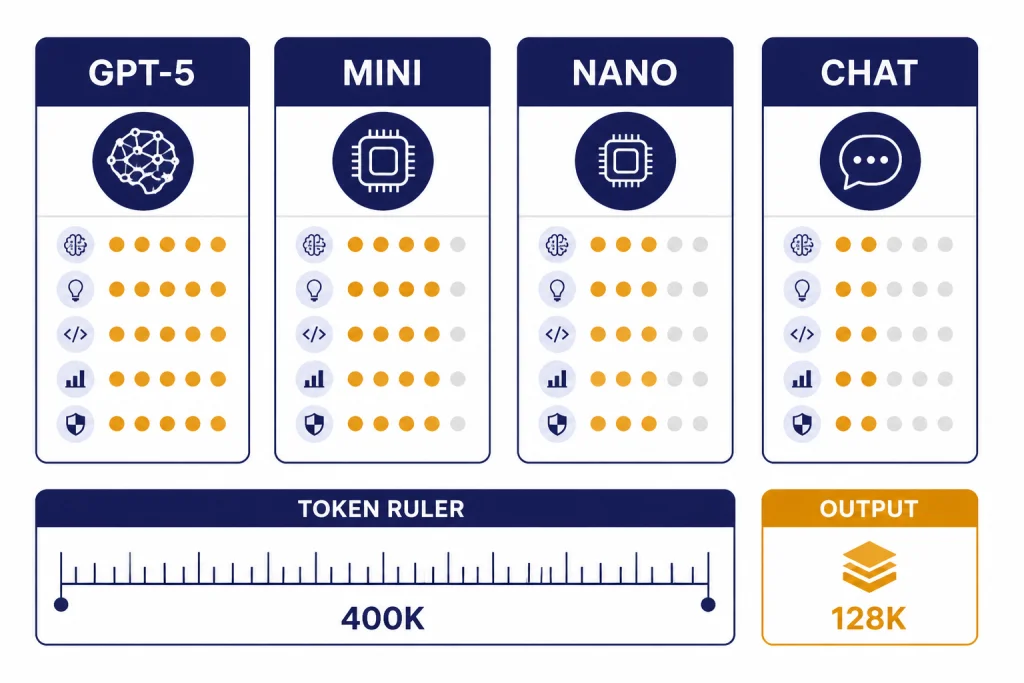

GPT-5 is OpenAI’s flagship model family that launched on August 7, 2025, bringing a unified ChatGPT experience, built-in reasoning, stronger coding performance, improved factuality, and API models for different cost and latency needs.[1][7] The original GPT-5 API models include gpt-5, gpt-5-mini, and gpt-5-nano, each supporting text and vision, with the main GPT-5 model listed at a 400,000-token context window and 128,000 max output tokens.[2][4] As of this March 12, 2026 update, GPT-5 is best understood as the base generation in a larger GPT-5 family that now includes newer iterations such as GPT-5.4 for professional work.[6][12]

GPT-5 release date and quick answer

OpenAI released GPT-5 on August 7, 2025, and made it the new default model in ChatGPT for logged-in users as it rolled out across Free, Plus, Pro, and Team plans.[1][5][7] Developers also received GPT-5 in the API on launch day, with three main API sizes: gpt-5, gpt-5-mini, and gpt-5-nano.[2][11]

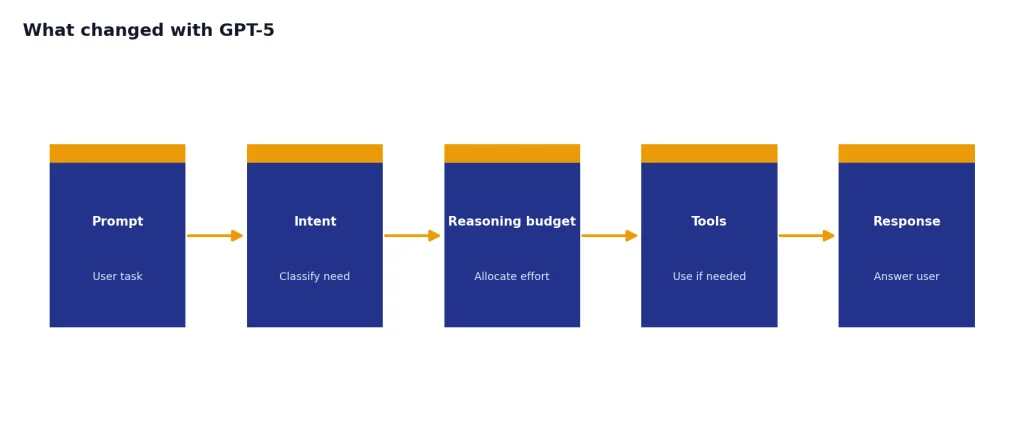

The short version is simple. GPT-5 was not just a larger chat model. It was OpenAI’s move toward a unified system that could answer quickly for simple prompts, reason longer for hard prompts, and reduce the need for users to manually choose between general, coding, and reasoning models.[1][7]

What changed with GPT-5

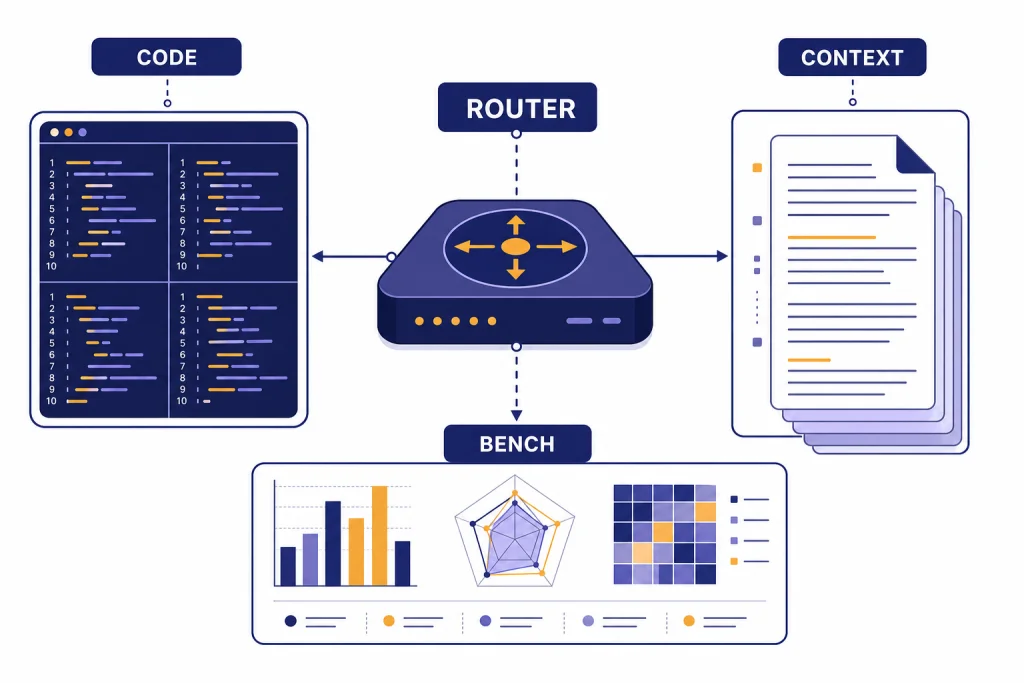

GPT-5 changed the product experience more than the name suggests. In ChatGPT, OpenAI described GPT-5 as a unified system with a fast model, a deeper thinking model, and a real-time router that chooses how much reasoning to apply based on the task, conversation type, tools, and user intent.[1][7] That meant a user could ask for a quick rewrite, a bug fix, or a multi-step plan without opening a model picker first.

The developer story was more explicit. OpenAI said GPT-5 in the API was the reasoning model that powered maximum performance in ChatGPT, while the ChatGPT product also used non-reasoning and router models behind the scenes.[2][7] This distinction matters. If you compare ChatGPT answers with API calls, you are not always comparing the same routing behavior.

The most visible gains were in coding, long-context work, structured reasoning, writing, and health-related answers. OpenAI’s launch page emphasized fewer hallucinations, better instruction following, less sycophancy, and stronger performance in three common ChatGPT use cases: writing, coding, and health.[1][7] If you want the broader model-family view, see all GPT models compared side by side.

GPT-5 also changed expectations around model access. Previous flagship reasoning models were often limited to paid tiers or exposed through separate selectors. GPT-5 put a reasoning-capable default experience in front of free ChatGPT users, while paid plans received higher usage limits or access to more capable variants.[1][7]

GPT-5 model lineup, context window, and pricing

The original GPT-5 API lineup centered on three models: gpt-5, gpt-5-mini, and gpt-5-nano.[2][11] The main GPT-5 model was the full-performance choice. Mini was the lower-cost option for well-defined tasks. Nano was the fastest and cheapest option for high-volume classification, extraction, and summarization work.[3][8]

| Model | Best fit | Context and output | Standard API price at launch |
|---|---|---|---|
| gpt-5 | Complex coding, reasoning, agents, analysis | 400,000-token context window and 128,000 max output tokens.[3][4] | $1.25 per 1M input tokens and $10.00 per 1M output tokens.[3][8] |
| gpt-5-mini | Production tasks that need lower cost and good quality | 400,000-token context window and 128,000 max output tokens.[3][4] | $0.25 per 1M input tokens and $2.00 per 1M output tokens.[3][8] |
| gpt-5-nano | Classification, extraction, routing, short summaries | 400,000-token context window and 128,000 max output tokens on OpenAI’s GPT-5 landing page.[3][4] | $0.05 per 1M input tokens and $0.40 per 1M output tokens.[3][8] |
| gpt-5-chat-latest | Chat-style API behavior closer to non-reasoning ChatGPT responses | OpenAI described it as the non-reasoning version used in ChatGPT.[2][3] | OpenAI listed it at the same $1.25 input and $10.00 output per 1M token price point as base GPT-5.[2][8] |

The context number needs careful reading. OpenAI’s model documentation lists GPT-5 at a 400,000-token context window and 128,000 max output tokens.[3][4] In developer materials, OpenAI also described the practical split as up to 272,000 input tokens and up to 128,000 reasoning and output tokens, for the same 400,000-token total.[2][4] For a deeper comparison across the model catalog, use our context window sizes for every GPT model guide.
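The split described above can be checked before sending a request. The following is a minimal sketch, assuming the 272,000 / 128,000 / 400,000 figures quoted in this section still apply; the function names are hypothetical helpers, not part of any OpenAI SDK, and the token counts must come from your own tokenizer.

```python
# Limits as quoted in the text above; confirm against current OpenAI docs.
CONTEXT_WINDOW = 400_000
MAX_INPUT_TOKENS = 272_000
MAX_OUTPUT_TOKENS = 128_000  # shared by reasoning tokens and visible output


def fits_gpt5_budget(input_tokens: int, requested_output_tokens: int) -> bool:
    """Return True if a request stays inside the quoted input/output split."""
    if input_tokens < 0 or requested_output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return (
        input_tokens <= MAX_INPUT_TOKENS
        and requested_output_tokens <= MAX_OUTPUT_TOKENS
        and input_tokens + requested_output_tokens <= CONTEXT_WINDOW
    )


def remaining_output_budget(input_tokens: int) -> int:
    """Tokens left for reasoning plus output once the prompt is counted."""
    if not 0 <= input_tokens <= MAX_INPUT_TOKENS:
        raise ValueError("input is outside the quoted 272,000-token limit")
    return min(MAX_OUTPUT_TOKENS, CONTEXT_WINDOW - input_tokens)
```

Because reasoning tokens draw on the same 128,000-token allowance as the visible answer, a long prompt paired with high reasoning effort can leave less room for the final output than the headline numbers suggest.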

For developers, the price-performance story was a major part of the launch. TechCrunch reported the same $1.25 input and $10.00 output per 1M token base GPT-5 pricing and framed it as aggressively competitive against other frontier models at the time.[8][7] For current cost planning across the full API lineup, see our OpenAI API pricing reference.
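To make the launch prices concrete, here is a rough cost estimator. It is a sketch built only on the launch figures quoted in the table above, and it assumes reasoning tokens are billed as output tokens, which you should confirm against OpenAI's current pricing page. `estimate_cost` and `LAUNCH_PRICES` are illustrative names.

```python
from decimal import Decimal

# Launch prices in USD per 1M tokens (input, output), as quoted above.
# These are a snapshot and may no longer be current.
LAUNCH_PRICES = {
    "gpt-5": (Decimal("1.25"), Decimal("10.00")),
    "gpt-5-mini": (Decimal("0.25"), Decimal("2.00")),
    "gpt-5-nano": (Decimal("0.05"), Decimal("0.40")),
}
PER_MILLION = Decimal(1_000_000)


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the USD cost of one request at the quoted launch prices.

    output_tokens should include any reasoning tokens (assumption: they are
    billed at the output rate).
    """
    input_price, output_price = LAUNCH_PRICES[model]
    total = input_price * input_tokens + output_price * output_tokens
    return total / PER_MILLION


if __name__ == "__main__":
    for name in LAUNCH_PRICES:
        print(name, estimate_cost(name, 100_000, 10_000))
```

Running the same 100,000-input, 10,000-output workload through each model shows how quickly output-heavy tasks dominate the bill, since output tokens cost eight times as much as input tokens at every tier in this lineup.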

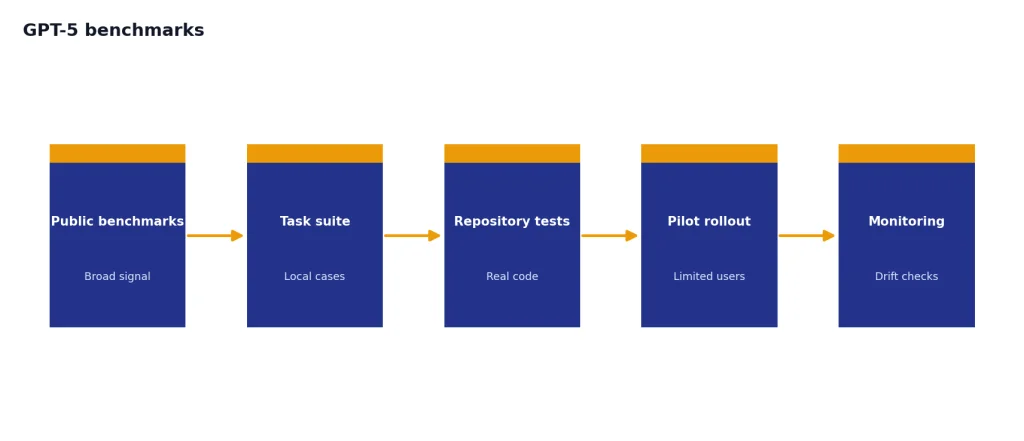

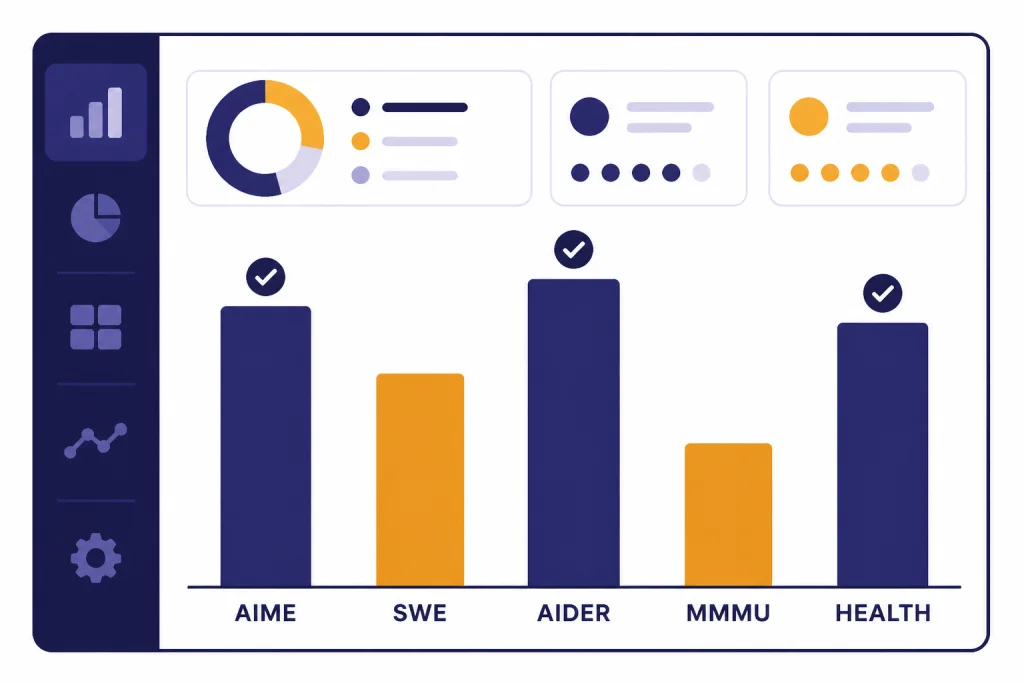

GPT-5 benchmarks

GPT-5’s benchmark profile was strongest in coding, math, multimodal understanding, long-context retrieval, and health-related evaluations. OpenAI reported 94.6% on AIME 2025 without tools, 74.9% on SWE-bench Verified, 88.0% on Aider Polyglot, 84.2% on MMMU, and 46.2% on HealthBench Hard.[1][7] OpenAI reported GPT-5 Pro with extended reasoning at 88.4% on GPQA without tools, while TechCrunch’s coverage of OpenAI’s briefing cited an 89.4% first-try GPQA Diamond figure.[1][7]

| Benchmark | What it measures | Reported GPT-5 result | How to read it |
|---|---|---|---|
| AIME 2025 | Competition math | 94.6% without tools.[1][7] | Strong signal for symbolic reasoning, but not a complete measure of business usefulness. |
| SWE-bench Verified | Real GitHub issue resolution | 74.9%.[1][7] | Useful for coding assistants, though launch-chart presentation issues later drew scrutiny.[10][7] |
| Aider Polyglot | Multi-language code editing | 88.0%.[1][9] | BenchGecko later listed GPT-5 as the Aider Polyglot leader with a score of 88.0 across 53 tested models.[9][1] |
| MMMU | Multimodal understanding | 84.2%.[1][7] | Relevant for chart, image, document, and screenshot reasoning, not image generation quality. |
| HealthBench Hard | Difficult health-answer quality | 46.2%.[1][11] | Useful as a safety and quality signal, but not a reason to treat ChatGPT as a doctor. |

Benchmarks should guide expectations, not replace testing. GPT-5’s 74.9% SWE-bench Verified result was strong, but PC Gamer documented criticism of OpenAI’s launch charts and questions about SWE-bench tasks missing from the presented results.[10][7] The practical takeaway is that GPT-5 was a clear coding upgrade, but teams should still run their own repository-specific tests before switching production workflows.
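One lightweight way to run repository-specific tests is to score the same task set with the current model and the candidate, then look at regressions rather than the headline pass rate alone. The sketch below assumes you already have per-task pass/fail results from your own harness; `TaskResult`, `pass_rate`, and `regressions` are hypothetical names, not part of any benchmark tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskResult:
    task_id: str
    passed: bool


def pass_rate(results: list[TaskResult]) -> float:
    """Fraction of tasks that passed."""
    if not results:
        raise ValueError("no results to score")
    return sum(r.passed for r in results) / len(results)


def regressions(baseline: list[TaskResult], candidate: list[TaskResult]) -> list[str]:
    """Task ids that passed with the baseline model but fail with the candidate."""
    candidate_by_id = {r.task_id: r.passed for r in candidate}
    return sorted(
        r.task_id
        for r in baseline
        if r.passed and candidate_by_id.get(r.task_id) is False
    )
```

A candidate can post a higher pass rate overall and still break tasks that used to work, which is why the regression list is often the more useful output when deciding whether to switch.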

For readers choosing a model mainly by raw capability, see our most powerful GPT model benchmark showdown. For developers choosing by response time, our fastest GPT model guide is often more useful than benchmark leaderboards.

Features that matter in daily use

Coding and software work

GPT-5 was OpenAI’s strongest coding model at launch, with particular improvements in front-end generation, debugging larger repositories, and agentic coding workflows.[1][2] OpenAI also said GPT-5 beat OpenAI o3 at front-end web development 70% of the time in internal testing.[2][7] That does not mean every generated app is production-ready. It means GPT-5 became a better partner for scaffolding, refactoring, diagnosing bugs, and converting vague product ideas into a first working version.

For coding, GPT-5’s best use is iterative. Give it a small target, run tests, paste errors back, and ask for a constrained patch. For full codebase work, use its large context window carefully and include file maps, failing tests, and acceptance criteria. Our best GPT model for coding guide covers those tradeoffs in more detail.
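The iterative loop above mostly comes down to assembling a tight prompt on each round. Here is a minimal sketch of one way to do that; `build_patch_prompt` and its parameters are illustrative, and the wording of the instructions is an assumption you should adapt to your own workflow.

```python
def build_patch_prompt(
    file_map: dict[str, str],
    failing_output: str,
    acceptance_criteria: list[str],
    max_output_chars: int = 4000,
) -> str:
    """Assemble a constrained patch request from a file map and a failing test log."""
    files = "\n".join(
        f"- {path}: {summary}" for path, summary in sorted(file_map.items())
    )
    criteria = "\n".join(f"- {item}" for item in acceptance_criteria)
    # Keep the tail of the log, where test runners usually print the failure.
    log_tail = failing_output[-max_output_chars:]
    return (
        "Propose the smallest patch that makes the failing test pass.\n"
        "Change only the files listed below and return a unified diff.\n\n"
        f"File map:\n{files}\n\n"
        f"Failing test output:\n{log_tail}\n\n"
        f"Acceptance criteria:\n{criteria}\n"
    )


if __name__ == "__main__":
    print(
        build_patch_prompt(
            {"src/cart.py": "cart totals", "tests/test_cart.py": "unit tests"},
            "AssertionError: expected 10.0, got 9.99",
            ["All tests in tests/test_cart.py pass", "No public API changes"],
        )
    )
```

Truncating the log and naming the editable files keeps each round small, which makes the resulting patch easier to review and the next failure easier to paste back.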

Writing and analysis

OpenAI emphasized GPT-5’s improvements in writing, instruction following, and reduced sycophancy.[1][7] In practice, that makes it better for structured drafts, editing under style constraints, research briefs, and documents that require a specific tone. It is less useful when a user wants the model to be endlessly agreeable. Strong writing prompts should specify audience, format, exclusions, and examples.

If your main work is content, compare GPT-5 with alternatives in best GPT model for writing. The best model for writing is not always the highest-scoring reasoning model. Style control, latency, and editing discipline can matter more.

Vision and multimodal input

The GPT-5 API supports text input and output plus image input, while OpenAI’s GPT-5 landing page describes the models as text and vision models.[3][4] That makes GPT-5 useful for reading screenshots, diagrams, charts, and visual bug reports. It does not make GPT-5 an image generation model. For that distinction, see best GPT model for image generation and our DALL-E 3 guide.

Health answers

OpenAI highlighted health as one of GPT-5’s major improvement areas, including better context awareness and safer answers.[1][7] GPT-5 can help users prepare questions for a clinician, summarize medical documents, or explain general concepts. It should not diagnose you, replace a medical professional, or make emergency decisions. Treat any health answer as a starting point for discussion with a qualified professional.

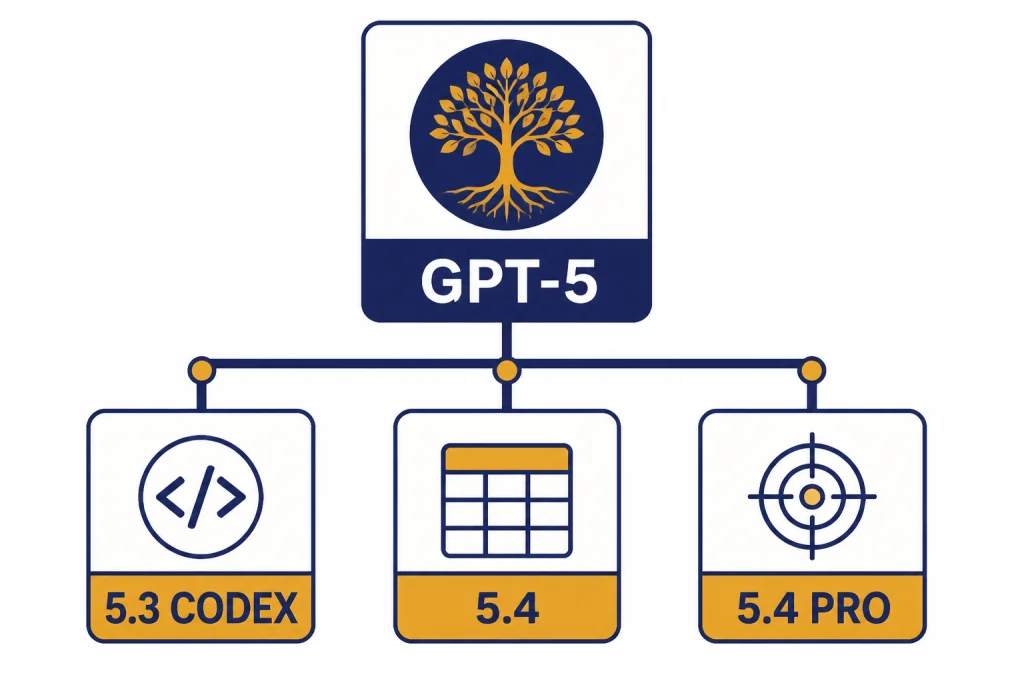

GPT-5 vs newer GPT-5 family models

By March 12, 2026, the GPT-5 family had moved beyond the original August 2025 release. OpenAI released GPT-5.4 on March 5, 2026, positioning it as a more capable and efficient frontier model for professional work across ChatGPT, the API, and Codex.[6][12] That does not erase the original GPT-5. It means “GPT-5” can refer either to the base model or to the broader family.

| Model generation | Release timing | Main emphasis | API price signal |
|---|---|---|---|
| Original GPT-5 | Released August 7, 2025.[1][7] | Unified ChatGPT, coding, reasoning, writing, health, and long-context work.[1][2] | $1.25 input and $10.00 output per 1M tokens for the base model.[3][8] |
| GPT-5.3-Codex | OpenAI described GPT-5.4 as incorporating the coding capabilities of GPT-5.3-Codex.[6][12] | Coding and Codex-centered workflows.[6][12] | Use current OpenAI pricing before deployment because Codex pricing can differ by model and tier.[6][12] |
| GPT-5.4 | Released March 5, 2026.[6][12] | Professional work, spreadsheets, presentations, documents, computer use, and tool-heavy agents.[6][12] | $2.50 input, $0.25 cached input, and $15.00 output per 1M tokens for standard GPT-5.4.[6][13] |
| GPT-5.4 Pro | Released with GPT-5.4 on March 5, 2026.[6][12] | Maximum performance on complex tasks.[6][12] | $30.00 input and $180.00 output per 1M tokens for GPT-5.4 Pro.[6][13] |

The practical decision is not “GPT-5 or nothing.” Use the base GPT-5 class when cost matters and the task is within its strengths. Use a newer GPT-5 iteration when the task is high-value, tool-heavy, document-heavy, or sensitive to mistakes. For the latest iteration coverage, see GPT-5.3 and compare it with newer releases when available.

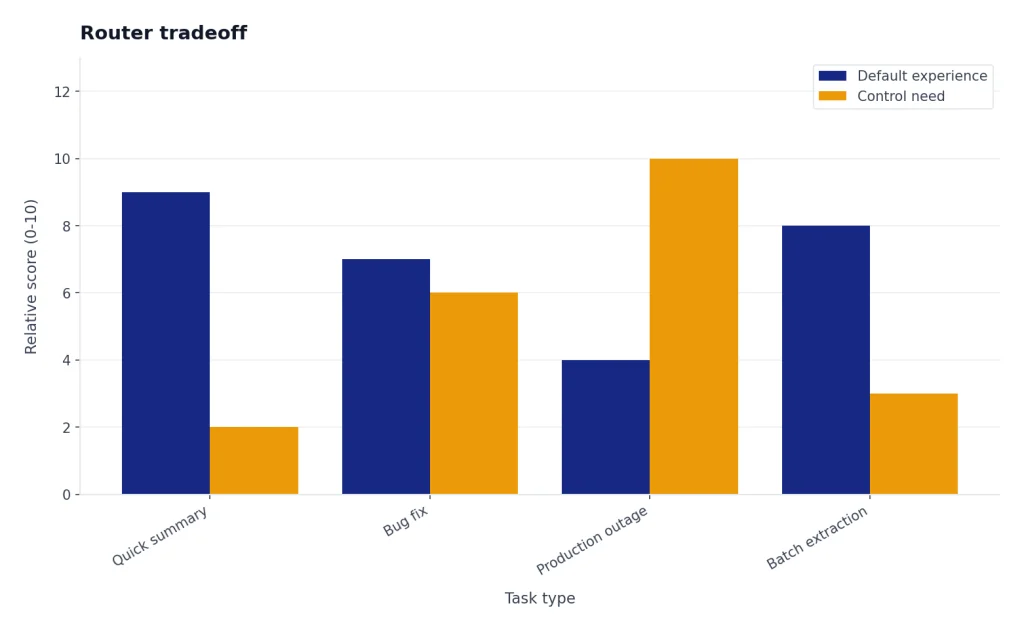

Original analysis: the router tradeoff

The most important GPT-5 product decision was the router. It made ChatGPT simpler for casual users because they no longer needed to understand the difference between a fast model, a reasoning model, and a code-focused model.[1][7] It also reduced control for power users who had built workflows around choosing a specific model for a specific job.

This is the router tradeoff: automatic intelligence allocation improves the default experience, but it can hide the system’s reasoning budget from the user. A student asking for a quick summary benefits from not thinking about model choice. A developer debugging a production outage may want to force deeper reasoning, inspect tool use, and keep behavior consistent across runs.

The launch showed both sides. GPT-5 was designed to simplify the model picker, but reporting after launch described user backlash, restored model choices, and criticism of the early rollout.[10][7] That does not mean the router idea failed. It means automatic model selection needs transparency. Users should know when the system is thinking deeply, when it is answering quickly, and how to override that choice.

Our recommendation is to treat GPT-5 like a smart default, not a guarantee. In ChatGPT, explicitly ask it to think harder when stakes are high. In the API, choose the model and reasoning settings deliberately. For cost-sensitive systems, route simple extraction and classification to smaller models and reserve full GPT-5-class reasoning for tasks where error cost is higher than token cost. For budget-first comparisons, start with cheapest GPT model.
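In an API pipeline, that recommendation can be expressed as a small routing function. The sketch below uses the three launch model names from this article. The task categories, the `high_stakes` flag, and the effort labels ("minimal", "low", "medium", "high") are assumptions for illustration, so check OpenAI's current API reference for the exact reasoning parameter and accepted values before wiring this into a real request.

```python
from dataclasses import dataclass

# Task types treated as narrow and repetitive (illustrative categories).
SIMPLE_TASKS = {"classification", "extraction", "routing", "short_summary"}


@dataclass(frozen=True)
class RouteDecision:
    model: str
    reasoning_effort: str  # assumed to map onto the API's reasoning effort setting


def choose_route(task_type: str, high_stakes: bool = False) -> RouteDecision:
    """Pick a model and reasoning effort by task type and error cost."""
    if high_stakes:
        # Error cost outweighs token cost: use the full model and think longer.
        return RouteDecision("gpt-5", "high")
    if task_type in SIMPLE_TASKS:
        return RouteDecision("gpt-5-nano", "minimal")
    if task_type == "well_defined":
        return RouteDecision("gpt-5-mini", "low")
    return RouteDecision("gpt-5", "medium")
```

Keeping the decision in one explicit function makes routing auditable: unlike the automatic router in ChatGPT, you can log which model and effort level handled each request and change the rules deliberately.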

Who should use GPT-5

GPT-5 is a strong default for users who want one model for writing, coding, analysis, image understanding, and multi-step reasoning. It is especially useful when you do not want to decide among separate reasoning and non-reasoning models. It is also a good API baseline for teams that need high quality without immediately paying for the newest Pro-class model.

- Use GPT-5 for coding when you need repository reasoning, bug diagnosis, test-driven patches, or front-end prototypes.[1][2]

- Use GPT-5 for analysis when you need long documents summarized, compared, or turned into structured output within a large context window.[2][4]

- Use GPT-5 mini or nano when the task is narrow, repetitive, and cost-sensitive.[3][8]

- Use a newer GPT-5 family model when the job involves professional deliverables, heavy tool use, computer use, or expensive errors.[6][12]

Do not choose GPT-5 only because it has a higher benchmark score than an older model. Choose it when the work benefits from reasoning, context, and instruction following. For basic chat, short rewrites, routing, or extraction, smaller models can be faster and cheaper. For voice workflows, see ChatGPT Voice Mode review.

Frequently asked questions

When was GPT-5 released?

OpenAI released GPT-5 on August 7, 2025.[1][7] At launch it rolled out to ChatGPT users and, through the API, to developers.[2][7] OpenAI’s Help Center described GPT-5 as the next flagship model and the new default for logged-in ChatGPT users.[5][7]

Is GPT-5 available for free?

Yes. OpenAI said GPT-5 was available to all users, with Plus subscribers receiving more usage and Pro subscribers receiving access to GPT-5 Pro.[1][7] The Help Center also said GPT-5 was rolling out across Free, Plus, Pro, and Team plans worldwide.[5][7] Free access still depends on usage limits and the current ChatGPT plan rules.

What is GPT-5’s context window?

OpenAI lists GPT-5 with a 400,000-token context window and 128,000 max output tokens.[3][4] Developer materials explain that GPT-5 API models can accept up to 272,000 input tokens and emit up to 128,000 reasoning and output tokens within that total.[2][4] This makes GPT-5 suitable for large documents and codebases, but retrieval quality still depends on prompt structure.

How much does GPT-5 cost in the API?

At launch, the base GPT-5 API price was $1.25 per 1M input tokens and $10.00 per 1M output tokens.[3][8] GPT-5 mini was listed at $0.25 input and $2.00 output per 1M tokens, while GPT-5 nano was listed at $0.05 input and $0.40 output per 1M tokens.[3][8] Always check OpenAI’s current pricing before deploying because model prices can change.

What benchmarks did GPT-5 score best on?

OpenAI reported GPT-5 at 94.6% on AIME 2025, 74.9% on SWE-bench Verified, 88.0% on Aider Polyglot, 84.2% on MMMU, and 46.2% on HealthBench Hard.[1][7] BenchGecko later listed GPT-5 at 88.0 on Aider Polyglot across 53 tested models.[9][1] These numbers are useful, but your own task-specific tests matter more.

Is GPT-5 better than GPT-4o?

For reasoning, coding, long-context work, and many structured tasks, GPT-5 was designed to surpass GPT-4o and earlier models.[1][7] OpenAI also said GPT-5 reduced hallucinations and improved instruction following compared with prior systems.[1][7] Some users still preferred older model personalities during the rollout, which is a separate issue from benchmark capability.[10][7]

Is GPT-5 still the latest OpenAI model?

No. By this March 12, 2026 update, OpenAI had released GPT-5.4 on March 5, 2026.[6][12] GPT-5 remains important because it is the base generation and the reference point for pricing, routing, and benchmarks. Newer GPT-5-family models may be better for professional work, tool use, and high-value tasks.[6][12]

Bottom line

GPT-5 was the release that turned OpenAI’s model lineup toward a unified, reasoning-capable default. It launched on August 7, 2025, brought a 400,000-token context window to the main API model, and set strong launch benchmarks in coding, math, multimodal understanding, and health.[1][3][7]

Use GPT-5 as the baseline for serious ChatGPT and API work, then move up to newer GPT-5-family models when the task justifies the extra cost. The next thing to watch is not just a higher benchmark score. Watch how OpenAI exposes routing, reasoning effort, tool use, and context control to users who need predictable results.