GPT-4o is OpenAI’s omni-modal GPT model, introduced as a step toward real-time interaction across text, audio, vision, and video inputs, with text, audio, and image outputs described in OpenAI’s launch materials.[1] As of March 13, 2026, its availability is split: GPT-4o has been retired from the normal ChatGPT model picker, but OpenAI says API access continues unchanged.[3] That makes GPT-4o less of a default ChatGPT choice and more of a legacy API workhorse for teams that still depend on its speed, vision input, structured outputs, function calling, and predictable behavior.[2]

What GPT-4o is

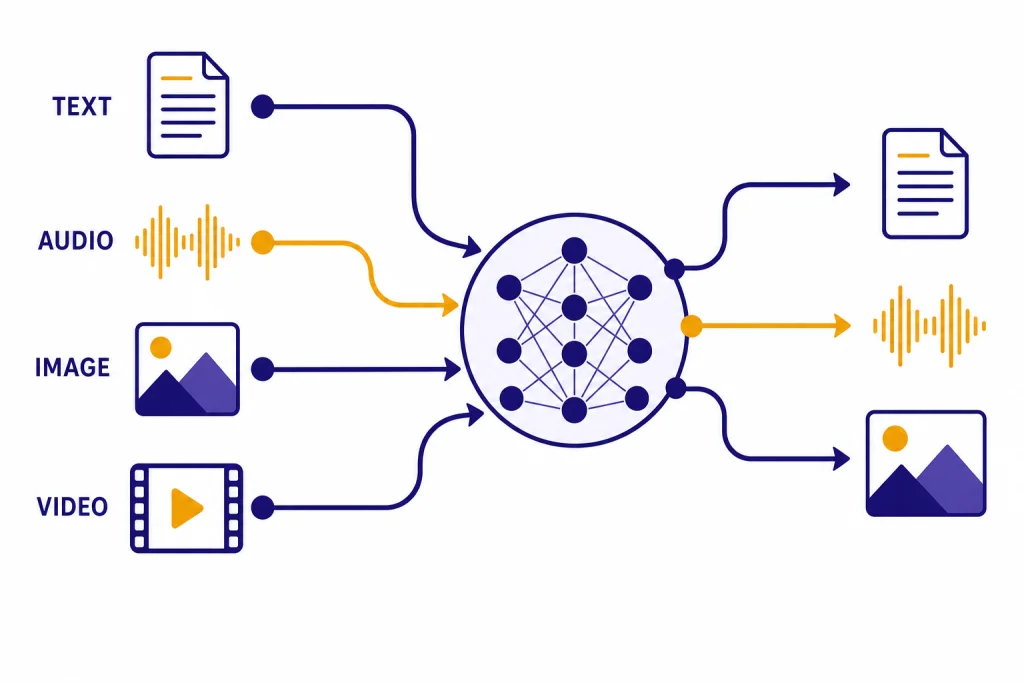

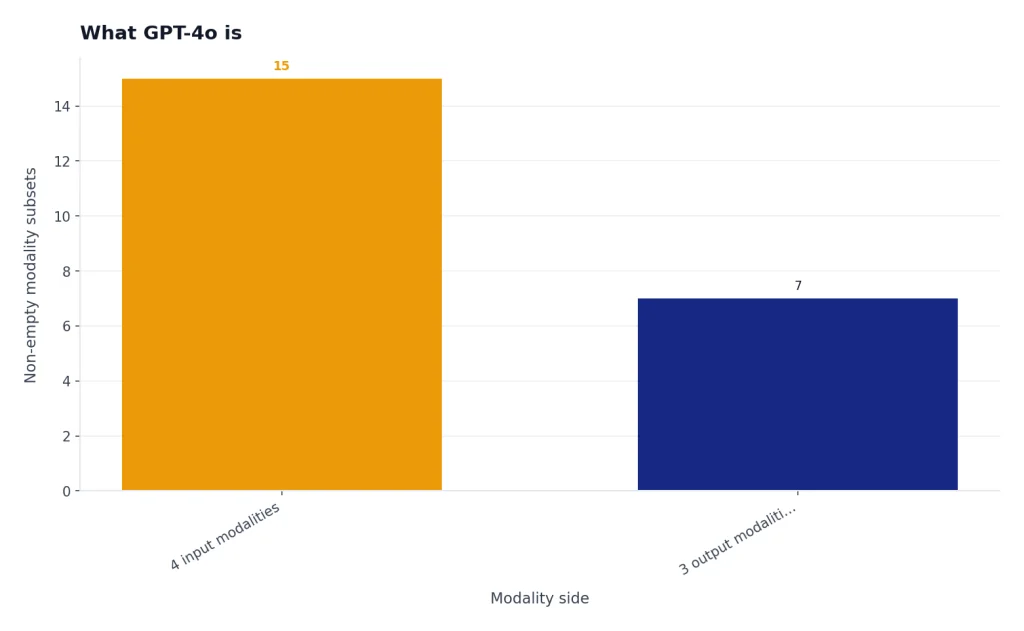

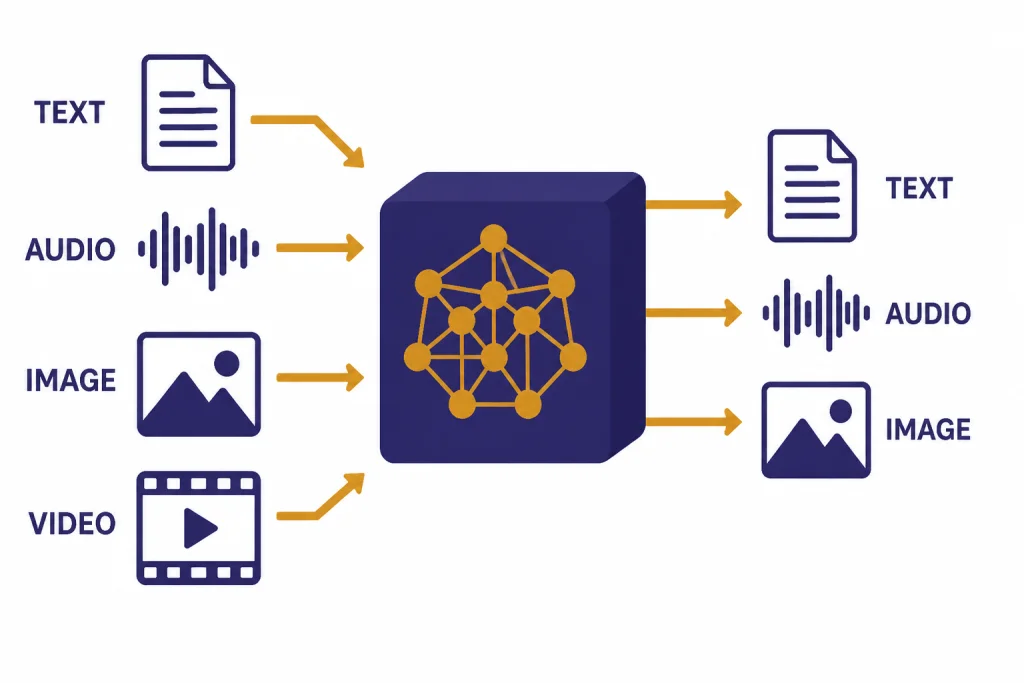

GPT-4o is the model OpenAI described as an “omni” model because it was designed around multiple input and output modalities rather than text alone.[1] At launch, OpenAI said GPT-4o could accept combinations of text, audio, image, and video as input, and generate combinations of text, audio, and image as output.[1] The “o” stands for “omni,” and that naming mattered because the model was meant to reduce the gap between typing to a chatbot and interacting with a system that can see, hear, and respond more naturally.[1]

OpenAI’s API documentation later presented GPT-4o as a fast, intelligent, flexible GPT model that accepts text and image inputs and produces text outputs in the standard API model card.[2] That API description is narrower than the original launch framing because OpenAI separated some audio and realtime use cases into related model families and endpoints over time.[2] For readers comparing GPT-4o with newer releases, our broader guide comparing all GPT models side by side is the better starting point.

The short version: GPT-4o was the bridge between the GPT-4 era and the later multimodal model stack. It made GPT-4-class work feel faster and more interactive, especially for vision-heavy prompts, voice-like experiences, and applications that needed tool calls or structured responses. It is no longer the main ChatGPT default, but it remains important because many applications were built around its API behavior.

Current status in ChatGPT and the API

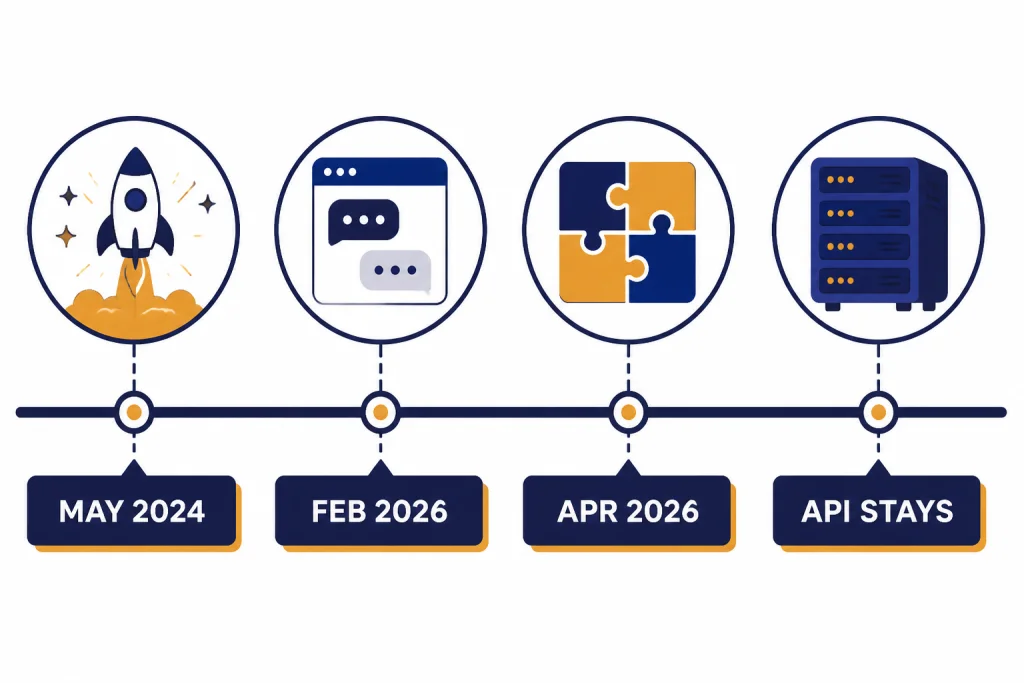

As of March 13, 2026, GPT-4o is not a normal ChatGPT model-picker option for most users. OpenAI’s Help Center says GPT-4o and several other ChatGPT models were deprecated in ChatGPT on February 13, 2026, while continuing to be available in the API.[3] OpenAI also says ChatGPT Business, Enterprise, and Edu customers retain access to GPT-4o inside Custom GPTs until April 3, 2026.[3]

This distinction matters. If you are trying to select GPT-4o inside ChatGPT, you should expect the current ChatGPT experience to route you to newer default models instead. If you are calling the OpenAI API, the GPT-4o model card still lists GPT-4o as an available model with its own context window, output limit, pricing, endpoints, and feature support.[2]

Older ChatGPT conversations may also behave differently when continued after the retirement date. OpenAI says conversations and projects using deprecated models default to newer equivalents going forward.[3] That means a saved exchange that originally used GPT-4o may not reproduce the same tone or answer style if you reopen it later. For everyday ChatGPT use, look at our ChatGPT Voice Mode review if your main interest is spoken interaction rather than the API model named GPT-4o.

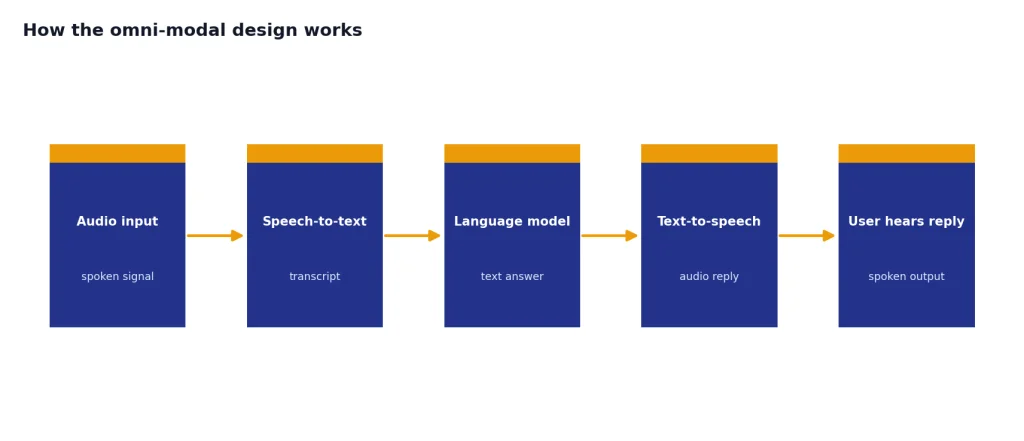

How the omni-modal design works

Before GPT-4o, voice-style systems often chained separate models together. A speech-to-text system would transcribe audio, a language model would answer, and a text-to-speech system would read the answer back. OpenAI positioned GPT-4o differently: it said the model was trained end-to-end across text, vision, and audio, so the same neural network could process richer signals rather than relying only on a transcript.[1]

That design explains why the launch emphasized response time. OpenAI said GPT-4o could respond to audio input in as little as 232 milliseconds, with an average of 320 milliseconds.[1] Those numbers helped define the original promise of GPT-4o: a model that could feel closer to a live conversation than earlier text-first systems. They also made GPT-4o relevant to developers comparing latency, not just benchmark accuracy. For a broader speed-focused view, see our fastest GPT model comparison.

For vision work, GPT-4o sits in the lineage that followed earlier image-understanding models. It can interpret screenshots, charts, product photos, forms, and diagrams when exposed through supported interfaces.[2] If you are comparing it with older vision-specific behavior, our GPT-4 Vision guide gives useful historical context.

The omni-modal label does not mean every modality is exposed in every product surface. In the API model card, GPT-4o is listed with text input and output, image input, and no audio or video support on that specific model page.[2] For audio transcription specifically, OpenAI has separate speech models, and our Whisper guide explains the older speech-to-text path.

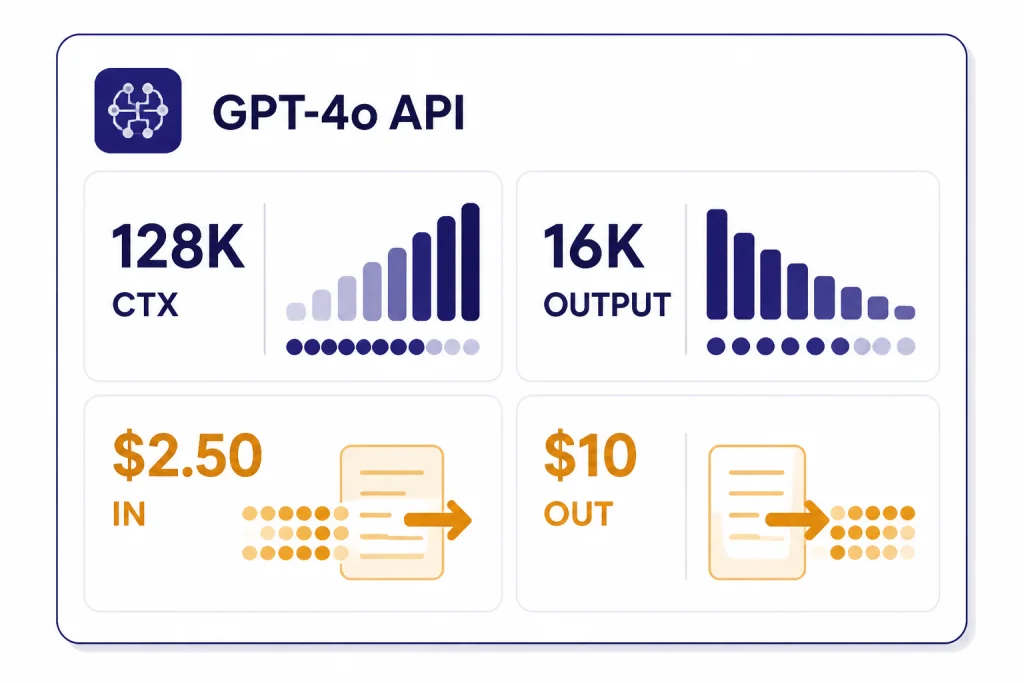

GPT-4o specs and API pricing

The API specs are the cleanest way to understand GPT-4o today. OpenAI’s GPT-4o model card lists a 128,000-token context window, a 16,384-token maximum output, and an October 01, 2023 knowledge cutoff.[2] If context length is your main decision factor, compare it with other models in our reference on context window sizes for every GPT model.

| Field | GPT-4o API value | Why it matters |
|---|---|---|
| Context window | 128,000 tokens[2] | Supports long prompts, large documents, extended chats, and multi-file context. |
| Maximum output | 16,384 tokens[2] | Allows long reports, structured exports, and detailed code responses. |
| Knowledge cutoff | October 01, 2023[2] | Marks the model’s built-in training knowledge boundary. |
| Text input price | $2.50 per 1M tokens[2] | Sets the cost of prompts, uploaded text, and retrieved context. |
| Cached input price | $1.25 per 1M tokens[2] | Reduces cost when reusing eligible repeated context. |
| Text output price | $10.00 per 1M tokens[2] | Sets the cost of generated answers, code, and structured responses. |
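To make the per-token prices in the table concrete, here is a minimal Python sketch of a per-request cost estimate. The three rates are copied from the table; the function name `estimate_cost` and the sample request sizes are illustrative assumptions, and real bills depend on how OpenAI counts and caches tokens for your traffic.

```python
# Prices in USD per 1M tokens, copied from the GPT-4o table above.
INPUT_PER_M = 2.50
CACHED_INPUT_PER_M = 1.25
OUTPUT_PER_M = 10.00


def estimate_cost(input_tokens: int, output_tokens: int, cached_tokens: int = 0) -> float:
    """Estimate the USD cost of one request (hypothetical helper).

    cached_tokens is the portion of input_tokens billed at the cached rate.
    """
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    uncached_tokens = input_tokens - cached_tokens
    total = (
        uncached_tokens * INPUT_PER_M
        + cached_tokens * CACHED_INPUT_PER_M
        + output_tokens * OUTPUT_PER_M
    ) / 1_000_000
    return round(total, 6)


if __name__ == "__main__":
    # Hypothetical request: 20,000 prompt tokens (half of them cached), 1,000 output tokens.
    print(estimate_cost(20_000, 1_000, cached_tokens=10_000))
```

With these assumed sizes the estimate comes to $0.0475 per request, versus $0.06 if nothing is cached, which shows how much of the bill a reusable prompt prefix can offset.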

OpenAI’s model card also lists support for streaming, function calling, structured outputs, fine-tuning, distillation, and predicted outputs for GPT-4o.[2] Those features are a major reason some teams keep GPT-4o in production even after ChatGPT moved on. If your decision is mostly financial, use our OpenAI API pricing and cheapest GPT model guides alongside the official price sheet.

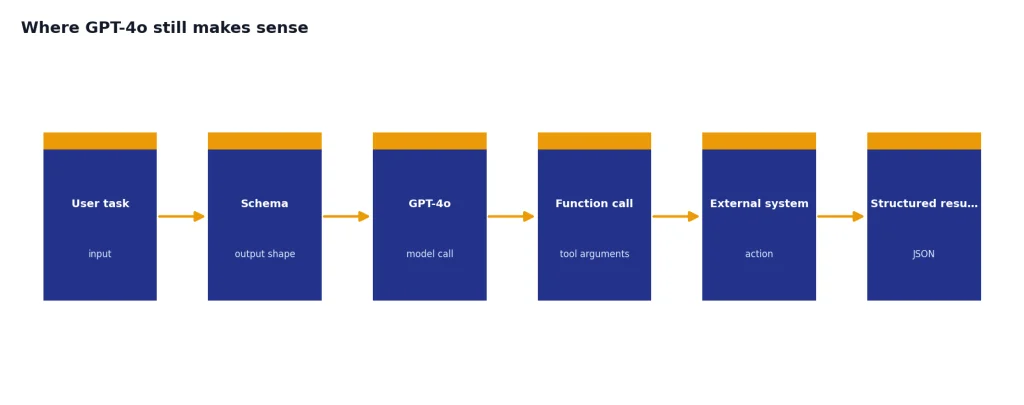

Where GPT-4o still makes sense

GPT-4o still makes sense when you need stable API behavior, multimodal input, and mature tool support more than you need the newest ChatGPT default. It is especially useful for applications that already passed evaluation on GPT-4o and would require a careful migration to newer models.[2]

- Vision-assisted support. GPT-4o can read screenshots, error dialogs, product photos, and charts when image input is supported.[2]

- Structured business workflows. Its support for structured outputs and function calling helps developers return predictable JSON-like results and trigger external systems.[2]

- Long-context drafting. The 128,000-token context window can hold substantial policy documents, meeting notes, or code excerpts.[2]

- Legacy production apps. Teams with regression tests, prompts, and user expectations tuned to GPT-4o may prefer incremental migration instead of a sudden model swap.

- Balanced writing and analysis. GPT-4o remains a capable general model for summarization, rewriting, classification, and explanation tasks.[2]
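The vision and structured-output use cases in the list above can be combined in a single request. The sketch below only builds the request body as a Python dictionary and sends nothing over the network. The field layout follows the Chat Completions request format as we understand it, so verify it against the current API reference before relying on it. The schema, the function name `build_ticket_request`, and the example URL are hypothetical.

```python
import json

# Hypothetical JSON Schema for a support-ticket triage result.
TICKET_SCHEMA = {
    "type": "object",
    "properties": {
        "category": {"type": "string", "enum": ["billing", "bug", "how_to", "other"]},
        "summary": {"type": "string"},
        "needs_human": {"type": "boolean"},
    },
    "required": ["category", "summary", "needs_human"],
    "additionalProperties": False,
}


def build_ticket_request(user_text: str, screenshot_url: str) -> dict:
    """Build a request body that pairs text with an image and asks for schema-shaped JSON."""
    return {
        "model": "gpt-4o",
        "messages": [
            {"role": "system", "content": "Classify the support request."},
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": user_text},
                    {"type": "image_url", "image_url": {"url": screenshot_url}},
                ],
            },
        ],
        # Structured outputs: constrain the reply to the schema above.
        "response_format": {
            "type": "json_schema",
            "json_schema": {"name": "ticket_triage", "strict": True, "schema": TICKET_SCHEMA},
        },
    }


if __name__ == "__main__":
    body = build_ticket_request("The export button shows this error.", "https://example.com/error.png")
    print(json.dumps(body, indent=2))
```

Keeping the payload builder separate from the network call makes it easy to unit-test the schema and message layout, and to reuse the same body when trialing a different model name.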

For writing-heavy workloads, compare its style and controllability with our best GPT model for writing guide. For software work, use our best GPT model for coding comparison, because newer models may outperform GPT-4o on complex coding even when GPT-4o remains adequate for code explanation and light refactoring.

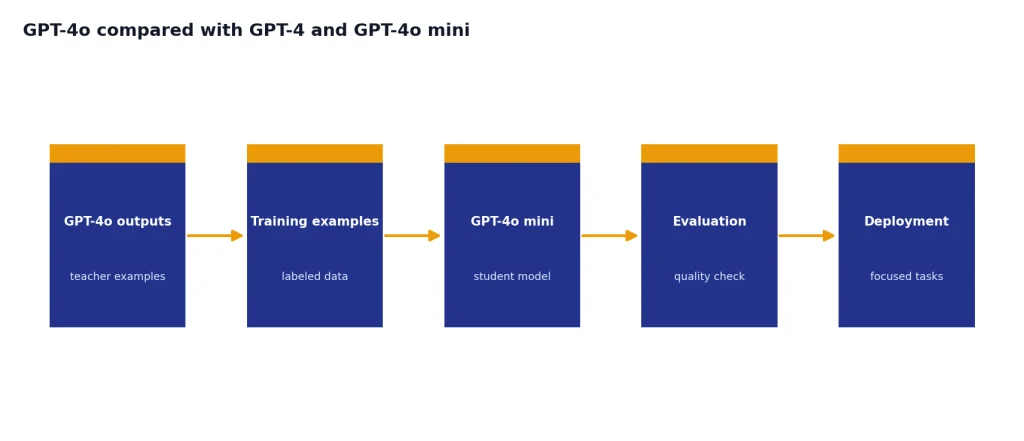

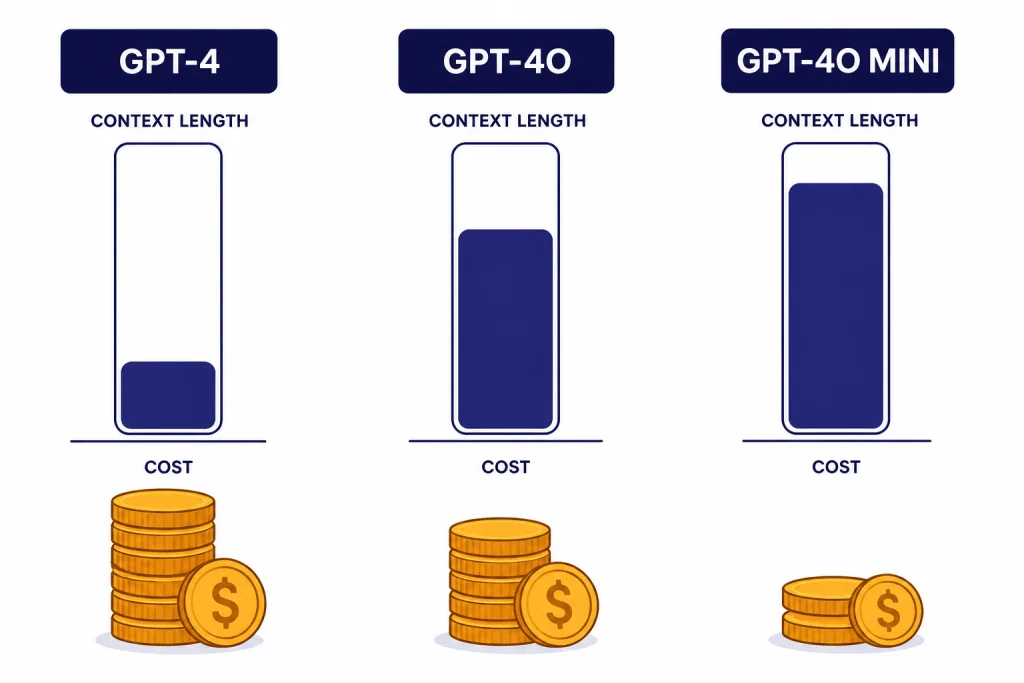

GPT-4o compared with GPT-4 and GPT-4o mini

GPT-4o replaced many practical uses of GPT-4 because it was faster, cheaper, and more multimodal. OpenAI’s launch post said GPT-4o matched GPT-4 Turbo performance on English text and code while being much faster and 50% cheaper in the API at launch.[1] Current API model cards show the older GPT-4 model with an 8,192-token context window and $30.00 input / $60.00 output pricing per 1M tokens, while GPT-4o lists a 128,000-token context window and $2.50 input / $10.00 output pricing per 1M tokens.[5][2]

| Model | Best fit | Context window | Input / output price | Notes |
|---|---|---|---|---|
| GPT-4 | Older high-intelligence GPT compatibility | 8,192 tokens[5] | $30.00 / $60.00 per 1M tokens[5] | Older model card; not the cost-effective choice for most new builds. |
| GPT-4o | Balanced legacy multimodal API apps | 128,000 tokens[2] | $2.50 / $10.00 per 1M tokens[2] | Strong fit for text, image input, structured outputs, and function calling. |
| GPT-4o mini | Lower-cost focused tasks | 128,000 tokens[6] | $0.15 / $0.60 per 1M tokens[6] | Better for classification, extraction, tagging, and high-volume simple prompts. |

GPT-4o mini is not just a “smaller GPT-4o.” OpenAI describes GPT-4o mini as a fast, affordable small model for focused tasks, and its model card says outputs from a larger model such as GPT-4o can be distilled to GPT-4o mini for lower cost and latency.[6] That makes GPT-4o mini attractive when you can tolerate lower capability in exchange for much lower unit cost.

The main comparison in 2026 is no longer GPT-4o versus GPT-4. It is GPT-4o versus newer GPT-5-era defaults in ChatGPT and newer API models. Use GPT-4o when you need its specific API contract or migration stability. Use newer models when you need the strongest reasoning, coding, or agentic behavior available in the current stack. For power comparisons, see our most powerful GPT model benchmark roundup.

Limitations, safety, and migration notes

GPT-4o is capable, but it is not current by default. Its API model card lists an October 01, 2023 knowledge cutoff, so built-in knowledge is not enough for questions about events, products, laws, or prices after that date.[2] Developers should pair it with retrieval, web search, or their own databases when freshness matters.
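One way to pair the model with fresher data is to place retrieved snippets in the prompt while staying inside the context window. The sketch below is a rough illustration under stated assumptions: the 4-characters-per-token estimate is a crude heuristic and not OpenAI's tokenizer, and `rough_tokens` and `pack_context` are hypothetical helper names. The two limits are the model card figures cited earlier.

```python
CONTEXT_WINDOW = 128_000  # tokens, per the GPT-4o model card figure cited above
MAX_OUTPUT = 16_384       # tokens reserved for the model's reply


def rough_tokens(text: str) -> int:
    """Crude token estimate: about 4 characters per token (an assumption)."""
    return max(1, len(text) // 4)


def pack_context(question: str, snippets: list[str],
                 budget: int = CONTEXT_WINDOW - MAX_OUTPUT) -> str:
    """Append snippets in order until the estimated token budget would be exceeded."""
    header = "Answer using only the context below.\n\n"
    footer = f"\n\nQuestion: {question}"
    used = rough_tokens(header) + rough_tokens(footer)
    kept = []
    for snippet in snippets:
        cost = rough_tokens(snippet) + 1  # +1 allows for the separator
        if used + cost > budget:
            break  # stop at the first snippet that does not fit
        kept.append(snippet)
        used += cost
    return header + "\n---\n".join(kept) + footer


if __name__ == "__main__":
    # Hypothetical retrieved snippets, assumed to be ordered by relevance.
    docs = ["Price list updated in 2026: ...", "Policy change notice: ..."]
    print(pack_context("What is the current price?", docs))
```

In production you would replace the character heuristic with a real tokenizer count and decide how to rank snippets before packing them.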

OpenAI’s GPT-4o System Card, published August 8, 2024, describes safety work before release, including external red teaming, preparedness evaluations, and mitigations for areas such as unauthorized voice generation, speaker identification, unsupported sensitive-trait inference, and disallowed audio content.[4] That is important background for voice and multimodal deployments, because a model that can process richer signals can also create different abuse and privacy risks than a text-only model.

OpenAI has not published an official parameter count for GPT-4o. Treat any parameter-count claim you see elsewhere as unofficial unless OpenAI later publishes a figure. The same caution applies to hidden routing behavior inside ChatGPT: OpenAI documents product-level availability and model retirements, but it does not expose every internal routing decision to end users.[3]

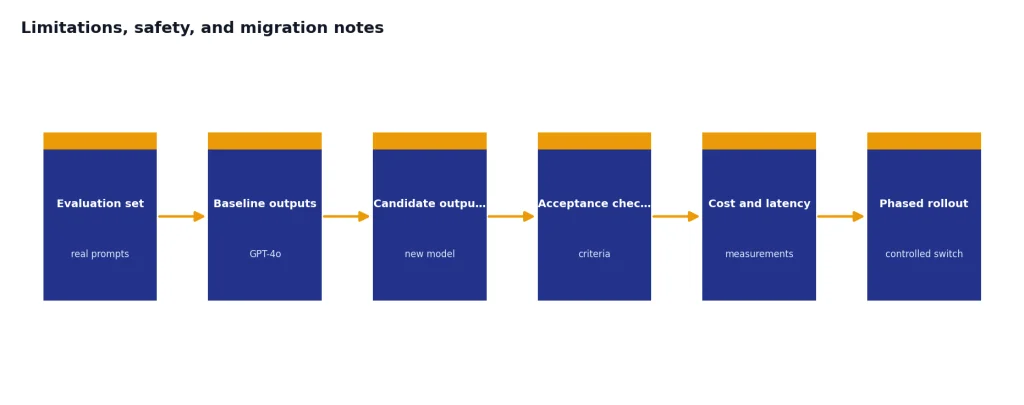

If you maintain a GPT-4o application, do not migrate by changing the model name and hoping for identical behavior. Build a small evaluation set from real prompts, include image and tool-call cases, compare outputs against your acceptance criteria, and measure cost and latency. Pay special attention to structured output schemas, refusal behavior, function-call arguments, and long-context recall. Those are the areas where a newer model can be better overall but still break a workflow tuned to GPT-4o.
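The evaluation loop described above can be sketched as a small harness that runs the same prompts through a baseline and a candidate model and reports regressions. Everything here is an illustrative assumption: the model functions are offline stand-ins for real API calls, and the acceptance check (valid JSON with two required keys) stands in for whatever criteria your application enforces.

```python
import json
from typing import Callable

# A "model" is any function from prompt text to raw output text.
# In a real migration each one would wrap an API call.
ModelFn = Callable[[str], str]

REQUIRED_KEYS = {"category", "summary"}  # hypothetical acceptance criterion


def passes(raw_output: str) -> bool:
    """Accept output only if it is a JSON object containing the required keys."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and REQUIRED_KEYS.issubset(data)


def compare(prompts: list[str], baseline: ModelFn, candidate: ModelFn) -> dict:
    """Run both models on the same prompts and collect prompts the candidate breaks."""
    baseline_pass = candidate_pass = 0
    regressions = []
    for prompt in prompts:
        base_ok = passes(baseline(prompt))
        cand_ok = passes(candidate(prompt))
        baseline_pass += base_ok
        candidate_pass += cand_ok
        if base_ok and not cand_ok:
            regressions.append(prompt)
    return {
        "baseline_pass": baseline_pass,
        "candidate_pass": candidate_pass,
        "regressions": regressions,
    }


if __name__ == "__main__":
    # Stand-in models so the sketch runs offline.
    def fake_baseline(prompt: str) -> str:
        return json.dumps({"category": "bug", "summary": prompt})

    def fake_candidate(prompt: str) -> str:
        return "Sure! Here is the JSON you asked for." if "image" in prompt else fake_baseline(prompt)

    print(compare(["text case", "image case"], fake_baseline, fake_candidate))
```

A fuller harness would also record latency and token usage per call, so the cost and speed comparison comes from the same run as the correctness check.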

Frequently asked questions

Is GPT-4o still available in ChatGPT?

For normal ChatGPT use, no. OpenAI says GPT-4o was deprecated in ChatGPT on February 13, 2026.[3] Business, Enterprise, and Edu customers retain GPT-4o access inside Custom GPTs until April 3, 2026.[3]

Is GPT-4o still available through the API?

Yes. OpenAI’s retirement notice says GPT-4o continues to be available in the API, and the GPT-4o API model card remains published with its specs, endpoints, and pricing.[3][2] API users should still monitor OpenAI notices for future retirement timelines.

What does the “o” in GPT-4o mean?

The “o” stands for “omni.” OpenAI used the name to describe a model designed for more natural interaction across text, audio, image, and video inputs, with text, audio, and image outputs in its launch framing.[1]

How large is GPT-4o’s context window?

OpenAI lists GPT-4o with a 128,000-token context window and a 16,384-token maximum output in the API model card.[2] That is large enough for substantial documents and long conversations, but developers still need chunking and retrieval for very large corpora.

How much does GPT-4o cost in the API?

OpenAI lists GPT-4o text pricing at $2.50 per 1M input tokens, $1.25 per 1M cached input tokens, and $10.00 per 1M output tokens.[2] Actual bills depend on prompt length, output length, caching eligibility, tools, and traffic volume.

Should I use GPT-4o or GPT-4o mini?

Use GPT-4o when you need stronger general capability, vision input, and mature structured workflow behavior. Use GPT-4o mini when cost and latency matter more and the task is focused, such as tagging, extraction, classification, or simple transformation.[6] Test both on your own prompts before switching production traffic.