The GPT-5.3 release was not one single model drop. It was a staged upgrade across ChatGPT and Codex: GPT-5.3-Codex arrived for agentic coding, GPT-5.3-Codex-Spark added a low-latency coding preview, and GPT-5.3 Instant became the default everyday ChatGPT model on March 3, 2026.[1][2][6][7] The practical change is that ChatGPT’s fast mode became less preachy, more direct, better at web-grounded answers, and available to developers as gpt-5.3-chat-latest.[1][4][10] The deeper reasoning upgrade moved quickly into GPT-5.4, which OpenAI released on March 5, 2026.[9][11]

What changed in the GPT-5.3 release

GPT-5.3 changed the fast, default ChatGPT experience more than it changed the top-end reasoning tier. GPT-5.3 Instant focused on tone, relevance, web-grounded answers, fewer unnecessary caveats, and lower hallucination rates, while GPT-5.3-Codex focused on agentic coding and computer-based work.[1][3][6]

The headline answer is simple: GPT-5.3 made ChatGPT feel more useful in ordinary conversations, but it was not the final March 2026 reasoning upgrade. OpenAI followed it with GPT-5.4 on March 5, 2026, positioning GPT-5.4 Thinking for deeper reasoning and professional work while leaving GPT-5.3 Instant as the fast everyday model.[2][9][11]

This matters if you are comparing the GPT-5.2 release, the GPT-5.1 update, and the new GPT-5.3 release. GPT-5.3 is best understood as the bridge between a more conversational ChatGPT and a more capable agentic stack.

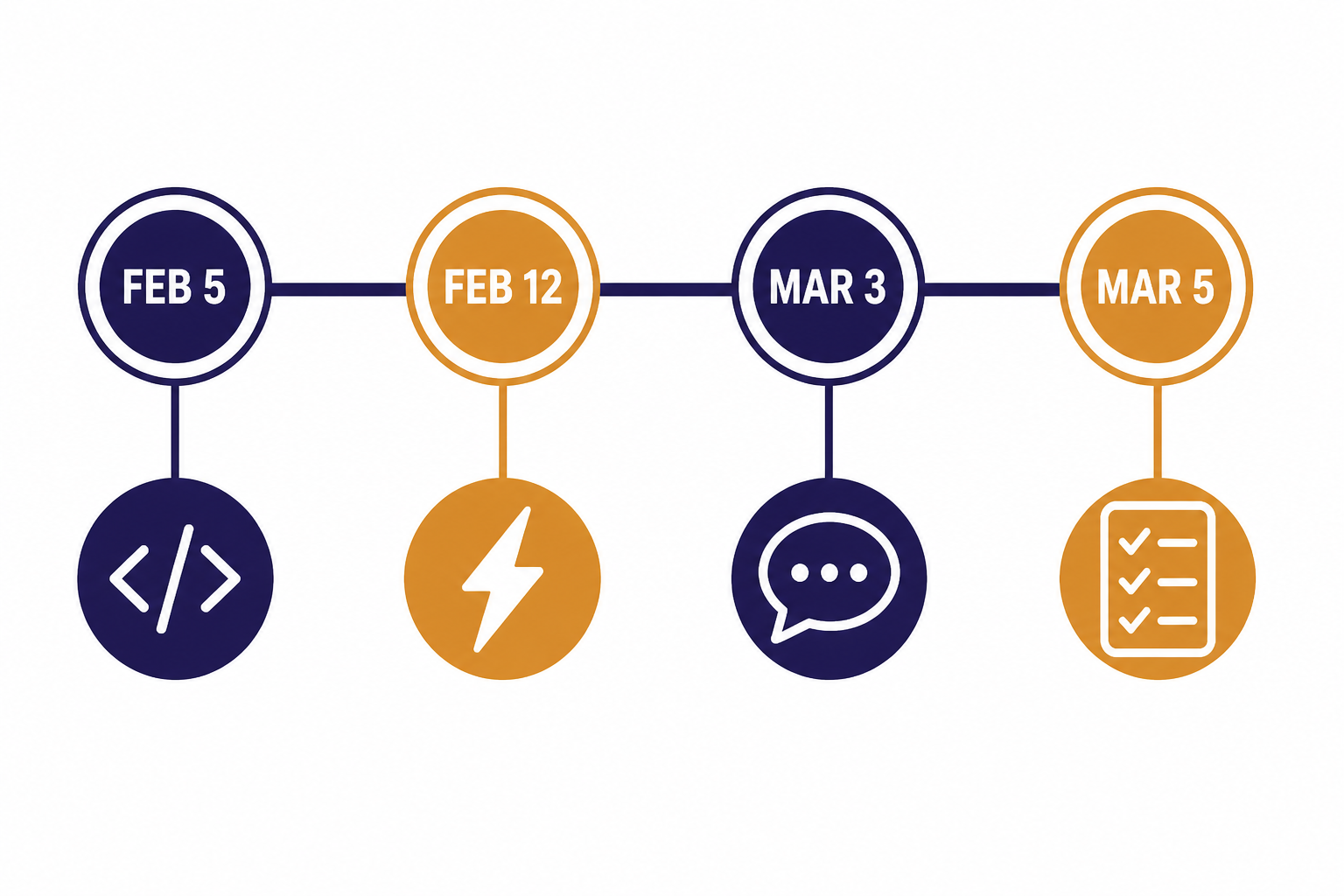

The GPT-5.3 release timeline

OpenAI spread the GPT-5.3 release across several product surfaces. That created some confusion because “GPT-5.3” referred to different things depending on whether you were using ChatGPT, Codex, or the API.

| Date | Release | What changed | Primary audience |
|---|---|---|---|
| February 5, 2026 | GPT-5.3-Codex | OpenAI introduced a coding and computer-work agent that it described as 25% faster than GPT-5.2-Codex for Codex users. | Developers and professional Codex users.[6][14] |
| February 12, 2026 | GPT-5.3-Codex-Spark | OpenAI released a smaller, low-latency coding model designed for real-time interaction and served on Cerebras hardware. | ChatGPT Pro users in Codex research preview and selected API design partners.[7][8] |
| March 3, 2026 | GPT-5.3 Instant | OpenAI made GPT-5.3 Instant available to all ChatGPT users and to developers as gpt-5.3-chat-latest. | Everyday ChatGPT users and API developers.[1][2][10] |
| March 5, 2026 | GPT-5.4 Thinking and GPT-5.4 Pro | OpenAI moved the reasoning track forward with GPT-5.4, incorporating GPT-5.3-Codex capabilities into a broader professional-work model. | Users who need deeper reasoning, tool use, research, and complex workflows.[9][11] |

The table shows the pattern. GPT-5.3 was a family checkpoint, not a single universal model replacing every GPT-5.2 mode at once. If you want the broader model ladder, see our side-by-side GPT models comparison and our context window comparison.

What changed in ChatGPT

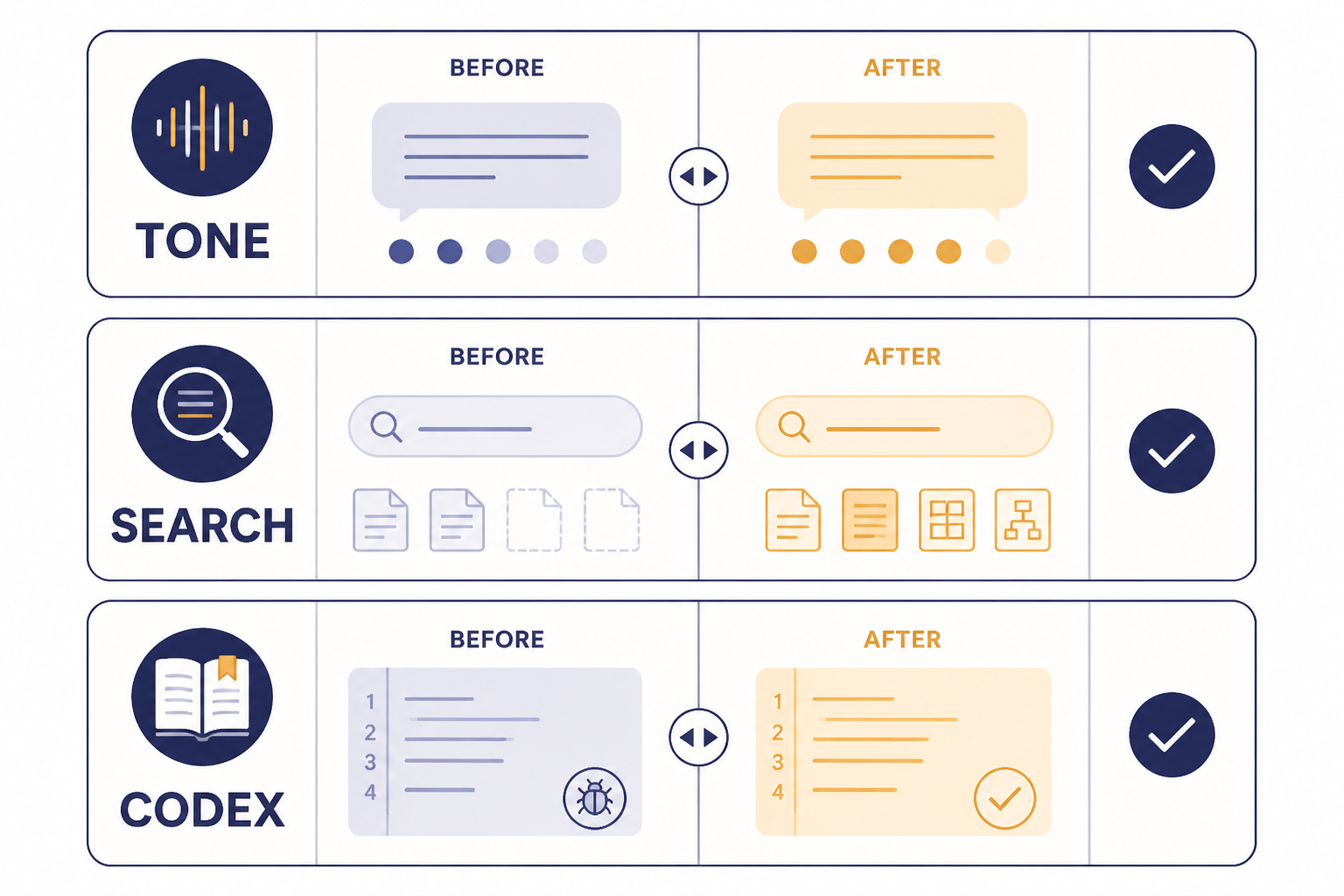

The most visible GPT-5.3 change was in ChatGPT’s default fast experience. OpenAI said GPT-5.3 Instant delivers more accurate answers, richer and better-contextualized results when searching the web, and fewer “dead ends,” caveats, and overly declarative phrases that interrupt the flow of a conversation.[1][2]

Less defensive tone

OpenAI’s own examples emphasized tone. GPT-5.2 Instant could sound overbearing in sensitive everyday questions. GPT-5.3 Instant was tuned to answer more directly, avoid unwarranted emotional assumptions, and reduce lines that feel like a lecture before an answer.[1][10]

This is not only a style preference. Tone affects whether users trust the answer enough to keep working with it. A model that repeatedly opens with unnecessary warnings can look safer while being less useful in practice. GPT-5.3 tries to keep safety boundaries without turning harmless questions into counseling scripts.

Better web-grounded answers

GPT-5.3 Instant also changed how ChatGPT handles information-seeking prompts. OpenAI said the model provides richer search results and better context when browsing the web, which should help with questions where the correct answer depends on recent events or source selection.[1][2]

That matters for news, shopping, software documentation, and policy questions. It does not remove the need to check sources. It does mean the fast default model should be less likely to give a stale answer when a web search is needed.

Lower hallucination rates, according to OpenAI

OpenAI reported that GPT-5.3 Instant reduced hallucination rates by 26.8% with web use and 19.7% without web use on a higher-stakes internal evaluation, compared with prior models.[1][3] OpenAI also reported reductions of 22.5% with web use and 9.6% without web use on a user-feedback evaluation built from de-identified conversations flagged for factual errors.[1][3] These are OpenAI-reported internal numbers; no independent benchmark has corroborated those exact hallucination figures.

That caveat is important. The improvement may be real, but internal hallucination tests do not always predict every user’s experience. A lawyer, physician, developer, or analyst should still verify important claims, especially when the answer affects money, safety, law, or medical care.
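To make the percentages concrete, here is a minimal sketch of what they would mean if they are relative reductions, which is our reading of OpenAI's wording and not something the announcement spells out. The 10% baseline rate is purely hypothetical; OpenAI did not publish the underlying rates.

```python
# Assumption: the reported figures are relative reductions in hallucination rate.
# The baseline below is hypothetical and only illustrates the arithmetic.

def apply_relative_reduction(baseline_rate: float, reduction_pct: float) -> float:
    """Return the rate left after cutting baseline_rate by reduction_pct percent."""
    return baseline_rate * (1 - reduction_pct / 100)

# Reduction percentages as reported by OpenAI for GPT-5.3 Instant.
REPORTED_REDUCTIONS = {
    "higher-stakes eval, with web": 26.8,
    "higher-stakes eval, without web": 19.7,
    "user-feedback eval, with web": 22.5,
    "user-feedback eval, without web": 9.6,
}

HYPOTHETICAL_BASELINE = 0.10  # 10 errors per 100 answers, invented for illustration

for label, pct in REPORTED_REDUCTIONS.items():
    new_rate = apply_relative_reduction(HYPOTHETICAL_BASELINE, pct)
    print(f"{label}: {HYPOTHETICAL_BASELINE:.1%} -> {new_rate:.2%}")
```

Under that reading, even the largest reported reduction leaves most of the original error rate in place, which is why verification still matters.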

What changed for Codex and developers

GPT-5.3 reached Codex before it reached the main ChatGPT default. On February 5, 2026, OpenAI introduced GPT-5.3-Codex as a model for agentic coding, tool use, research, and computer-based professional work.[6][14] OpenAI said GPT-5.3-Codex was available with paid ChatGPT plans anywhere Codex could be used: the app, CLI, IDE extension, and web.[6]

The key Codex shift was scope. OpenAI framed GPT-5.3-Codex as moving beyond writing and reviewing code toward operating a computer to complete work end to end.[6] That is a different product bet than a normal chat upgrade. It treats code as one tool inside a larger workflow.

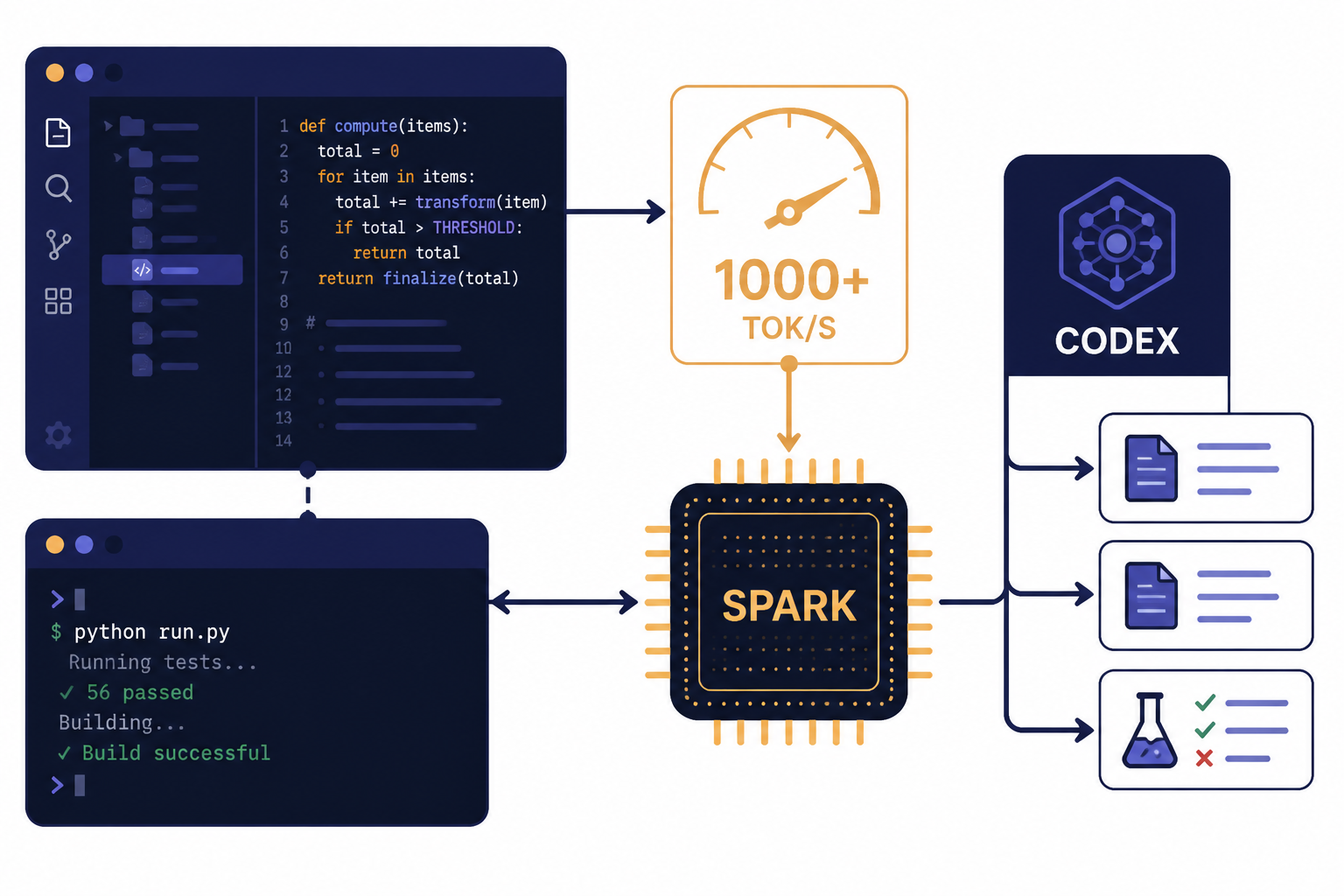

Codex-Spark added the latency track

One week later, OpenAI introduced GPT-5.3-Codex-Spark as a smaller version of GPT-5.3-Codex for real-time coding.[7][8] OpenAI and Cerebras both described the model as running at over 1,000 tokens per second on Cerebras hardware, while OpenAI said it was available as a research preview for ChatGPT Pro users in the Codex app, CLI, and VS Code extension.[7][8]

That was the clearest infrastructure signal in the GPT-5.3 cycle. GPT-5.3-Codex used NVIDIA GB200 NVL72 systems, while Codex-Spark introduced a Cerebras-backed path for low-latency inference.[6][7][8] For more on the infrastructure and business side, see our guide to OpenAI and Microsoft.
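To put the reported throughput in perspective, the sketch below converts tokens per second into wait time for a response. The 1,000 tokens per second figure is the floor that OpenAI and Cerebras reported; the 100 tokens per second comparison speed and the 2,000-token response size are hypothetical values chosen only for illustration.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to stream output_tokens at a steady decoding speed."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second

SPARK_REPORTED_FLOOR = 1_000   # "over 1,000 tokens per second", per the announcements
HYPOTHETICAL_SLOWER = 100      # invented comparison speed, not a measured figure

response_tokens = 2_000        # hypothetical size of a medium code edit

print(generation_seconds(response_tokens, SPARK_REPORTED_FLOOR))  # at most this long at the reported floor
print(generation_seconds(response_tokens, HYPOTHETICAL_SLOWER))
```

The calculation ignores network latency and time to first token, so it describes streaming speed only, not total round-trip time.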

Pricing, access, and API details

For ChatGPT users, GPT-5.3 Instant was available to all users starting March 3, 2026.[1][2] For developers, the relevant API model was gpt-5.3-chat-latest, which OpenAI’s developer documentation describes as the GPT-5.3 Instant snapshot used in ChatGPT.[1][4]

| Access path | What you get | Price or limit detail | Best fit |
|---|---|---|---|
| ChatGPT Free | Access to GPT-5.3 Instant through ChatGPT | OpenAI said GPT-5.3 Instant was available to all ChatGPT users; exact free-tier limits vary by account and demand.[1][2] | Casual use, search, writing, and everyday questions. |
| ChatGPT Go | Lower-cost paid ChatGPT access in markets where Go is available | OpenAI listed ChatGPT Go at $8 USD/month in the United States when it announced global availability.[12] | Users who want more than free access but do not need Plus. |
| ChatGPT Plus | Higher GPT-5.3 limits and access to advanced reasoning models | OpenAI lists ChatGPT Plus at $20/month.[13][12] | Regular users who rely on ChatGPT for work, school, or research. |
| ChatGPT Pro | Highest consumer access tier and early previews of newer features | OpenAI listed ChatGPT Pro at $200 USD/month in its January 2026 plan announcement.[12] | Power users, heavy Codex users, and people who routinely hit limits. |
| OpenAI API | gpt-5.3-chat-latest for applications | OpenAI’s model page listed $1.75 per 1M input tokens, $0.175 per 1M cached input tokens, and $14.00 per 1M output tokens; Price Per Token listed the same input and output prices.[4][5] | Developers building products, workflows, and internal tools. |

The API details are also useful for product planning. OpenAI’s developer page lists a 128,000-token context window, 16,384 maximum output tokens, text and image input, and text output for GPT-5.3 Chat.[4][5] If your main question is cost, compare this with our OpenAI API pricing guide.
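Those two limits interact, and a small helper can show how. The sketch below assumes the 128,000-token window is shared between the prompt and the generated output, which is how context windows usually work but is our assumption here; the function name is hypothetical and not part of any OpenAI SDK.

```python
CONTEXT_WINDOW_TOKENS = 128_000  # listed context window for GPT-5.3 Chat
MAX_OUTPUT_TOKENS = 16_384       # listed maximum output tokens

def output_budget(prompt_tokens: int, requested_output: int = MAX_OUTPUT_TOKENS) -> int:
    """Largest output allowance that fits both the output cap and the window.

    Assumes prompt and output tokens share one context window.
    """
    if prompt_tokens < 0 or requested_output < 0:
        raise ValueError("token counts must be non-negative")
    remaining = CONTEXT_WINDOW_TOKENS - prompt_tokens
    return max(0, min(requested_output, MAX_OUTPUT_TOKENS, remaining))

print(output_budget(100_000))  # window has room, so the output cap applies
print(output_budget(120_000))  # only 8,000 tokens of window remain
print(output_budget(130_000))  # prompt alone exceeds the window
```

In practice this means long prompts begin to squeeze the output allowance once they pass roughly 111,600 tokens.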

One practical note: API usage is separate from ChatGPT subscriptions. OpenAI’s ChatGPT Plus help page says API usage is billed independently from the $20/month Plus subscription.[13] That distinction matters if you use ChatGPT for writing but also run automated API workloads.
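For a rough sense of what an API workload costs at the listed prices, the following sketch multiplies token counts by the per-million rates. It assumes cached input tokens are a subset of total input tokens and are billed at the cached rate in place of the standard rate; the function name and the sample workload are hypothetical.

```python
from decimal import Decimal

# USD per 1M tokens, as listed for gpt-5.3-chat-latest in the sources cited above.
PRICE_PER_MILLION = {
    "input": Decimal("1.75"),
    "cached_input": Decimal("0.175"),
    "output": Decimal("14.00"),
}
ONE_MILLION = Decimal(1_000_000)

def estimate_cost_usd(input_tokens: int, output_tokens: int,
                      cached_input_tokens: int = 0) -> Decimal:
    """Estimate cost in USD; cached_input_tokens is counted inside input_tokens."""
    if min(input_tokens, output_tokens, cached_input_tokens) < 0:
        raise ValueError("token counts must be non-negative")
    if cached_input_tokens > input_tokens:
        raise ValueError("cached tokens cannot exceed total input tokens")
    uncached = input_tokens - cached_input_tokens
    total = (uncached * PRICE_PER_MILLION["input"]
             + cached_input_tokens * PRICE_PER_MILLION["cached_input"]
             + output_tokens * PRICE_PER_MILLION["output"])
    return total / ONE_MILLION

# Hypothetical workload: 1M input tokens and 100k output tokens, nothing cached.
print(estimate_cost_usd(1_000_000, 100_000))  # 1.75 + 1.40 = 3.15 USD
```

Output tokens cost eight times as much as input tokens at these rates, so response length tends to dominate the bill for chat-style workloads.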

GPT-5.3 compared with GPT-5.2 and GPT-5.4

The easiest mistake is to compare GPT-5.3 Instant with GPT-5.4 Thinking as if they are direct replacements. They are related, but they serve different jobs. GPT-5.3 Instant is the fast conversational default. GPT-5.4 Thinking is the deeper reasoning model that followed it in the March 2026 release cycle.[1][9][11]

| Model or track | Main change | Availability note | Reader takeaway |
|---|---|---|---|
| GPT-5.2 Instant | Previous fast ChatGPT model | OpenAI said GPT-5.2 Instant would remain in Legacy Models for paid users for three months and retire on June 3, 2026.[1][2] | Use only if you need to compare older behavior before it disappears. |
| GPT-5.3 Instant | More direct answers, better web context, lower OpenAI-reported hallucination rates, and smoother tone | Available to all ChatGPT users and to developers as gpt-5.3-chat-latest.[1][4][10] | Best default for everyday chat, writing, search, and general work. |
| GPT-5.3-Codex | Agentic coding and computer-work model; OpenAI reported a 25% speed improvement for Codex users | Available with paid ChatGPT plans in Codex surfaces; OpenAI said it was working to enable API access safely.[6][14] | Best fit when you need an agent to work across code, files, and tools. |
| GPT-5.3-Codex-Spark | Low-latency coding preview on Cerebras hardware, reported at over 1,000 tokens per second | Research preview for ChatGPT Pro users in Codex and selected API design partners.[7][8] | Best fit for interactive coding where response speed matters. |
| GPT-5.4 Thinking | Reasoning, coding, tool use, research, and professional work upgrade | OpenAI released it on March 5, 2026, for ChatGPT Plus, Team, and Pro users, with API access as gpt-5.4.[9][11] | Use it when the task needs planning, tool use, or multi-step reasoning. |

If you want a single recommendation, use GPT-5.3 Instant for normal ChatGPT sessions and switch to the reasoning track when the task requires careful planning, long context, spreadsheet-style work, coding architecture, or multi-source research. Our most powerful GPT model benchmark page goes deeper on that tradeoff.

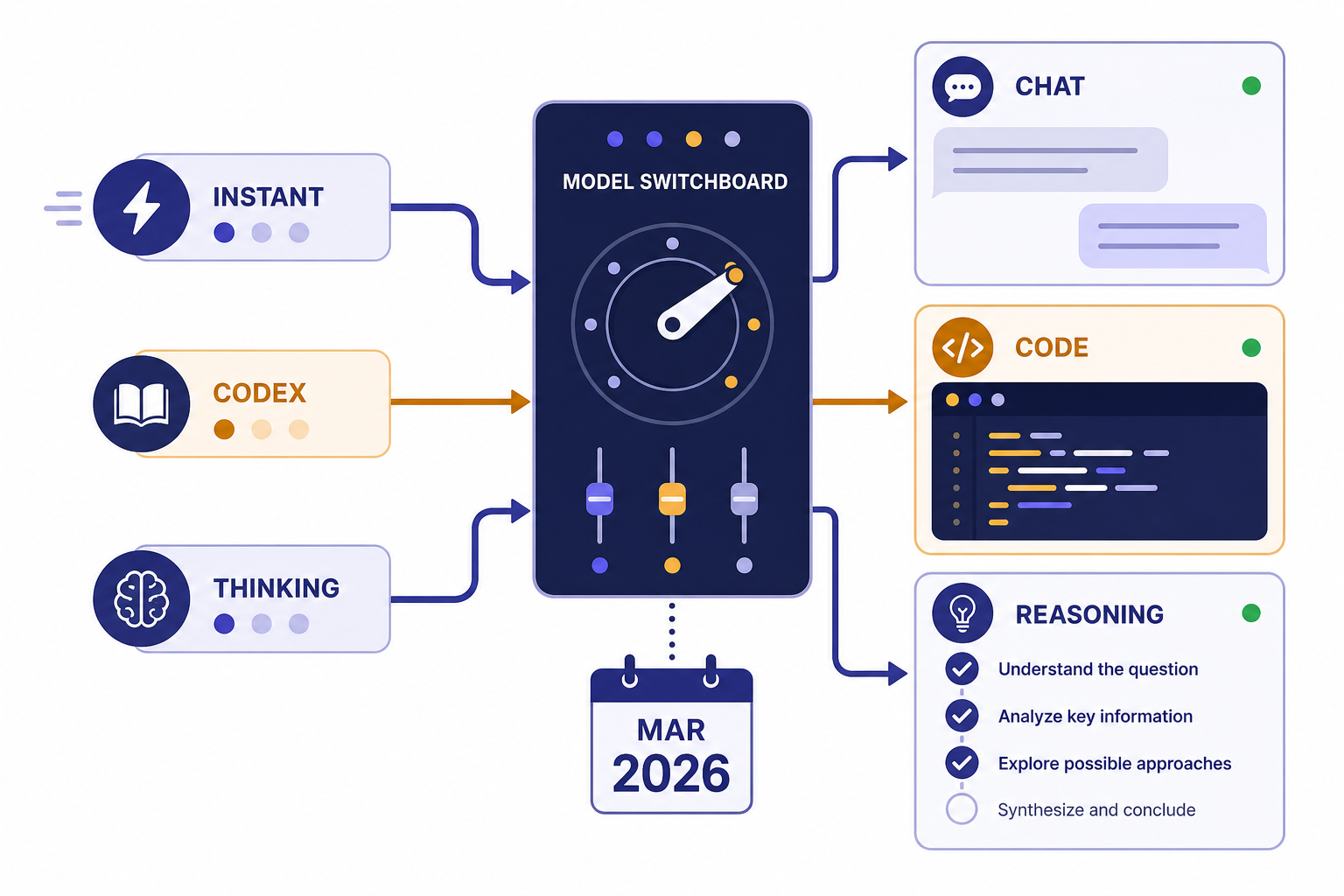

Analysis: why GPT-5.3 was a split release

The GPT-5.3 release shows a pattern we should expect more often: OpenAI is no longer shipping one model that tries to be the best at every interaction style. It is splitting the stack into fast conversation, deep reasoning, coding agents, and low-latency specialist models.

That split has a clear advantage. Most ChatGPT sessions do not need the most expensive reasoning model. They need a fast answer that understands the prompt, searches when needed, and avoids needless hedging. GPT-5.3 Instant targets that everyday layer.[1][2]

The tradeoff is complexity. Users now have to understand Instant, Thinking, Pro, Codex, Spark, legacy models, and API aliases. OpenAI tried to simplify part of that by updating the ChatGPT model picker in March 2026 with options such as Instant, Thinking, and Pro for paid accounts.[2] That is simpler than a long list of raw model names, but it still requires users to know when to switch modes.

Our read is that GPT-5.3 is the “interaction quality” release. It fixes the parts of ChatGPT people complain about during daily use: tone, stale answers, unnecessary caveats, and stilted writing. GPT-5.4, by contrast, is the “hard work” release: deeper reasoning, agentic tool use, and professional workflows.[1][9]

This division also explains why the ChatGPT Atlas launch and the Sora 2 launch matter in the same product strategy. OpenAI is building a set of surfaces where models are routed by task: browsing, coding, video, chat, research, and automation.

Who should switch to GPT-5.3 now

Most users should simply use GPT-5.3 Instant as the default fast model. It is meant for ordinary ChatGPT work: asking questions, drafting, revising, translating, summarizing, checking current information, and getting practical explanations.[1][2]

- Use GPT-5.3 Instant for fast answers, writing, research starts, translation, and general ChatGPT work.

- Use GPT-5.4 Thinking when the task requires multi-step reasoning, deeper research, spreadsheet work, tool use, or a plan you may want to steer while it runs.[9][11]

- Use GPT-5.3-Codex when the work lives in a codebase, repository, CLI, IDE, or file workflow.[6]

- Use GPT-5.3-Codex-Spark if you have access and need low-latency coding interaction rather than long autonomous runs.[7][8]
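The list above amounts to a simple routing rule, which can be sketched as a lookup. The task categories, the function name, and the fallback from Codex-Spark to GPT-5.3-Codex when Spark access is missing are our own illustrative choices, not an OpenAI routing API or an official recommendation.

```python
from enum import Enum

class TaskKind(Enum):
    """Hypothetical task buckets that mirror the recommendations above."""
    EVERYDAY = "everyday"                      # fast answers, writing, translation
    DEEP_REASONING = "deep_reasoning"          # multi-step reasoning, research, tools
    CODEBASE = "codebase"                      # repository, CLI, IDE, file workflows
    INTERACTIVE_CODING = "interactive_coding"  # low-latency coding feedback

def recommend_model(task: TaskKind, has_spark_access: bool = False) -> str:
    """Map a task bucket to the model this article suggests for it."""
    if task is TaskKind.EVERYDAY:
        return "GPT-5.3 Instant"
    if task is TaskKind.DEEP_REASONING:
        return "GPT-5.4 Thinking"
    if task is TaskKind.INTERACTIVE_CODING and has_spark_access:
        return "GPT-5.3-Codex-Spark"
    # Codebase work, or interactive coding without Spark preview access
    # (the fallback is an assumption made for this sketch).
    return "GPT-5.3-Codex"

print(recommend_model(TaskKind.EVERYDAY))
print(recommend_model(TaskKind.INTERACTIVE_CODING, has_spark_access=True))
```

The `has_spark_access` flag reflects that Codex-Spark was a research preview limited to ChatGPT Pro users and selected API design partners.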

Paid users who still rely on GPT-5.2 Instant should treat the legacy window as a migration period, not a long-term option. OpenAI said GPT-5.2 Instant would remain available to paid users for three months and retire on June 3, 2026.[1][2] For subscription tradeoffs, see our ChatGPT Plus price analysis.
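If you are planning that migration, a quick date calculation shows how much of the legacy window is left. This is a minimal sketch using the June 3, 2026 retirement date OpenAI gave; the function name is hypothetical.

```python
from datetime import date

GPT_5_2_INSTANT_RETIREMENT = date(2026, 6, 3)  # retirement date stated by OpenAI

def days_until_retirement(today: date) -> int:
    """Days left before GPT-5.2 Instant retires, never below zero."""
    return max(0, (GPT_5_2_INSTANT_RETIREMENT - today).days)

# From the GPT-5.3 Instant launch date, the legacy window is 92 days long.
print(days_until_retirement(date(2026, 3, 3)))
# Pass date.today() to check the remaining time right now.
print(days_until_retirement(date.today()))
```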

Frequently asked questions

When was GPT-5.3 released?

GPT-5.3 was released in stages. GPT-5.3-Codex arrived on February 5, 2026, GPT-5.3-Codex-Spark followed on February 12, 2026, and GPT-5.3 Instant became available to all ChatGPT users on March 3, 2026.[1][6][7] That is why some users saw GPT-5.3 in Codex before they saw GPT-5.3 Instant in normal ChatGPT. The release was a product sequence, not a single switch.

Is GPT-5.3 the same as GPT-5.3 Instant?

No. GPT-5.3 Instant is the ChatGPT fast model that OpenAI made available on March 3, 2026.[1][2] GPT-5.3-Codex and GPT-5.3-Codex-Spark are coding-focused models released earlier in February 2026.[6][7] In casual use, people often say “GPT-5.3” when they mean GPT-5.3 Instant.

What changed most from GPT-5.2 to GPT-5.3?

The biggest ChatGPT change was conversational quality. OpenAI said GPT-5.3 Instant gives more accurate answers, better web-contextualized results, fewer unnecessary caveats, and a more natural style than GPT-5.2 Instant.[1][2] OpenAI also reported hallucination-rate reductions of 26.8% with web use and 19.7% without web use on one internal higher-stakes evaluation, but those exact figures are not independently corroborated.[1][3]

Does GPT-5.3 have API access?

Yes, GPT-5.3 Instant is available to developers as gpt-5.3-chat-latest.[1][4] OpenAI’s developer documentation lists GPT-5.3 Chat at $1.75 per 1M input tokens and $14.00 per 1M output tokens, with a 128,000-token context window.[4][5] API usage is separate from ChatGPT Plus billing.[13]

Is GPT-5.3 better than GPT-5.4?

Not in a single universal sense. GPT-5.3 Instant is the faster everyday model, while GPT-5.4 Thinking is meant for deeper reasoning and professional work.[1][9] OpenAI released GPT-5.4 on March 5, 2026, shortly after GPT-5.3 Instant.[9][11] Use GPT-5.3 for speed and normal chat; use GPT-5.4 Thinking when the task needs planning, tools, or longer reasoning.

What is GPT-5.3-Codex-Spark?

GPT-5.3-Codex-Spark is a smaller, low-latency coding model that OpenAI released on February 12, 2026, as a research preview.[7][8] OpenAI and Cerebras said it runs at over 1,000 tokens per second on Cerebras hardware.[7][8] It is designed for real-time coding feedback rather than the longest autonomous coding runs.

Should I keep using GPT-5.2 Instant?

Only if you need to compare old behavior or preserve a short-term workflow. OpenAI said GPT-5.2 Instant would remain available to paid users in Legacy Models for three months and retire on June 3, 2026.[1][2] For normal use, GPT-5.3 Instant is the better default because it is the active fast model and the one OpenAI is updating.

Bottom line

The GPT-5.3 release matters because it separates everyday ChatGPT quality from frontier reasoning. GPT-5.3 Instant made the default experience more direct and useful, while GPT-5.3-Codex and Codex-Spark pushed coding agents toward richer computer work and lower latency.[1][6][7]

Watch the routing layer next. The important question after GPT-5.3 is not only which model is strongest, but whether ChatGPT can send each task to the right mode without making users study the whole model lineup.