GPT-4.5 was OpenAI’s large research-preview GPT model built to make chat feel more natural, knowledgeable, and emotionally tuned. It was not a reasoning model in the o-series sense. Its main change was more scale in pre-training and post-training, not a new “think step” architecture. That made it strong for writing, coaching, ideation, general conversation, and some practical coding support. It also made it expensive and short-lived as an API choice. By April 8, 2026, the practical answer is clear: study GPT-4.5 to understand the bridge between GPT-4o and later GPT systems, but choose newer models for most production work.

What GPT-4.5 was

GPT-4.5 was released by OpenAI on February 27, 2025 as a research preview of its strongest GPT model at the time.[1] OpenAI described it as its largest and best chat model then, with improvements from scaling pre-training and post-training rather than from adding a reasoning-first response loop.[1]

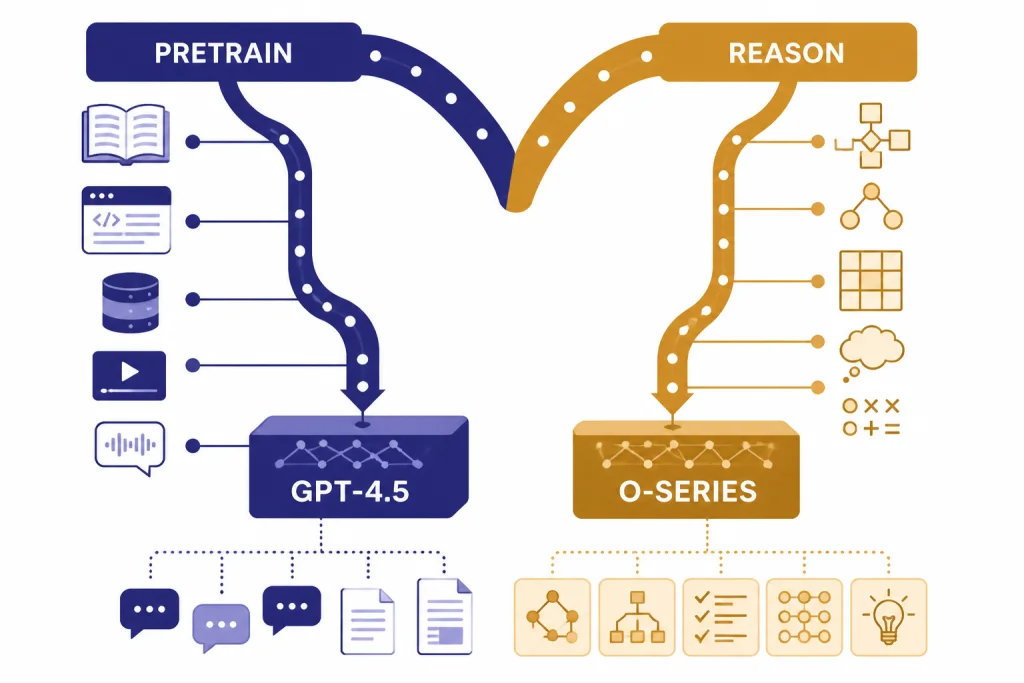

The model sat between two eras. On one side were GPT-4 and GPT-4o: strong general GPT models designed for broad chat, vision, and tool use. On the other side were o-series reasoning models and later unified GPT systems, which were designed to spend more compute on harder multi-step problems. For a broader map of the model family, see our side-by-side comparison of all GPT models.

OpenAI’s own framing is important. GPT-4.5 “doesn’t think before it responds,” according to OpenAI’s launch post.[1] That sentence explains most of the model’s identity. GPT-4.5 was not built to compete with a reasoning model on every math proof, contest problem, or formal planning task. It was built to be a better general-purpose chat model: more fluent, more sensitive to user intent, stronger at natural collaboration, and better at broad knowledge tasks.

That made GPT-4.5 easy to misunderstand. The name suggested a straight-line upgrade over every GPT-4-era model. In practice, it was a specialized preview of what scaling unsupervised learning could do for a chat model. It mattered most when tone, nuance, synthesis, and broad world knowledge were more valuable than raw step-by-step reasoning.

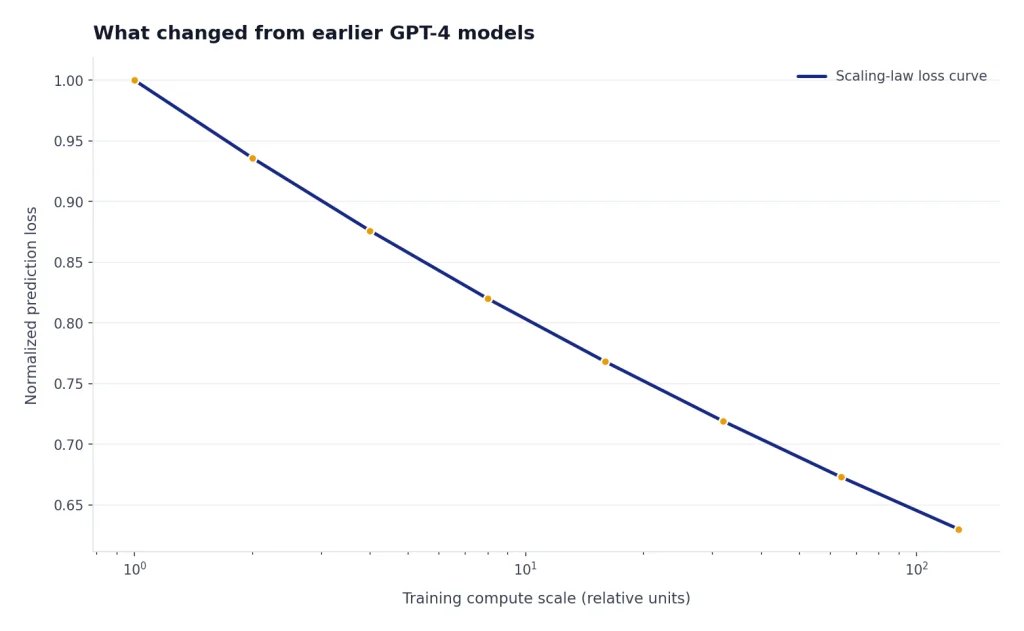

What changed from earlier GPT-4 models

The biggest change in GPT-4.5 was not a new product wrapper. It was the training emphasis. OpenAI said GPT-4.5 scaled unsupervised learning to improve pattern recognition, connection-making, and creative insight generation without reasoning.[1] The system card also says GPT-4.5 built on GPT-4o, scaled pre-training further, and was designed to be more general-purpose than STEM-focused reasoning models.[2]

For users, that translated into a different feel. GPT-4.5 was often most useful when a prompt had ambiguity, emotional context, stylistic goals, or several soft constraints. A writing prompt such as “make this sound less defensive but still firm” was a better fit than a prompt such as “prove this theorem under strict assumptions.” For writing-specific model choices, see our guide to the best GPT model for writing.

GPT-4.5 also sharpened the distinction between chat intelligence and deliberate reasoning. OpenAI compared it with OpenAI o1 and OpenAI o3-mini, saying GPT-4.5 was more general-purpose and “innately smarter,” while reasoning models used a different approach for harder tasks.[1] If your work depends on solving long chains of logic, read our guides to OpenAI o3 and OpenAI o3-mini before treating GPT-4.5 as the default answer.

| Dimension | GPT-4.5 | Reasoning models | Practical takeaway |
|---|---|---|---|
| Core idea | Scaled GPT chat model | Deliberate reasoning path | Use GPT-4.5 for natural collaboration, not the hardest logic work. |
| Best fit | Writing, coaching, ideation, knowledge synthesis | Math, planning, hard coding, analysis | Pick by task, not by model name. |
| Response style | Fast, conversational, intuitive | More deliberate and structured | GPT-4.5 often felt warmer and less mechanical. |
| Risk | High cost and limited preview status | May be slower or overkill for simple tasks | Production teams needed a fallback plan. |

Specs, pricing, and access

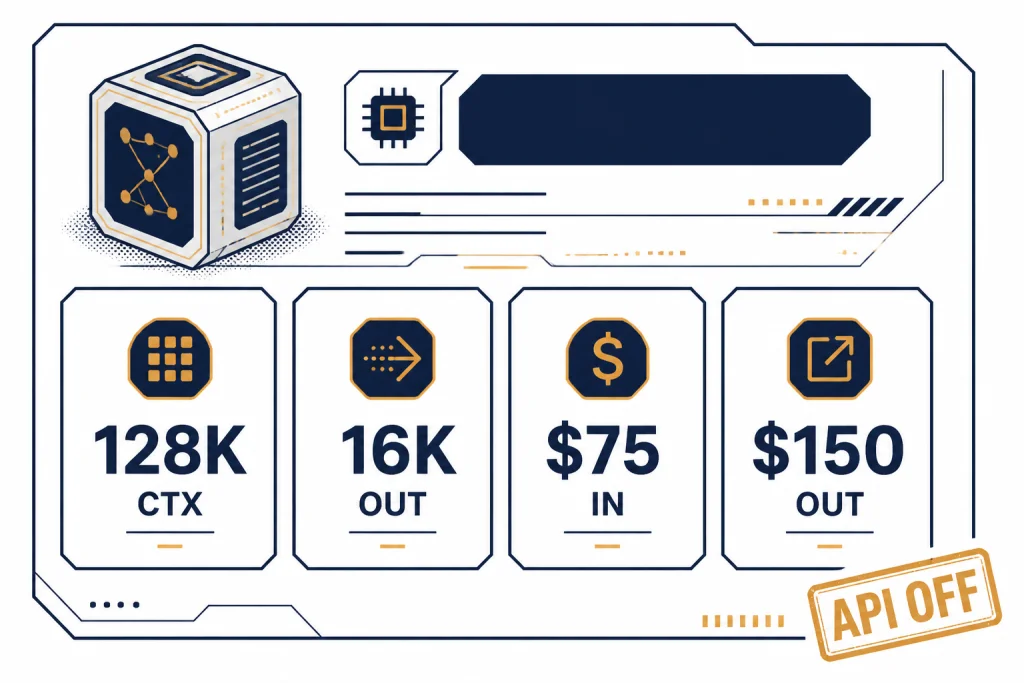

The API model page lists GPT-4.5 Preview as a deprecated large model, with a 128,000-token context window, 16,384 maximum output tokens, text output, and image input support.[3] For readers comparing long-document capacity across models, the most useful companion is our guide to context window sizes for every GPT model.
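A quick pre-flight check makes these limits concrete. The sketch below is a minimal illustration, assuming (as is typical for OpenAI models) that prompt and output tokens share the 128,000-token window. Token counts are passed in as plain integers rather than computed with a tokenizer, and the helper name `fits_in_context` is hypothetical.

```python
# Published GPT-4.5 Preview limits, from the model page figures above.
CONTEXT_WINDOW = 128_000
MAX_OUTPUT = 16_384


def fits_in_context(prompt_tokens: int, max_output_tokens: int) -> bool:
    """Return True if a request stays within GPT-4.5 Preview's published limits.

    Assumption: prompt and output tokens share one context-window budget.
    Token counts are supplied by the caller (for example, from a tokenizer).
    """
    if prompt_tokens < 0 or max_output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    if max_output_tokens > MAX_OUTPUT:
        return False  # the output cap applies regardless of prompt size
    return prompt_tokens + max_output_tokens <= CONTEXT_WINDOW


print(fits_in_context(100_000, 16_384))  # True: 116,384 total tokens
print(fits_in_context(120_000, 16_384))  # False: 136,384 exceeds the window
print(fits_in_context(10_000, 20_000))   # False: exceeds the output cap
```

Checking the output cap separately matters because a short prompt can still request more output than the model will produce.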

GPT-4.5’s API pricing was unusually high: $75.00 per 1 million input tokens, $37.50 per 1 million cached input tokens, and $150.00 per 1 million output tokens.[3] That pricing alone made it a poor default for high-volume apps. If cost is your main constraint, start with OpenAI API pricing and our cheapest GPT model comparison before designing around any premium model.
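To see how quickly those prices add up, the sketch below estimates per-request cost from token counts. The workload (2,000 input tokens and 500 output tokens per request, one million requests a month) is a hypothetical example, not a measured figure, and `request_cost` is an illustrative helper rather than part of any SDK.

```python
# GPT-4.5 Preview API prices in USD per 1 million tokens, from the pricing above.
PRICE_INPUT = 75.00
PRICE_CACHED_INPUT = 37.50
PRICE_OUTPUT = 150.00


def request_cost(input_tokens: int, output_tokens: int, cached_input_tokens: int = 0) -> float:
    """Estimate the USD cost of one request.

    cached_input_tokens is the share of input_tokens billed at the cached rate.
    """
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    uncached_tokens = input_tokens - cached_input_tokens
    total = (
        uncached_tokens * PRICE_INPUT
        + cached_input_tokens * PRICE_CACHED_INPUT
        + output_tokens * PRICE_OUTPUT
    )
    return total / 1_000_000


# Hypothetical workload: 2,000 input tokens and 500 output tokens per request.
per_request = request_cost(2_000, 500)
monthly = per_request * 1_000_000  # one million requests per month

print(f"Per request: ${per_request:.3f}")  # Per request: $0.225
print(f"Per month:   ${monthly:,.0f}")     # Per month:   $225,000
```

Even this modest request size reaches roughly $225,000 a month at that volume, which illustrates why the price mattered so much for high-volume apps.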

The model documentation also listed an October 1, 2023 knowledge cutoff for GPT-4.5 Preview.[3] That did not mean ChatGPT could never answer newer questions when tools such as search were available. It meant the base model’s training knowledge had that cutoff in the API documentation. Treat base-model knowledge and tool-augmented answers as separate things.

| Item | GPT-4.5 Preview value | Why it mattered |
|---|---|---|
| Release date | February 27, 2025[1] | It was a research preview, not a long-term stable default. |
| Context window | 128,000 tokens[3] | Useful for large prompts, long drafts, and multi-file context. |
| Max output | 16,384 tokens[3] | Enough for long generated drafts or structured responses. |
| API input price | $75.00 per 1 million tokens[3] | Too costly for many high-volume workloads. |
| API output price | $150.00 per 1 million tokens[3] | Output-heavy writing apps became expensive quickly. |
| API status | Deprecated; removed July 14, 2025[4] | New production systems should not depend on it. |

Where GPT-4.5 performed well

OpenAI’s benchmark table showed GPT-4.5 as a strong broad-knowledge and multimodal model, but not as the top reasoning model across every category. It scored 71.4% on GPQA, 36.7% on AIME 2024, 85.1% on MMMLU, 74.4% on MMMU, and 38.0% on SWE-bench Verified in OpenAI’s published table.[1] Those numbers show a model with real capability, but also a clear gap versus reasoning models on math-heavy tasks.

The most favorable GPT-4.5 story was factuality and natural interaction. OpenAI’s launch post reported 62.5% SimpleQA accuracy and a 37.1% SimpleQA hallucination rate for GPT-4.5, compared with 38.2% accuracy and a 61.8% hallucination rate for GPT-4o in the same displayed comparison.[1] That is one reason GPT-4.5 drew attention from people who wanted fewer confident wrong answers in ordinary knowledge chats.
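The size of that SimpleQA gap is easier to judge as arithmetic. This short calculation uses only the four figures quoted above; the relative-drop framing is simply one way to read them, not a number OpenAI published.

```python
# SimpleQA figures from OpenAI's launch-post comparison, in percent.
gpt45 = {"accuracy": 62.5, "hallucination": 37.1}
gpt4o = {"accuracy": 38.2, "hallucination": 61.8}

accuracy_gain = gpt45["accuracy"] - gpt4o["accuracy"]  # percentage points
hallucination_drop = gpt4o["hallucination"] - gpt45["hallucination"]  # points
relative_drop = hallucination_drop / gpt4o["hallucination"]  # share of GPT-4o's rate

print(f"Accuracy gain: {accuracy_gain:.1f} points")            # 24.3 points
print(f"Hallucination drop: {hallucination_drop:.1f} points")  # 24.7 points
print(f"Relative drop: {relative_drop:.0%}")                   # 40%
```

In other words, on this one test GPT-4.5 hallucinated on roughly 40% fewer questions than GPT-4o, relative to GPT-4o’s rate.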

Still, a benchmark is not a guarantee. SimpleQA is a constrained factuality test. A production support bot, legal assistant, medical workflow, or research agent needs retrieval, citations, evaluation, refusal handling, and monitoring. GPT-4.5 reduced some failure modes, but it did not remove the need to verify factual claims.

For coding, GPT-4.5 was capable but not the obvious long-term winner. OpenAI’s launch post positioned it as useful for programming and multi-step coding workflows, while its API documentation later recommended GPT-4.1 or o3 models for most use cases.[1][3] If code quality, latency, and price matter more than conversational polish, compare it against our guide to the best GPT model for coding.

Where GPT-4.5 was the wrong choice

GPT-4.5 was the wrong choice when price, latency, or long-term API stability mattered more than peak conversational quality. OpenAI itself said GPT-4.5 was “very large and compute-intensive,” more expensive than GPT-4o, and not a replacement for GPT-4o.[1] That was an unusually direct warning for developers.

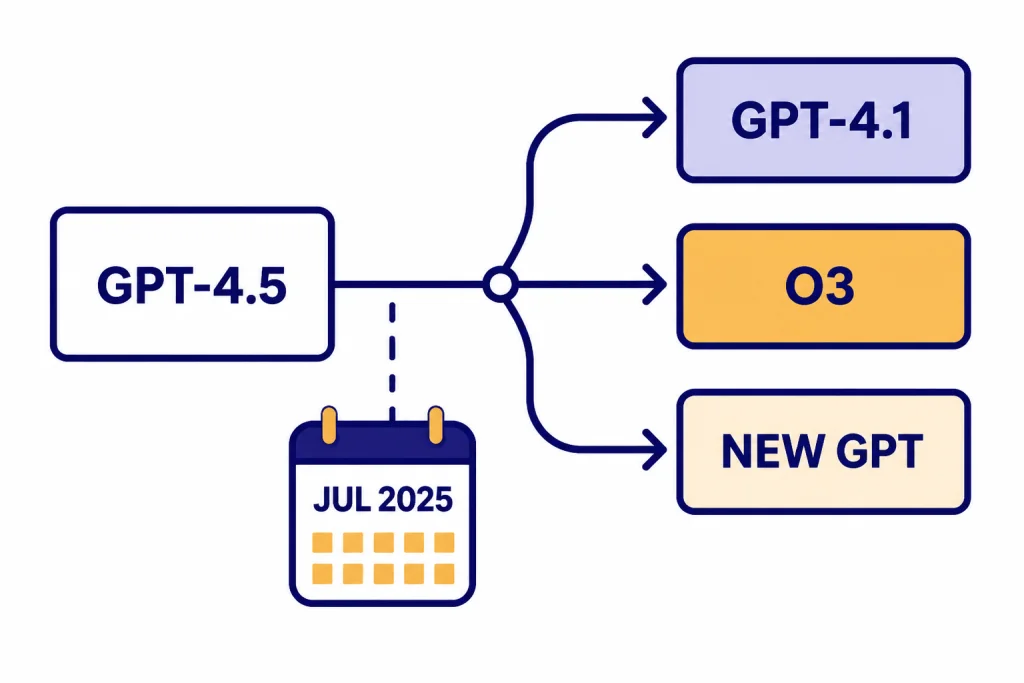

It was also a poor choice for teams that needed a durable API dependency. OpenAI notified developers on April 14, 2025 that gpt-4.5-preview was deprecated, and the listed shutdown date was July 14, 2025 with gpt-4.1 as the recommended replacement.[4] By April 8, 2026, that means GPT-4.5 is best understood as a historical preview and a design signal, not a model to build new API products around.

It was also not the best model when speed was the key metric. The model page classified its speed as medium and its price as high relative to alternatives.[3] If response time drives your product, use our fastest GPT model guide instead of assuming the largest model is the best fit.

Finally, GPT-4.5 did not support every modality in every product context. In ChatGPT at launch, OpenAI said GPT-4.5 supported search, file and image uploads, and canvas, but did not then support Voice Mode, video, or screensharing.[1] For image-heavy workflows, compare model families using our GPT-4 Vision and best GPT model for image generation guides rather than treating GPT-4.5 as a universal multimodal answer.

Who GPT-4.5 was for

GPT-4.5 was for users who cared about response quality in high-touch language tasks. A good GPT-4.5 task had some combination of ambiguity, tone sensitivity, broad knowledge, and creative synthesis. It was less about grinding through every possible inference and more about understanding what the user meant.

Writers and editors were one of the clearest audiences. GPT-4.5 could help reshape a messy draft, preserve a speaker’s intent, adjust tone without flattening personality, and generate several viable directions for a piece. That type of work benefits from nuance more than from formal proof-style reasoning.

Coaches, tutors, and support designers were another natural audience. OpenAI emphasized higher emotional intelligence and better understanding of user intent.[1] In practice, that meant GPT-4.5 was often better suited to prompts such as “help me respond to this upset customer” or “explain this mistake without making the student feel foolish.”

Product teams could also use GPT-4.5 as a qualitative benchmark. Even if they did not ship it in production, they could compare cheaper models against GPT-4.5 outputs to define what “good” sounded like. That is often a practical way to improve prompts and evaluation rubrics before selecting a cheaper deployment model.

Developers, however, needed discipline. GPT-4.5 made sense only when its output quality justified its cost and instability. A low-volume executive-writing assistant might have justified the premium. A high-volume summarization API almost certainly did not.

What to use instead

For API users, the official migration answer was direct: OpenAI’s deprecation page listed gpt-4.1 as the recommended replacement for gpt-4.5-preview when the model shut down on July 14, 2025.[4] The GPT-4.5 model page also says OpenAI recommends GPT-4.1 or o3 models for most use cases.[3]
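Teams that still had the old model ID in configuration could route around the shutdown with a small lookup while they re-tested. The sketch below assumes a hypothetical in-house `DEPRECATIONS` table and `resolve_model` helper; only the model IDs, shutdown date, and replacement come from OpenAI’s deprecation notice, and nothing here calls the OpenAI API.

```python
from datetime import date

# Hypothetical in-house table of retired model IDs. The single entry reflects
# OpenAI's deprecation notice for gpt-4.5-preview.
DEPRECATIONS = {
    "gpt-4.5-preview": {"shutdown": date(2025, 7, 14), "replacement": "gpt-4.1"},
}


def resolve_model(model_id: str, today: date) -> str:
    """Return the model ID to call, swapping in the replacement from shutdown day on."""
    entry = DEPRECATIONS.get(model_id)
    if entry is None or today < entry["shutdown"]:
        return model_id  # unknown or not-yet-retired IDs pass through unchanged
    return entry["replacement"]


print(resolve_model("gpt-4.5-preview", date(2026, 4, 8)))  # gpt-4.1
print(resolve_model("gpt-4.5-preview", date(2025, 5, 1)))  # gpt-4.5-preview
```

A silent swap keeps requests working, but it does not check that the replacement behaves the same way on your prompts, so it is a stopgap rather than a substitute for re-testing.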

Use GPT-4.1-style models when your task looks like coding, instruction following, structured writing, document transformation, or general application logic. Use reasoning models when the task has hard planning, math, multi-step debugging, or decision analysis. Use newer flagship GPT models when you need the current default blend of chat quality, tool use, and reasoning. To compare top-end capability rather than lineage, see our guide to the most powerful GPT model.
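The routing guidance above can be written as a simple lookup. This is a hypothetical sketch: the category names are paraphrased from the paragraph, and the return values are model families, not API model IDs, since the right concrete model changes over time.

```python
# Task categories paraphrased from the guidance above. Return values are
# model families, not real API model IDs.
GPT41_STYLE = {
    "coding",
    "instruction following",
    "structured writing",
    "document transformation",
    "application logic",
}
REASONING = {"hard planning", "math", "multi-step debugging", "decision analysis"}


def choose_model_family(task: str) -> str:
    """Map a task category to the model family the guidance suggests."""
    key = task.strip().lower()
    if key in REASONING:
        return "reasoning model"
    if key in GPT41_STYLE:
        return "GPT-4.1-style model"
    # Anything else (chat quality plus tool use) falls back to the current flagship.
    return "current flagship GPT model"


print(choose_model_family("Math"))                      # reasoning model
print(choose_model_family("document transformation"))   # GPT-4.1-style model
print(choose_model_family("customer chat with tools"))  # current flagship GPT model
```

Checking the reasoning set first keeps “multi-step debugging” out of the general coding bucket, matching the split the paragraph draws.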

The practical migration pattern is simple. First, save representative GPT-4.5 outputs for your real prompts. Second, run those prompts against your candidate replacement models. Third, score the answers for accuracy, tone, structure, latency, and cost. Fourth, keep GPT-4.5 only as a reference point in your evaluation notes, not as a dependency.
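The four-step migration pattern can be sketched as a weighted scorecard. This minimal example assumes graders (human or automated) have already scored each candidate’s outputs against the saved GPT-4.5 references on a 0 to 1 scale, with latency and cost inverted so that higher always means better. The weights and the `candidate-a` and `candidate-b` names are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical weights over the criteria named above; they sum to 1.0.
WEIGHTS = {"accuracy": 0.4, "tone": 0.2, "structure": 0.1, "latency": 0.15, "cost": 0.15}


@dataclass
class Result:
    model: str
    # Each criterion scored 0-1, higher is better (latency and cost pre-inverted).
    scores: dict[str, float]


def weighted_score(result: Result) -> float:
    """Combine per-criterion scores into one number using WEIGHTS."""
    return sum(weight * result.scores[name] for name, weight in WEIGHTS.items())


def pick_best(results: list[Result]) -> Result:
    """Return the candidate with the highest weighted score."""
    return max(results, key=weighted_score)


candidates = [
    Result("candidate-a", {"accuracy": 0.9, "tone": 0.7, "structure": 0.8, "latency": 0.6, "cost": 0.5}),
    Result("candidate-b", {"accuracy": 0.85, "tone": 0.85, "structure": 0.9, "latency": 0.9, "cost": 0.9}),
]
best = pick_best(candidates)
print(best.model, f"{weighted_score(best):.3f}")  # candidate-b 0.870
```

Keeping the weights explicit makes the trade-off visible: here a slightly less accurate but faster, cheaper candidate wins, which is exactly the kind of decision the scoring step is meant to surface.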

For ChatGPT users, the advice is less technical. If you have access to GPT-4.5 in a model picker, use it for writing polish, brainstorming, sensitive replies, and open-ended synthesis. If you need deep analysis, switch to a reasoning-oriented model. If you need a repeatable business workflow, test the same prompt across several models and save the one that gives the best balance of quality and speed.

Frequently asked questions

Is GPT-4.5 the same as GPT-4 5?

Yes. “GPT-4 5” is usually a search-friendly way of writing GPT-4.5. OpenAI’s official model name used the decimal form, GPT-4.5.[1]

Was GPT-4.5 better than GPT-4o?

It was better for some tasks, especially natural chat, broad knowledge, writing, and nuanced collaboration. It was not a full replacement for GPT-4o, and OpenAI explicitly said GPT-4.5 was more expensive and not a GPT-4o replacement.[1]

Is GPT-4.5 a reasoning model?

No. OpenAI said GPT-4.5 did not think before responding, which made it different from reasoning models such as OpenAI o1.[1] Use reasoning models for hard multi-step analysis, math, and planning-heavy work.

Can developers still build with GPT-4.5?

Not as a new stable API choice. OpenAI’s deprecation page listed gpt-4.5-preview as deprecated, with a July 14, 2025 shutdown date and gpt-4.1 as the recommended replacement.[4]

Why was GPT-4.5 so expensive?

OpenAI described GPT-4.5 as very large and compute-intensive.[1] Its API documentation listed prices of $75.00 per 1 million input tokens and $150.00 per 1 million output tokens, which made it costly for high-volume use.[3]

What was the best use case for GPT-4.5?

The best use case was high-value language work where tone, judgment, creativity, and broad knowledge mattered. Examples include executive writing, sensitive customer replies, coaching, tutoring, and creative brainstorming. For most production apps, newer or cheaper models are the better starting point.