OpenAI o1-mini was the compact reasoning model OpenAI launched for lower-cost STEM reasoning, especially math and coding. It is still worth understanding because many API integrations and benchmark discussions were built around it, but OpenAI now marks o1-mini as deprecated and recommends o3-mini, which offers higher intelligence at the same latency and price.[2] For new projects in 2026, o1-mini is usually a legacy compatibility choice, not the best default. The main reasons to study it are cost history, migration planning, and understanding how OpenAI’s small reasoning models evolved from o1-mini to newer o-series options.

What o1-mini is

o1-mini is a small reasoning model in OpenAI’s o1 family. OpenAI introduced it on September 12, 2024 as a cost-efficient alternative to o1-preview for tasks that need deliberate reasoning without the full breadth of a larger general model.[1] Its original pitch was narrow and practical: stronger reasoning than typical small chat models, lower latency than o1-preview, and a much lower API cost than the first o-series preview.

The model was optimized for STEM reasoning. OpenAI described its strongest areas as math and coding, and positioned it for applications where a smaller technical model could work through a problem step by step. That made it a notable option for competitive programming prompts, math explanations, algorithm design, test generation, and structured technical troubleshooting.[1] If you are choosing among current models for software work, compare it with our best GPT model for coding guide before building around it.

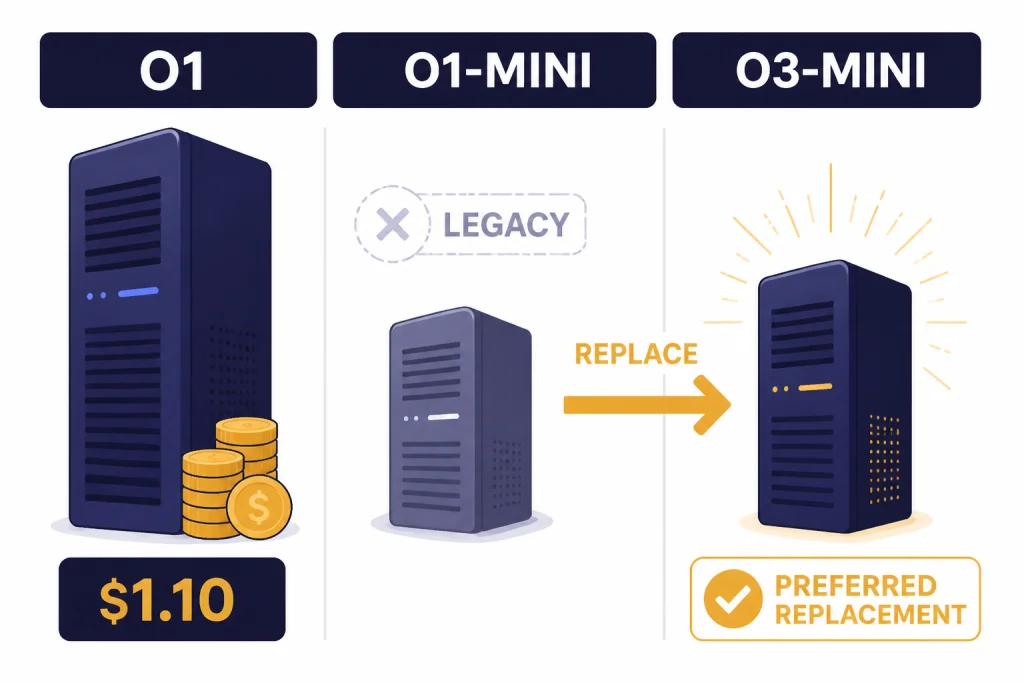

Its status has changed. OpenAI’s model documentation now marks o1-mini as deprecated and says developers should use o3-mini instead because o3-mini offers higher intelligence at the same latency and price as o1-mini.[2] That does not erase o1-mini’s importance. It was the first broadly visible “small reasoning” model from OpenAI and set the template for cheaper o-series reasoning.

Specs and pricing

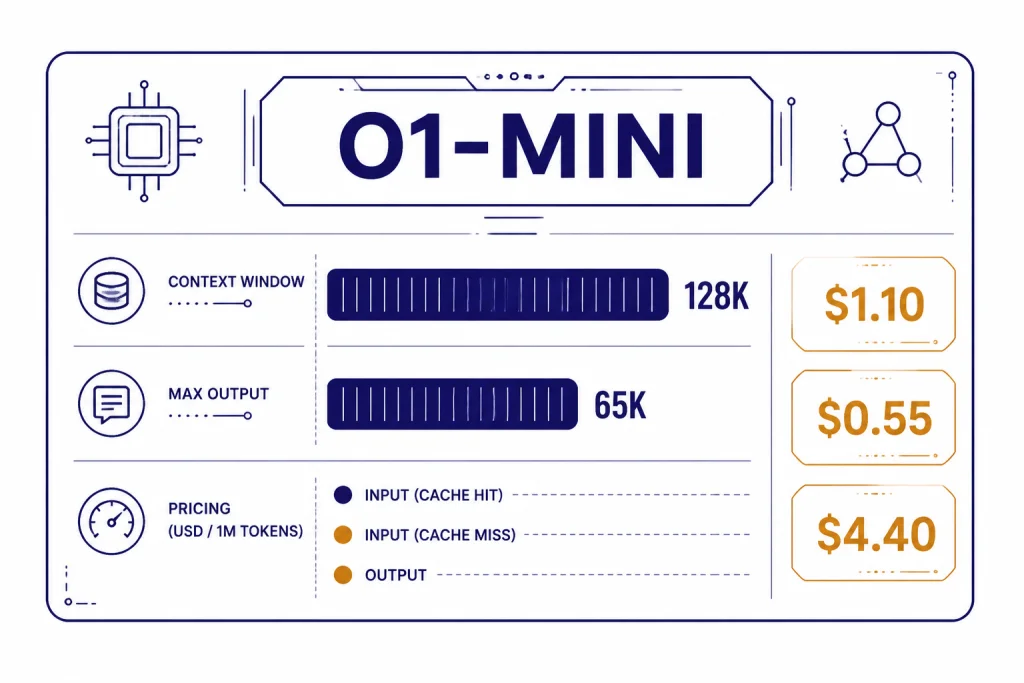

As listed in OpenAI’s API model documentation, o1-mini has a 128,000-token context window, a 65,536-token maximum output, and an October 1, 2023 knowledge cutoff.[2] It supports text input and text output, but does not support image, audio, or video modalities.[2] For a broader model-by-model view, see our context window sizes for every GPT model reference.

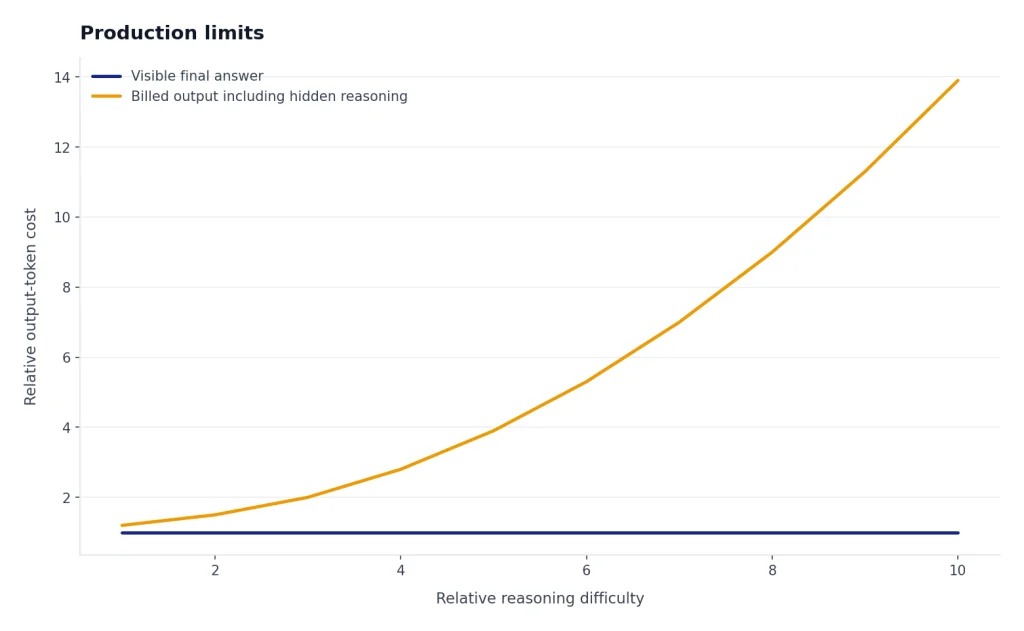

OpenAI’s pricing page lists o1-mini at $1.10 per 1 million input tokens, $0.55 per 1 million cached input tokens, and $4.40 per 1 million output tokens.[3] Reasoning tokens are not visible through the API, but OpenAI says they still occupy context-window space and are billed as output tokens.[3] That matters for cost estimates because a short final answer can still use hidden reasoning tokens.

| Item | o1-mini value | Why it matters |
|---|---|---|
| Status | Deprecated | Use mainly for legacy compatibility or comparisons.[2] |
| Context window | 128,000 tokens | Large enough for long technical prompts and code context.[2] |
| Maximum output | 65,536 tokens | Useful for long derivations, generated tests, or multi-file explanations.[2] |
| Input price | $1.10 per 1 million tokens | Low for a reasoning model, but not cheaper than o3-mini.[3] |
| Cached input price | $0.55 per 1 million tokens | Reduces cost when repeated context is cacheable.[3] |
| Output price | $4.40 per 1 million tokens | Important because reasoning tokens are billed as output.[3] |
| Modalities | Text input and text output | Not a fit for image, audio, or video workflows.[2] |

The price is the easiest part to understand. o1-mini was not meant to be the cheapest OpenAI model overall. It was meant to be a cheaper reasoning model. If raw cost is your only priority, start with our cheapest GPT model comparison and then decide whether reasoning quality justifies the output-token spend.
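To make the billing rule concrete, here is a minimal Python sketch of a per-request cost estimate using the o1-mini prices quoted above. The function name, its parameters, and the example token counts are illustrative assumptions, not part of any OpenAI SDK; the only facts taken from the article are the three prices and the rule that hidden reasoning tokens are billed at the output rate.

```python
# Prices in USD per 1 million tokens, copied from the figures quoted in this article.
INPUT_PRICE = 1.10
CACHED_INPUT_PRICE = 0.55
OUTPUT_PRICE = 4.40


def estimate_cost(input_tokens, cached_input_tokens, visible_output_tokens, reasoning_tokens):
    """Estimate the USD cost of one request (hypothetical helper).

    cached_input_tokens is the portion of input_tokens served from cache.
    reasoning_tokens are hidden from the response but billed at the output rate.
    """
    if cached_input_tokens > input_tokens:
        raise ValueError("cached_input_tokens cannot exceed input_tokens")
    uncached = input_tokens - cached_input_tokens
    billed_output = visible_output_tokens + reasoning_tokens
    total = (
        uncached * INPUT_PRICE
        + cached_input_tokens * CACHED_INPUT_PRICE
        + billed_output * OUTPUT_PRICE
    )
    return total / 1_000_000


if __name__ == "__main__":
    # Made-up request: 2,000 input tokens, 300 visible output tokens,
    # and 3,000 hidden reasoning tokens.
    naive = estimate_cost(2_000, 0, 300, 0)
    actual = estimate_cost(2_000, 0, 300, 3_000)
    print(f"ignoring reasoning tokens:  ${naive:.5f}")
    print(f"including reasoning tokens: ${actual:.5f}")
```

In this made-up case the hidden reasoning tokens account for most of the bill, which is why estimating from the visible answer length alone tends to undercount.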

Where o1-mini performed well

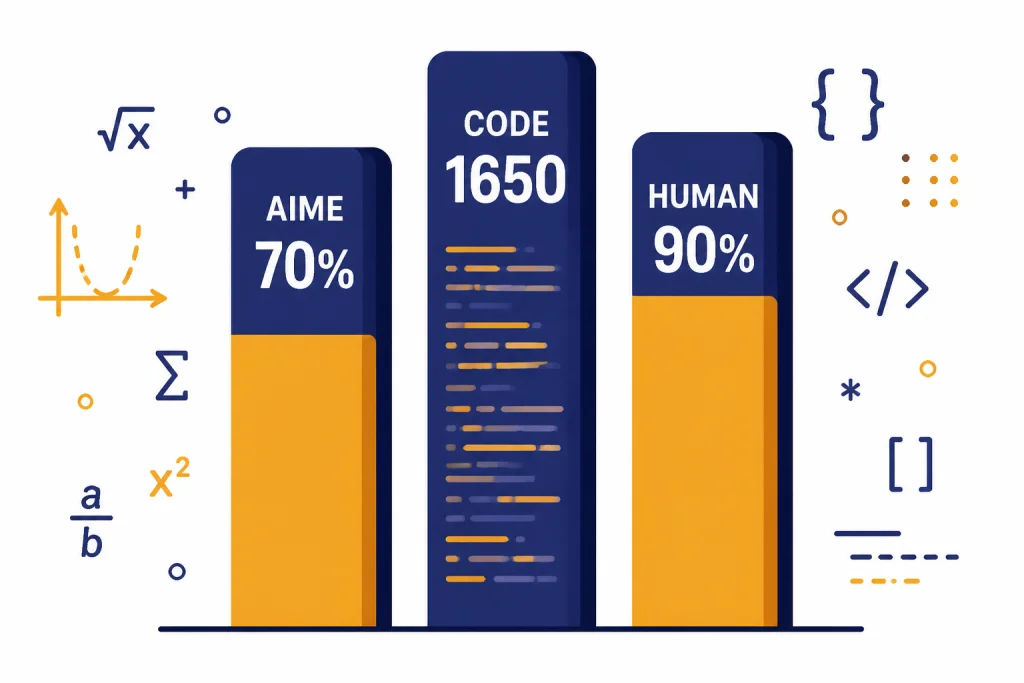

o1-mini’s launch benchmarks were concentrated in technical reasoning. OpenAI reported that o1-mini scored 70.0% on AIME, compared with 74.4% for o1 and 44.6% for o1-preview.[1] OpenAI also reported a 1650 Elo score on Codeforces, compared with 1673 for o1 and 1258 for o1-preview.[1] Those numbers explain why the model attracted attention from developers even though it was smaller than the full o1 model.

The best use cases were bounded technical tasks. Good examples include solving a math contest problem, explaining a dynamic programming recurrence, finding a bug in a small code sample, writing unit tests from a specification, or comparing two algorithmic approaches. In these cases, broad factual recall matters less than the ability to reason through a constrained problem.

OpenAI also reported that o1-mini reached 90.2% on HumanEval, while o1 and o1-preview were listed at 92.4% on the same benchmark.[1] That gap was small enough to make o1-mini attractive when the task was code-heavy and budget-sensitive. For model choice across the larger lineup, use our all GPT models compared side by side page.

One practical pattern was “narrow prompt, strict answer.” o1-mini performed best when the user gave enough context, specified the output format, and avoided asking for broad research-style knowledge. A prompt like “Given this function and these failing tests, identify the likely bug and propose a patch” fits the model better than “Explain the entire history of distributed databases and recommend a vendor.”
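As an illustration of the “narrow prompt, strict answer” pattern, the sketch below assembles a bounded debugging prompt from three pieces of context. The helper name and section labels are assumptions made for this example; it only builds a string and does not call any API.

```python
def build_narrow_prompt(function_source, failing_tests, output_format):
    """Assemble a bounded debugging prompt with an explicit answer format.

    All three arguments are plain strings supplied by the caller.
    """
    sections = [
        "Given this function and these failing tests, "
        "identify the likely bug and propose a patch.",
        "Function:\n" + function_source.strip(),
        "Failing tests:\n" + failing_tests.strip(),
        "Answer format:\n" + output_format.strip(),
    ]
    # Blank lines between sections keep the task, context, and format distinct.
    return "\n\n".join(sections)


if __name__ == "__main__":
    prompt = build_narrow_prompt(
        "def add(a, b):\n    return a - b",
        "assert add(2, 3) == 5",
        "1. One-sentence diagnosis\n2. Patched function only",
    )
    print(prompt)
```

The point of the fixed “Answer format” section is that the caller, not the model, decides the shape of the reply.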

Production limits

The biggest production limit is its current status. OpenAI marks o1-mini as deprecated in the model documentation.[2] A deprecated model can remain available for some time, but it is not the model you should choose for a fresh architecture unless you have a specific compatibility reason.

The second limit is feature support. OpenAI’s o1-mini documentation lists streaming as supported, but function calling, Structured Outputs, fine-tuning, and predicted outputs as not supported.[2] That makes o1-mini a poor fit for many modern production agents, where schemas, tool calls, and strict output validation are standard engineering requirements.
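Without Structured Outputs, an integration that still needs machine-readable replies has to validate the model’s text on the client side. The following is a minimal sketch of that idea, assuming the prompt asked for a JSON object; the function name and the fence-stripping behavior are illustrative choices, not an OpenAI feature.

```python
import json

FENCE = "`" * 3  # Markdown code-fence marker that models sometimes wrap JSON in


def parse_model_json(raw_text, required_keys):
    """Return the parsed JSON object, or None if the reply is not usable.

    Hypothetical helper: strips an optional Markdown fence, parses the JSON,
    and checks that every required key is present.
    """
    text = raw_text.strip()
    if text.startswith(FENCE):
        lines = text.splitlines()
        # Drop the opening fence line and, if present, the closing fence line.
        if lines[-1].strip() == FENCE:
            lines = lines[1:-1]
        else:
            lines = lines[1:]
        text = "\n".join(lines)
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    if any(key not in data for key in required_keys):
        return None
    return data
```

A caller would typically retry or fall back when this returns `None`, which is the kind of extra plumbing that schema-enforcing features remove.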

The third limit is modality. o1-mini is text-only for input and output, and OpenAI lists image, audio, and video as not supported.[2] If your workflow needs visual reasoning, transcription, speech, or video generation, use a model designed for those modalities. For adjacent model families, see our guides to GPT-4 Vision, Whisper, DALL-E 3, and Sora.

The fourth limit is knowledge breadth. OpenAI’s launch article said o1-mini performed worse on tasks requiring non-STEM factual knowledge.[1] That is a core tradeoff. The model was designed to be small and technical, not to act as a broad general-knowledge assistant.

There is also a cost trap. Because reasoning tokens are billed as output tokens, a workload with many difficult prompts can spend more than expected even when the visible answer is concise.[3] For production planning, measure total token usage under realistic prompts rather than estimating from final answer length alone. Our OpenAI API pricing guide explains how to think about those tradeoffs across models.
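One way to measure this from logs is to aggregate the usage data returned with each response. The sketch below assumes each record is a dict shaped like the `usage` object of a Chat Completions response, with `prompt_tokens`, `completion_tokens`, and a `completion_tokens_details.reasoning_tokens` field, and that `completion_tokens` already includes the reasoning tokens. Verify both assumptions against your own logged responses before relying on the numbers.

```python
def summarize_usage(usage_records):
    """Aggregate token usage across logged responses (hypothetical helper).

    Each record is assumed to be a dict shaped like a Chat Completions
    usage object; the reasoning-token detail may be missing.
    """
    prompt_tokens = completion_tokens = reasoning_tokens = 0
    for usage in usage_records:
        prompt_tokens += usage.get("prompt_tokens", 0)
        completion_tokens += usage.get("completion_tokens", 0)
        details = usage.get("completion_tokens_details") or {}
        reasoning_tokens += details.get("reasoning_tokens", 0)
    # Assumption: completion_tokens includes reasoning tokens, so the visible
    # answer is whatever remains after subtracting them.
    visible_tokens = completion_tokens - reasoning_tokens
    share = reasoning_tokens / completion_tokens if completion_tokens else 0.0
    return {
        "prompt_tokens": prompt_tokens,
        "completion_tokens": completion_tokens,
        "reasoning_tokens": reasoning_tokens,
        "visible_output_tokens": visible_tokens,
        "reasoning_share": share,
    }
```

A high `reasoning_share` on realistic prompts is the signal that final-answer length is a poor proxy for spend.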

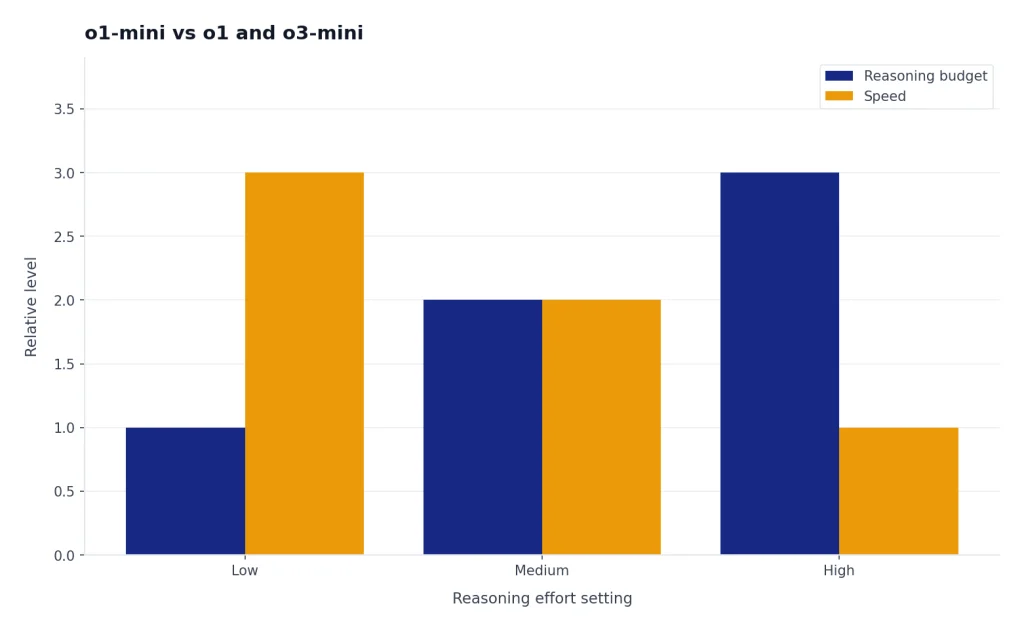

o1-mini vs o1 and o3-mini

The most useful comparison is not “Is o1-mini good?” It is “Which reasoning model should replace it?” OpenAI’s own documentation points to o3-mini as the successor for most small-reasoning workloads, because o3-mini provides higher intelligence at the same latency and price as o1-mini.[2] OpenAI’s o3-mini launch article also says o3-mini replaced o1-mini in the ChatGPT model picker and increased the Plus and Team rate limit from 50 messages per day with o1-mini to 150 messages per day with o3-mini.[4]

Compared with o1, o1-mini was cheaper and narrower. OpenAI’s quick comparison lists o1 at $15.00 per 1 million input tokens and o1-mini at $1.10 per 1 million input tokens.[2] That made o1-mini far more economical for high-volume technical reasoning, but o1 remained the broader model for harder and more general reasoning tasks. Read our OpenAI o1 guide if you need the full o1-family baseline.

| Model | Best role | Context window | Input price | Output price | Current guidance |
|---|---|---|---|---|---|
| o1-mini | Legacy small STEM reasoning | 128,000 tokens | $1.10 per 1M tokens | $4.40 per 1M tokens | Deprecated; use mainly for existing integrations.[2] |
| o1 | Broader o-series reasoning | 200,000 tokens | $15.00 per 1M tokens | $60.00 per 1M tokens | Use when broad reasoning matters more than cost.[2] |
| o3-mini | Small reasoning replacement | 200,000 tokens | $1.10 per 1M tokens | $4.40 per 1M tokens | Preferred replacement for most o1-mini workloads.[5] |

o3-mini also fixed several developer-facing gaps. OpenAI said o3-mini supports function calling, Structured Outputs, and developer messages, and allows developers to choose low, medium, or high reasoning effort.[4] Those features make it easier to put into structured applications than o1-mini. For a deeper successor view, see our OpenAI o3-mini article and our OpenAI o4-mini guide.
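The difference shows up in how a request is assembled. The sketch below builds a Chat Completions style payload as a plain dict, adding a developer message and a `reasoning_effort` value only for models in a small allow-list. The helper name and the allow-list are assumptions for illustration, and nothing is sent over the network; check the current API reference before copying the field names into production code.

```python
# Assumption for this sketch: models listed here accept developer messages
# and a reasoning-effort setting. Extend the set from the current docs.
REASONING_EFFORT_MODELS = {"o3-mini"}
VALID_EFFORTS = ("low", "medium", "high")


def build_chat_request(model, instructions, prompt, effort="medium"):
    """Return a request payload dict for the given model (hypothetical helper)."""
    if effort not in VALID_EFFORTS:
        raise ValueError(f"effort must be one of {VALID_EFFORTS}")
    if model in REASONING_EFFORT_MODELS:
        return {
            "model": model,
            "messages": [
                {"role": "developer", "content": instructions},
                {"role": "user", "content": prompt},
            ],
            "reasoning_effort": effort,
        }
    # Legacy path (for example o1-mini): fold the instructions into the
    # user message and leave out the effort setting.
    return {
        "model": model,
        "messages": [{"role": "user", "content": instructions + "\n\n" + prompt}],
    }
```

Keeping the branch in one helper makes it easier to see what changes, and what can be deleted, once the legacy model is retired from the codebase.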

There are still cases where o1-mini matters. If you maintain an older system, need to reproduce an earlier benchmark, or compare historical model behavior, the snapshot name o1-mini-2024-09-12 is still part of the documented model record.[2] If you need maximum o1-family reasoning rather than the small tier, compare with OpenAI o1-pro.

Migration guidance

For new work, start with o3-mini instead of o1-mini. OpenAI’s documentation recommends o3-mini over o1-mini, and its pricing page lists both models at $1.10 per 1 million input tokens and $4.40 per 1 million output tokens.[2][3] That means the migration decision is rarely blocked by base token price.

For existing work, test before swapping. Reasoning models can differ in style, refusal behavior, verbosity, and sensitivity to prompt structure. Build a small evaluation set from your own prompts. Include easy cases, edge cases, malformed inputs, and examples where the old system failed. Then compare answer quality, latency, total output tokens, and downstream parser success.

- Inventory prompts. Find every place that calls o1-mini, including background jobs and evaluation scripts.

- Classify the task. Separate math, coding, extraction, tool-use, and broad knowledge prompts.

- Run paired tests. Send the same prompts to o1-mini and the replacement model.

- Measure total cost. Count both visible output tokens and hidden reasoning tokens, using the usage data wherever it reports them.

- Check integration behavior. Validate JSON, tool-call assumptions, retry logic, and timeout settings.

- Roll out gradually. Move low-risk traffic first, then monitor regressions before full migration.
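The paired-test step in the checklist above can be sketched as a small harness. In this example `call_old` and `call_new` are hypothetical callables that you would wrap around your own API client, each returning a `(text, output_tokens)` pair, and `parse` stands in for whatever downstream parser your application uses. None of these names come from an OpenAI library.

```python
import time


def run_paired_eval(prompts, call_old, call_new, parse):
    """Send each prompt to both models and record comparable metrics.

    call_old / call_new: callables taking a prompt and returning
    (text, output_tokens). parse: returns None when parsing fails.
    """
    rows = []
    for prompt in prompts:
        row = {"prompt": prompt}
        for label, call in (("old", call_old), ("new", call_new)):
            start = time.perf_counter()
            text, output_tokens = call(prompt)
            row[label] = {
                "latency_s": time.perf_counter() - start,
                "output_tokens": output_tokens,
                "parsed_ok": parse(text) is not None,
            }
        rows.append(row)
    return rows


def summarize(rows, label):
    """Average the metrics for one side ("old" or "new") of the comparison."""
    n = len(rows)
    if n == 0:
        return {"parse_rate": 0.0, "mean_output_tokens": 0.0, "mean_latency_s": 0.0}
    return {
        "parse_rate": sum(r[label]["parsed_ok"] for r in rows) / n,
        "mean_output_tokens": sum(r[label]["output_tokens"] for r in rows) / n,
        "mean_latency_s": sum(r[label]["latency_s"] for r in rows) / n,
    }
```

Answer quality still needs a human or task-specific grader; this harness only covers the mechanical metrics of latency, output tokens, and parser success.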

If your application depends on exact phrasing, do not expect a drop-in match. Use snapshot pinning where available, store golden outputs, and keep old transcripts for regression testing. If your application depends on structured machine-readable responses, migration may be an improvement because newer small reasoning models support features that o1-mini lacked, such as function calling and Structured Outputs.[4]
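A golden-output check can start as a simple text-similarity comparison. The sketch below uses Python’s standard `difflib` module to flag prompts whose new answer has drifted from the stored one. The function name, the dict-based storage format, and the 0.9 threshold are assumptions for illustration; character-level similarity is a crude proxy, so treat flagged items as candidates for review, not as confirmed regressions.

```python
import difflib


def compare_to_golden(golden_outputs, new_outputs, threshold=0.9):
    """Flag prompts whose new answer drifted from the stored golden answer.

    Both arguments map a prompt ID to answer text. The threshold is an
    arbitrary starting point, not a recommended value.
    """
    regressions = []
    for prompt_id, golden in golden_outputs.items():
        candidate = new_outputs.get(prompt_id)
        if candidate is None:
            # A missing answer counts as a full mismatch.
            regressions.append((prompt_id, 0.0))
            continue
        ratio = difflib.SequenceMatcher(None, golden, candidate).ratio()
        if ratio < threshold:
            regressions.append((prompt_id, round(ratio, 3)))
    return regressions
```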

The bottom line is simple. o1-mini was a meaningful step toward affordable reasoning, but it is now best treated as a historical and legacy model. For current builds, choose a newer small reasoning model unless you have a clear need to preserve o1-mini behavior.

Frequently asked questions

Is o1-mini still available?

OpenAI’s model documentation lists o1-mini but marks it as deprecated.[2] That means it may still appear for compatibility, but it should not be your default choice for new projects.

What was o1-mini best at?

o1-mini was best at bounded STEM reasoning, especially math and coding. OpenAI’s launch benchmarks showed AIME and Codeforces results well above o1-preview and a HumanEval score close to it.[1]

How much did o1-mini cost?

OpenAI lists o1-mini at $1.10 per 1 million input tokens, $0.55 per 1 million cached input tokens, and $4.40 per 1 million output tokens.[3] Remember that reasoning tokens are billed as output tokens even when they are not visible.[3]

Should I use o1-mini or o3-mini?

Use o3-mini for most new small-reasoning workloads. OpenAI says o3-mini offers higher intelligence at the same latency and price as o1-mini.[2]

Does o1-mini support images or audio?

No. OpenAI’s o1-mini documentation lists text input and text output, while image, audio, and video are not supported.[2] Choose a multimodal model if the task involves images, screenshots, speech, or video.

Does o1-mini support function calling?

No. OpenAI’s documentation lists function calling and Structured Outputs as not supported for o1-mini.[2] If your application needs tool use or strict schemas, newer reasoning models are usually a better fit.