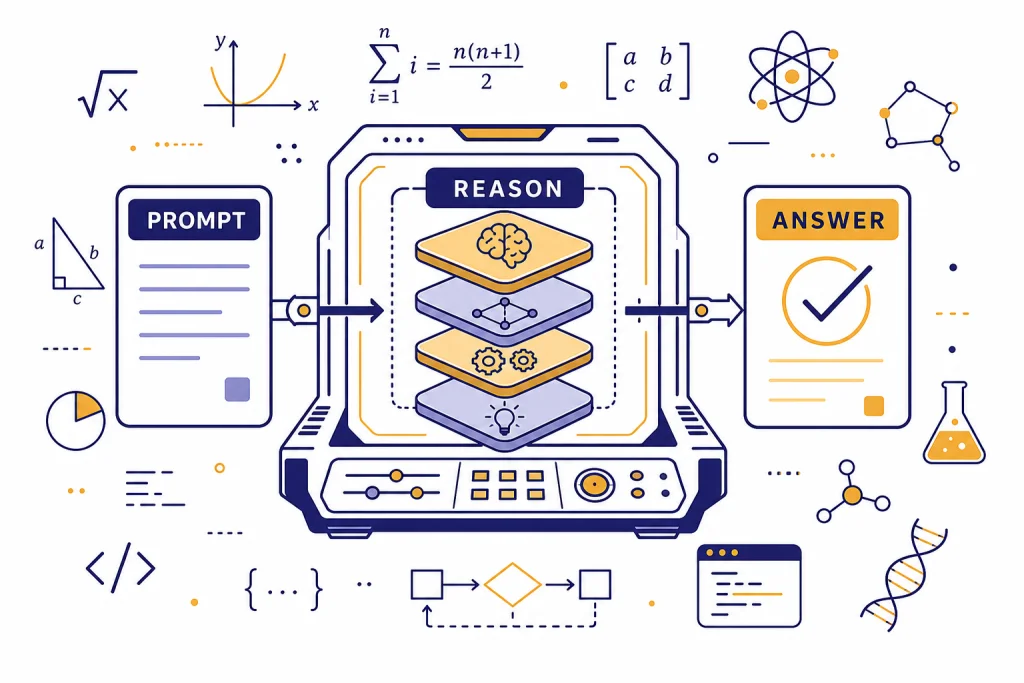

OpenAI o1 is a reasoning model designed to spend more time working through hard problems before it answers. It is best suited for multi-step coding, math, science, data analysis, and planning tasks where a fast general chat model may miss constraints or make shallow jumps. OpenAI describes o1 as a reinforcement-learning-trained model that produces internal reasoning before responding, with a 200,000-token context window and a 100,000-token maximum output limit in the API.[3] It is powerful, but it is not the default choice for every prompt. Use o1 when accuracy on complex reasoning matters more than speed or low cost.

What is OpenAI o1?

OpenAI o1 is part of OpenAI’s o-series, a family built around deliberate reasoning rather than only fast next-token response generation. OpenAI introduced the first public o1 preview on September 12, 2024, as a model series for complex tasks in science, coding, and math.[1] The production API snapshot listed for o1 is o1-2024-12-17.[3]

The simplest way to understand o1 is this: it trades some speed and cost efficiency for stronger problem solving. A general model can answer many ordinary questions well. o1 is for prompts where the answer depends on a sequence of checks, transformations, edge cases, or proofs. If you are comparing the full model lineup, start with our side-by-side comparison of all GPT models, then use this page when you need the reasoning-specific details.

OpenAI’s API documentation calls o1 the “previous full o-series reasoning model,” which means it has been superseded by later reasoning models but remains a reference point for how OpenAI’s reasoning family works.[3] That status matters. o1 is no longer the newest reasoning option, but it is still important because many later o-series design patterns are easier to understand after you understand o1.

How o1 reasons

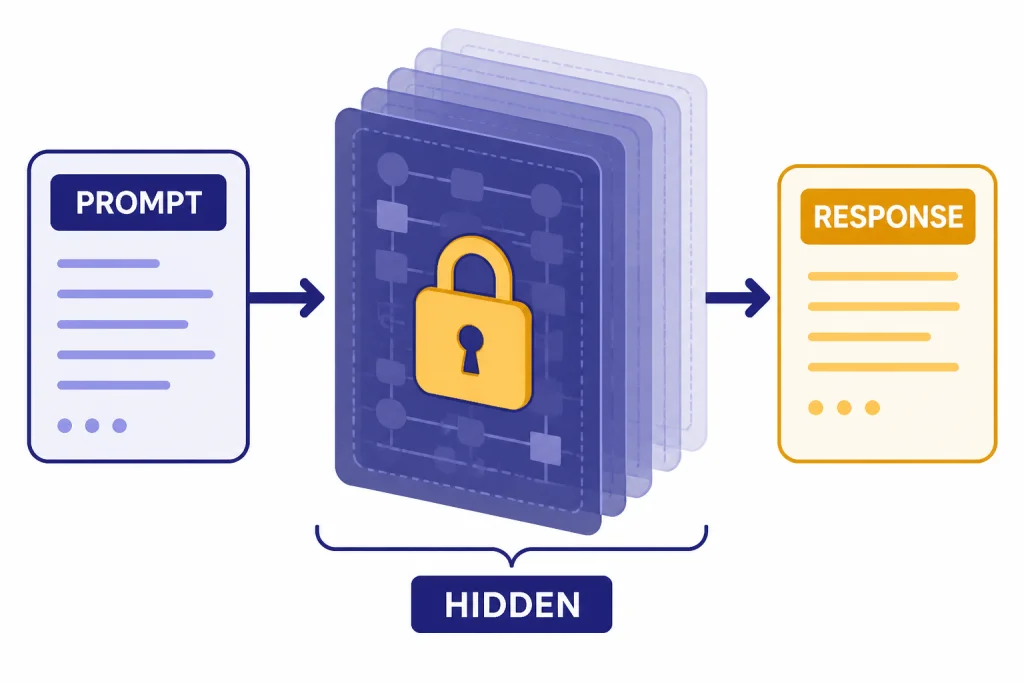

OpenAI says o1 models are trained with reinforcement learning to perform complex reasoning and “think before they answer,” producing a long internal chain of thought before responding to the user.[3] The key word is internal. The model may give you a concise explanation, a proof sketch, or a set of steps, but the hidden reasoning process itself is not the same thing as the visible answer.

This design changes how you should use the model. With many chat models, users often ask for step-by-step reasoning to push the model into more careful work. With o1, the model already allocates internal compute to the problem. You get better results by defining the task, constraints, data, and desired output clearly. You usually do not need to ask it to “think step by step.”

OpenAI’s research write-up says o1 ranked in the 89th percentile on Codeforces competitive programming questions, placed among the top 500 students in the United States on an AIME qualifier, and exceeded human PhD-level accuracy on GPQA, a science benchmark covering physics, biology, and chemistry.[2] Those figures were published alongside the early public reasoning release, and they explain why the o-series attracted attention: it was not just a larger chat model. It was trained to spend more computation on hard reasoning.

OpenAI also used a safety method called deliberative alignment for o-series models. The company describes it as training models to reason over written safety specifications before answering.[7] That does not make o1 error-free. It does mean safety behavior is part of the model’s reasoning design, not only a final filter placed after a response.

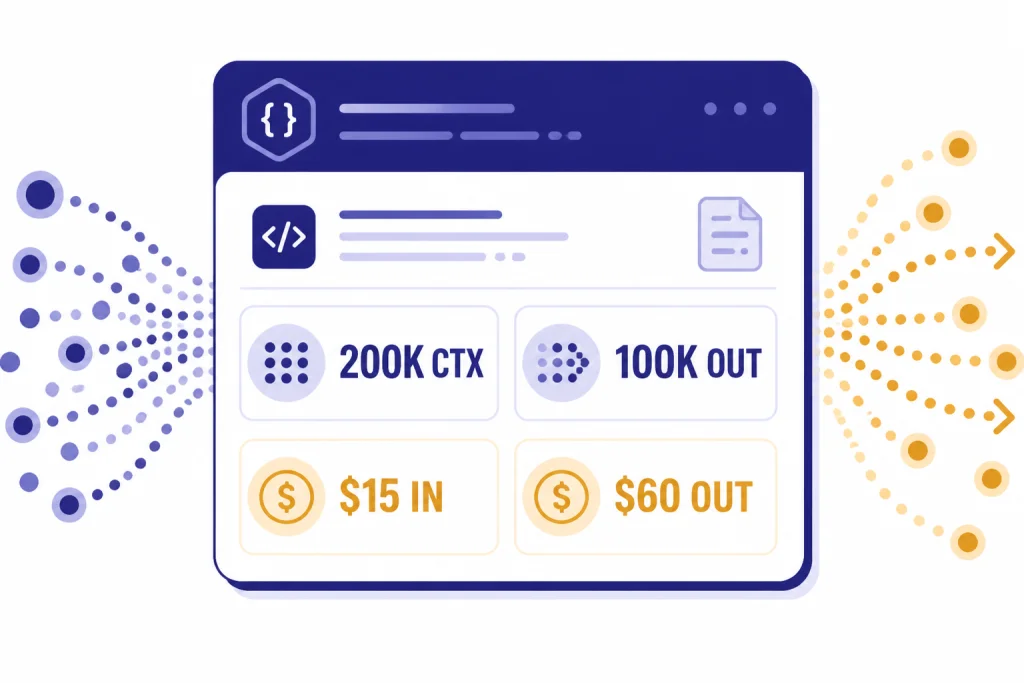

o1 specs, pricing, and API support

For developers, o1 is best understood through four practical dimensions: context size, output budget, supported inputs, and price. OpenAI lists a 200,000-token context window, a 100,000-token maximum output limit, and an October 1, 2023 knowledge cutoff for o1 in the API model documentation.[3] For a broader model-by-model view, see our context window sizes for every GPT model.

| Category | o1 detail | What it means in practice |
|---|---|---|
| API snapshot | o1-2024-12-17[3] | Use a snapshot when you need more stable behavior over time. |
| Context window | 200,000 tokens[3] | Useful for long codebases, contracts, research notes, or dense technical briefs. |
| Maximum output | 100,000 tokens[3] | Allows long structured answers, but large outputs can become expensive. |
| Modalities | Text input and output; image input only; audio and video not supported[3] | Good for text-heavy reasoning and visual analysis, not realtime voice or video generation. |
| API features | Streaming, function calling, and Structured Outputs supported[3] | Suitable for apps that need tool calls or schema-constrained responses. |
| Fine-tuning | Not supported[3] | Use prompting, retrieval, tools, or another model if you need fine-tuning. |
| Standard API pricing | $15.00 input, $7.50 cached input, and $60.00 output per 1 million tokens[4] | Reserve it for work where better reasoning justifies the cost. |

Reasoning tokens are especially important for cost planning. OpenAI’s pricing page says reasoning tokens are not visible through the API, but they still occupy space in the model’s context window and are billed as output tokens.[4] This means a short final answer can still cost more than expected if the model used substantial internal reasoning.
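The effect of hidden reasoning tokens on a bill can be sketched with simple arithmetic. The snippet below is a rough estimator, not an official calculator: it uses the per-million-token prices quoted above, and the function name and the token counts in the example are hypothetical.

```python
# Prices in USD per 1 million tokens, taken from the figures quoted in this article.
O1_INPUT_PER_M = 15.00
O1_CACHED_INPUT_PER_M = 7.50
O1_OUTPUT_PER_M = 60.00


def estimate_o1_cost(input_tokens, visible_output_tokens, reasoning_tokens,
                     cached_input_tokens=0):
    """Estimate the USD cost of one o1 request (hypothetical helper).

    cached_input_tokens is the part of input_tokens billed at the cached rate.
    Reasoning tokens are added to the output total because they are billed
    as output tokens even though they are not visible in the response.
    """
    if cached_input_tokens > input_tokens:
        raise ValueError("cached_input_tokens cannot exceed input_tokens")
    uncached_input = input_tokens - cached_input_tokens
    billed_output = visible_output_tokens + reasoning_tokens
    total = (
        uncached_input * O1_INPUT_PER_M
        + cached_input_tokens * O1_CACHED_INPUT_PER_M
        + billed_output * O1_OUTPUT_PER_M
    )
    return total / 1_000_000


if __name__ == "__main__":
    # Illustrative token counts only; real reasoning usage varies per request.
    with_reasoning = estimate_o1_cost(2_000, 300, 4_000)
    without_reasoning = estimate_o1_cost(2_000, 300, 0)
    print(f"with reasoning:    ${with_reasoning:.3f}")
    print(f"without reasoning: ${without_reasoning:.3f}")
```

With these made-up numbers, a 300-token visible answer costs about $0.288 once 4,000 reasoning tokens are counted, compared with about $0.048 if no reasoning tokens were billed.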

OpenAI’s developer announcement for o1-2024-12-17 also introduced a reasoning_effort parameter and said o1 used, on average, 60% fewer reasoning tokens than o1-preview for a given request.[5] If you are building against the API, pair that with logging and cost limits. Our OpenAI API pricing guide explains how to compare token costs across models.
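A request that sets reasoning effort and caps output spend might be assembled as in the sketch below. It only builds the request arguments and makes no network call. The helper name is hypothetical, and the accepted effort values (`low`, `medium`, `high`) and the `max_completion_tokens` field are assumptions about the Chat Completions API that are not stated in this article, so check the current API reference before relying on them.

```python
# Assumed effort values; verify against the current API reference.
ALLOWED_EFFORTS = ("low", "medium", "high")


def build_o1_request(prompt, effort="medium", max_completion_tokens=4_000):
    """Build keyword arguments for an o1 chat request (hypothetical helper)."""
    if effort not in ALLOWED_EFFORTS:
        raise ValueError(f"effort must be one of {ALLOWED_EFFORTS}")
    if max_completion_tokens <= 0:
        raise ValueError("max_completion_tokens must be positive")
    return {
        # Pinned snapshot named in this article.
        "model": "o1-2024-12-17",
        "reasoning_effort": effort,
        # Assumed to cap reasoning and visible output tokens together,
        # which makes it a simple per-request cost limit.
        "max_completion_tokens": max_completion_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }


if __name__ == "__main__":
    request = build_o1_request("Find the root cause of this bug.", effort="low")
    print(request)
```

With the official `openai` Python package, arguments like these would typically be passed as `client.chat.completions.create(**request)`. Logging the usage figures returned with each response is one way to apply the logging advice above.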

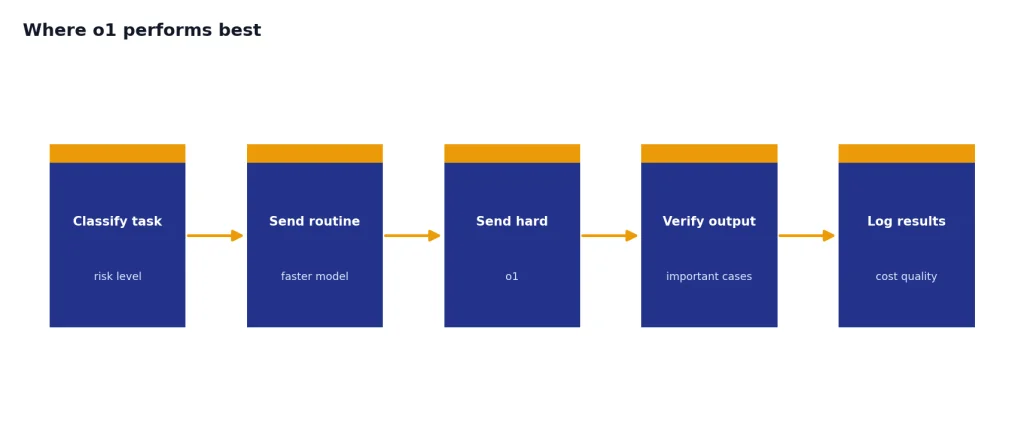

Where o1 performs best

o1 is strongest when the problem has hidden structure. That includes debugging a failing system, proving or disproving a claim, optimizing a query, reconciling conflicting requirements, designing a multi-step plan, or solving a math problem that needs several transformations. It is less compelling for short copy, casual Q&A, summarizing a simple article, or generating a quick list.

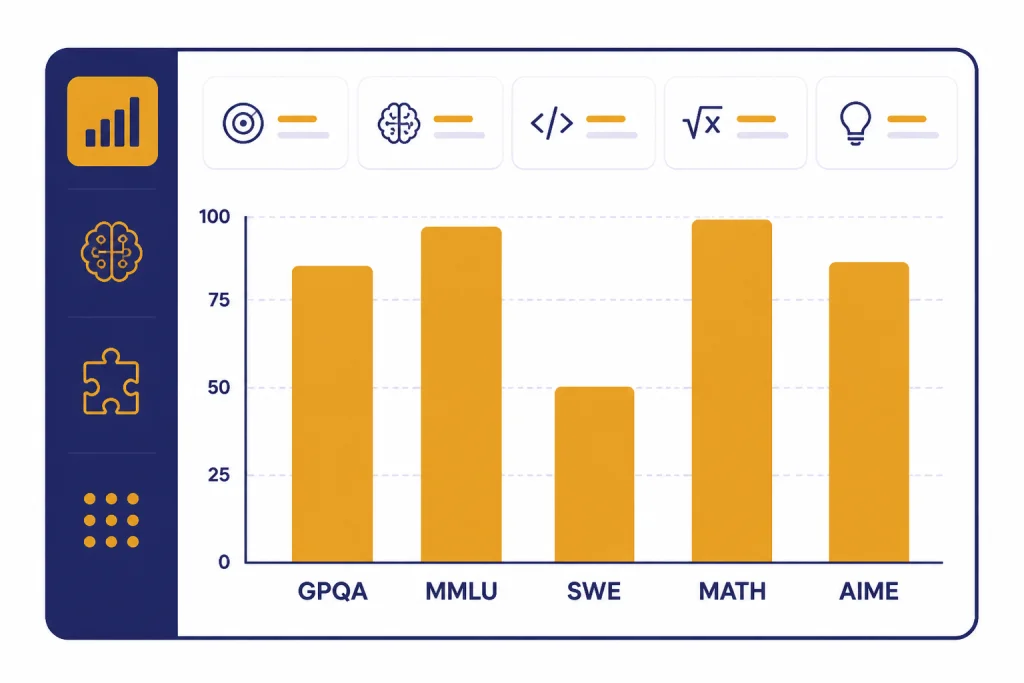

OpenAI reported that o1-2024-12-17 scored 75.7 on GPQA diamond, 91.8 on MMLU pass@1, 48.9 on SWE-bench Verified, 96.4 on MATH pass@1, and 79.2 on AIME 2024 pass@1.[5] Benchmarks are not product guarantees, but they show the shape of the model. It is built for difficult reasoning, not just fluent writing.

For coding, o1 is useful when the task involves architecture, state, concurrency, tests, or a bug that spans several files. If you only need boilerplate, a cheaper or faster model may be enough. If you are choosing a model mainly for software work, compare this with our best GPT model for coding guide.

For writing, o1 can help with argument structure, technical editing, policy analysis, and legal-style issue spotting. It is not always the most natural prose stylist. If the assignment is brand copy, tone matching, or ordinary article drafting, see our best GPT model for writing comparison before defaulting to o1.

For cost-sensitive applications, o1 should usually sit behind a routing rule. Send ordinary tasks to a smaller or faster model. Send ambiguous, high-stakes, or multi-step tasks to o1. If cost is the main constraint, our cheapest GPT model comparison will help you decide whether o1 is overkill.
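A routing rule of this kind can be very small. The sketch below is one possible shape, not a recommended production design: the `Task` fields, the `choose_model` function, and the `fast-general-model` placeholder name are all hypothetical, and real routers often rely on classifiers or heuristics to set the flags.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """A prompt plus flags describing why it might need a reasoning model."""
    prompt: str
    multi_step: bool = False
    high_stakes: bool = False
    ambiguous: bool = False


def choose_model(task, reasoning_model="o1", default_model="fast-general-model"):
    """Return the model name a task should be sent to.

    Ambiguous, high-stakes, or multi-step tasks go to the reasoning model;
    everything else goes to the cheaper default (a placeholder name here).
    """
    if task.multi_step or task.high_stakes or task.ambiguous:
        return reasoning_model
    return default_model


if __name__ == "__main__":
    print(choose_model(Task("Write a product tagline.")))
    print(choose_model(Task("Debug a race condition across three services.",
                            multi_step=True)))
```

Keeping the rule in one function makes it easy to log which model each task was sent to and to tighten the criteria if o1 spend grows faster than expected.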

o1 vs. o1-preview, o1-mini, and o1-pro

The o1 name can be confusing because OpenAI used several related labels. o1-preview was the first public preview. o1 is the full reasoning model. o1-mini is a faster, cheaper reasoning model for STEM-style tasks. o1-pro is a heavier version intended to spend more compute on the hardest prompts.[1][6][9]

| Model | Best fit | Main tradeoff |
|---|---|---|
| o1-preview | Early access to the first public o-series reasoning model | Deprecated in the current model listing[3] |
| o1 | General heavy reasoning across coding, math, science, and analysis | Higher cost than smaller reasoning models[4] |
| o1-mini | Lower-cost STEM reasoning, especially math and coding | Less broad world knowledge than the full o1 family, according to OpenAI’s launch note[9] |
| o1-pro | Harder prompts where reliability matters more than response time | More compute and a higher-priced tier in ChatGPT Pro and the API[6][4] |

Use o1-mini when the task is mostly coding or math and you need a lower-cost model. Use o1-pro when you are working on a small number of difficult prompts and want the model to spend more compute. If you are comparing with later reasoning models, see our guides to OpenAI o3, OpenAI o3-pro, and OpenAI o4-mini.

Speed is another reason to route carefully. Reasoning models can be slower because their value comes from extra internal work. If latency is the deciding factor, compare against our fastest GPT model guide before choosing o1 for a user-facing workflow.

How to prompt o1 well

o1 responds best to clear tasks with explicit constraints. Do not bury the goal. Start with the desired outcome, then provide facts, limits, and the output format. If the model must choose among options, tell it what criteria matter most.

A strong o1 prompt often has this shape:

Task: Find the likely root cause of this bug.

Context: The service fails only after cache warmup.

Constraints: Do not assume access to production logs. Prefer explanations that fit all symptoms.

Evidence: [paste logs, code, traces]

Output: Give the top hypotheses, how to test each one, and the smallest safe fix.

For analysis tasks, ask o1 to separate assumptions from conclusions. For coding tasks, ask for a patch plan before code. For mathematical work, ask it to state definitions and check edge cases. For business or legal-style work, ask it to identify missing information and explain how uncertainty affects the recommendation.

Avoid long motivational instructions. “Be brilliant,” “think harder,” and “do your best” usually add noise. o1 already spends internal compute on reasoning. Your job is to give it the right problem boundary.
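If you send many prompts of this kind, the Task / Context / Constraints / Evidence / Output shape shown earlier can be assembled in code so that no section is accidentally left out. This is a minimal sketch: the function name and its validation rule are hypothetical, and the section labels simply mirror the example prompt.

```python
def build_o1_prompt(task, context, constraints, evidence, output):
    """Assemble a sectioned prompt; constraints is a list of short sentences."""
    sections = [
        ("Task", task),
        ("Context", context),
        ("Constraints", " ".join(constraints)),
        ("Evidence", evidence),
        ("Output", output),
    ]
    # Refuse to build a prompt with an empty section, since a missing
    # constraint or output format is a common cause of vague answers.
    missing = [name for name, value in sections if not value.strip()]
    if missing:
        raise ValueError(f"empty prompt sections: {', '.join(missing)}")
    return "\n\n".join(f"{name}: {value.strip()}" for name, value in sections)


if __name__ == "__main__":
    prompt = build_o1_prompt(
        task="Find the likely root cause of this bug.",
        context="The service fails only after cache warmup.",
        constraints=[
            "Do not assume access to production logs.",
            "Prefer explanations that fit all symptoms.",
        ],
        evidence="[paste logs, code, traces]",
        output="Give the top hypotheses, how to test each one, "
               "and the smallest safe fix.",
    )
    print(prompt)
```

The builder deliberately adds no motivational filler. It only enforces that the goal, limits, and output format are present.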

Limits and cautions

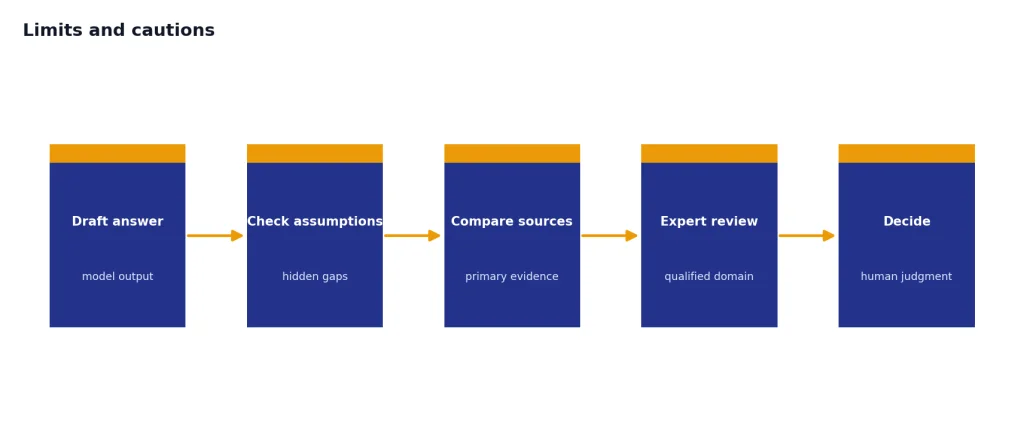

o1 can still be wrong. A reasoning model may make a more elaborate mistake than a simpler model. Treat it as a strong analyst, not as an authority. For medical, legal, financial, or safety-critical use, verify outputs against primary sources and qualified experts.

OpenAI has not published an official parameter count for o1. Any article that claims a precise parameter count without an OpenAI source is guessing. The useful public facts are the model behavior, API limits, pricing, supported modalities, and benchmark results that OpenAI has published.

o1 is also not the right model for every media task. It is not an image generator, video generator, speech-to-text model, or text-to-speech system. For those jobs, see our guides to DALL-E 3, Sora, and Whisper.

The practical rule is simple. Use o1 when the cost of a shallow answer is high. Use a faster or cheaper model when the task is routine. Use o1-pro when a single hard answer is worth extra compute. Use o1-mini or a later small reasoning model when you want reasoning behavior at a lower cost.

Frequently asked questions

Is o1 still worth using?

Yes, when you need deliberate reasoning on hard tasks and your workflow already supports its cost and latency. It is not the newest reasoning model, but it remains a useful reference model in the o-series. For new builds, compare it with newer o-series and GPT models before standardizing on it.

What is the context window for o1?

OpenAI lists o1 with a 200,000-token context window and a 100,000-token maximum output limit in its API model documentation.[3] That large window helps with long technical inputs, but reasoning tokens can still consume context and increase cost.[4]

How much does o1 cost in the API?

OpenAI lists standard o1 API pricing at $15.00 per 1 million input tokens, $7.50 per 1 million cached input tokens, and $60.00 per 1 million output tokens.[4] Output pricing matters because hidden reasoning tokens are billed as output tokens.[4]

Does o1 show its chain of thought?

No. OpenAI describes o1 as producing an internal chain of thought before it answers, and that reasoning is separate from the visible response.[3] The reasoning tokens are not visible through the API.[4] You can ask for a concise explanation, justification, checklist, or proof outline instead.

Is o1 better than GPT-4o?

For hard reasoning, OpenAI positioned o1 ahead of earlier general models. In its o1-preview launch, OpenAI reported that GPT-4o solved 13% of problems in an IMO qualifying exam while the reasoning model scored 83%.[1] For everyday chat, speed, and multimodal interaction, a general model may still be the better fit.

Does OpenAI publish o1’s parameter count?

No. OpenAI has not published an official figure for this. Treat any precise public parameter estimate for o1 as unverified unless OpenAI releases it directly.