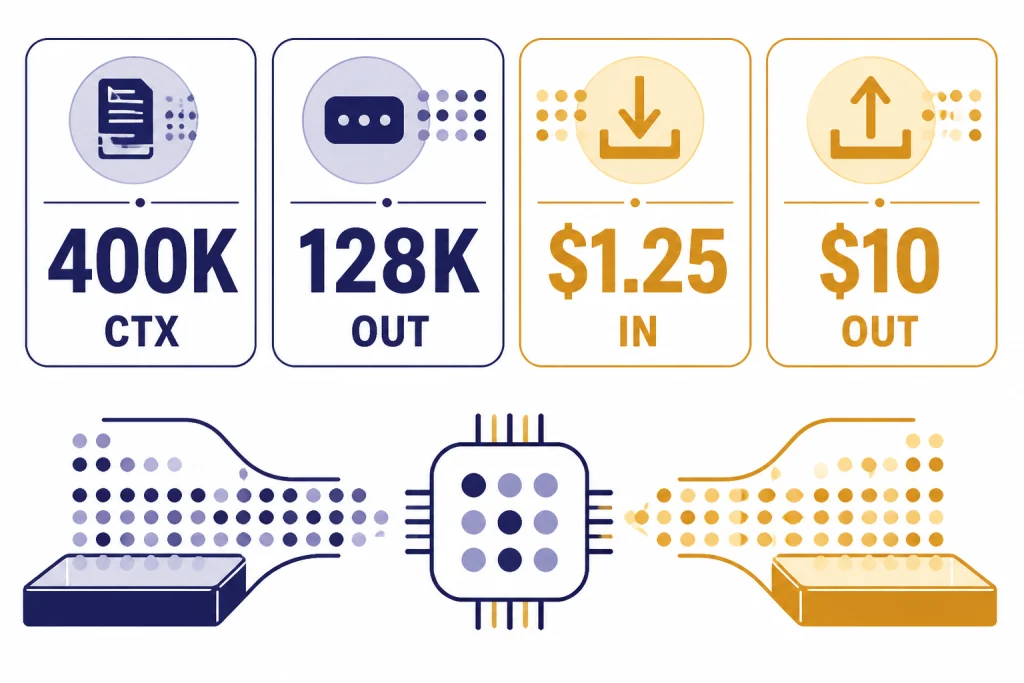

GPT-5.1 was OpenAI’s November 2025 update to the GPT-5 family, focused less on a new architecture label and more on practical behavior: warmer ChatGPT replies, better instruction following, adaptive reasoning, and developer controls for lower-latency responses.[1][8] As of March 23, 2026, the important status change is that GPT-5.1 is no longer selectable in ChatGPT; OpenAI retired GPT-5.1 models from normal chats and GPTs on March 11, 2026, while keeping them available through the OpenAI API.[4][13] For developers, the main API facts are a 400,000-token context window, 128,000 max output tokens, and pricing of $1.25 per 1 million input tokens and $10.00 per 1 million output tokens.[3][10]

What changed in GPT-5.1

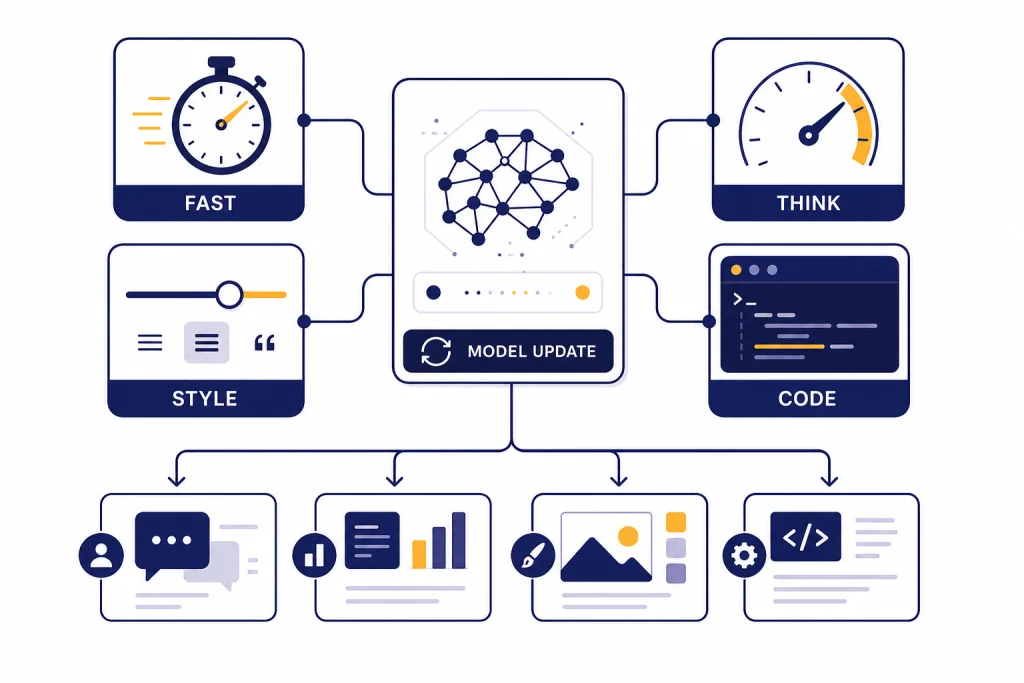

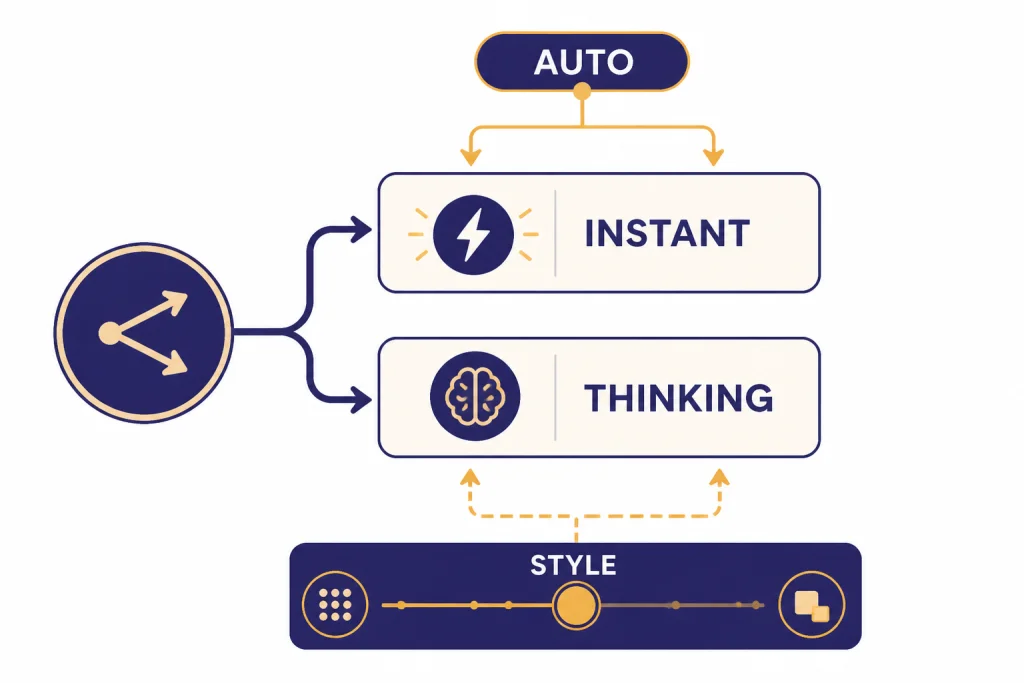

GPT-5.1 changed the everyday feel of the GPT-5 family more than it changed the product category. OpenAI introduced GPT-5.1 Instant for faster, more conversational answers and GPT-5.1 Thinking for harder reasoning tasks that need more time and clearer explanations.[1][8] The update also added adaptive reasoning, expanded tone controls in ChatGPT, and a developer-facing none reasoning setting for latency-sensitive API calls.[2][7]

The short version is simple: GPT-5.1 was the “make GPT-5 easier to live with” release. It tried to fix the two complaints that often matter most after a major model launch: answers that feel too stiff and reasoning that takes too long on easy prompts. For a broader family-level comparison, see the side-by-side comparison of all GPT models after reading this update.

GPT-5.1 timeline and current availability

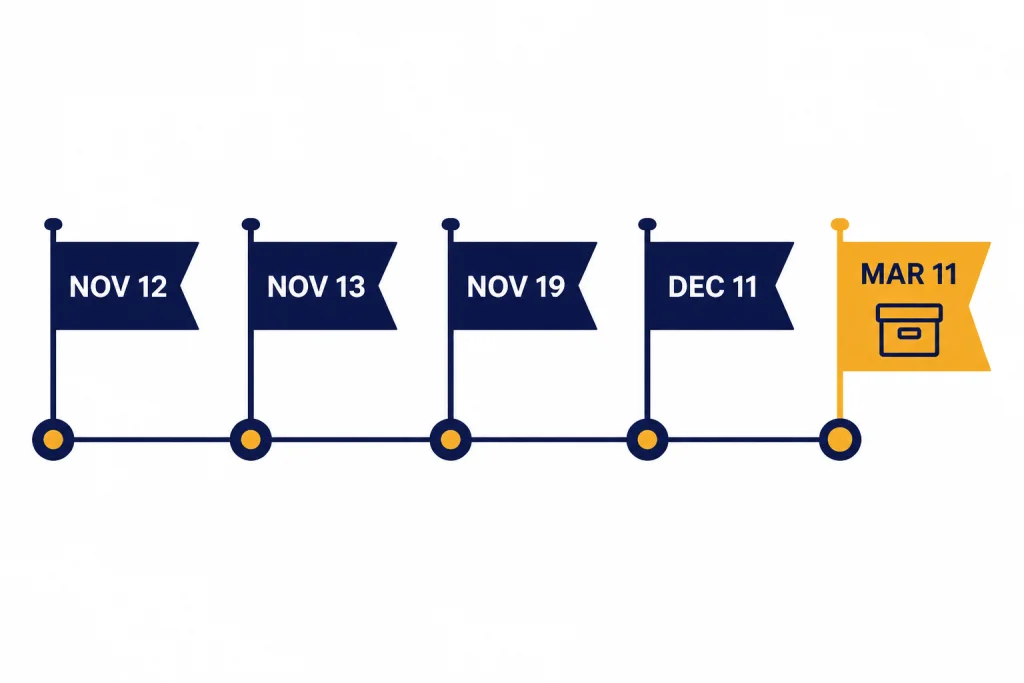

GPT-5.1 had a short life as the visible default generation inside ChatGPT. OpenAI announced GPT-5.1 on November 12, 2025, then followed with a developer announcement on November 13, 2025.[1][8][2][11] OpenAI later said GPT-5.1 would remain available to paid ChatGPT users under legacy models for three months after the GPT-5.2 launch, and then OpenAI retired GPT-5.1 from normal ChatGPT chats and GPTs on March 11, 2026.[5][4][13]

| Date | Event | Why it mattered | Sources |
|---|---|---|---|
| November 12, 2025 | GPT-5.1 Instant and GPT-5.1 Thinking announced for ChatGPT. | OpenAI framed the release around smarter, warmer, and more customizable ChatGPT behavior. | [1][8] |
| November 13, 2025 | OpenAI published the developer update for GPT-5.1. | Developers got clearer guidance on reasoning effort, latency, tools, and migration from earlier GPT-5 models. | [2][11] |
| November 19, 2025 | GPT-5.1-Codex-Max was introduced in OpenAI model release notes. | OpenAI positioned it as a frontier agentic coding model for long-running, project-scale work. | [6][11] |
| December 11, 2025 | GPT-5.2 launched and GPT-5.1 moved toward legacy status in ChatGPT. | OpenAI said GPT-5.1 would remain under legacy models for paid users for three months. | [5][14] |
| March 11, 2026 | GPT-5.1 models were retired from normal ChatGPT chats and GPTs. | The API remained available, but ChatGPT users lost GPT-5.1 as a selectable model. | [4][13] |

That timeline matters because many readers now reach this page after seeing old references to GPT-5.1 in prompts, screenshots, or coding tools. As of March 23, 2026, if you use ChatGPT directly, GPT-5.1 is a historical model choice, not a current model picker option.[4][13] If you use the API, GPT-5.1 remains relevant because OpenAI has not announced an API retirement date for it.[4][5]

ChatGPT feature notes

OpenAI split the ChatGPT experience into GPT-5.1 Instant and GPT-5.1 Thinking.[1][8] Instant was the everyday model, tuned for a warmer default tone, better conversational flow, and stronger instruction following. Thinking was the advanced reasoning option, meant to spend less time on simple questions and more time on complex problems.[1][9]

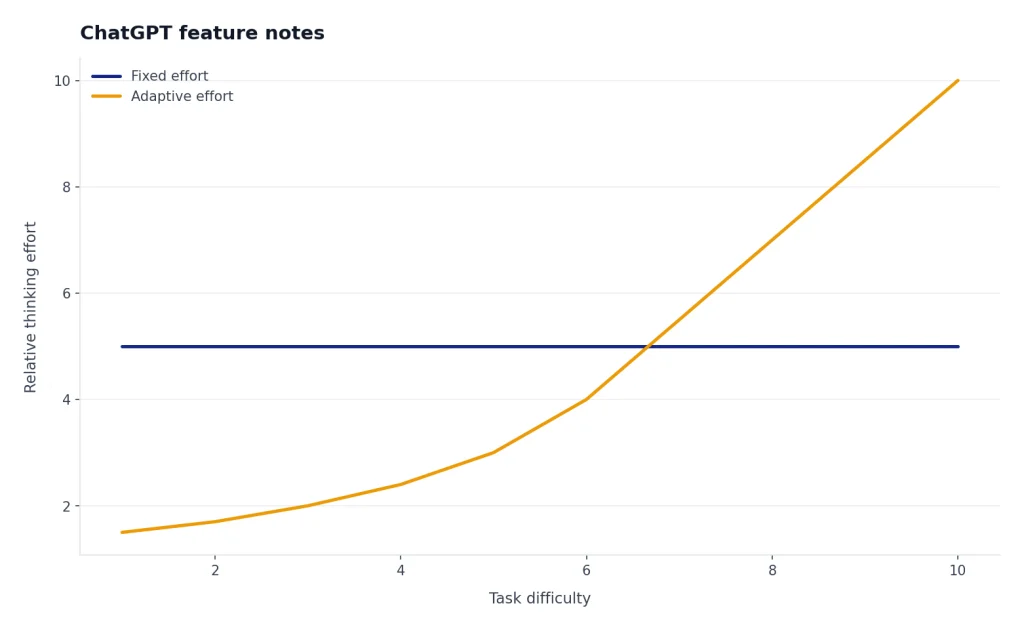

The most important behavior change was adaptive reasoning. GPT-5.1 Instant could decide when to think before answering a harder prompt, while GPT-5.1 Thinking could vary its thinking time more precisely by task difficulty.[1][7] OpenAI said that, compared with GPT-5 Thinking on a representative distribution of ChatGPT tasks, GPT-5.1 Thinking was roughly twice as fast on the fastest tasks and twice as slow on the slowest ones.[1][9] The slowdown on hard tasks was by design: time saved on simple questions was meant to be spent on complex problems.

The second major change was tone control. OpenAI kept Default, Friendly, and Efficient styles, added Professional, Candid, and Quirky, and kept Nerdy and Cynical available in personalization settings.[1][9] This was not only a cosmetic change. It made model behavior easier to steer without rewriting the same style instructions in every prompt.

GPT-5.1 Auto also mattered. OpenAI said GPT-5.1 Auto would continue routing each query to the model best suited for it, so most users would not need to manually choose between Instant and Thinking.[7][9] That router-first design is now a recurring pattern in ChatGPT: the model picker becomes less about choosing a raw model and more about choosing the kind of work you want done.

API specs, pricing, and limits

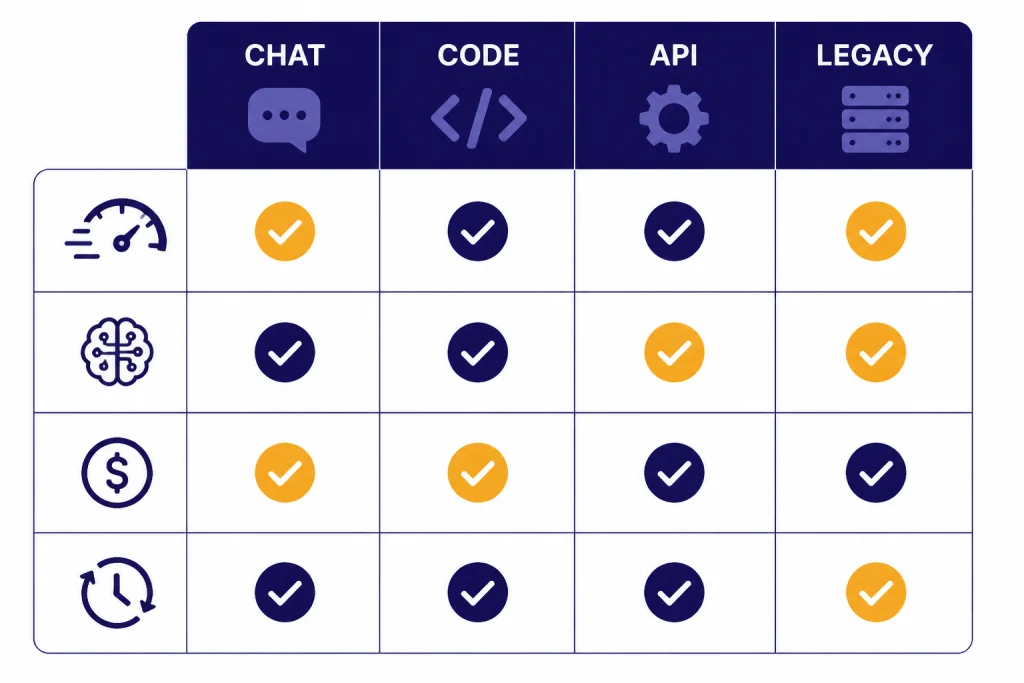

For API users, GPT-5.1 is still a concrete model, not just a past ChatGPT label. OpenAI lists GPT-5.1 as a flagship model for coding and agentic tasks with configurable reasoning and non-reasoning effort.[3][10] The official model page lists text and image input, text output, streaming, function calling, structured outputs, distillation support, and no fine-tuning support for GPT-5.1.[3][10]

The official API page lists a 400,000-token context window, 128,000 max output tokens, a September 30, 2024 knowledge cutoff, and reasoning effort options of none, low, medium, and high.[3][10] Third-party model directories broadly corroborate the 400,000-token context and 128,000-token output limit, but some pricing trackers list different effective context figures for particular providers.[10][11][12] Treat OpenAI’s model page as the source of record when building directly on the OpenAI API.

| API item | OpenAI listing | Corroborating or conflicting source | Practical note |
|---|---|---|---|
| Context window | 400,000 tokens | Sim and Galaxy list 400K tokens; LangCopilot lists 200,000 tokens. | Use the OpenAI value for direct OpenAI API planning.[3][10][11][12] |
| Max output | 128,000 tokens | Sim and Galaxy also list 128K max output. | Long outputs can still be constrained by cost, latency, and app-level settings.[3][10][11] |
| Input price | $1.25 per 1 million tokens | Sim, Galaxy, and LangCopilot list $1.25 per 1 million input tokens. | Input-heavy retrieval apps benefit most from caching.[3][10][11][12] |
| Cached input price | $0.125 per 1 million tokens | Sim and LangCopilot list $0.125 per 1 million cached input tokens. | Repeated system prompts and stable document prefixes can reduce cost.[3][10][12] |
| Output price | $10.00 per 1 million tokens | Sim, Galaxy, and LangCopilot list $10.00 per 1 million output tokens. | Output-heavy agents can cost more than simple chatbots.[3][10][11][12] |

Those numbers place GPT-5.1 in a middle position: not the cheapest OpenAI option, but much cheaper than the later GPT-5.2 Pro pricing OpenAI published in December 2025.[5][3] If cost is your main constraint, compare it with the cheapest GPT model and OpenAI API pricing before committing a production workload.
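To see how those per-million prices translate into a per-request bill, here is a minimal Python sketch of a cost estimator. The three prices come from the table above; the function name and the assumption that cached tokens are a subset of input tokens billed at the cached rate are mine, so check them against OpenAI's billing documentation before relying on the result.

```python
# Prices in USD per 1 million tokens, as quoted in the table above.
INPUT_PRICE = 1.25
CACHED_INPUT_PRICE = 0.125
OUTPUT_PRICE = 10.00
TOKENS_PER_PRICE_UNIT = 1_000_000


def estimate_cost_usd(input_tokens: int, output_tokens: int,
                      cached_input_tokens: int = 0) -> float:
    """Estimate the cost of one request in USD.

    Assumption: cached_input_tokens is the portion of input_tokens
    that is billed at the cached rate instead of the normal input rate.
    """
    if min(input_tokens, output_tokens, cached_input_tokens) < 0:
        raise ValueError("token counts must be non-negative")
    if cached_input_tokens > input_tokens:
        raise ValueError("cached_input_tokens cannot exceed input_tokens")

    uncached_tokens = input_tokens - cached_input_tokens
    total = (
        uncached_tokens * INPUT_PRICE
        + cached_input_tokens * CACHED_INPUT_PRICE
        + output_tokens * OUTPUT_PRICE
    )
    return total / TOKENS_PER_PRICE_UNIT
```

Under these assumptions, a request with 100,000 input tokens and 2,000 output tokens comes to about $0.145 with no caching and about $0.055 if 80,000 of the input tokens hit the cache, which is why stable prompt prefixes matter for input-heavy workloads.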

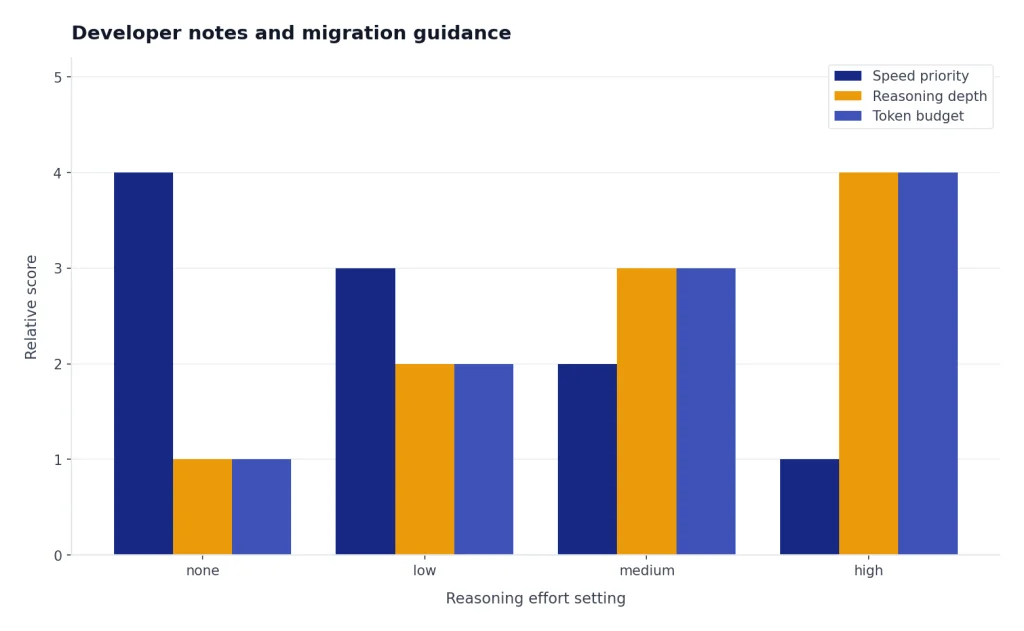

Developer notes and migration guidance

GPT-5.1’s developer story centers on control. OpenAI said GPT-5.1 added a none reasoning effort setting, increased steerability, and new coding-use-case tools compared with the previous GPT-5 model.[2][3] The none setting is especially important because it lets developers run GPT-5.1 more like a non-reasoning model when latency matters.[2][3]

The migration advice is to test reasoning levels instead of assuming a single setting works for every workflow. For support bots, summarizers, and light extraction, none or low may be enough. For planning, coding, multi-step document analysis, and agentic tool use, medium or high can be worth the extra time and tokens.[2][3]
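One way to make that advice concrete is a small lookup that assigns a starting reasoning effort to each workload and builds a request payload from it. This is a sketch, not OpenAI's recommended mapping: the task names and defaults are hypothetical, the model ID `gpt-5.1` is assumed, and the payload shape assumes the Responses API's `reasoning.effort` field, so verify the field names against the current API reference before sending anything.

```python
from typing import Optional

# Reasoning effort values the article lists for GPT-5.1.
VALID_EFFORTS = ("none", "low", "medium", "high")

# Hypothetical starting points per workload; tune them against your own evals.
DEFAULT_EFFORT_BY_TASK = {
    "support_bot": "none",
    "light_extraction": "none",
    "summarization": "low",
    "planning": "medium",
    "coding": "medium",
    "document_analysis": "high",
    "agentic_tool_use": "high",
}


def build_request(task: str, prompt: str, effort: Optional[str] = None) -> dict:
    """Build a request payload with a reasoning effort chosen per task.

    An explicit `effort` overrides the per-task default. Unknown tasks
    fall back to "medium" (an arbitrary choice for this sketch).
    """
    chosen = effort or DEFAULT_EFFORT_BY_TASK.get(task, "medium")
    if chosen not in VALID_EFFORTS:
        raise ValueError(f"unsupported reasoning effort: {chosen!r}")
    return {
        "model": "gpt-5.1",  # assumed model ID
        "input": prompt,
        "reasoning": {"effort": chosen},  # assumed payload shape
    }
```

Keeping the mapping in one place makes it easy to rerun an evaluation set with a different effort per task and compare latency, token use, and quality before changing a production default.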

OpenAI’s GPT-5.1 developer page gave a simple latency example. Asked for an npm command to list globally installed packages, GPT-5.1 at medium reasoning answered in about 2 seconds using about 50 tokens, while GPT-5 at medium reasoning took about 10 seconds and about 250 tokens.[2][11] That is roughly a fivefold reduction in both time and tokens. It is not a universal benchmark, but it shows the release goal: avoid spending reasoning budget on easy tasks.

For coding agents, GPT-5.1-Codex-Max is the more specialized branch. OpenAI’s model release notes describe GPT-5.1-Codex-Max as a frontier agentic coding model for long-running, project-scale work, using compaction to work across multiple context windows.[6][11] If your main use case is software engineering, compare GPT-5.1 with the best GPT model for coding and with OpenAI’s coding-specific model notes before choosing a default.

Original analysis: the GPT-5.1 tradeoff

The best way to understand GPT-5.1 is as a tradeoff release. It did not ask users to learn a new category of model. It asked them to trust a smarter router, a warmer default tone, and more dynamic reasoning. That is why the most important question is not “Was GPT-5.1 the most powerful model?” It is “Did GPT-5.1 spend effort in the right places?”

I would call the pattern elastic reasoning. A fixed reasoning model behaves like a car stuck in one gear. GPT-5.1 tried to shift gears automatically: quick answers for obvious prompts, deeper reasoning for hard prompts, and more style control for the human-facing layer.[1][2][7] That makes it easier to use than a model that is always fast but shallow or always thorough but slow.

The downside is predictability. When a model decides how much to think, two similar prompts can have different latency and cost. That can be fine in ChatGPT, where user patience is flexible. It can be harder in an API product with service-level targets, budget ceilings, and test suites that expect stable behavior.
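For API products with service-level targets, the variability is easier to manage when it is measured at the tail rather than on average. The sketch below uses the nearest-rank method to compute a latency percentile and check it against a target. The function names and the choice of the 95th percentile are illustrative assumptions, and the latency samples would come from your own request logs.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: int) -> float:
    """Return the nearest-rank percentile of `samples` for 0 < pct <= 100."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range 1..100")
    ordered = sorted(samples)
    # Nearest rank: the smallest value with at least pct% of samples at or below it.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def meets_latency_target(latencies_s: Sequence[float], p95_target_s: float) -> bool:
    """Check whether the 95th-percentile latency stays within the target."""
    return percentile(latencies_s, 95) <= p95_target_s
```

With ten made-up samples where nine responses take about 1 second and one takes 6 seconds, the mean is about 1.5 seconds but the nearest-rank 95th percentile is 6 seconds. A 2-second target would look safe on the average and still fail on the tail, which is the kind of gap adaptive reasoning can open.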

The decision framework is straightforward. Use GPT-5.1 when you need a strong general model with controllable reasoning, long context, and good coding behavior. Avoid it when you need the cheapest possible token price, a current ChatGPT model picker option, or a model family that OpenAI is actively positioning as its newest flagship. For raw model comparisons, keep the guides to context window sizes for every GPT model, the fastest GPT model, and the most powerful GPT model open while you test.

Who should still use GPT-5.1

Use GPT-5.1 through the API if your application already performs well on it and you need continuity. OpenAI says GPT-5.1 remains available through the API and that it will provide advance notice before any future API retirement.[4][5] That makes it reasonable to keep stable production systems on GPT-5.1 while testing newer models in parallel.

Use GPT-5.1 for long-context workflows when the 400,000-token context window is enough and the $1.25 input / $10.00 output price point fits your budget.[3][10] That includes document review, codebase Q&A, tool-using agents, and structured extraction where GPT-5.1’s reasoning controls help you balance speed against thoroughness.
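A quick pre-flight check can tell you whether a long-context request is likely to fit before you send it. This sketch uses the 400,000-token context window and 128,000-token output cap quoted above. It assumes that input and requested output share the same context window, and that token counts come from your own tokenizer; both are assumptions worth confirming against OpenAI's model page.

```python
# Limits quoted in the article for GPT-5.1.
CONTEXT_WINDOW_TOKENS = 400_000
MAX_OUTPUT_TOKENS = 128_000


def fits_context(input_tokens: int, requested_output_tokens: int) -> bool:
    """Return True if the request fits the limits under this sketch's assumptions.

    Assumption: input tokens plus requested output tokens must fit inside
    the context window, and output cannot exceed the max-output cap.
    """
    if input_tokens < 0 or requested_output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    if requested_output_tokens > MAX_OUTPUT_TOKENS:
        return False
    return input_tokens + requested_output_tokens <= CONTEXT_WINDOW_TOKENS


def max_input_tokens(requested_output_tokens: int) -> int:
    """Largest input size that still leaves room for the requested output."""
    if not 0 <= requested_output_tokens <= MAX_OUTPUT_TOKENS:
        raise ValueError("requested_output_tokens is outside the output limit")
    return CONTEXT_WINDOW_TOKENS - requested_output_tokens
```

Under these assumptions, reserving the full 128,000 output tokens leaves 272,000 tokens for input, so a document set that only just fits at a small output budget may need trimming or chunking if you later ask for longer answers.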

Do not use GPT-5.1 as the first choice for new ChatGPT-only workflows because it is no longer available in normal ChatGPT chats or GPTs as of March 11, 2026.[4][13] If you mainly write, brainstorm, or edit in ChatGPT, start with the current model options and then compare writing quality against the best GPT model for writing.

Also separate GPT-5.1 from OpenAI’s media models. GPT-5.1 is not an image generation model, a video model, or a speech model. For those use cases, use dedicated guides such as DALL-E 3, Sora, and Whisper.

Frequently asked questions

What is GPT-5.1?

GPT-5.1 is an OpenAI GPT-5 family update announced on November 12, 2025 for ChatGPT and followed by a developer release on November 13, 2025.[1][8][2][11] It introduced GPT-5.1 Instant and GPT-5.1 Thinking, with a focus on warmer conversation, better instruction following, and adaptive reasoning.[1][7] As of March 23, 2026, it is best understood as an API-available legacy model rather than a current ChatGPT picker option.[4][13]

Is GPT-5.1 still available in ChatGPT?

No. OpenAI says GPT-5.1 models were retired from normal ChatGPT chats and GPTs on March 11, 2026.[4][13] The same OpenAI Help Center note says the models will continue to be available through the OpenAI API.[4][5] If you see GPT-5.1 in an old guide or screenshot, that likely reflects the November 2025 to March 2026 ChatGPT window.[1][4]

What were the main GPT-5.1 models?

The main ChatGPT models were GPT-5.1 Instant and GPT-5.1 Thinking.[1][8] Instant was designed for everyday use with faster, warmer answers, while Thinking was designed for deeper reasoning and clearer explanations on harder work.[1][9] OpenAI also documented GPT-5.1 Auto as a router that could choose the better model for a query.[7][9]

How much does GPT-5.1 cost in the API?

OpenAI lists GPT-5.1 at $1.25 per 1 million input tokens, $0.125 per 1 million cached input tokens, and $10.00 per 1 million output tokens.[3][10] Sim, Galaxy, and LangCopilot list the same $1.25 input and $10.00 output pricing, and Sim and LangCopilot also list the $0.125 cached input price.[10][11][12] Always check the official pricing page before a production launch because API prices can change.

What is the GPT-5.1 context window?

OpenAI’s official GPT-5.1 model page lists a 400,000-token context window and 128,000 max output tokens.[3][10] Sim and Galaxy also list 400K context and 128K output.[10][11] LangCopilot lists a conflicting 200,000-token context value, so use OpenAI’s figure when building directly against OpenAI’s API.[12][3]

Did GPT-5.1 replace GPT-5?

GPT-5.1 was an iterative update inside the GPT-5 generation, not a separate generation. OpenAI said the name GPT-5.1 reflected meaningful improvements while staying within the GPT-5 generation.[1][8] In ChatGPT, GPT-5 remained under legacy models for paid subscribers for three months after the GPT-5.1 launch.[1][9]

Should developers migrate from GPT-5.1 now?

Not automatically. OpenAI says GPT-5.1 remains available through the API and has not announced a GPT-5.1 API retirement date.[4][5] Developers should keep stable GPT-5.1 workloads running if quality, latency, and cost are acceptable, while testing newer models on a representative evaluation set. If your app depends on ChatGPT model picker behavior rather than the API, you should migrate because GPT-5.1 was removed from ChatGPT on March 11, 2026.[4][13]
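The "test in parallel" advice can be turned into an explicit gate. The sketch below compares summary metrics for the current model and a candidate and only recommends migrating when quality holds and latency and cost stay within a tolerance. The class, the metric names, and the 10% tolerances are all hypothetical; the numbers would come from your own evaluation set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalSummary:
    quality: float                   # e.g. pass rate on your eval set, 0.0 to 1.0
    p95_latency_s: float             # 95th-percentile latency in seconds
    cost_per_1k_requests_usd: float  # measured or estimated spend


def should_migrate(baseline: EvalSummary, candidate: EvalSummary,
                   min_quality_gain: float = 0.0,
                   max_latency_ratio: float = 1.1,
                   max_cost_ratio: float = 1.1) -> bool:
    """Recommend migration only if no tracked metric regresses past its tolerance."""
    quality_ok = candidate.quality >= baseline.quality + min_quality_gain
    latency_ok = candidate.p95_latency_s <= baseline.p95_latency_s * max_latency_ratio
    cost_ok = (candidate.cost_per_1k_requests_usd
               <= baseline.cost_per_1k_requests_usd * max_cost_ratio)
    return quality_ok and latency_ok and cost_ok
```

A gate like this keeps the default answer at "stay on GPT-5.1" until a newer model clears every bar on your own workload, which matches the continuity argument above.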

Bottom line

GPT-5.1 was the GPT-5 family’s usability correction: warmer answers, more flexible reasoning, better developer controls, and a clearer split between fast everyday work and deeper thinking.[1][2][7] Its ChatGPT moment has passed, but its API role remains useful for teams that want stable GPT-5.1 behavior and predictable pricing.

Watch OpenAI’s API deprecation notices next. The key practical question after March 11, 2026 is not whether GPT-5.1 still exists; it does, in the API.[4][5] The question is how long developers will still have a reason to choose it over newer GPT-5 family models.