The choice between GPT and the o-series comes down to response style and compute budget. GPT models are the better default when you want fast drafting, summarizing, rewriting, extraction, chat, and high-volume automation. OpenAI’s o-series models are better when the task needs deliberate multi-step reasoning, such as hard coding bugs, math, science, visual analysis, complex planning, and tool-heavy investigation. The split is less absolute than it used to be because newer GPT-5 generation systems can decide when to think longer. Still, the practical rule remains simple: start with GPT for speed, and switch to an o-series reasoning model when correctness depends on solving a hard problem rather than producing fluent text.

Quick verdict

Use GPT first unless you know the task is reasoning-heavy. GPT models are built for broad utility, low latency, polished language, instruction following, and high-throughput workflows. OpenAI’s own API guidance describes GPT models and reasoning models as different families, and says an application might use o-series models to plan a strategy while using GPT models to execute tasks where speed and cost matter more.[1]

Use the o-series when the answer is not obvious from surface text. These models are trained to think for longer before responding, which makes them a better fit for hard analysis, difficult code repair, multi-constraint planning, math, science, and visual reasoning.[3] If you want a model-by-model map, pair this comparison with our guides “all GPT models compared side by side” and “OpenAI o1 vs o3.”

The short version: GPT is the fast workhorse. The o-series is the deliberate problem solver. GPT-5 generation systems blur that boundary by adding automatic routing between quicker answers and deeper thinking, but the old distinction still helps when you choose models manually or design an API workflow.[8]

What the names mean

GPT is OpenAI’s general model family name. In current OpenAI documentation, GPT models include products such as GPT-4o, GPT-4.1, and GPT-5 generation models. GPT-4o is described by OpenAI as a fast, intelligent, flexible GPT model, and GPT-4.1 is described as the smartest non-reasoning model in its API documentation.[7][6] For background on the term itself, see what GPT means.

The o-series is OpenAI’s reasoning-model family. OpenAI introduced o1 as the first o-series reasoning model and said it planned to keep developing the GPT series alongside the new o1 series.[2] Later o-series releases included o3 and o4-mini, which OpenAI described as models trained to think for longer before responding.[3]

The naming is confusing because both families are large language models, and the newer GPT-5 generation includes built-in thinking. The cleanest distinction is not “chat model versus reasoning model.” It is “model optimized for fast general work” versus “model optimized to spend more compute on hard reasoning.”

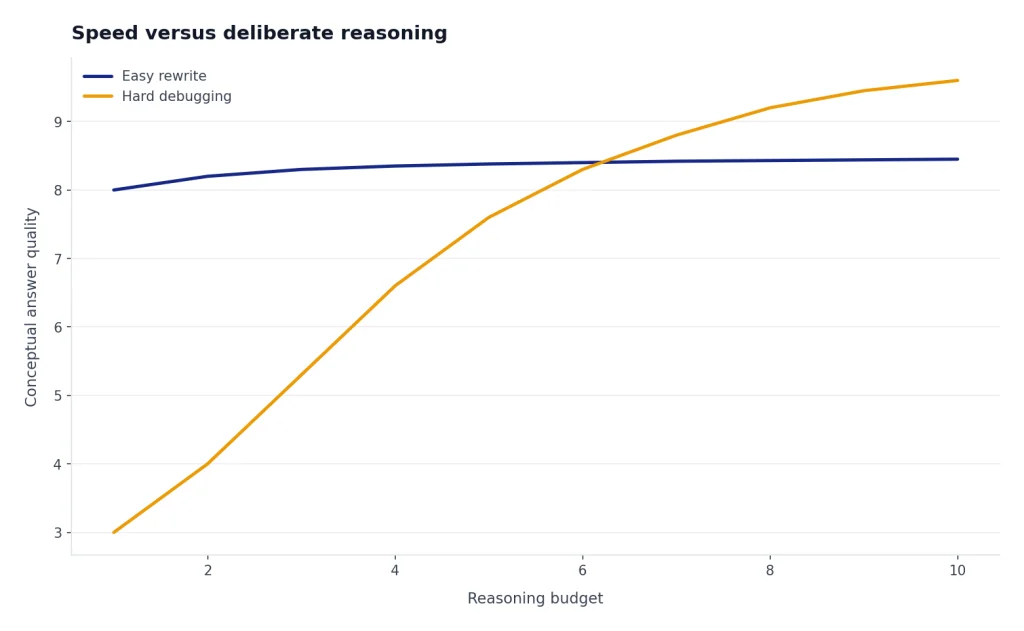

The core tradeoff: speed versus deliberate reasoning

A GPT model usually feels better for everyday work because it responds quickly and writes naturally. It is the model you want for first drafts, concise summaries, routine code snippets, email rewrites, support replies, extraction from documents, and simple data transformations. GPT-4.1, for example, is documented as having low latency without a reasoning step, which is exactly what many production apps need.[6]

An o-series model spends more compute and response time on reasoning before it answers. That can make it slower. It can also make it more reliable on tasks where a fast first answer is likely to miss a hidden constraint. OpenAI’s o3 and o4-mini system card says those models combine reasoning with tools such as web browsing, Python, image and file analysis, image generation, canvas, automations, file search, and memory.[4]

That does not mean you should use an o-series model for everything. A reasoning model can over-spend effort on a task that needed a quick rewrite. It can also cost more in an app if it generates more reasoning tokens. The best workflow is selective. Use speed by default. Escalate only when the question deserves deliberate work.
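To make the cost argument concrete, here is a minimal arithmetic sketch of selective escalation. Every number in it is hypothetical and chosen only for illustration: the prices are not real OpenAI pricing, and the token counts are made up. It also assumes reasoning tokens are billed at the same rate as visible output tokens, which you should verify against current pricing before relying on it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelCost:
    """Hypothetical per-request figures; replace with your own measurements."""
    price_per_1k_output_tokens: float  # illustrative units, not real pricing
    avg_visible_tokens: int            # tokens the user actually sees
    avg_reasoning_tokens: int          # extra tokens spent before the answer

    def cost_per_request(self) -> float:
        # Assumption: reasoning tokens are billed like visible output tokens.
        billed = self.avg_visible_tokens + self.avg_reasoning_tokens
        return billed / 1000 * self.price_per_1k_output_tokens


def blended_cost(fast: ModelCost, reasoning: ModelCost, escalation_rate: float) -> float:
    """Average cost per request when a share of traffic is escalated."""
    if not 0.0 <= escalation_rate <= 1.0:
        raise ValueError("escalation_rate must be between 0 and 1")
    return ((1 - escalation_rate) * fast.cost_per_request()
            + escalation_rate * reasoning.cost_per_request())


# Made-up example values for a fast model and a reasoning model.
fast = ModelCost(price_per_1k_output_tokens=1.0, avg_visible_tokens=500, avg_reasoning_tokens=0)
slow = ModelCost(price_per_1k_output_tokens=4.0, avg_visible_tokens=500, avg_reasoning_tokens=1500)

print(blended_cost(fast, slow, escalation_rate=0.1))
```

With these invented figures, escalating 10% of traffic averages about 1.25 units per request, compared with 8.0 units if every request went to the reasoning model. The exact ratio will differ in practice, but the shape of the tradeoff is the point.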

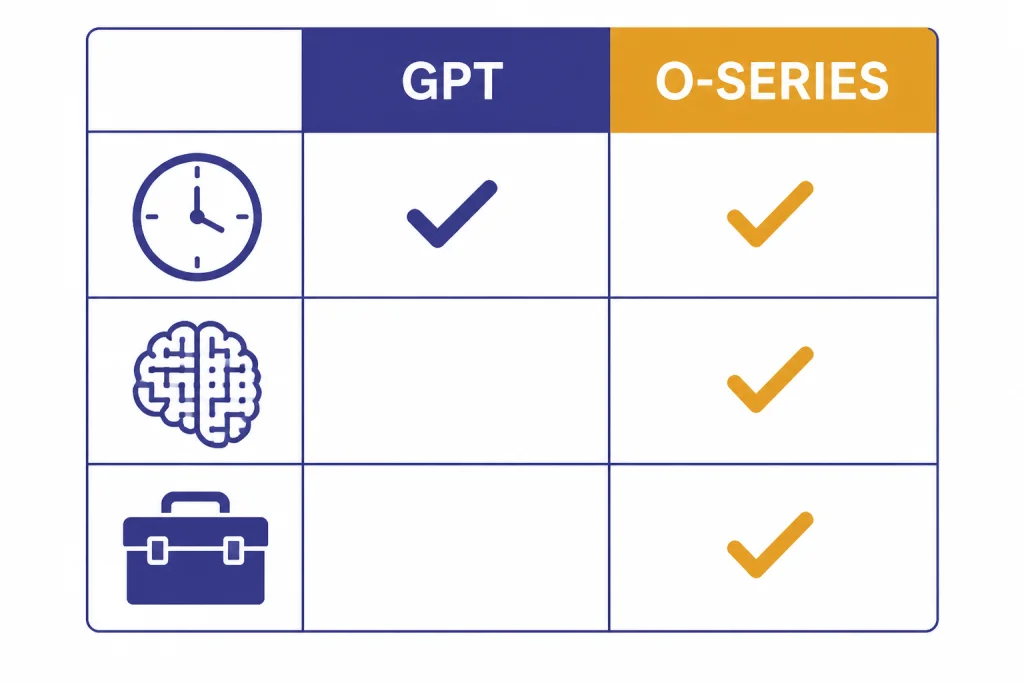

GPT vs o-series side by side

The table below gives the practical comparison. It focuses on how the model families behave in real use, not only on benchmark labels. For exact model limits and context windows, use our separate context window comparison.

| Decision point | GPT models | OpenAI o-series models |
|---|---|---|
| Best default use | Fast general work, writing, summarizing, extraction, chat, structured outputs, routine coding. | Hard reasoning, complex coding, math, science, planning, visual analysis, tool-heavy investigation. |
| Response speed | Usually the better choice when low latency matters. GPT-4.1 is documented as low latency without a reasoning step.[6] | Often slower because the model is designed to think longer before answering.[3] |
| Reasoning depth | Strong enough for many tasks, especially newer GPT-5 generation systems with built-in thinking.[8] | Purpose-built for deliberate reasoning and hard problem solving.[1] |
| Language polish | Usually the safer choice for tone, rewriting, style, and conversational flow. | Can write well, but the main advantage is solving, not prose polish. |
| Tool use | Strong tool use in supported GPT models and workflows. | o3 and o4-mini were documented with broad tool access across ChatGPT and API function calling.[3] |
| Scaling in apps | Better for high-volume automation where most requests are simple. | Better as an escalation path for high-value or high-risk requests. |

When to choose a GPT model

Choose a GPT model when you care about fast, clear, useful output more than deep search through a problem space. This includes most everyday ChatGPT work. It also includes many API workloads where latency and volume matter.

- Writing and editing. Use GPT for outlines, rewrites, tone changes, summaries, captions, and customer support drafts.

- Extraction and classification. Use GPT when the task is to pull fields from text, label a request, or reformat information.

- Routine coding. Use GPT for boilerplate, small functions, simple refactors, test generation, and code explanation.

- Interactive chat. Use GPT when the user expects a natural back-and-forth rather than a long wait.

- High-volume app flows. Use GPT when most requests are ordinary and you only need escalation for a minority of cases.

GPT-4.1 is a useful example of the GPT-side design goal. OpenAI launched GPT-4.1, GPT-4.1 mini, and GPT-4.1 nano in the API on April 14, 2025, with emphasis on coding, instruction following, long context, and faster variants.[5] GPT-4.1 nano was described as OpenAI’s fastest and cheapest model available at launch, which shows how the GPT family can scale down for latency-sensitive work.[5]

If your main question is speed, read our fastest GPT model benchmark guide. If your question is plan access rather than model behavior, start with ChatGPT Free vs Plus vs Pro.

When to choose an o-series model

Choose an o-series model when the task has a real reasoning bottleneck. The user is not just asking for text. They are asking the model to solve something. That difference matters.

- Hard coding problems. Use the o-series for debugging across files, understanding a complex codebase, or planning a multi-step implementation.

- Math and technical reasoning. Use it when a wrong shortcut could break the answer.

- Scientific or analytical work. Use it for hypothesis comparison, experimental design, and multi-source synthesis.

- Visual reasoning. Use it for diagrams, charts, screenshots, whiteboards, and messy visual inputs.

- Complex planning. Use it when the model must balance constraints, tradeoffs, dependencies, and failure modes.

OpenAI’s April 16, 2025 o3 and o4-mini announcement framed o3 as a frontier reasoning model and o4-mini as a smaller model optimized for fast, cost-efficient reasoning.[3] The same launch said o3 and o4-mini could use and combine tools in ChatGPT, including web search, file and data analysis with Python, visual reasoning, and image generation.[3]

That tool access is part of why the o-series can be better for difficult work. A model that can reason, inspect a file, run analysis, look at a chart, and revise its plan is better suited to problems with moving parts. For a deeper internal comparison, see OpenAI o1 vs o1-pro and our o1 versus o3 breakdown.

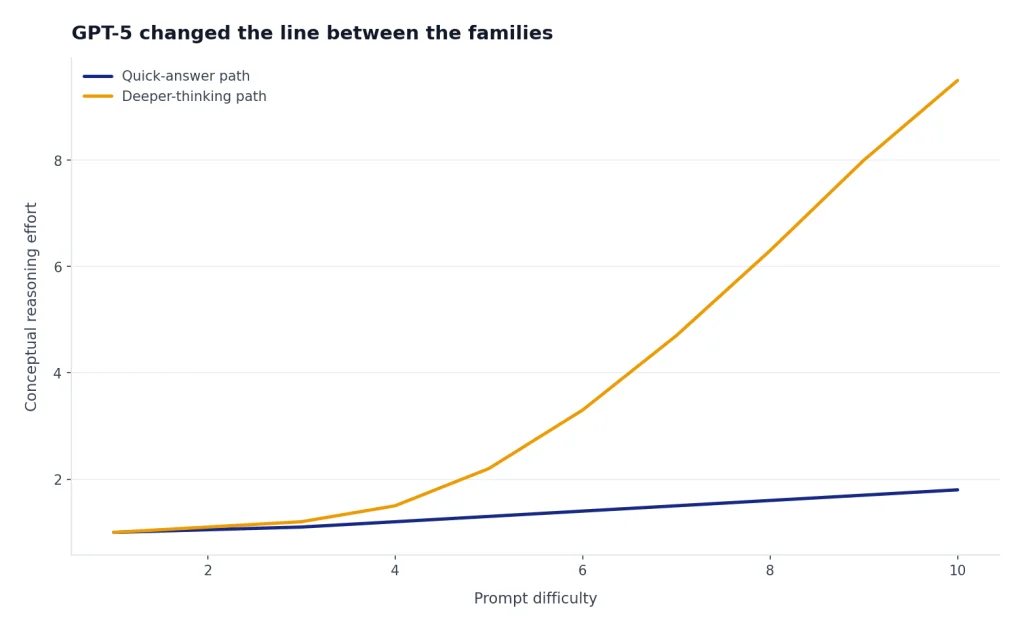

How GPT-5 changed the line between the families

GPT-5 made the GPT versus o-series distinction less rigid. OpenAI introduced GPT-5 on August 7, 2025 as a unified system that knows when to respond quickly and when to think longer.[8] For developers, OpenAI also described GPT-5 as supporting a reasoning_effort parameter, including a minimal value for faster answers without extensive reasoning first.[9]
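As a rough illustration of how that parameter might be used, the sketch below builds a request body that switches effort based on a flag. It only constructs a dictionary and sends nothing. The helper name `build_request`, the `"gpt-5"` model string, the chat-style `messages` layout, and the `"high"` value are assumptions for this example; the text above only confirms that a `reasoning_effort` parameter exists with a `minimal` value, so check the current API reference for the exact field placement and allowed values.

```python
from typing import Any, Dict


def build_request(prompt: str, needs_deep_reasoning: bool) -> Dict[str, Any]:
    """Build a hypothetical request body; field layout is an assumption."""
    # "minimal" comes from the GPT-5 developer description cited above.
    # "high" is assumed here as the opposite end of the range.
    effort = "high" if needs_deep_reasoning else "minimal"
    return {
        "model": "gpt-5",  # assumed model identifier
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": effort,
    }


quick = build_request("Rewrite this sentence to be more formal.", needs_deep_reasoning=False)
careful = build_request("Find the root cause of this race condition.", needs_deep_reasoning=True)
print(quick["reasoning_effort"], careful["reasoning_effort"])
```

The design idea is that one model family can serve both paths, with the effort setting standing in for the older choice between a GPT model and an o-series model.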

This matters because GPT-5 generation models can behave more like a router than a single old-style chat model. The system can answer simple prompts quickly and spend more effort on harder prompts. That narrows the gap between “GPT for speed” and “o-series for reasoning.” It does not erase the difference for users comparing older GPT models, legacy model pickers, or API designs that still expose separate reasoning models.

If you are comparing specific GPT generations, read GPT-5 vs GPT-4o, GPT-4 vs GPT-5, and GPT-5 vs GPT-5.1. The family-level rule still helps: GPT is the default interface for most work, while o-series models are the deliberate reasoning branch.

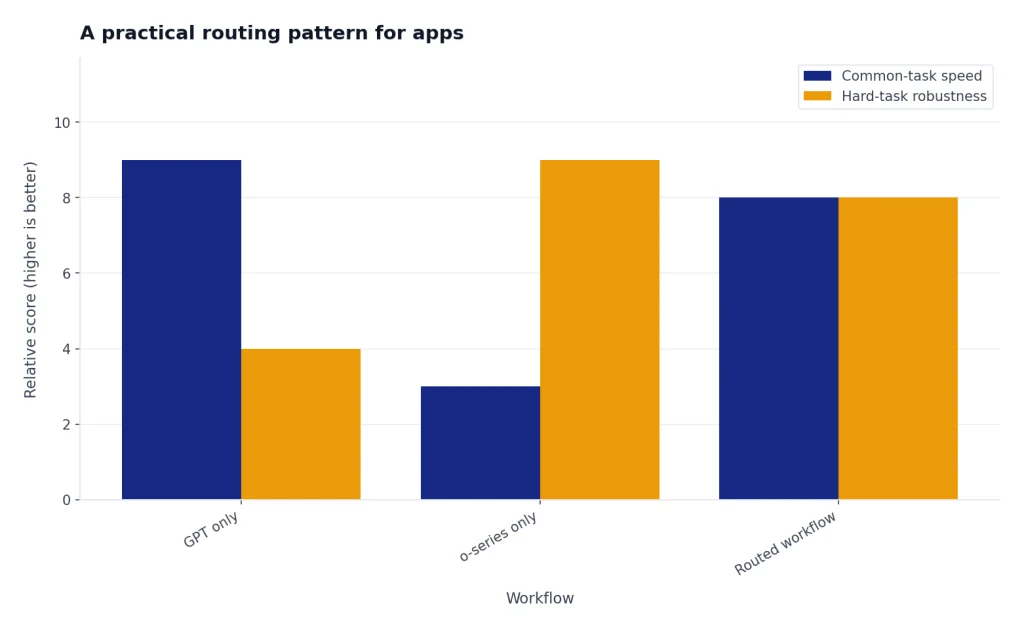

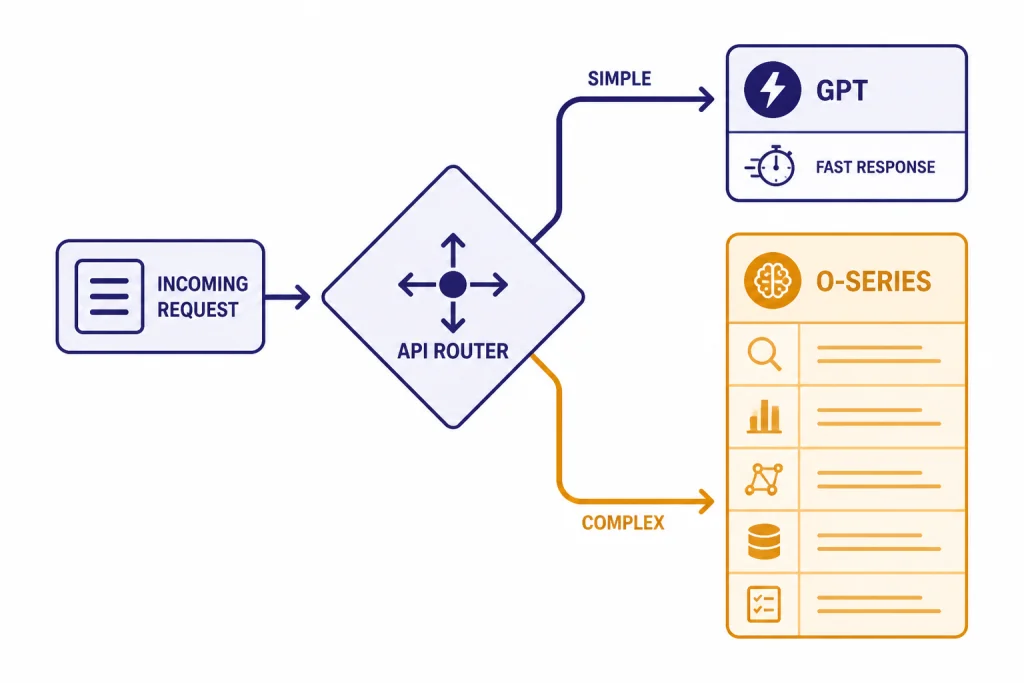

A practical routing pattern for apps

The best production pattern is not to pick one model family forever. Route requests. This keeps common tasks fast while reserving reasoning compute for the requests that need it.

Use GPT as the first pass

Send ordinary requests to a GPT model. This covers summarization, rewriting, extraction, classification, simple coding, and most conversational help. GPT models keep the product responsive and predictable.

Escalate when the prompt shows risk or complexity

Escalate to an o-series model when the request includes words such as “prove,” “debug,” “optimize,” “derive,” “plan,” “compare tradeoffs,” “analyze this chart,” or “find the root cause.” Escalate when the user uploads files, asks for multi-step work, or says the first answer was wrong.
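The escalation rule above can be sketched as a small keyword-and-signal router. This is a minimal illustration, not a production classifier: the `Request` fields and the `choose_family` function are hypothetical names, the returned labels are placeholders you would map to real model IDs, and detecting "multi-step work" is left out because it has no simple textual signal.

```python
import re
from dataclasses import dataclass

# Trigger phrases taken from the escalation rule in the text.
ESCALATION_PHRASES = (
    "prove", "debug", "optimize", "derive", "plan",
    "compare tradeoffs", "analyze this chart", "find the root cause",
)

# Whole-word, case-insensitive match so "plan" does not fire on "airplane".
_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(p) for p in ESCALATION_PHRASES) + r")\b",
    re.IGNORECASE,
)


@dataclass
class Request:
    text: str
    has_files: bool = False
    user_rejected_previous_answer: bool = False


def choose_family(req: Request) -> str:
    """Return 'reasoning' for requests that look risky or complex, else 'gpt'."""
    if req.has_files or req.user_rejected_previous_answer:
        return "reasoning"
    if _PATTERN.search(req.text):
        return "reasoning"
    return "gpt"


print(choose_family(Request("Rewrite this email in a friendlier tone")))
print(choose_family(Request("Please debug this stack trace")))
```

Whole-word matching is deliberately strict, so inflected forms such as "debugging" or "planning" will not trigger escalation unless you add them. In practice you would likely tune the phrase list against logged traffic or replace it with a lightweight classifier.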

Use a two-model workflow for hard jobs

For complex work, use the o-series to plan and a GPT model to execute routine substeps. This matches OpenAI’s own guidance that an app might use o-series models to plan a strategy and GPT models to execute specific tasks when speed and cost are more important.[1] It is also easier to test. You can measure whether escalation improves final accuracy enough to justify the slower path.
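A minimal sketch of that plan-then-execute split is shown below. The two models are passed in as plain callables so the example runs without any API access. `plan_then_execute`, `fake_planner`, and `fake_executor` are hypothetical names, and the prompt wording and the one-step-per-line plan format are assumptions for illustration.

```python
from typing import Callable, List

# Any function that takes a prompt and returns text can stand in for a model.
ModelFn = Callable[[str], str]


def plan_then_execute(task: str, planner: ModelFn, executor: ModelFn) -> List[str]:
    """Ask the planner for steps, then run each step through the executor."""
    plan_text = planner(f"Break this task into short numbered steps:\n{task}")
    # Assumption: the planner returns one step per non-empty line.
    steps = [line.strip() for line in plan_text.splitlines() if line.strip()]
    return [executor(f"Task: {task}\nStep: {step}") for step in steps]


# Stubs standing in for a reasoning model (planner) and a fast model (executor).
def fake_planner(prompt: str) -> str:
    return "1. Parse the log\n2. Summarize the errors"


def fake_executor(prompt: str) -> str:
    return "done: " + prompt.splitlines()[-1]


results = plan_then_execute("Triage last night's failures", fake_planner, fake_executor)
print(results)
```

Because both models sit behind the same callable interface, you can swap the planner for a fast model and compare final accuracy on a fixed test set, which is one way to check whether the slower path earns its cost.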

For cost planning, use OpenAI API pricing. For plan-level access questions, compare ChatGPT Pro vs Team if you are choosing for a group rather than a personal account.

Frequently asked questions

Is GPT better than the o-series?

GPT is better for most everyday work because it is faster and more conversational. The o-series is better for hard reasoning tasks where the model needs to spend more effort before answering. “Better” depends on the job, not the brand name.

Is the o-series only for math and coding?

No. Math and coding are common o-series use cases, but they are not the only ones. Use o-series models for any task with hidden constraints, complex visual inputs, technical analysis, planning, or multi-step decision-making.

Why are o-series responses sometimes slower?

OpenAI describes o-series models as trained to think for longer before responding.[3] That extra reasoning can improve hard answers, but it can also add delay. If speed matters more than careful reasoning, use GPT.

Does GPT-5 replace the o-series idea?

Not completely. GPT-5 introduced a unified system that can answer quickly or think longer depending on the task.[8] That makes the distinction less visible in ChatGPT, but the reasoning-versus-speed tradeoff still matters for model selection, app routing, and legacy comparisons.

Should developers use GPT or o-series by default?

Developers should usually start with GPT for default traffic. Add an o-series path for tasks that fail under fast models or require high-confidence reasoning. This gives users faster responses while preserving a stronger option for harder cases.

Can GPT models do reasoning too?

Yes. GPT models can solve many reasoning tasks, and GPT-5 generation systems include built-in thinking for harder prompts.[8] The point is not that GPT cannot reason. The point is that o-series models were designed around deliberate reasoning as their main advantage.