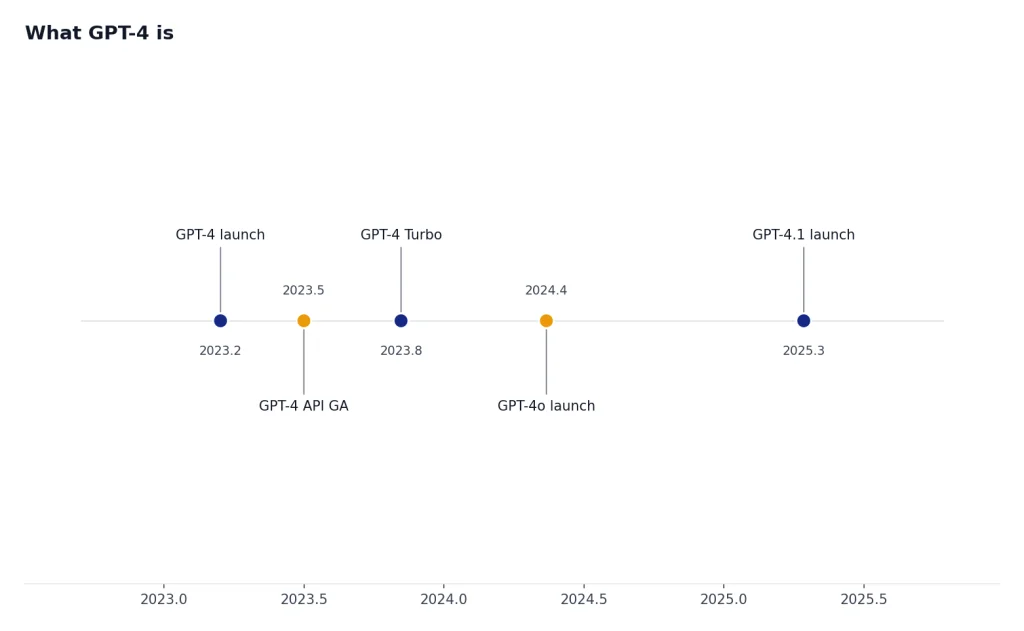

GPT-4 was the model that turned ChatGPT from a useful writing assistant into a serious reasoning, coding, and analysis tool. OpenAI introduced GPT-4 on March 14, 2023, as a large multimodal model that could accept text and image inputs and produce text outputs.[1] As of March 25, 2026, GPT-4 is no longer OpenAI’s newest flagship. OpenAI’s API documentation describes it as an older high-intelligence model with an 8,192-token context window and text-only input and output in the API.[3] It still matters because many workflows, evaluations, prompts, and enterprise habits were built around it.

What GPT-4 is

GPT-4 is a generative pre-trained transformer model from OpenAI. It was introduced as a major step up from GPT-3.5, especially for reasoning, instruction following, longer-form work, and professional benchmark performance.[1] In plain terms, GPT-4 reads a prompt, predicts a useful response, and can follow complex instructions better than the models that made ChatGPT famous in late 2022.

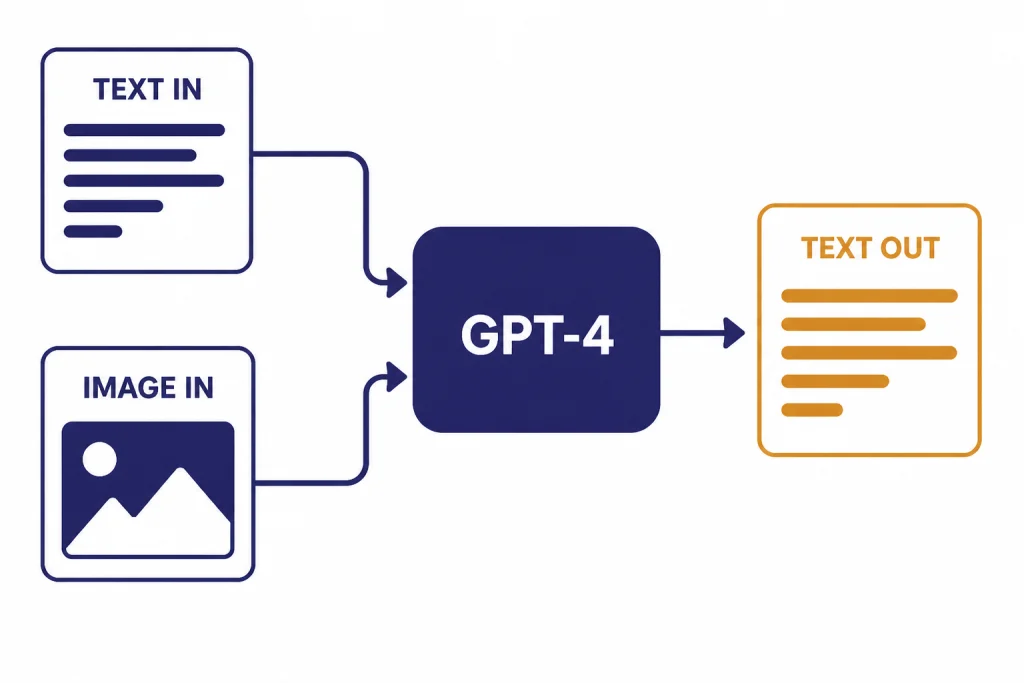

OpenAI originally described GPT-4 as a large multimodal model because the system was designed to accept both text and image inputs and return text outputs.[1] That distinction matters. The original model family included visual understanding, but not every product or API surface exposed those same capabilities. In current OpenAI API documentation, the GPT-4 model entry lists text input and text output, with image, audio, and video not supported for that model entry.[3] For image-focused GPT-4 coverage, see our GPT-4 Vision breakdown.

The word “flagship” needs context. GPT-4 was OpenAI’s flagship model at launch. It is now better understood as the foundation model that shaped later products, including GPT-4 Turbo, GPT-4o, and GPT-4.1. If you are comparing today’s models for a new project, start with our side-by-side comparison of all GPT models rather than assuming GPT-4 is still the best default.

GPT-4 specs and API status

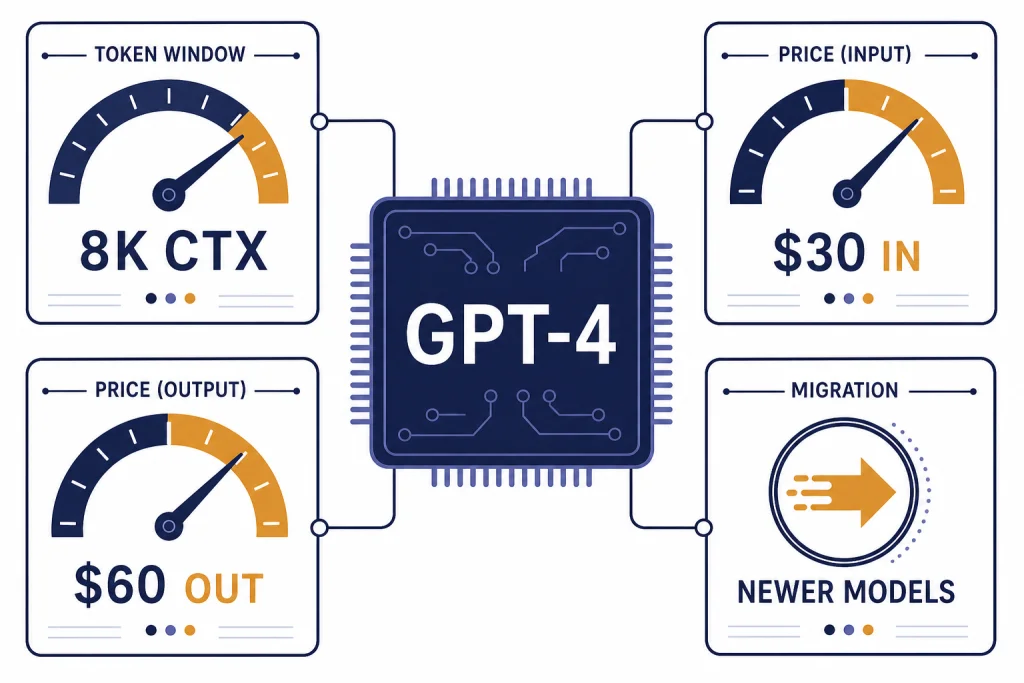

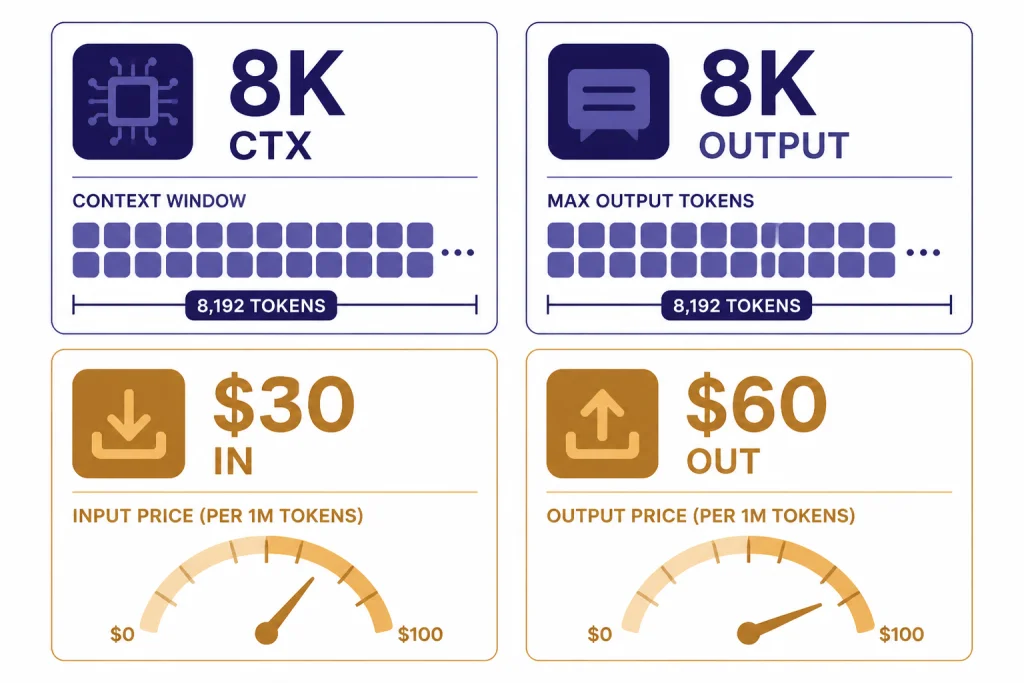

The current GPT-4 API model entry describes GPT-4 as “an older version of a high-intelligence GPT model” that is usable in Chat Completions.[3] Its listed context window is 8,192 tokens, and its listed maximum output is 8,192 tokens.[3] Because the prompt and the output share the same window, a response can only approach that maximum when the prompt is very short. Those numbers are small by 2026 standards, but they were meaningful when GPT-4 arrived.

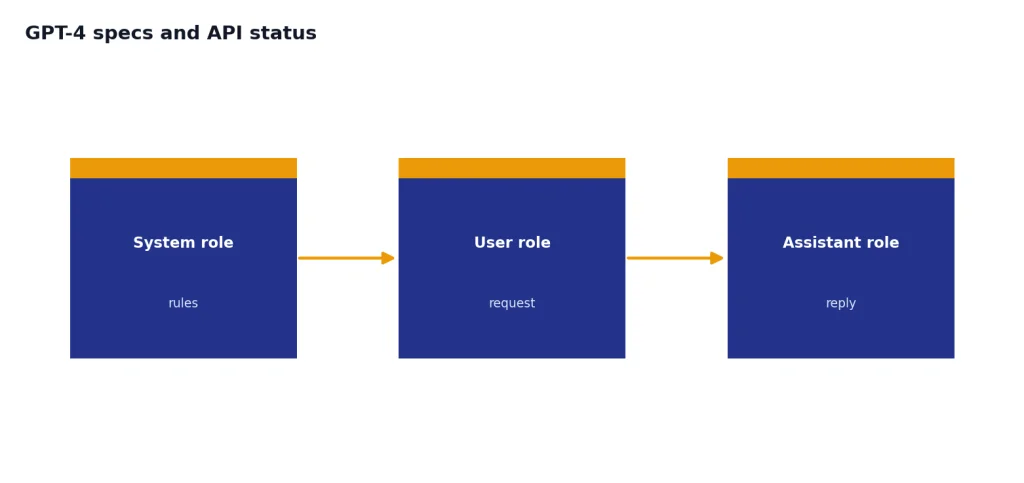

OpenAI made GPT-4 generally available to paying API customers in July 2023, initially emphasizing access to GPT-4 with 8K context.[2] That release also pushed developers toward the Chat Completions API rather than older freeform Completions models.[2] If you are maintaining an older integration, this historical detail explains why many GPT-4 apps are built around message arrays with system, user, and assistant roles.
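
For readers maintaining such an integration, the message-array shape can be sketched as follows. This is a minimal illustration rather than OpenAI's client code: `build_chat_request` and the sample prompts are hypothetical, and the snippet only assembles a request body with `model` and `messages` fields instead of sending it.

```python
import json


def build_chat_request(system_prompt, history, user_message, model="gpt-4"):
    """Assemble a Chat Completions-style body from a system prompt,
    prior (user, assistant) turns, and the newest user message."""
    messages = [{"role": "system", "content": system_prompt}]
    for user_text, assistant_text in history:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    messages.append({"role": "user", "content": user_message})
    return {"model": model, "messages": messages}


# Hypothetical conversation used only to show the structure.
request_body = build_chat_request(
    system_prompt="You are a concise technical editor.",
    history=[("Shorten this sentence.", "Here is a shorter version.")],
    user_message="Now make it more formal.",
)
print(json.dumps(request_body, indent=2))
```

Keeping the history as explicit user and assistant turns is what lets an older GPT-4 app replay a conversation on every request, which is also why long chats eat into the context window quickly.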

| Spec | GPT-4 value | What it means |
|---|---|---|
| Model status | Older high-intelligence model[3] | Still documented, but not the newest default for new builds. |
| Context window | 8,192 tokens[3] | The prompt and the generated output together must fit inside this limit. |
| Maximum output | 8,192 tokens[3] | The model entry lists this as the upper bound for generated text. |
| Knowledge cutoff | December 1, 2023[3] | The model does not know later facts unless your app supplies them. |
| API pricing | $30 input and $60 output per 1M tokens[3] | Expensive compared with later GPT-4-family models. |
| Modalities in current model docs | Text input and text output; image, audio, and video not supported[3] | Use a newer multimodal model for image or audio workflows. |

For context planning across models, use our guide to context window sizes for every GPT model. GPT-4’s 8,192-token window can handle many chats and short documents, but it is not a long-context model by 2026 standards.
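
As a rough planning aid, the budget arithmetic for an 8,192-token window can be sketched like this. The four-characters-per-token ratio and the `reserved_for_overhead` allowance are assumptions for illustration only; a real tokenizer will produce different counts, so treat the result as an estimate.

```python
CONTEXT_WINDOW = 8192  # GPT-4 context window as listed in this article


def estimate_tokens(text, chars_per_token=4):
    """Very rough token estimate (assumption: about 4 characters per token)."""
    return max(1, -(-len(text) // chars_per_token))  # ceiling division


def output_budget(prompt, reserved_for_overhead=50):
    """Estimated tokens left for the reply once the prompt is counted.

    reserved_for_overhead is a hypothetical safety margin for message
    formatting; tune it against real usage numbers.
    """
    used = estimate_tokens(prompt) + reserved_for_overhead
    return max(0, CONTEXT_WINDOW - used)


prompt = "Summarize the following meeting notes. " * 100
remaining = output_budget(prompt)
print(f"Estimated prompt tokens: {estimate_tokens(prompt)}")
print(f"Estimated room for output: {remaining}")
```

If the estimated room for output drops near zero, the prompt needs trimming or chunking before it is sent.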

What GPT-4 does well

GPT-4’s signature strength is reliable work on tasks that need several constraints to be honored at once. It is useful for drafting structured documents, rewriting with specific rules, explaining code, checking logic, summarizing moderate-length material, and turning rough notes into coherent plans. OpenAI’s ChatGPT release notes highlighted advanced reasoning, complex instructions, and more creativity when GPT-4 arrived in ChatGPT for Plus subscribers on March 14, 2023.[9]

GPT-4 also became a reference point because it performed strongly on professional and academic evaluations. OpenAI reported that GPT-4 passed a simulated bar exam with a score around the top 10% of test takers, while GPT-3.5 was around the bottom 10%.[1] That single benchmark should not be treated as a universal intelligence score, but it did signal a large jump in reasoning and test performance.

For writing, GPT-4 remains capable when the task benefits from judgment and tone control. It can maintain a voice over a long answer, follow editorial constraints, and restructure messy input. For coding, it can explain unfamiliar code, draft functions, identify likely bugs, and help with refactors. If your main question is model selection rather than history, our separate guides to the best GPT model for writing and the best GPT model for coding are more practical.

GPT-4 is less compelling for high-volume classification, extraction, short customer support replies, or simple transformations. Those workloads usually reward speed and cost more than maximum reasoning depth. In those cases, compare it against newer and cheaper options in our cheapest GPT model guide.

GPT-4 family comparison

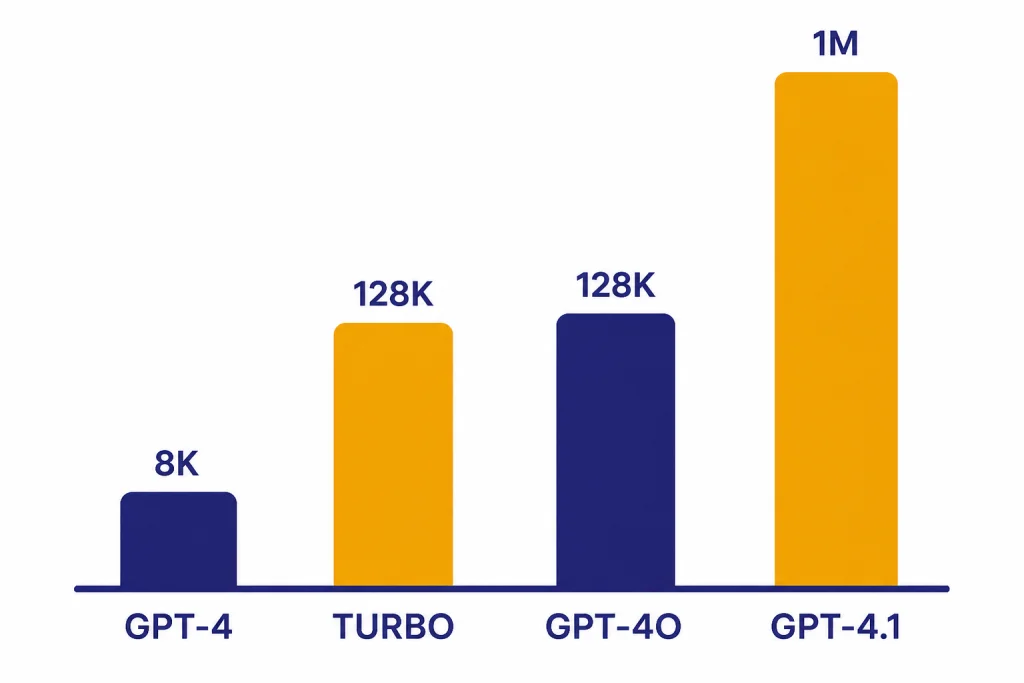

The phrase “GPT-4” can mean the original GPT-4 model, the wider GPT-4 family, or GPT-4-level performance in a later model. That ambiguity can lead to poor model choices. The original GPT-4 API model has an 8,192-token context window and is priced at $30 input and $60 output per 1M tokens.[3] GPT-4 Turbo expanded the family with a 128K context window and lower prices.[4] GPT-4o later became OpenAI’s flagship, offering GPT-4-level intelligence with faster multimodal capability.[6] GPT-4.1 then pushed the non-reasoning GPT line toward a 1,047,576-token context window in the API.[8]

| Model | Best short description | Context window | Max output | Text token price | Best fit |
|---|---|---|---|---|---|
| GPT-4 | Original high-intelligence GPT-4 model[3] | 8,192 tokens[3] | 8,192 tokens[3] | $30 input / $60 output per 1M tokens[3] | Legacy apps, regression comparisons, older prompt libraries. |
| GPT-4 Turbo | Cheaper, larger-context GPT-4 successor[4] | 128,000 tokens[5] | 4,096 tokens[5] | $10 input / $30 output per 1M tokens[5] | Older long-context apps that were built before GPT-4o and GPT-4.1. |
| GPT-4o | Versatile high-intelligence flagship model[7] | 128,000 tokens[7] | 16,384 tokens[7] | $2.50 input / $10 output per 1M tokens[7] | General multimodal work, everyday API use, and lower-cost GPT-4-level tasks. |
| GPT-4.1 | Smartest non-reasoning model in the GPT-4.1 line[8] | 1,047,576 tokens[8] | 32,768 tokens[8] | $2 input / $8 output per 1M tokens[8] | Instruction following, coding, tool use, and large-document work. |

This table explains why “use GPT-4” is usually not precise enough. If you want the historical model, choose GPT-4. If you want a newer general model with GPT-4-level intelligence, GPT-4o is usually the better reference point. If you need a very large context window without a reasoning step, GPT-4.1 is usually the more relevant comparison. For raw performance rankings, see our most powerful GPT model benchmark page.

Pricing and cost

GPT-4 is expensive for API use by current GPT-family standards. OpenAI’s model page lists GPT-4 at $30 per 1M input tokens and $60 per 1M output tokens.[3] OpenAI’s pricing page also lists legacy GPT-4 snapshots at $30 input and $60 output per 1M tokens, with GPT-4-32k at $60 input and $120 output per 1M tokens.[10]

That cost profile changes the recommendation. For a small internal tool with low usage, keeping GPT-4 may be harmless if it is already stable. For a production product that sends millions of tokens, GPT-4 can be hard to justify unless you need exact compatibility with older outputs. GPT-4o is listed at $2.50 input and $10 output per 1M tokens, while GPT-4.1 is listed at $2 input and $8 output per 1M tokens.[7][8]
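
The gap is easier to see with numbers. The sketch below applies the listed per-1M-token prices quoted in this article to a hypothetical monthly workload. The token volumes and the `estimate_cost` helper are illustrative assumptions, and the prices should be rechecked against the current pricing page before budgeting.

```python
# Listed prices in USD per 1M tokens as quoted in this article: (input, output).
PRICES_PER_MILLION = {
    "gpt-4": (30.00, 60.00),
    "gpt-4-turbo": (10.00, 30.00),
    "gpt-4o": (2.50, 10.00),
    "gpt-4.1": (2.00, 8.00),
}


def estimate_cost(model, input_tokens, output_tokens):
    """Return the estimated USD cost for the given token volumes."""
    input_price, output_price = PRICES_PER_MILLION[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload: 50M input tokens and 10M output tokens per month.
monthly_input, monthly_output = 50_000_000, 10_000_000
for model in PRICES_PER_MILLION:
    cost = estimate_cost(model, monthly_input, monthly_output)
    print(f"{model}: ${cost:,.2f} per month")
```

Under these assumed volumes, the original GPT-4 comes out roughly an order of magnitude more expensive than GPT-4o or GPT-4.1, which is the practical reason the recommendation shifts for production traffic.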

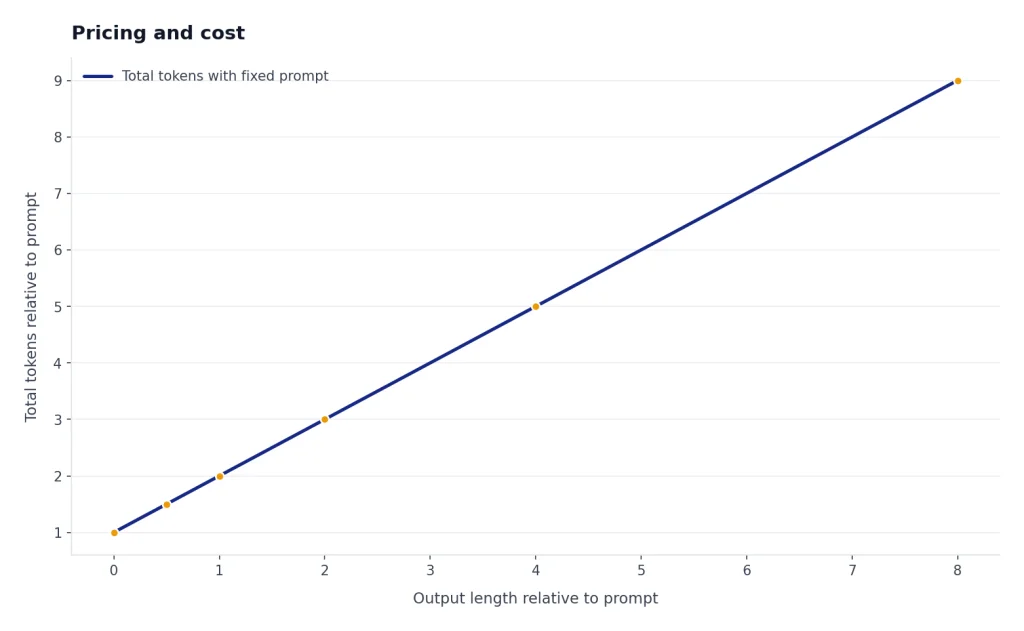

Cost also depends on output length. A model that writes long answers can become expensive even if the prompt is short. GPT-4’s high output price makes it a poor default for verbose customer support, content expansion, batch summarization, and extraction workflows. For a broader cost view, use our OpenAI API pricing reference.

When to use GPT-4 in 2026

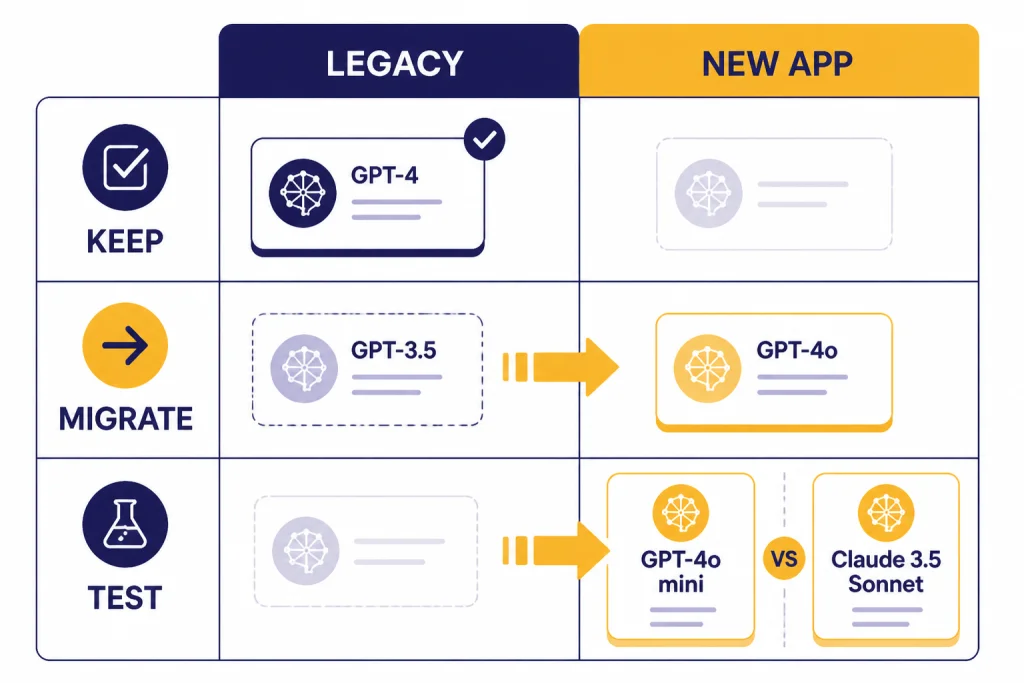

Use GPT-4 in 2026 when you have a specific reason to preserve GPT-4 behavior. The best reason is compatibility. If an application was evaluated, approved, or regulated around GPT-4 outputs, switching models may require a new validation cycle. That is especially true for internal knowledge tools, legal review aids, education products, or workflows with human sign-off.

GPT-4 is also useful as a baseline. Teams often keep it in an evaluation suite to measure how much better, faster, or cheaper a newer model is. This is a good practice. Without a stable baseline, it is easy to mistake a different writing style for a real improvement.

Do not use GPT-4 just because the name is familiar. If you need image input, GPT-4’s current API model entry lists image input as not supported.[3] If you need lower latency, compare it with the fastest GPT model options. If you need large-document processing, GPT-4.1’s 1,047,576-token context window is the more relevant specification.[8]

A simple rule works well: keep GPT-4 for legacy stability, not for new default builds. For new general-purpose apps, test GPT-4o, GPT-4.1, and the current GPT-5-family options before deciding. If your app needs images, also compare dedicated image-generation and vision-capable paths, including our guide to the best GPT model for image generation.

Limitations

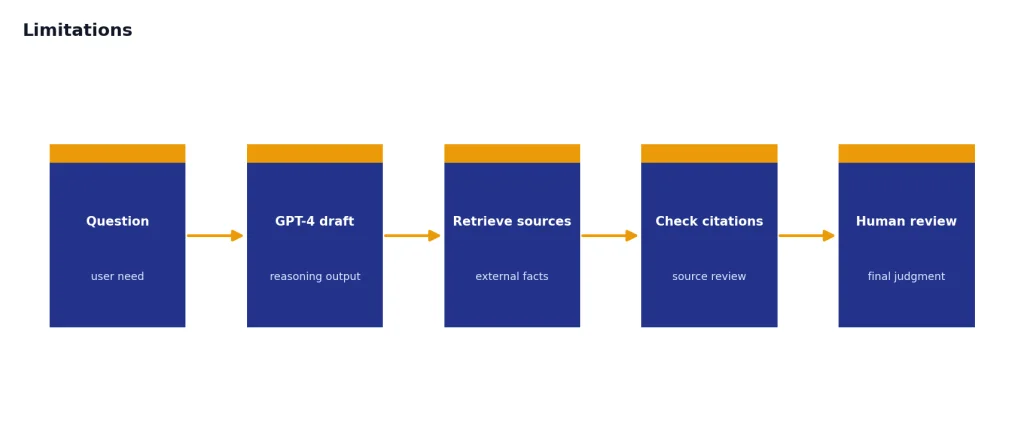

GPT-4 can still make mistakes. OpenAI’s original GPT-4 report warned that the model was not fully reliable and could hallucinate facts.[1] That limitation has practical consequences. Treat GPT-4 as a reasoning and drafting system, not as an authority. For factual work, pair it with retrieval, citations, source review, or human verification.

The context window is another constraint. GPT-4’s listed 8,192-token context window is much smaller than GPT-4 Turbo’s 128,000-token window, GPT-4o’s 128,000-token window, and GPT-4.1’s 1,047,576-token window.[3][5][7][8] This affects legal documents, long transcripts, code repositories, and research packets. Chunking can help, but chunking also adds complexity and can hide cross-document relationships.
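
To make the chunking trade-off concrete, here is a minimal word-based chunker with overlap. It assumes word counts are an acceptable stand-in for tokens, which is only approximately true, and the names `chunk_words`, `max_words`, and `overlap` are illustrative rather than taken from any library.

```python
def chunk_words(text, max_words=1500, overlap=100):
    """Split text into word-based chunks that share `overlap` words
    with the previous chunk, so context at the boundaries is not lost."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    chunks = []
    step = max_words - overlap
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


# Small demonstration with tiny limits so the overlap is visible.
sample = " ".join(str(i) for i in range(10))
for piece in chunk_words(sample, max_words=4, overlap=1):
    print(piece)
```

The overlap softens boundary effects inside one document, but it does nothing for relationships between separate documents, which is the hidden cost mentioned above.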

GPT-4’s current model entry also lacks support for several newer API features. It lists function calling and structured outputs as not supported for GPT-4, while GPT-4o and GPT-4.1 list structured outputs as supported.[3][7][8] If your application depends on reliable JSON, tool calling, or multimodal inputs, GPT-4 is usually not the best fit.
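
Where a legacy integration has to stay on a model without structured outputs, one common workaround is to validate the reply in application code. The sketch below assumes the prompt asks for a single JSON object and treats anything else as a failure; `parse_json_reply` and the sample replies are hypothetical.

```python
import json


def parse_json_reply(reply_text, required_keys):
    """Return the parsed object, or None if the reply is not a JSON
    object containing every required key."""
    try:
        data = json.loads(reply_text)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    if not all(key in data for key in required_keys):
        return None
    return data


# Hypothetical replies: one clean, one wrapped in chatty text.
good = parse_json_reply('{"name": "Ada", "age": 36}', ["name", "age"])
bad = parse_json_reply('Sure! Here it is: {"name": "Ada"}', ["name", "age"])
print(good)
print(bad)
```

A `None` result is a signal to retry, repair, or escalate. This check catches malformed replies after the fact; it does not make the model more likely to produce valid JSON in the first place.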

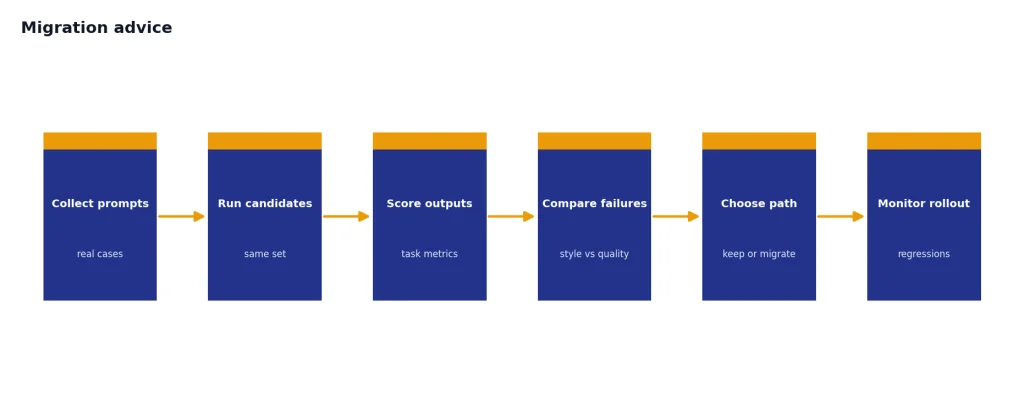

Migration advice

The safest migration path is evaluation first, replacement second. Start by collecting real prompts from your GPT-4 workflow. Include short prompts, long prompts, edge cases, adversarial user inputs, and examples where GPT-4 currently performs well. Then run the same set through GPT-4, GPT-4o, GPT-4.1, and any model you are considering from the newer families.
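
A side-by-side run like this can be organized with a very small harness. The sketch below is an assumption about how such a harness might look, not a prescribed tool: `run_suite` is hypothetical, and the stub callables stand in for real model calls so the example runs without network access.

```python
def run_suite(prompts, models):
    """Run every prompt through every model and collect outputs by label.

    `models` maps a label to any callable that takes a prompt string and
    returns a reply string, so real API clients or stubs can be plugged in.
    """
    return {
        name: [generate(prompt) for prompt in prompts]
        for name, generate in models.items()
    }


# Stub callables replace real model calls (assumption for illustration).
stub_models = {
    "gpt-4": lambda prompt: f"[gpt-4] {prompt.upper()}",
    "candidate": lambda prompt: f"[candidate] {prompt.lower()}",
}
prompts = ["Short prompt", "Edge case: empty input?"]

results = run_suite(prompts, stub_models)
for name, outputs in results.items():
    for prompt, output in zip(prompts, outputs):
        print(f"{name} | {prompt!r} -> {output!r}")
```

Passing models in as plain callables keeps the prompt set fixed while the model behind each label changes, which is the property a migration comparison depends on.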

Score outputs against your actual needs. For a writing workflow, judge tone, factuality, structure, and edit distance from the desired output. For a coding workflow, run tests. For extraction, measure field-level accuracy. For support, measure resolution quality and escalation risk. Do not rely only on a general benchmark score.
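
For the extraction case, field-level accuracy can be computed along these lines. This is one simple definition (exact match per expected field, averaged over examples), offered as an assumption; the function names and sample records are hypothetical, and a real project may need fuzzy matching or per-field weights.

```python
def field_accuracy(expected, predicted):
    """Fraction of expected fields whose predicted value matches exactly."""
    if not expected:
        return 0.0
    matches = sum(1 for key, value in expected.items() if predicted.get(key) == value)
    return matches / len(expected)


def mean_field_accuracy(pairs):
    """Average field accuracy over (expected, predicted) example pairs."""
    if not pairs:
        return 0.0
    return sum(field_accuracy(exp, pred) for exp, pred in pairs) / len(pairs)


# Hypothetical labeled examples and model outputs.
examples = [
    ({"invoice_id": "A-17", "total": "120.00"}, {"invoice_id": "A-17", "total": "120.00"}),
    ({"invoice_id": "B-02", "total": "75.50"}, {"invoice_id": "B-02", "total": "57.50"}),
]
print(f"Mean field accuracy: {mean_field_accuracy(examples):.2f}")
```

Computing the same score for GPT-4 and each candidate on identical examples gives a direct, task-specific comparison instead of a general benchmark number.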

| If your GPT-4 app needs… | Test first | Why |
|---|---|---|
| Lower API cost | GPT-4o or GPT-4.1 | Both list much lower per-token prices than GPT-4.[7][8] |
| Image input | GPT-4o or GPT-4.1 | Both list image input, while current GPT-4 docs do not.[3][7][8] |
| Very long documents | GPT-4.1 | Its model entry lists a 1,047,576-token context window.[8] |
| Exact old behavior | GPT-4 | Use snapshots and regression tests when compatibility matters. |
| General ChatGPT-style quality | GPT-4o | OpenAI describes GPT-4o as a versatile high-intelligence flagship model.[7] |

Keep one final rule in mind. A model migration is not only a model swap. You may need to adjust prompts, output schemas, safety filters, token budgets, and evaluation thresholds. The newer model may be better, but it may not fail in the same way. That is why measured migration beats blind replacement.

Frequently asked questions

Is GPT-4 still available?

OpenAI’s current API documentation still lists GPT-4 as an older high-intelligence model usable in Chat Completions.[3] Availability can vary by account, product surface, and API access. For new projects, compare it against newer models before committing.

Is GPT-4 the same as GPT-4o?

No. GPT-4 is the older model introduced in 2023, while GPT-4o was introduced in 2024 as a newer flagship model with GPT-4-level intelligence and faster multimodal capability.[1][6] The API specs also differ in context window, price, features, and modalities.[3][7]

What is GPT-4’s context window?

The current GPT-4 API model entry lists an 8,192-token context window.[3] That is much smaller than GPT-4 Turbo and GPT-4o at 128,000 tokens, and far smaller than GPT-4.1 at 1,047,576 tokens.[5][7][8]

How much does GPT-4 cost in the API?

OpenAI’s GPT-4 model page lists GPT-4 text pricing at $30 per 1M input tokens and $60 per 1M output tokens.[3] OpenAI’s pricing page also lists legacy GPT-4 snapshots at the same $30 and $60 rates.[10]

Does GPT-4 support images?

OpenAI originally described GPT-4 as multimodal because it could accept image and text inputs and produce text outputs.[1] The current GPT-4 API model entry, however, lists image input as not supported for that model.[3] Use a current vision-capable model if image input is required.

Should I use GPT-4 for a new app?

Usually no, unless you need legacy GPT-4 behavior. GPT-4o and GPT-4.1 offer larger context windows and lower listed token prices than GPT-4.[7][8] Test with your own prompts before migrating production traffic.