GPT-3.5 is the OpenAI model family that made ChatGPT mainstream. In 2026, it is no longer the model most people should choose for new work, but it still matters because many older apps, prompts, tests, fine-tuned workflows, and cost comparisons were built around GPT-3.5 Turbo. The current OpenAI API listing describes GPT-3.5 Turbo as a legacy model for cheaper chat and non-chat tasks, with text-only input and output, a 16,385-token context window, and a 4,096-token maximum output.[1] The short version: learn GPT-3.5 to understand the modern ChatGPT era, maintain older systems, and know when to migrate.

What GPT-3.5 is in 2026

GPT-3.5 is best understood as the bridge between the older GPT-3 API era and the modern ChatGPT product era. It made conversational prompting practical for a mass audience, and GPT-3.5 Turbo later gave developers a cheaper chat model they could put into products through the API. OpenAI’s current model listing still describes GPT-3.5 Turbo as a legacy GPT model for cheaper chat and non-chat tasks, not as a frontier model.[1]

The name also causes confusion. People often say “GPT-3.5” when they mean the original ChatGPT experience, the GPT-3.5 Turbo API model, or an older snapshot such as gpt-3.5-turbo-0613. Those are related, but they are not always identical. If you are comparing old prompts, old logs, or older app behavior, the exact snapshot matters.
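
One way to keep that distinction visible in logs and test reports is to label each model string before recording it. The sketch below is a minimal illustration, not an OpenAI utility: the function name `classify_gpt35_name` and the labels `alias`, `snapshot`, and `instruct` are assumptions made for this example, and the pattern only covers four-digit dated snapshots.

```python
import re

# Hypothetical helper: the labels below are this sketch's own categories,
# not OpenAI terminology.
_SNAPSHOT = re.compile(r"^gpt-3\.5-turbo-(\d{4})$")


def classify_gpt35_name(model: str) -> str:
    """Label a model string so logs and tests can record exactly what ran."""
    name = model.strip().lower()
    if name == "gpt-3.5-turbo":
        return "alias"      # undated name; may not identify one fixed snapshot
    if name.startswith("gpt-3.5-turbo-instruct"):
        return "instruct"   # legacy Completions endpoint model
    if _SNAPSHOT.match(name):
        return "snapshot"   # dated snapshot such as gpt-3.5-turbo-0613
    return "other"          # anything this sketch does not recognize
```

Storing the label next to each logged output makes it easier to tell later whether two runs are actually comparable.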

For readers comparing model families, start with all GPT models compared side by side. For context length across OpenAI models, keep context window sizes for every GPT model open next to this article.

GPT-3.5 specs and model variants

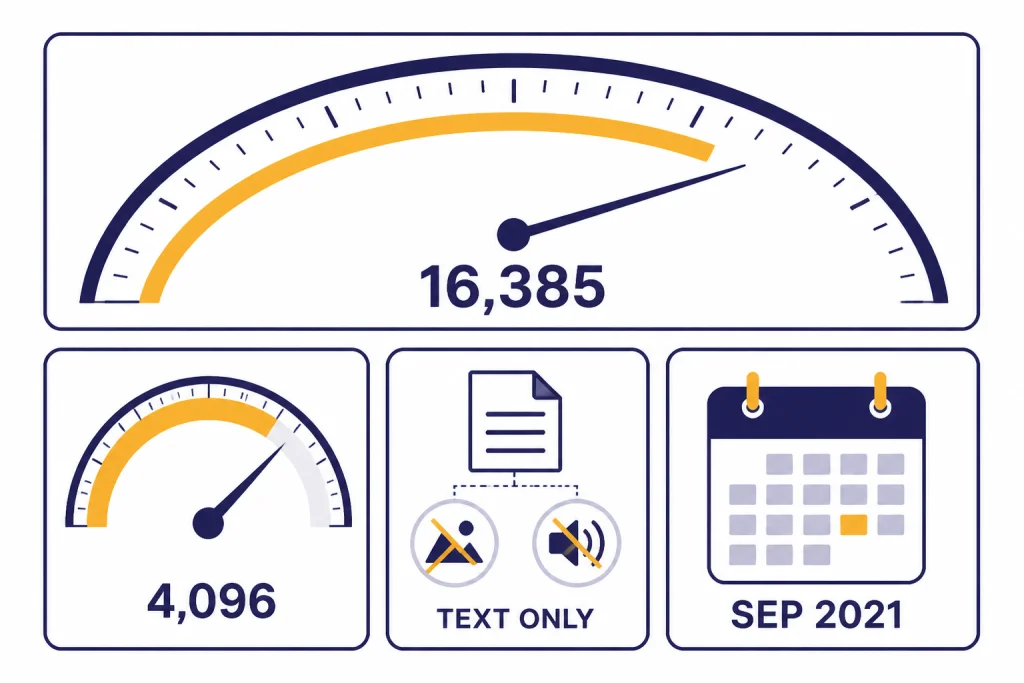

The most important GPT-3.5 model in 2026 is gpt-3.5-turbo. OpenAI lists it with a 16,385-token context window, a 4,096-token maximum output, a September 1, 2021 knowledge cutoff, text input and output, and no support for image, audio, or video input.[1] The same listing says GPT-3.5 Turbo is still available in the API, while recommending gpt-4o-mini instead for most former GPT-3.5 Turbo use cases.[1]

OpenAI’s pricing page lists gpt-3.5-turbo-0125 at $0.50 per 1 million input tokens and $1.50 per 1 million output tokens.[2] The GPT-3.5 Turbo model page shows the same $0.50 input and $1.50 output rates, which is useful when checking invoices or cost regressions in older systems.[1] For a broader cost view, see OpenAI API pricing and our cheapest GPT model comparison.

| Model or variant | Best description | Context window | Max output | Notes for 2026 |
|---|---|---|---|---|
| gpt-3.5-turbo | Legacy chat and non-chat model | 16,385 tokens | 4,096 tokens | Still listed as available in the API; OpenAI recommends gpt-4o-mini instead for former GPT-3.5 Turbo use cases.[1] |
| gpt-3.5-turbo-0125 | Current named GPT-3.5 Turbo snapshot in pricing references | 16,385 tokens | 4,096 tokens | Listed at $0.50 per 1 million input tokens and $1.50 per 1 million output tokens.[2] |
| gpt-3.5-turbo-instruct | Legacy Completions endpoint model | 4,096 tokens | 4,096 tokens | OpenAI describes it as an older model compatible with the legacy Completions endpoint, not Chat Completions.[10] |
| gpt-3.5-turbo-0613 | Older snapshot with function calling support | Older snapshot | Snapshot-specific | OpenAI announced function calling for this snapshot on June 13, 2023.[4] |

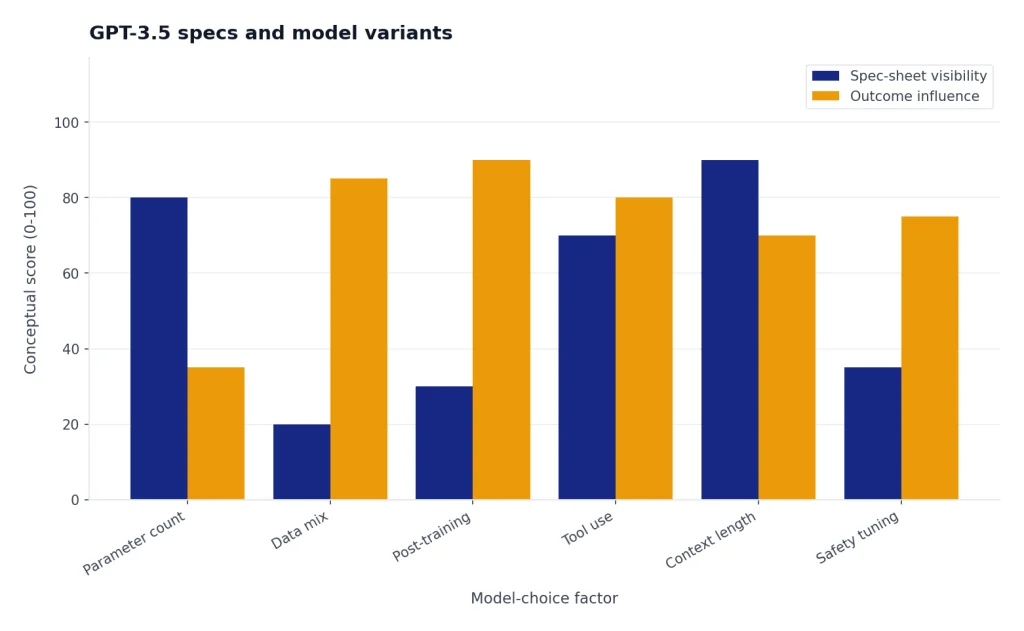

OpenAI has not published an official figure for GPT-3.5’s parameter count. Treat any claimed GPT-3.5 parameter number as an estimate unless it comes from OpenAI directly. A parameter count would not tell you much on its own anyway: data mix, post-training, tool use, latency, tokenizer behavior, context length, and safety tuning all affect what users actually experience.

What GPT-3.5 can still do well

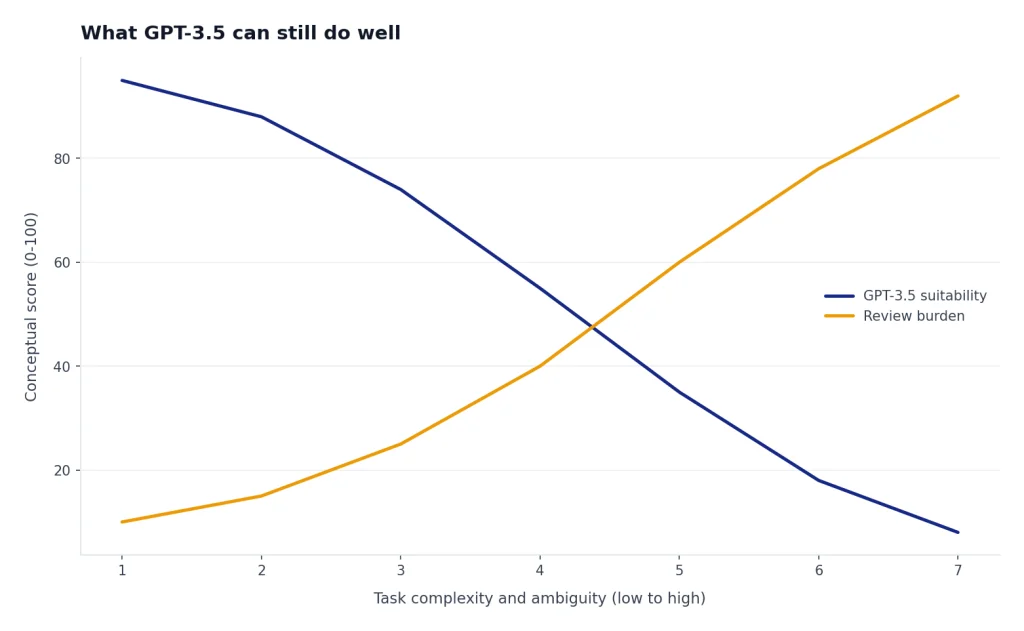

GPT-3.5 remains useful as a reference point and as a maintenance model. It can draft short text, classify simple inputs, summarize compact passages, transform data into a requested format, answer straightforward questions, and produce simple code examples. OpenAI’s model page says GPT-3.5 Turbo can understand and generate natural language or code, and that it was optimized for chat while also working for non-chat tasks.[1]

Its strongest 2026 use cases are narrow and predictable. Think of support-macro rewriting, lightweight categorization, old prompt regression tests, low-risk form letter generation, and compatibility checks for products that still have GPT-3.5-era assumptions. It is not the best default for high-stakes reasoning, long-context analysis, multimodal work, or new consumer-facing assistants.

- Short rewriting tasks: tone changes, subject lines, brief summaries, and template cleanup.

- Simple classification: routing tickets, tagging messages, and grouping short records.

- Legacy prompt testing: comparing old outputs against newer models before migration.

- Basic code help: small snippets, comments, and explanations when correctness can be reviewed.

- Fine-tuned legacy behavior: keeping a known response style when the surrounding product has not moved yet.

If your main task is prose, compare it with the models in best GPT model for writing. If your main task is code, use best GPT model for coding before choosing an older model for a new project.

Where GPT-3.5 falls short

GPT-3.5 is text-only in the OpenAI API listing. The model page says image, audio, and video are not supported for GPT-3.5 Turbo.[1] That alone makes it a poor fit for workflows that involve screenshots, charts, photos, voice, video, or mixed media. For those tasks, compare newer multimodal models and specialized systems rather than trying to force GPT-3.5 into the job.

Its context window is another limit. The current GPT-3.5 Turbo listing shows 16,385 tokens of context and 4,096 output tokens.[1] That is enough for many emails, support tickets, and short documents, but it is small compared with later OpenAI models built for large files, long conversations, and codebase-scale context. When context length is your main constraint, use this guide to context window sizes for every GPT model.
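
To make those limits concrete, here is a minimal budgeting sketch. It assumes the context window is shared between the prompt and the completion, and that the prompt's token count comes from a real tokenizer elsewhere. The function name `output_budget` is hypothetical, and the constants are simply the figures quoted in this article.

```python
# Limits as quoted in this article for gpt-3.5-turbo; re-check the model
# page before relying on them.
CONTEXT_WINDOW = 16_385
MAX_OUTPUT = 4_096


def output_budget(prompt_tokens: int, requested_output: int) -> int:
    """Return how many output tokens can be requested for this prompt.

    Assumes prompt and completion share one context window and that
    prompt_tokens was counted with a real tokenizer.
    """
    if prompt_tokens < 0 or requested_output < 0:
        raise ValueError("token counts must be non-negative")
    room = CONTEXT_WINDOW - prompt_tokens  # space left after the prompt
    return max(0, min(requested_output, MAX_OUTPUT, room))
```

Under these assumptions, a 14,000-token prompt leaves room for at most 2,385 output tokens, which is well below the 4,096-token cap.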

GPT-3.5 also carries older knowledge. OpenAI lists September 1, 2021 as the GPT-3.5 Turbo knowledge cutoff.[1] That does not mean every answer about events before that date is correct, and it does not mean the model knows current facts. If you need fresh information, you need retrieval, browsing, a search tool, or a newer workflow that can cite live sources.

The biggest practical shortcoming is reliability on complex tasks. GPT-3.5 can sound confident while missing edge cases, inventing details, or misreading constraints. Use it only where review is cheap, mistakes are low impact, and the output can be validated with rules, tests, or human oversight.
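
As an illustration of validating output with rules, the sketch below checks a ticket-routing reply before anything downstream trusts it. Everything in it is an assumption made for the example: the label set, the expected `{"label": ...}` reply shape, and the function name `validate_ticket_label`.

```python
import json

# Hypothetical label set for a ticket-routing prompt.
ALLOWED_LABELS = {"billing", "technical", "account", "other"}


def validate_ticket_label(raw_output: str) -> tuple[bool, str]:
    """Check that a model reply is JSON shaped like {"label": "billing"}.

    Returns (ok, detail): the label when ok is True, otherwise a short reason.
    """
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return False, "not valid JSON"
    if not isinstance(data, dict) or set(data) != {"label"}:
        return False, "expected exactly one key: label"
    label = data["label"]
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        return False, "label not in allowed set"
    return True, label
```

A reply that fails the check can be retried, sent to a human, or routed to a default queue. That keeps mistakes cheap, which is the condition under which GPT-3.5 is still reasonable to use.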

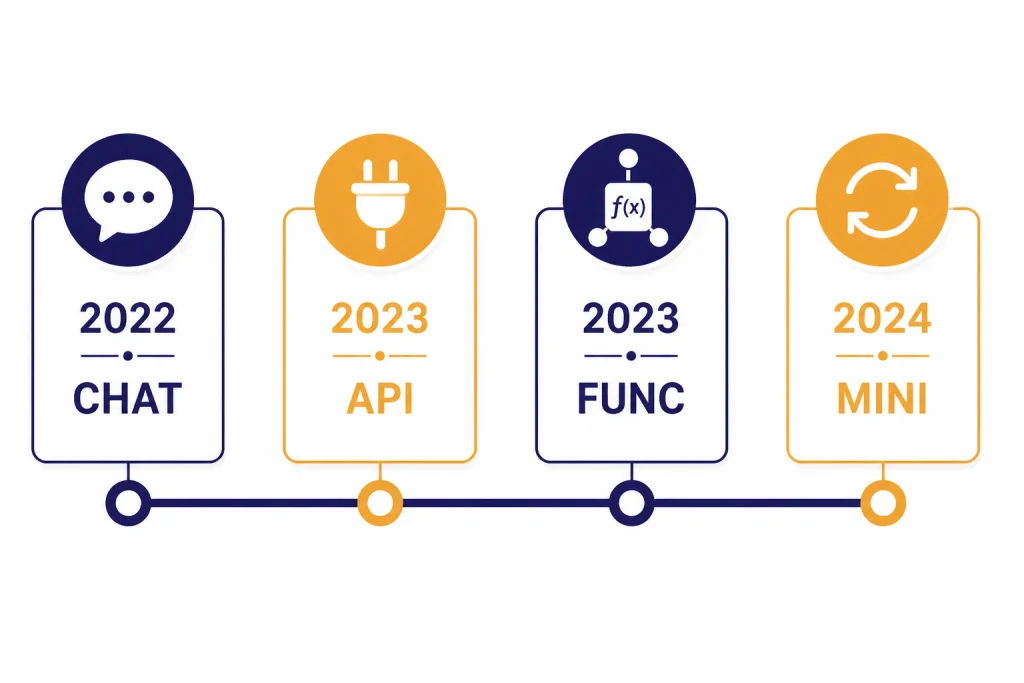

A short GPT-3.5 timeline

GPT-3.5 matters because it sits at the start of the modern ChatGPT adoption curve. OpenAI released the GPT-3.5 Turbo API alongside the Whisper API on March 1, 2023, and its announcement said developers could integrate GPT-3.5 Turbo and Whisper models into apps and products through the API.[3] That release helped move ChatGPT-style behavior from a website into software products.

OpenAI’s June 13, 2023 API update added function calling to gpt-3.5-turbo-0613, along with more reliable steerability through the system message.[4] That was a key moment for app developers because it made structured tool calls and JSON-style arguments more practical, even though modern tool calling has moved on since then.

Fine-tuning came later. OpenAI announced GPT-3.5 Turbo fine-tuning in August 2023 and said developers and businesses had asked for ways to customize the model for differentiated experiences.[7] Fine-tuning did not turn GPT-3.5 into a frontier model, but it made the model more useful for style control, narrow formats, and product-specific behavior.

The replacement phase became clear in 2024. OpenAI introduced GPT-4o mini on July 18, 2024 and said it would be available to ChatGPT Free, Plus, and Team users in place of GPT-3.5.[5] OpenAI also said GPT-4o mini was more than 60% cheaper than GPT-3.5 Turbo, with 15 cents per 1 million input tokens and 60 cents per 1 million output tokens.[5]

| Date | Event | Why it mattered |
|---|---|---|
| November 30, 2022 | ChatGPT reached the public as a GPT-3.5-era chatbot, according to contemporary reporting.[9] | It introduced a broad audience to conversational AI. |
| March 1, 2023 | OpenAI announced APIs for GPT-3.5 Turbo and Whisper.[3] | Developers could build GPT-3.5-style chat into products. |
| June 13, 2023 | OpenAI announced function calling for gpt-3.5-turbo-0613.[4] | GPT-3.5 became more practical for tool-connected applications. |
| August 2023 | OpenAI announced fine-tuning for GPT-3.5 Turbo.[7] | Teams could customize behavior for narrower use cases. |
| July 18, 2024 | OpenAI introduced GPT-4o mini and placed it in ChatGPT instead of GPT-3.5 for Free, Plus, and Team users.[5] | GPT-3.5 moved from default experience to legacy reference point. |

GPT-3.5 vs newer OpenAI models

The clearest comparison is with GPT-4o mini, because OpenAI explicitly recommends using gpt-4o-mini where developers previously used GPT-3.5 Turbo.[1] OpenAI’s GPT-4o mini announcement says that model supports text and vision in the API, has a 128K-token context window, supports up to 16K output tokens per request, and had knowledge up to October 2023 at launch.[5] Those are direct advantages over GPT-3.5 Turbo’s text-only interface, 16,385-token context window, 4,096-token maximum output, and September 1, 2021 knowledge cutoff.[1]

Cost is no longer GPT-3.5’s winning argument. OpenAI listed GPT-4o mini at 15 cents per 1 million input tokens and 60 cents per 1 million output tokens at launch, and described it as more than 60% cheaper than GPT-3.5 Turbo.[5] OpenAI’s API pricing page also lists gpt-4o-mini at $0.15 per 1 million input tokens and $0.60 per 1 million output tokens.[2]

| Question | GPT-3.5 Turbo | GPT-4o mini | Practical takeaway |
|---|---|---|---|
| Is it text-only? | Yes. OpenAI lists image, audio, and video as not supported.[1] | No. OpenAI said GPT-4o mini supports text and vision in the API at launch.[5] | Use a newer model for screenshots, charts, and visual inputs. |
| How much context does it handle? | 16,385 tokens.[1] | 128K tokens at launch.[5] | GPT-4o mini is better for long documents and conversation history. |
| How much output can it produce? | 4,096 tokens.[1] | Up to 16K output tokens per request at launch.[5] | GPT-4o mini is stronger for long structured outputs. |
| What does it cost? | $0.50 input and $1.50 output per 1 million tokens.[2] | $0.15 input and $0.60 output per 1 million tokens.[2] | GPT-3.5 is not the lowest-cost default anymore. |
| Should new projects choose it? | Usually no. | Usually yes, for the old GPT-3.5 role. | Keep GPT-3.5 mainly for legacy compatibility. |
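
The price gap is easy to check with a few lines of arithmetic. The sketch below uses only the per-million-token rates quoted in this article. The function names and the example request size of 2,000 input tokens and 500 output tokens are assumptions made for illustration, and retries and batch discounts are ignored.

```python
# USD per 1 million tokens, as quoted in this article; check current pricing.
RATES = {
    "gpt-3.5-turbo-0125": {"input": 0.50, "output": 1.50},
    "gpt-4o-mini": {"input": 0.15, "output": 0.60},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one request, ignoring retries and discounts."""
    rate = RATES[model]
    total = input_tokens * rate["input"] + output_tokens * rate["output"]
    return total / 1_000_000


def savings_percent(old: float, new: float) -> float:
    """Percentage by which `new` is cheaper than `old`."""
    return (old - new) / old * 100


# Hypothetical request size: 2,000 input tokens and 500 output tokens.
legacy = request_cost("gpt-3.5-turbo-0125", 2_000, 500)
newer = request_cost("gpt-4o-mini", 2_000, 500)
print(f"{legacy:.5f} vs {newer:.5f} USD, {savings_percent(legacy, newer):.1f}% cheaper")
```

At these rates, GPT-4o mini is 70% cheaper on input tokens and 60% cheaper on output tokens. The saving on a real workload falls between those two figures, depending on the input-to-output ratio.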

If your decision is mainly about speed, compare current latency-focused options in the fastest GPT model breakdown. If your question is capability rather than cost, start with the most powerful GPT model instead.

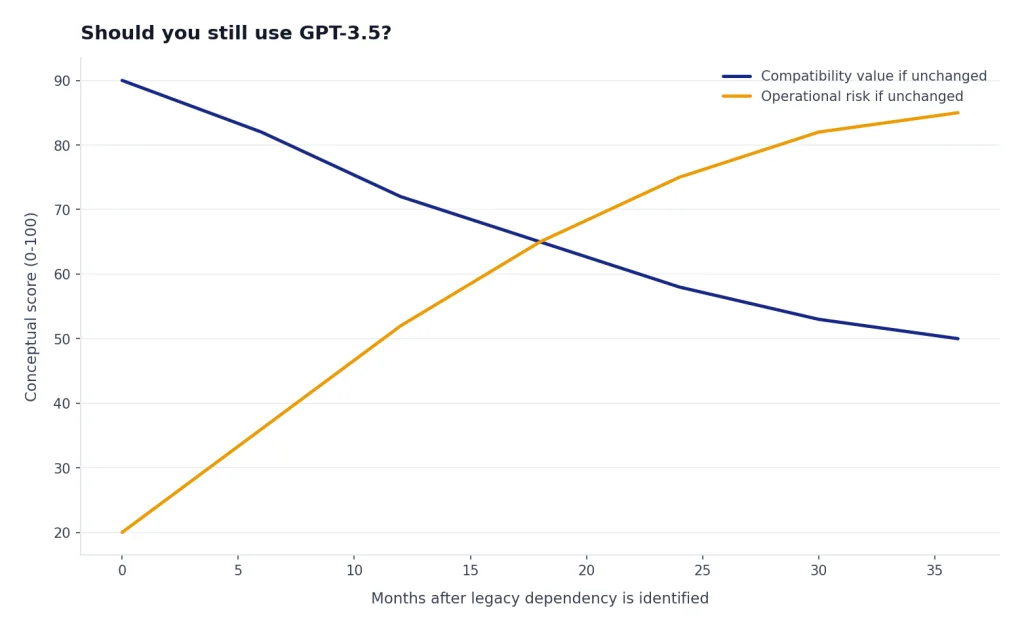

Should you still use GPT-3.5?

Most new projects should not start with GPT-3.5. OpenAI’s own GPT-3.5 Turbo model page says to use gpt-4o-mini in place of GPT-3.5 Turbo as of July 2024 because it is cheaper, more capable, multimodal, and just as fast.[1] That is a clear signal. If you are building something new and have no compatibility constraint, choose a newer small model or a task-specific model.

You may still use GPT-3.5 when you have a controlled legacy reason. Examples include an app whose prompts were tuned against GPT-3.5 outputs, a fine-tuned model that has not yet been migrated, an evaluation suite that needs historical baselines, or a customer contract that names an older model. Even then, set a migration plan. Legacy models tend to become an operational risk over time.

The safer default is to treat GPT-3.5 as a compatibility layer. Keep it where removing it would break production behavior. Do not use it as the model that defines your 2026 product strategy.

Migration checklist for GPT-3.5 apps

Migrating away from GPT-3.5 is less about changing one model string and more about validating behavior. Newer models may be cheaper, longer-context, and more capable, but they may also follow instructions differently. Build a small migration harness before switching production traffic.
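
A migration harness can start very small. The sketch below is one possible shape, not a prescribed design: it treats each model as a plain callable from prompt text to reply text, so the real API wiring is deliberately left out, and it pairs each prompt with a pass/fail check. The names `compare_models`, `ModelFn`, and `CheckFn` are hypothetical.

```python
from typing import Callable, Iterable

# A "model" here is any callable from prompt text to reply text. In a real
# harness these would wrap API calls; that wiring is left out on purpose.
ModelFn = Callable[[str], str]
CheckFn = Callable[[str], bool]


def compare_models(
    golden_set: Iterable[tuple[str, CheckFn]],
    legacy: ModelFn,
    candidate: ModelFn,
) -> list[dict]:
    """Run each prompt through both models and record which checks pass."""
    results = []
    for prompt, check in golden_set:
        legacy_ok = check(legacy(prompt))
        candidate_ok = check(candidate(prompt))
        results.append({
            "prompt": prompt,
            "legacy_ok": legacy_ok,
            "candidate_ok": candidate_ok,
            # A regression is a case the old model passed and the new one fails.
            "regression": legacy_ok and not candidate_ok,
        })
    return results


# Stub models stand in for real API calls in this example.
golden = [("Reply with the single word OK.", lambda reply: reply.strip() == "OK")]
report = compare_models(golden, legacy=lambda p: "OK", candidate=lambda p: "OK.")
```

In this stubbed run, the single case is flagged as a regression because the candidate appends a period. Small formatting drifts like that are what tend to break downstream parsers after a model swap.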

- Inventory model names. Search for gpt-3.5-turbo, gpt-3.5-turbo-0125, gpt-3.5-turbo-0613, gpt-3.5-turbo-1106, and gpt-3.5-turbo-instruct across code, prompts, dashboards, and job queues.

- Separate chat from legacy completions. OpenAI describes gpt-3.5-turbo-instruct as compatible with the legacy Completions endpoint and not Chat Completions.[10] Do not assume it migrates the same way as chat-based GPT-3.5 Turbo.

- Build a golden set. Save representative prompts, expected formats, allowed tone, refusal cases, and failure examples.

- Run side-by-side tests. Compare GPT-3.5 outputs with a newer target model on real examples, not only toy prompts.

- Check structured outputs. Validate JSON, tool calls, schemas, and downstream parsers. Older function-calling behavior dates back to the June 13, 2023 GPT-3.5 Turbo update.[4]

- Recalculate cost. Use current token rates, expected input size, expected output size, retries, and batch usage. Do not assume GPT-3.5 is cheaper.

- Roll out gradually. Start with low-risk traffic, monitor error classes, and keep rollback paths until confidence is high.
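
The inventory step in the checklist above can be approximated with a short script. This is a rough sketch under stated assumptions: the regular expression matches gpt-3.5-turbo plus any hyphenated suffix, so it may over-match in prose, and the function names and default file suffixes are choices made for this example only.

```python
import re
from pathlib import Path

# Matches gpt-3.5-turbo and suffixed variants (-0125, -0613, -instruct, ...).
MODEL_PATTERN = re.compile(r"gpt-3\.5-turbo(?:-[a-z0-9]+)*")


def find_model_names(text: str) -> list[str]:
    """Return the sorted, de-duplicated GPT-3.5 model names found in text."""
    return sorted(set(MODEL_PATTERN.findall(text)))


def scan_tree(
    root: str,
    suffixes: tuple[str, ...] = (".py", ".json", ".yaml", ".yml", ".md"),
) -> dict[str, list[str]]:
    """Map each matching file under root to the model names it mentions."""
    hits: dict[str, list[str]] = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            text = path.read_text(encoding="utf-8", errors="ignore")
            names = find_model_names(text)
            if names:
                hits[str(path)] = names
    return hits
```

Calling `scan_tree(".")` from a repository root gives a first-pass list of files to review. Dashboards, job queues, and environment variables still need a manual check because they rarely live in the source tree.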

The OpenAI Playground remains useful for this kind of testing because it lets teams compare prompts, parameters, and outputs before changing application code. See our OpenAI Playground review if you want a workflow for model comparisons.

Frequently asked questions

Is GPT-3.5 still available in 2026?

Yes. As of this article’s date, April 11, 2026, GPT-3.5 Turbo is still listed as available in the OpenAI API.[1] It is not the recommended default for new projects: OpenAI labels it a legacy model and recommends gpt-4o-mini for former GPT-3.5 Turbo use cases.[1]

What is the GPT-3.5 context window?

OpenAI lists GPT-3.5 Turbo with a 16,385-token context window and a 4,096-token maximum output.[1] Older snapshots and instruct variants can differ, so check the exact model name before making assumptions. Context length is one of the main reasons newer models are easier to use for long files.

How much does GPT-3.5 Turbo cost?

OpenAI’s pricing page lists gpt-3.5-turbo-0125 at $0.50 per 1 million input tokens and $1.50 per 1 million output tokens.[2] The GPT-3.5 Turbo model page shows the same input and output rates.[1] Always check current pricing before committing to a production budget.

Does GPT-3.5 support images or voice?

No. OpenAI’s GPT-3.5 Turbo listing says image, audio, and video are not supported.[1] If you need image understanding, voice, transcription, or video generation, use a multimodal or specialized model. For visual tasks, start with GPT-4 Vision or DALL-E 3, depending on whether you need image understanding or image generation.

Is GPT-3.5 better than GPT-4o mini for any task?

Usually no for new work. OpenAI says to use gpt-4o-mini in place of GPT-3.5 Turbo because it is cheaper, more capable, multimodal, and just as fast.[1] GPT-3.5 can still be useful when you need legacy compatibility, historical baselines, or existing fine-tuned behavior.

Did OpenAI publish GPT-3.5’s parameter count?

OpenAI has not published an official figure for this. Avoid treating rumored parameter counts as facts. For practical model choice, context window, modality support, price, latency, and task performance matter more than a guessed parameter number.