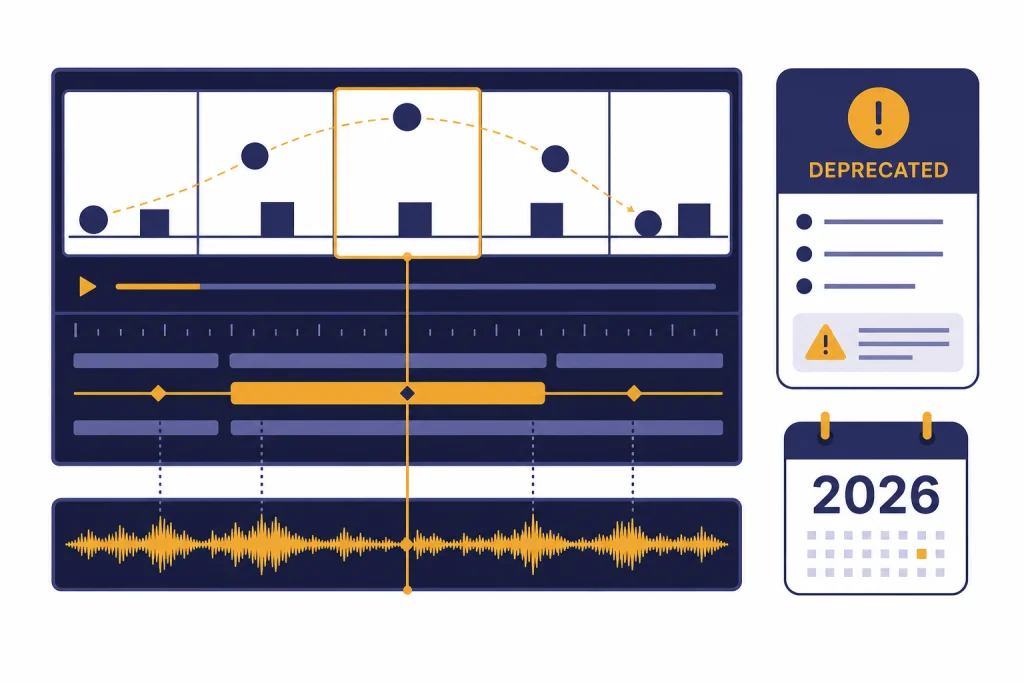

Sora 2 is OpenAI’s second-generation video model, and its main upgrade is not just sharper video. It combines generated video with synchronized audio, better physics, improved prompt following, character-style likeness controls, longer clip options, and a developer Videos API. The catch is product stability. OpenAI announced Sora 2 on September 30, 2025, and later listed the Sora 2 video models and Videos API as deprecated on March 24, 2026, with API removal scheduled for September 24, 2026.[1][5][7][8] Treat Sora 2 as a major technical step for AI video, but not as a safe long-term platform dependency unless OpenAI announces a replacement path.

What is new in Sora 2

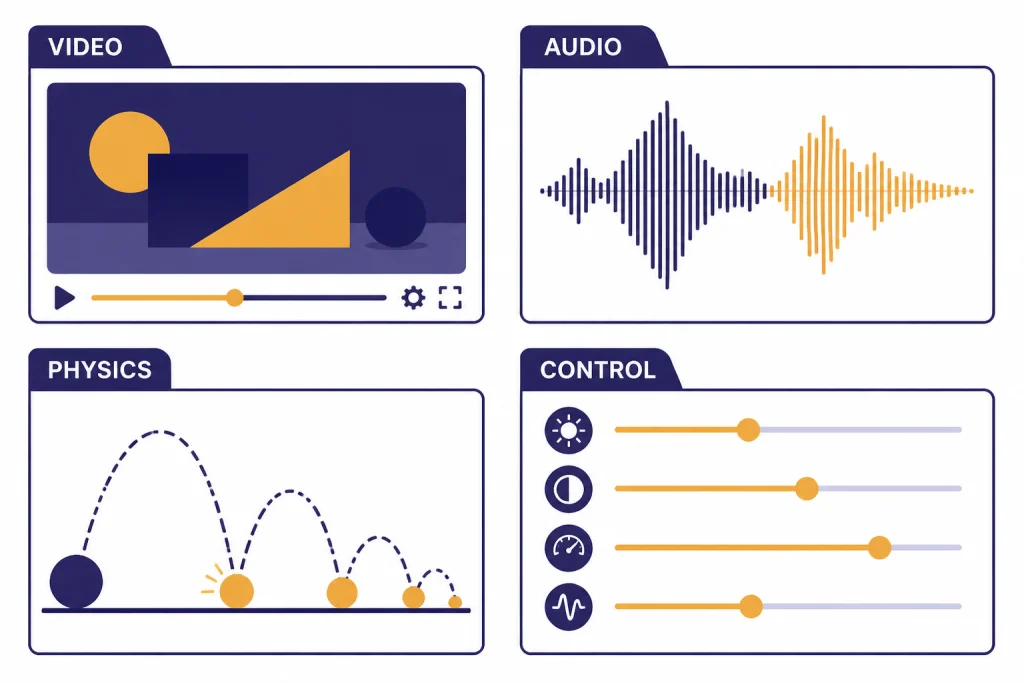

Sora 2 adds synchronized dialogue, sound effects, stronger physical consistency, better multi-shot instruction following, and a higher-quality Sora 2 Pro variant. OpenAI framed it as the company’s flagship video-and-audio generation model when it launched on September 30, 2025.[1][7]

The practical answer is simple. Sora 2 is best understood as OpenAI’s first broadly productized video model, not just a research preview. It moved Sora from impressive silent clips toward short videos that can include motion, sound, style, and reusable characters in one workflow.

The status answer is just as important. OpenAI’s developer documentation now lists the Sora 2 video generation models and Videos API as deprecated, with removal from the API on September 24, 2026.[5][4] That does not erase the technical progress, but it changes how teams should plan around it.

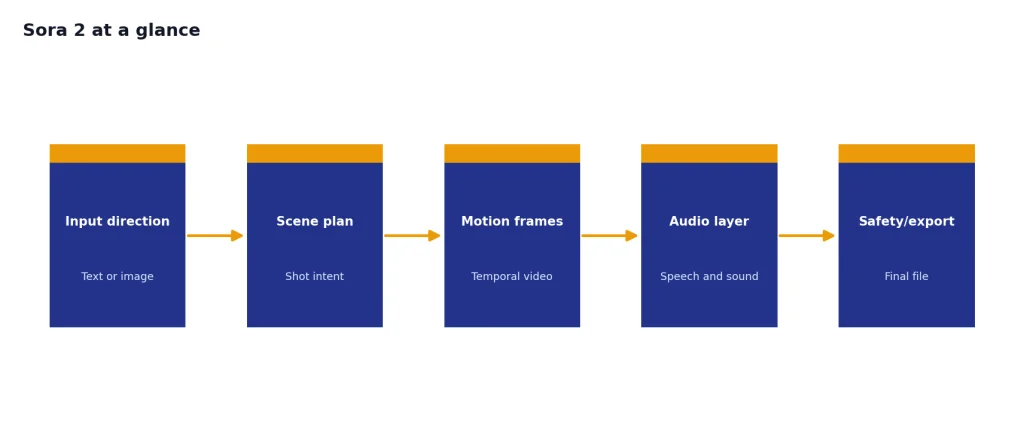

Sora 2 at a glance

Sora 2 sits in a different category from text models such as the GPT family. It is a generative media model that takes text or image direction and produces video with audio. If you are comparing it against language models, start with our side-by-side comparison of all GPT models and our reference on context window sizes for every GPT model, then treat Sora as a separate media-generation system.

| Area | Sora 2 detail | Why it matters |
|---|---|---|
| Launch | Announced September 30, 2025[1][7] | Marks the public Sora 2 generation, separate from the original Sora preview. |
| Modalities | Text and image input; video and audio output in the model page[6][4] | Moves AI video beyond silent clips. |
| Consumer app | OpenAI launched a Sora iOS app with invite-based rollout in the U.S. and Canada.[1][7] | Made Sora 2 a social creation product, not only a model demo. |
| Pro tier | Sora 2 Pro targets higher fidelity and tougher shots.[2][4] | Gives creators a quality-vs-speed choice. |
| Developer access | Videos API supports creation, image guidance, extensions, editing, downloads, and batch queues.[4] | Lets teams build video workflows programmatically. |
| Deprecation | OpenAI notified developers on March 24, 2026, of deprecation for Sora 2 models and the Videos API.[5][8] | Raises migration risk for production users. |

Core capabilities

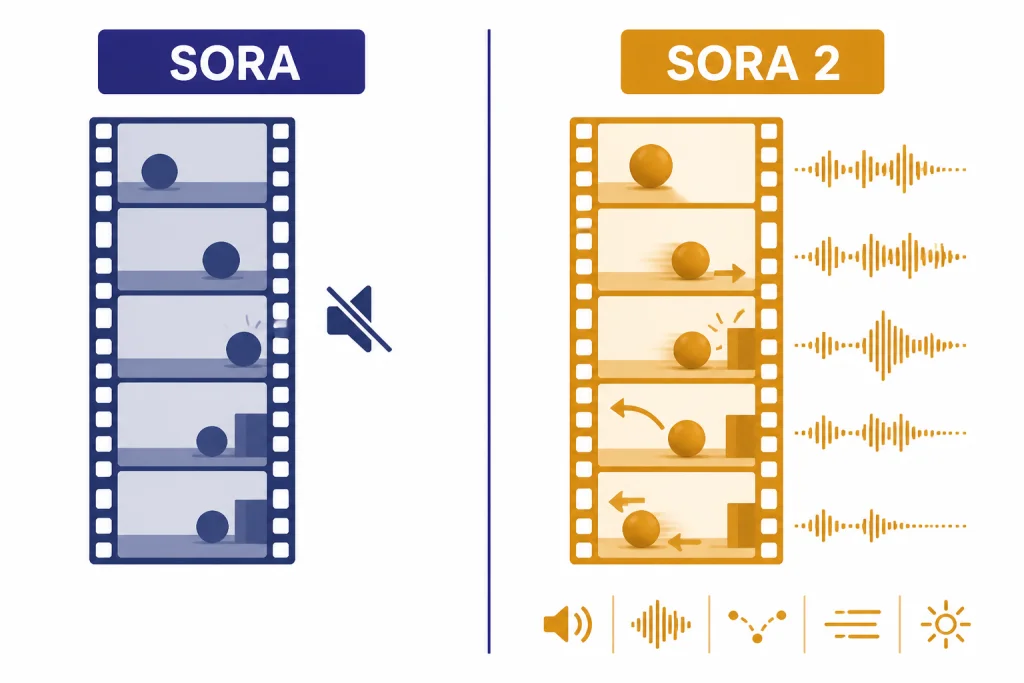

The biggest Sora 2 upgrade is synchronized audio. OpenAI says the model can create background soundscapes, speech, and sound effects with a high degree of realism.[1][3] That matters because audio timing is part of believability. A clip of footsteps, dialogue, a door slam, or crowd ambience feels more useful when sound follows the action instead of being added later.

The second upgrade is physical behavior. OpenAI’s launch post emphasized that prior video models often “solve” prompts by bending reality, while Sora 2 is better at modeling failed actions, rebound, rigidity, buoyancy, and continuous motion.[1][3] This is still not a physics simulator. It can still make errors. But it is a meaningful step for shots where cause and effect matter.

The third upgrade is control. OpenAI says Sora 2 can follow intricate instructions across multiple shots while persisting world state more accurately.[1][3] That makes it more useful for storyboards, ad concepts, education clips, and previsualization. It also narrows the gap between a prompt and a usable sequence.

The fourth upgrade is characters. The Sora app introduced a way to bring yourself or approved people into generated scenes after a short video-and-audio recording used to verify identity and capture likeness.[1] OpenAI later renamed and expanded related cameo-style workflows around characters in release notes.[10]

Sora 2 vs. the original Sora

The original Sora made text-to-video feel credible. Sora 2 made the product more complete. OpenAI described the original Sora from February 2024 as a “GPT-1 moment” for video and Sora 2 as a much larger jump toward usable video generation.[1][7]

| Comparison point | Original Sora | Sora 2 |
|---|---|---|
| Core output | Video generation research model and later product access | Video plus synchronized audio output[1][6] |
| Launch context | First previewed in February 2024[1][12] | Announced September 30, 2025[1][7] |
| Instruction following | Strong visual generation, but less productized control | Improved steerability and multi-shot control[1][3] |
| Product surface | Sora.com and earlier Sora experiences | Sora app, Sora.com, and Videos API support[1][4] |
| Creator tools | Storyboard ideas existed in Sora 1 | Storyboards for Sora 2 on web, plus 15-second and 25-second duration options in release notes[10][11] |
| Developer status | Earlier Sora access varied by product | Sora 2 API was later deprecated on March 24, 2026.[5][8] |

If your question is quality, Sora 2 is the better model. If your question is long-term product planning, Sora 2 is more complicated because the official API deprecation changes the risk profile.

API, pricing, and limits

OpenAI’s developer guide says the Videos API can create new videos, guide a generation with an image reference, reuse character assets, continue clips with extensions, edit video, download finished videos, and submit large offline render queues through the Batch API.[4] That is the right interface for applications, media pipelines, and internal creative tools.

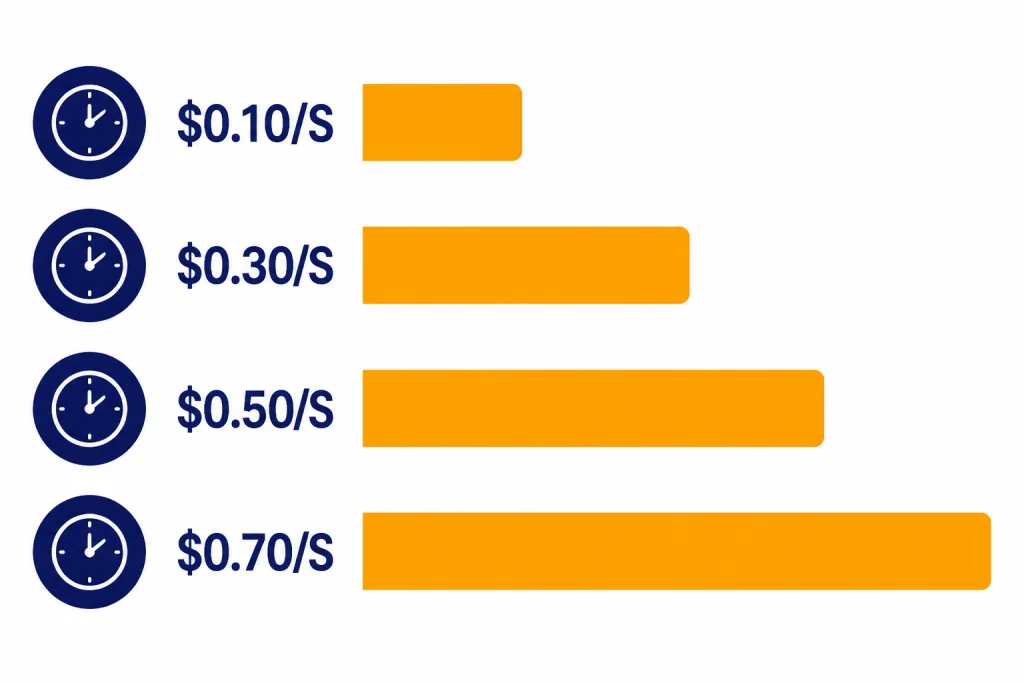

Pricing is per second. OpenAI’s pricing page lists standard Sora video API rates of $0.10 per second for sora-2 at 720p and $0.30 per second for sora-2-pro at 720p; it also lists higher Sora 2 Pro rates of $0.50 per second at 1024p and $0.70 per second at 1080p.[11][6] Batch pricing is lower: $0.05 per second for sora-2 at 720p and $0.15, $0.25, and $0.35 per second for the listed Sora 2 Pro sizes.[11]

| Model | Output size | Standard price | Batch price |
|---|---|---|---|
| sora-2 | 720p, 720×1280 or 1280×720 | $0.10/sec[11][6] | $0.05/sec[11] |
| sora-2-pro | 720p, 720×1280 or 1280×720 | $0.30/sec[11][6] | $0.15/sec[11] |
| sora-2-pro | 1024p, 1024×1792 or 1792×1024 | $0.50/sec[11] | $0.25/sec[11] |
| sora-2-pro | 1080p, 1080×1920 or 1920×1080 | $0.70/sec[11] | $0.35/sec[11] |

The API can generate 16-second and 20-second videos, according to OpenAI’s video generation guide.[4] The consumer release notes separately say all users could generate 15-second videos on app and web, while Pro users could generate 25-second videos on web with storyboard.[10] Keep those surfaces separate. App limits and API parameters are not the same thing.
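
Because billing is per second, the cost of a clip is just the listed rate multiplied by its duration. The sketch below is a minimal illustration of that arithmetic using the rates from the pricing table above. The `RATES` table and the `estimate_cost` helper are hypothetical names invented for this example, not part of any OpenAI SDK, and the figures should be rechecked against the live pricing page before you budget with them.

```python
from decimal import Decimal

# Per-second USD rates copied from the pricing table in this article.
# Keys are (model, resolution tier); values are (standard, batch).
RATES = {
    ("sora-2", "720p"): (Decimal("0.10"), Decimal("0.05")),
    ("sora-2-pro", "720p"): (Decimal("0.30"), Decimal("0.15")),
    ("sora-2-pro", "1024p"): (Decimal("0.50"), Decimal("0.25")),
    ("sora-2-pro", "1080p"): (Decimal("0.70"), Decimal("0.35")),
}


def estimate_cost(model: str, tier: str, seconds: int, batch: bool = False) -> Decimal:
    """Estimate the USD cost of one clip (hypothetical helper, not an SDK call)."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    try:
        standard_rate, batch_rate = RATES[(model, tier)]
    except KeyError:
        raise ValueError(f"no listed rate for {model} at {tier}") from None
    rate = batch_rate if batch else standard_rate
    return rate * seconds


if __name__ == "__main__":
    # Compare standard and batch cost for a 20-second clip at every listed tier.
    for (model, tier) in RATES:
        standard = estimate_cost(model, tier, 20)
        batched = estimate_cost(model, tier, 20, batch=True)
        print(f"{model} {tier}: ${standard} standard, ${batched} batch")
```

Under these rates, a 20-second clip costs $2.00 on sora-2 at 720p and $14.00 on sora-2-pro at 1080p, with the batch rate halving each figure. `Decimal` is used instead of floats so that currency totals stay exact.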

How creators should use it

Use Sora 2 for short, directed clips where motion, camera, and audio all matter. A good prompt should name the subject, setting, action, shot type, pacing, lighting, and audio. OpenAI’s own guidance recommends specific camera and scene instructions rather than vague creative requests.[4]

- Start with a simple shot. Ask for one subject, one action, and one camera move.

- Add audio intentionally. Name ambience, dialogue, or sound effects rather than hoping the model infers them.

- Iterate before increasing quality. Use faster or lower-cost generations for idea testing, then move to Pro or higher resolution.

- Use storyboards for sequences. Sora 2 storyboards let creators plan second by second on the web in beta.[10]

- Export and archive important work. The deprecation notice makes preservation part of the workflow, not an afterthought.[5][8]
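
One way to keep prompts consistent is to treat the elements named above (subject, setting, action, shot type, pacing, lighting, and audio) as required fields and assemble the prompt from them. The following is a minimal sketch under that assumption. The `ShotPrompt` class and its sentence template are hypothetical and are not a format that OpenAI prescribes; they only show how a team might stop a vague or audio-less prompt from being submitted.

```python
from dataclasses import dataclass, fields


@dataclass
class ShotPrompt:
    """One directed shot; every field is required (hypothetical structure)."""

    subject: str
    setting: str
    action: str
    shot_type: str
    pacing: str
    lighting: str
    audio: str

    def render(self) -> str:
        # Refuse to build a prompt if any element is blank, so nothing
        # is left for the model to guess.
        missing = [f.name for f in fields(self) if not getattr(self, f.name).strip()]
        if missing:
            raise ValueError(f"missing prompt fields: {', '.join(missing)}")
        return (
            f"{self.shot_type} of {self.subject} in {self.setting}. "
            f"Action: {self.action}. Pacing: {self.pacing}. "
            f"Lighting: {self.lighting}. Audio: {self.audio}."
        )


if __name__ == "__main__":
    shot = ShotPrompt(
        subject="a red kite",
        setting="a windy beach",
        action="the kite dips toward the sand and recovers",
        shot_type="Wide tracking shot",
        pacing="slow and steady",
        lighting="late afternoon sun",
        audio="gusting wind and distant surf",
    )
    print(shot.render())
```

Keeping the fields separate also makes iteration cheaper: you can change only the lighting or the audio line between test generations and compare results.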

For brand or production teams, the strongest use case is previsualization. Sora 2 can create mood studies, pitch concepts, social clips, training visuals, and draft scenes. It should not replace human review, rights clearance, or factual verification.

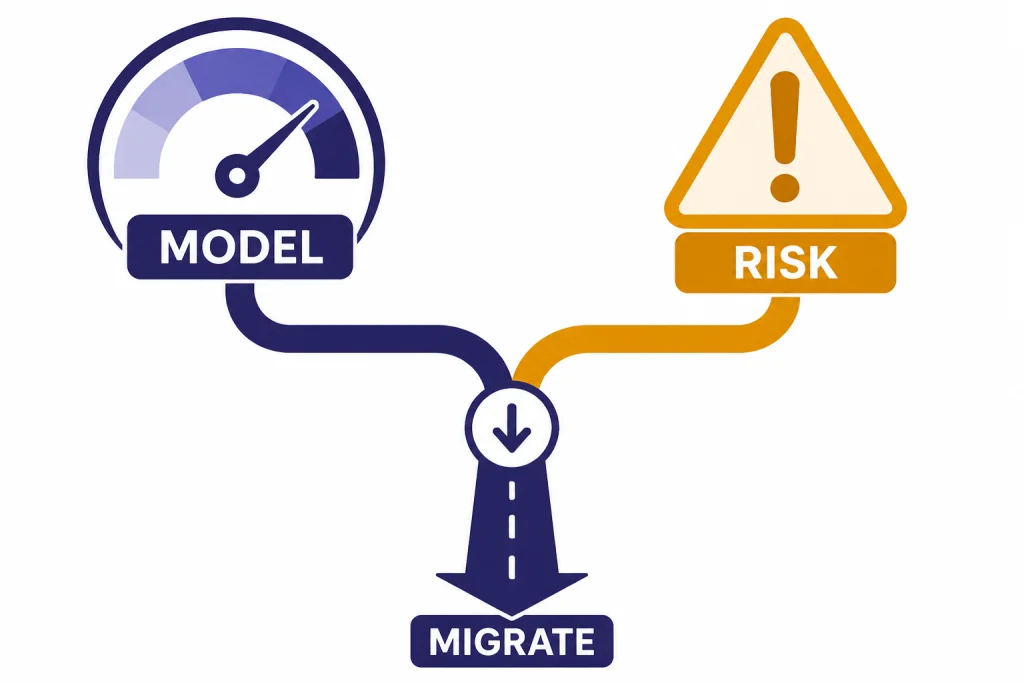

Analysis: the capability-platform split

Sora 2 creates a split decision. As a model, it shows that video generation is moving from visual novelty toward controllable audiovisual generation. As a platform, it became risky after OpenAI began the deprecation process for the Sora 2 models and Videos API on March 24, 2026.[5][8]

That split should guide buying and building decisions. If you need one-off creative exploration, Sora 2 is still important to understand. If you need a product feature that must work for years, do not hard-code your roadmap around Sora 2 without a migration plan.
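
A migration plan can start as a dated guard in code. The sketch below assumes the two dates from the deprecation notice cited in this article and shows one possible policy: stop routing new work to Sora 2 once the remaining runway falls below a threshold. The function names and the 30-day default are hypothetical choices for illustration, not guidance from OpenAI.

```python
from datetime import date

# Dates taken from the deprecation notice cited in this article.
DEPRECATION_NOTICE = date(2026, 3, 24)
API_REMOVAL = date(2026, 9, 24)


def days_until_removal(today: date) -> int:
    """Days left before the scheduled API removal (negative once it has passed)."""
    return (API_REMOVAL - today).days


def should_use_sora(today: date, min_runway_days: int = 30) -> bool:
    """Hypothetical policy: allow new Sora 2 work only while enough runway remains."""
    return days_until_removal(today) >= min_runway_days


if __name__ == "__main__":
    today = date.today()
    print(f"Days until scheduled removal: {days_until_removal(today)}")
    print(f"Route new work to Sora 2: {should_use_sora(today)}")
```

The window between the notice and the scheduled removal is 184 days, which is the total time a team starting on the notice date would have to migrate.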

The pattern is clear. AI video is compute-heavy, socially sensitive, and legally complicated. OpenAI delivered a stronger model, then moved quickly into a product wind-down. That suggests the limiting factor was not only model quality. Distribution, cost, moderation, copyright, and user safety mattered just as much.

For readers tracking OpenAI’s broader model strategy, compare this with text and reasoning models such as GPT-5.3, OpenAI o3, and OpenAI o4-mini. Text models can be embedded into many workflows with lower marginal media cost. Video models create heavier infrastructure and governance demands.

Safety, rights, and likeness controls

Sora 2’s safety issues are not theoretical. The system card names nonconsensual likeness use and misleading generations as risk areas, and says OpenAI used internal red teaming, limited invitations, restrictions on photorealistic person uploads, and stricter safeguards for minors.[3]

The app also gave users control over their likeness. OpenAI said users decide who can use their character, can revoke access, and can remove videos that include them.[1] That is essential for a product built around placing people into generated scenes.

Developers face stricter API restrictions. OpenAI’s video guide says the API rejects copyrighted characters and copyrighted music, blocks generation of real people including public figures, blocks human-likeness character uploads by default, and rejects input images with human faces at the time described in the guide.[4] If your workflow depends on real people, celebrity likeness, licensed music, or protected characters, Sora 2 is the wrong default unless your use case fits OpenAI’s policies and rights framework.

These controls also explain why Sora 2 should not be treated like DALL-E 3 with motion added. Video increases the risk of impersonation, context collapse, and false evidence. For image-specific workflows, use our guide to the best GPT model for image generation.

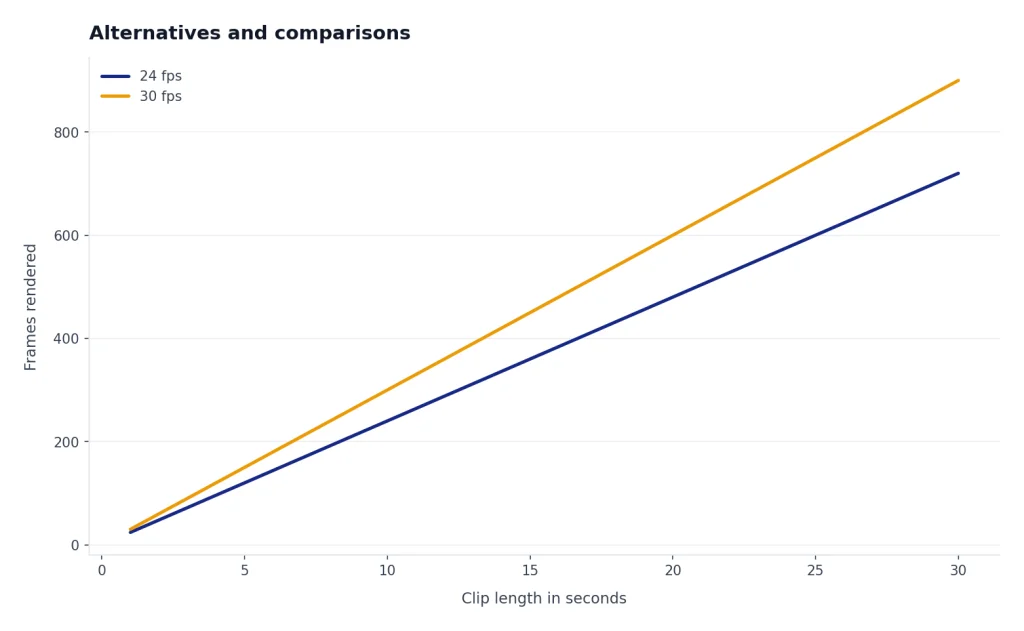

Alternatives and comparisons

Sora 2 is not the only serious AI video option. If you are comparing tools for production, look at model quality, clip length, price, rights controls, API reliability, and export workflow. Our direct comparisons of Sora vs Runway and Sora vs Google Veo are better places to weigh competing video systems.

For many teams, the best alternative is not another video model. It may be a still-image workflow, a narrated slide workflow, or a hybrid process using human-edited footage. Pair Sora-style ideation with Whisper for transcription, GPT-4 Vision for visual analysis, or a writing model from our best GPT model for writing guide.

If price is the deciding factor, compare Sora’s per-second billing against the broader OpenAI API pricing landscape. Video seconds are not like text tokens. A single short clip can cost more than many text requests, and high-resolution video scales quickly.

Frequently asked questions

When did Sora 2 launch?

OpenAI announced Sora 2 on September 30, 2025.[1][7] The launch included the Sora model update and a new Sora iOS app. OpenAI said the initial app rollout started in the U.S. and Canada.[1]

What is the biggest Sora 2 upgrade?

The biggest upgrade is combined video and synchronized audio. OpenAI says Sora 2 can generate soundscapes, speech, and sound effects along with video.[1][3] It also improves physics, realism, and instruction following compared with prior systems.[1][2]

How long can Sora 2 videos be?

In the Sora app and web product, OpenAI’s release notes say all users could make 15-second videos, while Pro users could make 25-second videos on web with storyboard.[10][11] In the API, OpenAI’s video guide says sora-2 and sora-2-pro support 16-second and 20-second generations.[4] The exact limit depends on product surface, model, and account access.

How much does the Sora 2 API cost?

OpenAI lists Sora Video API pricing per second. Standard pricing is $0.10 per second for sora-2 at 720p and $0.30 per second for sora-2-pro at 720p.[11][6] Higher listed Sora 2 Pro sizes cost $0.50 per second at 1024p and $0.70 per second at 1080p.[11]

Is Sora 2 Pro different from Sora 2?

Yes. OpenAI describes Sora 2 as tuned for speed and everyday creation, while Sora 2 Pro targets higher fidelity and tougher shots.[2][4] The API guide says Sora 2 Pro is the option to use when you need 1080p exports in 1920×1080 or 1080×1920.[4] OpenAI's own documentation also describes it as slower and more expensive.[4][11]

Is the Sora 2 API being discontinued?

Yes. OpenAI’s deprecation page says it notified developers on March 24, 2026, that the Sora 2 video generation model aliases, snapshots, and Videos API were deprecated and would be removed from the API on September 24, 2026.[5][4] Axios also reported on March 24, 2026, that OpenAI was discontinuing the Sora video app.[8] Not every detail in the deprecation table has been independently corroborated, so developers should treat the official OpenAI deprecation page as the controlling source.

Should I build a product on Sora 2 now?

Use caution. Sora 2 is technically impressive, but OpenAI’s March 24, 2026 deprecation notice makes it a poor foundation for a new long-lived product unless you have a migration plan.[5][8] It is still useful for research, creative testing, and understanding where AI video is going. For production, compare alternatives and avoid tying your only video workflow to a deprecated API.

Bottom line

Sora 2 is the model that made OpenAI’s video work feel like a full audiovisual system: short clips, synchronized sound, stronger physics, character controls, creator tools, and API access. It is a major milestone for AI video.

The next thing to watch is not only a better model. It is OpenAI’s replacement strategy after the Sora 2 deprecation, and whether future video generation moves into ChatGPT, enterprise tools, partner platforms, or a new OpenAI video API.