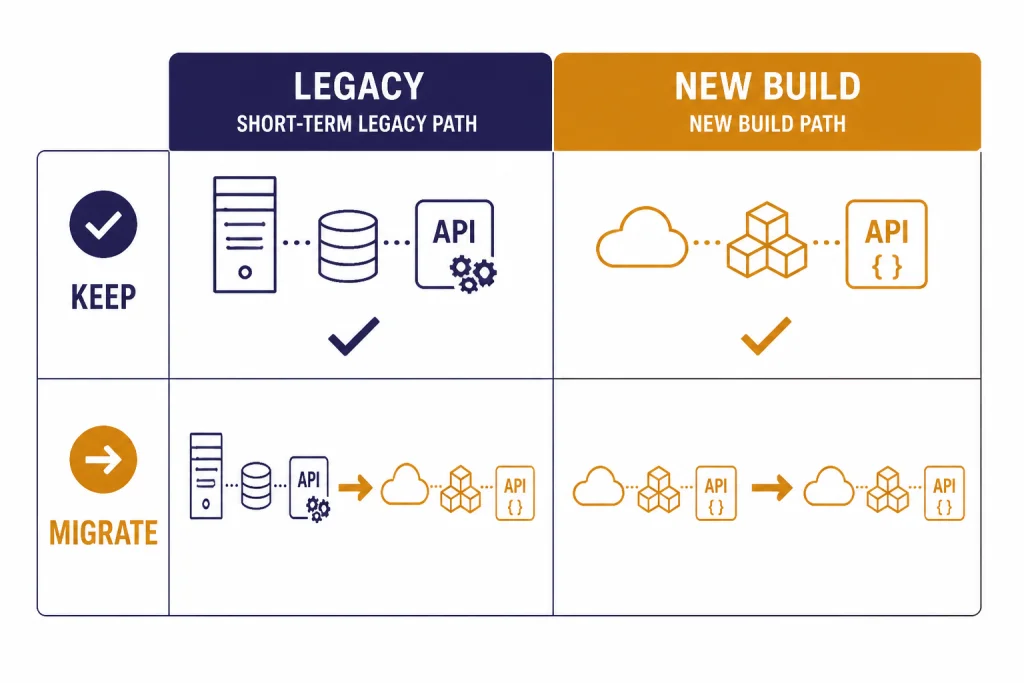

DALL-E 2 is still worth using in 2026 only in narrow cases: maintaining an existing API integration, generating inexpensive square images, or using image variations that your workflow already depends on. It is not the best choice for new image products, brand work, text-heavy graphics, or high-fidelity editing. OpenAI lists DALL-E 2 as deprecated in its model documentation, and its image generation guide says DALL-E 2 and DALL-E 3 support will stop on May 12, 2026.[1][2] For most readers, the practical answer is simple: keep DALL-E 2 running if you need a short transition period, but do not build a new 2026 workflow around it.

Short answer

DALL-E 2 is a legacy image model. It can still create useful images from natural-language prompts, and it remains familiar to developers who built against the older Image API. OpenAI describes it as an image system that can create realistic images and art from a natural-language description, and the model page lists it under the dall-e-2 alias.[3][1]

The problem is not that DALL-E 2 suddenly stopped working. The problem is that the surrounding product landscape moved on. OpenAI’s current image documentation points developers toward newer GPT Image models for image generation and editing, while noting that DALL-E 2 and DALL-E 3 are deprecated and scheduled to lose support on May 12, 2026.[2] If you are choosing an image model today, start with our best GPT model for image generation guide, then use this page to decide whether DALL-E 2 has a temporary role.

The best way to think about DALL-E 2 in 2026 is as a compatibility model. It is useful when your code, prompt library, cost assumptions, or variation workflow already depends on it. It is a poor default for a new app, a creative production pipeline, or any image workflow where prompt following, typography, editing accuracy, and long-term support matter.

What DALL-E 2 is

DALL-E 2 was OpenAI’s second-generation text-to-image system. OpenAI’s DALL-E 2 page says it can create original, realistic images and art from a text description, combine concepts, attributes, and styles, and support image generation, outpainting, inpainting, and variations.[3] OpenAI also said DALL-E 2 generated images at 4x greater resolution than the first DALL-E system.[3]

The public product history matters because it explains why DALL-E 2 still appears in old tutorials, SDK examples, and codebases. OpenAI removed the DALL-E waitlist on September 28, 2022, and made the DALL-E API publicly available on November 3, 2022.[3][4] That timing made DALL-E 2 the default image model for many early AI art apps, prototype tools, CMS plug-ins, and social post generators.

That legacy is also why many developers still see dall-e-2 in code. OpenAI’s DALL-E 3 Help Center article says the API defaulted to DALL-E 2 for backward compatibility, while allowing developers to set the model parameter to dall-e-3.[5] That compatibility was helpful for existing apps, but it is not a reason to start a new build on DALL-E 2.

If you are comparing DALL-E 2 with the rest of OpenAI’s model lineup, treat it differently from text, reasoning, speech, and video models. It does not have a context window in the same sense as chat models. For text model sizing, use our context window sizes for every GPT model reference. For broader model selection, see all GPT models compared side by side.

What DALL-E 2 still does well

DALL-E 2 still has practical strengths. They are not the strengths that win a 2026 image quality contest. They are operational strengths that matter when you already have a working system.

It is predictable for old prompts

Teams that built a prompt library around DALL-E 2 may prefer its older behavior for a while. A prompt that produced an acceptable illustration in 2023 may not produce the same style or composition in a newer image model. If you have a catalog of prompts with human-approved outputs, DALL-E 2 can buy time while you re-test them.

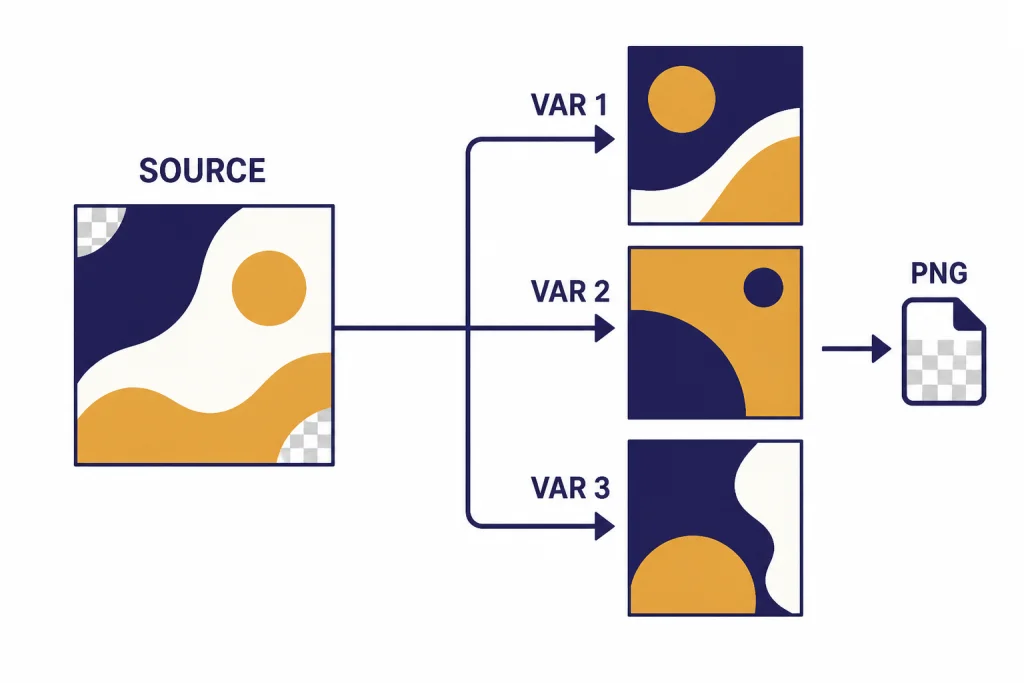

It supports variations

The OpenAI image generation guide says the Image API includes a variations endpoint for models that support it, such as DALL-E 2.[2] The API reference says the create image variation endpoint only supports DALL-E 2, and that the input image must be a square PNG under 4MB.[8] This is one of the clearest remaining reasons to keep DALL-E 2 in a workflow during migration.
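Because the variation endpoint is strict about its input, a pre-flight check can catch bad uploads before any API call is made. The sketch below is a minimal illustration, not part of any OpenAI SDK: the function name `check_variation_input` is hypothetical, and treating "under 4MB" as 4 × 1024 × 1024 bytes is an assumption. It reads only the PNG header, so it does not prove the file is a fully valid image.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
# Assumption: "under 4MB" is interpreted as fewer than 4 * 1024 * 1024 bytes.
MAX_BYTES = 4 * 1024 * 1024


def check_variation_input(data: bytes) -> list[str]:
    """Return a list of problems; an empty list means the header checks passed."""
    problems = []
    if len(data) >= MAX_BYTES:
        problems.append("file is not under 4MB")
    # A PNG starts with an 8-byte signature, then the IHDR chunk:
    # 4-byte length, 4-byte type, then width and height as big-endian uint32.
    if len(data) < 24 or data[:8] != PNG_SIGNATURE or data[12:16] != b"IHDR":
        problems.append("file is not a PNG")
        return problems
    width, height = struct.unpack(">II", data[16:24])
    if width != height:
        problems.append(f"image is {width}x{height}, not square")
    return problems
```

In practice you would read the upload with `open(path, "rb").read()` and reject it with the returned messages if the list is not empty.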

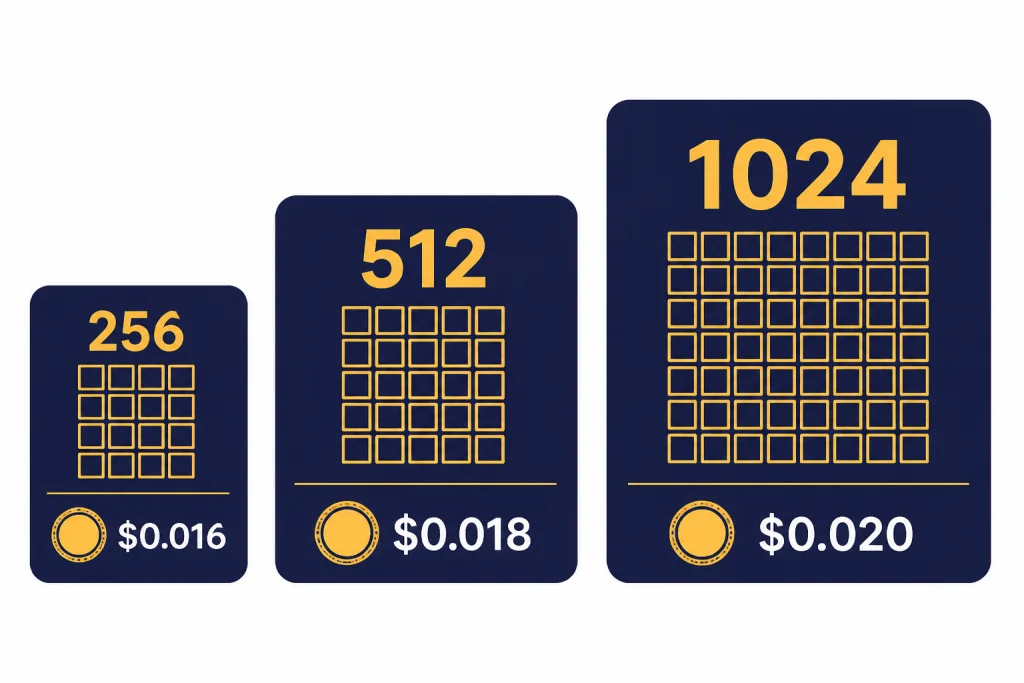

It can return smaller square images

DALL-E 2 supports 256×256, 512×512, and 1024×1024 image sizes in the Image API.[8] That is useful for thumbnails, drafts, internal review grids, and systems that do not need large or high-detail outputs. DALL-E 3, by contrast, uses 1024×1024, 1024×1792, or 1792×1024 sizes according to OpenAI’s Help Center.[5]
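The size differences are easy to get wrong when one code path serves both models. As a rough sketch, the helper below validates a size string against the sizes listed above before building request parameters. The helper name `build_generate_kwargs` is hypothetical, and the `"256x256"`-style strings are the form the Image API expects for its `size` parameter.

```python
# Sizes as listed in this article for each model.
SUPPORTED_SIZES = {
    "dall-e-2": {"256x256", "512x512", "1024x1024"},
    "dall-e-3": {"1024x1024", "1024x1792", "1792x1024"},
}


def build_generate_kwargs(model: str, prompt: str, size: str) -> dict:
    """Build keyword arguments for an image generation call, rejecting bad sizes."""
    sizes = SUPPORTED_SIZES.get(model)
    if sizes is None:
        raise ValueError(f"unknown model: {model}")
    if size not in sizes:
        raise ValueError(f"{model} does not support size {size}")
    return {"model": model, "prompt": prompt, "size": size}
```

Assuming the official `openai` Python package is installed and configured, the result could then be passed along as `client.images.generate(**kwargs)`. That call is not made here, so the sketch runs without network access or an API key.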

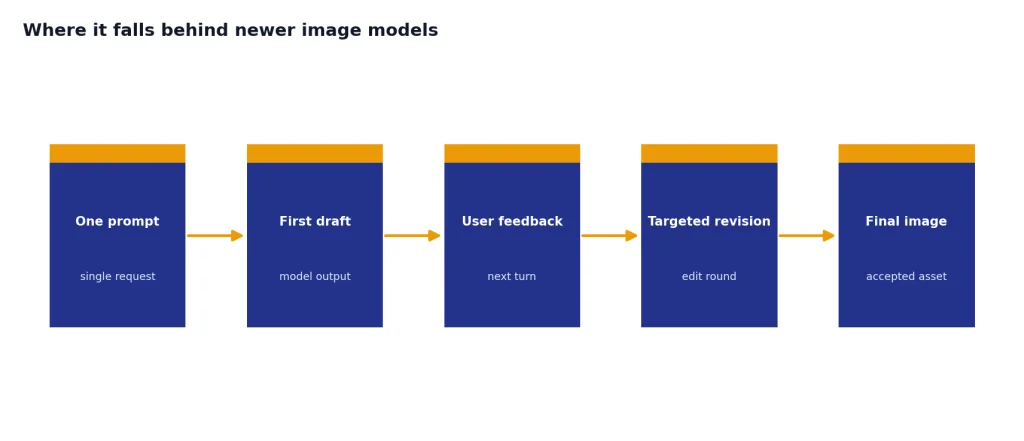

Where it falls behind newer image models

DALL-E 2 falls behind in the areas that matter most for current image generation: detailed prompt following, text rendering, high-fidelity editing, and real-world knowledge. OpenAI’s GPT Image API FAQ describes the newer GPT Image API as based on the latest multimodal model, with superior instruction following, text rendering, detailed editing, and real-world knowledge.[5] OpenAI’s 2025 image API announcement also described gpt-image-1 as a natively multimodal model for high-quality, professional-grade image generation.[7]

The gap is especially visible in commercial work. DALL-E 2 can produce a pleasant illustration, but it is less reliable when a prompt asks for specific layouts, legible product text, precise visual hierarchy, or consistent edits across multiple rounds. If the image includes a label, UI mockup, menu, packaging panel, event poster, or branded layout, newer models are usually the better starting point.

DALL-E 2 also sits outside newer conversational image workflows. OpenAI’s image generation guide says the Responses API supports image generation inside conversations and multi-step flows, while the Image API is best for generating or editing a single image from one prompt.[2] That distinction matters if you want users to revise an image over several turns instead of submitting one isolated prompt.

If your main task is understanding images rather than generating them, DALL-E 2 is the wrong category of model. For visual analysis, screenshots, diagrams, and image inputs, use a multimodal model instead. Our GPT-4 Vision guide explains that side of the model family.

| Use case | DALL-E 2 fit | Better direction in 2026 |
|---|---|---|
| Legacy API app using old prompts | Good short-term fit | Run a migration test suite before May 12, 2026[2] |
| Small square thumbnails | Still useful because 256×256 and 512×512 are supported[8] | Keep only if quality is acceptable |
| Text-heavy graphics | Weak fit | Use newer GPT Image models or DALL-E 3[5] |
| High-fidelity edits | Weak fit | Use the newer Image API or Responses API workflow[2] |
| New production app | Poor fit | Start with current image models |

Pricing and limits

DALL-E 2’s strongest remaining business argument is cost predictability. OpenAI’s pricing page lists DALL-E 2 standard image pricing at $0.016 for 256×256, $0.018 for 512×512, and $0.02 for 1024×1024 images.[6] That is easy to model in a budget because each generated image maps to a simple per-image charge.
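To make the flat pricing concrete, here is a small budgeting sketch using the per-image prices quoted above. The function name `batch_cost` is hypothetical, and the figures should be re-checked against OpenAI’s pricing page before you rely on them.

```python
from decimal import Decimal

# Per-image DALL-E 2 prices in USD, as quoted in this article.
DALLE2_PRICE_USD = {
    "256x256": Decimal("0.016"),
    "512x512": Decimal("0.018"),
    "1024x1024": Decimal("0.020"),
}


def batch_cost(size: str, images: int) -> Decimal:
    """Cost in USD of generating `images` images at the given size."""
    if images < 0:
        raise ValueError("images must be non-negative")
    return DALLE2_PRICE_USD[size] * images
```

For example, `batch_cost("512x512", 1000)` comes to 18 USD, and the same batch at 1024×1024 comes to 20 USD. `Decimal` is used so that small per-image prices do not pick up floating-point rounding noise in a budget sheet.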

DALL-E 3 costs more at the common 1024×1024 square size. OpenAI’s pricing page lists DALL-E 3 standard pricing at $0.04 for 1024×1024 and $0.08 for larger standard landscape or portrait images; HD pricing is $0.08 for 1024×1024 and $0.12 for larger landscape or portrait images.[6] Newer GPT Image pricing is token-based, so the final cost depends on input text, input images when editing, and generated image output tokens.[2]

Do not look at price alone. A cheaper image is not cheaper if you regenerate it several times, fix it manually, or reject it because it cannot follow layout instructions. DALL-E 2 can still be economical for rough internal concepts and low-stakes thumbnails. It is less economical for finished assets that need accuracy.

| Model or route | Pricing shape | Best fit | Risk |
|---|---|---|---|
| DALL-E 2 | $0.016 to $0.02 per image across 256×256, 512×512, and 1024×1024[6] | Legacy square-image workflows | Deprecated; support ends May 12, 2026[2] |
| DALL-E 3 | $0.04 to $0.12 per image depending on size and quality[6] | Better prompt following and larger fixed aspect ratios | Also listed as deprecated in the image guide[2] |
| GPT Image API | Token-based image pricing[7] | Newer generation and editing workflows | Costs require estimation instead of a single flat image price |

Rate limits also matter. The DALL-E 2 model page lists rate limits by usage tier, from Tier 1 at 500 images per minute to Tier 5 at 10,000 images per minute, with free access marked as not supported.[1] Treat those figures as account-dependent ceilings, not as a guarantee that your application will always sustain peak throughput.

For a wider cost view across OpenAI models, use our OpenAI API pricing guide. If the main goal is minimizing model spend across text and image tasks, our cheapest GPT model comparison may be more useful than a DALL-E-only calculation.

Who should still use it

DALL-E 2 still makes sense for a small group of users.

- Developers maintaining an existing DALL-E 2 integration. If your app already uses dall-e-2, keep it stable while you test replacements.

- Teams that need image variations. The variation endpoint remains a specific DALL-E 2 dependency in OpenAI’s API reference.[8]

- Products that need small square drafts. The 256×256 and 512×512 options can be enough for thumbnails, mood boards, placeholders, and internal review.[8]

- Cost-sensitive prototypes. A predictable $0.016 to $0.02 per image can help during early testing.[6]

DALL-E 2 does not make sense for most new buyers or builders. If you are launching a fresh workflow in 2026, start with newer OpenAI image models, compare quality against your target style, and run cost tests with real prompts. If you are deciding between OpenAI and open-source image generation, our DALL-E vs Stable Diffusion comparison covers the trade-offs in control, cost, hosting, and policy constraints.

It is also worth separating image generation from video generation. DALL-E 2 is not a video model. If your end goal is motion, camera movement, or clip generation, look at Sora rather than trying to stretch a still-image model into a video workflow.

A practical migration plan

If you still rely on DALL-E 2, migration should be a controlled engineering task, not a panic rewrite. OpenAI’s image generation guide says DALL-E 2 and DALL-E 3 are deprecated and scheduled to stop being supported on May 12, 2026, so the safest path is to finish testing before that date.[2]

1. Inventory every DALL-E 2 call

Search your codebase for dall-e-2, images.generate, images.edit, and images.createVariation (images.create_variation in the Python SDK). Record the prompt source, output size, response format, image count, and downstream use. This tells you which calls need a direct replacement and which can be retired.
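One way to run that inventory is a short script that walks the repository and records every match. This is a minimal sketch under stated assumptions: the function name `find_image_calls` and the default file suffixes are hypothetical choices, and the pattern includes `images.create_variation`, the Python SDK spelling of the variation call, alongside `images.createVariation`.

```python
import re
from pathlib import Path

# Identifiers to look for; extend this if your code wraps the SDK in its own helpers.
PATTERN = re.compile(
    r"dall-e-2|images\.generate|images\.edit"
    r"|images\.createVariation|images\.create_variation"
)


def find_image_calls(root, suffixes=(".py", ".js", ".ts")):
    """Return (file path, line number, matched text) for each hit under root."""
    hits = []
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        for lineno, line in enumerate(text.splitlines(), start=1):
            for match in PATTERN.finditer(line):
                hits.append((str(path), lineno, match.group(0)))
    return hits
```

Calling `find_image_calls(".")` from the repository root gives a list you can paste into a spreadsheet and annotate with prompt source, output size, and downstream use. A plain text search will miss calls where the model name comes from configuration or environment variables, so check those separately.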

2. Build a prompt test set

Select real prompts, not perfect examples. Include short prompts, long prompts, rejected prompts, prompts that ask for text in the image, and prompts used by paying customers. Generate side-by-side outputs with your replacement model and score them for usefulness, not just beauty.
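A lightweight way to keep those side-by-side reviews honest is to record each human judgment and list the prompts that worked on DALL-E 2 but failed on the replacement. The structure below is only a sketch: the `Review` fields, the category labels, and the function name are hypothetical, and a simple pass/fail judgment stands in for whatever usefulness score your team prefers.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Review:
    prompt: str
    category: str          # e.g. "short", "long", "text-in-image", "customer"
    legacy_ok: bool        # reviewer accepted the DALL-E 2 output
    replacement_ok: bool   # reviewer accepted the replacement model's output


def regressions_by_category(reviews):
    """Group prompts that passed on the legacy model but failed on the replacement."""
    grouped = defaultdict(list)
    for review in reviews:
        if review.legacy_ok and not review.replacement_ok:
            grouped[review.category].append(review.prompt)
    return dict(grouped)
```

Categories with many regressions show where prompts need rewriting before the cutover. Prompts that failed on both models are deliberately left out, since they were never working in the first place.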

3. Separate generation, editing, and variations

Do not assume one replacement route covers every DALL-E 2 feature. OpenAI’s image docs distinguish single-prompt Image API use from conversational image workflows in the Responses API.[2] Variation-heavy workflows may need design changes because the DALL-E 2 variation endpoint is a special case.[8]

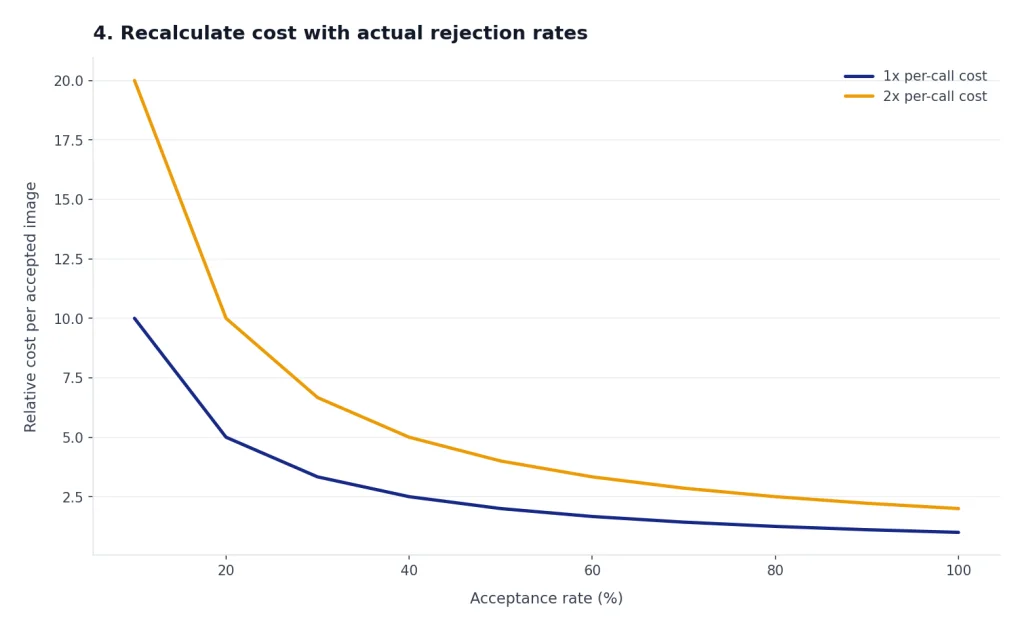

4. Recalculate cost with actual rejection rates

Flat DALL-E 2 pricing can look cheaper than newer token-based routes, but rejected images change the math. Track how many generations users need before accepting an image. A model that costs more per call can still be cheaper if it reduces retries.
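The arithmetic behind that claim is simple enough to sketch. The function names below are hypothetical, and the attempt counts in the usage note are invented purely for illustration; substitute the numbers from your own logs.

```python
def mean_attempts(attempts_per_accepted_image):
    """Average number of generations users needed before accepting an image."""
    if not attempts_per_accepted_image:
        raise ValueError("need at least one logged acceptance")
    return sum(attempts_per_accepted_image) / len(attempts_per_accepted_image)


def cost_per_accepted_image(price_per_image, attempts_per_accept):
    """Effective cost of one accepted image, counting rejected generations."""
    if attempts_per_accept < 1:
        raise ValueError("an accepted image takes at least one attempt")
    return price_per_image * attempts_per_accept
```

As an illustration, if DALL-E 2 at $0.02 per 1024×1024 image needs three attempts on average, each accepted image effectively costs about $0.06. A $0.04 DALL-E 3 standard image that needs 1.25 attempts on average costs about $0.05. Token-based models need an estimated per-image cost as the first argument instead of a list price.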

5. Update user-facing promises

If your product advertises exact square sizes, fast batches, variations, or a specific visual style, update those claims after migration. New image models may improve quality while changing behavior. Users care about the result, not the model name.

Verdict

DALL-E 2 is still worth using in 2026 only as a short-term legacy tool. It remains useful for existing API integrations, simple square image generation, small drafts, and variation workflows. It is not the right foundation for a new product.

The deciding factor is support. OpenAI’s current image guide says DALL-E 2 and DALL-E 3 are deprecated and support stops on May 12, 2026.[2] That turns the question from “Can DALL-E 2 still make images?” into “Is it worth spending engineering time on a model with an approaching support cutoff?” For most teams, the answer is no.

Use DALL-E 2 if it keeps an existing system stable during migration. Avoid it if you are starting from scratch, need reliable text in images, expect multi-turn editing, or want a model choice that will age well past 2026. For the newer option in the same family, read our DALL-E 3 guide next.

Frequently asked questions

Is DALL-E 2 still available in 2026?

Yes, but only as a legacy option. OpenAI’s Help Center says the DALL-E 2 API remains available for backward compatibility, while the image generation guide says DALL-E 2 is deprecated and support stops on May 12, 2026.[5][2] Treat it as a migration bridge, not a long-term choice.

Is DALL-E 2 cheaper than DALL-E 3?

Yes, on listed per-image API pricing. OpenAI’s pricing page lists DALL-E 2 from $0.016 to $0.02 per image, while DALL-E 3 ranges from $0.04 to $0.12 per image depending on size and quality.[6] The cheaper model is not always the better value if it needs more retries.

Can DALL-E 2 make 512×512 images?

Yes. OpenAI’s API reference lists 256×256, 512×512, and 1024×1024 as supported DALL-E 2 sizes.[8] That is one reason some older thumbnail and draft workflows still use it.

Does DALL-E 2 support image variations?

Yes. OpenAI’s API reference says the create image variation endpoint only supports DALL-E 2.[8] The input image must be a square PNG under 4MB, so this is useful but constrained.[8]

Should I build a new app with DALL-E 2?

No, not unless you have a very specific compatibility requirement. OpenAI lists DALL-E 2 as deprecated, and the support cutoff makes it a poor foundation for a new product.[1][2] Start with newer image models and keep DALL-E 2 only for legacy coverage.

What should I use instead of DALL-E 2?

For OpenAI-based image generation, start by testing newer GPT Image models against your real prompts. DALL-E 3 can serve as a comparison point, but it shares the same May 12, 2026 support cutoff, so it is not a long-term replacement.[2] OpenAI describes the newer GPT Image API as offering stronger instruction following, text rendering, detailed editing, and real-world knowledge.[5] If you need non-OpenAI options, compare against Stable Diffusion and other image systems before choosing a production stack.