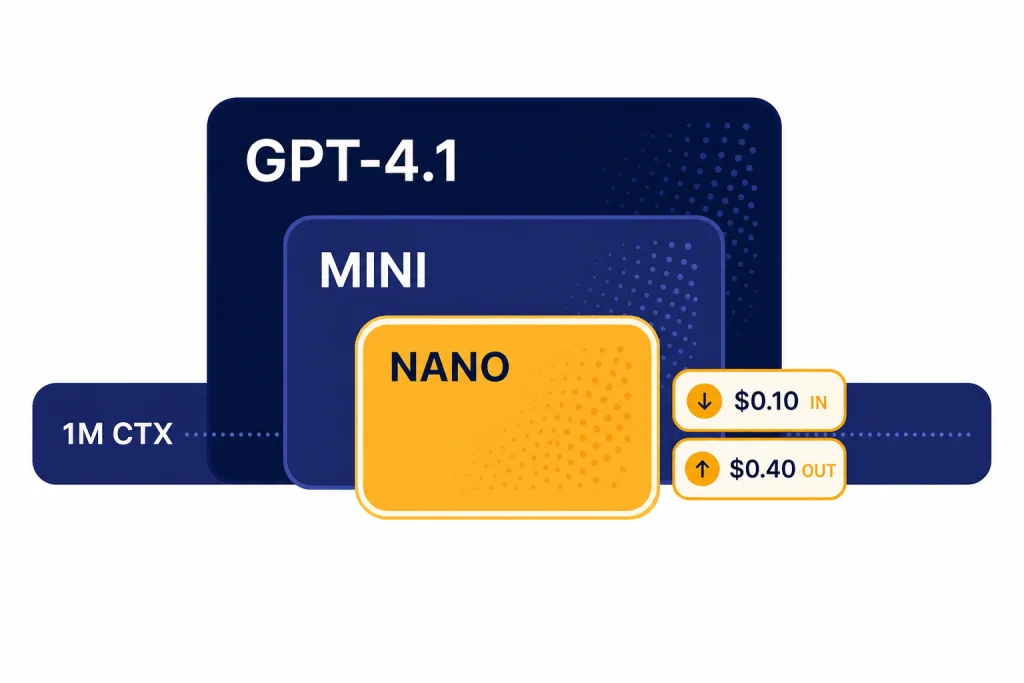

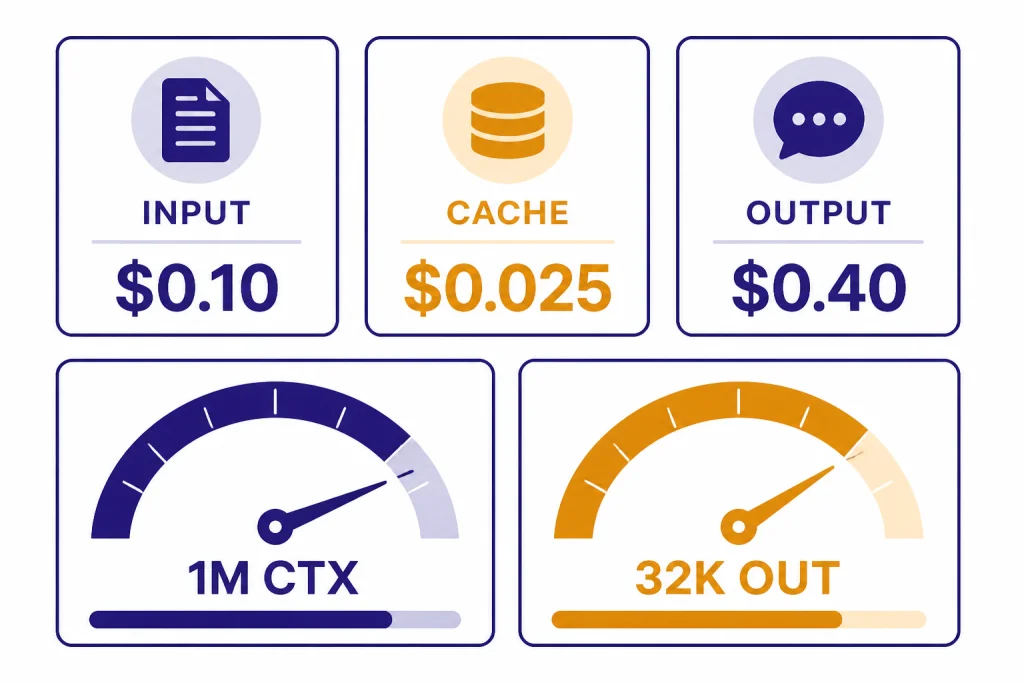

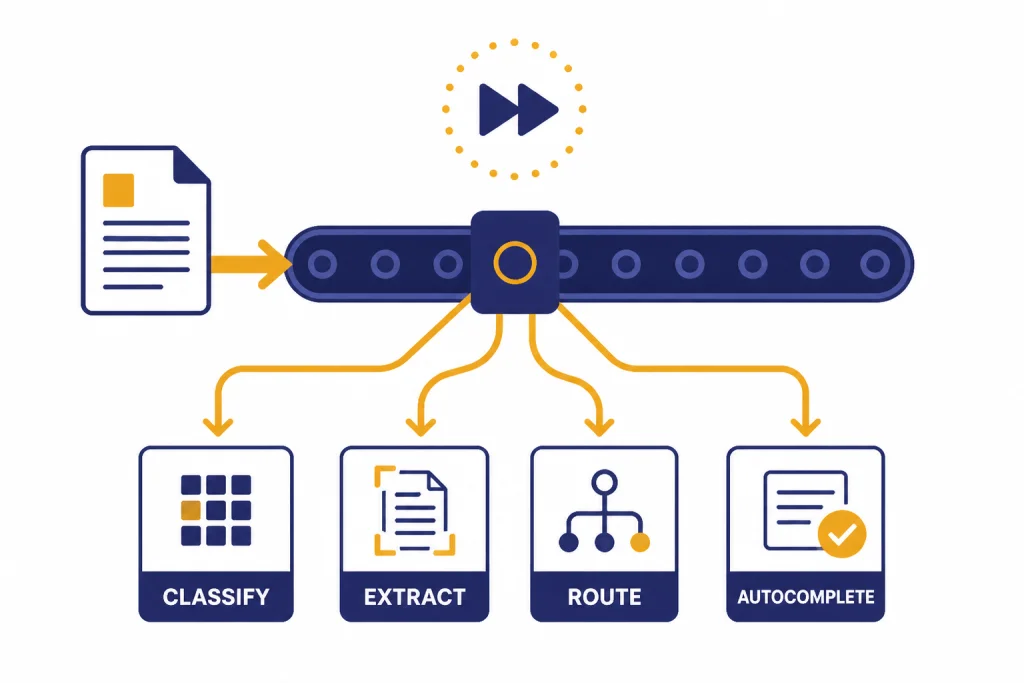

GPT-4.1 nano is the smallest, fastest, and lowest-cost member of OpenAI’s GPT-4.1 family. It is built for API workloads that need quick text responses, image input, tool calling, structured outputs, and large-context handling without paying for the full GPT-4.1 model. OpenAI lists GPT-4.1 nano with a 1,047,576-token context window, 32,768 maximum output tokens, text and image input, text output, and API pricing of $0.10 per 1M input tokens, $0.025 per 1M cached input tokens, and $0.40 per 1M output tokens.[2] It is best for classification, extraction, routing, autocomplete, and simple agent steps, not deep reasoning or difficult coding.

What GPT-4.1 nano is

GPT-4.1 nano is OpenAI’s smallest GPT-4.1 model. OpenAI introduced it with GPT-4.1 and GPT-4.1 mini on April 14, 2025, describing it as the company’s first nano model and as a model aimed at low-latency tasks such as classification and autocompletion.[1] In practical terms, it is the GPT-4.1 family member you choose when the unit economics matter more than maximum intelligence.

The model is an API-first option. OpenAI’s model page lists it for the Responses API, Chat Completions API, Assistants API, Batch API, and other platform endpoints, with support for streaming, function calling, structured outputs, fine-tuning, and predicted outputs.[2] If you are comparing it against the whole catalog, start with our side-by-side comparison of all GPT models and then return here for the nano-specific details.

The key idea is simple. GPT-4.1 nano gives developers a long-context, multimodal-input GPT model at a low token price. It does not try to be the most capable model in the lineup. It is designed to run many small tasks cheaply and quickly.

Specs and pricing

GPT-4.1 nano has an unusually large context window for a small model. OpenAI lists a 1,047,576-token context window and 32,768 maximum output tokens.[2] That makes it useful for large inputs such as product catalogs, policy documents, logs, transcripts, and retrieved document bundles, although a larger context window does not mean the model will reason perfectly over every token. For a wider reference, see our guide to context window sizes for every GPT model.

| Field | GPT-4.1 nano | What it means |
|---|---|---|
| Model family | GPT-4.1[2] | Smallest member of the GPT-4.1 line. |
| Model IDs | gpt-4.1-nano and gpt-4.1-nano-2025-04-14[2] | Use the dated snapshot when you need more stable behavior. |
| Context window | 1,047,576 tokens[2] | Large enough for long documents and retrieval-heavy workflows. |
| Maximum output | 32,768 tokens[2] | Enough for long structured responses, though shorter outputs are usually better for this model. |
| Knowledge cutoff | June 1, 2024[2] | The model does not automatically know later events unless you provide them. |
| Input modalities | Text and image[2] | It can inspect images, but it does not generate images. |
| Output modality | Text[2] | Use a separate image, speech, or video model for other media output. |
| Text token price | $0.10 input, $0.025 cached input, $0.40 output per 1M tokens[2] | Low enough for high-volume classification, extraction, and routing. |

OpenAI’s general API pricing page also lists GPT-4.1 nano at $0.10 per 1M input tokens, $0.025 per 1M cached input tokens, and $0.40 per 1M output tokens, which corroborates the pricing shown on the model page.[3] For a broader cost comparison, use our OpenAI API pricing guide and our separate guide to the cheapest GPT model.
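As a rough worked example of these prices, the sketch below estimates spend for a batch of requests with and without cached input. The workload (one million requests, 2,000 input tokens and 20 output tokens each, with 1,500 input tokens per request served from cache) and the function name `estimate_cost` are illustrative assumptions, not figures from OpenAI. How many tokens actually qualify for the cached rate depends on OpenAI's caching behavior.

```python
# USD per 1M tokens for GPT-4.1 nano, as quoted in this article.
NANO_PRICES = {"input": 0.10, "cached_input": 0.025, "output": 0.40}


def estimate_cost(input_tokens, output_tokens, cached_tokens=0, prices=NANO_PRICES):
    """Estimate USD cost; cached_tokens is the share of input billed at the cached rate."""
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_tokens
    total = (
        uncached * prices["input"]
        + cached_tokens * prices["cached_input"]
        + output_tokens * prices["output"]
    )
    return total / 1_000_000


# Hypothetical workload: 1,000,000 requests, 2,000 input and 20 output tokens each.
n_requests = 1_000_000
no_cache = estimate_cost(2_000 * n_requests, 20 * n_requests)
# Same workload, assuming 1,500 of the 2,000 input tokens per request hit the cache.
with_cache = estimate_cost(
    2_000 * n_requests, 20 * n_requests, cached_tokens=1_500 * n_requests
)

print(f"without caching: ${no_cache:.2f}")
print(f"with caching:    ${with_cache:.2f}")
```

Under these assumptions the batch comes to about $208 without caching and about $95.50 with it, which shows how much of the bill a shared prompt prefix can account for.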

Why the nano size matters

The word “nano” signals a smaller model built for speed and cost control. OpenAI describes GPT-4.1 nano as the fastest and most cost-efficient version of GPT-4.1, and its model page rates the model as very fast with average intelligence.[2] That positioning matters because many production AI systems do not need a top model for every step.

Consider a support platform. A user message might first need language detection, intent classification, safety screening, account-type extraction, and routing to the right workflow. Those steps need consistency and low latency. They do not always need a heavy reasoning model. GPT-4.1 nano fits that first-pass layer.

The same pattern applies to internal search, sales operations, content moderation queues, spreadsheet enrichment, and agentic systems. A small model can label, filter, normalize, and route. A larger model can handle the few cases that remain ambiguous or high value. This division is often cheaper and easier to monitor than sending every request to a frontier model.

Latency also changes product design. A model that returns quickly can sit inside autocomplete, inline editing, form validation, and live triage experiences. If speed is your main criterion, compare this model against our fastest GPT model guide before you standardize.

Benchmarks and capability tradeoffs

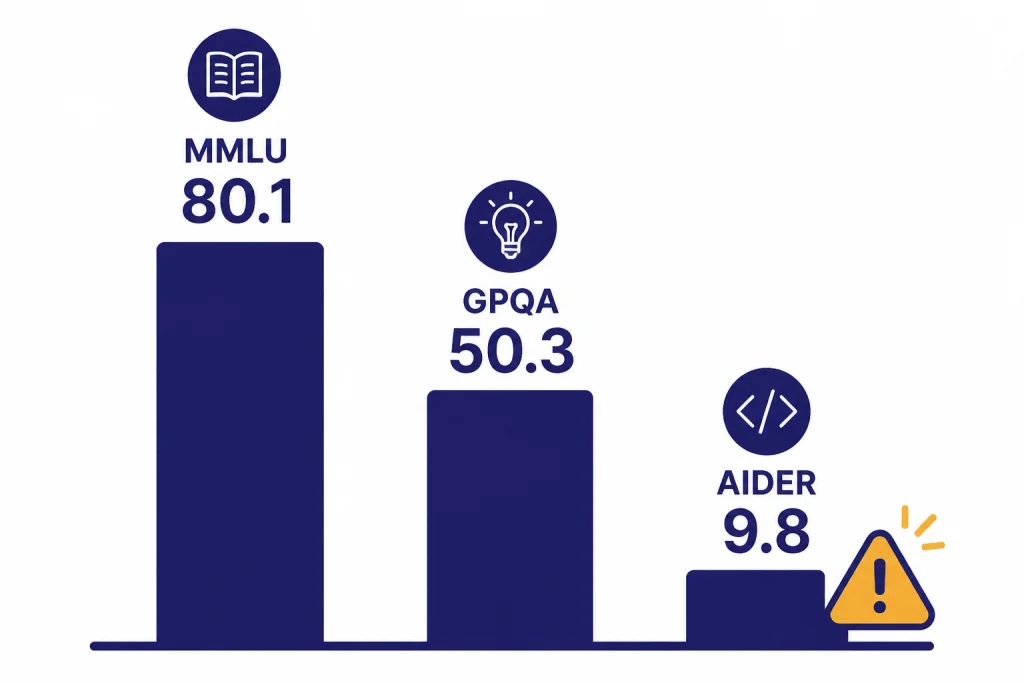

OpenAI’s launch post reported GPT-4.1 nano scores of 80.1% on MMLU, 50.3% on GPQA Diamond, and 9.8% on Aider’s polyglot coding benchmark.[1] Those numbers show the basic profile: strong enough for many language and knowledge tasks, much weaker than larger models on difficult coding and complex reasoning.

| Benchmark or capability | GPT-4.1 nano result | Reader takeaway |
|---|---|---|
| MMLU | 80.1%[1] | Solid general academic knowledge for a small model. |
| GPQA Diamond | 50.3%[1] | Useful, but not a substitute for a top reasoning model on hard science questions. |
| Aider polyglot coding | 9.8%[1] | Not the right default for serious coding agents. |
| MMMU vision benchmark | 55.4%[1] | Can handle some image understanding, but vision-heavy tasks may need a stronger model. |
| MultiChallenge | 15.0% with the default grader and 31.1% with an o3-mini grader[1] | Instruction-following quality depends on task complexity and evaluation method. |

The benchmark spread is more important than any single score. GPT-4.1 nano can classify, extract, summarize, and follow simple formats well enough for many workflows. It should not be your first pick for multi-file software changes, advanced math, legal analysis, medical interpretation, or tasks where an incorrect answer has a high cost. For code-heavy work, compare it with our best GPT model for coding guide.

OpenAI also lists GPT-4.1 nano as supporting function calling and structured outputs.[2] That makes it more useful than a cheap free-form text generator. You can ask it to return JSON, choose a tool, or populate a schema. Still, you should validate outputs server-side. Small models can be overconfident, especially when the prompt contains competing instructions or messy retrieved text.
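Server-side validation can be very small. The sketch below checks a reply that is expected to be a JSON object with a single `intent` field. The label set and the function name `parse_intent_reply` are hypothetical, and the reply is passed in as a plain string so the example runs without any API call.

```python
import json

# Hypothetical label set for a support-ticket classifier.
ALLOWED_INTENTS = {"billing", "technical_support", "sales", "other"}


def parse_intent_reply(raw_reply):
    """Parse and validate a model reply expected to look like {"intent": "billing"}.

    Raises ValueError on anything malformed so the caller can retry or escalate.
    """
    try:
        data = json.loads(raw_reply)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    intent = data.get("intent")
    if not isinstance(intent, str) or intent not in ALLOWED_INTENTS:
        raise ValueError(f"unexpected intent: {intent!r}")
    # Return only the validated field and drop anything else the model added.
    return {"intent": intent}


print(parse_intent_reply('{"intent": "billing", "note": "ignored"}'))
```

Rejecting unknown labels outright, rather than mapping them to a default, keeps silent misclassifications out of downstream systems.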

Best use cases

GPT-4.1 nano works best when the task is bounded, repeatable, and easy to verify. It is a production utility model. Treat it like a fast specialist for small decisions, not like a universal assistant.

- Classification. Label support tickets, sales leads, reviews, abuse reports, search queries, and document types.

- Extraction. Pull names, dates, categories, amounts, entities, or checklist fields from text or image inputs.

- Routing. Decide which model, tool, queue, or workflow should receive the next request.

- Autocomplete. Suggest short completions inside forms, search boxes, code comments, and internal tools.

- Format repair. Convert messy text into structured JSON, normalized CSV fields, or policy-compliant templates.

- Large-context scanning. Read long provided context and return a short answer, tag, or extracted set of fields.

For writing, GPT-4.1 nano is usually better as an assistant to the writing pipeline than as the final author. It can create briefs, classify tone, enforce style rules, or produce short snippets. For polished long-form drafting, compare it with our best GPT model for writing.

For vision, remember the boundary. GPT-4.1 nano accepts image input and returns text output.[2] It can inspect screenshots, receipts, forms, product images, charts, and scanned documents. It cannot generate a new image. If the job is visual generation, you need a dedicated image model rather than this GPT-4.1 variant.

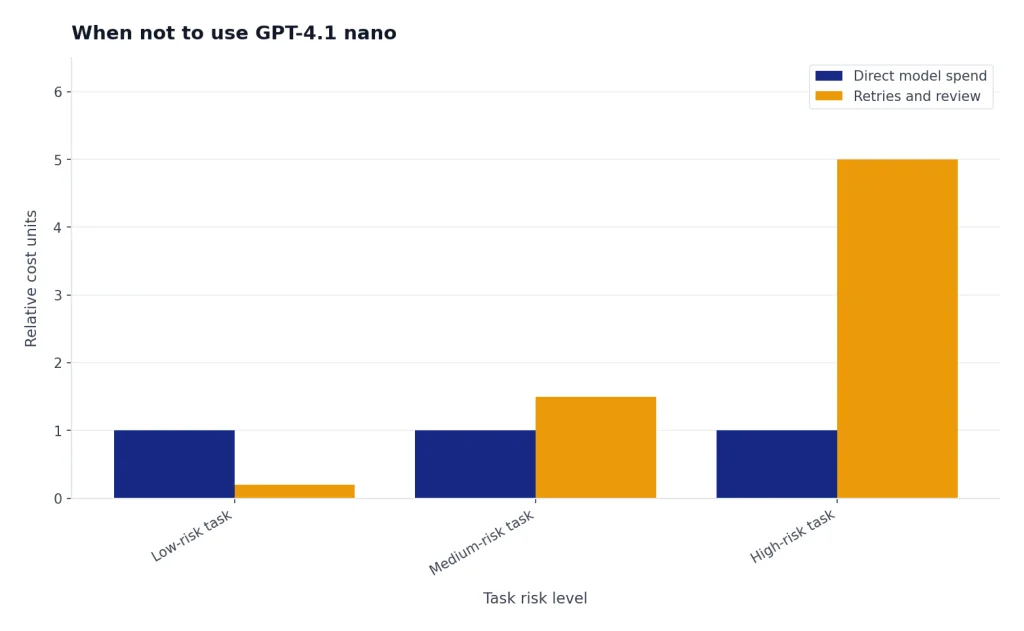

When not to use GPT-4.1 nano

Do not choose GPT-4.1 nano only because it is cheap. The wrong small model can create hidden costs through retries, escalations, manual review, and user frustration. Use it when the task can tolerate occasional uncertainty or when you can route uncertain cases to a stronger model.

Avoid GPT-4.1 nano for hard reasoning. OpenAI’s own benchmark table shows a large gap between GPT-4.1 nano and the larger GPT-4.1 model on several difficult tasks, including Aider’s polyglot coding benchmark and long-context graph tasks.[1] If the model must solve complex logic problems, plan multi-step actions, or debug unfamiliar code, start with a stronger GPT or an o-series reasoning model such as the models covered in our OpenAI o1-mini guide.

Avoid it for final answers in high-stakes domains unless you add expert review and strict validation. That includes medical, legal, financial, security, and compliance decisions. GPT-4.1 nano can help with triage or formatting in those settings, but it should not be the final authority.

Avoid it when style is the product. If you sell prose, analysis, creative ideation, or premium customer support, the savings may not justify a weaker answer. A small model can prepare data for a larger model, but the final response may need more expressive range.

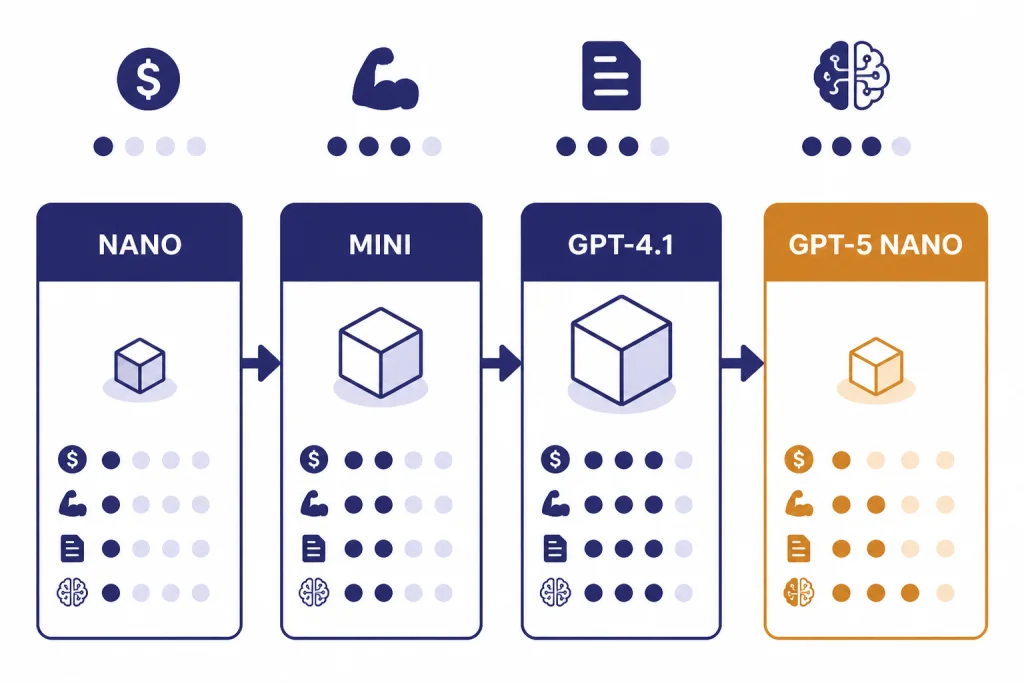

How to choose between GPT-4.1 nano and nearby models

The closest alternatives are GPT-4.1 mini, GPT-4.1, and GPT-5 nano. GPT-4.1 mini costs $0.40 per 1M input tokens and $1.60 per 1M output tokens, while GPT-4.1 costs $2.00 per 1M input tokens and $8.00 per 1M output tokens.[4][5] GPT-5 nano costs $0.05 per 1M input tokens and $0.40 per 1M output tokens, with a 400,000-token context window and 128,000 maximum output tokens.[6]

| Choose this model | When it fits | Tradeoff |
|---|---|---|
| GPT-4.1 nano | You need the lowest-cost GPT-4.1 variant with a 1,047,576-token context window.[2] | Lower reasoning and coding strength than larger models. |
| GPT-4.1 mini | You want a stronger GPT-4.1 small model and can pay $0.40 input and $1.60 output per 1M tokens.[4] | Higher cost than nano. |
| GPT-4.1 | You need the strongest non-reasoning GPT-4.1 option and can pay $2.00 input and $8.00 output per 1M tokens.[5] | Much higher cost for high-volume utility tasks. |
| GPT-5 nano | You want a newer nano option with $0.05 input pricing and reasoning token support.[6] | Smaller 400,000-token context window than GPT-4.1 nano.[6] |

A sensible default is to test GPT-4.1 nano first on tasks with clear labels or schemas. Move to GPT-4.1 mini if it misses too many cases. Move to GPT-4.1 or a reasoning model when the task requires deeper judgment. Move to GPT-5 nano if its newer model behavior and lower input price fit your workload better than GPT-4.1 nano’s larger context window.[2][6]
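To see what moving up or across the lineup does to a bill, the sketch below prices one workload against the four models using the per-token prices quoted in this section. The workload size (1 billion input tokens and 50 million output tokens) is a hypothetical figure, and the calculation ignores cached-input discounts and any difference in how many output tokens each model actually produces.

```python
# USD per 1M tokens as (input, output), taken from the prices quoted in this section.
PRICES = {
    "GPT-4.1 nano": (0.10, 0.40),
    "GPT-4.1 mini": (0.40, 1.60),
    "GPT-4.1": (2.00, 8.00),
    "GPT-5 nano": (0.05, 0.40),
}


def workload_cost(model, input_tokens, output_tokens):
    """Return the estimated USD cost of a workload on the named model."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload: 1B input tokens and 50M output tokens.
costs = {model: workload_cost(model, 1_000_000_000, 50_000_000) for model in PRICES}

# Print cheapest first.
for model, cost in sorted(costs.items(), key=lambda item: item[1]):
    print(f"{model:<14} ${cost:,.2f}")
```

For this assumed workload the totals are roughly $70 for GPT-5 nano, $120 for GPT-4.1 nano, $480 for GPT-4.1 mini, and $2,400 for GPT-4.1, so each escalation step should be justified by a measurable quality gain.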

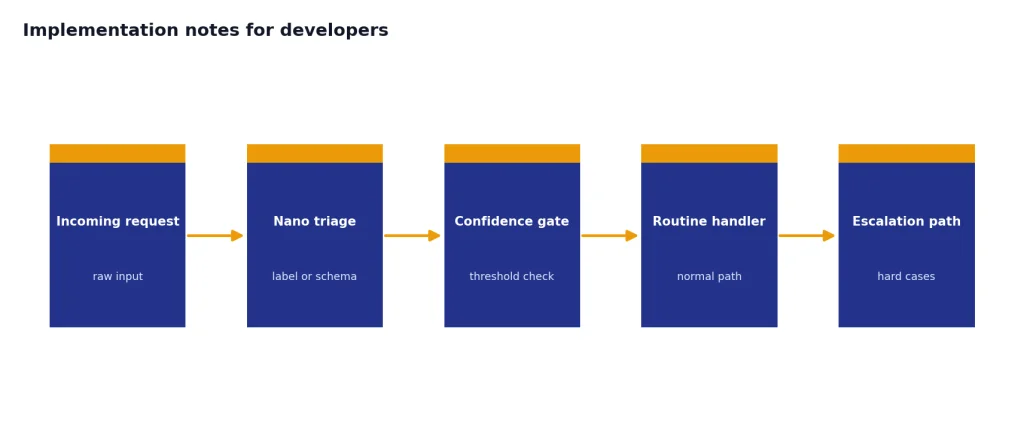

Implementation notes for developers

Use GPT-4.1 nano with narrow prompts. Define the allowed labels, the output schema, and the escalation rule. Small models perform better when the task boundary is explicit.

- Prefer structured outputs. Ask for a fixed schema instead of a paragraph when you need production data.

- Cache repeated context. GPT-4.1 nano cached input is priced at $0.025 per 1M tokens, lower than its standard $0.10 input price.[2]

- Use confidence routing. Ask the model to return a confidence band or reason code, then escalate low-confidence cases.

- Keep outputs short. The model can output up to 32,768 tokens, but utility tasks should usually return compact JSON or short text.[2]

- Pin snapshots for stability. OpenAI lists the gpt-4.1-nano-2025-04-14 snapshot for GPT-4.1 nano.[2]

- Evaluate on your own data. Benchmarks help, but routing, extraction, and classification quality depends on your labels and examples.
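Several of these notes can be combined in a single request. The sketch below only builds the arguments for a Chat Completions call: a pinned snapshot, a strict JSON schema with a confidence band, and a small output cap. The label set, schema name, and prompt wording are hypothetical, and the `response_format` shape reflects OpenAI's structured outputs feature as generally documented, so check it against the current API reference before relying on it.

```python
import json

# Hypothetical label set and confidence bands for a ticket classifier.
LABELS = ["billing", "technical_support", "sales", "safety_review", "escalate"]
CONFIDENCE_BANDS = ["low", "medium", "high"]

TICKET_SCHEMA = {
    "type": "object",
    "properties": {
        "label": {"type": "string", "enum": LABELS},
        "confidence": {"type": "string", "enum": CONFIDENCE_BANDS},
    },
    "required": ["label", "confidence"],
    "additionalProperties": False,
}


def build_request(ticket_text):
    """Build keyword arguments for a Chat Completions call (no network access here)."""
    return {
        "model": "gpt-4.1-nano-2025-04-14",  # pinned snapshot for stable behavior
        "messages": [
            {
                "role": "system",
                "content": "Classify the support ticket. Use 'escalate' when unsure.",
            },
            {"role": "user", "content": ticket_text},
        ],
        "response_format": {
            "type": "json_schema",
            "json_schema": {
                "name": "ticket_label",
                "strict": True,
                "schema": TICKET_SCHEMA,
            },
        },
        "max_completion_tokens": 50,  # compact JSON needs very few tokens
    }


request = build_request("I was charged twice this month.")
print(json.dumps(request, indent=2))
# Assuming the official `openai` package and an API key are configured, these
# arguments could be passed as: OpenAI().chat.completions.create(**request)
```

Keeping the request builder separate from the network call makes it easy to unit-test prompts and schemas without spending tokens.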

For a simple routing design, send every user request to GPT-4.1 nano first. The model returns a route such as billing, technical support, sales, safety review, or escalate. A deterministic service then sends the request to the correct handler. This keeps the expensive model calls for cases that need them.
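The deterministic half of that design can be sketched without any model call. In the example below the model's parsed reply is passed in as a dictionary, the route names mirror the ones listed above, and the handlers are stubs. The `confidence` field and the rule that low confidence or unknown labels go to `escalate` are assumptions for illustration.

```python
ROUTES = {"billing", "technical_support", "sales", "safety_review", "escalate"}


def resolve_route(model_output):
    """Turn a parsed model reply into a route, escalating anything doubtful."""
    if not isinstance(model_output, dict):
        return "escalate"
    label = model_output.get("label")
    confidence = model_output.get("confidence")
    if not isinstance(label, str) or label not in ROUTES:
        return "escalate"
    if confidence == "low":
        return "escalate"
    return label


def dispatch(request_text, model_output, handlers):
    """Send the request to the handler registered for the resolved route."""
    route = resolve_route(model_output)
    return handlers[route](request_text)


# Stub handlers standing in for real queues or services.
handlers = {route: (lambda text, r=route: f"[{r}] {text}") for route in ROUTES}

print(dispatch("I was charged twice.", {"label": "billing", "confidence": "high"}, handlers))
print(dispatch("Something odd happened.", {"label": "refunds", "confidence": "high"}, handlers))
```

The first call is handled by the billing stub, while the second falls through to `escalate` because `refunds` is not a known route. Keeping this mapping in ordinary code means a bad model reply can never invent a new destination.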

For document extraction, use a schema with required fields and a separate unknown value. Do not force the model to guess. If the document lacks a field, the model should say so in the schema. This one design choice often improves reliability more than switching models.
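One way to express that rule is a schema in which every field is required but may carry an explicit sentinel. The sketch below assumes a hypothetical invoice schema, a literal `"unknown"` sentinel, and a helper named `split_known_unknown`. The extraction result is passed in as a dictionary so the example runs offline.

```python
UNKNOWN = "unknown"

# Hypothetical invoice fields; the prompt would instruct the model to return
# the string "unknown" for any field the document does not contain.
INVOICE_FIELDS = ("vendor_name", "invoice_date", "total_amount", "currency")

INVOICE_SCHEMA = {
    "type": "object",
    "properties": {name: {"type": "string"} for name in INVOICE_FIELDS},
    "required": list(INVOICE_FIELDS),
    "additionalProperties": False,
}


def split_known_unknown(extraction):
    """Separate usable values from fields the model marked unknown or left out."""
    known = {}
    missing = []
    for name in INVOICE_FIELDS:
        value = extraction.get(name, UNKNOWN)
        if isinstance(value, str) and value.strip() and value.strip().lower() != UNKNOWN:
            known[name] = value.strip()
        else:
            missing.append(name)
    return known, missing


known, missing = split_known_unknown(
    {"vendor_name": "Acme Ltd", "invoice_date": "unknown", "total_amount": "120.50"}
)
print("known:", known)
print("needs review:", missing)
```

Fields that land in the second list can go to manual review or a stronger model instead of being silently filled with a guess.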

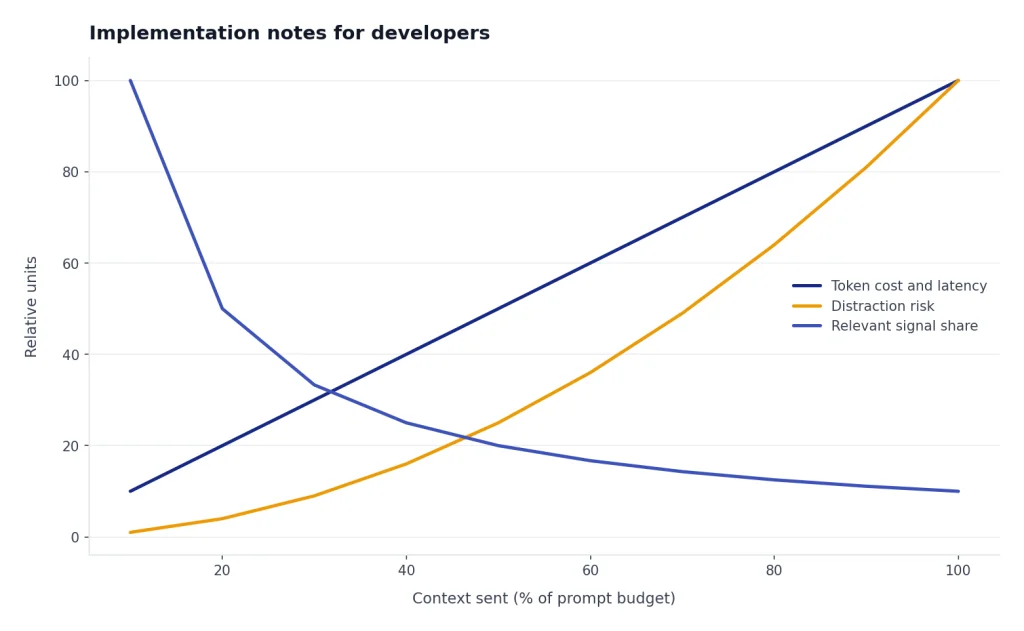

For long-context work, chunking may still help. Even with a 1,047,576-token context window, you should not send irrelevant material just because it fits.[2] Smaller, cleaner context reduces cost, latency, and distraction.
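A minimal sketch of that trimming step follows. It ranks chunks by naive word overlap with the question and keeps the best ones within a character budget. Both choices are stand-ins: production systems usually rely on embeddings or a search index for relevance, and a character count is only a rough proxy for tokens. The function names and the default budget are hypothetical.

```python
import re


def words(text):
    """Lowercase a string and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_chunks(question, chunks, max_chars=8000):
    """Keep the chunks that share the most words with the question, within a budget."""
    question_words = words(question)
    scored = [
        (len(question_words & words(chunk)), index, chunk)
        for index, chunk in enumerate(chunks)
    ]
    # Highest overlap first; ties keep their original document order.
    scored.sort(key=lambda item: (-item[0], item[1]))

    selected = []
    used = 0
    for score, index, chunk in scored:
        if score == 0:
            break  # everything after this has no overlap at all
        if used + len(chunk) > max_chars:
            continue  # skip chunks that would exceed the budget
        selected.append((index, chunk))
        used += len(chunk)
    # Restore document order so the model reads the context in sequence.
    return [chunk for _, chunk in sorted(selected)]


chunks = [
    "Refund policy: refunds are issued within 30 days.",
    "Office hours are 9 to 5.",
    "Shipping takes 3 days.",
]
print(select_chunks("What is the refund policy?", chunks))
```

Here only the refund chunk survives, so the request carries one short passage instead of the whole document set.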

Frequently asked questions

Is GPT-4.1 nano the same as GPT-4.1?

No. GPT-4.1 nano is the smallest GPT-4.1 variant, while GPT-4.1 is the larger model in the family. OpenAI lists GPT-4.1 nano as the fastest and most cost-efficient GPT-4.1 version, and GPT-4.1 as the smartest non-reasoning model in that line.[2][5]

What is GPT-4.1 nano best for?

It is best for classification, extraction, routing, autocomplete, simple summarization, and structured formatting. OpenAI’s launch post specifically called out classification and autocompletion as good fits.[1] Use it where speed and cost matter more than maximum reasoning depth.

How much does GPT-4.1 nano cost?

OpenAI lists GPT-4.1 nano at $0.10 per 1M input tokens, $0.025 per 1M cached input tokens, and $0.40 per 1M output tokens.[2] The OpenAI API pricing page shows the same standard text-token prices.[3]

Does GPT-4.1 nano support images?

Yes, GPT-4.1 nano supports text and image input, with text output.[2] That means it can analyze an image and answer in text. It does not generate images.

Is GPT-4.1 nano good for coding?

Only for light coding-adjacent tasks. OpenAI reported a 9.8% result for GPT-4.1 nano on Aider’s polyglot coding benchmark, far below the larger GPT-4.1 model’s 51.6% result on the same table.[1] Use it for code labeling, simple explanations, or routing, not serious code repair.

Should I use GPT-4.1 nano or GPT-5 nano?

Test both if your workload is cost-sensitive. GPT-5 nano has lower input pricing at $0.05 per 1M input tokens and supports reasoning tokens, while GPT-4.1 nano has a larger 1,047,576-token context window.[6][2] The better choice depends on whether your task needs newer reasoning behavior or larger context.