GPT-4o mini is no longer the automatic answer if you want the cheapest OpenAI model, but it remains a practical budget model for high-volume, focused tasks. It launched as OpenAI’s cost-efficient small model with a 128,000-token context window, text and image inputs, and low per-token pricing.[1][2] In 2026, newer models such as GPT-5 nano and GPT-4.1 nano undercut it on both input and output price, while GPT-4.1 mini and o4-mini offer stronger alternatives for tool use, long context, or reasoning.[3][4][5][6][7] The short answer: GPT-4o mini is still good, but it is no longer the clear budget winner.

What GPT-4o mini is

GPT-4o mini is a smaller, lower-cost member of OpenAI’s GPT-4o family. OpenAI introduced it on July 18, 2024, as a fast and affordable model for common text, vision, and structured-output tasks.[1] It was a major step up from GPT-3.5-era budget models because it paired lower cost with stronger benchmark performance and a much larger context window.[1]

The model accepts text and image inputs and produces text outputs. OpenAI’s model documentation lists support for streaming, function calling, Structured Outputs, fine-tuning, distillation, and predicted outputs for GPT-4o mini.[2] That combination made it useful for builders who needed reliable extraction, classification, summarization, light coding help, and fast support replies without paying for a flagship model on every request.

It also had an important product role. At launch, OpenAI said ChatGPT Free, Plus, and Team users would be able to access GPT-4o mini in place of GPT-3.5, with Enterprise access following the next week.[1] This article focuses on the API model and developer decision-making, because ChatGPT model availability changes more often than API model documentation.

If you are comparing the whole lineup, start with our side-by-side comparison of all GPT models. If your decision is mostly about prompt size, pair this article with our context window comparison.

Pricing and specs

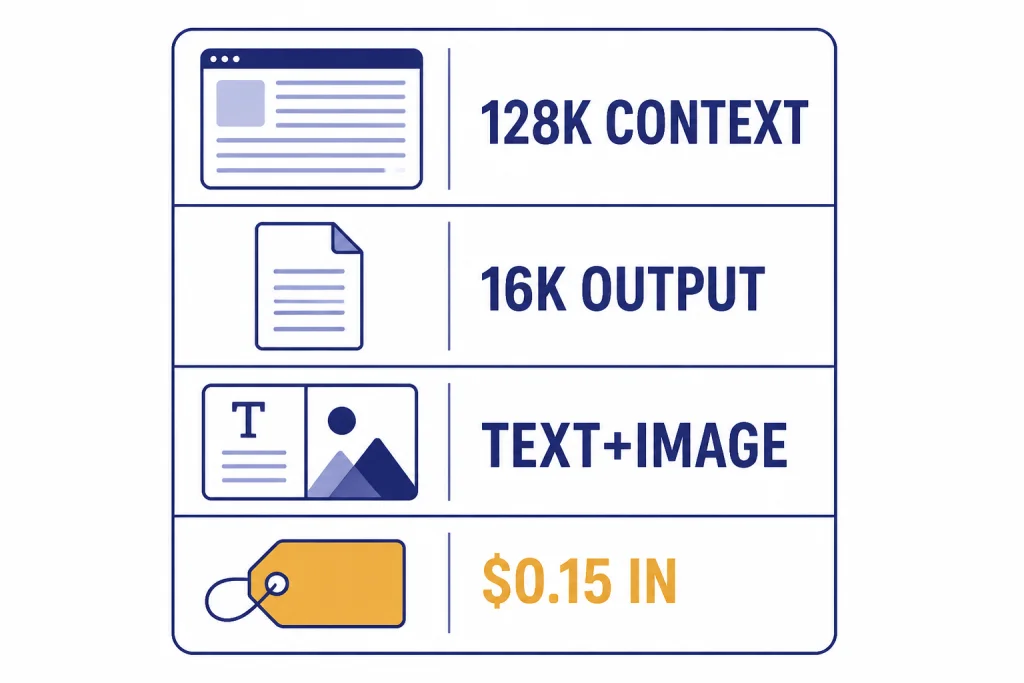

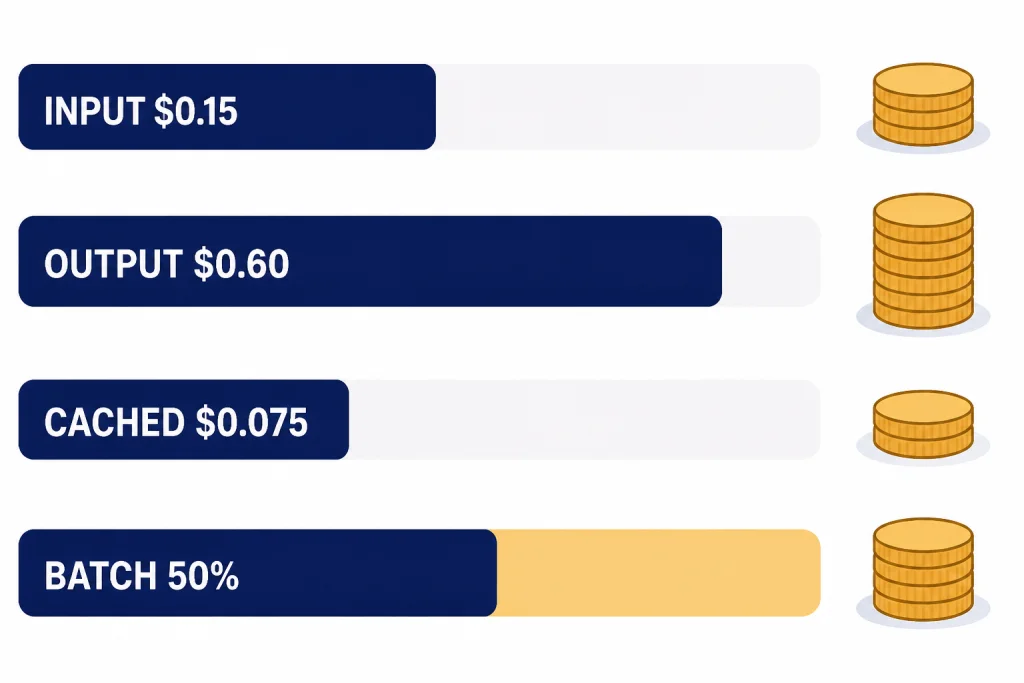

GPT-4o mini’s standard API price is $0.15 per 1 million input tokens, $0.075 per 1 million cached input tokens, and $0.60 per 1 million output tokens.[3] OpenAI’s launch post listed the same $0.15 input and $0.60 output prices, and an independent model directory also lists those prices for the July 18, 2024 snapshot.[1][8]

The model has a 128,000-token context window and a 16,384-token maximum output length in OpenAI’s model documentation.[2] OpenAI’s launch materials gave the same 128,000-token context window and described the output limit as up to 16K tokens per request.[1] OpenAI lists the model’s knowledge cutoff as October 1, 2023.[2]

| Spec | GPT-4o mini | Why it matters |
|---|---|---|
| Input price | $0.15 per 1M tokens | Low cost for large batches of prompts.[3] |
| Cached input price | $0.075 per 1M tokens | Useful when many requests share a long system prompt or reference block.[3] |
| Output price | $0.60 per 1M tokens | Still inexpensive, but not the lowest output price in OpenAI’s current lineup.[3] |
| Context window | 128,000 tokens | Large enough for long transcripts, many documents, or big support histories.[2] |
| Maximum output | 16,384 tokens | Enough for long reports, code files, and structured exports.[2] |
| Inputs | Text and image | Works for low-cost visual question answering and OCR-like workflows.[2] |
| Outputs | Text | Not an image, audio, or video generation model.[2] |

For a concrete cost example, a workload with 10 million input tokens and 2 million output tokens would cost about $2.70 on GPT-4o mini at standard prices. The same token mix would cost about $45.00 on GPT-4o at standard prices, using OpenAI’s listed $2.50 input and $10.00 output rates for GPT-4o.[3] That difference is why GPT-4o mini became popular for high-volume tasks that do not need the strongest model.
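The arithmetic behind that comparison can be sketched in a few lines of Python. The prices are the ones quoted in this article. The function name, the dictionary layout, and the fallback for models without a listed cached price are illustrative assumptions, not part of any OpenAI library.

```python
# Prices in USD per 1 million tokens, copied from the figures quoted in this article.
PRICES = {
    "gpt-4o-mini": {"input": 0.15, "cached_input": 0.075, "output": 0.60},
    "gpt-4o": {"input": 2.50, "output": 10.00},
}

def estimate_cost(model, input_tokens, output_tokens, cached_tokens=0):
    """Estimate the USD cost of a token mix at standard (non-batch) prices."""
    p = PRICES[model]
    # Assumption: if no cached price is listed here, bill cached tokens at the full input rate.
    cached_rate = p.get("cached_input", p["input"])
    uncached_tokens = input_tokens - cached_tokens
    total = (
        uncached_tokens * p["input"]
        + cached_tokens * cached_rate
        + output_tokens * p["output"]
    )
    return round(total / 1_000_000, 2)

mini = estimate_cost("gpt-4o-mini", 10_000_000, 2_000_000)
flagship = estimate_cost("gpt-4o", 10_000_000, 2_000_000)
print(f"GPT-4o mini: ${mini:.2f}  GPT-4o: ${flagship:.2f}")
```

With no cached tokens, this reproduces the $2.70 and $45.00 figures. Passing a non-zero `cached_tokens` value shows how much a shared system prompt could save at the cached input rate.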

Batch processing can lower the bill further when you do not need an immediate response. OpenAI says the Batch API offers 50% lower costs and a 24-hour turnaround for asynchronous request groups.[9] For more detailed pricing across the platform, see our OpenAI API pricing breakdown and our separate guide to the cheapest GPT model.
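As a rough illustration, the sketch below applies the 50% batch discount to a standard-price estimate such as the $2.70 example workload. It assumes the discount applies uniformly to the whole bill, and the function name is hypothetical.

```python
def batch_cost(standard_cost_usd, discount=0.50):
    """Apply a flat batch discount to a cost computed at standard prices.

    Assumption: the discount covers input and output tokens equally.
    """
    return round(standard_cost_usd * (1 - discount), 2)

print(f"Batch estimate: ${batch_cost(2.70):.2f}")
```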

How GPT-4o mini compares with newer budget models

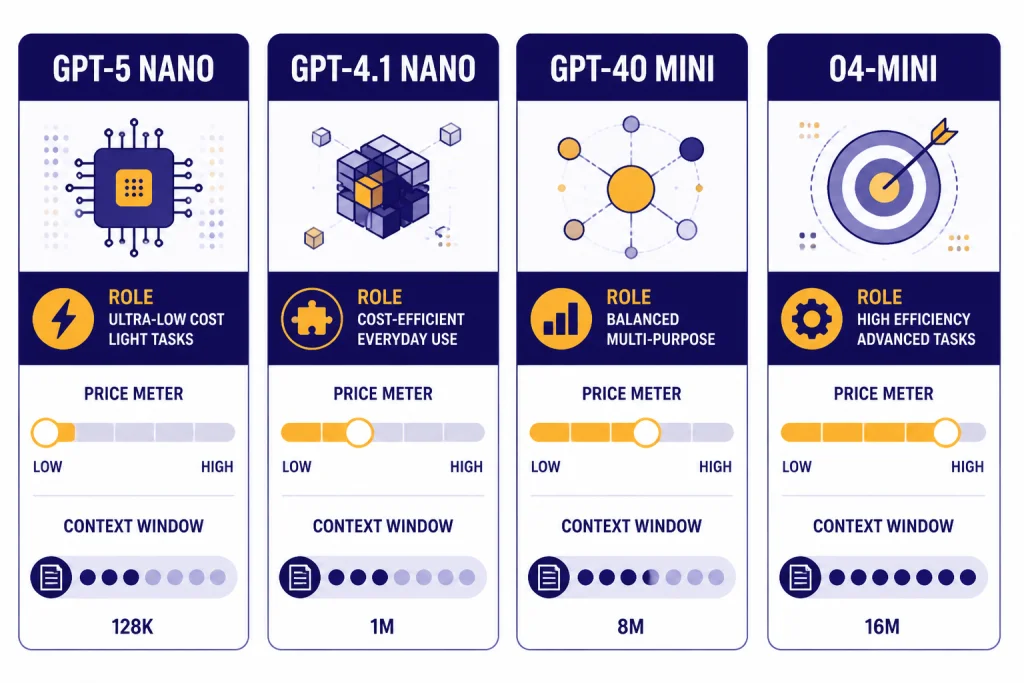

The budget question changed after GPT-4o mini launched. GPT-5 nano is now listed by OpenAI as the fastest and cheapest version of GPT-5, with $0.05 input, $0.005 cached input, and $0.40 output pricing per 1 million tokens.[4] GPT-4.1 nano also undercuts GPT-4o mini on input and output price, with $0.10 input, $0.025 cached input, and $0.40 output pricing per 1 million tokens.[5]

That does not make GPT-4o mini useless. It means the right budget model depends on the workload. GPT-4o mini is a sensible choice when you already have stable prompts, measured quality, and production behavior that meets your needs. GPT-5 nano is a better first test when your main goal is the lowest possible token cost. GPT-4.1 nano and GPT-4.1 mini are stronger candidates when you need long context and precise instruction following without a reasoning step.[5][6]

| Model | Best budget role | Input / output price | Context window | Main tradeoff |
|---|---|---|---|---|
| GPT-4o mini | Stable low-cost general tasks with text and image input | $0.15 / $0.60 per 1M tokens | 128,000 tokens | Not the cheapest current option.[2][3] |
| GPT-5 nano | Lowest-cost summarization and classification | $0.05 / $0.40 per 1M tokens | 400,000 tokens | Smaller GPT-5 tier; test quality before replacing stronger models.[4] |
| GPT-4.1 nano | Low-cost instruction following and tool calls | $0.10 / $0.40 per 1M tokens | 1,047,576 tokens | May be weaker than larger models on nuanced tasks.[5] |
| GPT-4.1 mini | Better small-model quality with long context | $0.40 / $1.60 per 1M tokens | 1,047,576 tokens | Costs more than GPT-4o mini.[6] |
| o4-mini | Reasoning, coding, and visual tasks where extra thinking helps | $1.10 / $4.40 per 1M tokens | 200,000 tokens | Much more expensive than GPT-4o mini.[7] |
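To see how the table's prices play out for a specific workload, a small sketch like the following can rank the models by estimated cost. The prices come from the table above. The function name is hypothetical, and the calculation assumes every model consumes and produces the same number of tokens, which may not hold for a reasoning model that generates extra tokens while thinking.

```python
# (input, output) prices in USD per 1M tokens, taken from the comparison table.
TABLE_PRICES = {
    "GPT-4o mini": (0.15, 0.60),
    "GPT-5 nano": (0.05, 0.40),
    "GPT-4.1 nano": (0.10, 0.40),
    "GPT-4.1 mini": (0.40, 1.60),
    "o4-mini": (1.10, 4.40),
}

def workload_costs(input_millions, output_millions):
    """Return {model: estimated USD cost}, ordered from cheapest to most expensive."""
    costs = {
        name: round(inp * input_millions + out * output_millions, 2)
        for name, (inp, out) in TABLE_PRICES.items()
    }
    return dict(sorted(costs.items(), key=lambda item: item[1]))

# Example: 10M input tokens and 2M output tokens.
for name, cost in workload_costs(10, 2).items():
    print(f"{name:<13} ${cost:.2f}")
```

Token price is only one input to the decision. The quality and retry costs discussed later in this article can change the ranking.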

Benchmarks support the idea that GPT-4o mini was strong for its original class. OpenAI said it scored 82.0% on MMLU, 87.0% on MGSM, 87.2% on HumanEval, and 59.4% on MMMU at launch.[1] Those results were impressive for a small low-cost model in 2024, but they should not be treated as proof that it beats every newer budget option in 2026.

If speed matters more than small differences in answer quality, compare it with our fastest GPT model guide. If the task is code-heavy, check the best GPT model for coding before defaulting to GPT-4o mini.

Best use cases for GPT-4o mini

GPT-4o mini works best when the task is bounded, repeatable, and easy to evaluate. It is not the model to choose when every answer needs deep reasoning. It is the model to test when you need many acceptable answers at low cost.

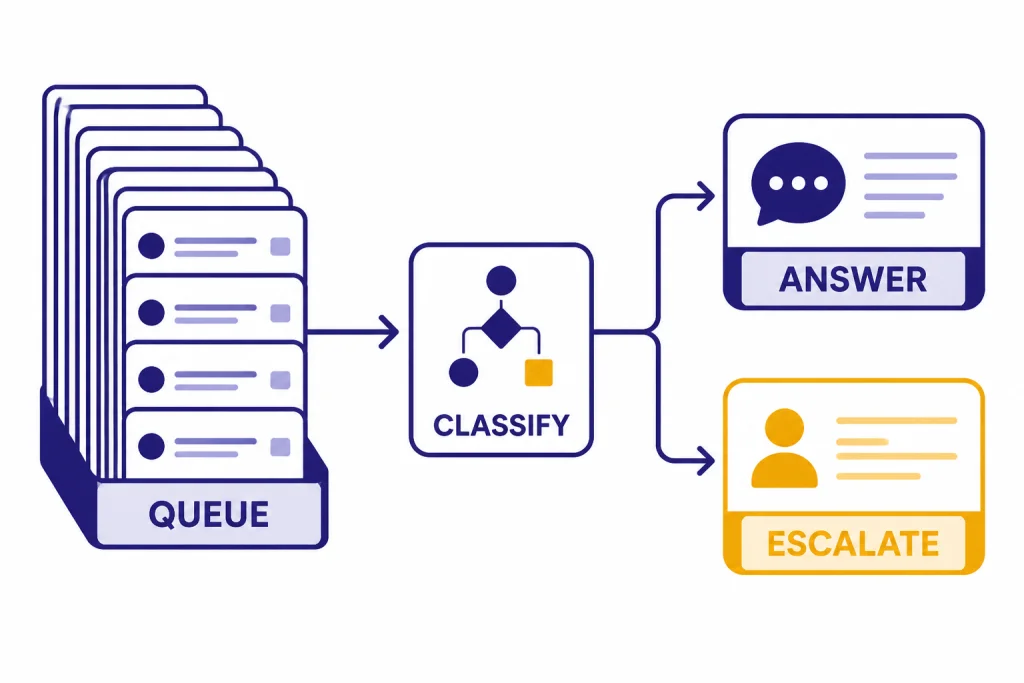

Classification and routing

Use GPT-4o mini to classify support tickets, route leads, label documents, or pick the next automation step. The model’s function calling and Structured Outputs support make it suitable for returning predictable JSON fields or tool arguments.[2] A common pattern is to let GPT-4o mini decide whether a request is simple enough to answer directly or complex enough to send to a stronger model.
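A minimal sketch of that pattern is shown below. It only builds a request body and checks a reply locally, so it makes no network call. The payload follows the `response_format` JSON-schema shape used by OpenAI's Chat Completions API as we understand it, so verify it against the current API reference before relying on it. The label set, schema name, and helper names are hypothetical.

```python
import json

LABELS = ["billing", "technical", "account", "other"]  # hypothetical ticket categories

TICKET_SCHEMA = {
    "type": "object",
    "properties": {
        "label": {"type": "string", "enum": LABELS},
        "escalate": {"type": "boolean"},  # True means: send to a stronger model
    },
    "required": ["label", "escalate"],
    "additionalProperties": False,
}

def build_request(ticket_text):
    """Build a request body that asks for schema-constrained JSON."""
    return {
        "model": "gpt-4o-mini",
        "messages": [
            {"role": "system", "content": "Classify the support ticket."},
            {"role": "user", "content": ticket_text},
        ],
        "response_format": {
            "type": "json_schema",
            "json_schema": {"name": "ticket_route", "strict": True, "schema": TICKET_SCHEMA},
        },
    }

def parse_reply(raw_json):
    """Parse the model's JSON reply and re-check it before acting on it."""
    data = json.loads(raw_json)
    if data.get("label") not in LABELS or not isinstance(data.get("escalate"), bool):
        raise ValueError(f"unexpected classification payload: {data!r}")
    return data

# Simulated reply, standing in for a real API response.
print(parse_reply('{"label": "billing", "escalate": false}'))
```

Re-validating the reply in application code is a deliberate choice here: it keeps the router safe even if the schema or model changes later.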

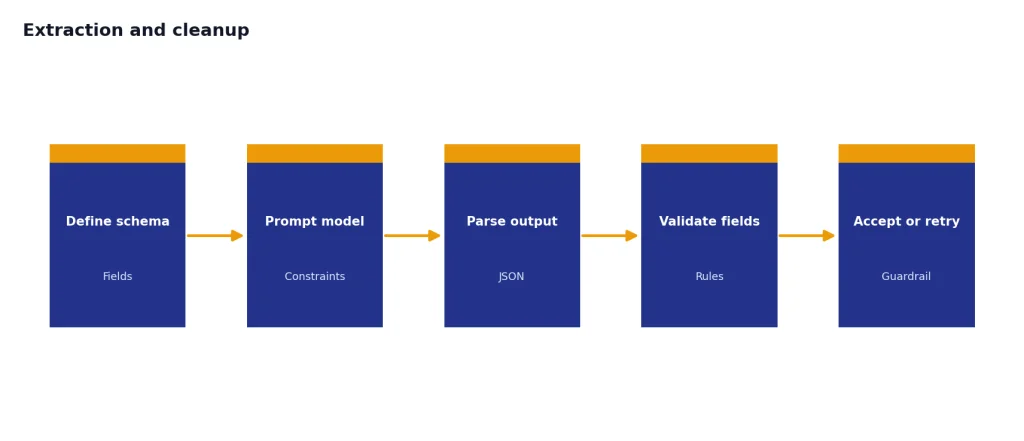

Extraction and cleanup

It is a good fit for extracting names, dates, invoice fields, product attributes, policy clauses, or search keywords from messy text. In these tasks, the prompt can define the schema tightly, and your application can validate the result. If you repeat the same schema instructions across requests, cached input pricing can reduce costs.[3]
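Application-side validation can be as simple as the sketch below, which checks a hypothetical invoice record after the model has returned it. The field names and rules are assumptions chosen for illustration; a real pipeline would mirror its own schema.

```python
from datetime import date

REQUIRED_FIELDS = ("vendor", "invoice_date", "total")  # hypothetical schema

def validate_invoice(fields):
    """Return a list of problems; an empty list means the record passed."""
    problems = [f"missing {key}" for key in REQUIRED_FIELDS if key not in fields]

    if "invoice_date" in fields:
        try:
            date.fromisoformat(fields["invoice_date"])
        except (TypeError, ValueError):
            problems.append("invoice_date is not YYYY-MM-DD")

    if "total" in fields:
        total = fields["total"]
        if not isinstance(total, (int, float)) or total < 0:
            problems.append("total is not a non-negative number")

    return problems

record = {"vendor": "Acme", "invoice_date": "2024-07-18", "total": 129.5}
print(validate_invoice(record) or "record looks valid")
```

Records that fail validation can be retried, sent to a stronger model, or queued for human review, depending on how costly an error is.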

Summaries at scale

GPT-4o mini can summarize call transcripts, chat logs, meeting notes, and internal documents cheaply. The 128,000-token context window helps when the source is long.[2] For very long documents or repositories, compare it with newer long-context models in our context window sizes guide.
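Before sending a long source, it can help to check whether it is likely to fit. The sketch below uses a rough rule of thumb of about four characters per token for English text, which is an assumption and not a real tokenizer. It also reserves room for the prompt and the summary, since output tokens count against the same context window. The reserve sizes and function name are hypothetical.

```python
CONTEXT_WINDOW = 128_000   # GPT-4o mini context window, in tokens
CHARS_PER_TOKEN = 4        # rough heuristic for English; use a tokenizer for real counts

def fits_in_context(text, reserved_output_tokens=2_000, prompt_overhead_tokens=500):
    """Estimate whether `text` fits alongside instructions and the expected summary."""
    estimated_tokens = len(text) // CHARS_PER_TOKEN + 1
    budget = CONTEXT_WINDOW - reserved_output_tokens - prompt_overhead_tokens
    return estimated_tokens <= budget

transcript = "speaker: hello there. " * 1_000
print(fits_in_context(transcript))
```

When the check fails, the usual options are to split the source into chunks and summarize in stages, or to test a model with a larger context window.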

Low-cost image understanding

Because GPT-4o mini accepts image input and returns text, it can handle basic visual understanding tasks such as describing a screenshot, checking whether an uploaded image contains a required element, or extracting visible text from a form.[2] For higher-stakes visual analysis, compare it with our GPT-4 Vision guide and test against real examples.
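For reference, an image-plus-question message might be assembled as in the sketch below. It follows the content-part layout used by OpenAI's Chat Completions API for image input as we understand it, with the image embedded as a base64 data URL. Treat the exact field names as something to confirm in the current API reference. The helper name is hypothetical, and no request is actually sent.

```python
import base64

def image_message(question, image_bytes, mime="image/png"):
    """Build a user message that pairs a text question with an inline image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
        ],
    }

# Placeholder bytes stand in for a real screenshot read from disk.
message = image_message("Does this form include a signature?", b"\x89PNG placeholder")
print(message["content"][1]["image_url"]["url"][:30])
```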

Drafting with strict templates

GPT-4o mini can draft short emails, product blurbs, support replies, and internal summaries when your prompt gives a clear template. It is less ideal for subtle editorial judgment, brand voice, or long-form creative work. For those workloads, use our best GPT model for writing comparison.

When to pick another model

Pick another model when the task needs stronger reasoning, fresher knowledge, larger context, or the lowest possible token price. GPT-4o mini is affordable, but the current pricing table shows GPT-5 nano and GPT-4.1 nano below it on input and output cost.[3][4][5]

- Choose GPT-5 nano when cost is the main constraint and the task is summarization, classification, or another well-defined operation. OpenAI lists GPT-5 nano at $0.05 input and $0.40 output per 1 million tokens, with a 400,000-token context window.[4]

- Choose GPT-4.1 nano when you need a very cheap non-reasoning model with a 1,047,576-token context window and strong instruction-following focus.[5]

- Choose GPT-4.1 mini when you want a small model but can pay more for better instruction following, tool calling, and long-context handling.[6]

- Choose o4-mini when the task needs reasoning effort, coding strength, or visual reasoning beyond a basic fast model. OpenAI lists o4-mini at $1.10 input and $4.40 output per 1 million tokens.[7] See our OpenAI o4-mini review for more detail.

- Choose a flagship model when correctness matters more than cost. Medical, legal, financial, security, and complex software tasks should not be optimized around the cheapest model first.

Also avoid GPT-4o mini if you need direct audio or video generation. It is a text-output model with text and image inputs in the main API documentation.[2] Use specialized audio, image, or video models when the output format requires them.
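The guidance in this section can be condensed into a small decision helper. This is only a sketch: the parameter names and the order in which the rules are checked are our assumptions, and the context thresholds come from the context windows quoted in this article.

```python
def pick_model(high_stakes=False, needs_reasoning=False, prefers_quality=False,
               context_tokens=0, cost_first=False):
    """Suggest a starting model to evaluate, based on the rules of thumb above."""
    if high_stakes:
        return "flagship model"   # correctness matters more than cost
    if needs_reasoning:
        return "o4-mini"
    if prefers_quality:
        return "GPT-4.1 mini"     # small model, higher price, long context
    if context_tokens > 400_000:
        return "GPT-4.1 nano"     # 1,047,576-token context window
    if cost_first or context_tokens > 128_000:
        return "GPT-5 nano"       # lowest listed price, 400,000-token context
    return "GPT-4o mini"

print(pick_model(cost_first=True))
print(pick_model(context_tokens=900_000))
```

The result is a first candidate for testing, not a final answer. Whichever model the helper suggests should still be measured against real prompts.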

Migration advice for existing GPT-4o mini users

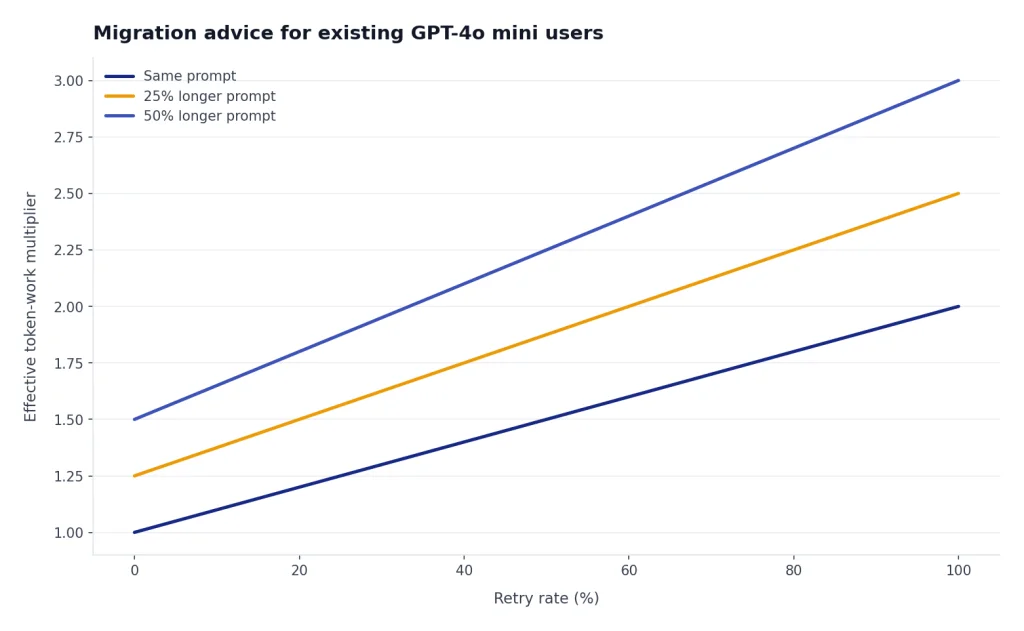

If GPT-4o mini is already working in production, do not migrate only because a newer model has a lower sticker price. Run an evaluation first. Small price differences can disappear if the replacement model needs longer prompts, more retries, more validation, or more human review.

A practical migration test should include your real prompts, your real failure cases, and your real output checks. Compare GPT-4o mini with GPT-5 nano and GPT-4.1 nano on the same sample set. Measure success rate, parse failures, average output length, latency, and total cost. If the cheaper model matches or beats GPT-4o mini on accuracy without longer outputs or more retries, the migration is easy. If it produces more edge-case failures, keep GPT-4o mini or route only the simplest traffic to the cheaper model.
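One way to tabulate those measurements is sketched below. It assumes each evaluation record is a dictionary holding the raw model output, the expected parsed result, latency, and token counts. That record layout and the function name are hypothetical, and exact-match comparison stands in for whatever success check your application really uses.

```python
import json
from statistics import mean

def summarize_run(records, input_price, output_price):
    """Summarize one model's eval run. Prices are USD per 1M tokens."""
    parse_failures = 0
    successes = 0
    for record in records:
        try:
            parsed = json.loads(record["output"])
        except json.JSONDecodeError:
            parse_failures += 1
            continue
        if parsed == record["expected"]:
            successes += 1

    total_in = sum(r["input_tokens"] for r in records)
    total_out = sum(r["output_tokens"] for r in records)
    return {
        "success_rate": successes / len(records),
        "parse_failures": parse_failures,
        "avg_output_tokens": mean(r["output_tokens"] for r in records),
        "avg_latency_s": mean(r["latency_s"] for r in records),
        "total_cost_usd": (total_in * input_price + total_out * output_price) / 1_000_000,
    }

sample = [
    {"output": '{"label": "billing"}', "expected": {"label": "billing"},
     "latency_s": 0.8, "input_tokens": 1_000, "output_tokens": 100},
    {"output": "Sure! The label is billing.", "expected": {"label": "billing"},
     "latency_s": 1.2, "input_tokens": 1_000, "output_tokens": 100},
]
print(summarize_run(sample, input_price=0.15, output_price=0.60))
```

Running the same function over each candidate model's records gives directly comparable rows, which makes it easier to see whether a lower token price survives once failures are counted.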

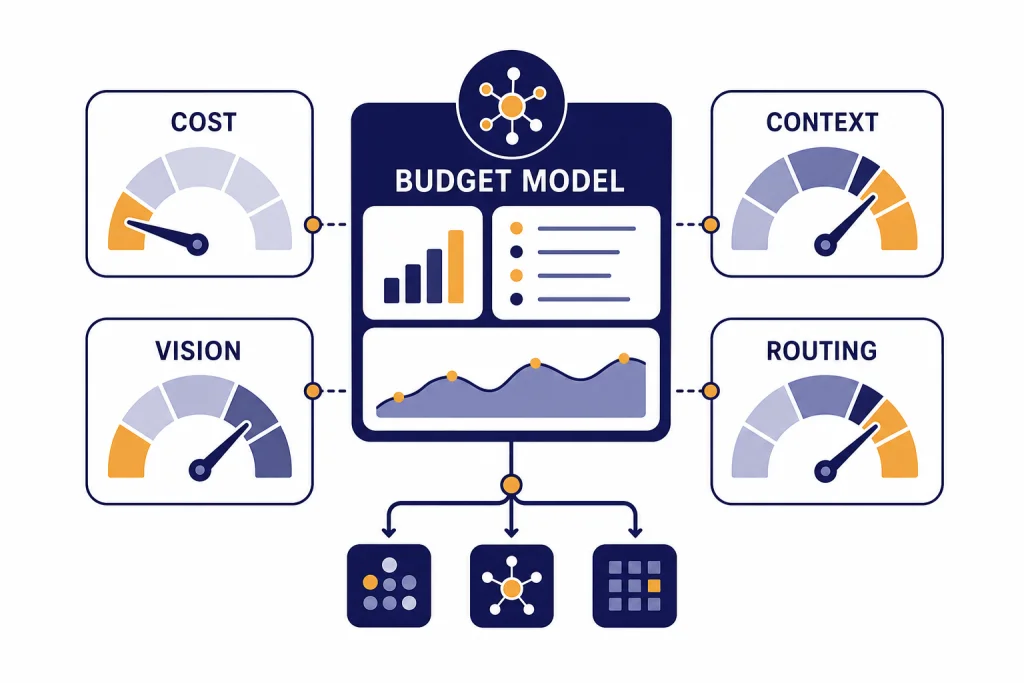

For many teams, the best budget architecture is not one model. It is a router. Use a cheap model for triage, a small reliable model for routine answers, and a stronger model only when the request is ambiguous, high-value, or risky. GPT-4o mini can still occupy the middle tier in that design.
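A bare-bones version of that router is sketched below. The three callables are hypothetical wrappers around whichever models you assign to each tier, and the stub functions exist only so the example runs without an API key. Sending every unrecognized label to the strong tier is a conservative choice of ours, not a rule from OpenAI.

```python
def make_router(triage, routine_model, strong_model):
    """Build a router from three callables that each take the request text."""
    def route(request_text):
        label = triage(request_text)  # cheap model decides the tier
        if label == "routine":
            return "routine", routine_model(request_text)
        # Ambiguous, high-value, risky, or unrecognized requests go up a tier.
        return "strong", strong_model(request_text)
    return route

# Stubs standing in for real model calls.
def stub_triage(text):
    return "escalate" if "refund" in text.lower() else "routine"

def stub_routine(text):
    return f"[small model] reply to: {text}"

def stub_strong(text):
    return f"[strong model] reply to: {text}"

route = make_router(stub_triage, stub_routine, stub_strong)
print(route("How do I reset my password?"))
print(route("I want a refund for a duplicate charge."))
```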

OpenAI has not published an official parameter count for GPT-4o mini. Do not base a migration on rumored size estimates. Base it on evals, cost traces, and user-visible quality.

Frequently asked questions

Is GPT-4o mini still the cheapest OpenAI model?

No. OpenAI’s current pricing lists GPT-5 nano at $0.05 input and $0.40 output per 1 million tokens, while GPT-4o mini is $0.15 input and $0.60 output per 1 million tokens.[3][4] GPT-4.1 nano is also cheaper than GPT-4o mini on both input and output tokens.[5]

What is GPT-4o mini best at?

It is best at focused, high-volume tasks such as classification, extraction, summarization, routing, and templated replies. OpenAI describes it as a fast, affordable small model for focused tasks, with text and image inputs and text outputs.[2] It is less suitable for deep reasoning or high-stakes expert work.

Does GPT-4o mini support vision?

Yes. OpenAI’s model documentation lists text and image as supported inputs for GPT-4o mini, with text as the output modality.[2] That makes it useful for screenshot checks, visual descriptions, and simple image-to-text workflows.

How large is the GPT-4o mini context window?

OpenAI lists GPT-4o mini with a 128,000-token context window and a 16,384-token maximum output length.[2] That is large for many application workflows, but newer small models can offer larger context windows.[4][5]

Should I use GPT-4o mini or GPT-5 nano?

Start with GPT-5 nano if you are building a new cost-sensitive workflow and can validate quality with your own tests. Keep GPT-4o mini in the comparison if you need stable behavior for an existing workflow or want a proven small model with text and image input support.[2][4] The cheaper model is not always the cheaper system if it causes more retries or review work.

Is GPT-4o mini good for coding?

It can help with simple code explanations, small snippets, and structured code-related extraction. OpenAI reported an 87.2% HumanEval score for GPT-4o mini at launch.[1] For demanding coding, compare it with newer reasoning or coding-focused models before choosing it.