GPT vs DeepSeek is not a simple closed-versus-open contest. As of March 8, 2026, OpenAI’s strongest general comparison point is GPT-5.4, released on March 5, 2026 for ChatGPT, the API, and Codex.[1] DeepSeek’s most relevant open-weight challenger is DeepSeek-V3.2, released on December 1, 2025 as a reasoning-first model for agents.[3] GPT is the safer default for polished answers, multimodal workflows, tool use, enterprise controls, and complex professional work. DeepSeek is the better experimenter’s choice when API cost, open weights, self-hosting, and transparent model access matter more than the most integrated product experience.

Quick verdict

Choose GPT if you want the best all-around assistant with fewer rough edges. GPT-5.4 is built into ChatGPT, Codex, and the OpenAI API, and OpenAI describes it as a frontier model for professional work across reasoning, coding, and agentic workflows.[1] It also has the stronger surrounding product: voice, files, image understanding, app integrations, team administration, and mature business controls. If your work depends on reliability, formatting, or handoff to nontechnical users, GPT is easier to recommend.

Choose DeepSeek if you want a capable model you can inspect, route through multiple providers, or self-host. DeepSeek-V3.2 is open-weight, available through DeepSeek’s app, web product, and API, and its model card says the repository and model weights are licensed under the MIT License.[7] That changes the economics and governance discussion. You can build around the model rather than only consume it through a hosted interface.

The short version: GPT wins for product quality and enterprise adoption. DeepSeek wins for cost-sensitive development, open-weight deployment, and teams that want more infrastructure control. If you are also comparing other open or lower-cost challengers, read our GPT vs Mistral comparison and GPT vs Qwen breakdown next.

What we compared

For this GPT vs DeepSeek test, the fair comparison is not “all GPT models” against “all DeepSeek models.” It is the current OpenAI frontier GPT experience against the current DeepSeek open-weight family most users can actually reach. On the OpenAI side, that means GPT-5.4 in ChatGPT and the API.[1] On the DeepSeek side, that means DeepSeek-V3.2 through the DeepSeek API, app, web interface, or self-hosted weights.[3]

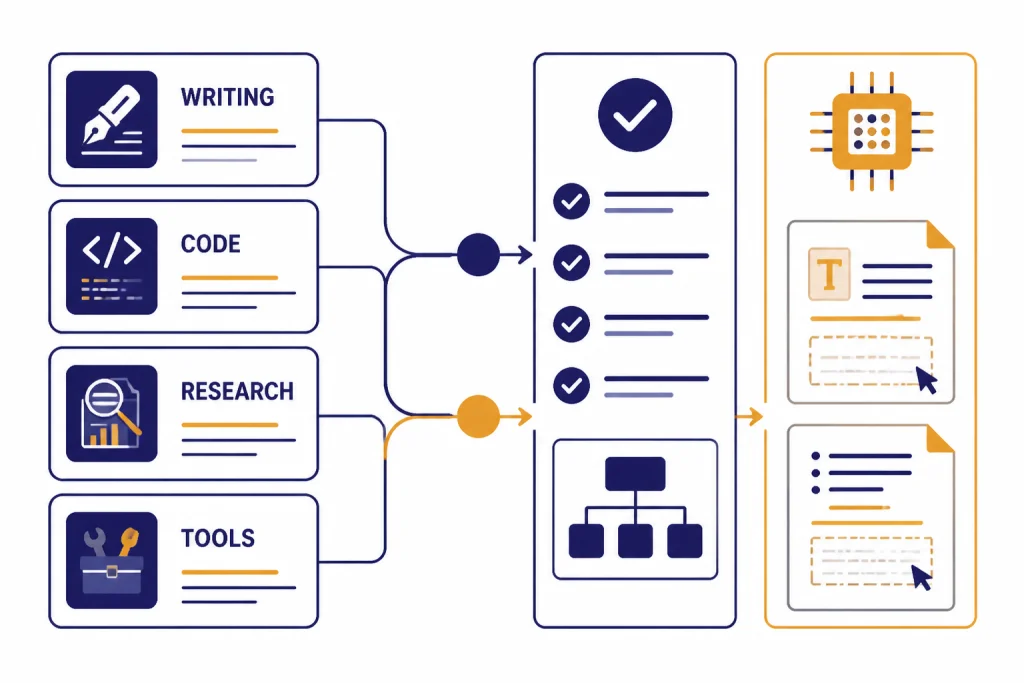

We judged the models on practical work, not leaderboard theater. The test categories were long-form writing, technical explanation, code repair, spreadsheet-style reasoning, research synthesis, tool-use readiness, cost control, deployment flexibility, and data handling. We did not treat a single benchmark score as decisive. Benchmarks are useful, but model choice usually comes down to failure modes, integration burden, and the cost of a bad answer.

| Category | GPT | DeepSeek | Practical takeaway |
|---|---|---|---|
| Best default assistant | Stronger | Good | GPT gives more polished responses with less prompt repair. |
| Open-weight control | No | Yes | DeepSeek is the clear pick for self-hosting and model inspection. |
| Enterprise controls | Stronger | More limited | GPT fits regulated business rollouts more cleanly. |
| API cost pressure | Higher | Lower | DeepSeek is attractive for high-volume text workloads. |
| Coding agents | Stronger product stack | Strong open alternative | GPT works better when Codex, tools, and managed reliability matter. |
| Research and citations | Better integrated | Usable but less polished | GPT is easier for nontechnical research workflows. |

This article focuses on text and agentic work. If your comparison is mainly visual, use our DALL-E vs Stable Diffusion guide or DALL-E vs Midjourney review. If your comparison is video-first, see Sora vs Runway and Sora vs Google Veo.

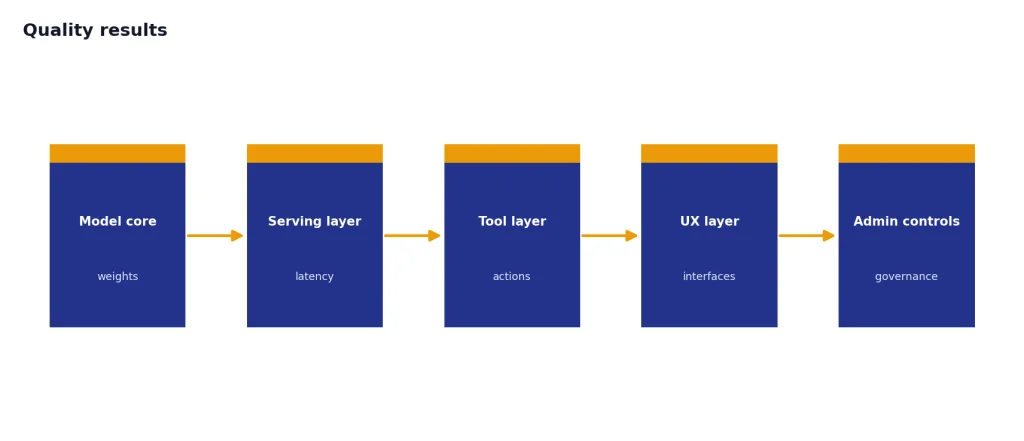

Quality results

GPT produced the better finished work in most general-user tasks. It followed style constraints more consistently, used cleaner structure, and needed fewer retries when the prompt mixed several requirements. That matters for business writing, policy drafts, customer replies, executive summaries, and slide-outline work. GPT also handled ambiguity better. When a prompt lacked context, it was more likely to ask a useful clarifying question or state an assumption before proceeding.

DeepSeek was strongest when the task had a clear target and the prompt included enough context. It handled technical explanations, code-oriented questions, and structured summaries well. Its weaknesses showed up in tone control and final polish. It sometimes produced answers that were correct enough but felt less edited. For internal tools, that may be fine. For customer-facing writing, GPT usually reduced the human cleanup step.

OpenAI’s own GPT-5.4 release emphasizes professional work, reporting benchmark gains in areas such as GDPval, finance tasks, OfficeQA, coding, computer use, tool use, and long-context retrieval.[1] DeepSeek’s official V3.2 release positions the model around reasoning and agent use, including thinking in tool-use and support for both thinking and non-thinking modes.[3] Those claims matched the broad pattern in our testing: GPT felt like a full product system, while DeepSeek felt like a strong model component.

The difference is clearest in multi-step work. GPT was better at preserving intent across revisions. If we asked for a rewrite, then a shorter rewrite, then a version aimed at a different audience, it kept more of the original requirements intact. DeepSeek could do this, but it was more sensitive to prompt wording and sometimes over-corrected after feedback.

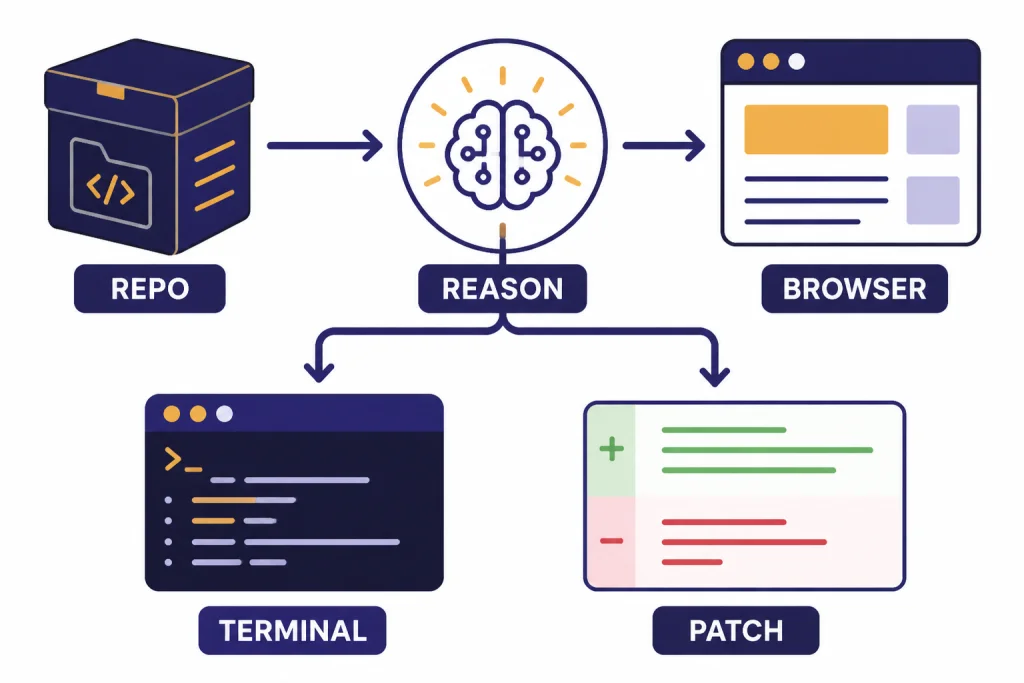

Coding and agent work

GPT has the advantage for managed coding workflows. OpenAI says GPT-5.4 combines the coding strengths of GPT-5.3-Codex with broader knowledge-work and computer-use capabilities, and that it matches or outperforms GPT-5.3-Codex on SWE-Bench Pro while running at lower latency across reasoning efforts.[1] OpenAI also reports GPT-5.4 at 57.7% on SWE-Bench Pro Public and 75.0% on OSWorld-Verified.[1] Those are vendor-published numbers, but they explain why GPT feels better suited to long coding sessions, UI debugging, and tool-assisted development.

DeepSeek remains a serious coding challenger. DeepSeek-V3.2 was released as a reasoning-first model built for agents, and the release notes say it introduced thinking in tool-use with training data covering 1,800+ environments and 85,000+ complex instructions.[3] Its advantage is architectural freedom. You can run open weights, test alternative inference stacks, and use providers that expose different throughput and cost profiles. That is useful for engineering teams building internal copilots or code-review tools.

The best way to split them is by ownership. Use GPT when you want a coding assistant that works inside a mature managed product and can call tools reliably. Use DeepSeek when you want to own the deployment path, tune the serving layer, or build a lower-cost coding backend. If your main decision is between OpenAI’s own reasoning families, read GPT vs the o-Series, OpenAI o1 vs o3, and OpenAI o1 vs o1-pro.

There is one important caveat. Coding benchmarks do not always predict repository-level usefulness. A model can pass tests and still make a brittle architectural choice. In our use, GPT was better at explaining tradeoffs before changing code. DeepSeek was better when we provided a narrow patch request and explicit constraints.

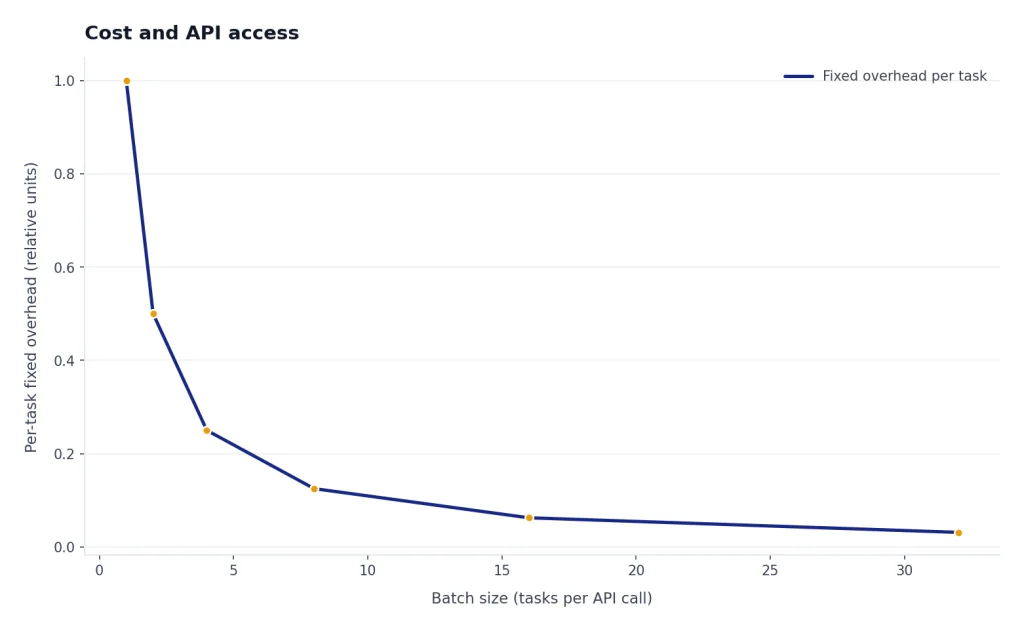

Cost and API access

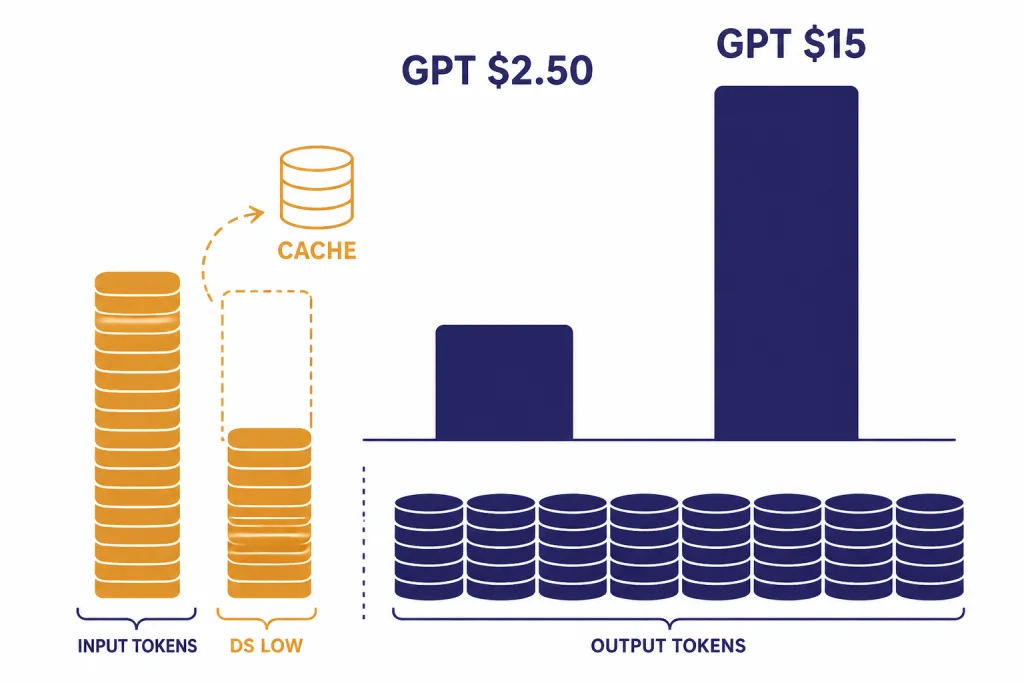

DeepSeek’s cost story is its biggest advantage, but exact March 2026 pricing needs care. OpenAI published GPT-5.4 API pricing at $2.50 per million input tokens, $0.25 per million cached input tokens, and $15 per million output tokens; GPT-5.4 Pro was listed at $30 per million input tokens and $180 per million output tokens.[1] DeepSeek’s official pricing page identified deepseek-chat and deepseek-reasoner as DeepSeek-V3.2 endpoints with a 128K context limit, but public pricing trackers in March 2026 disagreed on whether common V3.2 usage should be read as $0.14 input and $0.28 output, or $0.28 input and $0.42 output, per million tokens.[4][9] Treat DeepSeek as cheaper, but verify the live billing page before budgeting.

The cost gap changes product design. With GPT, you usually spend more time deciding which calls need the frontier model, which can use a smaller GPT model, and which can be cached or batched. With DeepSeek, you may be able to send more traffic to the same class of model before cost becomes the limiting factor. That makes DeepSeek attractive for summarization queues, internal search assistants, classification, test generation, and high-volume draft generation.
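To make the gap concrete, here is a minimal Python sketch of the arithmetic. It uses only the per-million-token prices quoted above. The `Price` class, the `monthly_cost` helper, and the workload of 500 million input and 100 million output tokens per month are hypothetical, chosen for illustration. The sketch ignores cached-input discounts and any tiered or off-peak pricing, so treat the output as a rough estimate rather than a quote.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """USD per one million tokens (hypothetical helper type)."""
    input_per_m: float
    output_per_m: float


# Prices as quoted in this article; verify against the live billing pages.
GPT_5_4 = Price(input_per_m=2.50, output_per_m=15.00)
DEEPSEEK_LOW = Price(input_per_m=0.14, output_per_m=0.28)   # lower tracker reading
DEEPSEEK_HIGH = Price(input_per_m=0.28, output_per_m=0.42)  # higher tracker reading


def monthly_cost(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost for a month of uncached traffic."""
    input_cost = (input_tokens / 1_000_000) * price.input_per_m
    output_cost = (output_tokens / 1_000_000) * price.output_per_m
    return input_cost + output_cost


if __name__ == "__main__":
    # Assumed workload: 500M input tokens and 100M output tokens per month.
    workload = (500_000_000, 100_000_000)
    for label, price in [
        ("GPT-5.4", GPT_5_4),
        ("DeepSeek-V3.2 (low reading)", DEEPSEEK_LOW),
        ("DeepSeek-V3.2 (high reading)", DEEPSEEK_HIGH),
    ]:
        print(f"{label}: ${monthly_cost(price, *workload):,.2f}")
```

Under these assumptions, the estimate is about $2,750 per month for GPT-5.4, against roughly $98 to $182 for DeepSeek-V3.2, depending on which tracker reading applies. Even the higher DeepSeek reading leaves a large gap, which is why the disagreement between trackers matters less than confirming the order of magnitude.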

| API factor | GPT-5.4 | DeepSeek-V3.2 | What it means |
|---|---|---|---|
| Published release timing | March 5, 2026 | December 1, 2025 | GPT is the newer frontier release as of publication.[1][3] |
| Standard input price signal | $2.50 per 1M tokens | Public trackers showed $0.14–$0.28 per 1M tokens | DeepSeek is usually far cheaper for text API volume.[1][9] |
| Standard output price signal | $15 per 1M tokens | Public trackers showed $0.28–$0.42 per 1M tokens | Long generated outputs favor DeepSeek on cost.[1][9] |
| Context signal | GPT-5.4 includes standard 272K context, with experimental 1M context in Codex at higher usage accounting | DeepSeek pricing docs listed a 128K context limit for V3.2 endpoints | GPT has the larger official long-context path; DeepSeek is cheaper for common long prompts.[1][4] |
| Provider model | Managed OpenAI platform | Hosted API plus open weights | DeepSeek gives more infrastructure options. |

If price is your main concern inside OpenAI’s ecosystem, compare GPT families before switching providers. Our OpenAI API pricing guide, GPT models comparison, and fastest GPT model analysis will help you decide whether a cheaper GPT model already solves the problem.

Open source and control

DeepSeek’s open-weight status is the main reason this comparison is different from GPT vs most proprietary competitors. DeepSeek’s V3.2 release links to model weights and a technical report, and its model card says the repository and model weights are under the MIT License.[3][7] DeepSeek-R1 was also announced with code and models under the MIT License.[8] This does not mean every part of the training process is open. It does mean developers can inspect and deploy weights in a way they cannot with GPT.

GPT is proprietary. OpenAI has not published an official parameter count for GPT-5.4, and the model is accessed through OpenAI-controlled products and APIs. That is not automatically bad. Proprietary access gives OpenAI room to package safety systems, tools, support, latency tiers, admin features, and compliance controls in one managed service. For many companies, that package is worth more than weight access.

The control question comes down to your team. If you have infrastructure engineers, model-serving experience, and a reason to own the stack, DeepSeek is compelling. If you need the model to work for sales, support, finance, legal, and product teams without building an AI platform first, GPT is simpler. For plan-level buying decisions, see ChatGPT Free vs Plus vs Pro, ChatGPT Pro vs Team, and ChatGPT Team vs Enterprise.

Privacy and business risk

GPT has the stronger business privacy story for organizations that need standard controls. OpenAI says it does not use data from ChatGPT Enterprise, ChatGPT Business, ChatGPT Edu, ChatGPT for Healthcare, ChatGPT for Teachers, or the API platform to train or improve models by default.[2] OpenAI also describes encryption at rest and in transit, data retention controls, and data residency options for eligible customers.[2] Those details matter for procurement.

DeepSeek’s hosted service needs more scrutiny. Its privacy policy says the services are provided and controlled by Hangzhou DeepSeek Artificial Intelligence Co., Ltd., registered in China.[5] The same policy says DeepSeek directly collects, processes, and stores personal data in the People’s Republic of China to provide the services.[5] It also says users have the right to opt out of having their personal data used to train models or optimize technologies.[5] That is an opt-out the user must exercise, which is different from a default business-data exclusion for API and enterprise customers.

Self-hosting changes the DeepSeek risk profile. If you run the weights in your own environment, the hosted DeepSeek privacy policy is no longer the only issue. You still need to manage model security, logging, access control, output filtering, patching, and compliance. Open weights do not eliminate governance work. They move more of it onto your team.

Which should you use?

Use GPT for client-facing writing, complex research, multimodal work, spreadsheets, presentations, coding inside a managed assistant, and enterprise deployments. It is the better default when the user experience matters as much as the model. It is also the safer recommendation when your organization needs procurement documentation, account controls, and predictable product support.

Use DeepSeek for experimental infrastructure, high-volume internal text processing, self-hosting, open-weight research, and cost-sensitive developer tools. It is especially attractive when you can tolerate a rougher product layer in exchange for lower inference cost and more deployment freedom. It is not the easiest choice for nontechnical teams that just want a polished assistant.

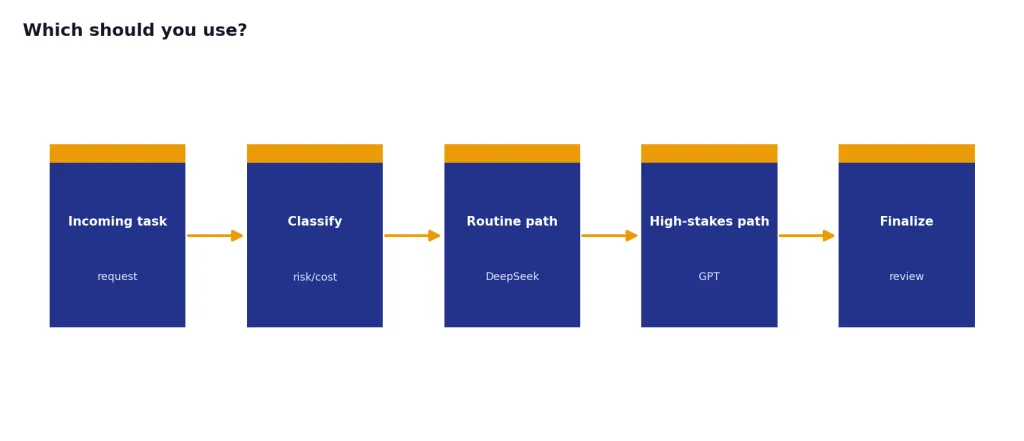

Use both if you are building serious AI software. A common architecture is to route routine, high-volume tasks to DeepSeek and reserve GPT for hard reasoning, final drafting, sensitive workflows, or user-facing responses. That gives you cost leverage without giving up quality where it matters. If you are still mapping the broader market, start with our ChatGPT alternatives 2026 list and best AI chatbot alternatives.
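The routing idea can be sketched in a few lines of Python. Everything here is an assumption for illustration: the `Task` fields, the `ROUTINE_KINDS` set, and the backend labels `"gpt"` and `"deepseek"` are hypothetical names, not part of either vendor's API. The sketch only decides where a request should go; it makes no network calls.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical description of one unit of work to be routed."""
    kind: str
    user_facing: bool = False
    sensitive: bool = False
    hard_reasoning: bool = False


# Assumed set of routine, high-volume task kinds (illustrative only).
ROUTINE_KINDS = frozenset(
    {"summarization", "classification", "test_generation", "draft"}
)


def choose_backend(task: Task) -> str:
    """Return "gpt" or "deepseek" for a task.

    Quality-critical flags win over cost: anything user-facing, sensitive,
    or needing hard reasoning goes to GPT. Known routine kinds go to
    DeepSeek. Unknown kinds fall back to GPT as the conservative default.
    """
    if task.user_facing or task.sensitive or task.hard_reasoning:
        return "gpt"
    if task.kind in ROUTINE_KINDS:
        return "deepseek"
    return "gpt"


if __name__ == "__main__":
    examples = [
        Task("summarization"),
        Task("draft", user_facing=True),
        Task("contract_review"),
    ]
    for task in examples:
        print(task.kind, "->", choose_backend(task))
```

Falling back to GPT for unrecognized task kinds is a deliberate design choice in this sketch: a misrouted routine task costs a little extra, while a misrouted sensitive task costs more. Teams with tighter budgets might reverse that default and keep an explicit allowlist for GPT instead.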

Our final recommendation is straightforward. GPT wins as the better product. DeepSeek wins as the more flexible open-weight challenger. The right choice depends less on ideology and more on whether your bottleneck is answer quality, compliance, cost, or control.

Frequently asked questions

Is DeepSeek better than GPT?

DeepSeek is better than GPT for open-weight control, self-hosting experiments, and many cost-sensitive API workloads. GPT is better for polished general use, business rollout, multimodal workflows, and managed tool use. Most users should start with GPT unless they specifically need DeepSeek’s openness or cost profile.

Is DeepSeek really open source?

DeepSeek is best described as open-weight rather than fully open in every possible sense. DeepSeek’s V3.2 model card says the repository and model weights are licensed under the MIT License.[7] That does not mean the full training dataset and every training decision are public.

Which is cheaper, GPT or DeepSeek?

DeepSeek is generally cheaper for text API workloads. OpenAI listed GPT-5.4 at $2.50 per million input tokens and $15 per million output tokens.[1] Public pricing trackers in March 2026 showed much lower rates for DeepSeek-V3.2, between $0.14 and $0.28 per million input tokens and between $0.28 and $0.42 per million output tokens, but they did not agree on a single figure, so teams should confirm prices in the DeepSeek billing console before committing a budget.[9]

Which is better for coding?

GPT is better for most managed coding workflows because it is tied to Codex, tool use, and OpenAI’s broader product stack. DeepSeek is a strong choice for teams building their own coding assistant or code-review backend. If you value self-hosting and cost control more than an integrated assistant, DeepSeek deserves testing.

Is DeepSeek safe for business use?

DeepSeek can be used in business settings, but hosted use requires privacy review. DeepSeek’s privacy policy says it directly collects, processes, and stores personal data in the People’s Republic of China.[5] For sensitive work, compare that with OpenAI’s business-data commitments or consider self-hosting DeepSeek weights under your own controls.

Should I switch from ChatGPT to DeepSeek?

Switch only if cost, open weights, or self-hosting are your main reasons. If you use ChatGPT for writing, research, files, voice, images, or team collaboration, GPT remains the smoother product. Many technical teams should test DeepSeek as a backend option rather than replace ChatGPT entirely.