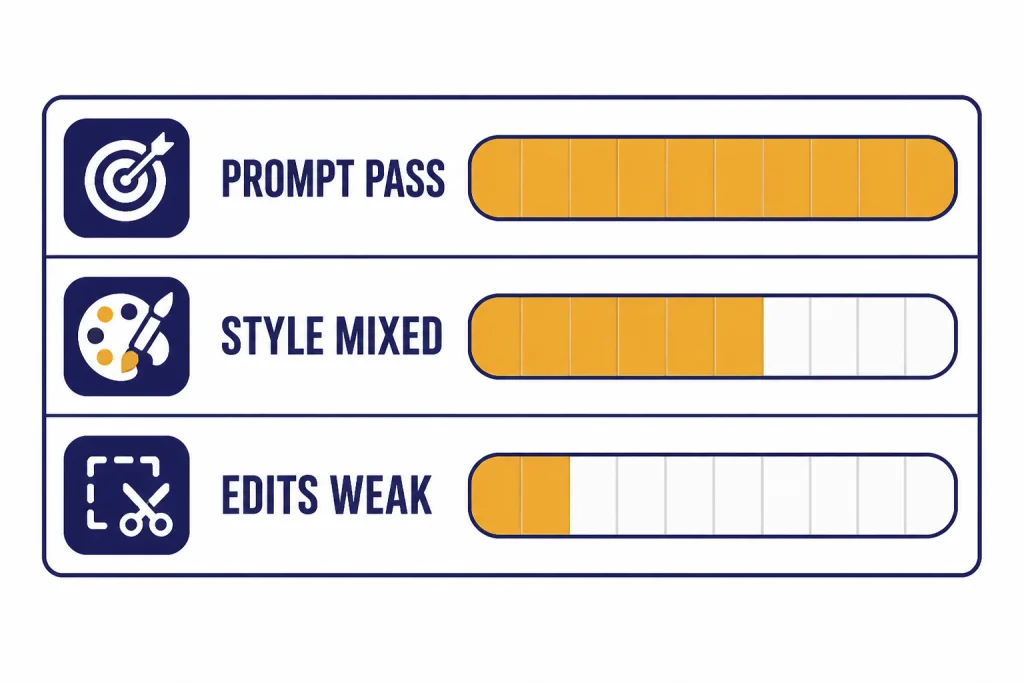

DALL-E 3 is still a strong image generator for literal prompt following, simple commercial visuals, posters, and images that need short readable text. It is no longer OpenAI’s leading image experience inside ChatGPT: native GPT-4o image generation began rolling out there on March 25, 2025, and OpenAI describes that newer approach as more capable than the earlier DALL-E 3 series.[10][11] My verdict: use DALL-E 3 when you want predictable text-to-image output, per-image API pricing, or the dedicated DALL-E GPT. Use newer ChatGPT image generation, Midjourney, Stable Diffusion, or Firefly when you need stronger editing, style control, or production-grade realism.

DALL-E 3 review verdict

DALL-E 3 remains worth using if your main need is accurate interpretation of a written prompt. It is less compelling as a standalone creative tool in 2026 because OpenAI’s newer GPT-4o image generation replaced the older DALL-E 3 model family as the default ChatGPT image workflow.[10][12]

In short, DALL-E 3 is best for clear visual briefs, diagrams, thumbnails, cards, posters, product concepts, and social graphics with a few readable words. It is weaker at exact character continuity, high-end photorealism, advanced inpainting, brand-locked style systems, and anything that depends on referencing a specific living artist’s style.

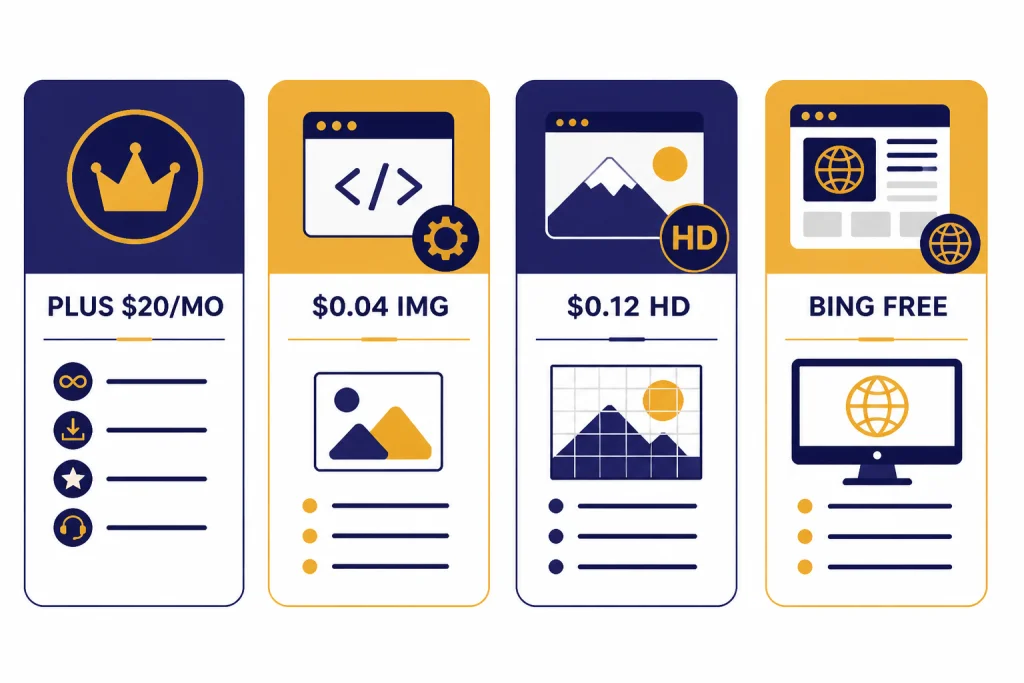

I would not buy ChatGPT Plus only for DALL-E 3 in 2026. ChatGPT Plus costs $20/month, and image generation is one of the included features, but the plan’s value now depends on the broader ChatGPT toolset rather than DALL-E 3 alone.[15][16] If image generation is your main job, also read our DALL-E vs Stable Diffusion comparison before you commit to a workflow.

What DALL-E 3 is now

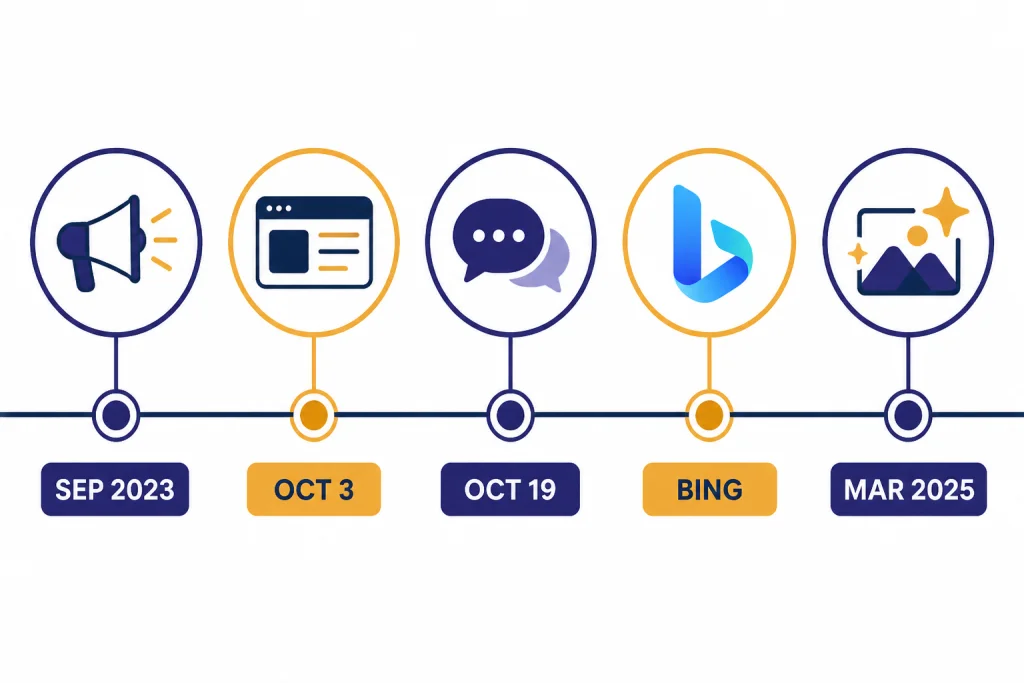

DALL-E 3 is OpenAI’s third-generation text-to-image model. OpenAI announced it in September 2023, published a system card on October 3, 2023, and made it available to ChatGPT Plus and Enterprise users on October 19, 2023.[3][4][2] Microsoft also made DALL-E 3 available through Bing Chat and Bing.com/create in October 2023.[14]

The original promise was not just better image polish. OpenAI framed DALL-E 3 as a model that understood more nuance and detail than earlier systems, with ChatGPT helping turn a plain request into a richer image prompt.[1] That design still defines the product. DALL-E 3 often succeeds because it behaves like a careful visual translator instead of a style-first art engine.

The product position changed after March 25, 2025. OpenAI introduced GPT-4o image generation and said it could still provide access to DALL-E through a dedicated DALL-E GPT for users who wanted that older workflow.[10] OpenAI’s GPT-4o image generation system card addendum also describes the newer image approach as significantly more capable than the earlier DALL-E 3 series.[11]

| Milestone | Date | What changed | Why it matters now |
|---|---|---|---|
| DALL-E 3 announcement | September 20, 2023[3] | OpenAI introduced DALL-E 3 with stronger prompt following and artist opt-out controls. | It set the model’s identity around literal prompt adherence. |
| DALL-E 3 system card | October 3, 2023[4][17] | OpenAI documented safety work, red teaming, and deployment mitigations. | Many refusals and style limits come from this safety layer. |
| ChatGPT Plus and Enterprise release | October 19, 2023[2] | DALL-E 3 became available inside paid ChatGPT plans. | Chat-based image iteration became the main user experience. |
| Bing availability | October 2023[14] | Microsoft offered DALL-E 3 through Bing Chat and Bing.com/create. | It gave many users a free or low-friction way to try the model. |
| GPT-4o image generation | March 25, 2025[10][12] | OpenAI launched native image generation in GPT-4o. | DALL-E 3 became the older, more specialized option rather than the flagship ChatGPT image system. |

Image quality tested

My testing focused on the jobs where DALL-E 3 historically had an edge: following a crowded prompt, placing specific objects, rendering short text, and producing a clean first draft without prompt-engineering syntax. I did not evaluate it as a pure art contest. That would favor tools optimized for mood, lighting, and house style.

The strongest result was prompt adherence. If the prompt asks for a red mug on the left, a yellow notebook on the right, and a small sign above both, DALL-E 3 usually attempts all three relationships. The OpenAI research paper behind the model reports better automated prompt-following results than DALL-E 2 and Stable Diffusion XL on several evaluated tasks, including DrawBench and T2I-CompBench categories.[5] Those exact scores are OpenAI’s own figures rather than independently corroborated ones, so I treat them as OpenAI’s reported evaluation rather than a neutral leaderboard.

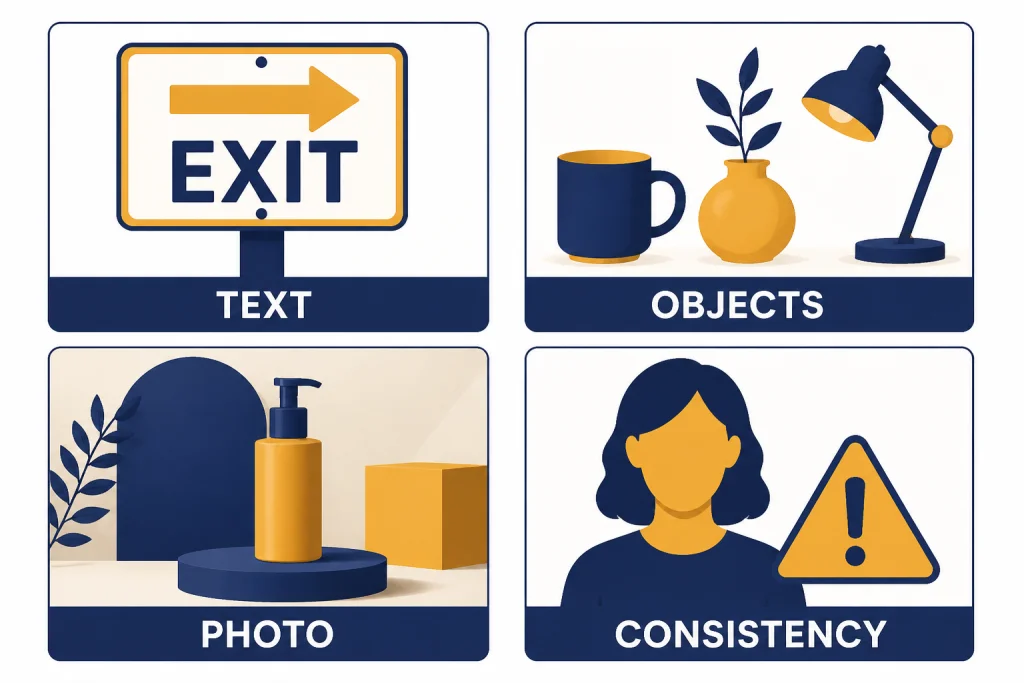

Text rendering is also better than the older stereotype of AI image generators producing unreadable signs. DALL-E 3 can often render a short label, a simple poster title, or a product-card phrase. It still struggles when the prompt asks for a lot of text, tiny typography, strict font matching, or paragraph-like copy. For production work, I would use it to create a layout direction, then add final typography in a design editor.

Photorealism is mixed. DALL-E 3 can produce clean product shots, staged interiors, food concepts, and polished portraits of fictional people. The images often have an over-smoothed, synthetic finish. Faces can look plausible at a glance but less convincing on close inspection. If your goal is a photographic campaign image, DALL-E 3 is a draft generator, not a final retoucher.

Composition favors clarity over subtlety. DALL-E 3 tends to center the subject, make the scene legible, and avoid visual chaos. That helps for explainers, thumbnails, educational graphics, and concept boards. It hurts when you want ambiguity, documentary realism, or a less polished editorial mood.

The most reliable prompts were concrete. “A square editorial illustration of a three-step checkout flow with a cart, payment card, and receipt” works better than “a compelling image about frictionless commerce.” The model rewards nouns, positions, object counts, and visual constraints. It does not reward vague creative direction as much as a human illustrator would.
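One way to keep prompts concrete is to write the brief as structured parts and then turn those parts into a sentence. The Python sketch below is a hypothetical helper, not an OpenAI feature. `PromptSpec` and `build_prompt` are illustrative names, and the output is an ordinary prompt string that you could paste into ChatGPT or send to the API.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical structured brief: each field becomes a literal instruction."""
    medium: str   # e.g. "A square editorial illustration"
    subject: str  # e.g. "a three-step checkout flow"
    elements: list[tuple[str, str]] = field(default_factory=list)  # (object, position)
    constraints: list[str] = field(default_factory=list)           # extra visual rules


def build_prompt(spec: PromptSpec) -> str:
    """Join nouns, positions, and constraints into one explicit prompt string."""
    prompt = f"{spec.medium} of {spec.subject}"
    placed = [f"{obj} {pos}".strip() for obj, pos in spec.elements]
    if placed:
        prompt += ", with " + ", ".join(placed)
    for rule in spec.constraints:
        # Each constraint becomes its own sentence, capitalized.
        prompt += ". " + rule[:1].upper() + rule[1:]
    return prompt + "."


spec = PromptSpec(
    medium="A square illustration",
    subject="a desk scene",
    elements=[
        ("a red mug", "on the left"),
        ("a yellow notebook", "on the right"),
        ("a small sign", "above both"),
    ],
    constraints=['the sign reads "OPEN"', "plain white background"],
)
print(build_prompt(spec))
```

The structure matters because every field forces you to supply a noun, a position, or a constraint. That is the kind of input DALL-E 3 tends to reward.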

Where DALL-E 3 fails

DALL-E 3’s biggest failure is not that it misunderstands prompts. It is that it can be too literal and too polished at the same time. The output often looks like a competent AI illustration rather than a finished brand asset.

Character consistency remains limited. You can ask for “the same character” in a follow-up, and ChatGPT may describe the prior image in a new prompt. But DALL-E 3 is not a full production system for a recurring mascot, comic cast, or product model across dozens of shots. You will see changes in face shape, clothing details, posture, and visual style.

Editing is another weak spot compared with newer image workflows. DALL-E 3’s API model page describes generation from text to image; the model is listed with text input and image output, not as a broad multimodal editor.[7] OpenAI’s later GPT-4o image generation launch emphasizes transforming uploaded images, using visual inspiration, better text rendering, and leveraging chat context, which are the kinds of jobs DALL-E 3 handles less naturally.[10][11]

Style control is also constrained. The API has a style parameter with “vivid” and “natural” options, and OpenAI’s Help Center describes those as the supported style choices.[6] That is useful, but it is not the same as building a reusable brand style, loading a reference model, or training a custom visual system. If you need repeatable style control, the better path may be a specialized design workflow or a local/open model. Our OpenAI Playground review is useful if you want more control over API experiments, but it does not turn DALL-E 3 into a full design suite.
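For API users, the style choice is just a request parameter. The sketch below is minimal and assumes the official `openai` Python SDK, whose `images.generate` method accepts `model`, `prompt`, `size`, `quality`, `style`, and `n`. The `dalle3_request` helper is hypothetical. The network call stays in a comment so the snippet runs without an API key.

```python
# Values described for DALL-E 3 at the time of this review; verify against the current API reference.
DALLE3_STYLES = {"vivid", "natural"}
DALLE3_QUALITIES = {"standard", "hd"}


def dalle3_request(prompt: str, style: str = "vivid", quality: str = "standard",
                   size: str = "1024x1024") -> dict:
    """Build keyword arguments for one DALL-E 3 generation call (hypothetical helper)."""
    if style not in DALLE3_STYLES:
        raise ValueError(f"style must be one of {sorted(DALLE3_STYLES)}, got {style!r}")
    if quality not in DALLE3_QUALITIES:
        raise ValueError(f"quality must be one of {sorted(DALLE3_QUALITIES)}, got {quality!r}")
    return {
        "model": "dall-e-3",
        "prompt": prompt,
        "style": style,
        "quality": quality,
        "size": size,
        "n": 1,  # DALL-E 3 returns one image per call
    }


params = dalle3_request("A flat vector icon of a paper airplane", style="natural")
# With the openai package installed and OPENAI_API_KEY set, the real call would be:
#   from openai import OpenAI
#   result = OpenAI().images.generate(**params)
print(params["style"], params["n"])  # natural 1
```

Validating `style` locally catches typos before a paid call. It does not add any style control beyond the two documented options.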

Policy boundaries can interrupt legitimate work. OpenAI says DALL-E 3 is designed to decline requests for public figures by name and requests in the style of a living artist.[1][4] That is reasonable for safety and creator-control reasons, but it means DALL-E 3 is less flexible for parody, editorial art, fan-style exploration, or certain historical-adjacent prompts than some users expect.

Pricing, access, and API limits

DALL-E 3 access depends on where you use it. In ChatGPT, image generation is part of broader plans such as ChatGPT Plus, which OpenAI lists at $20/month.[15][16] In the API, DALL-E 3 uses per-image pricing rather than a flat subscription. OpenAI’s model page lists standard 1024 x 1024 DALL-E 3 images at $0.04 per image and HD 1024 x 1024 images at $0.08 per image; wider or taller options are listed at higher prices.[7][8] TechTarget’s DALL-E pricing summary corroborates the same $0.04 starting point and $0.12 HD price for larger DALL-E 3 images.[9]
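Because API billing is per image, budgeting is simple multiplication. This sketch hard-codes only the three prices cited in this review. The review does not quote a standard-quality price for the larger canvas, so that tier is deliberately left out. Check all values against OpenAI’s current pricing page before relying on them.

```python
from decimal import Decimal

# API prices cited in this review (USD per image); confirm on OpenAI's pricing page.
PRICE_PER_IMAGE = {
    ("standard", "square"): Decimal("0.04"),  # 1024 x 1024
    ("hd", "square"): Decimal("0.08"),        # 1024 x 1024
    ("hd", "larger"): Decimal("0.12"),        # wide or tall HD canvas
}


def estimate_cost(images: int, quality: str = "standard", canvas: str = "square") -> Decimal:
    """Estimated USD cost for `images` single-image calls at one price tier."""
    if images < 0:
        raise ValueError("images must be non-negative")
    try:
        unit = PRICE_PER_IMAGE[(quality, canvas)]
    except KeyError:
        raise ValueError(f"no price cited here for {quality!r}/{canvas!r}") from None
    return unit * images


print(estimate_cost(250))                  # 10.00
print(estimate_cost(250, "hd", "larger"))  # 30.00
```

At $0.04 per standard square image, $20 (the monthly ChatGPT Plus price) covers 500 API images. That break-even ignores everything else a Plus subscription includes.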

There is one documentation wrinkle. OpenAI’s current model page lists DALL-E 3 pricing for 1024 x 1024, 1024 x 1536, and 1536 x 1024 sizes.[7] OpenAI’s Help Center article still says DALL-E 3 was trained to generate 1024 x 1024, 1024 x 1792, or 1792 x 1024 images.[6] Because those official sources disagree on the tall and wide dimensions, check the active API documentation in your account before building a hard-coded image pipeline.
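One way to avoid hard-coding either size list is to read it from configuration. In this sketch, the `DALLE3_SIZES` environment variable and the helper names are hypothetical. The default uses the Help Center’s 1792-pixel sizes. You would swap in the 1536-pixel set if the API reference for your account lists those instead.

```python
import os

# Help Center sizes; OpenAI's model page lists 1536 instead of 1792 on the long side.
DEFAULT_SIZES = "1024x1024,1024x1792,1792x1024"


def allowed_sizes() -> set[str]:
    """Read permitted WIDTHxHEIGHT sizes from config (hypothetical DALLE3_SIZES variable)."""
    raw = os.environ.get("DALLE3_SIZES", DEFAULT_SIZES)
    return {s.strip() for s in raw.split(",") if s.strip()}


def check_size(size: str) -> str:
    """Reject a size that is not in the configured list before any API call is made."""
    sizes = allowed_sizes()
    if size not in sizes:
        raise ValueError(f"size {size!r} not in configured sizes {sorted(sizes)}")
    return size


print(check_size("1024x1024"))  # 1024x1024
```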

| Access path | Cost signal | Best use | Important limit |
|---|---|---|---|
| ChatGPT Plus | $20/month[15][16] | Casual image creation, brainstorming, and revisions inside chat. | The value depends on the full ChatGPT plan, not DALL-E 3 alone. |
| OpenAI API, standard square | $0.04 per 1024 x 1024 image[7][8] | Apps that need predictable per-image cost. | OpenAI lists DALL-E 3 as a previous-generation image model.[7] |
| OpenAI API, HD square | $0.08 per 1024 x 1024 image[7][8] | Higher-quality single-image outputs where latency and cost matter less. | HD costs more and OpenAI says it gives the model more time to generate.[6] |
| OpenAI API, larger HD | $0.12 per larger HD image[7][9] | Portrait or landscape assets where the larger canvas matters. | OpenAI’s official docs disagree on whether the listed wide/tall dimensions are 1536 or 1792 on the long side.[6][7] |
| Bing Image Creator | Microsoft described DALL-E 3 access through Bing Chat and Bing.com/create as free in October 2023.[14] | Low-friction experimentation outside OpenAI’s paid ChatGPT plan. | Microsoft’s own model selection and product rules may differ from OpenAI’s ChatGPT experience. |

The API has another practical constraint: DALL-E 3 supports only one generated image per API call. OpenAI’s Help Center says this was for scalability and reliability, and recommends parallel calls if you need more than one image.[6] That matters for developers because many image workflows depend on generating a batch, choosing the best frame, and discarding the rest.
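The Help Center’s suggestion of parallel calls can be sketched with Python’s standard `concurrent.futures` module. `generate_one` is a hypothetical stand-in that returns a placeholder string instead of calling the API, so the sketch runs offline. In real use it would wrap one `n=1` image request.

```python
from concurrent.futures import ThreadPoolExecutor


def generate_one(prompt: str, index: int) -> str:
    """Hypothetical stand-in for one DALL-E 3 API call, which yields a single image.

    In real use this would wrap a request such as the openai SDK's
    images.generate(model="dall-e-3", prompt=prompt, n=1) and return the image URL.
    """
    return f"draft-{index}: {prompt}"


def generate_batch(prompt: str, count: int, max_workers: int = 4) -> list[str]:
    """Send `count` one-image requests concurrently; results keep request order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(generate_one, prompt, i) for i in range(count)]
        return [f.result() for f in futures]


drafts = generate_batch("A minimalist poster for a book fair", count=4)
print(len(drafts))  # 4
```

Keep `max_workers` modest. Each worker sends a separate billable request, and your API account’s rate limits still apply.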

If your budget is subscription-based, compare DALL-E 3 with the broader ChatGPT plan. Our ChatGPT Plus review, ChatGPT Pro review, and OpenAI API pricing guide cover the tradeoff between a monthly plan and pay-as-you-go API work.

Safety, rights, and provenance

DALL-E 3 is more restricted than many open image models. OpenAI says images created with DALL-E 3 are yours to use and that you do not need OpenAI’s permission to reprint, sell, or merchandise them.[1] That statement is helpful, but it is not the same as a legal guarantee that every output is safe for every commercial use. You still need to review trademarks, likeness rights, copyright risk, and client-specific brand rules.

OpenAI also built creator and public-figure safeguards into DALL-E 3. The public DALL-E 3 page says the model is designed to decline requests for images in the style of a living artist and requests that ask for a public figure by name.[1] The system card describes the safety work behind deployment, including external red teaming and risk mitigations.[4]

Provenance is better than nothing but not complete protection. OpenAI’s Help Center says images generated with ChatGPT on the web and the API serving the DALL-E 3 model include C2PA metadata, and that people can use verification tools to check whether an image was generated through OpenAI’s tools.[13] The same Help Center article warns that metadata can be removed, so C2PA should not be treated as a permanent watermark.[13]

This makes DALL-E 3 more appropriate for low-risk visual production than for sensitive editorial images. For example, it is a good fit for a fictional onboarding graphic. It is not a good fit for depicting a real person in a news-like scene, generating political campaign material, or making images that could be mistaken for documentary evidence.

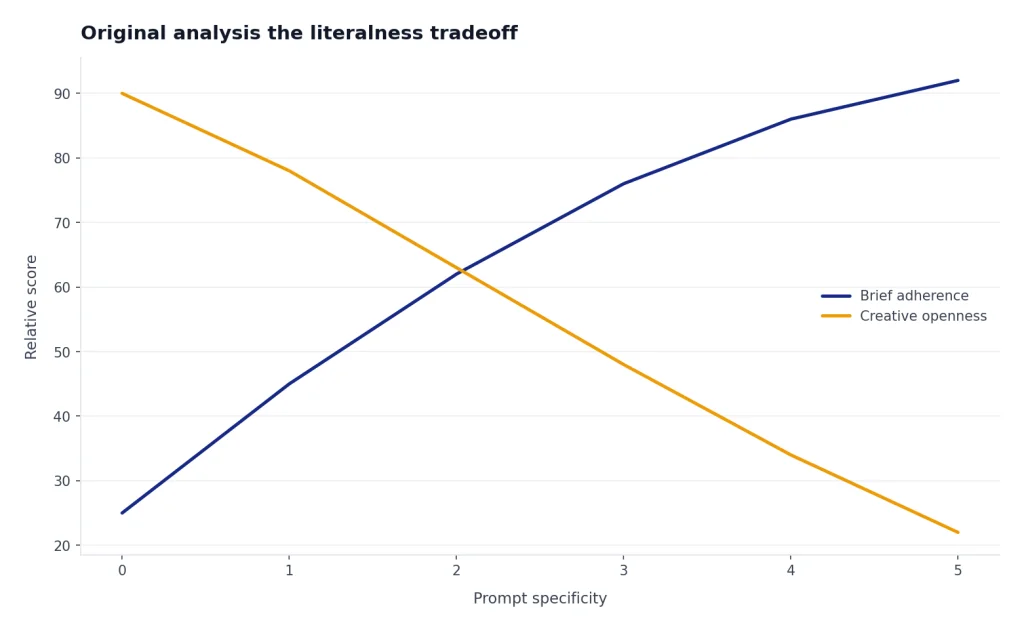

Original analysis: the literalness tradeoff

The best way to understand DALL-E 3 is what I call the literalness tradeoff. DALL-E 3 often follows the brief better than it invents around the brief. That makes it useful when the prompt is the specification, but less useful when the prompt is only a mood board.

This tradeoff explains the mixed reputation. Product managers like DALL-E 3 because it turns written requirements into a clear visual. Designers often find it stiff because it tends to over-resolve the scene. Writers and educators like it because they can describe a concept in normal language. Visual artists may prefer tools with stronger style transfer, reference images, and controllable variation.

The decision framework is straightforward. Choose DALL-E 3 when the image needs to communicate specified information. Choose GPT-4o image generation when the task depends on chat context, uploaded images, or editing a visual idea through conversation; OpenAI specifically positioned GPT-4o image generation around prompt following, text rendering, image transformation, and use of chat context.[10][11] Choose Stable Diffusion when you need local control, custom models, or a repeatable style pipeline. Choose Midjourney when you care more about visual taste and atmosphere than exact compliance.
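The framework is simple enough to write down as a rule table. This is an illustrative sketch, not a benchmark-backed selector. The need labels are made-up tags. The check order is an assumed tie-breaker: chat-driven editing first, then local control, then visual taste, with DALL-E 3 as the default.

```python
def pick_tool(needs: set[str]) -> str:
    """Illustrative encoding of the review's decision framework.

    The need labels are made-up tags, and the check order is an assumed tie-breaker.
    """
    if needs & {"chat_context", "uploaded_images", "conversational_editing"}:
        return "GPT-4o image generation"
    if needs & {"local_control", "custom_models", "repeatable_style"}:
        return "Stable Diffusion"
    if needs & {"visual_taste", "atmosphere"}:
        return "Midjourney"
    # Default case: the image must communicate specified information.
    return "DALL-E 3"


print(pick_tool({"specified_information"}))  # DALL-E 3
print(pick_tool({"uploaded_images"}))        # GPT-4o image generation
```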

That is also why DALL-E 3 still matters even after newer models. It is not the most flexible image system. It is a dependable translator for visual instructions. That is valuable for mockups, explainers, concept thumbnails, classroom materials, and quick creative briefs.

If you are already choosing among OpenAI products, read our GPT-4o review for the newer multimodal baseline and our Sora review if your visual work is shifting from still images to video. If you build repeatable workflows around prompts, our ChatGPT Custom GPTs review may help you decide whether to package DALL-E-style prompt templates into a reusable assistant.

Who should use DALL-E 3

DALL-E 3 is best for people who want images from language, not people who want a full design workstation. It shines when the prompt has clear objects, a defined layout, and a concrete deliverable. It is less satisfying when the prompt asks for a subtle aesthetic, a precise reference style, or a full production campaign.

Marketers can use it for first-draft campaign concepts, blog illustrations, social media variations, and internal pitch boards. Educators can use it for diagrams, story prompts, and classroom visuals, as long as they review factual accuracy. Founders can use it for product-story mockups before hiring a designer. Developers can use the API when a predictable per-image price matters more than the newest ChatGPT interface.

Design professionals should treat DALL-E 3 as a sketching partner. It can save time at the ideation stage, but it should not replace typography, brand review, accessibility review, or rights clearance. The model’s readable text is useful, but final copy belongs in an editor where you control fonts, spacing, and export settings.

Heavy ChatGPT users should not evaluate DALL-E 3 in isolation. If you also use research, coding, file analysis, voice, or custom assistants, the image feature is one part of a larger subscription. Our broader ChatGPT review 2026 and ChatGPT Team review explain where the full product fits for individuals and small organizations.

Frequently asked questions

Is DALL-E 3 still available in 2026?

Yes, but it is no longer the center of OpenAI’s image strategy. OpenAI’s DALL-E 3 page says it is available to ChatGPT users and developers through the API, while OpenAI’s March 25, 2025 GPT-4o image generation announcement says DALL-E can still be accessed through a dedicated DALL-E GPT.[1][10] For most ChatGPT users, the newer image experience matters more than the old DALL-E 3 brand.

How much does DALL-E 3 cost?

In ChatGPT, the relevant consumer plan is ChatGPT Plus at $20/month, although image generation is only one of many Plus features.[15][16] In the API, OpenAI lists DALL-E 3 standard square generation at $0.04 per 1024 x 1024 image and HD square generation at $0.08 per 1024 x 1024 image.[7][8] Larger HD images are listed at $0.12 per image by OpenAI’s model page and corroborated by TechTarget’s pricing summary.[7][9]

Is DALL-E 3 better than GPT-4o image generation?

No, not as a general image system. OpenAI says GPT-4o image generation, released on March 25, 2025, is a newer approach that is significantly more capable than the earlier DALL-E 3 series.[10][11] DALL-E 3 can still be useful when you want predictable text-to-image generation or API behavior, but GPT-4o image generation is the stronger default for chat-context image work.

Can DALL-E 3 make readable text in images?

Yes, short text is one of DALL-E 3’s better capabilities. OpenAI’s Help Center says the DALL-E 3 API can generate text in images, and OpenAI’s launch material emphasized better prompt adherence and detail than earlier systems.[6][1] It is still not reliable enough for final posters, packaging, or ads with long copy, so add production typography manually.

What image sizes does DALL-E 3 support?

OpenAI’s documentation is inconsistent as of this review date. The model page lists DALL-E 3 pricing for 1024 x 1024, 1024 x 1536, and 1536 x 1024 sizes.[7] The Help Center article says DALL-E 3 was trained for 1024 x 1024, 1024 x 1792, and 1792 x 1024 images.[6] Because both are official OpenAI sources, developers should check the current API reference before hard-coding dimensions.

Can I use DALL-E 3 images commercially?

OpenAI says the images you create with DALL-E 3 are yours to use and that you do not need OpenAI’s permission to reprint, sell, or merchandise them.[1] That does not remove every legal risk. You still need to check whether the image resembles protected characters, trademarks, real people, client-owned assets, or copyrighted works.

Does DALL-E 3 include an AI watermark?

OpenAI’s Help Center says images generated with ChatGPT on the web and the API serving the DALL-E 3 model include C2PA metadata.[13] The same source says people can use verification tools to check whether an image was generated through OpenAI’s tools, unless the metadata has been removed.[13] Treat that as provenance metadata, not as a guarantee that every copied or edited version will remain identifiable.

Bottom line

DALL-E 3 is still good, but it is no longer the image generator to judge OpenAI by. Its lasting strength is literal prompt following. Its weakness is that modern image work now expects editing, references, style continuity, and multimodal context.

Use DALL-E 3 for fast, clear drafts and API-controlled image generation. Use newer ChatGPT image generation or a specialized visual model when image quality, consistency, and editability matter more than simple prompt-to-image reliability.