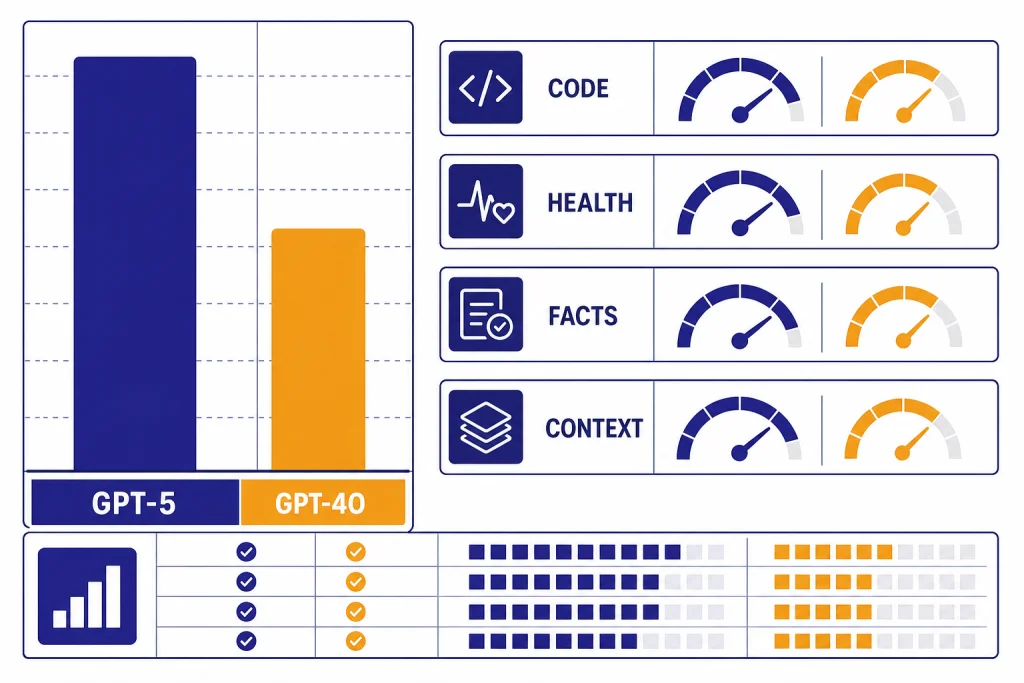

GPT-5 beats GPT-4o on the benchmark categories that matter for difficult work: math, coding, multimodal reasoning, health, and factuality.[1] The cleanest way to read this comparison is not “GPT-5 is warmer” or “GPT-4o is obsolete everywhere.” It is this: GPT-5 is the stronger model family for hard tasks and long context, while GPT-4o remains relevant mainly for legacy API workflows, tone preference, and apps already tuned around its behavior. For ChatGPT users, the comparison is now mostly historical because OpenAI retired GPT-4o from ChatGPT plans; API users can still compare them directly.[8]

Bottom line: GPT-5 is the stronger benchmark model

GPT-5 is the better choice when the task is hard enough to reward deeper reasoning. That includes multi-file coding, technical debugging, long documents, science questions, structured analysis, and high-stakes factual work that you still plan to verify. OpenAI’s GPT-5 launch emphasized state-of-the-art results across math, real-world coding, multimodal understanding, and health tasks.[1]

GPT-4o is not weak. It was OpenAI’s 2024 omni flagship, designed around fast multimodal interaction rather than explicit long-form reasoning.[4] Its value today is mostly practical: existing API apps, prompts tuned to its style, and workflows where the cost of changing model behavior outweighs the benchmark gain. For a wider map of OpenAI’s current lineup, start with our side-by-side view of all GPT models and our explainer on how GPT models differ from the o-series.

Model profile: what is actually being compared

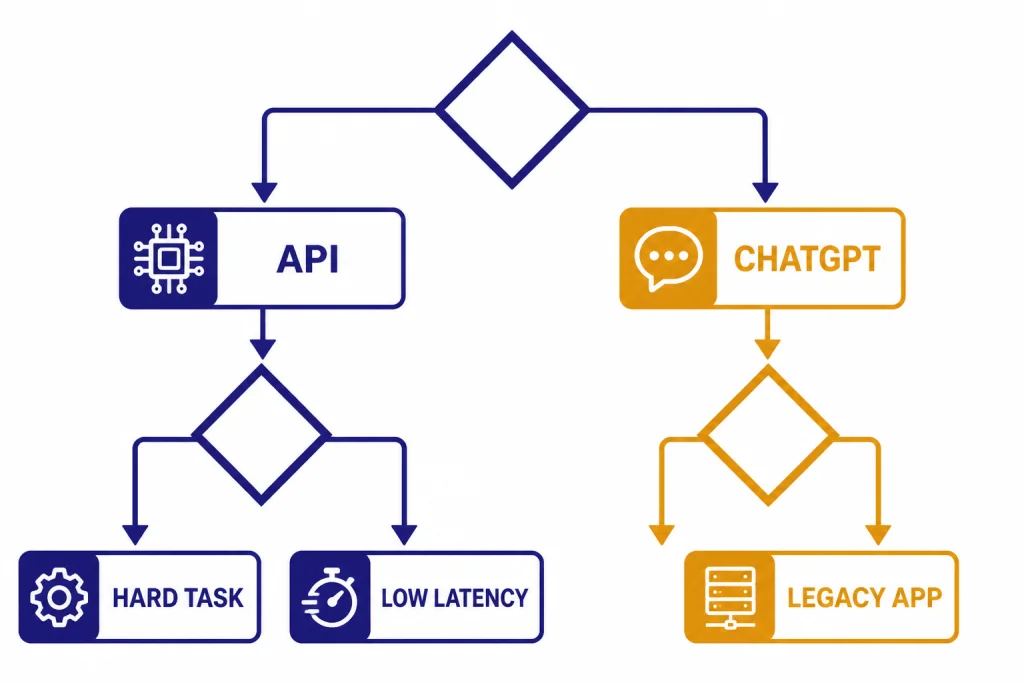

The phrase GPT-5 vs GPT-4o can mean two different things. In ChatGPT, GPT-5 was released as a unified system that routes between faster and deeper reasoning behavior. In the API, GPT-5 is exposed as a model family for reasoning, coding, and agentic work. GPT-4o is an omni model that accepts text and image input in the API and was originally presented as a real-time model across audio, vision, and text.[2] [4]

| Category | GPT-5 | GPT-4o |
|---|---|---|
| Launch | OpenAI introduced GPT-5 on August 7, 2025.[1] | OpenAI introduced GPT-4o on May 13, 2024.[4] |
| Design goal | Unified fast-plus-thinking system with routing for harder tasks.[2] | Omni model built for natural interaction across text, vision, and audio.[4] |
| API context | 400,000-token context window and 128,000 max output tokens.[7] | 128,000-token context window and 16,384 max output tokens.[6] |
| API price | $1.25 per 1M input tokens and $10.00 per 1M output tokens.[7] | $2.50 per 1M input tokens and $10.00 per 1M output tokens.[6] |
| ChatGPT status as of publication | Original GPT-5 Instant and Thinking were retired from ChatGPT on February 13, 2026, while API access continued.[8] | GPT-4o was retired from ChatGPT on February 13, 2026, with Custom GPT access for Business, Enterprise, and Edu ending after April 3, 2026.[8] |

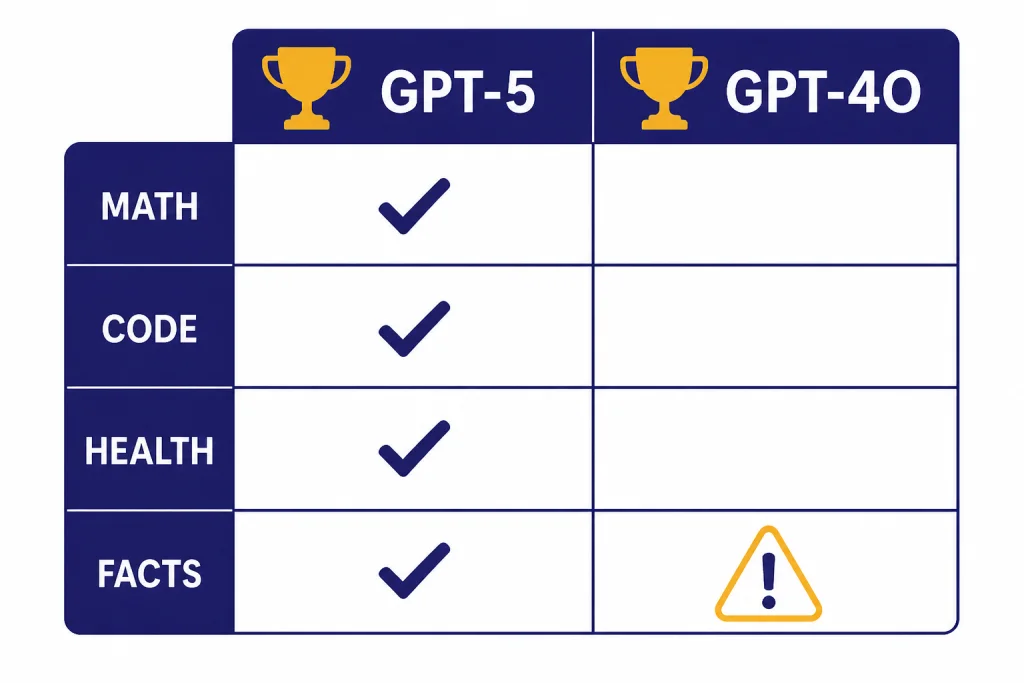

Benchmark results that matter

The cleanest benchmark story is not one giant average. GPT-5 and GPT-4o were often evaluated under different modes and prompt setups. GPT-5 numbers may use thinking or extended reasoning. GPT-4o numbers often come from older simple-evals rows or launch charts. The table below separates direct OpenAI comparisons from rows where OpenAI has not published an exact same-harness GPT-4o figure.

| Area | GPT-5 result | GPT-4o result | Readout |

|---|---|---|---|

| General simple-evals | OpenAI did not publish a single same-harness GPT-5 row in simple-evals. | For gpt-4o-2024-08-06, OpenAI simple-evals lists 88.7 on MMLU, 53.1 on GPQA, 75.9 on MATH, and 90.2 on HumanEval.[5] | Useful GPT-4o baseline, but not a direct GPT-5 matchup. |

| Math | 94.6% on AIME 2025 without tools.[1] | OpenAI has not published an official figure for this. | GPT-5 is the clear math benchmark leader by available OpenAI data. |

| Coding | 74.9% on SWE-bench Verified and 88% on Aider Polyglot.[1] | OpenAI has not published an official figure for this exact setup. | GPT-5 wins for serious coding benchmarks. |

| Multimodal understanding | 84.2% on MMMU.[1] | OpenAI has not published an official figure for this exact setup. | GPT-5 has the stronger official score. |

| HealthBench Hard | 46.2% for gpt-5-thinking and 25.5% for gpt-5-main.[2] | 0.0% for GPT-4o on the same HealthBench Hard comparison in the GPT-5 system card.[2] | GPT-5 wins by a wide margin. |

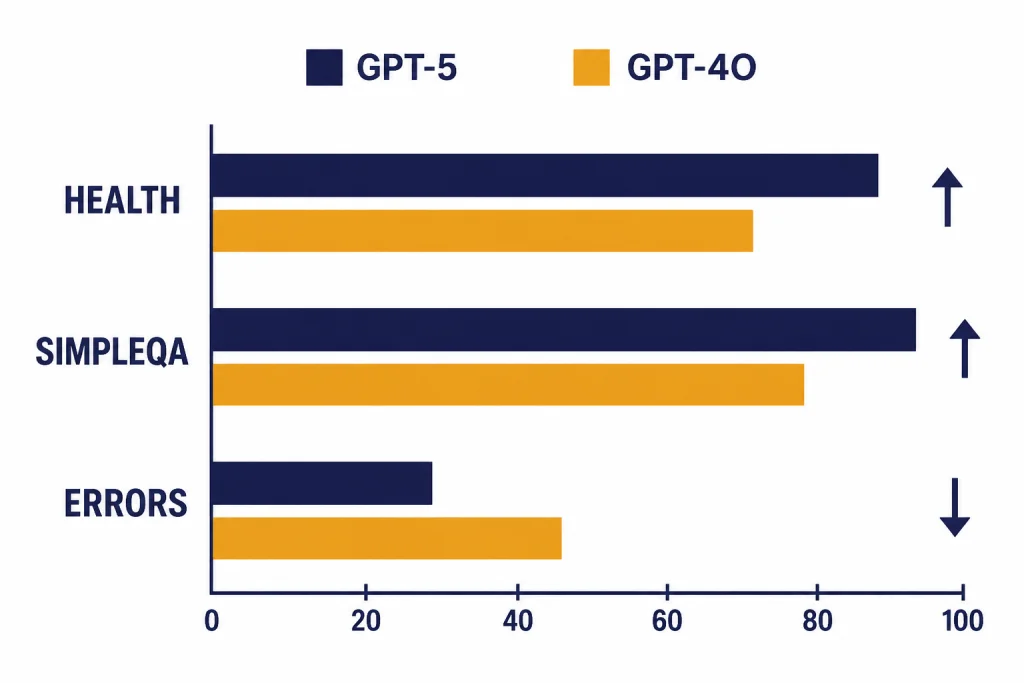

| SimpleQA with no web | 0.55 accuracy and 0.40 hallucination rate for gpt-5-thinking; 0.46 accuracy and 0.47 hallucination rate for gpt-5-main.[2] | 0.44 accuracy and 0.52 hallucination rate.[2] | GPT-5 wins, with the bigger gain in thinking mode. |

| Production factuality with browsing | OpenAI reports gpt-5-main had a 26% smaller hallucination rate than GPT-4o and 44% fewer responses with at least one major factual error.[2] | Baseline in that comparison. | GPT-5 wins on OpenAI’s production-traffic factuality test. |

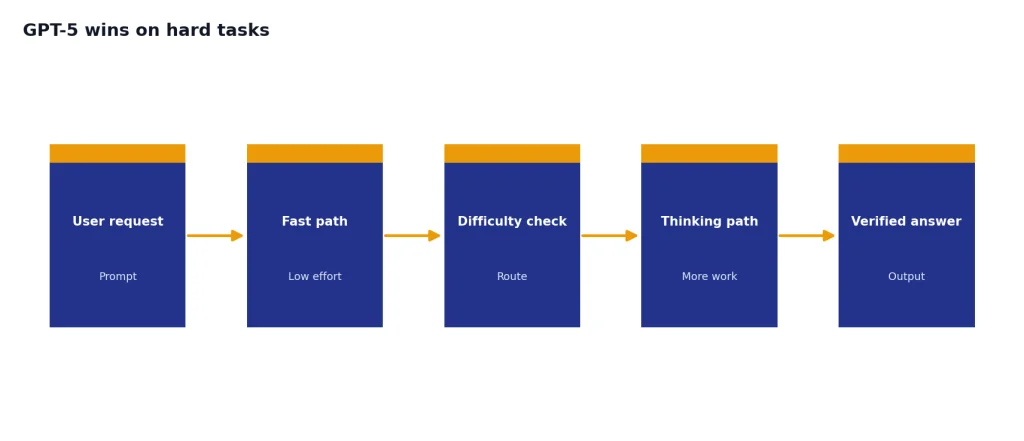
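The SimpleQA row is easier to set beside the 26% production figure once it is expressed in relative terms. The Python sketch below does that arithmetic using only the system-card values quoted in the table; the `SIMPLEQA` dictionary and `compare_to_baseline` name are illustrative and not part of any OpenAI tool.

```python
# SimpleQA (no web) figures from the GPT-5 system card, as quoted in the table above.
SIMPLEQA = {
    "gpt-5-thinking": {"accuracy": 0.55, "hallucination_rate": 0.40},
    "gpt-5-main": {"accuracy": 0.46, "hallucination_rate": 0.47},
    "gpt-4o": {"accuracy": 0.44, "hallucination_rate": 0.52},
}


def compare_to_baseline(model: str, baseline: str = "gpt-4o") -> dict:
    """Accuracy gain in percentage points and relative hallucination-rate cut in percent."""
    m, b = SIMPLEQA[model], SIMPLEQA[baseline]
    accuracy_gain_pts = round((m["accuracy"] - b["accuracy"]) * 100, 1)
    relative_cut_pct = round(
        (b["hallucination_rate"] - m["hallucination_rate"]) / b["hallucination_rate"] * 100, 1
    )
    return {"accuracy_gain_pts": accuracy_gain_pts, "relative_hallucination_cut_pct": relative_cut_pct}


for name in ("gpt-5-thinking", "gpt-5-main"):
    print(name, compare_to_baseline(name))
```

On these numbers, gpt-5-thinking gains 11 accuracy points and cuts the hallucination rate by about 23.1% relative to GPT-4o, while gpt-5-main gains 2 points and cuts it by about 9.6%. These are SimpleQA results and should not be merged with the separate 26% production-traffic figure.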

Why GPT-5 wins on hard tasks

GPT-5 wins because it can spend more work on the parts of a request that need it. The system card describes GPT-5 as a family that includes a fast main model and a deeper thinking model, with GPT-4o mapped as the predecessor to gpt-5-main.[2] That matters because many difficult prompts fail not from lack of vocabulary, but from shallow planning, missed constraints, or weak verification.
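To make the routing idea concrete, here is a deliberately toy Python router. It is not OpenAI’s router, whose implementation is not public; the keyword list, character threshold, and `Request` type are hypothetical stand-ins that only show the shape of the decision: explicit intent, input size, and tool needs push a request toward the deeper model.

```python
from dataclasses import dataclass

# Hypothetical explicit-intent phrases; OpenAI's real router does not expose a list like this.
THINK_HINTS = ("think hard", "think carefully", "step by step")


@dataclass
class Request:
    prompt: str
    needs_tools: bool = False


def route(req: Request, long_prompt_chars: int = 4_000) -> str:
    """Toy router: return "thinking" for requests that look hard, else "main"."""
    text = req.prompt.lower()
    explicit_intent = any(hint in text for hint in THINK_HINTS)
    large_input = len(req.prompt) > long_prompt_chars  # crude proxy for complexity
    if explicit_intent or large_input or req.needs_tools:
        return "thinking"
    return "main"


print(route(Request("What is the capital of France?")))         # main
print(route(Request("Think hard about this race condition.")))  # thinking
```

A production router would learn these signals rather than hard-code them; the point of the sketch is only that the fast path handles easy turns while harder ones get more compute.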

Coding is the clearest example. OpenAI’s developer launch says GPT-5 scored 74.9% on SWE-bench Verified and 88% on Aider Polyglot, and it describes GPT-5 as its best model for coding and agentic tasks.[3] If your workload looks like “read this issue, inspect the codebase, create a patch, run checks, and explain the fix,” GPT-5 is built for that pattern. For more on the upgrade path from older GPT lines, see our GPT-4 vs GPT-5 upgrade guide.

Factuality is the second major gap. The GPT-5 system card shows direct GPT-5 vs GPT-4o gains on production-traffic factuality and SimpleQA.[2] This does not mean GPT-5 is safe to trust blindly. It means GPT-5 gives you a better starting point when the output must survive citation checks, code review, or expert review.

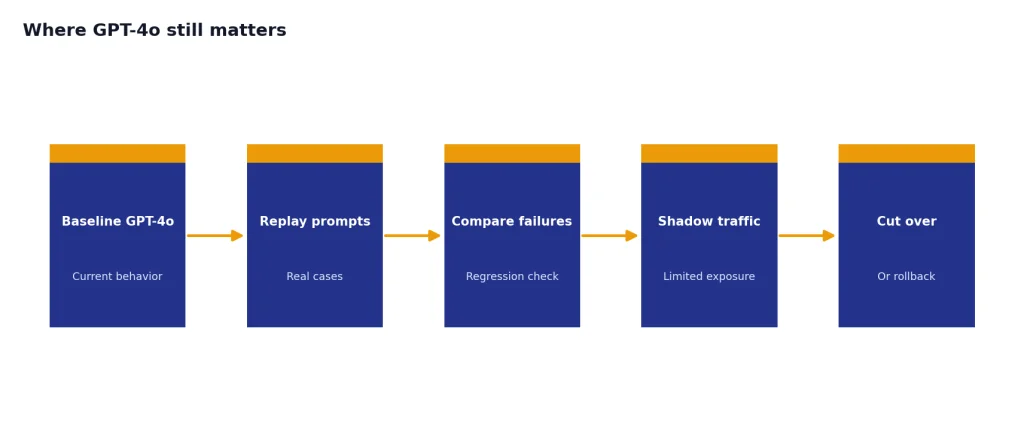

Where GPT-4o still matters

GPT-4o still matters because models are not only benchmark engines. GPT-4o was a major step toward natural multimodal interaction. OpenAI said it could respond to audio inputs in as little as 232 milliseconds, with an average of 320 milliseconds, and that it was 50% cheaper than GPT-4 Turbo in the API at launch.[4] Those launch-era strengths explain why many teams built around it.

The main reason to keep GPT-4o in an API workflow is migration risk. If your app has prompts, tests, and customer expectations tuned to GPT-4o, changing to GPT-5 can alter tone, refusal behavior, formatting, and tool-call patterns. A benchmark win does not automatically pay for a regression cycle. Teams should run their own evals before switching production traffic.
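A private eval does not need heavy tooling to start. The sketch below assumes each model is wrapped as a plain Python callable from prompt to output text; the two stub models are hypothetical placeholders for real GPT-4o and GPT-5 API calls. It measures pass rates on your own prompts and lists regressions, meaning cases the incumbent passes but the candidate fails.

```python
from typing import Callable, List, Sequence, Tuple

# A "model" is any callable prompt -> output text; wrap your real API calls this way.
ModelFn = Callable[[str], str]
Case = Tuple[str, Callable[[str], bool]]  # (prompt, check on the output)


def pass_rate(model: ModelFn, cases: Sequence[Case]) -> float:
    """Fraction of cases whose output passes its check."""
    if not cases:
        raise ValueError("need at least one eval case")
    return sum(check(model(prompt)) for prompt, check in cases) / len(cases)


def regressions(incumbent: ModelFn, candidate: ModelFn, cases: Sequence[Case]) -> List[str]:
    """Prompts the incumbent passes but the candidate fails."""
    return [p for p, check in cases if check(incumbent(p)) and not check(candidate(p))]


# Hypothetical stubs standing in for GPT-4o (incumbent) and GPT-5 (candidate) calls.
def legacy_model(prompt: str) -> str:
    return "OK" if "OK" in prompt else '{"status": "done"}'


def candidate_model(prompt: str) -> str:
    return "ok" if "OK" in prompt else '{"status": "done"}'


cases = [
    ("Reply with exactly OK", lambda out: out.strip() == "OK"),
    ("Reply in JSON", lambda out: out.lstrip().startswith("{")),
]
print(pass_rate(legacy_model, cases), pass_rate(candidate_model, cases))  # 1.0 0.5
print(regressions(legacy_model, candidate_model, cases))  # ['Reply with exactly OK']
```

The regression list matters more than the headline pass rate: a candidate can score higher overall and still break a formatting contract your downstream code depends on.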

GPT-4o also remains useful as a reference point. It shows how much of the GPT-5 gain comes from deeper reasoning rather than simple chat fluency. If you are comparing older OpenAI models, pair this article with our GPT-4o and GPT-4 comparison and GPT latency benchmarks.

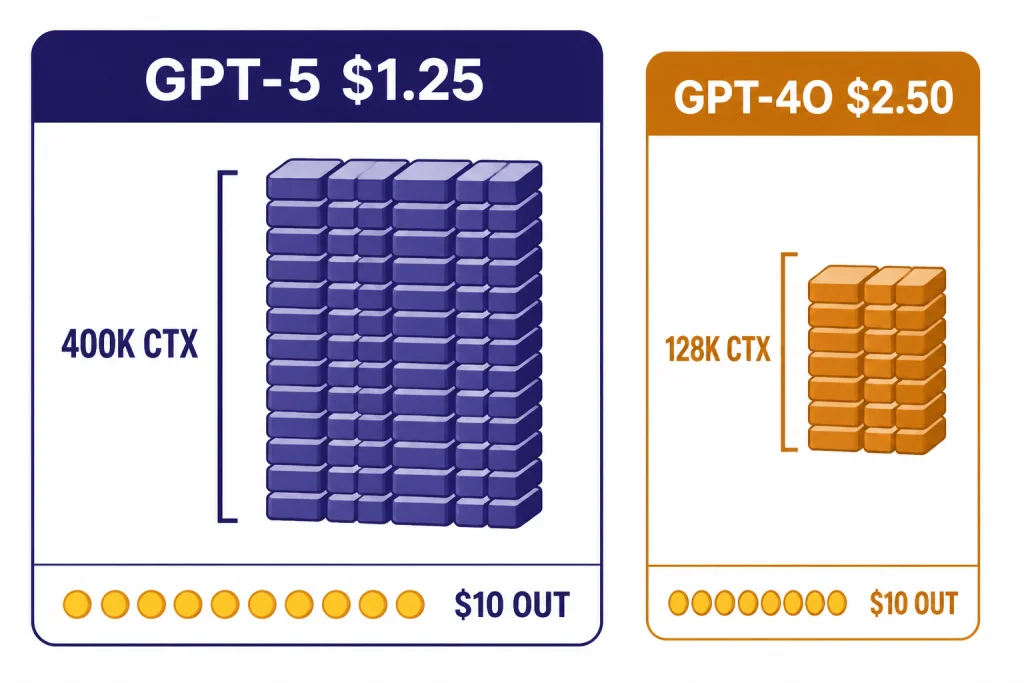

API cost and context window comparison

For developers, GPT-5 is not only stronger on hard benchmarks. It also has the better input-token price and the larger context window. OpenAI’s developer launch described the GPT-5 API limit as 272,000 input tokens plus 128,000 reasoning and output tokens, for 400,000 total context tokens.[3]
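These limits can be checked before a request is sent. The sketch assumes you already have token counts from a tokenizer and encodes the figures quoted in this article: for GPT-5, a 272,000-token input cap inside a 400,000-token total whose output side includes reasoning tokens; for GPT-4o, a 128,000-token window that, as an assumption here, input and output share, with output capped at 16,384. The `LIMITS` dictionary and `fits` name are illustrative.

```python
# Limits as quoted in this article; re-check OpenAI's model pages before relying on them.
# Assumption: GPT-4o's 128,000-token window is shared by input and output.
LIMITS = {
    "gpt-5": {"context": 400_000, "max_input": 272_000, "max_output": 128_000},
    "gpt-4o": {"context": 128_000, "max_input": 128_000, "max_output": 16_384},
}


def fits(model: str, input_tokens: int, output_tokens: int) -> bool:
    """True if the request stays within the input, output, and total-context limits.

    For GPT-5, output_tokens should include the reasoning-token budget.
    """
    spec = LIMITS[model]
    return (
        input_tokens <= spec["max_input"]
        and output_tokens <= spec["max_output"]
        and input_tokens + output_tokens <= spec["context"]
    )


print(fits("gpt-5", 250_000, 100_000))  # True
print(fits("gpt-4o", 120_000, 10_000))  # False: 130,000 exceeds the 128,000 window
```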

| API metric | GPT-5 | GPT-4o | What it means |
|---|---|---|---|
| Input price | $1.25 per 1M tokens.[7] | $2.50 per 1M tokens.[6] | GPT-5 is cheaper for large prompts and retrieval-heavy workloads. |
| Cached input price | $0.125 per 1M tokens.[7] | $1.25 per 1M tokens.[6] | GPT-5 has a bigger advantage when prompt caching applies. |
| Output price | $10.00 per 1M tokens.[7] | $10.00 per 1M tokens.[6] | The output sticker price is the same. |
| Context window | 400,000 tokens.[7] | 128,000 tokens.[6] | GPT-5 can carry much larger source material in one request. |
| Max output | 128,000 tokens.[7] | 16,384 tokens.[6] | GPT-5 can return much longer structured outputs. |

The practical takeaway is simple. Use GPT-5 for long-context analysis, coding agents, document transformation, and tasks where a failed answer is expensive. Use GPT-4o only if you have a specific compatibility reason. For budget planning, compare this with our OpenAI API pricing table and GPT context window reference.
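For a first budget estimate, the per-token prices in the table can be turned into a small cost function. This is a sketch assuming list prices with no batch or other discounts; the `PRICES` dictionary and `estimate_cost` name are illustrative.

```python
# USD per 1M tokens, copied from the pricing table above; prices change, so re-check them.
PRICES = {
    "gpt-5": {"input": 1.25, "cached_input": 0.125, "output": 10.00},
    "gpt-4o": {"input": 2.50, "cached_input": 1.25, "output": 10.00},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int, cached_tokens: int = 0) -> float:
    """Estimated USD cost of one request at list prices (no batch or other discounts).

    cached_tokens is the part of input_tokens served from the prompt cache.
    For GPT-5, output_tokens should include reasoning tokens, which bill as output.
    """
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    p = PRICES[model]
    uncached = input_tokens - cached_tokens
    total = uncached * p["input"] + cached_tokens * p["cached_input"] + output_tokens * p["output"]
    return total / 1_000_000


# A 100,000-token prompt, 80,000 of it cached, with 2,000 output tokens.
for m in ("gpt-5", "gpt-4o"):
    print(m, round(estimate_cost(m, 100_000, 2_000, cached_tokens=80_000), 4))
```

In this example GPT-5 comes to about $0.055 and GPT-4o to about $0.17. The identical output price hides one difference: GPT-5’s reasoning tokens are billed as output, so on hard prompts its output side can be larger than GPT-4o’s for the same task. Feed your real output totals from usage logs into the estimate rather than guessing.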

ChatGPT availability on April 4, 2026

As of this article’s publication date, this comparison is mostly an API and benchmark comparison, not a normal ChatGPT model-picker comparison. OpenAI’s Help Center says GPT-4o and GPT-5 Instant/Thinking were retired from ChatGPT on February 13, 2026, while API access continued.[8] It also says Business, Enterprise, and Edu customers retained GPT-4o access in Custom GPTs until April 3, 2026, after which GPT-4o was fully retired across ChatGPT plans.[8]

If you are choosing a ChatGPT subscription, focus on the currently available model family and plan limits instead. Our ChatGPT plan comparison covers that decision. If you are tracking the later GPT-5 line, see GPT-5 vs GPT-5.1 changes.

How to choose between GPT-5 and GPT-4o

Pick GPT-5 when the task has a correctness burden. That includes code generation, bug fixing, tool use, long document synthesis, data extraction, science explanations, health-adjacent information that a clinician or specialist will review, and tasks with many constraints. GPT-5 is also the better first test if your prompt plus expected output approaches GPT-4o’s 128,000-token context window.

- Choose GPT-5 for benchmark-sensitive work. Math, coding, factuality, health, and long context all point toward GPT-5.

- Choose GPT-5 for new API builds. The input price is lower, the context window is larger, and the model is designed for agentic tasks.

- Choose GPT-4o only for legacy compatibility. Keep it when your existing prompts, tests, or user experience depend on its exact behavior.

- Do not choose GPT-4o for current ChatGPT access. It has been retired from ChatGPT plans as of this article’s publication date.[8]

- Run a private eval before switching. Use production prompts, failure cases, and cost logs, not only public benchmark tables. Our OpenAI Playground review can help you test model behavior quickly.
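The API-side rules in this list can be written as a small decision helper, useful mainly as a checklist during a migration review. This is a sketch of this article’s guidance, not an official rule set: the `Workload` fields are hypothetical, ChatGPT is left out because GPT-4o is no longer offered there, and the hard limits reuse the GPT-4o figures quoted earlier.

```python
from dataclasses import dataclass

GPT4O_CONTEXT = 128_000    # GPT-4o API context window quoted above
GPT4O_MAX_OUTPUT = 16_384  # GPT-4o API max output quoted above


@dataclass
class Workload:
    new_build: bool                    # a new API integration rather than an existing app
    tuned_to_gpt4o: bool               # prompts, tests, or UX depend on GPT-4o's exact behavior
    prompt_tokens: int
    output_tokens: int
    private_eval_passed: bool = False  # GPT-5 cleared your own eval on production prompts


def pick_api_model(w: Workload) -> str:
    """Encode this article's API guidance: GPT-5 by default, GPT-4o only for legacy compatibility."""
    # Hard limits: GPT-4o cannot carry the request at all.
    if w.output_tokens > GPT4O_MAX_OUTPUT or w.prompt_tokens + w.output_tokens > GPT4O_CONTEXT:
        return "gpt-5"
    # Legacy apps stay put until a private eval shows switching is safe.
    if w.tuned_to_gpt4o and not w.new_build and not w.private_eval_passed:
        return "gpt-4o"
    return "gpt-5"


print(pick_api_model(Workload(new_build=False, tuned_to_gpt4o=True, prompt_tokens=3_000, output_tokens=800)))  # gpt-4o
```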

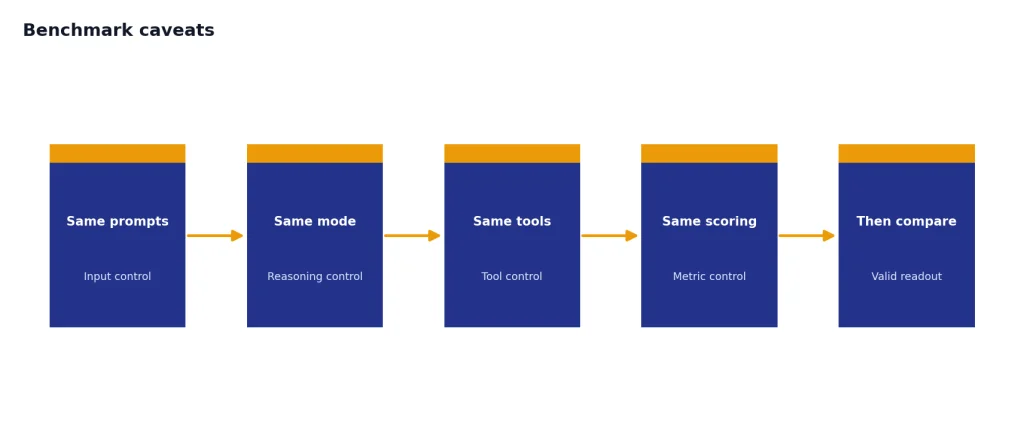

Benchmark caveats

Do not treat every GPT-5 number and every GPT-4o number as same-harness data. OpenAI’s simple-evals repository warns that evaluations are sensitive to prompting and that different publications use different formulations.[5] That is why this article distinguishes direct comparisons from rows where OpenAI has not published an official GPT-4o figure for the exact GPT-5 benchmark setup.

Factuality benchmarks also depend heavily on the task. OpenAI’s production-traffic evaluation showed a clear GPT-5 advantage over GPT-4o.[2] A TechRadar report on Vectara’s HHEM leaderboard described a much smaller grounded-summarization gap, with hallucination rates of 1.4% for GPT-5 and 1.49% for GPT-4o.[9] The best interpretation is not that one source is useless. It is that factuality tests measure different failure modes.
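A quick calculation puts the two results on the same footing. Using the HHEM rates quoted above, GPT-5’s relative reduction in hallucination rate versus GPT-4o is:

$$
\frac{1.49\% - 1.40\%}{1.49\%} = \frac{0.09}{1.49} \approx 0.060
$$

That is roughly a 6% relative reduction, compared with the 26% smaller hallucination rate OpenAI reports for gpt-5-main on production traffic. Because the two tests use different tasks, the contrast illustrates how much the failure mode matters rather than ranking one test above the other.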

For most readers, the decision still comes out the same. GPT-5 is the stronger benchmark model and the better default for new serious work. GPT-4o is a legacy option worth keeping only when compatibility, tone, or migration cost matters more than capability.

Frequently asked questions

Is GPT-5 better than GPT-4o?

Yes, for difficult benchmarked work. GPT-5 has the stronger official results in math, coding, multimodal understanding, health, and factuality.[1] GPT-4o can still be useful for legacy API apps or workflows tuned to its tone.

Is GPT-4o still available in ChatGPT?

No, not in standard ChatGPT plans as of this article’s publication date. OpenAI says GPT-4o was retired from ChatGPT on February 13, 2026, and that remaining Custom GPT access for Business, Enterprise, and Edu ended after April 3, 2026.[8] API access continues.

Which model is cheaper in the OpenAI API?

GPT-5 is cheaper on input tokens. OpenAI lists GPT-5 at $1.25 per 1M input tokens and $10.00 per 1M output tokens, while GPT-4o is $2.50 per 1M input tokens and $10.00 per 1M output tokens.[7] [6] Actual bills still depend on prompt size, output length, caching, and retries.

Does GPT-5 have a larger context window than GPT-4o?

Yes. OpenAI lists GPT-5 with a 400,000-token context window and GPT-4o with a 128,000-token context window in the API docs.[7] [6] That makes GPT-5 the better fit for long files, multi-document research, and agent traces.

Is GPT-4o better for voice?

GPT-4o was originally introduced around real-time multimodal interaction, including low-latency audio behavior.[4] However, OpenAI’s retirement note says ChatGPT Voice is not changing as part of the GPT-4o text-model retirement because voice uses a similar base model but is ultimately different from the retired text GPT-4o model.[8]

Has OpenAI published parameter counts for GPT-5 or GPT-4o?

No. OpenAI has not published official parameter counts for either model. Treat third-party parameter-count claims as speculation unless OpenAI releases a primary source.