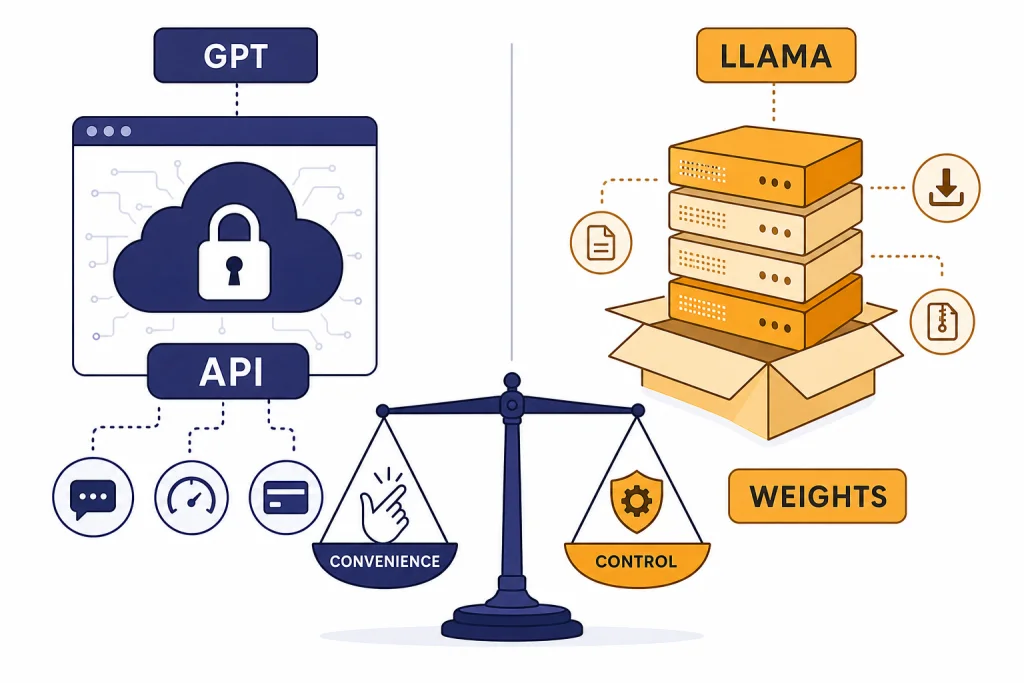

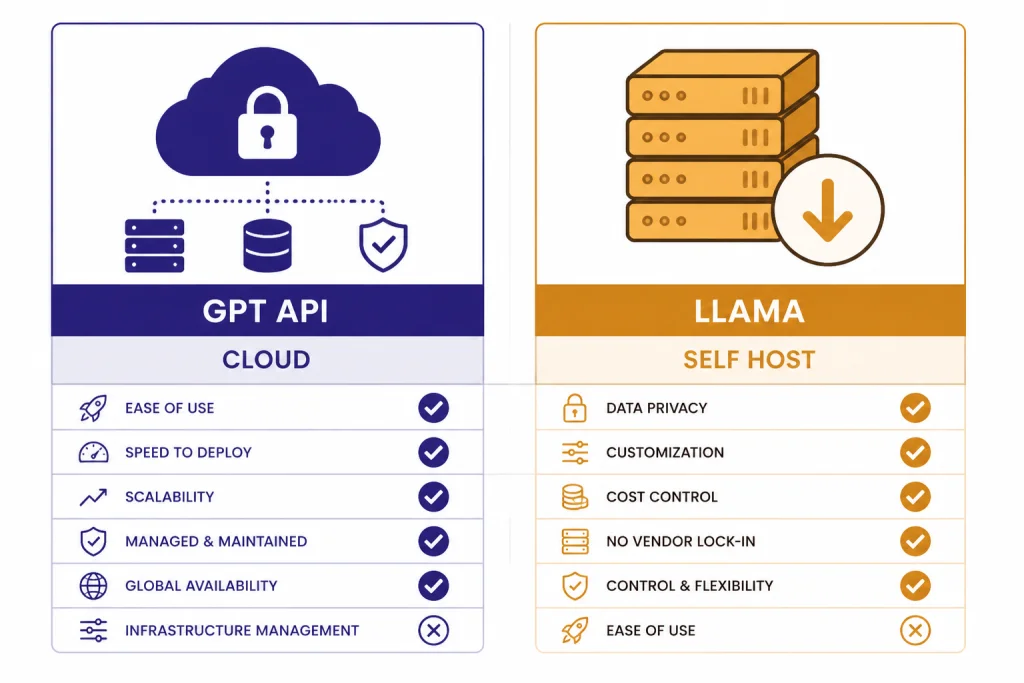

GPT vs Llama is mostly a choice between a managed frontier AI platform and a controllable open-weight model family. GPT models from OpenAI are usually stronger for hosted product features, enterprise support, tool use, and low-maintenance deployment. Llama is better when you need to run weights yourself, customize the model stack, avoid a single hosted API dependency, or keep inference inside your own infrastructure. The “open source” label needs care: Meta makes Llama weights broadly available, but Llama uses a custom license rather than a standard open source license. For most teams, GPT wins on convenience and reliability, while Llama wins on control and deployment freedom.

Quick verdict

Choose GPT if you want the shortest path to a production AI feature. OpenAI’s GPT-5.4 is available in ChatGPT, the API, and Codex, and OpenAI describes it as a frontier model for professional work involving reasoning, coding, tools, documents, spreadsheets, and presentations.[1] The API documentation lists GPT-5.4 with a 1,050,000-token context window, 128,000 max output tokens, and standard text pricing of $2.50 per 1 million input tokens and $15.00 per 1 million output tokens.[2]

Choose Llama if you want to own more of the stack. Meta’s Llama 4 release includes Llama 4 Scout and Llama 4 Maverick, with downloadable weights available through Llama.com and Hugging Face.[4] The Hugging Face model card lists Llama 4 Scout at 17B active parameters, 109B total parameters, and a 10M-token context length, while Llama 4 Maverick is listed at 17B active parameters, 400B total parameters, and a 1M-token context length.[5]

| Decision point | GPT | Llama |
|---|---|---|
| Best fit | Managed AI apps, agents, coding, enterprise workflows | Self-hosted, customized, privacy-sensitive, or cost-tuned deployments |
| Model access | Hosted API and ChatGPT product access | Downloadable weights under Meta’s custom Llama license |
| Operations | OpenAI handles serving, scaling, model updates, and infrastructure | Your team or hosting provider handles inference, scaling, monitoring, and upgrades |
| Transparency | Model weights and full architecture are not published for frontier GPT models | Weights are available, but training data and full reproducibility are still limited |
| Legal framing | Commercial API terms and product policies | Custom community license plus acceptable use policy |

What GPT and Llama mean

“GPT” is not one model. It is a family and product layer around OpenAI’s generative models. In a practical comparison, most buyers mean the latest GPT model available through ChatGPT or the OpenAI API. As of this article’s publication, GPT-5.4 is the relevant frontier GPT model to compare against Llama for professional text, coding, agentic, and multimodal workflows.[1] If you are comparing OpenAI models against each other, see all GPT models compared side by side, GPT-5 vs GPT-4o, and GPT vs the o-Series.

“Llama” is Meta’s model family. Llama 4 is the current family used for this comparison. Meta’s Llama 4 announcement introduced Llama 4 Scout and Llama 4 Maverick as the first available models in the Llama 4 group, and described Llama 4 Behemoth as a teacher model still in training at launch.[4] Llama is often called open source in everyday conversation because developers can download model weights. In strict licensing terms, it is better described as open-weight or source-available.

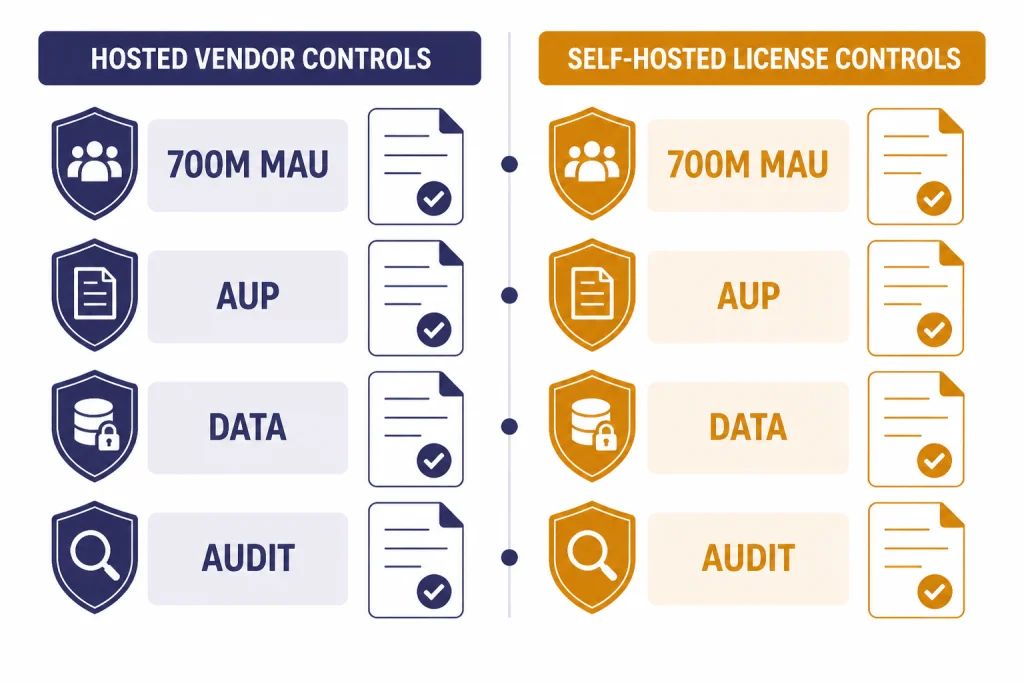

That distinction matters. The Open Source Initiative says open source AI should allow users to use the system for any purpose and without asking for permission.[7] Meta’s Llama 4 license includes conditions, including a rule that organizations above 700 million monthly active users on the Llama 4 release date must request a separate license from Meta.[6] That makes Llama more open than GPT in access to weights, but not equivalent to an OSI-style open source software project.

Closed GPT vs open-weight Llama

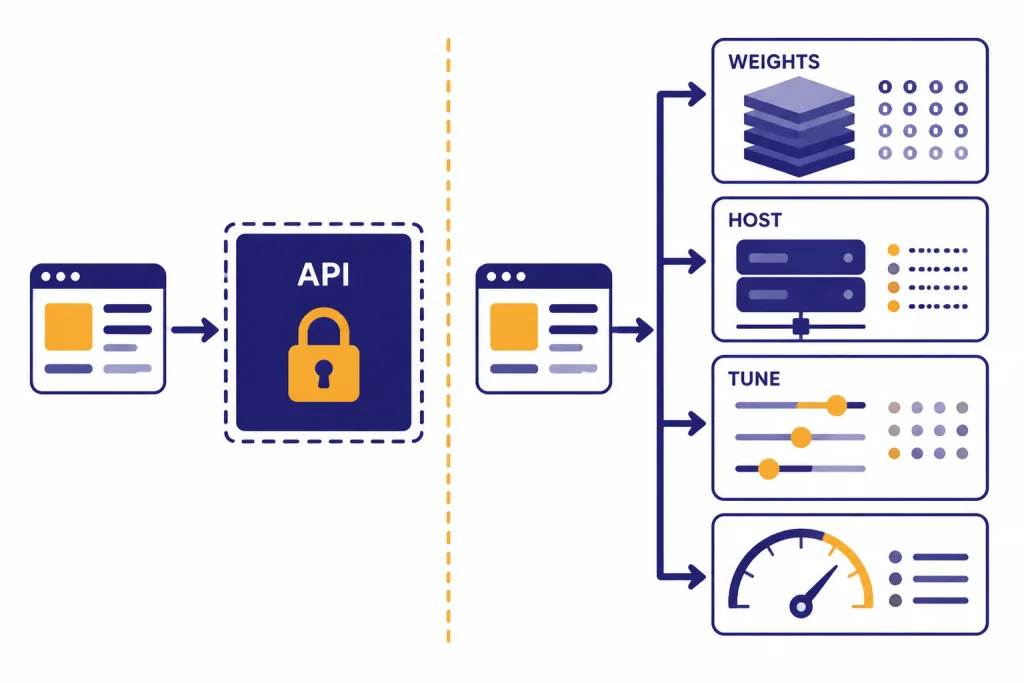

The core GPT vs Llama tradeoff is access. With GPT, you send prompts to OpenAI’s hosted service and receive outputs. You can tune prompts, system instructions, tools, retrieval, structured outputs, and application logic. You cannot download the frontier GPT weights, inspect the full architecture, or run GPT-5.4 on your own GPU cluster. OpenAI has not published an official parameter count for GPT-5.4.

With Llama, you can obtain model weights and run them yourself or through a third-party inference provider. That changes the engineering surface. You can select quantization formats, serving engines, hardware, retrieval systems, monitoring, guardrails, and fine-tuning strategy. You also inherit more responsibility. A Llama deployment needs capacity planning, latency tuning, model evaluation, safety layers, patching, and license review.

OpenAI does have separate open-weight models under the gpt-oss name. OpenAI introduced gpt-oss-120b and gpt-oss-20b as open-weight reasoning models, and its open models page says those models use the Apache 2.0 license.[8][9] They are relevant if your real comparison is “OpenAI open-weight vs Meta open-weight.” They are not the same as the managed frontier GPT line discussed here.

Think of the choice as a build-versus-buy decision. GPT is closer to buying a managed intelligence service. Llama is closer to adopting a powerful model component and building the surrounding platform yourself. That platform work can be worth it, but it is not free.

Quality, context, and multimodal use

GPT is usually the safer default when answer quality, tool use, and product polish matter more than infrastructure control. OpenAI positions GPT-5.4 around professional workflows, including coding, reasoning, tool use, and work across software environments.[1] The API documentation also lists support for streaming, function calling, structured outputs, image input, distillation, web search, file search, image generation, code interpreter, hosted shell, computer use, MCP, and related tools for GPT-5.4.[2]

Llama’s strongest advantage is that it brings serious capability into environments where you can control the weights. Llama 4 models use a mixture-of-experts architecture and support multilingual text and image input, with multilingual text and code output listed in the official model card.[5] Scout is the standout long-context option on paper because the model card lists a 10M-token context length.[5] Maverick is the larger total-parameter option, with 400B total parameters and 128 experts.[5]
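In a mixture-of-experts model, the active parameter count drives per-token compute, while the total parameter count generally drives how much memory the weights need, because every expert has to be loaded. As a rough sizing sketch, the snippet below multiplies the model-card totals quoted above by bytes per parameter for a few common number formats. It covers weights only and is an illustration, not a hardware recommendation: KV cache, activations, and serving overhead are excluded, and the format names and the `weight_memory_gb` helper are assumptions made for this example.

```python
# Rough weight-memory estimate for the Llama 4 models discussed above.
# Parameter totals come from the model-card figures quoted in the text.
# Bytes-per-parameter values are the nominal sizes of each number format;
# real quantized checkpoints carry some extra metadata, so treat these as floors.

BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}

MODELS = {
    "llama-4-scout": {"active_b": 17, "total_b": 109},
    "llama-4-maverick": {"active_b": 17, "total_b": 400},
}


def weight_memory_gb(total_params_billion: float, fmt: str) -> float:
    """Memory for weights only, in GB (1e9 bytes).

    Excludes KV cache, activations, and serving-engine overhead.
    """
    return total_params_billion * BYTES_PER_PARAM[fmt]


for name, spec in MODELS.items():
    for fmt in BYTES_PER_PARAM:
        gb = weight_memory_gb(spec["total_b"], fmt)
        print(f"{name:18s} {fmt:5s} ~{gb:6.1f} GB for weights")
```

Both models share the same active parameter count, so the gap between them shows up mainly in memory footprint rather than in per-token compute.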

Do not choose on context length alone. A very long window helps with book-scale review, huge logs, legal archives, codebase maps, and multi-document retrieval. It does not guarantee that the model will reason well over every token. For GPT-side planning, use context window sizes for every GPT model. For speed-sensitive GPT choices, use the fastest GPT model breakdown.

For multimodal work, the better choice depends on the workflow. GPT is often easier when you need hosted image input, tool calling, structured outputs, and a maintained application layer. Llama is attractive when images or text must stay in your private environment, or when your team wants to adapt the model around a narrow domain. For image-model tradeoffs outside this language-model comparison, see DALL-E vs Stable Diffusion and DALL-E vs Midjourney.

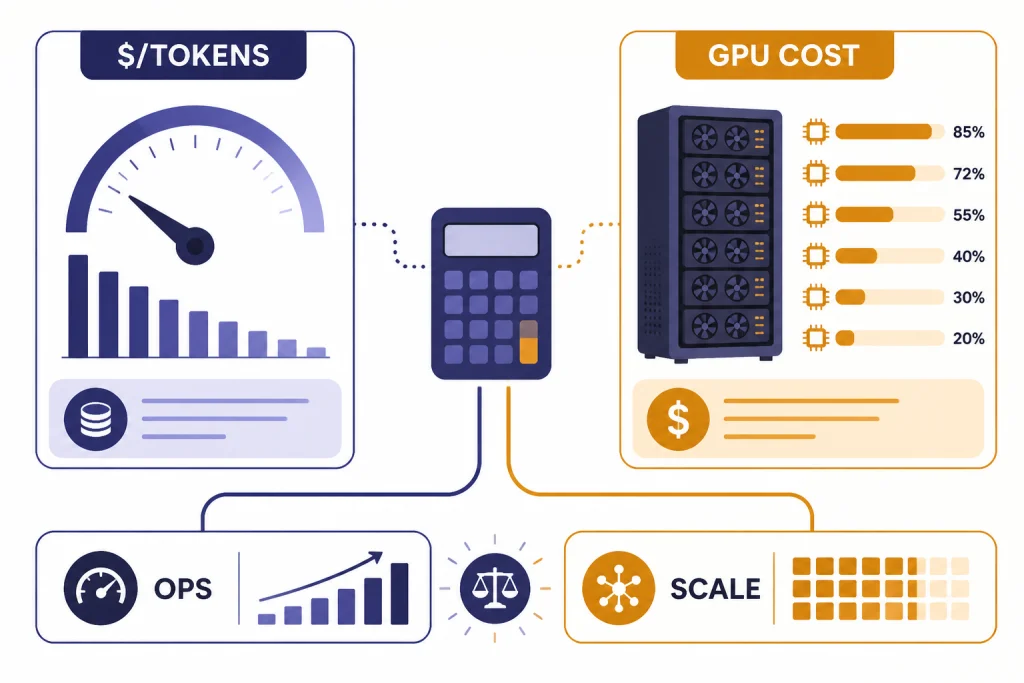

Cost, hosting, and operations

GPT cost is simple to estimate and easy to underestimate. GPT-5.4 standard API pricing is listed at $2.50 per 1 million input tokens, $0.25 per 1 million cached input tokens, and $15.00 per 1 million output tokens.[2] The same documentation says prompts above 272K input tokens on GPT-5.4 and GPT-5.4 pro are priced at 2x input and 1.5x output for the full session across standard, batch, and flex processing.[2] If you are building around OpenAI, keep OpenAI API pricing nearby.
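The pricing rules above can be turned into a small per-request estimator. This is a minimal sketch using only the rates quoted in this section. Two points are assumptions rather than documented behavior: that the 272K threshold means 272,000 tokens, and that the 2x long-prompt multiplier also applies to cached input tokens. Check both against the current pricing page before relying on the numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """GPT-5.4 standard text rates quoted in this article, in USD per 1M tokens."""
    input_per_m: float = 2.50
    cached_input_per_m: float = 0.25
    output_per_m: float = 15.00
    long_prompt_threshold: int = 272_000   # assumption: "272K" means 272,000 tokens
    long_input_multiplier: float = 2.0
    long_output_multiplier: float = 1.5


def request_cost(input_tokens: int, output_tokens: int,
                 cached_tokens: int = 0, p: Pricing = Pricing()) -> float:
    """Estimate the USD cost of one request.

    cached_tokens is the portion of input_tokens served from the prompt cache.
    """
    if cached_tokens > input_tokens:
        raise ValueError("cached_tokens cannot exceed input_tokens")

    is_long = input_tokens > p.long_prompt_threshold
    in_mult = p.long_input_multiplier if is_long else 1.0
    out_mult = p.long_output_multiplier if is_long else 1.0

    uncached = input_tokens - cached_tokens
    total = (
        uncached * p.input_per_m * in_mult
        # Assumption: the long-prompt multiplier also applies to cached input.
        + cached_tokens * p.cached_input_per_m * in_mult
        + output_tokens * p.output_per_m * out_mult
    )
    return total / 1_000_000


print(f"short request: ${request_cost(10_000, 1_000):.4f}")
print(f"long request:  ${request_cost(300_000, 2_000):.4f}")
```

Output tokens cost six times as much as uncached input at these rates, so long generated answers tend to dominate the bill sooner than long prompts do.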

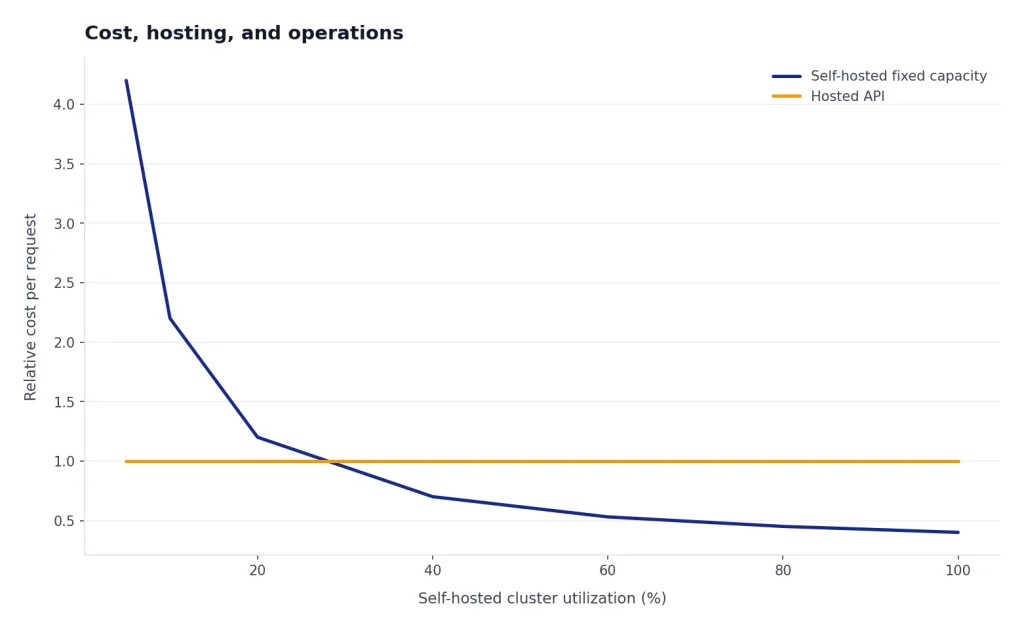

Llama cost is less standardized. You may pay nothing for the model weights, but you still pay for GPUs, inference servers, storage, network transfer, observability, evaluation, security, and engineering time. If you use a hosted Llama provider, you trade some control for a token price set by that provider. If you self-host, your cost curve depends on utilization. A busy cluster can be cheaper per request than a hosted frontier API. A lightly used cluster can be much more expensive.

The biggest hidden cost in Llama is operational maturity. Someone must manage model serving, autoscaling, batch jobs, failed requests, latency spikes, memory pressure, prompt logging, abuse monitoring, and safety filters. Someone must also decide when to upgrade checkpoints or fine-tunes. GPT shifts much of that work to OpenAI. Llama gives you the steering wheel and the maintenance bill.

For small teams, GPT often wins until usage is large enough to justify dedicated inference work. For larger engineering teams, Llama can make sense when inference volume is predictable, prompts are repetitive, data locality matters, or the model can be specialized for a narrow task. The break-even point is not universal. It depends on output length, cache hit rate, hardware utilization, latency targets, and engineering payroll.
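The utilization part of that break-even can be sketched with simple arithmetic. The helpers below are hypothetical and deliberately narrow: they compare an hourly cluster cost against a blended API price per million tokens, and they ignore engineering payroll, storage, and latency targets, all of which push the real break-even higher. Every number in the example call is a placeholder, not a quote from any provider.

```python
def self_hosted_cost_per_m_tokens(cluster_cost_per_hour: float,
                                  peak_tokens_per_second: float,
                                  utilization: float) -> float:
    """USD per 1M tokens for a cluster running at the given utilization (0 to 1]."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_hour = peak_tokens_per_second * 3600 * utilization
    return cluster_cost_per_hour / tokens_per_hour * 1_000_000


def break_even_utilization(cluster_cost_per_hour: float,
                           peak_tokens_per_second: float,
                           api_cost_per_m_tokens: float) -> float:
    """Utilization at which self-hosting matches the API price per token.

    A result above 1.0 means the cluster cannot beat the API price
    even when fully busy.
    """
    peak_tokens_per_hour = peak_tokens_per_second * 3600
    return cluster_cost_per_hour * 1_000_000 / (peak_tokens_per_hour * api_cost_per_m_tokens)


# Placeholder inputs: a $40/hour cluster, 5,000 tokens/s at peak,
# and a blended API price of $6 per 1M tokens.
u = break_even_utilization(40.0, 5_000, 6.0)
print(f"break-even utilization: {u:.0%}")
for util in (0.1, 0.4, 0.9):
    cost = self_hosted_cost_per_m_tokens(40.0, 5_000, util)
    print(f"utilization {util:.0%}: ${cost:.2f} per 1M tokens")
```

With these placeholder inputs, the cluster only beats the API price once it stays busy more than about a third of the time, which is why lightly used self-hosted deployments tend to cost more per request.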

Privacy, governance, and legal risk

GPT does not automatically mean weak privacy. OpenAI says it does not train its models on organization data by default for its API platform and business products, including ChatGPT Enterprise, ChatGPT Business, ChatGPT Edu, ChatGPT for Healthcare, and ChatGPT for Teachers.[3] That is useful for companies that need a managed vendor with enterprise privacy commitments, but it is still a third-party processing relationship. Legal, security, and procurement teams should review the contract, retention settings, logging, data residency, and product tier.

Llama can be stronger for data residency because you can run inference inside your own environment. That can reduce exposure to an external model API. It does not remove governance work. Your team still needs access controls, prompt logging policy, incident response, abuse prevention, red-team testing, and output review. Self-hosting also means your organization becomes responsible for more of the security surface.

The legal question is different for Llama. Meta’s Llama 4 Community License is a custom license, not a generic permissive open source license. It incorporates an acceptable use policy and includes the 700 million monthly active user threshold for a separate license request.[6] If your product has unusual scale, regulated use cases, competitive model-training plans, or EU-specific deployment constraints, have counsel review the current license before shipping.

The governance summary is simple. GPT gives you a vendor-managed compliance story and less infrastructure control. Llama gives you local control and more direct responsibility. Neither option eliminates the need for evaluation, human oversight, abuse monitoring, or clear internal rules.

Which one to choose

Pick GPT when you need high-quality general reasoning, fast implementation, strong tool support, and fewer infrastructure decisions. It is the better fit for internal assistants, document analysis, agentic workflows, coding help, customer-support copilots, research assistants, and applications where reliability matters more than owning model weights. If your decision is mainly about ChatGPT subscriptions rather than API design, compare ChatGPT Free vs Plus vs Pro, ChatGPT Plus vs Team, and ChatGPT Team vs Enterprise.

Pick Llama when control is the requirement, not a preference. It is the better fit for air-gapped workflows, private inference, custom model research, domain-specific fine-tuning, open-weight experimentation, and products that need to avoid hard dependency on a single hosted API. Llama is also useful when your team wants to inspect, compress, quantize, or serve the model in ways a hosted GPT endpoint does not allow.

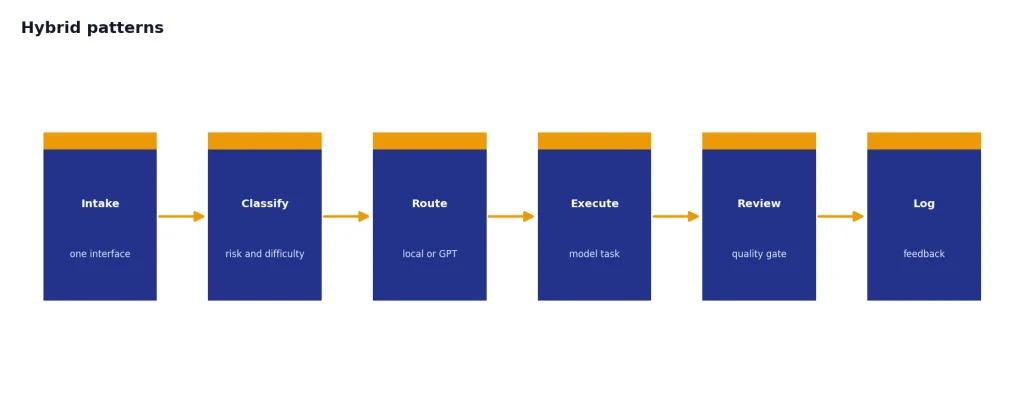

Use both if you can. Many strong systems route tasks by sensitivity, cost, and difficulty. A local Llama model can handle classification, extraction, first-pass drafting, private summarization, or high-volume low-risk requests. GPT can handle harder reasoning, tool-heavy tasks, final synthesis, and cases where quality matters more than marginal token cost. For teams that can support two backends, this split often gives the best balance of privacy, cost, and quality.

If you are comparing beyond Meta, see GPT vs Mistral, GPT vs Qwen, and best AI chatbot alternatives to ChatGPT. The market changes quickly, and model choice should be revisited when pricing, context limits, latency, or licenses change.

Hybrid patterns

The most practical GPT vs Llama answer is often not either-or. A hybrid stack can keep routine and sensitive work local while reserving GPT for expensive reasoning. This pattern also reduces vendor lock-in. Your application can expose one internal interface while routing behind the scenes to GPT, Llama, or another model.

- Private-first routing: Send sensitive prompts to a self-hosted Llama model and escalate only approved summaries to GPT.

- Cost-tier routing: Use Llama for high-volume extraction and GPT for complex synthesis.

- Evaluation routing: Ask both models on critical tasks and compare outputs before human review.

- Fallback routing: Keep Llama available if a hosted API has an outage or policy interruption.

- Specialist routing: Fine-tune or adapt Llama for a narrow domain while using GPT for broad reasoning.
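Several of the patterns above (private-first, cost-tier, and fallback routing) can be combined behind one internal interface. The sketch below is one possible shape, not a reference design. The `Task` and `Router` names, the `"llama"` and `"gpt"` backend keys, and the two-level difficulty flag are all hypothetical, and the backends are plain callables that you would replace with real clients.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple

ModelFn = Callable[[str], str]  # takes a prompt, returns the model's text


@dataclass
class Task:
    prompt: str
    sensitive: bool = False     # must stay on self-hosted infrastructure
    difficulty: str = "easy"    # "easy" or "hard" (hypothetical two-level scale)


class Router:
    """Routes tasks to a local Llama backend or a hosted GPT backend."""

    def __init__(self, backends: Dict[str, ModelFn], fallback: str = "llama"):
        self.backends = backends
        self.fallback = fallback

    def choose(self, task: Task) -> str:
        if task.sensitive:
            return "llama"      # private-first: sensitive prompts never leave
        if task.difficulty == "hard":
            return "gpt"        # cost-tier: pay for hosted reasoning only when needed
        return "llama"          # default: high-volume, low-risk work stays local

    def run(self, task: Task) -> Tuple[str, str]:
        """Return (backend_name, answer), falling back if the hosted call fails."""
        name = self.choose(task)
        try:
            return name, self.backends[name](task.prompt)
        except Exception:
            # Nothing to fall back to if the local backend itself failed,
            # and sensitive tasks are never re-routed to a hosted API.
            if task.sensitive or name == self.fallback:
                raise
            return self.fallback, self.backends[self.fallback](task.prompt)


# Stub backends so the sketch runs without any network access.
router = Router({
    "llama": lambda prompt: f"[llama] {prompt[:30]}",
    "gpt": lambda prompt: f"[gpt] {prompt[:30]}",
})
print(router.run(Task("Extract the invoice number", difficulty="easy")))
print(router.run(Task("Draft a migration plan", difficulty="hard")))
print(router.run(Task("Summarize this patient note", sensitive=True, difficulty="hard")))
```

Keeping the routing decision in `choose` and the failure handling in `run` means the sensitivity rule is enforced in one place, including on the fallback path.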

Start with a small benchmark built from your own tasks. Include easy, medium, and hard prompts. Measure answer quality, latency, refusal behavior, cost, tool reliability, and failure recovery. Generic leaderboards help, but your workload is the only benchmark that decides whether GPT, Llama, or a hybrid system is best.
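A first version of that benchmark can be very small. The harness below is a minimal sketch that groups cases by difficulty tier and records accuracy, failure rate, and median latency for any model exposed as a callable. The substring check is a crude stand-in for real quality grading, and the `run_benchmark` name, the tier labels, and the sample cases are all made up for illustration. Cost, refusal behavior, and tool reliability would need their own measurements.

```python
import statistics
import time
from typing import Callable, Dict, List, Tuple

# (tier, prompt, substring expected somewhere in a correct answer)
Case = Tuple[str, str, str]


def run_benchmark(model: Callable[[str], str],
                  cases: List[Case]) -> Dict[str, Dict[str, float]]:
    """Run every case once and summarize results per difficulty tier."""
    buckets: Dict[str, Dict[str, list]] = {}
    for tier, prompt, expected in cases:
        start = time.perf_counter()
        try:
            answer = model(prompt)
            failed = False
        except Exception:
            # Count errors (timeouts, outages, bad responses) as failures.
            answer, failed = "", True
        latency = time.perf_counter() - start

        bucket = buckets.setdefault(tier, {"correct": [], "failed": [], "latency": []})
        # Placeholder quality check: case-insensitive substring match.
        bucket["correct"].append(expected.lower() in answer.lower())
        bucket["failed"].append(failed)
        bucket["latency"].append(latency)

    return {
        tier: {
            "accuracy": sum(b["correct"]) / len(b["correct"]),
            "failure_rate": sum(b["failed"]) / len(b["failed"]),
            "median_latency_s": statistics.median(b["latency"]),
        }
        for tier, b in buckets.items()
    }


# Made-up cases and a stub model so the sketch runs offline.
sample_cases: List[Case] = [
    ("easy", "What is 2 + 2?", "4"),
    ("medium", "Name the capital of France.", "Paris"),
    ("hard", "Which license do the gpt-oss models use?", "Apache"),
]
canned = {"What is 2 + 2?": "4", "Name the capital of France.": "Paris"}
stub_model = lambda prompt: canned.get(prompt, "I am not sure.")

for tier, stats in run_benchmark(stub_model, sample_cases).items():
    print(tier, stats)
```

Running the same cases against each candidate backend gives a like-for-like table, which is usually more informative for a routing decision than a public leaderboard score.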

Frequently asked questions

Is Llama really open source?

Llama is open-weight, but it is not open source in the strict OSI sense. Meta provides downloadable weights under a custom Llama license. The license includes conditions, including a 700 million monthly active user threshold for separate licensing.[6]

Is GPT closed source?

The frontier GPT models are closed in the practical sense that you access them through OpenAI products and APIs rather than downloading their weights. OpenAI publishes product documentation, system cards, pricing, and usage guidance, but OpenAI has not published an official parameter count for GPT-5.4. OpenAI also has separate gpt-oss open-weight models, but those are not the same as the managed frontier GPT line.[8]

Which is cheaper, GPT or Llama?

GPT is easier to price because OpenAI publishes token rates for the API. GPT-5.4 standard pricing is $2.50 per 1 million input tokens and $15.00 per 1 million output tokens.[2] Llama can be cheaper at scale, but only if your hosting, GPU utilization, engineering, and maintenance costs are well managed.

Which is better for private data?

Llama is better when private inference must stay inside your own infrastructure. GPT can still be acceptable for many businesses because OpenAI says API and business product data is not used for training by default.[3] The right answer depends on your legal requirements, data sensitivity, retention needs, and vendor policy.

Can I fine-tune Llama more freely than GPT?

You have more control over Llama because the weights are available and you can run your own training or adaptation pipeline. You still must follow Meta’s license and acceptable use policy. GPT customization is usually done through the OpenAI platform using prompts, tools, retrieval, structured outputs, and supported fine-tuning options where available.

Should startups choose GPT or Llama first?

Most startups should prototype with GPT first because it reduces infrastructure work and shortens the path to a working product. Move some workloads to Llama later if cost, privacy, latency, or vendor dependency becomes a real constraint. Avoid self-hosting before you have enough usage to justify the operational burden.